Predicting Housing Prices in Ames, Iowa using Machine Learning Techniques

Introduction

Providing accurate valuations of home prices is an integral function of modern online real-estate marketplaces such as Zillow and Redfin. Homebuyers rely on these estimates to gain quick insight into the current market and to plan their purchases, while sellers refer to them to set expectations. For flippers and iBuyers in particular (a business Zillow stopped operating in October 2021), accurate price estimates are likely the most important factor in turning a profit. Estimating housing prices accurately is far from trivial, since many factors contribute to a shift in value, each to a different degree. It therefore makes sense to use machine learning techniques to uncover the intricate relationships between the various features and the home value.

The goal of this project is to take on the role of a real estate database company looking for the machine learning model that produces the most accurate housing price predictions. The dataset from the well-known Kaggle competition on Ames housing prices is used to evaluate various machine learning models and their tunable parameters, and the best combination is used for the competition submission.

Data Cleaning and Feature Engineering

The dataset contains around 3000 entries with 79 features, split roughly 50/50 into a training set and a testing set. Because of the competition setup, the models are evaluated on the training set only, and the testing set is used only to generate the predictions for submission.

As a first step, the training and testing sets were combined and checked for duplicate entries. No duplicate entries were found.

It is also important to ensure that the data types of the features are correct. All feature types are correct with the exception of "MSSubClass", which is a code identifying the type of dwelling and should be a categorical feature instead of a numeric one.
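The two checks above can be sketched as follows. This is a minimal illustration on tiny stand-in frames, not the project's actual code; the variable names `combined` and `n_duplicates` are hypothetical, and excluding `Id` from the duplicate check is an assumption.

```python
import pandas as pd

# Tiny stand-in frames; in the project these would be the Kaggle train/test sets.
train = pd.DataFrame({"Id": [1, 2, 3],
                      "MSSubClass": [20, 60, 20],
                      "LotArea": [8450, 9600, 11250]})
test = pd.DataFrame({"Id": [4, 5],
                     "MSSubClass": [120, 20],
                     "LotArea": [11622, 14267]})

# Stack both sets so the cleaning steps are applied consistently.
combined = pd.concat([train, test], keys=["train", "test"])

# Count duplicated rows, ignoring the Id column (assumption: Id is just a row label).
n_duplicates = int(combined.drop(columns="Id").duplicated().sum())

# MSSubClass is a dwelling-type code, so store it as a label rather than a number.
combined["MSSubClass"] = combined["MSSubClass"].astype(str)
```

Keeping the `train`/`test` keys in the index makes it easy to split the combined frame again after cleaning.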

Resolving Missing Values/NAs

The following features had missing values, which were resolved as stated.

- Utilities (Type of utilities available): Impute with “AllPub” since it’s the most common by far.

- GarageYrBlt (Year garage was built): Impute with “YearBuilt” since they almost always coincide.

- Exterior1st & Exterior2nd (Exterior coverings on house): Impute both with “VinylSd” since it’s the most common.

- MasVnrType & MasVnrArea (Masonry veneer type and area): For cases where both are NA, impute with None and 0. For one entry with area, impute type as “BrkFace” since it’s the most common.

- Bsmt (Basement) variables: Two entries with no basement but values are NA instead of 0, change to 0.

- Functional (Home functionality): Impute with “Typ” since it’s the most common.

- KitchenQual (Kitchen quality): Impute with “TA” since it’s the most common.

- GarageCars & GarageArea: Replace with 0.
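A few of the imputation rules listed above, sketched with pandas on a small made-up frame. The rows are invented for illustration; only the column names and fill values come from the list above.

```python
import numpy as np
import pandas as pd

# Made-up rows that exercise a handful of the rules.
df = pd.DataFrame({
    "Utilities": ["AllPub", None, "AllPub"],
    "KitchenQual": ["TA", "Gd", None],
    "YearBuilt": [1990, 2005, 1978],
    "GarageYrBlt": [1990.0, np.nan, 1980.0],
    "GarageCars": [2.0, np.nan, 1.0],
    "MasVnrType": [None, "Stone", None],
    "MasVnrArea": [np.nan, 120.0, 50.0],
})

# Categorical features: fill with the most common value named in the list.
df = df.fillna({"Utilities": "AllPub", "KitchenQual": "TA"})

# GarageYrBlt: fall back to the year the house was built.
df["GarageYrBlt"] = df["GarageYrBlt"].fillna(df["YearBuilt"])

# Masonry veneer: no type and no area means there is no veneer at all.
both_na = df["MasVnrType"].isna() & df["MasVnrArea"].isna()
df.loc[both_na, "MasVnrType"] = "None"
df.loc[both_na, "MasVnrArea"] = 0.0
# An area without a type gets the most common type.
df["MasVnrType"] = df["MasVnrType"].fillna("BrkFace")

# Garage size: a missing value is treated as no garage.
df["GarageCars"] = df["GarageCars"].fillna(0)
```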

LotFrontage (Linear feet of street connected to property) had a very high number of NA’s (259 in the training set and 227 in the testing set). Simply replacing NA’s with 0 seems to be a bad idea, especially since LotArea is not 0 for these entries and every LotConfig and LotShape has NA’s, including “FR2”, “FR3” and “Reg”. Logically speaking, one would expect LotFrontage to be fairly highly correlated with LotArea. Study a scatter plot to see if this is indeed the case. Only look at units with LotArea <= 20000 to avoid outliers, and only at LotShapes of Reg and IR1 since most units are of those two types.

For LotShape = Reg, the relationship appears to be fairly linear, while for IR1 it is much more scattered. Proceed to fit a simple linear regression on all LotShape = Reg entries and impute the missing values with the predicted values. This introduces multicollinearity between LotFrontage and LotArea, which is dealt with later in the project.
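A sketch of the regression-based imputation, using synthetic data. Two assumptions are made here that the text does not spell out: the fit uses only Reg lots with LotArea <= 20000, and the fitted line is then applied to every missing LotFrontage.

```python
import numpy as np
import pandas as pd
from sklearn.linear_model import LinearRegression

# Synthetic lots with a roughly linear frontage/area relationship.
rng = np.random.default_rng(0)
lot_area = rng.uniform(5000, 15000, size=40)
df = pd.DataFrame({
    "LotArea": lot_area,
    "LotShape": "Reg",
    "LotFrontage": 20 + 0.005 * lot_area + rng.normal(0, 3, size=40),
})
df.loc[::8, "LotFrontage"] = np.nan  # knock out a few values to impute

# Rows used for fitting: known frontage, regular shape, no extreme areas.
known = (df["LotFrontage"].notna()
         & (df["LotShape"] == "Reg")
         & (df["LotArea"] <= 20000))
missing = df["LotFrontage"].isna()

# Simple regression of LotFrontage on LotArea.
model = LinearRegression().fit(df.loc[known, ["LotArea"]],
                               df.loc[known, "LotFrontage"])

# Replace the missing values with the fitted predictions.
df.loc[missing, "LotFrontage"] = model.predict(df.loc[missing, ["LotArea"]])
```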

Feature Engineering

The following changes were made to eliminate redundancy, simplify the data where appropriate, and generate features that made more sense or were believed to better explain the target variable.

- YearBuilt (Original construction date): The age of the house when sold makes more sense as a variable, so create a variable AgeSold = YrSold - YearBuilt and remove YearBuilt.

- YrSold (Year sold): Convert to nominal categorical since the factor being evaluated now is really the economy and real estate market during the year.

- MoSold (Month sold): Same logic as YrSold and shouldn’t be considered an ordinal feature. Simplify MoSold to the quarter of the year and convert to nominal categorical.

- YearRemodAdd (Remodel date): Just convert to whether the house was remodelled or not.

- GrLivArea & TotalBsmtSF (Above ground living area square feet & Total square feet of basement area): GrLivArea = 1stFlrSF + 2ndFlrSF + LowQualFinSF and TotalBsmtSF = BsmtFinSF1 + BsmtFinSF2 + BsmtUnfSF so they are both redundant information. Remove both features.

- Bathrooms (Above ground and basement): To reduce some dimensions, consider half bathrooms to be 0.5 bathrooms and add to number of full bathrooms.

- TotRmsAbvGrd (Total rooms above ground, not including bathrooms): Convert to the number of rooms that are neither bedrooms nor kitchens. Create variable OtherRms = TotRmsAbvGrd - BedroomAbvGr - KitchenAbvGr and remove TotRmsAbvGrd.
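The transformations in the list above could look roughly like this in pandas. AgeSold and OtherRms are named in the text; the other new column names (`Remodeled`, `QtrSold`, `Baths`, `BsmtBaths`) are hypothetical. The remodel flag relies on the Ames convention that YearRemodAdd equals the construction year when no remodel took place.

```python
import pandas as pd

# Two made-up houses with the raw columns used below.
df = pd.DataFrame({
    "YearBuilt": [1990, 2005], "YearRemodAdd": [1990, 2010],
    "YrSold": [2008, 2010], "MoSold": [2, 11],
    "FullBath": [2, 1], "HalfBath": [1, 0],
    "BsmtFullBath": [1, 0], "BsmtHalfBath": [0, 1],
    "TotRmsAbvGrd": [7, 5], "BedroomAbvGr": [3, 2], "KitchenAbvGr": [1, 1],
})

# Age at sale replaces the construction year.
df["AgeSold"] = df["YrSold"] - df["YearBuilt"]

# 1 if the remodel year differs from the construction year, else 0.
df["Remodeled"] = (df["YearRemodAdd"] != df["YearBuilt"]).astype(int)

# Month -> quarter (1..4), stored as a label so it is treated as nominal.
df["QtrSold"] = ((df["MoSold"] - 1) // 3 + 1).astype(str)
df["YrSold"] = df["YrSold"].astype(str)

# Half bathrooms count as 0.5 of a bathroom.
df["Baths"] = df["FullBath"] + 0.5 * df["HalfBath"]
df["BsmtBaths"] = df["BsmtFullBath"] + 0.5 * df["BsmtHalfBath"]

# Rooms that are neither bedrooms nor kitchens.
df["OtherRms"] = df["TotRmsAbvGrd"] - df["BedroomAbvGr"] - df["KitchenAbvGr"]

# Drop the columns that the new features replace.
df = df.drop(columns=["YearBuilt", "YearRemodAdd", "MoSold", "FullBath", "HalfBath",
                      "BsmtFullBath", "BsmtHalfBath", "TotRmsAbvGrd"])
```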

Data Exploration

Since multiple linear regression will be used as one of the models, the data exploration will be done from the perspective of checking some of the linear regression assumptions and modifying the data accordingly.

Multicollinearity

Multicollinearity inflates the standard errors of the coefficients and makes the model unstable. Therefore, need to check for and resolve multicollinearity between features. Plot the correlation matrix and see if some highly correlated features can or should be removed.

Feature pairs with correlation > 0.7

- LotFrontage & LotArea: This is expected, especially given that LotFrontage NA's were imputed with linear regression with LotArea as the independent variable. Will remove LotFrontage due to many NAs as well as LotArea logically being more important with higher correlation to target variable.

- GarageYrBlt & AgeSold: This is expected since for the vast majority of houses the garage was built in the same year as the house. Will remove GarageYrBlt since AgeSold is logically more important with higher correlation to target variable.

- GarageCars & GarageArea: This is expected since garage size in car capacity is directly indicative of the garage area. Will remove GarageArea since GarageCars has higher correlation to target variable.
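One way to list feature pairs above the 0.7 threshold without reading them off a heatmap, sketched on synthetic data. The data and the use of absolute correlation are assumptions for illustration.

```python
import numpy as np
import pandas as pd

# Synthetic features: garage area tracks car capacity, age is unrelated.
rng = np.random.default_rng(0)
n = 200
cars = rng.integers(0, 4, size=n)
df = pd.DataFrame({
    "GarageCars": cars,
    "GarageArea": 250 * cars + rng.normal(0, 40, size=n),
    "AgeSold": rng.integers(0, 100, size=n),
})

# Absolute pairwise correlations.
corr = df.corr().abs()

# Keep only the upper triangle so each pair appears once and the diagonal is dropped.
upper = corr.where(np.triu(np.ones(corr.shape, dtype=bool), k=1))

# Flatten to (feature_a, feature_b) -> correlation and filter by the threshold.
pairs = upper.stack()
high_pairs = pairs[pairs > 0.7]
```

Each entry in `high_pairs` is a candidate pair from which one feature can be dropped, for example the one with the lower correlation to the target.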

Normality

The assumption that residuals are normally distributed can be violated due to non-normally distributed variables as well as the presence of outliers.

Target Variable Skew

The target SalePrice is skewed, thus apply log-transformation to SalePrice. Other features are also not necessarily normally distributed, such as 1stFlrSF shown below. However, the features themselves do not need to be normally distributed; only the residuals do. Therefore will run the model first and check if the assumption is met. If not, come back and apply log transformation to the features.
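The effect of the log transformation on skew can be illustrated with a synthetic right-skewed price series. The numbers below are generated, not taken from the Ames data.

```python
import numpy as np
import pandas as pd

# Synthetic right-skewed prices (log-normal), standing in for SalePrice.
rng = np.random.default_rng(0)
sale_price = pd.Series(np.exp(rng.normal(12, 0.4, size=1000)), name="SalePrice")

# Natural log transform of the target.
log_price = np.log(sale_price)

# Sample skewness before and after the transform.
skew_before = sale_price.skew()
skew_after = log_price.skew()

# The transform is inverted with the exponential when predicting in dollars.
recovered = np.exp(log_price)
```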

Outliers

Check for outliers in the 5 features with the highest correlation to sale price. Suspect that some outliers may be due to an abnormal SaleCondition, so color the points by SaleCondition.

Want to remove outliers systematically instead of picking them out arbitrarily. Therefore, for each of the 4 top ordinal features, remove data points below the 0.5th percentile and above the 99.5th percentile within each group. Doing so can remove enough points that certain feature values are left with no entries, such as OverallQual of 1. It would be possible to skip outlier removal for groups with fewer than a certain number of entries, but will just go with this method for now. This removed 59 data points, bringing the training set down to 1401.
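A sketch of the per-group percentile filter on synthetic data. It assumes the percentiles are taken over SalePrice within each level of the ordinal feature; the helper name `drop_group_outliers` is hypothetical.

```python
import numpy as np
import pandas as pd

def drop_group_outliers(df, group_col, target="SalePrice", lower=0.005, upper=0.995):
    """Drop rows whose target lies outside the [lower, upper] quantiles of its group."""
    grouped = df.groupby(group_col)[target]
    # Broadcast each group's quantile bounds back onto its rows.
    low = grouped.transform(lambda s: s.quantile(lower))
    high = grouped.transform(lambda s: s.quantile(upper))
    return df[(df[target] >= low) & (df[target] <= high)]

# Synthetic data: price rises with quality, plus noise.
rng = np.random.default_rng(0)
df = pd.DataFrame({"OverallQual": rng.integers(4, 9, size=500)})
df["SalePrice"] = 30000 * df["OverallQual"] + rng.normal(0, 15000, size=500)

cleaned = drop_group_outliers(df, "OverallQual")
```

With interpolated quantiles, a group with only two distinct prices loses both rows, which is how a sparsely populated level can end up with no entries. Applying the helper once per ordinal feature in a loop would cover all four features.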

Outliers Removed

Check 1stFlrSF for outliers.

Do not see any obvious outliers, will not remove any more.

Linearity

Sample scatter plots

There are too many features to check and no good way to systematically resolve non-linearity, so just leave the features as they are for now.

Preparation for Modeling

Encoding

- Use OrdinalEncoder on ordinal categorical features.

- Use OneHotEncoder on nominal categorical features and always drop one column. After OneHotEncoder, realized some encoded columns are in the training set but not in the test set and vice versa. Remove the columns that are not shared. End up with 198 features.

- Tree-based models do not need OneHotEncoder. Create a separate set where nominal categorical features are also ordinally encoded. Will try both for tree-based models and see which route produces better results.

Set Up K-Fold Cross Validation and Performance Metric

Set up 10-fold cross validation on the training set to be used by all models.

Use RMSE as error metric to compare model performance.
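A minimal sketch of a shared 10-fold splitter and an RMSE helper in scikit-learn, using synthetic regression data. Shuffling with a fixed `random_state` is an assumption, and `mean_rmse` is a hypothetical helper name.

```python
from sklearn.datasets import make_regression
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import KFold, cross_val_score

# Synthetic stand-in for the prepared training set.
X, y = make_regression(n_samples=200, n_features=10, noise=10.0, random_state=0)

# One splitter object reused by every model so all scores use the same folds.
cv = KFold(n_splits=10, shuffle=True, random_state=0)

def mean_rmse(model, X, y):
    """Mean RMSE across the folds (scikit-learn reports it negated)."""
    scores = cross_val_score(model, X, y, cv=cv,
                             scoring="neg_root_mean_squared_error")
    return -scores.mean()

mlr_rmse = mean_rmse(LinearRegression(), X, y)
```

Because SalePrice is log-transformed, an RMSE computed this way on the real data would be in log-dollars.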

Machine Learning Models

Use Scikit-Learn library to implement machine learning models.

Multiple Linear Regression and Regularized Linear Regression

MLR is just a simple fit of the model to the data.

Since regularized linear regression applies the same penalty to every coefficient, need to standardize/normalize the features so the penalty does not depend on feature scale. Also look to tune the alpha value for each model. Ridge, Lasso and Elastic Net all use the pipeline below and go through GridSearchCV.

![]()
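A sketch of what such a scale-then-fit pipeline with a grid search over alpha could look like for Elastic Net. The alpha grid, the step names, and the synthetic data are assumptions; the original grid is not given in the text.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import ElasticNet
from sklearn.model_selection import GridSearchCV, KFold
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_regression(n_samples=200, n_features=20, n_informative=5,
                       noise=10.0, random_state=0)

# Scaling lives inside the pipeline so it is re-fitted on each training fold.
pipe = Pipeline([
    ("scaler", StandardScaler()),
    ("model", ElasticNet(max_iter=10000)),
])

# Hypothetical log-spaced grid for the regularization strength.
param_grid = {"model__alpha": np.logspace(-4, 0, 9)}

search = GridSearchCV(
    pipe,
    param_grid,
    cv=KFold(n_splits=10, shuffle=True, random_state=0),
    scoring="neg_root_mean_squared_error",
)
search.fit(X, y)

best_alpha = search.best_params_["model__alpha"]
best_rmse = -search.best_score_
```

Swapping `ElasticNet` for `Ridge` or `Lasso` in the `"model"` step reuses the same pipeline and grid search.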

Tree-based Models

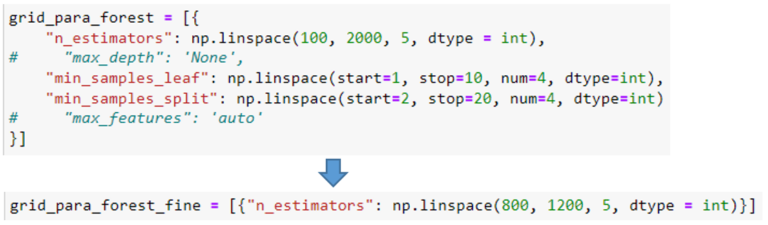

Tree-based models do not require standardization/normalization, therefore no pipeline is needed, just GridSearchCV. The approach was to start with a coarse parameter grid and then run a finer GridSearch around the best coarse values. Example below.
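The coarse-then-fine idea, sketched for a Random Forest on synthetic data. The tuned parameters, their ranges, the small forest size and the 3-fold split are all assumptions chosen to keep the example quick, not the grids used in the project.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import GridSearchCV

X, y = make_regression(n_samples=150, n_features=8, noise=10.0, random_state=0)

def grid_search(param_grid):
    """Run a grid search with a fixed forest size and seed so runs are comparable."""
    search = GridSearchCV(
        RandomForestRegressor(n_estimators=30, random_state=0),
        param_grid,
        cv=3,  # kept small for speed; the project uses 10 folds
        scoring="neg_root_mean_squared_error",
    )
    return search.fit(X, y)

# Pass 1: widely spaced values to find the promising region.
coarse = grid_search({"max_depth": [2, 6, 10], "min_samples_leaf": [1, 5, 10]})
best_depth = coarse.best_params_["max_depth"]
best_leaf = coarse.best_params_["min_samples_leaf"]

# Pass 2: neighbours of the best coarse values (sets remove repeated values).
fine = grid_search({
    "max_depth": sorted({max(1, best_depth - 1), best_depth, best_depth + 1}),
    "min_samples_leaf": sorted({max(1, best_leaf - 1), best_leaf, best_leaf + 1}),
})
```

Since the fine grid contains the best coarse point and the folds and seed are fixed, the second pass can only match or improve the first pass's score.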

Followed this approach for Random Forest and Gradient Boost and tried both the OneHotEncoded set and the all ordinally encoded set for each. Random Forest had better score with all ordinally encoded set and Gradient Boost had better score with the OneHotEncoded set.

SVM

Since common SVM kernels such as RBF are computed from distances between feature vectors, features need to be standardized/normalized so the ones with greater numeric ranges do not dominate. Therefore, similar to regularized linear regression, the pipeline below is used.
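To illustrate why scaling matters here, the sketch below compares an RBF SVR with and without a StandardScaler on synthetic data in which an uninformative feature has a far larger numeric range than the informative one. The data, the `C` value and the helper `cv_rmse` are assumptions for illustration.

```python
import numpy as np
from sklearn.model_selection import KFold, cross_val_score
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVR

rng = np.random.default_rng(0)
n = 200
small_range = rng.normal(0, 1, size=n)      # informative feature, small range
large_range = rng.normal(0, 1000, size=n)   # uninformative feature, huge range
X = np.column_stack([small_range, large_range])
y = 2.0 * small_range + rng.normal(0, 0.1, size=n)

cv = KFold(n_splits=5, shuffle=True, random_state=0)

def cv_rmse(model):
    """Mean cross-validated RMSE for a model on the synthetic data."""
    scores = cross_val_score(model, X, y, cv=cv,
                             scoring="neg_root_mean_squared_error")
    return -scores.mean()

# Without scaling, distances are dominated by the large-range feature.
rmse_unscaled = cv_rmse(SVR(kernel="rbf", C=10.0))

# With scaling inside a pipeline, both features contribute comparably.
rmse_scaled = cv_rmse(Pipeline([
    ("scaler", StandardScaler()),
    ("model", SVR(kernel="rbf", C=10.0)),
]))
```

The same pipeline can be passed to GridSearchCV to tune parameters such as `model__C`, in the same way as for the regularized linear models.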

Model Comparison and Competition Submission

As shown in the table, Elastic Net had the lowest mean RMSE out of all tested models. The best alpha value from GridSearchCV was ~0.0038.

For the competition submission, used the optimal alpha value and the Elastic Net model to predict SalePrice using the test set. Make sure to transform the test set using the same StandardScaler fitted to the training set. Since the predicted result is the natural log of SalePrice, apply the exponential function and round to the nearest integer to arrive at the predicted price in dollars.
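The prediction step could be sketched as below, with synthetic features standing in for the prepared data. Wrapping the scaler and model in one pipeline is one way to guarantee the test set is transformed with the scaler fitted on the training set. The Id values are illustrative; the `Id`/`SalePrice` column layout follows the competition's submission format.

```python
import numpy as np
import pandas as pd
from sklearn.linear_model import ElasticNet
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-ins for the prepared training and test features.
rng = np.random.default_rng(0)
X_train = rng.normal(size=(100, 3))
log_price = 12 + X_train @ np.array([0.3, 0.1, 0.0]) + rng.normal(0, 0.05, size=100)
X_test = rng.normal(size=(5, 3))
test_ids = np.arange(1461, 1466)  # illustrative Ids

# Scaler and model are fitted together on the training data only.
model = Pipeline([
    ("scaler", StandardScaler()),
    ("model", ElasticNet(alpha=0.0038)),
])
model.fit(X_train, log_price)

# Predictions are log prices: exponentiate and round to whole dollars.
pred = np.round(np.exp(model.predict(X_test))).astype(int)

submission = pd.DataFrame({"Id": test_ids, "SalePrice": pred})
```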

1304th Place out of ~4000 Submissions

Further Exploration

Feature Importance

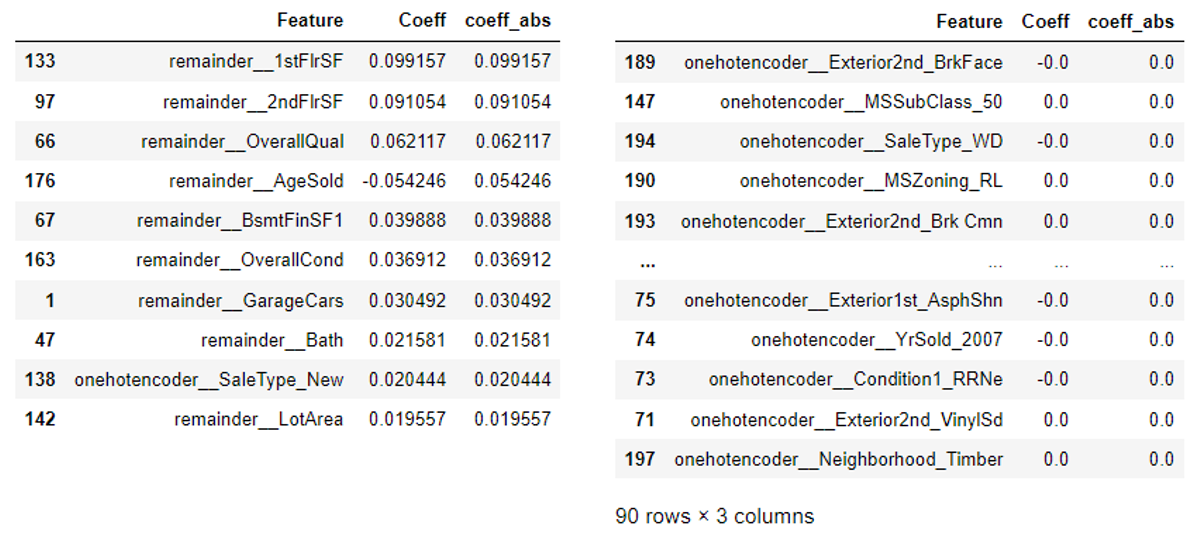

Since in this case Elastic Net was found to be the best model, it is possible to study the coefficients and discover which features impact SalePrice the most according to the model. Since the features have been standardized, can directly compare coefficient magnitude.

It can be seen that the surface area is the biggest factor contributing to the price, followed by the overall quality and age of the house. The top ten highest coefficients all logically make sense as major contributors to price. Also checked how many coefficients went to 0 with Elastic Net with the tuned alpha value. 90 features out of 198 became 0, suggesting that possibly around half of all included features do not contribute significantly to the target variable.
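Ranking standardized coefficients and counting those shrunk to zero can be done along these lines. The data, the feature names and the penalty settings are synthetic placeholders, not the fitted project model.

```python
import pandas as pd
from sklearn.datasets import make_regression
from sklearn.linear_model import ElasticNet
from sklearn.preprocessing import StandardScaler

X, y = make_regression(n_samples=200, n_features=20, n_informative=5,
                       noise=5.0, random_state=0)
feature_names = [f"feature_{i}" for i in range(X.shape[1])]  # placeholder names

# Standardize so coefficient magnitudes are directly comparable.
X_scaled = StandardScaler().fit_transform(X)
model = ElasticNet(alpha=1.0, l1_ratio=0.9).fit(X_scaled, y)

coefs = pd.Series(model.coef_, index=feature_names)

# Ten largest coefficients by absolute value.
top = coefs.abs().sort_values(ascending=False).head(10)

# Number of features the L1 part of the penalty removed entirely.
n_zero = int((coefs == 0).sum())
```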

Linear Regression Assumptions Revisited

Fit the MLR portion of the Elastic Net and check that linear regression assumptions mentioned previously are satisfied.

Normality

It can be seen from the plots that the residuals do appear to be normally distributed.

Heteroscedasticity

It can be seen from the plot that the residuals do appear to have relatively constant variance.
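As a numeric complement to the plots, the sketch below computes two simple summaries on synthetic data: the skewness of the residuals, and the ratio of residual spread between the upper and lower halves of the fitted values. This swaps the visual checks for rough numeric ones and is an illustration only, not the method used for the plots.

```python
import numpy as np
import pandas as pd
from sklearn.linear_model import LinearRegression

# Synthetic data with normal, constant-variance noise.
rng = np.random.default_rng(0)
X = rng.normal(size=(500, 3))
y = 12 + X @ np.array([0.3, 0.2, 0.1]) + rng.normal(0, 0.1, size=500)

model = LinearRegression().fit(X, y)
fitted = model.predict(X)
residuals = y - fitted

# Normality: skewness near 0 is consistent with a symmetric distribution.
resid_skew = pd.Series(residuals).skew()

# Constant variance: compare residual spread for low vs. high fitted values.
order = np.argsort(fitted)
low_half, high_half = residuals[order[:250]], residuals[order[250:]]
spread_ratio = high_half.std() / low_half.std()
```

A skewness far from 0 or a spread ratio far from 1 would suggest revisiting the transformations discussed earlier.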

Closing Remarks

Of all the machine learning models evaluated, Elastic Net with a tuned alpha value of ~0.0038 turned out to be the best performing in this case. Fitting the model to the data confirmed that factors such as square footage, overall quality and condition, age, garage size and number of bathrooms were, as expected, among the most important contributors to price. On the other hand, many of the provided features were shown to have little effect on the price. Although there are no established criteria or targets for prediction accuracy, the goal of providing good estimates of housing prices can be met by using the best performing model and parameters determined in this project.

Future Work

The author was made aware that other versions of the dataset exist that include detailed geographical information, which would make it possible to factor in the effect of location beyond just the categorical neighborhood feature. Since location is generally known to be one of the most important factors in determining home price, these extra dimensions would likely improve the accuracy of the model.

It is also possible to approach the project from a more descriptive modeling angle: instead of tuning the models for the most accurate predictions, the focus could shift to explaining observed phenomena, such as the reason for the discount on larger homes in terms of $/ft², along with other data analysis ideas.

Another approach could be to reduce dimensionality first, using regularized linear regression or principal component analysis to simplify the dataset before training and evaluating the models. With fewer features, it also becomes more feasible to address some of the linear regression assumptions, such as transforming features to establish actual linear relationships.

Would also like to try stacking/ensembling models to see if performance can be improved.