Funded or Failure? Predicting whether a Kickstarter project will succeed

Have you ever had a creative project in mind but lacked the funds to bring it to life?

Maybe you want to publish a cookbook, film a documentary, or release an album. With the growing popularity of crowd-funding sites, that idea doesn't have to be a daydream. Kickstarter has grown into one of the most popular crowd-funding platforms on the web since its inception in 2009. Over 140,000 projects have been funded thanks to the patronage of millions of Kickstarter backers. From smart watches to card games about combustible felines, Kickstarter has actualized projects that have impacted technology, design, and pop culture.

So you have your idea, and you are ready to deploy your Kickstarter page...

Not to deter you, but only about 36% of projects get funded. So what is the magic formula for getting your project fully funded? What factors affect whether a project succeeds or fails? This dataset, provided by Kaggle, can give us insight into Kickstarter projects launched between 2009 and 2018...

(Follow along on my RShiny App here; see my code on GitHub here)

The Dataset:

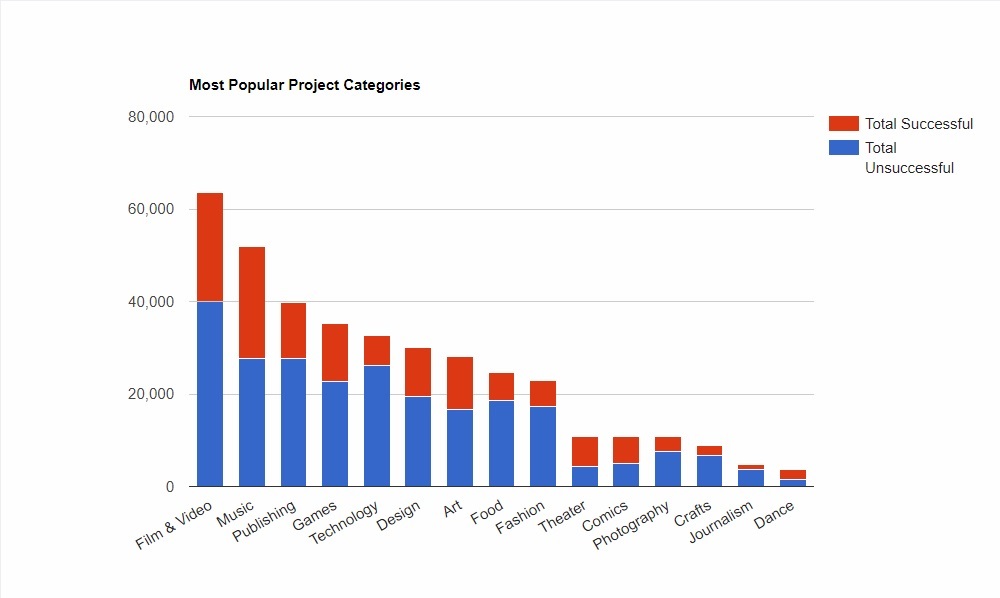

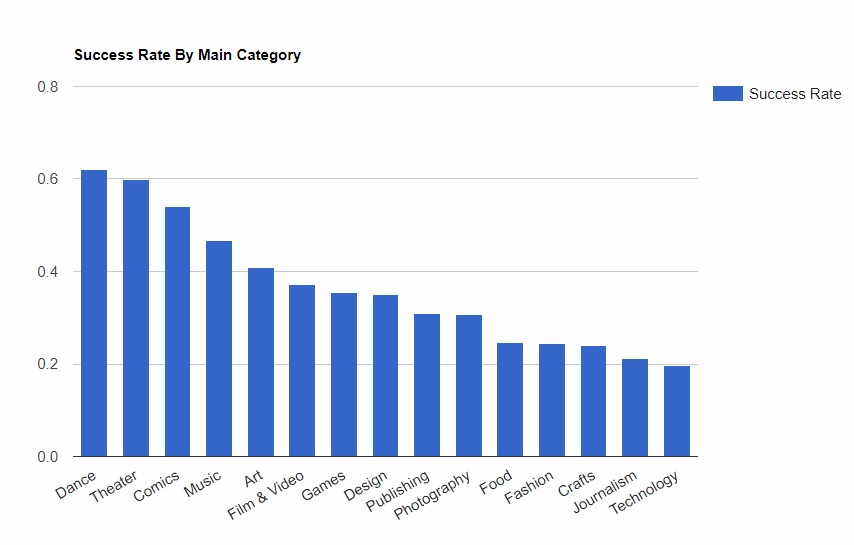

In the data table, projects are broken down into main categories. Which main categories are the most popular? Which have the highest likelihood of getting funded?

Here we see "Film & Video" projects are the most popular projects on Kickstarter; however, they do not have the highest success rate. If you are looking to bump up your chance of success, consider a dance, theater, comic, or music project. If you're planning a tech project, take heed: with a success rate of only 20%, technology projects are the hardest to get funded. Why is it so hard to fund a tech project?

Well, on average, technology projects have higher funding goals than projects in other categories. Good luck raising $150,000 to launch that smart watch!
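The popularity, success-rate, and average-goal comparisons above all come from a simple group-by over the project table. Here is a minimal Python sketch of that computation (not the author's R code). The record keys `main_category`, `state`, and `usd_goal` are assumptions loosely modeled on the Kaggle columns, any state other than "successful" is counted as not funded, and the three toy projects are made up purely to exercise the function.

```python
from collections import defaultdict

def category_summary(projects):
    """Count, success rate, and mean USD goal for each main category.

    Assumes each project is a dict with hypothetical keys 'main_category',
    'state', and 'usd_goal'; any state other than 'successful' counts as
    not funded.
    """
    counts = defaultdict(int)
    successes = defaultdict(int)
    goal_totals = defaultdict(float)
    for project in projects:
        category = project["main_category"]
        counts[category] += 1
        if project["state"] == "successful":
            successes[category] += 1
        goal_totals[category] += project["usd_goal"]
    return {
        category: {
            "count": counts[category],
            "success_rate": successes[category] / counts[category],
            "mean_goal": goal_totals[category] / counts[category],
        }
        for category in counts
    }

# Made-up toy records, only to exercise the function.
toy_projects = [
    {"main_category": "Technology", "state": "failed", "usd_goal": 150000},
    {"main_category": "Technology", "state": "successful", "usd_goal": 50000},
    {"main_category": "Dance", "state": "successful", "usd_goal": 3000},
]
summary = category_summary(toy_projects)

# Print the most popular categories first.
for category, stats in sorted(summary.items(), key=lambda kv: kv[1]["count"], reverse=True):
    print(category, stats)
```

Sorting the same summary by `success_rate` or `mean_goal` instead of `count` gives the other two rankings discussed above.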

Can we predict the outcome of a project before it is launched?

Before investing hours in project preparation and press buzz, wouldn't it be nice to know whether your idea even stands a chance of being funded?

It turns out we can use machine learning to estimate just that.

In the next steps, I train a Support Vector Machine (SVM) with a radial kernel on a randomized subset of the data to handle this task. Before training, I added two new features to the dataset, season launched and project duration, which I derived from existing columns through feature engineering:

- In my exploratory data analysis (EDA) of the Kickstarter dataset in my R Shiny app (see the calendar graph), I noticed that more projects tend to be launched in the summer months (June-September). Maybe winter holidays draw attention away from Kickstarter projects, or people are less likely to back a project when they have to spend their money on holiday gifts. So I simplified the "project launch date" feature by grouping the dates by season: summer, winter, spring, and fall.

- I also wondered whether the duration of a project affects its outcome. Very short projects might not have a chance to generate enough buzz, while long projects might deter backers because the deadline is nowhere in sight. By subtracting the project launch date from the project end date, I generated a new column with the total number of days of each project.
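Both engineered features take only a few lines to derive. The Python sketch below illustrates the idea and is not the author's implementation. It assumes launch and deadline values arrive as ISO-formatted strings such as "2015-08-11 12:12:28", and it uses standard Northern Hemisphere meteorological seasons (so September counts as fall), because the article does not state its exact month boundaries. Keeping only the date part means durations are counted in whole calendar days.

```python
from datetime import date

# Assumed month-to-season mapping (Northern Hemisphere meteorological seasons).
MONTH_TO_SEASON = {
    12: "winter", 1: "winter", 2: "winter",
    3: "spring", 4: "spring", 5: "spring",
    6: "summer", 7: "summer", 8: "summer",
    9: "fall", 10: "fall", 11: "fall",
}

def to_date(timestamp):
    """Keep only the 'YYYY-MM-DD' part of an ISO date or datetime string."""
    return date.fromisoformat(timestamp[:10])

def launch_season(launched):
    """Season in which a project was launched."""
    return MONTH_TO_SEASON[to_date(launched).month]

def duration_days(launched, deadline):
    """Project length in days: end (deadline) date minus launch date."""
    return (to_date(deadline) - to_date(launched)).days

season = launch_season("2015-08-11 12:12:28")
days = duration_days("2015-08-11 12:12:28", "2015-10-09")
print(season, days)
```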

My final list of features used to train the SVM model was: main category, subcategory, project goal (in USD), the country where the project is based, project duration in days, and the season when the project was launched.
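An SVM works on numeric vectors, so categorical features such as main category and season have to be encoded first, commonly as one-hot (0/1) indicator vectors. The radial (RBF) kernel then scores how similar two encoded projects are as exp(-gamma * squared distance). The Python sketch below shows just those two pieces. The level lists, the `encode` helper, and the pre-scaled numeric values are hypothetical. In practice a library would fit the model itself, for example scikit-learn's `SVC(kernel="rbf")` or the R e1071 package's `svm(..., kernel = "radial")`.

```python
import math

def one_hot(value, levels):
    """Encode a categorical value as a 0/1 indicator vector over fixed levels."""
    return [1.0 if value == level else 0.0 for level in levels]

def rbf_kernel(x, z, gamma):
    """Radial basis function kernel: exp(-gamma * ||x - z||^2)."""
    squared_distance = sum((a - b) ** 2 for a, b in zip(x, z))
    return math.exp(-gamma * squared_distance)

# Hypothetical level lists; the real data has many more categories, and
# subcategory and country would be one-hot encoded the same way.
CATEGORIES = ["Dance", "Film & Video", "Technology"]
SEASONS = ["fall", "spring", "summer", "winter"]

def encode(main_category, season, scaled_goal, scaled_duration):
    """Hypothetical encoder: one-hot categoricals plus already-standardized numerics."""
    return (one_hot(main_category, CATEGORIES)
            + one_hot(season, SEASONS)
            + [scaled_goal, scaled_duration])

tech_summer = encode("Technology", "summer", 1.2, 0.0)
tech_winter = encode("Technology", "winter", 1.2, 0.0)
dance_winter = encode("Dance", "winter", -0.8, 0.5)

gamma = 0.5
print(rbf_kernel(tech_summer, tech_winter, gamma))   # only the season differs
print(rbf_kernel(tech_summer, dance_winter, gamma))  # category, season, goal, and duration differ
```

Because the kernel depends on raw distances, a goal measured in dollars would swamp the 0/1 indicators unless the numeric features are standardized first. That is why the sketch takes already-scaled goal and duration values.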

Checking the Receiver Operating Characteristic (ROC) Area Under the Curve (AUC)

With the model trained, we apply it to the test set and evaluate how the model performs.

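In classification problems, it is often more informative to evaluate predicted probabilities than hard labels. For example, instead of only predicting whether a project succeeds or fails, the model can estimate the probability that it will succeed. An ROC curve shows how well those probabilities separate the two outcomes: it plots the true positive rate against the false positive rate as the decision threshold varies, and the area under that curve (AUC) summarizes the result in a single number.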
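To make the ROC-AUC idea concrete, here is a small Python sketch (not the author's code). It builds ROC points by sweeping a threshold over predicted success probabilities, then computes the AUC two equivalent ways: as the trapezoidal area under those points, and as the probability that a randomly chosen successful project scores higher than a randomly chosen failed one. The labels and scores are toy values, not output from the article's model, and the code assumes both classes are present.

```python
def roc_points(labels, scores):
    """(false positive rate, true positive rate) at every distinct threshold.

    labels: 1 = successful, 0 = failed; scores: predicted success probabilities.
    Assumes both classes are present.
    """
    positives = sum(labels)
    negatives = len(labels) - positives
    ranked = sorted(zip(scores, labels), reverse=True)
    points = [(0.0, 0.0)]
    tp = fp = 0
    i = 0
    while i < len(ranked):
        threshold = ranked[i][0]
        # Projects tied at this score cross the threshold together.
        while i < len(ranked) and ranked[i][0] == threshold:
            if ranked[i][1] == 1:
                tp += 1
            else:
                fp += 1
            i += 1
        points.append((fp / negatives, tp / positives))
    return points

def auc_trapezoid(points):
    """Area under a piecewise-linear ROC curve."""
    return sum((x2 - x1) * (y1 + y2) / 2
               for (x1, y1), (x2, y2) in zip(points, points[1:]))

def auc_pairwise(labels, scores):
    """Chance a random successful project outscores a random failed one (ties count half)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Toy labels and scores, not output from the article's model.
labels = [1, 0, 1, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.4, 0.35, 0.1]
print(auc_trapezoid(roc_points(labels, scores)))
print(auc_pairwise(labels, scores))
```

The pairwise view explains why 0.5 is the baseline: a model whose scores carry no information wins and loses these comparisons equally often.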

Let’s see the area under the curve for our model:

Our AUC is about 0.63. An AUC greater than 0.5 tells us the model is predicting better than a random guess; however, an AUC of 0.63 leaves plenty of room for improvement. Although we can't predict project outcomes with certainty, we are definitely on the right track! We will need more data and better feature engineering to improve the model's performance.