Ames, Iowa Housing Price Prediction: Application of Machine Learning Methods

Abstract:

Many factors can affect a property’s sale price: location, age, and size are all important features to consider. Our team analyzed and modeled data on home sales in Ames, Iowa using statistical techniques and machine learning methods, namely principal component analysis, regression models, boosting models, and stacked ensemble models. We used machine learning frameworks in R, such as caret and H2O.ai, to improve the efficiency of codebase management, model training, and tuning. Our final stacked model scored a root mean square error of 0.126 on Kaggle.

Team Ostrich Pillow, JLP: Devon Blumenthal, John Bentley, Siyuan Li, Yiyang Cao.

Introduction:

We, team Ostrich Pillow, a.k.a. Team JLP, were tasked with solving the housing sale price prediction problem “House Prices: Advanced Regression Techniques” from Kaggle.com, a data science competition platform.

The Ames Housing dataset was compiled by Dean De Cock and includes 79 features for roughly 3,000 houses, describing almost every aspect of residential properties in Ames, Iowa. With its high-dimensional mix of numerical and categorical variables, we believe the dataset is well suited to statistical analysis and machine learning methods that explore the relationship between the features and the sale price.

Data Cleaning, Exploratory Data Analysis, and Data Transformation:

Missing data and imputation:

After a brief inspection of the provided training and test datasets, we found that some features have a significant percentage of missing values. Some are missing because of the inherent nature of the feature, and some are simply missing for no stated reason.

For example, some values for side alley access are missing because the property has no side alley access, and some garage-related fields are missing because the property has no garage. We imputed these with an explicit “None” value, indicating that the property does not have the feature.

We are fairly certain that values for front lawn square footage were missing for some reason other than the properties having no front lawn. Therefore, we imputed these values with the neighborhood average.

There were also a few missing values in the electrical setup and standard utility features. We imputed them with the mode (the most frequent value) of each feature.
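The three imputation rules above can be sketched in a few lines. This is a minimal Python illustration, not the team's R code. The column names (`Alley`, `LotFrontage`, `Electrical`, `Neighborhood`) are assumed from the Kaggle data dictionary, and the rows are made up.

```python
from collections import Counter, defaultdict
from statistics import mean

# Hypothetical rows; None marks a missing value.
homes = [
    {"Neighborhood": "NAmes", "Alley": None, "LotFrontage": 70.0, "Electrical": "SBrkr"},
    {"Neighborhood": "NAmes", "Alley": "Grvl", "LotFrontage": None, "Electrical": "SBrkr"},
    {"Neighborhood": "NAmes", "Alley": None, "LotFrontage": 80.0, "Electrical": None},
    {"Neighborhood": "OldTown", "Alley": "Pave", "LotFrontage": 50.0, "Electrical": "FuseA"},
]

def impute(rows):
    rows = [dict(r) for r in rows]  # work on copies, leave the input untouched

    # Rule 1: missing because the feature does not exist -> explicit "None" level.
    for r in rows:
        if r["Alley"] is None:
            r["Alley"] = "None"

    # Rule 2: missing for an unknown reason -> mean of the same neighborhood.
    # (Assumes every neighborhood has at least one observed value.)
    observed = defaultdict(list)
    for r in rows:
        if r["LotFrontage"] is not None:
            observed[r["Neighborhood"]].append(r["LotFrontage"])
    for r in rows:
        if r["LotFrontage"] is None:
            r["LotFrontage"] = mean(observed[r["Neighborhood"]])

    # Rule 3: a handful of gaps in a categorical feature -> most frequent value.
    counts = Counter(r["Electrical"] for r in rows if r["Electrical"] is not None)
    mode = counts.most_common(1)[0][0]
    for r in rows:
        if r["Electrical"] is None:
            r["Electrical"] = mode
    return rows

cleaned = impute(homes)
```

Keeping the three rules separate makes it easy to audit which kind of missingness each column was assumed to have.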

Creating and discarding features:

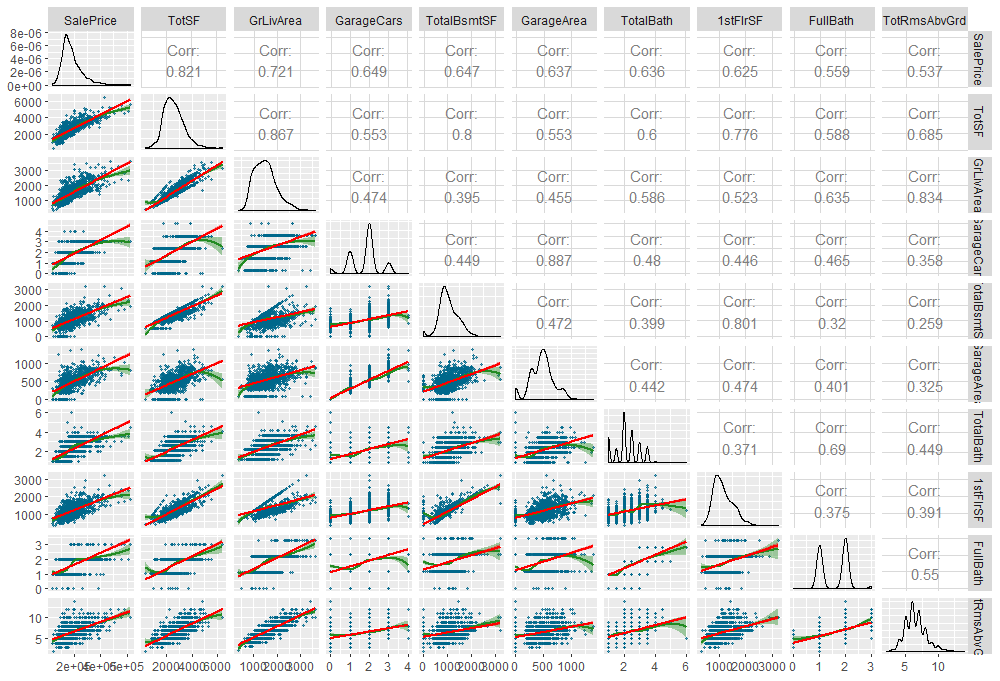

We created “TotalSF”, total square footage of the property, as the sum of “GrLivArea” (total above ground living area) and “TotalBsmtSF” (total basement square footage); “TotalBath”, total number of bathrooms using the sum of all the bathroom count variables from above ground and basement; “PorchTotSF”, total square footage of porch areas, combining the square footage values from different types of porch setups.
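As a rough Python sketch of these derived features (the team worked in R): `GrLivArea` and `TotalBsmtSF` come from the text above, while the bathroom and porch column names are assumptions taken from the Kaggle data dictionary, and the example home is invented.

```python
# Assumed column names for the bathroom counts and porch areas.
BATH_COLS = ["FullBath", "HalfBath", "BsmtFullBath", "BsmtHalfBath"]
PORCH_COLS = ["OpenPorchSF", "EnclosedPorch", "3SsnPorch", "ScreenPorch"]

def add_features(home):
    """Return a copy of one home record with the three combined features added."""
    out = dict(home)
    out["TotalSF"] = home["GrLivArea"] + home["TotalBsmtSF"]
    out["TotalBath"] = sum(home[c] for c in BATH_COLS)    # plain sum of all counts
    out["PorchTotSF"] = sum(home[c] for c in PORCH_COLS)
    return out

# Hypothetical home, for illustration only.
example = add_features({
    "GrLivArea": 1500, "TotalBsmtSF": 800,
    "FullBath": 2, "HalfBath": 1, "BsmtFullBath": 1, "BsmtHalfBath": 0,
    "OpenPorchSF": 40, "EnclosedPorch": 0, "3SsnPorch": 0, "ScreenPorch": 120,
})
```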

Exploratory Data Analysis (EDA):

A total of four data points were treated as outliers and removed from the dataset. All four had a very large GrLivArea (> 4000 square feet) and sale prices that were out of line with the rest of the dataset (two over $725,000 and two underpriced at less than $200,000).

Using the "corrplot" library, we created a correlation plot to determine which variables have the strongest correlations with sale price. As expected, greater square footage, garage size, and number of bathrooms, as well as a younger age, were all associated with a higher sale price.

Transformation of predictor and response variables:

From our EDA, we saw that many numerical features and the response variable are right-skewed. To bring the data closer to the assumptions of linear models, we log-transformed all numerical variables with a skewness greater than 2.

We also saw that our response variable, sale price, is right-skewed, indicating non-normality, which prompted us to run a Box-Cox analysis on it. The analysis gave a lambda close to 0, which suggests a log transformation of the response variable. After the log transformation, the response is approximately normal.
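The skewness rule can be illustrated with a short Python sketch (not the team's R code). Two assumptions are made here: skewness is the plain population moment estimate, and the transform is `log1p`, which is a common choice because it tolerates zeros. The text only says the variables were log-transformed.

```python
import math

def skewness(xs):
    """Population skewness: third central moment over the cubed standard deviation."""
    n = len(xs)
    m = sum(xs) / n
    m2 = sum((x - m) ** 2 for x in xs) / n
    m3 = sum((x - m) ** 3 for x in xs) / n
    return m3 / m2 ** 1.5

def log_transform_if_skewed(xs, threshold=2.0):
    """Apply log(1 + x) only when the feature's skewness exceeds the threshold."""
    if skewness(xs) > threshold:
        return [math.log1p(x) for x in xs]
    return list(xs)

# Made-up values: one feature with a long right tail, one symmetric feature.
long_tail = [1, 1, 1, 1, 1, 1, 1, 1, 1, 100]
symmetric = [1, 2, 3, 4, 5]

transformed_tail = log_transform_if_skewed(long_tail)   # gets transformed
unchanged = log_transform_if_skewed(symmetric)          # left as-is
```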
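For reference, the standard Box-Cox family of transformations for a positive response $y$ is written below. This is the textbook definition, not something specific to our code.

```latex
y^{(\lambda)} =
\begin{cases}
\dfrac{y^{\lambda} - 1}{\lambda}, & \lambda \neq 0 \\[6pt]
\log y, & \lambda = 0
\end{cases}
```

Because $(y^{\lambda} - 1)/\lambda \to \log y$ as $\lambda \to 0$, an estimated lambda near 0 points to the plain log transformation.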

Dummy-coding categorical variables:

We also dummy-coded the categorical variables hoping that the machine learning models would pick up impacts of specific feature values, potentially aiding in feature selection and model tuning.
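A minimal Python sketch of dummy coding follows (the team worked in R). It assumes reference-level coding, where the first level in sorted order is dropped so that a factor with F levels yields F - 1 binary columns. The `Alley` values are illustrative.

```python
def dummy_code(values, prefix):
    """Turn one categorical column into F - 1 binary columns (first level is the reference)."""
    levels = sorted(set(values))
    kept = levels[1:]  # drop the first level; it is implied when all dummies are 0
    return [{f"{prefix}_{lvl}": int(v == lvl) for lvl in kept} for v in values]

# Illustrative column with three levels -> two dummy columns.
alley_dummies = dummy_code(["Grvl", "None", "Pave", "None"], "Alley")
```

The dropped level is represented by a row of all zeros, which avoids perfectly collinear columns in a regression.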

At this point, we were ready to proceed to statistical analysis and machine learning implementations.

Principal Component Analysis (PCA):

We decided to experiment with PCA on the Ames data set, mostly as a learning experience but also to reduce dimensionality for possible later use in a KNN algorithm, and to reduce multicollinearity for multiple linear regression. We only applied PCA to the continuous variables, separating out the dummified categorical variables before calculating the covariance matrix.

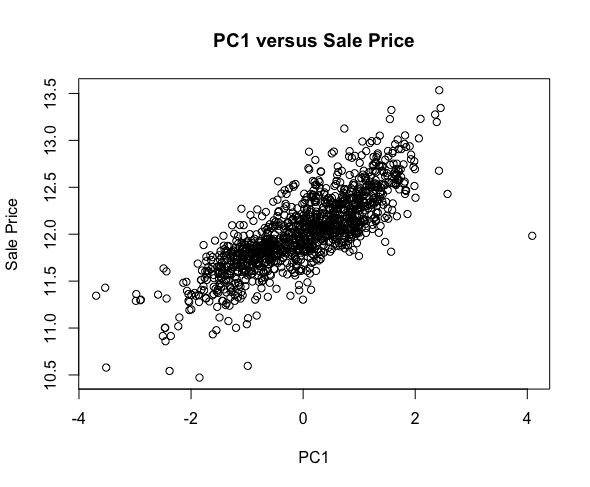

PCA revealed a very nice-looking linear relationship between the first principal component and the dependent variable (which was not included in the PCA transform), but this component, despite being the direction of greatest variance, accounted for only ~33% of total variance. There was virtually no linear relationship between the other principal components and the dependent variable.

PCA enabled this helpful visualization, which informed us that a multiple linear regression likely would not produce stellar results. Upon feeding the PCA-transformed data into several simple models (multiple linear regression, LASSO regression, RandomForest), we discovered that in general, the models performed slightly worse with the PCA-ed data than the unchanged data. This is unsurprising because we did lose some information when we selected only the most important principal components (only the top six components had eigenvalues > 1) and did not change anything about the data besides rearranging it into a new, rotated, orthonormal coordinate system.

| No High VIF Predictors | Categorical Variables | Multiple Linear Regression | Lasso Regression | Random Forest |
| --- | --- | --- | --- | --- |
| PCA-Transform | Include Categorical | .16 | .15 | .18 |
| PCA-Transform | Exclude Categorical | .22 | .22 | .21 |
| Original Data | Include Categorical | .16 | .15 | .17 |
| Original Data | Exclude Categorical | .21 | .21 | .18 |

| Include High VIF Predictors | Categorical Variables | Multiple Linear Regression | Lasso Regression | Random Forest |
| --- | --- | --- | --- | --- |
| PCA-Transform | Include Categorical | .15 | .14 | .17 |
| PCA-Transform | Exclude Categorical | .18 | .18 | .17 |
| Original Data | Include Categorical | .14 | .14 | .15 |
| Original Data | Exclude Categorical | .17 | .17 | .16 |
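To make the "eigenvalues > 1" selection rule and the resulting information loss concrete, here is a small Python sketch (not the team's R code). The eigenvalues are made-up numbers chosen only for illustration. They are not the actual spectrum of the Ames data.

```python
def summarize_components(eigenvalues, cutoff=1.0):
    """Apply the eigenvalue > cutoff rule and report the share of variance retained."""
    ordered = sorted(eigenvalues, reverse=True)
    total = sum(ordered)
    explained = [ev / total for ev in ordered]       # variance share per component
    kept = [ev for ev in ordered if ev > cutoff]
    return {
        "explained": explained,
        "n_kept": len(kept),
        "kept_share": sum(kept) / total,             # below 1 means information was dropped
    }

# Hypothetical eigenvalues of a correlation matrix with 8 standardized features.
summary = summarize_components([3.3, 1.6, 1.3, 1.2, 1.1, 1.05, 0.25, 0.2])
```

Whatever share is missing from `kept_share` is variance the downstream models never see, which is one way to read the slightly worse scores for the PCA-transformed rows.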

It would be interesting to feed the PCA-transformed data into a KNN model, where the benefits of reduced dimensionality might outweigh the loss of information. We have yet to try that, as we ended up focusing more of our attention on the exciting results of our ensembled models, but expect an update soon!

K-Nearest Neighbor Model:

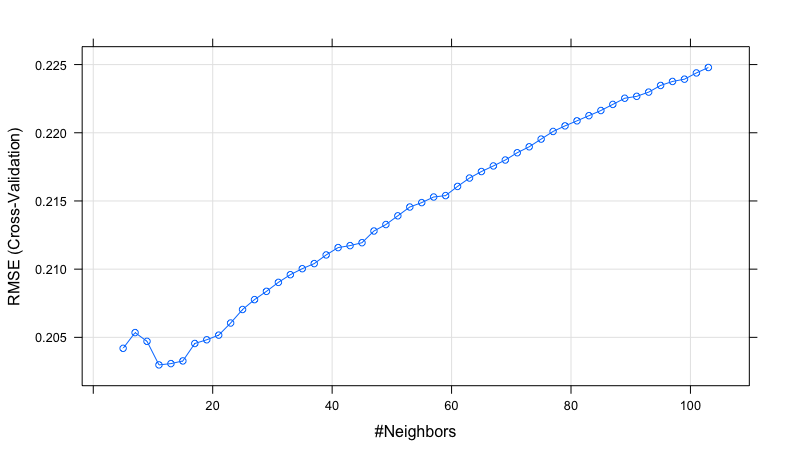

While k-Nearest Neighbor (kNN) is rarely a first choice for this kind of prediction, we used a kNN model, without the PCA-transformed data, as a baseline for the next predictive models. kNN predicts a new observation by taking the average outcome of its k nearest observations. Using cross-validation, we found k = 12 to be the optimal number of neighbors. With an 80-20 split of the data between training and test, the kNN model had a root mean square error of 0.148.
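The kNN prediction rule and the error metric can be sketched in plain Python (the team used R). Euclidean distance is an assumption, the toy data is invented, and in practice features would be scaled before measuring distances.

```python
import math

def knn_predict(train_X, train_y, query, k):
    """Average the outcomes of the k training rows closest to the query point."""
    by_distance = sorted(
        (math.dist(row, query), y) for row, y in zip(train_X, train_y)
    )
    nearest = by_distance[:k]
    return sum(y for _, y in nearest) / k

def rmse(actual, predicted):
    """Root mean square error between two equal-length sequences."""
    squared = [(a - p) ** 2 for a, p in zip(actual, predicted)]
    return math.sqrt(sum(squared) / len(squared))

# Toy data: one feature (square footage) and a log sale price as the outcome.
train_X = [(1000,), (1500,), (2000,), (3000,)]
train_y = [11.5, 11.9, 12.2, 12.8]

prediction = knn_predict(train_X, train_y, (1600,), k=2)  # averages the 1500 and 2000 homes
```

Small k follows the nearest homes closely and is noisy. Large k smooths toward the overall mean, which is why k is tuned by cross-validation.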

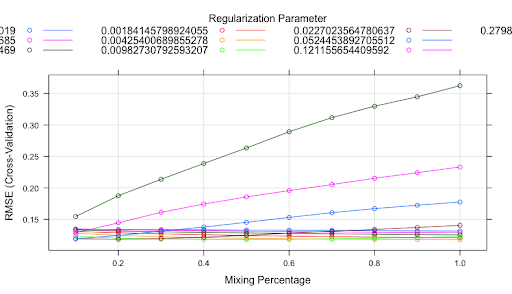

Elastic-Net Regression:

Elastic-net regression was the next model fit to the training data. Elastic-net is a combination of Ridge and Lasso regression, which are regression models with an added penalty. Ridge regression uses the L2 regularization term, while Lasso uses the L1 regularization term. Elastic-net mixes the two with two parameters: alpha, which sets the mixture between the two regularization terms, and lambda, which sets the overall strength of the penalty. Elastic-net needs dummy-coded factors: a factor with F levels is split into F - 1 binary features, each indicating one particular level. This led to the dataset having over 300 predictors.

After cross-validation, the best hyperparameter values were 1 for alpha, indicating a pure lasso regression, and 0.004 for lambda. One effect of a pure lasso regression is that some feature coefficients are shrunk to exactly zero, which acts as feature selection by removing them from the model.
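One common way to write the elastic-net objective is shown below. It uses the parameterization of the glmnet package, which we assume here for illustration, with $N$ observations, intercept $\beta_0$, and coefficient vector $\beta$.

```latex
\min_{\beta_0,\,\beta}\;
\frac{1}{2N}\sum_{i=1}^{N}\left(y_i - \beta_0 - x_i^{\top}\beta\right)^2
+ \lambda\left[\frac{1-\alpha}{2}\,\lVert\beta\rVert_2^2 + \alpha\,\lVert\beta\rVert_1\right]
```

Setting $\alpha = 0$ leaves only the L2 (ridge) term, $\alpha = 1$ leaves only the L1 (lasso) term, and $\lambda$ scales the whole penalty.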
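Why the L1 penalty produces exact zeros is easiest to see in a simplified setting. The Python sketch below assumes standardized, uncorrelated predictors, where the lasso solution is the least-squares coefficient passed through a soft-threshold. Real data is correlated, so this is an illustration rather than how the model was fit, and the coefficients are invented.

```python
def soft_threshold(coef, lam):
    """Shrink a coefficient toward zero by lam; anything within [-lam, lam] becomes 0."""
    if coef > lam:
        return coef - lam
    if coef < -lam:
        return coef + lam
    return 0.0

# Hypothetical least-squares coefficients, thresholded with lambda = 0.004.
ols = {"TotalSF": 0.25, "TotalBath": 0.03, "PorchTotSF": 0.002, "Age": -0.05}
lasso = {name: soft_threshold(b, 0.004) for name, b in ols.items()}

selected = [name for name, b in lasso.items() if b != 0.0]  # features that survive
```

A ridge penalty only rescales coefficients and never sets them to exactly zero, which is why the selection effect is specific to alpha values that include the L1 term.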

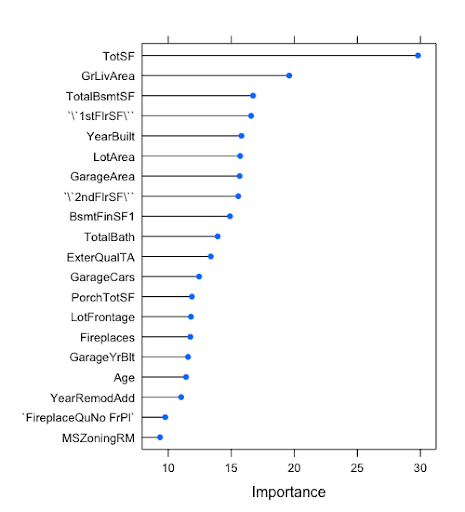

The model gives us an idea of what features were most effective in predicting housing prices. We see that Total Square Footage, one of the features we created, holds the highest predictive power for the model. After predicting on the test split, the elastic-net model had a root mean square error of 0.095.

Random Forest Models:

The third model used to predict housing prices is a random forest. Random forests are built from decision trees, but rather than creating one tree, they create hundreds of them and average their predictions. This reduces the overfitting that single decision trees typically suffer from. Rather than considering the entire set of features, random forests consider a random subset of features at each split, with the subset size set by the "mtry" parameter. The random forest was run on the same dummy-coded dataset used for the elastic-net model. This is unnecessary, because random forests can handle categorical features with more than two levels, but we kept it for the sake of continuity. Once again using cross-validation, the optimal size of the random subset of features was 66.
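The two sources of randomness in a random forest can be sketched as follows, in Python rather than the team's R. Bootstrap sampling of rows is standard for random forests but is not described above, and the feature names and row count are placeholders.

```python
import random

def bootstrap_rows(n_rows, rng):
    """Row indices used to grow one tree: n_rows draws with replacement."""
    return [rng.randrange(n_rows) for _ in range(n_rows)]

def split_candidates(feature_names, mtry, rng):
    """Features a single split is allowed to consider: mtry draws without replacement."""
    return rng.sample(feature_names, mtry)

rng = random.Random(0)                      # seeded for repeatability
features = [f"x{i}" for i in range(300)]    # placeholder names for ~300 predictors

rows_for_tree = bootstrap_rows(1000, rng)   # 1000 is an arbitrary row count
candidates = split_candidates(features, 66, rng)  # mtry = 66, drawn anew at every split
```

Because each split sees only a subset of features, strong predictors cannot dominate every tree, which makes the trees less correlated and their average more stable.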

Random forests also give an idea of feature importance. We once again see that the predictor Total Square Footage is the most important. The importance of features is fairly similar between the elastic-net and random forest models with some small differences. After predicting on the test split, the random forest model had a root mean square error of 0.119.

Gradient Boosting Models:

Gradient boosting models ensemble a group of simple, weak learners and make predictions using the weighted sum of their outputs. These models are built in a stage-wise manner, with each new learner fit to the errors that the previous learners left behind.
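The stage-wise idea can be shown with a deliberately tiny Python sketch (the team's models were built in R). It assumes squared-error loss, a single feature, and one-split "stumps" as the weak learners. The data and parameter values are invented.

```python
def fit_stump(xs, residuals):
    """Find the single split on x that best fits the residuals in the least-squares sense."""
    best = None
    for threshold in sorted(set(xs))[:-1]:          # every split leaves both sides non-empty
        left = [r for x, r in zip(xs, residuals) if x <= threshold]
        right = [r for x, r in zip(xs, residuals) if x > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        sse = (sum((r - left_mean) ** 2 for r in left)
               + sum((r - right_mean) ** 2 for r in right))
        if best is None or sse < best[0]:
            best = (sse, threshold, left_mean, right_mean)
    _, t, left_mean, right_mean = best
    return lambda x: left_mean if x <= t else right_mean

def boost(xs, ys, n_rounds=100, learning_rate=0.1):
    """Start from the mean, then repeatedly fit a stump to the current residuals."""
    base = sum(ys) / len(ys)
    preds = [base] * len(ys)
    stumps = []
    for _ in range(n_rounds):
        residuals = [y - p for y, p in zip(ys, preds)]
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        preds = [p + learning_rate * stump(x) for p, x in zip(preds, xs)]
    return lambda x: base + learning_rate * sum(s(x) for s in stumps)

# Toy data with a jump between x = 3 and x = 4.
xs = [1, 2, 3, 4, 5, 6]
ys = [1.0, 1.1, 0.9, 3.0, 3.1, 2.9]
model = boost(xs, ys)
```

The learning rate shrinks each stump's contribution, so many small corrections accumulate instead of a few large ones.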

Gradient Boosting Machine using decision trees:

One of the most commonly used weak learners for gradient boosting is the decision tree. To build a boosting model with decision trees, we build shallow trees, each only a few levels deep. In our case, after grid searching for the best parameters, we constructed the final model with 400 shallow trees, each with a depth of 3 and 3 terminal nodes. The final model achieved a root mean square error of 0.1025.

Extreme Gradient Boosting (XGBoost):

XGBoost is an open-source project that provides an efficient and scalable gradient boosting framework. Essentially, XGBoost is a more efficient framework compared with standard gradient boosting machines, and it includes additional functionality that improves training efficiency and, more importantly, model accuracy.

However, in our case, with the same structure as the GBM plus a 75% subsample rate (each tree is trained on a random portion of the training data to reduce overfitting), the XGBoost model performed slightly worse, with a root mean square error of 0.1096.

Using H2O.ai Machine Learning Framework:

Machine learning tools have evolved constantly in recent years, and we thought it would be interesting to find out whether there are more efficient frameworks that we could use to improve our models.

“H2O” is a machine learning framework developed by the teams at H2O.ai. Its backend is written in Java and runs computations in parallel, which lets the framework make fuller use of a computer’s computing power. The framework also includes many popular machine learning algorithms such as Random Forest, XGBoost, and even neural networks. H2O is available not only in R but also in Python, Scala, Java, and more. It features an interactive web console, so we can work with it not only through programming languages but also through a graphical user interface that supports data loading, training monitoring, and model inspection.

We used this framework to train the same Random Forest and Gradient Boosting models we obtained through caret. It was significantly more efficient at grid search and model training. On average, H2O was about 4 times faster than the regular R packages, which are bound by R’s memory and thread limits.

However, the framework did not give us better results. Both the Random Forest and Gradient Boosting Machine models showed similar accuracy on the test data, with root mean square errors of 0.1169 and 0.1056 respectively. We therefore went back to caret to explore stacked models.

Stacked Ensemble Model:

In an effort to obtain the best root mean square error possible, we ran a stacked model using the previous four models. A stacked ensemble model works by running each model over a cross-validated dataset. For each fold, predictions are calculated and saved. These predictions become new features, one per model. This created four new features, with each observation having a predicted value from each of the models. These features are then fed into a secondary model. We used another elastic-net for the secondary model, in an effort to reduce multicollinearity across the different models' predictions. The stacked model was what we submitted to Kaggle. In the end, our root mean square error was 0.126, indicating that there was some potential overfitting in our training models. This root mean square error was good enough to land within the top third of all submissions.
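The out-of-fold step of stacking can be sketched in Python as follows (the team's stack was built in R). The two base learners here, a mean predictor and a 1-nearest-neighbor rule, are hypothetical stand-ins for the four real models, and the fold assignment by index is a simplification.

```python
def fit_mean(xs, ys):
    """Stand-in base learner: always predicts the training mean."""
    m = sum(ys) / len(ys)
    return lambda x: m

def fit_1nn(xs, ys):
    """Stand-in base learner: predicts the outcome of the closest training point."""
    pairs = list(zip(xs, ys))
    return lambda x: min(pairs, key=lambda p: abs(p[0] - x))[1]

def out_of_fold_predictions(X, y, fit_fns, n_folds):
    """Return one row per observation and one column per base model.

    Each prediction comes from a model that never saw that observation in training.
    """
    n = len(X)
    oof = [[None] * len(fit_fns) for _ in range(n)]
    for fold in range(n_folds):
        held_out = [i for i in range(n) if i % n_folds == fold]
        train = [i for i in range(n) if i % n_folds != fold]
        for m, fit in enumerate(fit_fns):
            model = fit([X[i] for i in train], [y[i] for i in train])
            for i in held_out:
                oof[i][m] = model(X[i])
    return oof

# Toy data: the resulting columns are the new features for the secondary model.
X = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
y = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
meta_features = out_of_fold_predictions(X, y, [fit_mean, fit_1nn], n_folds=3)
```

Using held-out predictions matters because a secondary model trained on in-sample predictions would learn to trust whichever base model overfits the most.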

Conclusion:

We explored a multitude of machine learning algorithms and frameworks for predicting house prices in Ames, Iowa. Our best model, a stacked ensemble, performed well and scored in the top 30% of the Kaggle competition's leaderboard. Implementing and experimenting with various machine learning techniques to solve a business problem was quite a learning experience. Since the world of machine learning is constantly evolving, we hope to explore even more models and techniques that may give us more efficient and accurate models for this task. If you would like to see our code, please visit our repo >>here<<. Thank you for reading!