Data Analysis on Critic and User Movie Reviews


Introduction

Metacritic is one of the most popular review aggregators for all types of media. Staffers at Metacritic read critic reviews from The New York Times, The Washington Post, and other outlets, and assign each review a numerical grade based on their impression of it.

However, that process seems fairly subjective, and my main motivation was to try to reverse-engineer a review scoring system using some recently learned natural language processing (NLP) techniques. I also wanted to analyze the differences between how critics and users rate movies. All the tools used for the scraping and analysis below can be found at my GitHub repo.

Data Scraping

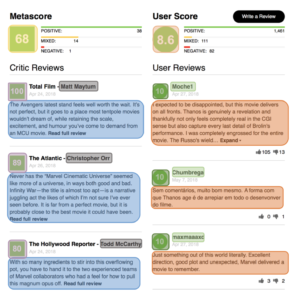

I used scrapy to gather review data on all of the most discussed movies from the years 2010 - 2018. An example of the elements scraped from each movie page can be found below:

With 100 movies per year, 800 in total, I grabbed 133,180 reviews split between critics and users.

Data Analysis

I started my analysis by looking at the divide between critics and users. The easiest way to visualize this was quantitatively. To better compare the two, I converted the user scores from a 0 - 10 scale to a 0 - 100 scale. After looking at the breakdown of genres among the most discussed movies, it became very clear that users are generally more forgiving than critics across the entire spectrum. It came as no surprise that more than 50% of the most discussed movies were action movies, thanks to the meteoric rise of Marvel superhero movies.
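The rescaling and the per-genre comparison described above can be sketched roughly as follows. This is a minimal illustration, not the code from the repo: the function names and the sample rows are made up, and the "gap" is simply the rescaled user score minus the metascore.

```python
from statistics import mean


def user_to_metascore_scale(user_score: float) -> float:
    """Map a 0-10 user score onto the 0-100 metascore scale."""
    if not 0 <= user_score <= 10:
        raise ValueError(f"user score out of range: {user_score}")
    return user_score * 10


def mean_gap_by_genre(rows):
    """Average (rescaled user score - metascore) per genre.

    A positive value means users were more generous than critics.
    """
    gaps = {}
    for _title, genre, metascore, user_score in rows:
        gap = user_to_metascore_scale(user_score) - metascore
        gaps.setdefault(genre, []).append(gap)
    return {genre: mean(values) for genre, values in gaps.items()}


# Made-up rows (title, genre, metascore, user score), for illustration only.
movies = [
    ("Movie A", "Action", 55, 7.1),
    ("Movie B", "Action", 70, 7.5),
    ("Movie C", "Drama", 80, 7.8),
]

gap_by_genre = mean_gap_by_genre(movies)
```

With real data, a positive average gap in most genres would match the observation that users tend to be more forgiving than critics.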

Curious about the biggest divides between the two groups, I looked at the 20 movies with the largest difference between metascore and user score.
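One way to pick out those movies is to sort by the absolute difference between the two scores. The sketch below assumes the user score has already been rescaled to 0 - 100; the function name and sample rows are hypothetical.

```python
def biggest_divides(rows, n=20):
    """Return the n rows with the largest |metascore - user score|.

    Each row is (title, metascore, user_score_0_to_100).
    """
    return sorted(rows, key=lambda row: abs(row[1] - row[2]), reverse=True)[:n]


# Made-up rows for illustration only.
sample = [
    ("Movie A", 60, 30),  # critics much higher than users
    ("Movie B", 30, 70),  # users much higher than critics
    ("Movie C", 75, 72),  # near agreement
]

top_two = biggest_divides(sample, n=2)
```

Using the absolute difference keeps both directions of disagreement in the same ranking, so movies favored by critics and movies favored by users both show up.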

Some of the divide between critics and users can be explained by factors outside the films themselves. For instance, critics gave the 2016 Ghostbusters film a moderate score of 60. The film drew a lot of controversy before its release from die-hard fans of the original. Bright on Netflix was a similar case in the opposite direction: it was universally panned by critics, whereas users gave it a second chance.

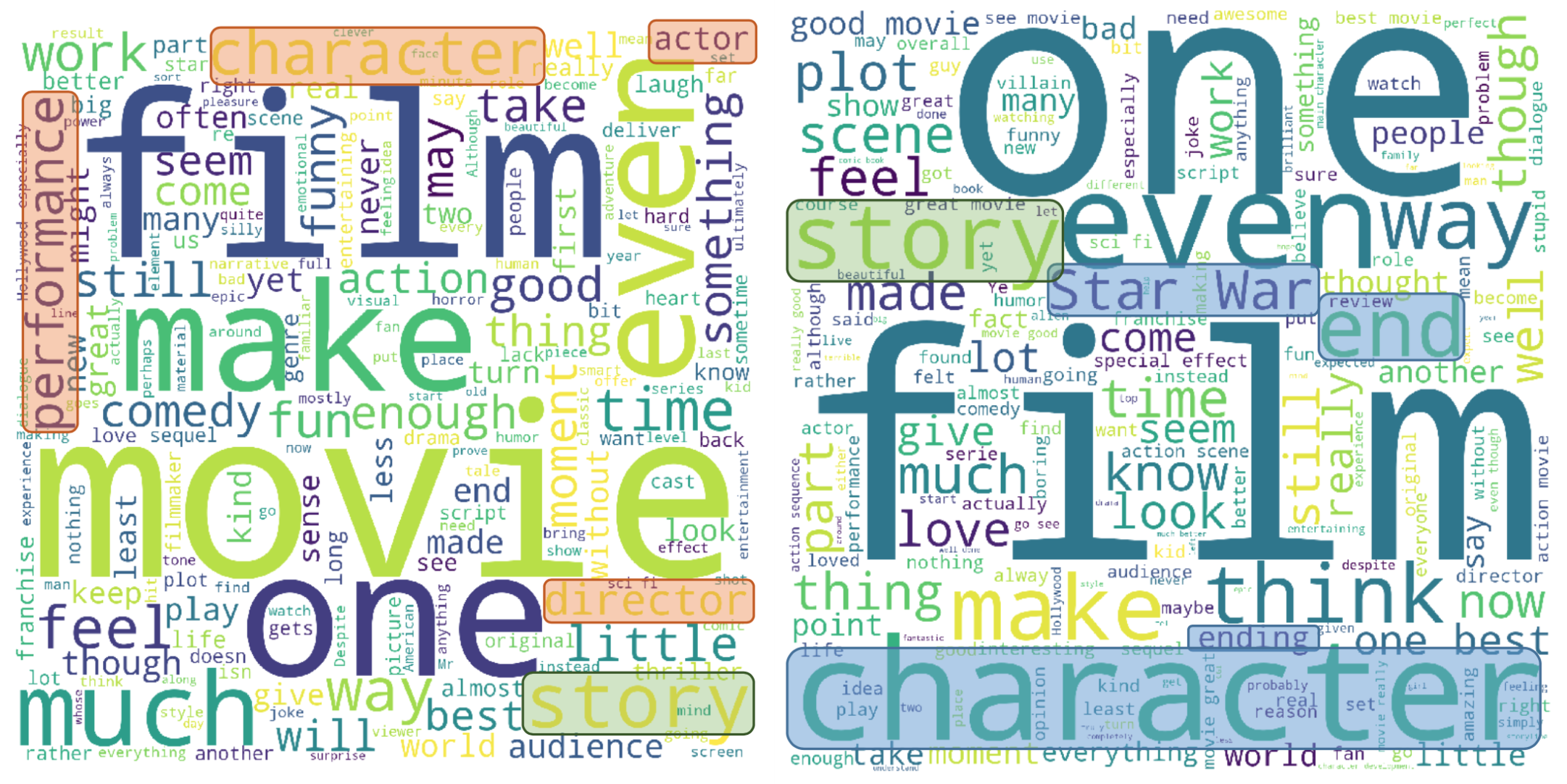

After looking at the reviews in a quantitative way, I decided to analyze word choice between the two groups. After filtering and lemmatizing all the reviews, I created a word cloud with words sized by relative frequency.
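The relative frequencies that drive the word sizing can be computed along these lines. This is a simplified sketch using only the standard library: the stopword list is a tiny made-up sample, and lemmatization is left out (a library such as NLTK or spaCy would be needed for that step).

```python
import re
from collections import Counter

# A tiny illustrative stopword list; a real analysis would use a fuller one.
STOPWORDS = frozenset(
    {"the", "a", "an", "and", "of", "is", "it", "this", "to", "in", "was", "but"}
)

TOKEN_PATTERN = re.compile(r"[a-z]+(?:'[a-z]+)?")


def tokenize(text):
    """Lowercase the text, split it into words, and drop stopwords."""
    return [tok for tok in TOKEN_PATTERN.findall(text.lower()) if tok not in STOPWORDS]


def relative_frequencies(reviews):
    """Map each remaining word to its share of all remaining words."""
    counts = Counter(tok for review in reviews for tok in tokenize(review))
    total = sum(counts.values())
    if total == 0:
        return {}
    return {word: count / total for word, count in counts.items()}


freqs = relative_frequencies(["The plot is great", "Great plot and great scene"])
```

Running this separately on the critic reviews and the user reviews gives two frequency tables that can be compared word by word.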

Word Cloud

[Figure: word clouds for critic reviews and user reviews]

From the word clouds, we can see some interesting choices that I have highlighted. Critics seem more focused on assessing the technical side of a movie, with words like "actor", "performance", and "director" showing up more often. Aspects of the story and the world of each movie show up more in the user reviews, with words like "plot", "scene", and "character" receiving more emphasis.

Another NLP technique I employed is sentiment analysis (SA) via the TextBlob Python library. With this tool, it is possible to estimate the mood of a passage of text on a scale of -1 to 1 (-1 being most negative and 1 being most positive). My thought was that I could measure the mood of each review and rescale it to a 0 - 100 range, on the assumption that a more positive review would carry a more positive message for SA to pick up.
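A minimal sketch of that rescaling is shown below. The linear mapping and the function names are my own illustration; the only TextBlob call used is `TextBlob(text).sentiment.polarity`, which returns a value between -1 and 1. TextBlob is imported inside the function so the rescaling helper can be used without it installed.

```python
def polarity_to_score(polarity: float) -> float:
    """Linearly map a polarity in [-1, 1] onto a 0-100 score."""
    if not -1.0 <= polarity <= 1.0:
        raise ValueError(f"polarity out of range: {polarity}")
    return (polarity + 1.0) * 50.0


def review_sa_score(text: str) -> float:
    """Estimate a 0-100 sentiment score for one review.

    Requires the third-party textblob package (pip install textblob).
    """
    from textblob import TextBlob  # imported lazily; not part of the stdlib

    return polarity_to_score(TextBlob(text).sentiment.polarity)
```

Under this mapping, a perfectly neutral review lands at 50, so a cluster of SA scores near the middle of the scale means the reviews read as close to neutral.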

As you can see from the graph above, SA read most reviews as fairly neutral, with the critic and user SA score distributions looking similar. The Pearson correlation coefficient between the user scores and the SA of their reviews was 0.5091, and for the critic reviews it was 0.2443. The large discrepancy between the two coefficients makes sense when you consider that reviewing movies is a critic's profession, so keeping a neutral tone while conveying an opinion is important in that professional environment.
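For reference, the Pearson correlation coefficient between two equal-length lists of scores can be computed as in the sketch below. It is a plain standard-library version with hypothetical names; in practice a library routine such as `scipy.stats.pearsonr` would do the same job.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    # Sum of products of deviations, and sums of squared deviations.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / sqrt(var_x * var_y)
```

Here `xs` would hold the given review scores and `ys` the rescaled SA scores for the same reviews, computed once for users and once for critics.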

Conclusion

The results show that SA alone is not an accurate tool for predicting a score from a given review. Future work would include implementing a TF-IDF analysis, which weights each term's frequency by an inverse document frequency factor, per review grade band (overall positive, mixed, and negative scores), to find the important words used in reviews within a given grade range. This should allow me to build a much better-fitting predictive score model.
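One possible reading of that TF-IDF idea is sketched below: all reviews in a grade band are pooled into a single "document", and each term is weighted by its frequency in the band times log(number of bands / number of bands containing the term). The band thresholds (61 and 40) and all names here are assumptions for illustration, not something taken from the analysis above.

```python
import math
from collections import Counter


def grade_band(score: float) -> str:
    """Assign a 0-100 score to a grade band (thresholds are assumed)."""
    if score >= 61:
        return "positive"
    if score >= 40:
        return "mixed"
    return "negative"


def tfidf_by_band(reviews):
    """Compute TF-IDF weights per grade band.

    reviews: iterable of (score, tokens), where tokens is a list of words.
    Returns {band: {term: weight}}.
    """
    band_counts = {}
    for score, tokens in reviews:
        band_counts.setdefault(grade_band(score), Counter()).update(tokens)

    # Document frequency: in how many bands does each term appear?
    doc_freq = Counter()
    for counts in band_counts.values():
        doc_freq.update(counts.keys())

    n_bands = len(band_counts)
    weights = {}
    for band, counts in band_counts.items():
        total = sum(counts.values())
        weights[band] = {
            term: (count / total) * math.log(n_bands / doc_freq[term])
            for term, count in counts.items()
        }
    return weights


# Made-up tokenized reviews for illustration only.
weights = tfidf_by_band(
    [
        (90, ["great", "movie"]),
        (50, ["okay", "movie"]),
        (20, ["boring", "movie"]),
    ]
)
```

Terms that appear in every band, like "movie" in the sample, get a weight of zero, while terms specific to one band stand out, which is the property that would make them useful features for a score predictor.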