BookLab: Helping You Discover New Books With Machine Learning

Overview

For our capstone project, the team created BookLab, a book recommendation engine for Barnes & Noble, a traditional brick-and-mortar bookstore, to help it increase book sales and customer loyalty. We combined an ensemble machine learning model (Random Forest) with collaborative filtering so that BookLab's recommendations go beyond simple book and author matching.

I. Project Background

Target Audience

We envision BookLab as an app that helps customers of traditional brick-and-mortar booksellers like Barnes & Noble find books that meet their needs, whether for school, work, or leisure reading. We feel that B&N is lagging behind Amazon, the current market leader in customer engagement and monetization through data analytics. B&N's website allows customers to search for books by title, author, or category. However, it does not offer book recommendations based on personal preferences, such as a customer's favorite books.

In the example above, a customer searches for Harry Potter and the search engine returns all Harry Potter books and memorabilia. However, unlike Amazon, the site has no feature that recommends similar books.

Searching for the same book on Amazon also gives the customer a selection of similar books, not necessarily by J.K. Rowling. “Diary of a Wimpy Kid: Double Down” by Jeff Kinney and “Magnus Chase and the Gods of Asgard, Book 2: The Hammer of Thor” by Rick Riordan were among the algorithm's top 5 recommendations.

Presenting these two books of a similar genre but by different authors motivates the customer to explore new books. This benefits both the customer and the bookstore.

Goals

We want to help Barnes & Noble’s customers find great books that will inspire them, make them laugh, make them cry, and spark their curiosity: books that will turn them into book lovers who keep returning to B&N’s website to discover new books. To do this, we will:

- analyze reader behavior and preferences using EDA and clustering

- develop a machine learning algorithm that predicts the reader satisfaction rate for books

- create a recommendation engine algorithm to select the top matches for their needs

- design a customer-friendly interface that can be used by bookstore specialists and customers

Languages, Tools, Platforms

- Languages: R, Python

- Platforms: Spark, Data Science Studio, GraphLab

- APIs: Google Books, Goodreads

II. Dataset and Pipeline

We sourced book ratings data from the University of Freiburg’s Department of Computer Science, which scraped data from the Book-Crossing website with the permission of the website owner in 2004. The dataset contained 278,858 users (anonymized but with demographic information) providing 1,149,780 ratings (explicit / implicit) for 271,379 books.

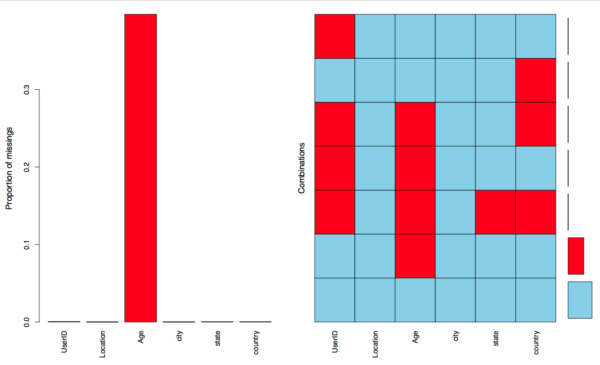

Similar to real business scenarios, our dataset had missing information and formatting issues. Upon inspection, we saw that we had to deal with the following challenges:

- Formatting issues such as misspelled City, State, and Country information

- Book title issues especially for non-English titles

- Missing user data such as age and location (City, State, and Country)

- Read but unrated books exceeding read and rated books

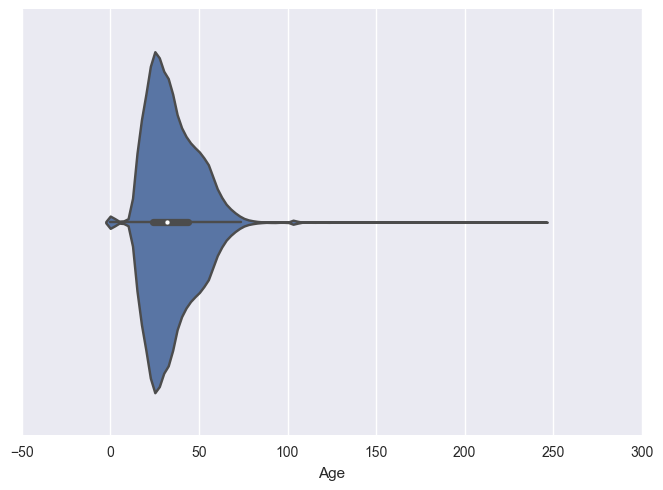

- Implausible user ages, ranging from over 100 up to 250 years old

Our approach was to pre-process the data carefully, preserving the original data structure as much as possible to avoid introducing bias. The appendix below details this process.

Apart from this dataset, the team used the Google Books API and Goodreads API to gather the following features:
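As a rough illustration of the kind of conservative cleaning described above, the sketch below marks implausible ages as missing instead of dropping the user record. The function name `clean_age`, the lower bound of 5, and the sample rows are assumptions made for this example, not the project's actual code or data.

```python
# Hypothetical sketch: keep every user row, but blank out ages that cannot be real.
MIN_AGE, MAX_AGE = 5, 100  # assumed plausibility bounds; only the upper bound echoes the text

def clean_age(raw_age):
    """Return the age as an int, or None when it is missing or implausible."""
    try:
        age = int(float(raw_age))
    except (TypeError, ValueError, OverflowError):
        return None  # missing or non-numeric value such as "NULL"
    return age if MIN_AGE <= age <= MAX_AGE else None

# Made-up rows for illustration only
users = [
    {"user_id": 1, "age": "34"},
    {"user_id": 2, "age": "NULL"},
    {"user_id": 3, "age": "250"},
]
cleaned = [{**u, "age": clean_age(u["age"])} for u in users]
print(cleaned)
```

Keeping the row and setting only the bad field to missing preserves the user's ratings, which matters more to the recommender than the age itself.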

- Book Genre

- Page Count

- Maturity

III. Reader Insights from Exploratory Data Analysis

Insight 1: Global Readers Are Using Social Media To Feed Their Love for Books

To get a quick understanding of reader demographics, we plotted reader locations on a geographical map. Book-Crossing has users from all over the world, with the majority of readers coming from the United States. There were also readers from the African continent, namely from Egypt, South Africa, and Nigeria.

Insight 2: The Young and Dissatisfied, the 30s and Happy

It is interesting to note that, on average, most of the low ratings came from Book-Crossing users between the ages of 16 and 18. On the other hand, most readers in their 30s rated their books higher on average.

It would be interesting to find out what drove these younger readers to rate books lower. That will be in another post.

Insight 3: Readers Seem Happier to Escape Reality

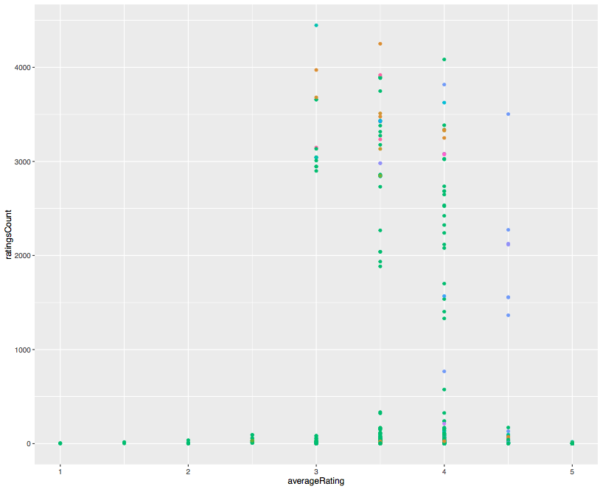

Fiction, represented by green points, appears to be the genre most read by Book-Crossing members. This should be taken with a grain of salt, as non-fiction books appear to be sparsely represented in the dataset.

Insight 4: Short Stories versus Long Stories Ratings

It appears that shorter books with fewer than 250 pages are more commonly rated low. The same pattern seems to hold for books with 750 pages or more. After further research, we found that "one reason it's harder for a new author to sell a 140,000 word manuscript is the size of the book. A 500+ page book is going to take up the space of almost two, 300 page books on the shelves. It's also going to cost more for the publishers to produce, so unless the author is well known, the book stores aren't going to stock that many copies of the 'door-stopper' novel as compared to the thinner novel."

IV. Supervised Learning: Predicting Book Approval Rating Classification

Since the team found more value in determining which books lead to high or low satisfaction, books with no ratings in our overall dataset were excluded from the training dataset. Below are two graphs breaking down the counts of rated and unrated book titles.

Model Selection: Logistic Regression and Random Forest

The team decided to use Logistic Regression and Random Forest to perform a 10-class prediction. We expected Logistic Regression to give us a highly interpretable model that would be easy to explain to B&N's management. Random Forest, on the other hand, seemed more robust for our type of dataset because it copes better with uneven distributions and outliers, which should in theory give us more accurate predictions in terms of sensitivity and specificity.

Cross-Validation: Imbalanced Classes

We saw that most of the reviews were 8-10 and realized that we were faced with an imbalanced target class challenge. First, we performed a K-Fold cross-validation split on the entire rated dataset.

Performing cross-validation and then fitting the models on the rated dataset with 10 classes resulted in low accuracy, low sensitivity, and low specificity.
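To make the imbalance problem concrete, here is a minimal sketch of a plain (non-stratified) K-Fold split that prints the class counts in each held-out fold. The helper `k_fold_indices` and the toy ratings are assumptions for illustration; the team's actual split and data are not reproduced here.

```python
import random
from collections import Counter

def k_fold_indices(n_samples, k, seed=0):
    """Shuffle indices 0..n_samples-1 and split them into k nearly equal folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    return [indices[i::k] for i in range(k)]

# Made-up ratings, skewed toward high values like the real dataset
ratings = [9] * 60 + [8] * 25 + [5] * 10 + [2] * 5
folds = k_fold_indices(len(ratings), k=5)

for fold_no, test_idx in enumerate(folds):
    held_out = set(test_idx)
    train_idx = [i for i in range(len(ratings)) if i not in held_out]
    # Without stratification, rare classes can be thin or absent in a fold
    print(fold_no, len(train_idx), Counter(ratings[i] for i in test_idx))
```

With only a handful of low ratings, some folds may contain very few of them, which is one plausible reason per-class sensitivity suffers with 10 classes.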

Tuning the Model and Feature Engineering

To improve the predictive power of the models, the team revised the problem into a 3-class prediction. With this revision, the model performed better overall with a higher AUC.
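One way to picture the 3-class revision is a simple binning of the 1-10 ratings. The sketch below is hypothetical: the cut-offs (1-4, 5-7, 8-10) and the middle label "Medium" are assumptions chosen for illustration, since the post does not state the thresholds used.

```python
def rating_to_class(rating, low_max=4, medium_max=7):
    """Map an explicit 1-10 rating to 'Low', 'Medium', or 'High'.

    The default cut-offs are illustrative assumptions, not the project's values.
    """
    if not 1 <= rating <= 10:
        raise ValueError("expected an explicit rating between 1 and 10")
    if rating <= low_max:
        return "Low"
    if rating <= medium_max:
        return "Medium"
    return "High"

print([rating_to_class(r) for r in (2, 6, 9)])
```

Collapsing ten sparse classes into three gives each class more training examples, at the cost of a coarser prediction.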

Resampling: Under-sampling and Over-sampling

This updated model performs much better than random classification and the previous model. However, the team can make a third adjustment using resampling methods that under-sample the majority class (in this case, the High Rating class) and over-sample the minority class (Low Rating).

In a separate blog post, the team will test penalized models, such as penalized SVM and penalized LDA, which impose an additional cost for incorrect minority-class predictions, to see whether they perform better than model 2.

The team recognizes that it is essential for BookLab to offer higher sensitivity in detecting lower-rated books. As part of our trust-building efforts, we want to decrease the chances of users encountering lower-rated books.
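A minimal sketch of random under- and over-sampling follows, assuming each training row carries a class label. The function `rebalance`, the target size, and the toy rows are hypothetical and only illustrate the idea; this is not the resampling the team ran.

```python
import random
from collections import Counter, defaultdict

def rebalance(rows, label_of, target_size, seed=0):
    """Bring every class to target_size rows.

    Larger classes are under-sampled without replacement; smaller classes keep
    all their rows and are topped up with random duplicates (over-sampling).
    """
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for row in rows:
        by_class[label_of(row)].append(row)
    balanced = []
    for members in by_class.values():
        if len(members) > target_size:
            balanced.extend(rng.sample(members, target_size))
        else:
            extra = rng.choices(members, k=target_size - len(members))
            balanced.extend(members + extra)
    rng.shuffle(balanced)
    return balanced

# Made-up, imbalanced training rows: (book id, rating class)
rows = (
    [("book%d" % i, "High") for i in range(80)]
    + [("book%d" % i, "Medium") for i in range(80, 95)]
    + [("book%d" % i, "Low") for i in range(95, 100)]
)
balanced = rebalance(rows, label_of=lambda row: row[1], target_size=20)
print(Counter(label for _, label in balanced))
```

Resampling of this kind should be applied to the training portion of each fold only, so that duplicated rows never leak into the held-out data.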
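Sensitivity for the Low class can be checked directly from predictions by treating Low as the positive class. The sketch below uses made-up labels and a hypothetical helper, `sensitivity_specificity`, to show the calculation.

```python
def sensitivity_specificity(y_true, y_pred, positive):
    """One-vs-rest sensitivity (recall) and specificity for the `positive` class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    tn = sum(t != positive and p != positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    sensitivity = tp / (tp + fn) if tp + fn else float("nan")
    specificity = tn / (tn + fp) if tn + fp else float("nan")
    return sensitivity, specificity

# Made-up labels for illustration only
y_true = ["Low", "Low", "High", "High", "Medium", "Low"]
y_pred = ["Low", "High", "High", "High", "Low", "Low"]
sens, spec = sensitivity_specificity(y_true, y_pred, positive="Low")
print(round(sens, 3), round(spec, 3))
```

Here two of the three truly low-rated books are caught, so sensitivity for Low is 2/3; a model tuned for trust-building would aim to push that number up.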

V. Unsupervised Learning: Book Recommendation

Collaborative Filtering

The team used Collaborative Filtering (CF), a distance-based similarity scoring algorithm, to build BookLab, our recommendation engine. Since the Book-Crossing dataset has many zero (implicit) ratings, we replaced these with average ratings where possible (for details, see Appendix B). We then re-tested our engine with the enhanced dataset and observed that the number of ratings available to CF does affect the recommendations made by the engine.

Book Recommendation Functions

For details, see Appendix C.

In addition to basic ETL functions for loading data and transforming data structures, we have three main types of functions:

- Functions that calculate a distance-based similarity score:

  - sim_euclidean: calculates the Euclidean distance between user1 and user2.

  - sim_pearson: calculates the Pearson correlation coefficient for user1 and user2.

- Function topMatches, which finds similar users or items:

  - Given a prefs matrix and a user as input, it returns the top-matched similar users.

  - Given a critics matrix and a book title as input, it returns the top-matched similar books.

- Function getRecommendations, which finds items for users or users for items:

  - Given a prefs matrix and a user as input, it returns a few book recommendations.

  - Given a critics matrix and a book as input, it returns a few users who may want to read the book.
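The functions listed above can be sketched roughly as follows. This is a simplified illustration, not Caraciolo's code: the function names come from the list, but the signatures, the 1 / (1 + distance) normalization in sim_euclidean, and the toy prefs data are assumptions.

```python
from math import sqrt

def sim_euclidean(prefs, a, b):
    """Similarity in (0, 1]: 1 / (1 + Euclidean distance) over items both have rated."""
    shared = [item for item in prefs[a] if item in prefs[b]]
    if not shared:
        return 0.0
    distance = sqrt(sum((prefs[a][i] - prefs[b][i]) ** 2 for i in shared))
    return 1.0 / (1.0 + distance)

def sim_pearson(prefs, a, b):
    """Pearson correlation coefficient over items both have rated (0.0 if undefined)."""
    shared = [item for item in prefs[a] if item in prefs[b]]
    n = len(shared)
    if n == 0:
        return 0.0
    xs = [prefs[a][i] for i in shared]
    ys = [prefs[b][i] for i in shared]
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    denom = sqrt(sum((x - mean_x) ** 2 for x in xs) * sum((y - mean_y) ** 2 for y in ys))
    return cov / denom if denom else 0.0

def topMatches(prefs, key, n=5, similarity=sim_pearson):
    """Return the n rows of prefs most similar to `key` as (score, name) pairs."""
    scores = [(similarity(prefs, key, other), other) for other in prefs if other != key]
    scores.sort(reverse=True)
    return scores[:n]

def getRecommendations(prefs, key, n=5, similarity=sim_pearson):
    """Rank items `key` has not rated by the similarity-weighted average of others' ratings."""
    totals, sim_sums = {}, {}
    for other in prefs:
        if other == key:
            continue
        sim = similarity(prefs, key, other)
        if sim <= 0:
            continue  # ignore dissimilar or unrelated rows
        for item, rating in prefs[other].items():
            if item not in prefs[key]:
                totals[item] = totals.get(item, 0.0) + rating * sim
                sim_sums[item] = sim_sums.get(item, 0.0) + sim
    rankings = [(totals[item] / sim_sums[item], item) for item in totals]
    rankings.sort(reverse=True)
    return rankings[:n]

# Made-up ratings: prefs[user][book] = rating
prefs = {
    "u1": {"A": 9, "B": 4, "C": 8},
    "u2": {"A": 8, "B": 5, "C": 7, "D": 9},
    "u3": {"A": 3, "B": 9, "D": 2},
}
print(topMatches(prefs, "u1", n=1))
print(getRecommendations(prefs, "u1"))
```

Because the functions only assume a dictionary of dictionaries, passing the transposed critics matrix instead of prefs makes topMatches return similar books and getRecommendations return candidate readers for a book.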

Experiment & Observation

For details, see Appendix B.

We tested the functions topMatches and getRecommendations with:

- Euclidean distance and Pearson correlation.

- The original dataset (fewer ratings) and our enhanced dataset (more ratings).

We cannot confirm whether the recommendations made are valid, since:

- The dataset is not ideally clean.

- The dataset does not have enough information about users or books.

- Marcel Caraciolo’s approach, which our engine is based on, does not make use of user profiles.

But we do observe that the number of ratings available to CF does affect the recommendations made by the engine.

More Sophisticated Model

Although Marcel Caraciolo’s collaborative filtering algorithm is simple, it does provide us with many basic functionalities to build a book recommendation engine. We believe we can build a more sophisticated engine by combining the important features we identified in the machine learning part of this project.

For example, “category” and “publisher” are two important features we identified. We can expand our data with “category” and “publisher”.

Let’s say we have one million user-item-rating records available.

Case-1

Case-2

Basically,

- For case-1, we reduce our data using the user profile in order to provide reasonable recommendations as soon as possible.

- For case-2, we expand our feature space and hope to cover more potential readers.

Moreover,

- For case-2, the reason we pick an existing book that is similar to the new book in Action-1 is to deal with the cold-start problem.

- Let's say James' friend M, who has never bought from us, wants our book recommendations. How can we handle the cold-start problem of a new user in case-1? In this case, we first ask her about her taste (category, publisher, ...), then follow the same Action-2 to provide her with our recommendations.

BookLab Interface Using GraphLab and Python

We wanted a user interface through which both clients and customers could experience BookLab’s recommendation system. This initial version uses Python to perform Collaborative Filtering and shows the results in a GraphLab user interface. GraphLab's syntax and objects are quite similar to Python's, which made it faster for the team to learn it and design an interface. For example, GraphLab has its own versions of data frames and arrays, called SFrames and SArrays.

This initial version lets users get book recommendations using an ISBN or book title. The idea is that users type in a favorite book (its ISBN) and BookLab provides five new book recommendations.

VI. Business Application

BookLab can be implemented by Barnes & Noble using its own proprietary dataset. We expect that, with the rich dataset it has from its BN Members and over 6 million books, BookLab will produce more accurate book classification and recommendation results than it did with the more limited dataset the team used for this capstone.

The recommendation algorithm can also be used for more personalized email marketing campaigns to BN members, in which monthly TopMatch book alerts are sent instead of generic email ads.

Future Work

- Apply SMOTE data balancing and penalized models to check whether better ROC, sensitivity, and specificity can be achieved

- Add more book features such as pricing via scraping to understand price sensitivity of customers

- Identify interesting customer clusters after the addition of more features

Sources & Credits

- Book Crossing Dataset

- Barnes & Noble website

- Amazon Website

- Collective Intelligence

- Quora

- Collaborative Filtering : Implementation with Python

Appendix

Appendix A - Experiment & Observation

Appendix B - Imputation

Step-1-Baseline

- BRCF.1.Baseline.ipynb is used to check Caraciolo's implementation against the Book-Crossing Dataset.

- Our baseline model produced the same results as shown in Caraciolo's article.

- However, we observed that many exceptions occurred during data loading and that Caraciolo only used non-zero ratings:

- There are 1,149,781 ratings in BX-Book-Ratings.csv.

- When loading it with Caraciolo's implementation, there is 1 Value exception and there are 49,818 Key exceptions.

- Only 383,853 non-zero ratings are used to build the dictionary prefs (for the user-based filter), which has 77,805 entries.

- We believe we can treat zero ratings as missing values and impute them with the average rating.

- Let's say 100 users bought Book-A, but only 10 users provided ratings.

- We can compute the average rating for Book-A from the 10 user ratings and then feed it back to the 90 zero ratings.

- Many Amazon customers buy books without leaving feedback, but they do see the average rating from customers who provided ratings. Thus, one can argue that they implicitly agree with the average rating posted on Amazon.
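The imputation idea can be sketched in a few lines. The function `impute_implicit`, the unrounded mean, and the sample triples below are assumptions for illustration; the notebooks may differ in details such as rounding.

```python
from collections import defaultdict

def impute_implicit(ratings):
    """Replace zero (implicit) ratings with the book's mean explicit rating.

    `ratings` is a list of (user, isbn, rating) triples where 0 means "no rating".
    Books with no explicit rating at all keep their zeros.
    """
    sums = defaultdict(float)
    counts = defaultdict(int)
    for _, isbn, rating in ratings:
        if rating > 0:
            sums[isbn] += rating
            counts[isbn] += 1
    imputed = []
    for user, isbn, rating in ratings:
        if rating == 0 and counts.get(isbn):
            rating = sums[isbn] / counts[isbn]  # assumed: plain mean, not rounded
        imputed.append((user, isbn, rating))
    return imputed

# Made-up triples: book X has two explicit ratings, book Y has none
sample = [("u1", "X", 8), ("u2", "X", 6), ("u3", "X", 0), ("u4", "Y", 0)]
print(impute_implicit(sample))
```

In the toy data, u3's zero for book X becomes 7.0, while u4's zero for book Y stays as it is because nobody rated Y.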

The following steps describe our approach to this imputation.

Step-2-CleanerData

- BRCF.2.CleanerData.ipynb is used to capture what Caraciolo's implementation used from BX-Books and BX-Book-Ratings and save it in true comma-separated files: MC.Books.csv and MC.Ratings.csv.

- We also need to remove one line from MC.Ratings.csv with an editor:

- Line "130499,,.0330486187,6", as it has more than 3 fields
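Instead of editing the file by hand, a malformed line like this could also be filtered out programmatically. The sketch below uses Python's csv module; the helper `split_valid_rows` and the two well-formed sample rows are hypothetical, and only the malformed line is taken from the step above.

```python
import csv
import io

def split_valid_rows(lines, expected_fields=3):
    """Separate rows with the expected number of fields from malformed ones."""
    valid, rejected = [], []
    for row in csv.reader(lines):
        if len(row) == expected_fields:
            valid.append(row)
        else:
            rejected.append(row)
    return valid, rejected

# Two made-up good rows around the malformed line quoted above
sample_file = io.StringIO(
    "1001,0000000001,0\n"
    "130499,,.0330486187,6\n"
    "1002,0000000002,5\n"
)
valid, rejected = split_valid_rows(sample_file)
print(len(valid), rejected)
```

Logging the rejected rows rather than silently dropping them makes it easy to confirm that only the one known bad line was removed.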

Step-3-VerifyData

- BRCF.3.VerifyData.ipynb is used to verify MC.Books.csv and MC.Ratings.csv.

- MC.Books.csv and MC.Ratings.csv produced the same results as shown in Caraciolo's article.

Step-4-ImputeImplicit

- BRCF.4.ImputeImplicit.ipynb is used to create Good.Ratings.csv, which replaces zero ratings with average ratings where available.

- We read 1,149,781 records from BX-Book-Ratings.csv.

- 433,671 have non-zero ratings; thus, no imputation is needed.

- We use average ratings for 494,024 records.

- We can only keep the zero rating for 222,085 records, as no buyer of those books provided a rating.

- We wrote 1,149,780 records to Good.Ratings.csv.

- Thus, we roughly double the ratings available for building CF.

Step-5-DataImpact

- BRCF.5.DataImpact.ipynb is used to re-examine our baseline model using about twice as many ratings from Good.Ratings.csv.

- As expected, more ratings changed the recommendations.


Appendix C - Data Structure for Collaborative Filtering

We used two types of 2D matrices, implemented as Python dictionaries, to capture the user-item-rating information.

1. prefs

To provide recommendations for a user, the 2D prefs matrix has users as rows and items as columns; i.e., a rating is stored as prefs[user][item]. We provide two prefs matrices:

- prefsLess, which is based on non-zero ratings from the original dataset.

- prefsMore, which includes prefsLess plus the additional non-zero ratings obtained by imputing average ratings.

2. critics

To provide recommendations for an item, the 2D critics matrix has items as rows and users as columns; i.e., a rating is stored as critics[item][user]. We provide two critics matrices:

- criticsLess, which is just a re-arrangement of prefsLess.

- criticsMore, which is just a re-arrangement of prefsMore.
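As a minimal sketch of these two structures, the code below builds a prefs dictionary from rating triples and re-arranges it into critics. The helper names `build_prefs` and `transform_prefs` and the sample triples are assumptions for illustration.

```python
def build_prefs(triples):
    """Build prefs[user][item] = rating from (user, item, rating) triples, skipping zeros."""
    prefs = {}
    for user, item, rating in triples:
        if rating != 0:
            prefs.setdefault(user, {})[item] = rating
    return prefs

def transform_prefs(prefs):
    """Re-arrange prefs[user][item] into critics[item][user]."""
    critics = {}
    for user, items in prefs.items():
        for item, rating in items.items():
            critics.setdefault(item, {})[user] = rating
    return critics

# Made-up triples; the zero rating is left out of prefs
triples = [("u1", "A", 9), ("u1", "B", 4), ("u2", "A", 8), ("u2", "C", 0)]
prefs = build_prefs(triples)
critics = transform_prefs(prefs)
print(prefs)
print(critics)
```

Applying transform_prefs twice returns the original prefs, which is a quick sanity check that criticsLess and criticsMore carry exactly the same ratings as prefsLess and prefsMore.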