Capstone: Mapping Brands to Transactional Data

The Why

Our capstone project aimed to help a debit-card startup, which “empowers teens to make the best financial decisions.” Through an easy-to-use app, teens can gain financial freedom, while parents can achieve peace of mind over their kids’ spending habits.

Through this project, we helped the company efficiently and accurately predict the merchant behind each teen’s transaction. The ask was to map at least 50% of the data with 90% confidence. At present, merchant data exists as long, convoluted strings composed of a mix of brand name, address, store number, and random characters. Predicting the right brand from the provided string will help the company better serve its clients: it will enable parents to restrict their teens’ purchases to selected sellers, provide more granular reporting on specific brands, and support new features for prospective clients. Additionally, automating brand prediction will save the company time and improve its data asset.

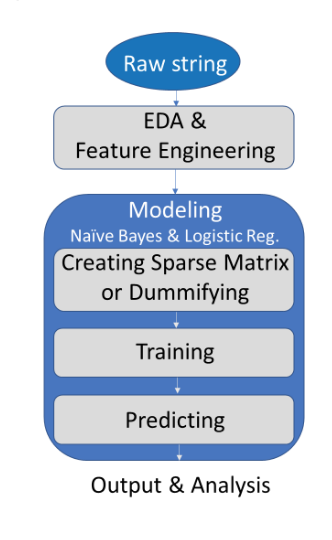

To tackle this problem, we sought the best models to handle multi-class classification, imbalanced classes, and categorical features, including text. Upon cleaning the data, engineering features, and balancing classes, we implemented Naive Bayes and Multinomial Logistic Regression models. The following sections walk through our process to optimize our predictions.

EDA & Feature Engineering

The Data

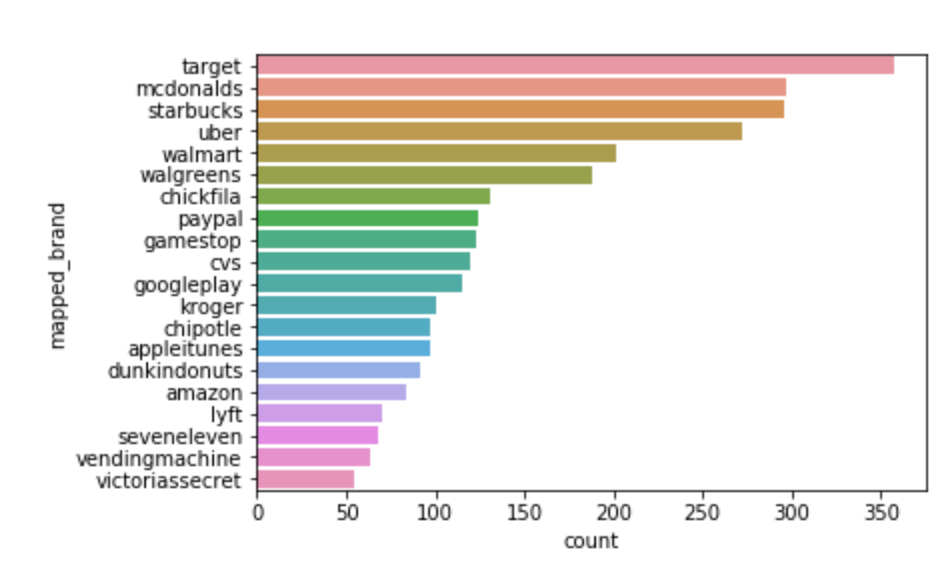

Our data contained a limited number of fields: three predictors (“merchant string,” “merchant category,” “network”) and the response variable (“mapped brand”). We had over 300,000 observations, which we needed to map into over 500 categories. Many of these categories were infrequent and could have just one entry, making imbalanced classes something to address. On the other hand, we had one very frequent class (ATM), comprising roughly 60% of the training data. After ATM, the twenty brands that appeared most often amongst labeled data included the ones that appear in the graph below:

Cleaning & Feature Engineering

To clean the merchant strings, we first applied common NLP techniques including tokenizing, converting to lowercase, removing stopwords, and applying regex to certain number combinations. For example, through this process we converted a string like “CHICK-FIL-A #02241” to “chick fil a”. The difficulty in string cleaning was removing irrelevant portions while preserving the patterns important to brand recognition. We created a cleaning function that accepted various parameters (for instance, what to split on and which words to remove) so that we could simply rerun it to optimize the cleaning for different models.
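A minimal sketch of such a parameterized cleaning function is shown here. The function name, the default stopword list, and the split pattern are all assumptions made for illustration; the original function and its settings are not reproduced in this write-up.

```python
import re

# Hypothetical stopword list for illustration only. A standard list would also
# contain "a", which has to stay out of it for brands like "chick fil a".
DEFAULT_STOPWORDS = frozenset({"the", "of", "and", "inc", "llc"})


def clean_merchant_string(raw, split_pattern=r"[^a-z0-9]+",
                          stopwords=DEFAULT_STOPWORDS, drop_numeric=True):
    """Lowercase, tokenize, and filter a raw merchant string."""
    tokens = re.split(split_pattern, raw.lower())
    kept = []
    for tok in tokens:
        if not tok or tok in stopwords:
            continue  # skip empty pieces and stopwords
        if drop_numeric and any(ch.isdigit() for ch in tok):
            continue  # skip store numbers and other ID-like tokens
        kept.append(tok)
    return " ".join(kept)


print(clean_merchant_string("CHICK-FIL-A #02241"))
```

Because the split pattern, stopwords, and numeric filter are arguments, the same function can be rerun with different settings for each model.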

One new feature type we created stemmed from concatenating cleaned merchant string and merchant category (“mcc”). This feature engineering increased our models’ score from 90% to 95%, a significant marginal improvement.
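One simple way to build such a combined feature is to append the category code to the cleaned string as an extra token, so that a bag-of-words model treats it like any other word. The sketch below assumes this token-based approach; the function name, the `mcc_` prefix, and the example code value are illustrative and not taken from the project.

```python
def combine_features(cleaned_string, mcc):
    """Append the merchant category code to the cleaned string as one token."""
    if mcc is None or str(mcc).strip() == "":
        return cleaned_string  # no category available, keep the text as is
    return f"{cleaned_string} mcc_{str(mcc).strip()}"


# Illustrative category code only.
print(combine_features("chick fil a", 5814))
```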

Modeling

Naive Bayes

Naive Bayes is a probabilistic supervised classification method that is particularly useful in handling categorical variables with many possible values. It is also able to pick up on small effects that can add together to have a meaningful impact. We used Multinomial Naive Bayes to predict brand based on word frequency.

After cleaning and feature engineering, we used the bag-of-words approach via CountVectorizer to count word frequency throughout our merchant strings. We opted to include n-grams as part of the vectorization to increase our model’s ability to recognize brands with multiple words (like “dollar general”). This process created the sparse matrix that we fed into the model as its predictors.
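The vectorize-then-classify step described above can be sketched with scikit-learn as follows. The training strings and labels are made up for illustration, and the n-gram range of (1, 2) is an assumption; the project's actual settings are not stated.

```python
from sklearn.feature_extraction.text import CountVectorizer
from sklearn.naive_bayes import MultinomialNB
from sklearn.pipeline import make_pipeline

# Toy, made-up training data standing in for cleaned merchant strings.
texts = [
    "dollar general", "dollar general store",
    "dollar tree", "dollar tree stores",
    "starbucks store", "starbucks coffee",
]
brands = [
    "Dollar General", "Dollar General",
    "Dollar Tree", "Dollar Tree",
    "Starbucks", "Starbucks",
]

# Unigrams plus bigrams, so "dollar general" becomes a feature of its own.
model = make_pipeline(
    CountVectorizer(ngram_range=(1, 2)),
    MultinomialNB(),
)
model.fit(texts, brands)

query = ["dollar general 123"]
predicted = model.predict(query)[0]
confidence = model.predict_proba(query).max()
print(predicted, round(confidence, 3))
```

The bigram feature is what separates “dollar general” from “dollar tree” here, since both share the unigram “dollar”.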

In order to train Naive Bayes on more data, we created a dictionary that linked mapped brands to common substrings within the merchant strings and used it to fill in some of the unlabeled data. Once we optimized the dictionary, we were able to label 53.5% of the data. The accuracy of these labels on our training set was 99.6%.
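A dictionary-based labeler of this kind might look like the sketch below. The dictionary entries are invented examples, and preferring the longest matching substring is an assumption made here to resolve overlapping keys; the project's actual dictionary and matching rule are not given.

```python
# Hypothetical substring-to-brand dictionary for illustration only.
BRAND_SUBSTRINGS = {
    "chick fil a": "Chick-fil-A",
    "mcdonald": "McDonald's",
    "starbucks": "Starbucks",
}


def label_with_dictionary(cleaned, mapping=BRAND_SUBSTRINGS):
    """Return the brand for the longest matching substring, or None."""
    matches = [key for key in mapping if key in cleaned]
    if not matches:
        return None
    return mapping[max(matches, key=len)]


def coverage(strings, mapping=BRAND_SUBSTRINGS):
    """Share of strings that the dictionary manages to label."""
    labeled = sum(label_with_dictionary(s, mapping) is not None for s in strings)
    return labeled / len(strings)


sample = ["starbucks store 123", "joes diner", "mcdonalds f1234", "corner market"]
print(coverage(sample))
```

Plain substring matching is deliberately simple; short keys can match inside unrelated words, which is one reason a dictionary like this needs tuning against labeled data.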

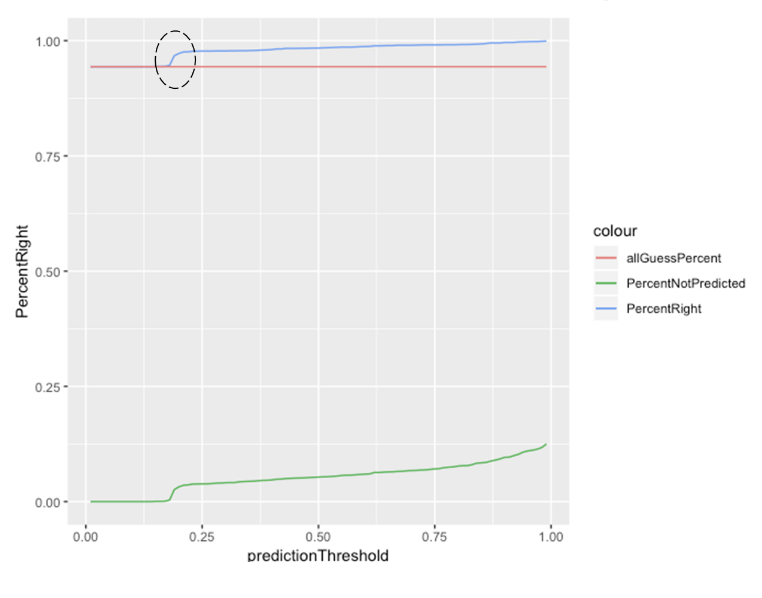

To view our performance and continue to improve, we graphed the Naive Bayes model prediction accuracy (on a holdout set of the labeled data) against the Naive Bayes confidence level. While we recognize that accuracy is not the best metric of a classification model since it gives equal weight to false positives and false negatives, we started by using this metric to improve our model and considered other metrics later in the modelling process.
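The accuracy-versus-confidence relationship can be tabulated with a few lines of Python before plotting. The sketch below is an assumption about how such a curve could be computed, using made-up confidences and outcomes; it keeps only predictions at or above each confidence threshold and reports the share kept and the accuracy within that share.

```python
def accuracy_by_confidence(confidences, correct, thresholds):
    """For each threshold, return (threshold, share kept, accuracy among kept)."""
    rows = []
    n = len(confidences)
    for t in thresholds:
        kept = [ok for conf, ok in zip(confidences, correct) if conf >= t]
        share = len(kept) / n
        acc = sum(kept) / len(kept) if kept else float("nan")
        rows.append((t, share, acc))
    return rows


# Made-up holdout results: model confidence and whether the prediction was right.
confidences = [0.99, 0.95, 0.92, 0.80, 0.60, 0.55]
correct = [True, True, True, False, True, False]

rows = accuracy_by_confidence(confidences, correct, [0.5, 0.9])
for t, share, acc in rows:
    print(f"threshold={t:.2f} kept={share:.0%} accuracy={acc:.0%}")
```

Reading the rows side by side shows the trade-off behind the original ask: a higher threshold maps less of the data but does so more accurately.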

The graph allowed us to isolate jumps where the model accuracy improved greatly as we examined groups of more confident predictions. When we saw these jumps, we isolated the batch of incorrect predictions and improved our processing accordingly (cleaning and/or modeling). In the example below, we added a feature to our data set that indicated whether the mcc was unavailable. Missing mcc values had been hurting our model's predictive power: the model chose 'ATM' (the most frequent label) over other labels when mcc was not available. Reprocessing our data reduced these misclassifications, smoothing the curve below.

Multinomial Logistic Regression

We also built a multinomial logistic regression model to predict our various labeled brands. Multinomial logistic regression is a supervised model that fits a softmax function to a multiclass categorical response. The model produces the probability that each observation belongs to a particular class, an advantage over classification techniques that return only a label.
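For readers unfamiliar with softmax, the sketch below shows how per-class scores become probabilities. It is a generic illustration of the function, not the project's code, and the input scores are arbitrary.

```python
import math


def softmax(scores):
    """Convert per-class scores into probabilities that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Arbitrary scores for three hypothetical brands.
probs = softmax([2.0, 1.0, 0.1])
print([round(p, 3) for p in probs])
```

The largest score always receives the largest probability, and that probability can serve as the confidence attached to the predicted brand.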

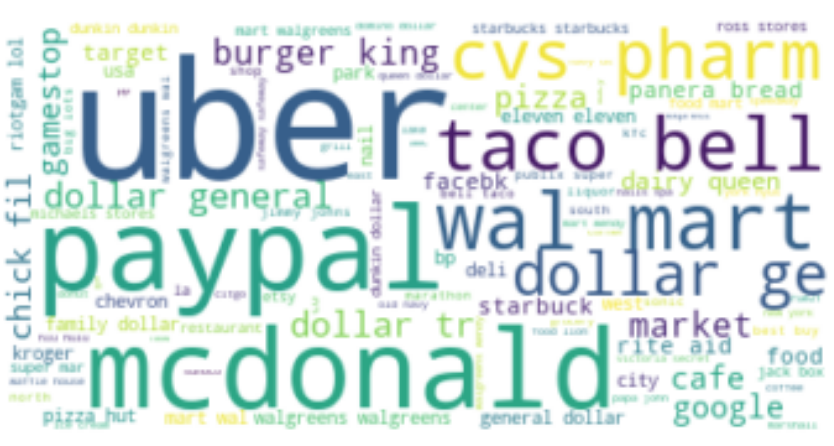

After cleaning the mapped brands, we built a function to extract the most common words. Each word then became a predictor in our model, representing whether the merchant string contained that specified word or not. These words can be visualized below, though it was an iterative process to gain the cleanest list:

Thereafter, we balanced the classes, trained our model, and predicted the brand corresponding to each observation. We then gauged how correct our predictions were on our test data, using a dictionary as a proxy.
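The word-extraction and indicator-feature steps can be sketched as follows. The function names and the toy brand list are assumptions for illustration, and class balancing and model fitting are left out.

```python
from collections import Counter


def top_words(brand_names, k):
    """Return the k most frequent words across the cleaned brand names."""
    counts = Counter(word for name in brand_names for word in name.split())
    return [word for word, _ in counts.most_common(k)]


def word_indicators(merchant_string, vocabulary):
    """Build one 0/1 predictor per vocabulary word for a merchant string."""
    tokens = set(merchant_string.split())
    return [1 if word in tokens else 0 for word in vocabulary]


# Toy cleaned brand labels, one per observation.
brands = ["dollar general", "dollar tree", "starbucks", "dollar general"]
vocab = top_words(brands, 3)
features = word_indicators("dollar general 123", vocab)
print(vocab, features)
```

Each merchant string becomes a fixed-length row of 0/1 values, which is the form a logistic regression model expects as input.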

Model Performance

The accuracy of our Naive Bayes model on the training data set was 97.9%. This decreased to 95.3% on a holdout set of labeled data. We achieved that same 95.3% accuracy level with our Multinomial Logistic model on the training data set.

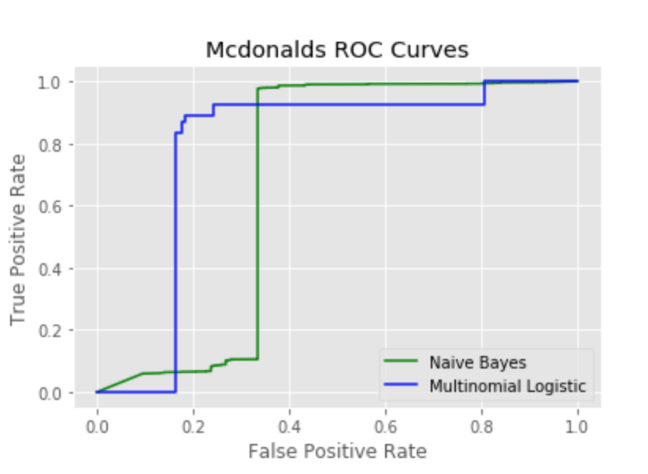

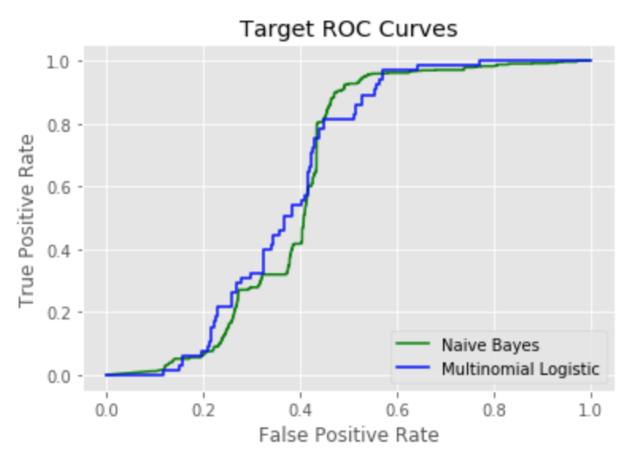

We further examined model performance by looking at ROC curves for some of the top brands and comparing the results of Naive Bayes to Multinomial Logistic. ROC curves helped us consider sensitivity and specificity, which are important metrics beyond accuracy.
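Since the problem is multi-class, a per-brand ROC curve treats one brand as the positive class and every other brand as negative. The sketch below computes the corresponding AUC directly as the chance that a positive example is scored above a negative one; the scores and labels are made up, and the function name is hypothetical.

```python
def one_vs_rest_auc(scores, labels, positive):
    """AUC for one brand: chance a positive outranks a negative (ties count half)."""
    pos = [s for s, y in zip(scores, labels) if y == positive]
    neg = [s for s, y in zip(scores, labels) if y != positive]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Made-up model scores for the "Starbucks" class on four transactions.
scores = [0.9, 0.2, 0.8, 0.1]
labels = ["Starbucks", "Starbucks", "Target", "ATM"]
print(one_vs_rest_auc(scores, labels, "Starbucks"))
```

This pairwise form is quadratic in the number of examples, so it suits small checks; a library routine is the better choice on the full data set.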

For both McDonald’s and Starbucks, the AUC of the Multinomial Logistic Regression was higher than for Naive Bayes.

Naive Bayes may have performed worse at identifying Starbucks transactions because, when the term “store” was in the merchant string, Starbucks was often incorrectly predicted. This follows from the independence assumption of Naive Bayes: because the term “store” was often coupled with “Starbucks,” the Naive Bayes model would predict Starbucks when “store” appeared. The Multinomial Logistic model, on the other hand, would consider all the most common words we fed into the model instead of giving an inordinate amount of weight to a single word to predict the mapped brand.

The Multinomial Logistic model performed better at predicting “McDonald’s” than the Naive Bayes model for a similar reason. The hanging “’s” was always coupled with “McDonald’s,” so Naive Bayes would predict McDonald’s whenever “’s” was present. The Multinomial Logistic model gave this attribute less weight.

Last, we looked at how well the models predicted Target transactions.

Both models had an AUC of 0.6. This was one of the lower AUCs among the various brands. It’s possible the model doesn’t predict Target transactions as well because Target is a more generic store with associated transactions that could easily fit other brands. Consequently, some Target transactions are incorrectly labelled as “dollar general” or “paypal”.

Output

We provided the company with a model that can take one or more observations in real time and offer a predicted brand. We reduced the prediction time to ~2 seconds by storing the fitted model as an object.

The code is packaged into an organized pipeline enabling the company not only to run the prediction quickly, but also to easily view the files supporting the Naive Bayes and Logistic Regression model fitting. The predicted output of our final product was an ensemble of both Naive Bayes and Logistic Regression.
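The write-up does not specify how the two models were combined, so the sketch below shows only one common option: averaging the class probabilities from both models and returning a brand only when the averaged confidence clears a threshold. The function name, the averaging rule, and the 0.9 default are all assumptions.

```python
def ensemble_predict(nb_probs, lr_probs, min_confidence=0.9):
    """Average two brand->probability dicts; return (brand or None, confidence)."""
    brands = set(nb_probs) | set(lr_probs)
    avg = {b: (nb_probs.get(b, 0.0) + lr_probs.get(b, 0.0)) / 2 for b in brands}
    best = max(avg, key=avg.get)
    if avg[best] < min_confidence:
        return None, avg[best]  # not confident enough to map this transaction
    return best, avg[best]


# Made-up probabilities from the two models for a single transaction.
nb = {"Starbucks": 0.96, "Target": 0.04}
lr = {"Starbucks": 0.92, "Target": 0.08}
print(ensemble_predict(nb, lr))
```

Returning `None` below the threshold leaves low-confidence transactions unmapped, which matches the goal of mapping part of the data at a high confidence level rather than all of it.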

This tool will achieve the company’s goal of quickly determining the mapped brand of a transaction to enable parental supervision when teens use their debit cards. This will both improve the customer experience and save the company a significant amount of time by reducing the need to manually look up each transaction and map it to a brand.