Fraud Detection, Hackers and Crooks for Money

Project GitHub | LinkedIn: Niki Moritz Hao-Wei Matthew Oren

The skills demonstrated here can be learned in the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

Background:

Financial fraud causes considerable losses every day. Hackers and crooks around the world constantly find new ways of defrauding people of their money. Consequently, relying exclusively on rule-based, conventionally programmed systems for detecting financial fraud would not provide the appropriate time-to-market: new rules cannot be written and deployed as fast as new fraud schemes appear. But that doesn’t mean that the crooks have won. Machine learning is well suited to just this type of problem.

The main challenge is finding a suitable model. Modeling fraud detection as a classification problem is difficult because, in real-world data, the vast majority of transactions are not fraudulent. Investment in fraud detection technology has increased over the years, so this shouldn’t be a surprise, but it leaves us with a problem of imbalanced data.

Various sampling methods can be used to handle such imbalanced data, as we’ll see below. Before we get to that, though, we’ll look into our data.

Our Data:

Our dataset contains credit card transactions made over two days in September 2013 by European cardholders. Out of 284,807 transactions, 492 were fraudulent.

For confidentiality reasons, the data was anonymized. The variables were renamed V1, V2, V3 through V28, and they are the result of a PCA transformation. Moreover, most of the data was scaled, except for the Time, Amount and Class variables, the last being our binary target variable.

The feature 'Time' contains the seconds elapsed between each transaction and the first transaction in the dataset. The feature 'Amount' is the transaction amount. The feature 'Class' is the response variable, and it takes value 1 in case of fraud and 0 otherwise. Our data is clean and has no missing values. The dataset is highly imbalanced, with the positive class (frauds) accounting for only 0.172% of all transactions.
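As a quick check of the imbalance figures quoted above, the arithmetic can be reproduced from the two counts given in the text. This is only a sketch; the variable names are chosen here for illustration.

```python
# Class counts as reported in the text above.
n_total = 284_807
n_fraud = 492
n_legit = n_total - n_fraud

# Share of the positive (fraud) class, roughly 0.17% of all transactions.
fraud_share = n_fraud / n_total
print(f"fraud share: {fraud_share:.4%}")
print(f"legit transactions per fraud: {n_legit / n_fraud:.0f}")
```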

Given the class imbalance ratio, I measure model performance using the Area Under the Precision-Recall Curve (AUPRC). Plain accuracy computed from the confusion matrix is not meaningful for unbalanced classification.
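To make the AUPRC idea concrete, here is a minimal sketch of one common way to summarize the precision-recall curve, the step-wise "average precision". The function name and the toy labels and scores are assumptions made for illustration, and the sketch assumes all scores are distinct and at least one positive is present; it is not necessarily the exact computation used in the project.

```python
def average_precision(y_true, scores):
    """Step-wise area under the precision-recall curve (average precision)."""
    # Rank transactions from most to least suspicious.
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    n_pos = sum(y_true)
    true_pos = 0
    area = 0.0
    for rank, i in enumerate(order, start=1):
        if y_true[i] == 1:
            true_pos += 1
            # Precision at the point where recall increases by 1 / n_pos.
            area += true_pos / rank
    return area / n_pos


# Toy example: two frauds, ranked 1st and 3rd by the model.
y_true = [1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.2, 0.1]
ap = average_precision(y_true, scores)
print(f"average precision: {ap:.3f}")
```

A model that ranks every fraud above every legitimate transaction would reach 1.0 on this measure, regardless of how many legitimate transactions there are.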

EDA:

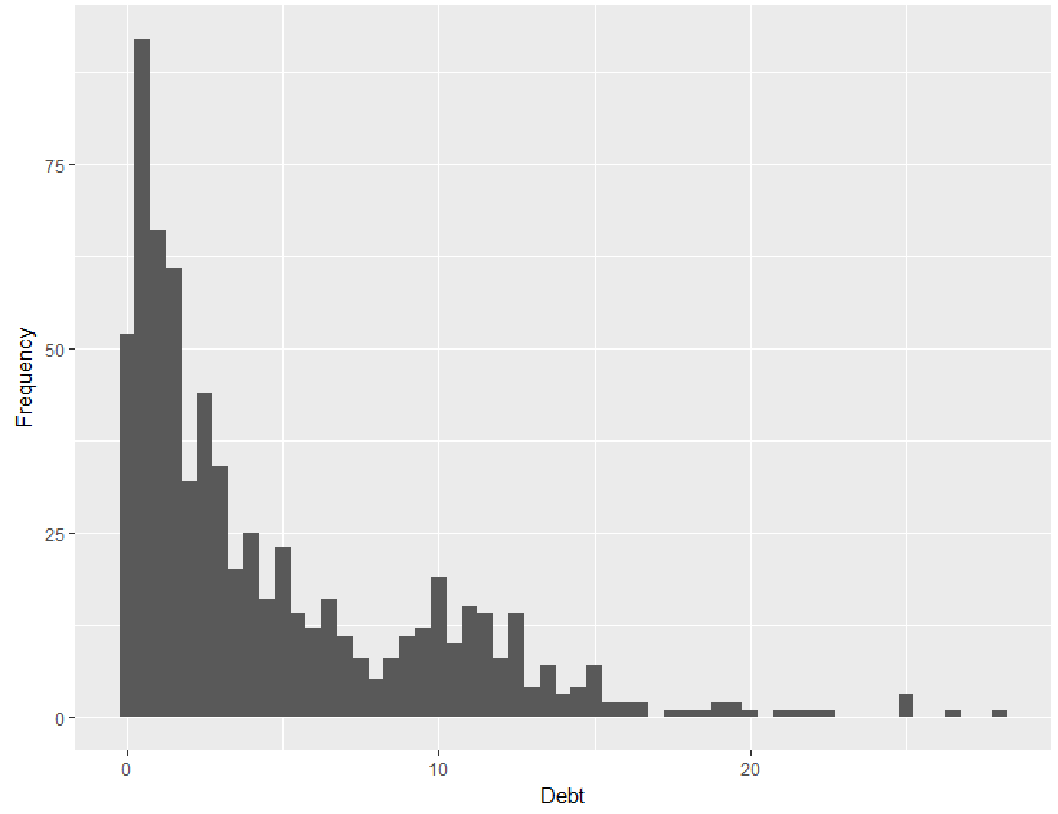

Let us start by looking at the distribution of the Class variable.

We clearly see that the data is imbalanced. It is very common to see this type of data when dealing with fraud. An imbalanced dataset is one where the number of observations belonging to one group or class is significantly higher than the number belonging to the other classes.

First, we will explore the Time and Amount features, and then the V features, which are PCA components.

Hour of the day seems to have some impact on the number of Fraud cases. As the plot below shows, there is some activity happening around 3-4am, and the highest rate of activity occurs around 6-8pm.

Counts histogram of Legit/Fraud over hour of the day.

*We can barely see the Fraud cases since there are so few of them.
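The dataset only stores seconds elapsed since the first transaction, so an hour of the day has to be derived. The sketch below shows one way to do that; it assumes that second 0 falls at midnight of the first day, which the source does not state, and the helper name and sample values are hypothetical.

```python
def hour_of_day(seconds_elapsed):
    # Assumption: second 0 corresponds to midnight of the first day.
    return int(seconds_elapsed // 3600) % 24


# Hypothetical 'Time' values spanning the two days of data.
sample_times = [0, 3_599, 3_600, 86_399, 86_400, 100_000]
hours = [hour_of_day(t) for t in sample_times]
print(hours)
```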

Below are the violin plots displaying the distribution of the transaction amount for each hour of the day. The activity seems to increase around 3-4am, as seen earlier.

Let's now see how well the PCA components complement each other by looking at their interactions with each other. Included are all the features from V1-V28.

It appears that most features show Fraud and Legit purchases overlapping each other, with V4, V11 and V17 showing slightly more separated distributions.

Let's get to model building and see how well our data can separate the classes.

PreProcessing:

Scaling and distribution:

We first scale the Time and Amount columns, so that they are on a scale comparable to the other columns. We also need to create a subsample of the dataframe with an equal number of Fraud and Non-Fraud cases, helping our algorithms better understand the patterns that determine whether a transaction is fraudulent or not.

• Scaled amount and scaled time are the columns with scaled values.

• There are 492 cases of fraud in our dataset, so we can randomly draw 492 cases of non-fraud to create our new sub-dataframe.

• We then concatenate the 492 cases of fraud and the 492 cases of non-fraud, creating a new subsample.
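The scaling step could look like the sketch below. The text does not say which scaler was used, so plain standardization (zero mean, unit variance) is an assumption here, and the amounts are made-up values. scikit-learn's StandardScaler performs the same computation on dataframe columns, and a scaler based on median and interquartile range may be preferable when amounts contain large outliers.

```python
from statistics import mean, pstdev


def standardize(values):
    """Rescale values to zero mean and unit (population) standard deviation."""
    mu = mean(values)
    sigma = pstdev(values)
    return [(v - mu) / sigma for v in values]


# Made-up transaction amounts, for illustration only.
amounts = [1.0, 2.5, 10.0, 149.62, 378.66]
scaled_amount = standardize(amounts)
print([round(v, 3) for v in scaled_amount])
```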

Modeling:

Logistic Regression on imbalanced data

I proceeded to modeling, selecting Logistic Regression as my algorithm. I trained it on the original unbalanced dataset. Below are the resulting accuracy and recall:

Accuracy: 0.999204147794436

Recall: 0.6190476190476191

Notice that the accuracy is very high, despite the presence of false positives and false negatives. However, the recall score tells a better story of the actual model performance.

Challenges of dealing with Imbalanced datasets

Machine learning algorithms are very likely to produce faulty classifiers when they are trained on imbalanced datasets. These algorithms tend to show a bias toward the majority class, treating the minority class as noise in the dataset. With many standard classifier algorithms, such as Logistic Regression, Naive Bayes and Decision Trees, the minority class is likely to be misclassified.

There is also a problem of vanity metrics in measuring the performance of algorithms on imbalanced datasets. If we have an imbalanced dataset containing 1% of a minority class and 99% of the majority class, an algorithm can predict all cases as belonging to the majority class. The accuracy score of this algorithm is 99%, which seems impressive, but is it really? The problem is that the minority class is totally ignored in this case.

The consequence of that can prove expensive in some classification problems, such as the case of a credit card fraud, which can cost individuals and businesses significant amounts of money.
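The 1% / 99% scenario described above is easy to reproduce. The sketch below builds a toy label vector with that ratio and scores a "classifier" that always predicts the majority class; the sample size of 1,000 is an arbitrary choice for illustration.

```python
# 1% minority (fraud = 1) and 99% majority (legit = 0), as in the example above.
y_true = [1] * 10 + [0] * 990
y_pred = [0] * len(y_true)  # always predict "legit"

# Accuracy looks excellent because the majority class dominates.
accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)

# Recall on the fraud class reveals that no fraud is caught at all.
true_pos = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
recall = true_pos / sum(y_true)

print(f"accuracy: {accuracy:.2f}, recall: {recall:.2f}")
```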

There are many ways of dealing with imbalanced data. We will focus on the following approaches:

1. Undersampling — RandomUnderSampler

2. Using Class weights (Logistic Regression)

3. Oversampling — SMOTE

Under-sampling: In this method, the imbalance of the dataset is reduced by focusing on the majority class. One popular type is explained below:

Random Undersampling: In this case, existing instances of the majority class are randomly eliminated. This technique is not the best because it can eliminate information or data points that could be useful for the classification algorithm.

Oversampling: This method involves reducing or eliminating the imbalance in the dataset by replicating or creating new observations of the minority class. One popular type is through SMOTE.

SMOTE Technique (Over-Sampling): SMOTE stands for Synthetic Minority Over-sampling Technique. Unlike Random UnderSampling, SMOTE creates new synthetic points of the minority class in order to balance the classes. It is another alternative for solving the "class imbalance problem."
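The core idea behind SMOTE can be sketched in a few lines: pick a minority point, pick one of its nearest minority neighbours, and create a new point somewhere on the segment between them. The function below is a simplified illustration with hypothetical names and toy data, not the imbalanced-learn implementation, which the SMOTE class in that library provides for real use.

```python
import random


def smote_sketch(minority, n_new, k=2, seed=0):
    """Create n_new synthetic points by interpolating between minority neighbours."""
    rng = random.Random(seed)
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(minority)
        # The k nearest minority neighbours of the base point (excluding itself).
        neighbours = sorted(
            (p for p in minority if p is not base),
            key=lambda p: sum((a - b) ** 2 for a, b in zip(base, p)),
        )[:k]
        other = rng.choice(neighbours)
        gap = rng.random()  # position along the segment from base to other
        synthetic.append(tuple(a + gap * (b - a) for a, b in zip(base, other)))
    return synthetic


# Toy two-feature fraud points, for illustration only.
fraud_points = [(1.0, 2.0), (1.5, 1.8), (2.0, 2.2), (1.2, 2.5)]
new_points = smote_sketch(fraud_points, n_new=6)
print(new_points)
```

Because every synthetic point lies between two existing minority points, SMOTE adds variety without simply duplicating rows, but it can also blur the class boundary when the minority points are noisy.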

Splitting the Data (Original DataFrame)

Before proceeding with the Random UnderSampling technique, we have to split the original dataframe. The reason is that, when implementing Random UnderSampling or OverSampling, we want to test our models on the original testing set, not on a testing set created by either of these techniques. The main goal is to fit the model on the dataframes that were undersampled or oversampled (so that our models can detect the patterns) and test it on the original testing set. We use the scikit-learn train_test_split() function to split the data in a 70-30 ratio.
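To illustrate what a 70-30 split on imbalanced labels involves, here is a standard-library sketch that splits each class separately so the fraud ratio is preserved in both parts. Stratifying the split is an assumption, since the text does not say whether it was done; scikit-learn's train_test_split() offers this through its stratify parameter. The helper name and toy labels are hypothetical.

```python
import random


def stratified_split(labels, test_size=0.3, seed=42):
    """Return (train_idx, test_idx), keeping class proportions in both parts."""
    rng = random.Random(seed)
    train_idx, test_idx = [], []
    for cls in sorted(set(labels)):
        idx = [i for i, y in enumerate(labels) if y == cls]
        rng.shuffle(idx)
        n_test = round(len(idx) * test_size)
        test_idx += idx[:n_test]
        train_idx += idx[n_test:]
    return train_idx, test_idx


# Toy labels: 10 frauds among 1,000 transactions.
labels = [1] * 10 + [0] * 990
train_idx, test_idx = stratified_split(labels)
print(len(train_idx), len(test_idx), sum(labels[i] for i in test_idx))
```

Any resampling is then applied to the training indices only, so the test part keeps the original class distribution.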

ReSampling - Under Sampling

Before resampling, let’s have a look at the different accuracy metrics.

Accuracy = (TP+TN)/Total

Precision = TP/(TP+FP)

Recall = TP/(TP+FN)

TP = True Positive: the number of positive cases that are predicted positive

TN = True Negative: the number of negative cases that are predicted negative

FP = False Positive: the number of negative cases that are predicted positive

FN = False Negative: the number of positive cases that are predicted negative
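The definitions above translate directly into code. The confusion-matrix counts in this sketch are invented for illustration and are not results from the project.

```python
def classification_metrics(tp, tn, fp, fn):
    """Accuracy, precision and recall from confusion-matrix counts."""
    total = tp + tn + fp + fn
    return {
        "accuracy": (tp + tn) / total,
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
    }


# Invented counts: 100 actual frauds among 10,000 transactions.
metrics = classification_metrics(tp=80, tn=9_850, fp=50, fn=20)
print(metrics)
```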

In our case recall is the better metric: the number of normal transactions is much higher than the number of fraud cases, and the costly error is a fraud case predicted as normal. Recall focuses only on the fraud cases and tells us what fraction of them we catch.

Random Under-Sampling:

In this phase of the project, we will implement "Random Under Sampling," which basically consists of removing data in order to have a more balanced dataset and avoid overfitting our models.

Steps:

1. The first thing we have to do is determine how imbalanced our classes are (use "value_counts()" on the Class column to get the count for each label).

2. Once we determine how many instances are considered fraud transactions (Fraud = "1"), we should bring the non-fraud transactions to the same amount as fraud transactions (assuming we want a 50/50 ratio); this will be equivalent to 492 cases of fraud and 492 cases of non-fraud transactions.

3. After implementing this technique, we have a subsample of our dataframe with a 50/50 ratio between our classes. The next step is to shuffle the data to see if our models can maintain a certain accuracy every time we run this script.
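The three steps above can be sketched with the standard library alone. The rows here are toy dictionaries standing in for dataframe rows, and the helper name is hypothetical; with pandas the counts would come from value_counts(), and imbalanced-learn's RandomUnderSampler packages the same idea.

```python
import random
from collections import Counter


def random_undersample(rows, label_key="Class", seed=42):
    """Keep all minority rows and an equally sized random sample of each other class."""
    rng = random.Random(seed)
    # Step 1: count the rows per class.
    counts = Counter(row[label_key] for row in rows)
    n_minority = min(counts.values())
    # Step 2: bring every class down to the minority count.
    balanced = []
    for cls in counts:
        members = [row for row in rows if row[label_key] == cls]
        balanced += rng.sample(members, n_minority)
    # Step 3: shuffle so the classes are not grouped together.
    rng.shuffle(balanced)
    return balanced


# Toy data: 20 frauds among 1,000 rows.
rows = [{"Amount": float(i), "Class": 1 if i % 50 == 0 else 0} for i in range(1_000)]
subsample = random_undersample(rows)
class_counts = Counter(row["Class"] for row in subsample)
print(class_counts)
```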

The main issue with "Random Under-Sampling" is the risk that our classification models will not perform as accurately as we would like, since there is a great deal of information loss (keeping only 492 non-fraud transactions out of 284,315).

Now that we have our dataframe correctly balanced, we can go further with our analysis and data preprocessing.

Logistic Regression:

We performed modeling on the undersampled data using Logistic Regression as the classifier. Below are the Recall and Accuracy results on the training and testing data.

Training:

Recall: 0.9115646258503401, Accuracy: 0.9155405405405406

Test data:

Recall: 0.9251700680272109, Accuracy: 0.9612607235232845

AUROC : 0.943

We can see that the PR Curve does not fit well on the data.

Logistic Regression Class Weights:

The scikit-learn logistic regression has an option named class_weight which, when specified, handles class imbalance implicitly. Using this, we got the results below:

Recall: 0.9183673469387755

Accuracy: 0.976896878620835

AUROC : 0.948

Looking at the normalized confusion matrix, it seems like our classifier is doing very well! We have a 98% True Negative rate and a 92% True Positive rate! However, if we look at the PR curve, this classifier is more or less the same as the undersampled one.
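The text does not say which value of class_weight was used. Assuming the "balanced" setting, scikit-learn documents the weight of each class as n_samples / (n_classes * class_count). The sketch below applies that formula to the class counts of this dataset; the helper name is hypothetical.

```python
def balanced_class_weights(class_counts):
    """Weights following the documented 'balanced' heuristic of scikit-learn."""
    n_samples = sum(class_counts.values())
    n_classes = len(class_counts)
    return {cls: n_samples / (n_classes * n) for cls, n in class_counts.items()}


# Class counts from the dataset description: 284,315 legit, 492 fraud.
weights = balanced_class_weights({0: 284_315, 1: 492})
print(weights)
```

With these weights each class contributes the same total weight to the loss, so a misclassified fraud costs far more than a misclassified legitimate transaction.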

SMOTE - OverSampling:

In this section we will train two types of classifiers and decide which one is more effective at detecting fraud transactions. Before that, we have to split our data into training and testing sets and separate the features from the labels.

We start with Logistic Regression and use GridSearchCV to get the best parameters.

Recall metric in the train dataset: 92.17391304347827%

Recall metric in the testing dataset: 91.83673469387755%

The PR Curve thus obtained using SMOTE is by far the best.

We then use the Random Forest Classifier to see if it outperforms the results obtained from Logistic Regression.

Below are the results from Random Forest Classifier:

SMOTE + RandomForest classification:

accuracy: 0.9995786664794073

precision: 0.8947368421052632

recall: 0.8095238095238095

f2: 0.8252427184466019
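The f2 value is the F-beta score with beta = 2, which weights recall more heavily than precision. As a consistency check, it can be recomputed from the precision and recall reported above; the function name is chosen here for illustration.

```python
def f_beta(precision, recall, beta=2.0):
    """F-beta score: weighted harmonic mean of precision and recall."""
    b2 = beta ** 2
    return (1 + b2) * precision * recall / (b2 * precision + recall)


# Precision and recall as reported for SMOTE + RandomForest.
precision = 0.8947368421052632
recall = 0.8095238095238095
f2 = f_beta(precision, recall)
print(f"f2: {f2:.4f}")
```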

From the PR curve and the results thus obtained, we can see that the Random Forest model is overfitting. We can thus conclude that SMOTE oversampling outperformed the other sampling techniques.

Conclusion

Imbalanced data can be a serious problem for building predictive models as it can affect our prediction capabilities and mask the fact that our model is not doing so well. Implementing SMOTE on our imbalanced dataset helped us with the imbalance of our labels.

With the undersampled data, our model fails to classify a large number of non-fraud transactions correctly and instead flags them as fraud. Imagine that people making regular purchases had their cards blocked because our model classified their transactions as fraudulent: this would be a huge disadvantage for the financial institution. The number of customer complaints and the level of customer dissatisfaction would increase.

The next step of this analysis will be to remove outliers from our oversampled dataset and see if our accuracy on the test set improves. We can also use other classification methods and anomaly detection methods to investigate further. To go deeper, neural networks can also be leveraged for fraud detection.

The imbalanced-learn library (imblearn) provides some great functionality for dealing with imbalanced data. It is important to consider the trade-off between precision and recall and decide which one to prioritize in light of possible business outcomes.