Data Analysis on Credit Card Approval

The skills the author demonstrated here can be learned through the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

Preface:

The decision to approve a credit card or loan depends largely on the personal and financial background of the applicant. Factors such as age, gender, income, employment status, credit history, and other attributes all carry weight in the approval decision. Credit analysis involves estimating the probability that a third party will pay back the loan to the bank on time and predicting the likelihood of default. The analysis focuses on recognizing, assessing, and reducing the financial or other risks that could lead to a loss in the transaction.

There are two basic risks: one is the business loss that results from not approving a good candidate, and the other is the financial loss that results from approving a candidate who is a bad risk. Managing credit risk efficiently is very important, because poor credit decisions have adverse effects on credit management. A careful evaluation is therefore essential before any credit is granted.

Objective:

The algorithms used to decide the outcome of a credit application vary from one provider to another and across sectors and geographies. However, the attributes that feed those algorithms are highly similar. In this project, I used the Credit Approval dataset from the machine learning repository of the University of California, Irvine (UCI) (http://archive.ics.uci.edu/ml/datasets/credit+approval).

The main objective of the Credit Card Approval Shiny App is to show the impact of fields such as Gender, Age, Income, and Number of Years Employed on the approval of a credit card. The app has some static graphs (including histograms, scatter plots, and box plots) and some interactive plots that let the user select the fields of interest.

The primary objective of this analysis is to apply data mining techniques to a credit approval dataset. With them, lending risks can be identified, data-based conclusions about the probability of repayment can be derived, and recommendations can be put forward.

Look into the Data:

The Credit Approval dataset consists of 690 rows, representing 690 individuals applying for a credit card, and 16 variables in total. The first 15 variables represent various attributes of the individual, such as gender, age, marital status, and years employed. The 16th variable is the one of interest: credit approved (or just approved). It contains the outcome of the application, either positive (represented by “+”), meaning approved, or negative (represented by “-”), meaning rejected. This is a multivariate dataset, with continuous, nominal, and categorical data along with missing values.

Below is the structure of the dataset:

str(Credit_Approval)

Classes ‘tbl_df’, ‘tbl’ and 'data.frame': 690 obs. of 16 variables:

Male : chr "b" "a" "a" "b" ...

Age : chr "30.83" "58.67" "24.50" "27.83" ...

Debt : num 0 4.46 0.5 1.54 5.62 ...

Married : chr "u" "u" "u" "u" ...

BankCustomer : chr "g" "g" "g" "g" ...

EducationLevel: chr "w" "q" "q" "w" ...

Ethnicity : chr "v" "h" "h" "v" ...

YearsEmployed : num 1.25 3.04 1.5 3.75 1.71 ...

PriorDefault : chr "t" "t" "t" "t" ...

Employed : chr "t" "t" "f" "t" ...

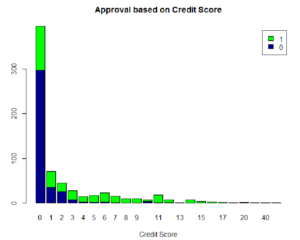

CreditScore : num 1 6 0 5 0 0 0 0 0 0 ...

DriversLicense: chr "f" "f" "f" "t" ...

Citizen : chr "g" "g" "g" "g" ...

ZipCode : chr "00202" "00043" "00280" "00100" ...

Income : num 0 560 824 3 0 ...

Approved : chr "+" "+" "+" "+" ...

And some summary statistics for all these fields:

Below is a quick overview of the missing values in the dataset:
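One way to produce such an overview in base R is sketched here. It is an illustration under stated assumptions, not the code behind the app: the first four rows are rebuilt from the `str()` output above, the fifth row is made up to show the “?” placeholder, and passing `na.strings = "?"` to `read.csv` is a choice made for this sketch (it also makes Age load as numeric rather than character).

```r
# Illustrative rows in the raw comma-separated layout. The first four are
# rebuilt from the str() output above; the last one is a made-up row with "?".
raw_text <- 'b,30.83,0,u,g,w,v,1.25,t,t,1,f,g,00202,0,+
a,58.67,4.46,u,g,q,h,3.04,t,t,6,f,g,00043,560,+
a,24.50,0.5,u,g,q,h,1.5,t,f,0,f,g,00280,824,+
b,27.83,1.54,u,g,w,v,3.75,t,t,5,t,g,00100,3,+
?,?,2.5,u,g,?,v,0.5,f,f,0,f,g,?,0,-'

# Field names as they appear in the structure shown earlier.
field_names <- c("Male", "Age", "Debt", "Married", "BankCustomer",
                 "EducationLevel", "Ethnicity", "YearsEmployed",
                 "PriorDefault", "Employed", "CreditScore",
                 "DriversLicense", "Citizen", "ZipCode", "Income", "Approved")

# Read the text, treating "?" as NA and keeping ZipCode as text so that
# leading zeros are preserved.
credit <- read.csv(text = raw_text, header = FALSE, col.names = field_names,
                   na.strings = "?", colClasses = c(ZipCode = "character"),
                   stringsAsFactors = FALSE)

# Number of missing values per field, showing only fields that have any.
missing_per_field <- colSums(is.na(credit))
print(missing_per_field[missing_per_field > 0])

# Share of missing cells in the whole table.
missing_share <- mean(is.na(credit))
print(missing_share)
```

On the full dataset, the same two calls (`colSums(is.na(...))` and `mean(is.na(...))`) give the per-field counts and the overall share of missing cells.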

Data Preprocessing:

Preprocessing of the data includes data cleaning, data integration, data transformation, data reduction, and missing value imputation, among other tasks. Below are some of the transformations that were applied to the Credit Approval dataset before any EDA techniques.

- The Credit Approval dataset contains categorical values that are transformed to binary values or factors of 1s and 0s. For example, the Approved field, with values + and -, is changed to 1 and 0 respectively, 1 meaning the card is approved. Similarly, for Gender, ‘a’ is changed to 1, representing male, and ‘b’ is changed to 0. Prior Default and Employed both have the categorical values ‘t’ and ‘f’, which are transformed to 1 and 0. A binary value of 1 represents true/yes/pass, and 0 represents false/no/fail.

- Missing data: Missing values make up 5% of the entire dataset and are represented by “?”. All missing values were first converted to NA and then imputed (see below for more details).

- Variable Names: In the source data, all attribute names and values were changed to meaningless symbols to protect the confidentiality of the data, so the fields were initially named A1-A16. With the help of some available documentation, they were renamed appropriately.

- Data Types: Converted the ‘t’ and ‘f’ into factors.

- ZipCode: The values for this field were mostly zeros or invalid. This field will not be considered for any analysis.

- Number of Records: The dataset has only 690 observations, which limits the conclusions we can draw.
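The recoding steps listed above can be sketched in base R as follows. This is a minimal illustration, not the author's actual code: the small `raw` data frame is made up for the example, `recode_binary` is a hypothetical helper, and only the field names and the value mappings come from the text.

```r
# Hypothetical sample in the raw format: "?" marks a missing value.
raw <- data.frame(
  Male         = c("b", "a", "?", "b"),
  PriorDefault = c("t", "t", "f", "f"),
  Employed     = c("t", "f", "f", "t"),
  Approved     = c("+", "+", "-", "-"),
  stringsAsFactors = FALSE
)

# Step 1: turn the "?" placeholder into NA so R treats it as missing.
raw[raw == "?"] <- NA

# Step 2: recode two-level character fields to 1/0.
# `one` is the level that maps to 1; every other level maps to 0.
# NA stays NA because comparing NA with a string yields NA.
recode_binary <- function(x, one) as.integer(x == one)

clean <- data.frame(
  Male         = recode_binary(raw$Male, "a"),         # 'a' -> 1 (male), 'b' -> 0
  PriorDefault = recode_binary(raw$PriorDefault, "t"), # 't' -> 1, 'f' -> 0
  Employed     = recode_binary(raw$Employed, "t"),     # 't' -> 1, 'f' -> 0
  Approved     = recode_binary(raw$Approved, "+")      # '+' -> 1, '-' -> 0
)

print(clean)
```

Keeping the recoding in one small helper makes the mapping for each field explicit at the call site, which helps when a field such as Gender has a coding that is easy to get backwards.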

Missing data Treatment:

Missing values exist in the attributes Age, Gender, Marital Status, Bank Customer, Education Level, Ethnicity, and Zip Code; we replaced them with NAs. Of these, Age is a continuous variable. There are different methods to handle missing values, ranging from deleting the observations, deleting the attribute if it is of no importance, or zeroing the values out, to plugging in the mean, median, or mode of the observed values.

Here we imputed missing values in numerical fields with the median. For the remaining attributes, which are categorical, the missing values were imputed with the most frequent class, determined from the frequency count of the observations.
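The imputation rule described above (median for numerical fields, most frequent class for categorical ones) might look like this in base R. The `applicants` data frame and the `most_frequent` helper are hypothetical and exist only for this sketch.

```r
# Hypothetical sample with missing values already converted to NA.
applicants <- data.frame(
  Age     = c(30.83, NA, 24.50, 27.83, 58.67),
  Married = c("u", "u", NA, "y", "u"),
  stringsAsFactors = FALSE
)

# Most frequent non-missing value of a vector.
# table() drops NA by default; ties resolve to the first level in table order.
most_frequent <- function(x) names(which.max(table(x)))

# Numerical field: replace NA with the median of the observed values.
applicants$Age[is.na(applicants$Age)] <- median(applicants$Age, na.rm = TRUE)

# Categorical field: replace NA with the most frequent class.
applicants$Married[is.na(applicants$Married)] <- most_frequent(applicants$Married)

print(applicants)
```

The median is a common choice over the mean for skewed fields because a few very large values do not pull it upward.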

Exploratory Data Analysis:

To start with, the distribution of 5 continuous variables Age, Debt, Credit Score, Income and Years employed was observed to get a sense of the nature of the dataset.

These initial plots showed that all variables have right-skewed distributions, meaning the data are not symmetric about the mean. To reduce the skew, log transformations were applied and the variables were plotted again.
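A small sketch of such a transformation is shown here. The text does not say which variant of the log was used, so `log1p` (that is, log(1 + x)) is an assumption made for this example; it is a common choice when a field contains zeros, as Income and Debt do in the structure shown earlier. The `income` vector is illustrative.

```r
# Illustrative right-skewed values, including zeros and one large outlier.
income <- c(0, 560, 824, 3, 0, 100000)

# A plain log() would turn the zeros into -Inf.
# log1p(x) computes log(1 + x), so zeros map to 0 instead.
log_income <- log1p(income)

# In a right-skewed sample the mean sits well above the median;
# after the transformation the two are much closer.
print(c(mean = mean(income), median = median(income)))
print(c(mean = mean(log_income), median = median(log_income)))

# hist(income) and hist(log_income) would draw the two distributions.
```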

Below are the plots of the discrete variables that appear to influence whether a credit application is approved.

As expected, Prior Default and employment status appear to have the most significant effect on approval. Persons with a prior default are rejected more than 90% of the time, and those who are not employed are rejected 70% of the time.
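Rejection rates like these can be read off a cross-tabulation converted to row proportions. The sketch below uses a made-up sample (`sample_df`), so its numbers are not those of the real dataset; only the field names and the 1/0 coding follow the text.

```r
# Hypothetical recoded sample: 1 = employed/approved, 0 = not employed/rejected.
sample_df <- data.frame(
  Employed = c(1, 1, 1, 1, 0, 0, 0, 0),
  Approved = c(1, 1, 1, 0, 0, 0, 0, 1)
)

# Cross-tabulate the two fields.
counts <- table(Employed = sample_df$Employed, Approved = sample_df$Approved)

# margin = 1 divides each row by its total, so every row shows the
# rejected/approved split within one employment category.
rates <- prop.table(counts, margin = 1)
print(rates)

# Rejection rate among applicants who are not employed.
print(rates["0", "0"])
```

The same two calls work for any of the discrete fields, for example Education Level, by swapping the first argument of `table()`.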

Education

Let’s see if the education level has any effect:

From the graph, we see that people with education level ‘x’ have an 85% chance of approval, compared to those with level ‘ff’, who are rejected 85% of the time.

Among the continuous variables, Income and Credit Score also seem to have a significant effect on the outcome of the credit application.

As we see, a high credit score resulted in approval 90% of the time, and applicants with higher income have a higher than average approval rate.

Finally, I did a pairwise comparison of all the fields using scatter plots, shown below:

These plots do seem to have a scaling problem. One reason for this could be the presence of outliers. The range of the values is high, causing the regression line to adjust for these outliers. For now, we will not be working on handling these. But from the plot, we can see that Years Employed has the highest linear correlation with the Approved field.
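The linear correlation mentioned above can also be checked numerically. The following is a sketch on a made-up sample (`sample_df`), assuming Approved has already been coded as 1/0; the Spearman line is an addition of this example, included because rank correlation is less sensitive to the outliers noted above.

```r
# Hypothetical numeric sample; Approved is coded 1 = approved, 0 = rejected.
sample_df <- data.frame(
  YearsEmployed = c(1.25, 3.04, 1.50, 3.75, 0.00, 0.25),
  Income        = c(0, 560, 824, 3, 0, 0),
  Approved      = c(1, 1, 1, 1, 0, 0)
)

# Pearson correlation of every field with Approved,
# dropping the trivial correlation of Approved with itself.
cors <- cor(sample_df)[, "Approved"]
cors <- cors[names(cors) != "Approved"]
print(sort(cors, decreasing = TRUE))

# Spearman's rank correlation works on ranks, so a single extreme
# value has less influence on the result.
rank_cor <- cor(sample_df$Income, sample_df$Approved, method = "spearman")
print(rank_cor)

# pairs(sample_df) would draw the matrix of pairwise scatter plots.
```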

Conclusion / Future Scope:

From this initial analysis, we are able to conclude that the most significant factors in determining the outcome of a credit application are Employment, Income, Credit Score and Prior Default.

Based on these insights, we can work on building predictive models. They can be used by analysts in the financial sector and incorporated into an automated credit approval process. These results can also serve as a source of information for consumers.

Modern credit analyses employ many additional variables, such as the criminal records of applicants, their health information, and the net balance between monthly income and expenses. A dataset with these variables could be acquired. It is also possible to add complementary variables to the dataset. This would make the credit simulations more similar to what banks do before a credit is approved.

The Shiny application is available at this link:

https://kiranmayinimmala.shinyapps.io/creditapp_shiny/

The code is available on GitHub at the location below:

https://github.com/KiranmayiR/Credit_Shiny