Data Study on a Successful Kickstarter Campaign

The skills demonstrated here can be learned by taking the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

What characteristics maximize the probability of a successful Kickstarter Campaign?

I. ABSTRACT

Kickstarter is one of the most popular crowdfunding platforms on the internet, with an accumulated US$3.9 billion in pledges. The aim of this project is to scrape data from Kickstarter and identify the key characteristics of a successful project.

II. INTRODUCTION

Investors on the Kickstarter platform, in contrast to traditional sources of funding (angel investors, small business loans, or one's own assets/cash), back a project because they genuinely believe in it. I am not talking about confidence in a project based on a scalable revenue model or on its presentation as a high-growth investment. Instead, investors are attracted by 'rewards' set up by the project creator, which guarantee a project-related gift whose value corresponds to the size of the pledge. Essentially, Kickstarter matches creators with investors who share a genuine passion for and interest in the project.

The steps to launch a Kickstarter project are simple:

- create a project

- set the minimum funding goal

- set reward levels, and

- choose a deadline.

Aspects of these steps are explored further in V. DATA ANALYSIS to show how a campaign's probability of success can be optimized. This matters because projects that fail to secure 100% funding see their backers' pledges returned.

III. DATA WEB-SCRAPING PROCESS

The first step in writing the scraping script was to determine how to iterate through every individual Kickstarter project page to extract 20+ variables. To do so, I created three main loops and one subsidiary loop:

- Loop#1 went through each category and subcategory, which provided the front page for each subcategory. I found that Kickstarter only lets users page through a subcategory up to page 200.

- Loop#2 appended a page number from 1 to x (with x supplied by the user) to each URL provided by Loop#1, then extracted all project-specific URLs on each page; a subcategory page lists at most 12 projects.

- Loop#3 went through each individual project page and extracted the variables needed for analysis: $ pledged, creation date, end date, creator, location, category, etc.

- Loop#4, the subsidiary loop, was much smaller and extracted information from the FAQ section of each project URL in Loop#3 to complement the bulk of the variables pulled in Loop#3.
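
Loops #1 and #2 boil down to generating one URL per subcategory listing page. The sketch below shows that step as a pure function; it is an illustration under stated assumptions, not the original spider. The `page` query parameter, the `build_page_urls` helper and the example.com URLs are hypothetical placeholders, and the 200-page cap is the limit observed above.

```python
from urllib.parse import urlencode

# Observed limit: Kickstarter stops serving a subcategory's listing after page 200.
MAX_PAGES = 200


def build_page_urls(subcategory_urls, x):
    """Loop#2 sketch: attach page numbers 1..x to every subcategory URL from Loop#1.

    The 'page' query parameter is a hypothetical placeholder, not a documented
    Kickstarter API.
    """
    last_page = min(x, MAX_PAGES)  # never request pages past the site's cap
    urls = []
    for base in subcategory_urls:
        sep = "&" if "?" in base else "?"  # keep any existing query string intact
        for page in range(1, last_page + 1):
            urls.append(f"{base}{sep}{urlencode({'page': page})}")
    return urls


# Hypothetical subcategory front pages, as Loop#1 might collect them.
subcategories = [
    "https://www.example.com/discover/dance",
    "https://www.example.com/discover/theater",
]
page_urls = build_page_urls(subcategories, x=3)
print(len(page_urls))  # 6
print(page_urls[0])    # https://www.example.com/discover/dance?page=1
```

Since each listing page holds at most 12 projects, `len(page_urls) * 12` is an upper bound on how many project URLs Loop#2 can hand to Loop#3.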

When inspecting Kickstarter's HTML elements and testing my XPaths in Scrapy Shell, it became evident that the website relies almost entirely on JavaScript. Unfortunately, Scrapy on its own does not execute JavaScript, so JS-rendered elements never appear in the pages it downloads, which meant I could only collect about 15% of the requested data. After some research, I integrated ScrapySplash (the scrapy-splash plugin, which drives Splash, a lightweight headless browser). Splash renders the JavaScript pages so that Scrapy can read their elements.
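
A minimal sketch of the Scrapy `settings.py` entries that enable scrapy-splash, following the plugin's README. It assumes a Splash instance is running locally on its default port 8050 (for example the `scrapinghub/splash` Docker image); the original project's settings are not shown, so treat these values as an assumption.

```python
# settings.py (sketch): route Scrapy requests through a Splash rendering service.
# Assumes Splash is running locally on its default port.
SPLASH_URL = "http://localhost:8050"

DOWNLOADER_MIDDLEWARES = {
    "scrapy_splash.SplashCookiesMiddleware": 723,
    "scrapy_splash.SplashMiddleware": 725,
    # Moved from its default priority, as the scrapy-splash README recommends.
    "scrapy.downloadermiddlewares.httpcompression.HttpCompressionMiddleware": 810,
}

SPIDER_MIDDLEWARES = {
    "scrapy_splash.SplashDeduplicateArgsMiddleware": 100,
}

# Make duplicate filtering aware of Splash arguments, not just the URL.
DUPEFILTER_CLASS = "scrapy_splash.SplashAwareDupeFilter"
```

With these settings in place, a spider yields `scrapy_splash.SplashRequest(url, self.parse, args={"wait": 2})` instead of `scrapy.Request(url, self.parse)`. The 2-second `wait` is an arbitrary choice that gives the page's JavaScript time to render before the XPaths run.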

Another issue was getting IP-banned by Kickstarter. I ended up increasing my download delay from 1 second to 3 seconds and running my script on another machine.
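
The throttling fix translates to a few Scrapy settings. Only `DOWNLOAD_DELAY = 3` comes from the text above; the other three lines are assumptions showing related built-in Scrapy settings that also reduce the request rate.

```python
# settings.py (sketch): slow the crawl down to avoid another IP ban.
DOWNLOAD_DELAY = 3  # seconds between requests to the same site (raised from 1)

# Assumptions below: related built-in Scrapy settings, not taken from the original project.
RANDOMIZE_DOWNLOAD_DELAY = True  # wait a random 0.5x to 1.5x DOWNLOAD_DELAY (Scrapy's default)
CONCURRENT_REQUESTS_PER_DOMAIN = 1  # one in-flight request to the site at a time
AUTOTHROTTLE_ENABLED = True  # back off further when the server responds slowly
```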

IV. DATA CLEANING

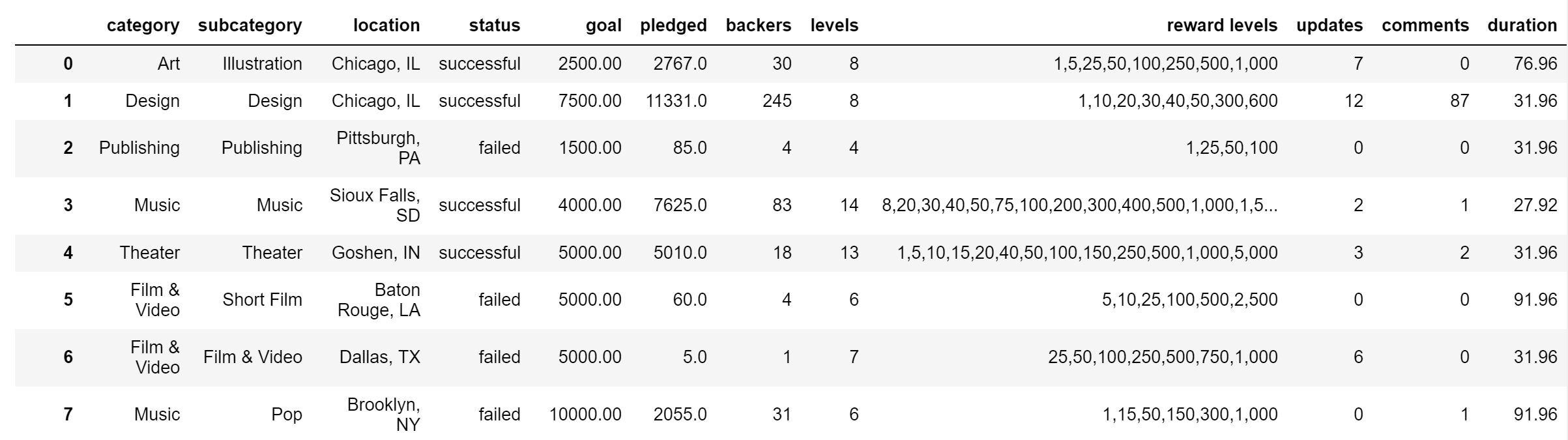

Having extracted Kickstarter's project data, I had to make several modifications in Python to clean up the data for analysis. The five main changes were:

- convert location string into separate 'city' and 'state' strings

- convert the string values for number of updates, reward levels, created projects and dates into integers

- create a '%funded' variable ($s pledged / $s goal × 100) as the success metric for my project

- create a project 'duration' variable from the project creation and end dates

- eliminate rows with NA or null values
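
The five cleaning steps can be sketched in plain Python on a list of scraped dicts. The field names (`location`, `pledged`, `goal`, ...), the 'City, ST' location format, the ISO `YYYY-MM-DD` dates and the example rows are assumptions for illustration; `%funded` is computed as pledged / goal × 100.

```python
from datetime import date


def split_location(location):
    """Step 1: 'Austin, TX' -> ('Austin', 'TX'); assumes 'City, ST' strings."""
    city, _, state = location.partition(",")
    return city.strip(), state.strip()


def to_int(text):
    """Step 2: keep only the digits, e.g. '$1,500' -> 1500 (assumes whole numbers)."""
    digits = "".join(ch for ch in text if ch.isdigit())
    return int(digits) if digits else None


def pct_funded(pledged, goal):
    """Step 3: %funded = pledged / goal * 100; 100 or more means the goal was met."""
    return 100 * pledged / goal


def duration_days(created, ended):
    """Step 4: days between the creation date and the end date (ISO strings)."""
    return (date.fromisoformat(ended) - date.fromisoformat(created)).days


def clean(raw_rows):
    """Apply the steps to scraped dicts; the field names are hypothetical."""
    cleaned = []
    for row in raw_rows:
        if any(value in (None, "", "NA") for value in row.values()):
            continue  # Step 5: drop rows with NA or null values
        counts = {k: to_int(row[k]) for k in ("pledged", "goal", "updates", "rewards")}
        if None in counts.values() or counts["goal"] == 0:
            continue  # a count with no digits is treated as missing as well
        city, state = split_location(row["location"])
        cleaned.append({
            "city": city,
            "state": state,
            "updates": counts["updates"],
            "reward_levels": counts["rewards"],
            "pct_funded": pct_funded(counts["pledged"], counts["goal"]),
            "duration": duration_days(row["created"], row["ended"]),
        })
    return cleaned


# Made-up rows: the second one has an NA value and is dropped.
rows = [
    {"location": "Austin, TX", "pledged": "$1,500", "goal": "$1,000",
     "updates": "12", "rewards": "5", "created": "2017-03-01", "ended": "2017-03-31"},
    {"location": "Denver, CO", "pledged": "NA", "goal": "$2,000",
     "updates": "3", "rewards": "4", "created": "2017-04-01", "ended": "2017-04-15"},
]
print(clean(rows))  # one row left: Austin, TX, 150.0 %funded, 30-day duration
```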

V. DATA ANALYSIS

I first took a look at the distribution of success rates (%funded), which revealed severe outliers.

To remedy this, I applied a basic IQR filter based on Q1 and Q3, then tweaked the IQR bounds so that they encompassed the relevant funding percentages. The results are shown below:
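
One way to express the IQR trimming is Tukey's fences, Q1 - k·IQR and Q3 + k·IQR, where k controls how wide the kept range is. The exact bounds used in this study are not given, so the default k = 1.5 below is an assumption, and the values are made up; changing k is one way to "tweak" the range.

```python
from statistics import quantiles


def iqr_filter(values, k=1.5):
    """Keep values within Tukey's fences [Q1 - k*IQR, Q3 + k*IQR].

    A larger k keeps more of the tail; k=1.5 is the textbook default, not
    necessarily the value used in this study.
    """
    q1, _, q3 = quantiles(values, n=4)  # quartiles (Python's default 'exclusive' method)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if low <= v <= high]


# Made-up %funded values with one extreme outlier.
funded = [10, 20, 30, 40, 50, 60, 70, 80, 90, 10000]
print(iqr_filter(funded))  # [10, 20, 30, 40, 50, 60, 70, 80, 90]
```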

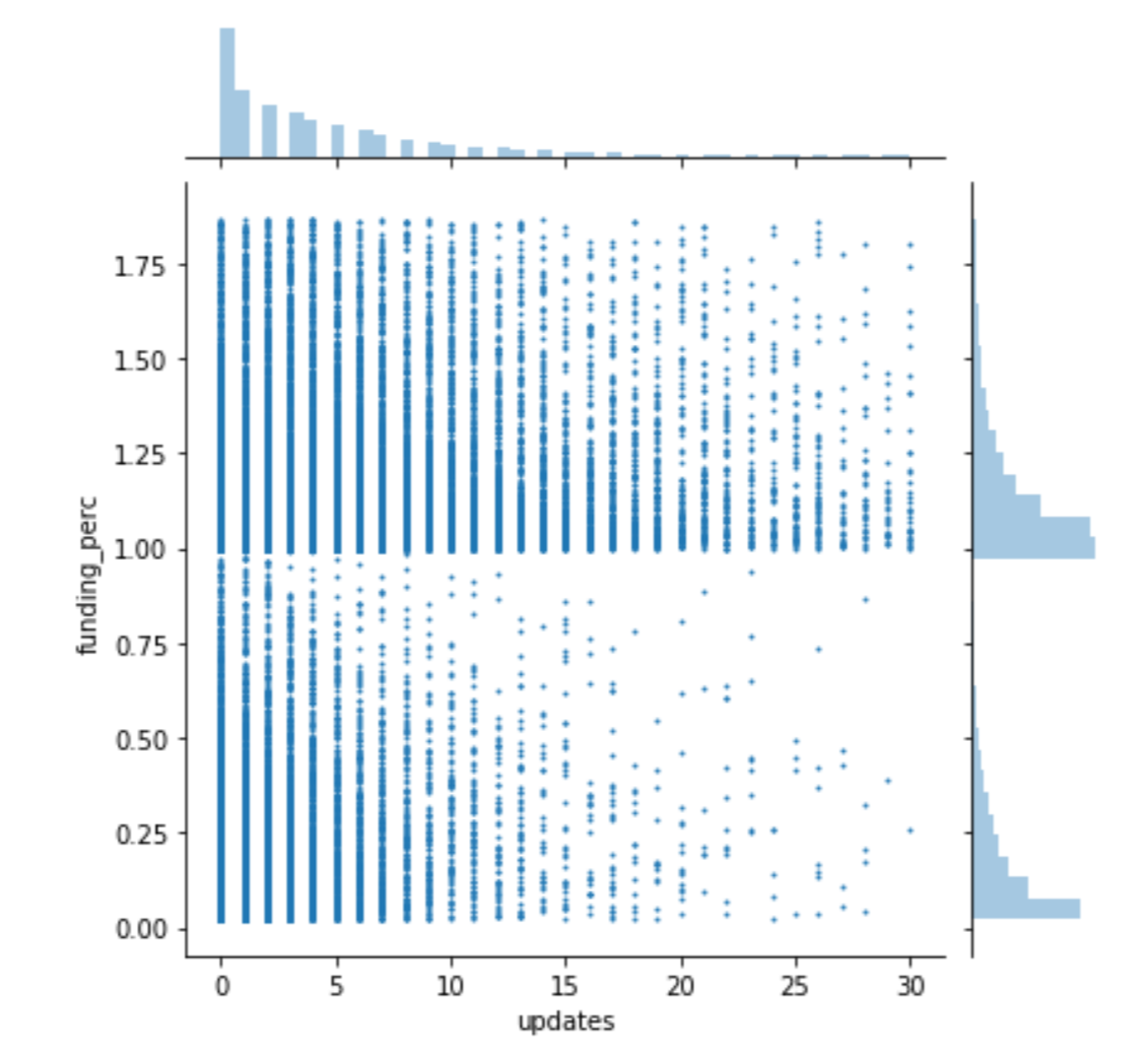

The next step was to determine the main characteristics of a successful project:

1. Type of project to launch, based on the quartile distribution of %funded per category: Dance, Theater or Music.

At the subcategory level, Dance and Theater both showed similar distributions across their subcategories. For Music, however, it would be best to stay away from Hip-Hop and Electronic Dance Music, as both have a mean below 40% funded.

2. Ideal funding goal for the project: the ideal range is $300 to $1,700, with goals of $300 and $400 performing best.

3. Duration of campaign: the ideal duration range is 1 to 4 weeks (1-day campaigns are an exception that also does well), with a much higher probability of success for 1-, 9- and 15-day campaigns.

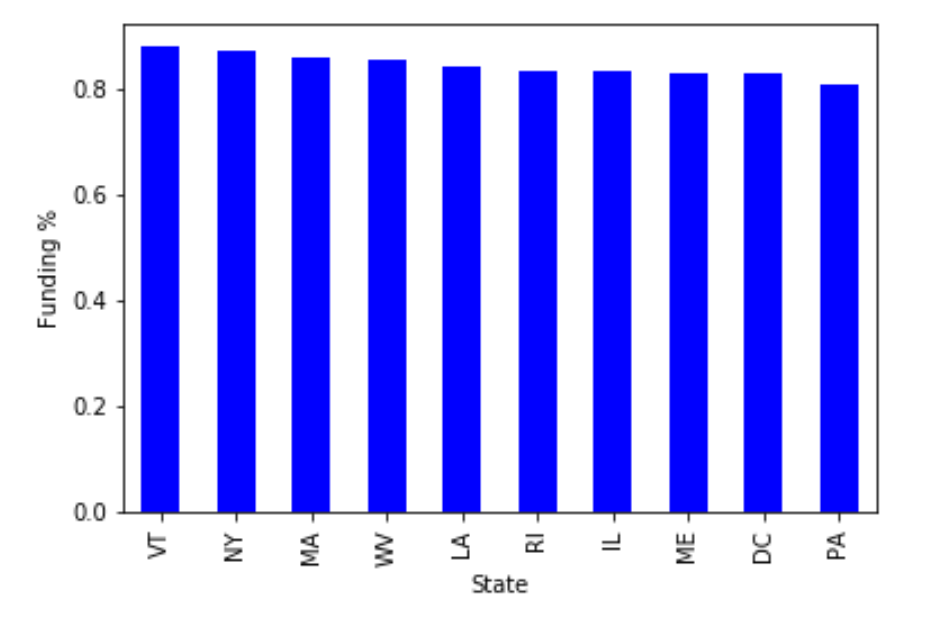

4. Campaign launch location: Vermont is the best state and Wyoming the worst.

5. Additional features (number of updates, reward levels and comments): comments and updates have the heaviest impact on %funded, with values above 20 for each strongly increasing the probability of a successful campaign.

VI. FURTHER WORK

1) Obtain more data: at least 200 rows per subcategory

2) Make the Scrapy code more efficient to minimize scraping time

3) Build a model to predict the success of a project