Data Study to Predict NBA Player Positions

The skills the author demonstrated here can be learned through the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

Goal

The goal of this project is to predict the position of an NBA player based on their statistics.

Solution

Decision Tree & Random Forest Classification

Introduction

Traditionally, the 5 basketball positions employed by NBA teams are the point guard, shooting guard, small forward, power forward, and center. Although the NBA has tried to move toward more position-less basketball, there are still clear distinctions between positions in the player statistics. In this project I will attempt to classify players into one of the five main basketball positions based on player statistics scraped from statscrunch. I use decision trees and a random forest to make the predictions.

Data

1. Center

The center is usually the tallest player on the team. He should be able to post up offensively, receiving the ball with his back to the basket and using pivot moves to hit a variety of short jumpers, hook shots, and dunks. He must also know how to find the open player in the paint, grab rebounds, and block shots.

Average Height: 6’11.25″

Average Weight: 257 lbs

2. Point Guard

The point guard is usually the shortest player on the team. He should be the team’s best passer and ball handler, not primarily a shooter. His traditional role is to push the ball up-court and start the offensive wheels turning. He should either take the ball to the basket or remain near the top of the key, ready to retreat on defense. Point guards are known to get the most assists and steals.

Average Height: 6’2″

Average Weight: 189 lbs

3. Shooting Guard

The shooting guard is usually a little taller than the point guard but shorter than the small forward. He is generally considered the team’s best shooter. A good shooting guard comes off screens set by taller teammates prepared to shoot, pass, or drive to the basket. In addition, the shooting guard must be versatile enough to handle some of the point guard’s ball-handling duties.

Average Height: 6’5.25″

Average Weight: 209 lbs

4. Small Forward

The small forward is considered the all-purpose player on offense: a strong, aggressive player who is tall enough to mix it up inside but agile enough to handle the ball and shoot well. He must be able to score both from the perimeter and from inside.

Average Height: 6’7.75″

Average Weight: 225 lbs

5. Power Forward

Finally, the power forward is a strong, tough rebounder who is also athletic enough to move with some quickness around the lane on offense and defense. He is expected to score when given the opportunity on the baseline, much like a center, but usually has a range of up to 15 feet all around the basket.

Average Height: 6’9.5″

Average Weight: 246 lbs

Variables

My analysis sets out to predict a player's position based on 15 player statistics. The 15 variables I use are:

- Points per Game

- True Shooting Score

- Offensive Rebounds

- Defensive Rebounds

- Total Rebounds

- Assists

- Blocks

- Turnovers

- Team Play Usage

- Offensive Ratings

- Defensive Ratings

- Offensive Win Shares

- Defensive Win Shares

- Win Shares

- Steals

Data Visualization

To start my analysis off, let's look at how some of these variables are distributed between positions. Looking at the distributions, we start to see which variables will best help us to differentiate between each position, as well as give us an indication of the main splitting points the decision tree will make use of. This visualization shows us how the 15 variables are distributed across the 5 positions.

We can clearly see that centers and power forwards dominate the rebound and block statistics. This might lead to some issues in my decision trees, since the more similar two positions are, the more difficult it will be to distinguish between them. The clearest distinction appears when we look at assists. Point guards have by far the most assists, which leads me to believe that this will be the easiest position to consistently predict correctly.

Summary Statistics

Distribution

Heatmap - by Player

Heatmap - by Position

Prediction Using Decision Trees and Random Forest Classification

1. Decision Tree - Good Fit

A decision tree is like a game of 20 questions. It asks different questions based on the answers to previous questions, and at the end it makes a guess based on all the answers. Decision trees are simple to understand and interpret, and they can be easily visualized.

To make a prediction with the tree, we start at the top (the “root” node) and ask questions, traveling left or right in the tree based on the answer (left for true and right for false). At each step, we reach a new node with a new question. In this particular tree, for example, if TRB > 13 (the threshold is different each time the tree is built) and steals are less than 0.95, the player is predicted to be a center. Very easy to visualize and interpret indeed.
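The traversal described above can be sketched in a few lines of Python. This is only an illustration, not the tree from my analysis: the only rule taken from the text is "TRB > 13 and steals below 0.95 gives a center". The other leaves, the `STL` key for steals, and the class and function names are assumptions made up for the example.

```python
from dataclasses import dataclass
from typing import Union


@dataclass
class Leaf:
    position: str  # the predicted position at the end of a path


@dataclass
class Node:
    feature: str        # statistic the question is about, e.g. "TRB"
    threshold: float    # the question is: player[feature] > threshold ?
    if_true: "Tree"     # branch followed when the answer is yes
    if_false: "Tree"    # branch followed when the answer is no


Tree = Union[Node, Leaf]


def predict(tree: Tree, player: dict) -> str:
    """Walk from the root to a leaf, answering one question per node."""
    while isinstance(tree, Node):
        answer = player[tree.feature] > tree.threshold
        tree = tree.if_true if answer else tree.if_false
    return tree.position


# Hypothetical tree: only the path to "Center" mirrors the rule in the text.
example_tree = Node(
    "TRB", 13.0,
    if_true=Node(
        "STL", 0.95,
        if_true=Leaf("Power Forward"),  # assumed leaf, for illustration only
        if_false=Leaf("Center"),        # TRB > 13 and steals not above 0.95
    ),
    if_false=Leaf("Point Guard"),       # assumed leaf, for illustration only
)

big_man = {"TRB": 14.2, "STL": 0.6}     # made-up player
print(predict(example_tree, big_man))
```

A real tree has many more nodes, but the prediction step is always this same walk from the root to a leaf.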

Results :

The results above represent how well my tree performed. Centers were predicted correctly 56% of the time, and power forwards 41% of the time. We see a lot of overlap between these two categories, which is not surprising given that the two positions have very similar distributions of the independent variables. Also as expected, point guards were predicted correctly 80% of the time. I suspect some of the mispredictions will be corrected when we implement a random forest.
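The per-position percentages quoted above are the share of players at each true position that the model labeled correctly. A minimal sketch of that calculation is below. The label lists are toy values invented for the example, not my results, and the function name is an assumption.

```python
from collections import Counter


def per_position_accuracy(actual, predicted):
    """Fraction of players at each true position that were predicted correctly."""
    totals = Counter(actual)
    correct = Counter(a for a, p in zip(actual, predicted) if a == p)
    return {position: correct[position] / totals[position] for position in totals}


# Toy labels for illustration only (not the data from this project).
actual    = ["C", "C", "C", "C", "PF", "PF", "PG", "PG"]
predicted = ["C", "PF", "C", "C", "C", "PF", "PG", "PG"]

accuracy = per_position_accuracy(actual, predicted)

# Counting (actual, predicted) pairs shows where the confusion happens,
# e.g. how often a center was labeled a power forward.
confusion = Counter(zip(actual, predicted))

print(accuracy)
print(confusion[("C", "PF")], confusion[("PF", "C")])
```

Looking at the off-diagonal pairs in `confusion` is how the overlap between two positions shows up in practice.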

2. Decision Tree - Over Fit

An over-fitted model is one that performs better on the training set than a simpler model, but does worse on unseen data, as we saw above. Correct predictions of centers decreased by 13%, power forwards by 2%, point guards by 18%, and small forwards by 23%. Predictions for shooting guards increased by 18%, but overall the over-fitted model performed substantially worse. We went too far and grew our decision tree out to encompass massively complex rules that may not generalize to unknown player positions. It is important to find the sweet spot in the bias-variance trade-off.

3. Random Forest

The random forest (see figure below) takes the decision tree to another level by combining many decision trees into an ensemble. As the diagram shows, random forests add some randomness into the equation.

- One type of randomness involves the set of examples used to build the trees. Instead of training every tree on all of the training examples, each tree is trained on a random sample of them, drawn with replacement. This is called “bootstrap aggregating” (bagging), and it is done to stabilize the predictions and reduce over-fitting.

- The other type of randomness involves the set of features used to build each tree. Only a random subset of the features is considered each time a tree asks a question, so different trees end up relying on different features. Random forests have low bias, and by adding more trees we reduce variance, and thus over-fitting.
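The first kind of randomness can be sketched with nothing but the standard library. This is a simplified illustration, not the code behind my results: the function names are assumptions, and the row count of 1000 is arbitrary. It draws a bootstrap sample, collects the rows that were left out (the "out of bag" rows), and shows how the votes of several trees are combined.

```python
import random
from collections import Counter


def bootstrap_indices(n_rows, rng):
    """Draw n_rows row indices at random, with replacement."""
    return [rng.randrange(n_rows) for _ in range(n_rows)]


def out_of_bag(n_rows, in_bag):
    """Rows that were never drawn; they can be used to estimate the error."""
    chosen = set(in_bag)
    return [i for i in range(n_rows) if i not in chosen]


def majority_vote(votes):
    """The forest's prediction is the most common prediction among its trees."""
    return Counter(votes).most_common(1)[0][0]


rng = random.Random(0)           # fixed seed so the sketch is repeatable
n_rows = 1000                    # arbitrary size, for illustration only
in_bag = bootstrap_indices(n_rows, rng)
oob = out_of_bag(n_rows, in_bag)

# Because rows are drawn with replacement, only about 63% of the distinct
# rows end up in the sample; the rest are out of bag.
distinct_fraction = len(set(in_bag)) / n_rows
print(round(distinct_fraction, 2), len(oob))

print(majority_vote(["C", "PF", "C"]))
```

The out-of-bag rows are what makes the out-of-bag error possible: each tree is scored on rows it never saw during training.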

Before I go into the results, I'll first explain how the system is trained. Let's call the number of trees T. For each tree, the first step is to sample N (100) cases at random, with replacement, to create a subset of the data. Usually about 2/3 of the data is used to train each tree. At each node of the tree, m predictor variables are selected at random. Next, the variable among those m that gives the best split is used to do a binary split on that node. At the next node, another m variables are chosen at random from all predictor variables and the same is done.
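The per-node step can be sketched as follows. This is a hedged illustration rather than the exact procedure used by my model: it assumes Gini impurity as the measure of the "best" split and midpoints between observed values as candidate thresholds, and the function names and the four toy players are invented for the example.

```python
import random
from collections import Counter


def gini(labels):
    """Gini impurity: 0.0 when every label in the group is the same."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())


def best_split(rows, labels, m, rng):
    """Pick m features at random and return the best (feature, threshold, score).

    rows is a list of dicts mapping feature name to value. The score is the
    size-weighted Gini impurity of the two groups (lower is better).
    """
    candidates = rng.sample(sorted(rows[0]), m)   # the m random predictors
    best = None
    for feature in candidates:
        values = sorted({row[feature] for row in rows})
        for low, high in zip(values, values[1:]):
            threshold = (low + high) / 2          # midpoint between neighbors
            left = [lab for row, lab in zip(rows, labels) if row[feature] <= threshold]
            right = [lab for row, lab in zip(rows, labels) if row[feature] > threshold]
            score = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
            if best is None or score < best[2]:
                best = (feature, threshold, score)
    return best


# Four made-up players, for illustration only.
rows = [
    {"AST": 8.1, "TRB": 3.0, "BLK": 0.2},
    {"AST": 7.4, "TRB": 3.5, "BLK": 0.3},
    {"AST": 1.2, "TRB": 11.0, "BLK": 1.8},
    {"AST": 1.5, "TRB": 12.4, "BLK": 2.1},
]
labels = ["PG", "PG", "C", "C"]

split = best_split(rows, labels, m=2, rng=random.Random(1))
print(split)
```

Growing a full tree repeats this step on each of the two resulting groups, drawing a fresh set of m features at every node.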

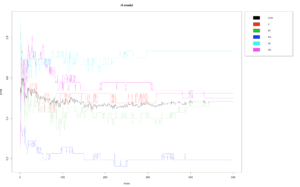

In my case study, I decided to stick to tuning only two parameters - mtry and ntree. These two parameters usually have the biggest impact on the final accuracy. ntree represents the number of trees I will build, and mtry represents the number of variables randomly sampled as candidates at each split.

Tuning Parameters

The first parameter I tuned was the number of trees. I decided to use 180 trees. The plot below shows that the out-of-bag error is lowest around this number.

For the mtry parameter, I decided to randomly sample 9 variables each time. I made my decision based on the following plot. We can see that the out of bag error is minimized at 9.

Results

We can see that the random forest performed better than the decision tree. It predicted point guards significantly better. We also saw increases in center predictions as well as small forward predictions. There were slight decreases in power forward and shooting guard predictions. My data set was rather small, so the random forest would most likely perform a lot better if we had more data to work with. As a next step in my project, I plan on scraping data from 50 seasons, doing a similar analysis, and seeing how my results and models perform with more data.

Conclusion

This analysis set out to predict player positions based on a whole array of player statistics. I made use of a decision tree, and then extended that tree to a whole forest. The random forest performed better, but still left a lot of room for improvement.

https://gist.github.com/jurgen-dejager/7f96c61529ed72c3c846db018572c303