Data Visualization on Local Retail Business Reviews

The skills demonstrated here can be learned through the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

Introduction

Yelp.com is a crowd-sourced local business and service review site that has an average of 92 million unique mobile users per month. As an influential and powerful data portal for a small business’s digital public relations management, the site spotlights customer feedback – both positive and negative. Users can upload pictures, a text review, and rate the business using a star-based rating system (out of five). From customer reviews, then, business owners have a uniquely direct opportunity to see what enhances or detracts from a customer’s experience.

This project seeks to extract insights from scraped Yelp reviews of Levain Bakery, located in Manhattan’s Upper West Side neighborhood. The primary research question concerns the actual context and specific feedback behind a business’s general star rating average – that is, with respect to Levain Bakery, what does a typical positive review center on? And what does a typical negative review specifically take issue with?

Using a natural language processing approach, this project aggregated and contextualized typical customer likes and dislikes in order to present the business with recommendations that could improve the customer experience and overall customer retention.

Methods

The data was scraped from Yelp.com using Python and the Scrapy framework. Four variables and 3618 reviews were scraped, up to and including reviews made in August 2020. Pandas, regex, and NLTK were used to clean and preprocess the data. WordCloud and matplotlib were used to visualize the natural language processing analysis.
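The cleaning step described above can be illustrated with a minimal sketch. It assumes a simple pipeline of lowercasing, stripping non-letter characters with a regular expression, and dropping stopwords. The function name `clean_review` and the small `STOPWORDS` set are hypothetical; the set is only a stand-in for a fuller list such as the English stopwords shipped with NLTK, and this is not necessarily the exact preprocessing used in the project.

```python
import re

# Tiny illustrative stopword set (an assumption for this sketch);
# a real pipeline would likely use a fuller list such as NLTK's.
STOPWORDS = {"a", "an", "and", "the", "is", "it", "was", "i",
             "to", "of", "in", "for", "this", "but"}

def clean_review(text):
    """Lowercase a review, strip non-letter characters, and drop stopwords."""
    text = text.lower()
    # Replace anything that is not a lowercase letter or whitespace with a space.
    text = re.sub(r"[^a-z\s]", " ", text)
    tokens = text.split()
    return [tok for tok in tokens if tok not in STOPWORDS]

sample = "The BEST chocolate chip cookie in NYC!!! Worth the 20-min line."
print(clean_review(sample))
```

Replacing punctuation with spaces rather than deleting it keeps hyphenated or slash-separated words from being fused together.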

Data Results

Because a wordcloud only reflects word frequencies, it was necessary to group reviews by rating in order to add dimension and contextualize each wordcloud visualization. Wordcloud visualizations were made for five star ratings and for aggregate ‘low star’ ratings (three stars and lower) for comparison.

Words Used in Five-Star Reviews

In summary, the natural language processing analysis revealed that five star reviews frequently used words with clear positive affect. For example, words like ‘delicious,’ ‘best,’ and ‘amazing’ were easily noticeable in this wordcloud visualization. Across rating groupings, the most frequently used phrase, ‘chocolate chip’ (among other cookie flavors), suggests that this flavor is the bakery’s most ordered and/or most prominent product.
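The grouping described above can be sketched in plain Python. This example assumes each review is already reduced to a star rating and a list of cleaned tokens; the sample rows and the function name `word_counts_by_group` are made up for illustration and are not taken from the scraped data.

```python
from collections import Counter

# Hypothetical sample rows: (star rating, already-cleaned tokens).
reviews = [
    (5, ["best", "chocolate", "chip", "cookie"]),
    (5, ["amazing", "chocolate", "chip"]),
    (3, ["good", "hype"]),
    (2, ["hype", "line", "better"]),
    (4, ["delicious", "cookie"]),
]

def word_counts_by_group(rows):
    """Count word frequencies separately for five-star and low-star (<= 3) reviews."""
    groups = {"five_star": Counter(), "low_star": Counter()}
    for stars, tokens in rows:
        if stars == 5:
            groups["five_star"].update(tokens)
        elif stars <= 3:
            groups["low_star"].update(tokens)
        # Four-star reviews belong to neither group and are skipped.
    return groups

counts = word_counts_by_group(reviews)
print(counts["five_star"].most_common(2))
print(counts["low_star"].most_common(1))
```

Each `Counter` is a word-to-frequency mapping, which is the kind of input the wordcloud package's `WordCloud.generate_from_frequencies` method accepts, so one cloud can be drawn per rating group.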

Fig. 1: Five-star wordcloud.

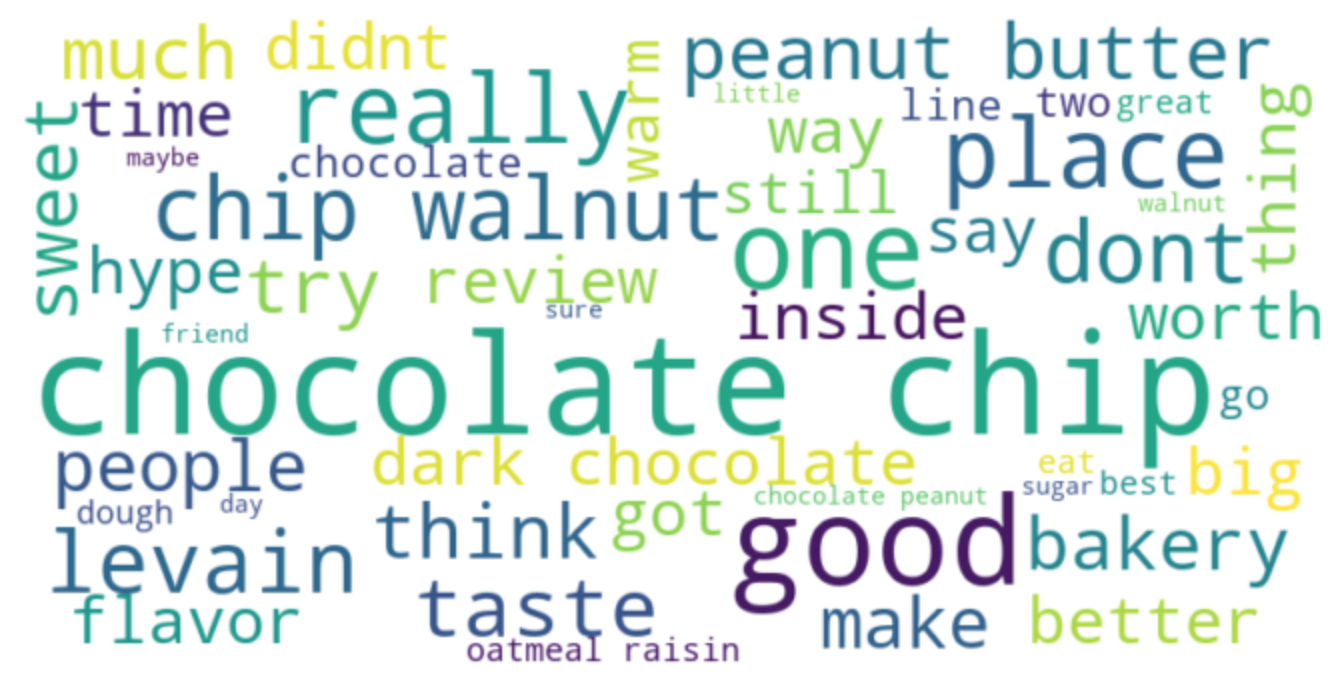

Low-Star Reviews

In the low star, or three star and lower, wordcloud the word with highest positive affect was ‘good’ – a much milder descriptor compared to the effusive words used in the five star reviews. Additionally, words like ‘hype’ and ‘better’ suggested that low star reviewers took issue with the perceived overrated-ness of the bakery and its goods (i.e., they had had ‘better’).

A quick glance at the raw review text confirms the presence of this sentiment throughout the three star reviews. Notably, though, there were no words with obvious negative affect (i.e., words like ‘bad’ that on their own explicitly suggested a negative attitude toward the bakery/product).

Fig. 2: Low star wordcloud.

Contrast

Another interesting difference between the two visualizations is that the words ‘people’ and ‘review’ were both prominent in the low star wordcloud, suggesting that low star reviewers were possibly drawn to the bakery simply because of its highly vaunted reputation on Yelp as a foodie tourist destination.

This contrasts with the prominence of ‘friend’ in the five star wordcloud, which possibly suggests that reviewers either brought a friend with them or had the bakery recommended to them by a friend.

This difference seems to support the idea that people with a better experience at the bakery may have had a more direct referral to the bakery (i.e., vetted by a friend who had already gone) or simply had different expectations – that is, reviewing the bakery and its products as part of a social experience rather than as an ultimate epicurean destination in and of itself. Randomly sampling and scanning raw review text that included the word ‘friend’ informally confirmed this usage context.
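The informal spot check described above could look something like the following sketch. It assumes the raw reviews are available as a list of strings; the helper name `sample_reviews_with_word`, the sample texts, and the fixed seed are all hypothetical choices for illustration.

```python
import random
import re

def sample_reviews_with_word(reviews, word, k, seed=0):
    """Return up to k randomly chosen reviews that contain `word` as a whole word.

    An optional trailing 's' is allowed, so 'friend' also matches 'friends'
    but not 'friendly'.
    """
    pattern = re.compile(rf"\b{re.escape(word)}s?\b", re.IGNORECASE)
    matches = [text for text in reviews if pattern.search(text)]
    rng = random.Random(seed)  # fixed seed so the sample is reproducible
    return rng.sample(matches, min(k, len(matches)))

# Hypothetical review texts, not taken from the scraped data.
raw_reviews = [
    "Came here with a friend and split two cookies.",
    "My friends told me I had to try this place.",
    "Friendly staff, but the line was long.",
    "Not worth the hype.",
]
for text in sample_reviews_with_word(raw_reviews, "friend", k=5):
    print(text)
```

Matching on word boundaries avoids counting words like ‘friendly’, which describe the staff rather than a companion.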

Fig. 3: Raw text from five-star reviews illustrating the social experience factor.

Fig. 4: Raw review text from low star reviews demonstrating customer disappointment from hype-based expectations.

Interestingly, relatively frequent usage of words like ‘line’ and ‘inside’ across ratings suggests that customers at every rating level waited to get into the establishment. However, the degree to which customers felt the wait was ‘worth’ it likely varied with their rating.

Conclusion

Ultimately, in response to the original research question, what seemed to differentiate customer experience and satisfaction was their expectation from the outset. Those expecting an extraordinary cookie were possibly more likely to be disappointed simply because they had gone to the bakery solely to try the product based on the business’s impressive online presence.

In contrast, the prominence of the word ‘friend’ in the five star wordcloud suggests that the bakery garners more favorable impressions as part of a social experience (i.e., as part of a trip out in the city, or to the bakery). This reveals the possibly double-edged nature of the brand’s formidable online presence (i.e., inflating a customer’s expectations of the product).

The business could address and potentially reduce the number of disappointed customers by expanding the seating area and adding more tables, or by offering promotions for first-time visitors that encourage them to bring a friend. Emphasizing the social aspect of customers' experience in brand messaging could then improve overall business by capturing more positive first impressions and boosting the pool of customers likely to return to the store.

Future Research

Future research might explore the geographic distribution of reviewers – for example, which state are most visitors from? Are there more out-of-state reviewers? Does rating correlate with whether or not a visitor was from in-state versus out-of-state? Additionally, while this natural language processing analysis revealed some interesting insights, a more fine-grained approach to the raw text could reveal more precise, context-dependent information – for example, utilizing an n-gram analysis approach.
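As a rough illustration of the n-gram idea, the sketch below counts contiguous word pairs (bigrams) in a token list using only the standard library. The function name `ngram_counts` and the sample tokens are hypothetical, and in practice n-grams would be built per review so that pairs do not span two different reviews.

```python
from collections import Counter

def ngram_counts(tokens, n=2):
    """Count contiguous n-grams in a list of tokens."""
    # zip over n shifted copies of the list yields each run of n adjacent tokens.
    grams = zip(*(tokens[i:] for i in range(n)))
    return Counter(" ".join(gram) for gram in grams)

# Hypothetical cleaned tokens, for illustration only.
tokens = ["chocolate", "chip", "walnut", "cookie", "chocolate", "chip"]
bigrams = ngram_counts(tokens, n=2)
print(bigrams.most_common(1))
```

Unlike single-word counts, bigrams keep phrases such as ‘chocolate chip’ or ‘not worth’ together, which preserves some of the context a wordcloud loses.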

While this research places considerable weight on a business’s Yelp reviews (bringing into focus what kind of customer is more likely to read reviews or review a business on Yelp, as well as review authenticity and the Yelp review authentication process), it also represents a targeted and empirically supported approach to a small business’s reputation management and subsequent business operation improvements.

Link to code: GitHub repository

Sources of Our Data

Levain Bakery. Aug, 2020. https://www.yelp.com/biz/levain-bakery-new-york?osq=Levain+Bakery

28 Groundbreaking Yelp Statistics to Make 2020 Count. May 19, 2020. https://review42.com/yelp-statistics/