Data Analysis on Apple's Customer Satisfaction on Products

The skills demonstrated in this post can be learned by taking the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

Introduction

As a self-proclaimed tech enthusiast, I've been following the tech review and data community, especially on YouTube, for quite a while. During that time, I noticed a pattern after every new iPhone release: highly popular videos and articles would appear criticizing initial problems with the new iPhone.

However, Apple's sales numbers do not seem to be impacted by this negative atmosphere around its release date. This made me wonder who is most impacted by these videos and articles and whether or not they affect Apple's customer satisfaction. Should these reviews, in fact, impact Apple's customers, a more loosely defined release schedule, giving Apple more time to refine new features of the iPhone, might increase Apple's customer satisfaction.

Why amazon.co.uk?

I chose to scrape amazon.co.uk for several reasons. Firstly, Europe, besides China, is arguably the most important foreign market for the iPhone. Thus, customer reviews from the UK present relevant information for Apple. Secondly, Amazon enabled me to gather information not only about the reviews themselves (Rating, Title, Text, Helpful Votes) but also about the Amazon user who published each review. By clicking on the username, I was able to collect information about each user, such as the total number of helpful votes and reviews as well as all published reviews.

In conducting my research, I chose to exclusively focus on reviews for the iPhone X from November 2017 to September 2018 to only gather reviews for the newest iPhone at that point in time. Future research could apply the same concept to older iPhones.

Workflow of Our Data

I used Selenium to scrape amazon.co.uk, mainly because of its flexibility in navigating between different pages, such as from a review to the reviewer's profile.

After scraping the data, I prepared and cleaned it, mostly using pandas, NumPy, and Python's re module. This step included identifying and appropriately dealing with missing values, adapting my code, and reformatting the gathered data to enable further processing and analysis.
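The reformatting step can be sketched with the re module. This is a minimal illustration, not the author's code: the raw string formats ("12 November 2017", "3 people found this helpful", "5.0 out of 5 stars") are assumptions about what the scraped fields look like, and the function names are hypothetical.

```python
import re
from datetime import date, datetime

# Assumed raw formats; the actual scraped strings may differ.
DATE_RE = re.compile(r"(\d{1,2} [A-Za-z]+ \d{4})")
VOTES_RE = re.compile(r"^([\d,]+|One) ")
RATING_RE = re.compile(r"^(\d(?:\.\d)?) out of 5")


def parse_date(text):
    """Extract a date such as '12 November 2017'; return None if absent."""
    match = DATE_RE.search(text or "")
    if not match:
        return None
    return datetime.strptime(match.group(1), "%d %B %Y").date()


def parse_helpful_votes(text):
    """Turn 'One person ...' or '1,204 people ...' into an int.

    Missing text is treated as zero votes, which is itself an assumption
    about how to handle that missing value.
    """
    match = VOTES_RE.match((text or "").strip())
    if not match:
        return 0
    token = match.group(1)
    return 1 if token == "One" else int(token.replace(",", ""))


def parse_rating(text):
    """Turn '4.0 out of 5 stars' into 4.0; return None if unparseable."""
    match = RATING_RE.match((text or "").strip())
    return float(match.group(1)) if match else None
```

Returning None for an unparseable date or rating keeps missing values visible, so they can be counted and handled deliberately later instead of silently becoming zeros.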

Then, I manipulated and analyzed the data, breaking it down into subgroups and comparing their characteristics.

Finally, I visualized the results of my analysis with matplotlib, seaborn, and wordcloud.

Number of Reviews per Month

The first part of my analysis focused on the number of reviews per month from the iPhone's first reviews in November 2017 until September 2018. Contrary to my initial expectations, there were relatively few reviews around the time of the iPhone's release. Then, around December 2017, the number of reviews started to increase significantly, reaching its peak in March/April of 2018.

I suspect there are two reasons for the relatively low number of reviews around the release. Firstly, the iPhone is released later in Europe than it is in the US, possibly causing a delay in reviews. Secondly, customers in the UK might have waited until Christmas to purchase the iPhone, which could explain the increase in December.
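The monthly counts behind this part of the analysis can be sketched as follows. The sketch uses only the standard library rather than pandas, and the function names are hypothetical; the default date range is the November 2017 to September 2018 window described above.

```python
from collections import Counter
from datetime import date


def month_range(start, end):
    """Yield (year, month) tuples from start to end, inclusive."""
    year, month = start
    while (year, month) <= end:
        yield (year, month)
        year, month = (year + 1, 1) if month == 12 else (year, month + 1)


def reviews_per_month(review_dates, start=(2017, 11), end=(2018, 9)):
    """Count reviews per month, filling months without reviews with 0.

    Dates outside the [start, end] window are ignored.
    """
    counts = Counter((d.year, d.month) for d in review_dates)
    return {ym: counts.get(ym, 0) for ym in month_range(start, end)}
```

Filling empty months with zero matters for the plot: without it, a month with no reviews would simply be missing from the x-axis instead of showing up as a dip.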

Verified versus Unverified Reviews

Next, I split the reviews into two categories: verified and unverified reviews. Overall, verified reviews clearly outnumbered unverified reviews. The only month in which unverified reviews exceeded verified reviews was November 2017, directly after the iPhone's release. This finding fueled my suspicion that the generally negative atmosphere on the Internet at the time of the iPhone's release is created not by Apple customers, but by people who dislike Apple as a company.

Furthermore, this line plot shows that the previously identified increase in reviews is almost exclusively driven by verified reviews, while unverified reviews decrease after November 2017 and never really increase again.
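The comparison of verified and unverified counts per month can be sketched like this. It assumes each review has already been reduced to a month label such as "2017-11" and a boolean verified flag; both the input shape and the function names are hypothetical.

```python
from collections import defaultdict


def counts_by_verification(reviews):
    """Count verified and unverified reviews per month.

    reviews: iterable of (month_label, is_verified) pairs.
    """
    table = defaultdict(lambda: {"verified": 0, "unverified": 0})
    for month, is_verified in reviews:
        table[month]["verified" if is_verified else "unverified"] += 1
    return dict(table)


def months_where_unverified_lead(table):
    """Return the months in which unverified reviews outnumber verified ones."""
    return sorted(
        month for month, c in table.items() if c["unverified"] > c["verified"]
    )
```

Month labels in "YYYY-MM" form sort chronologically as plain strings, which is why the helper can simply call sorted on them.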

Having established the substantial difference between the number of verified and unverified reviews, I decided to dig deeper and compare the average rating of these two groups of reviews. The following box plot visualizes the result:

Averages

This box plot shows that the two types of reviews differ tremendously not only in the number of reviews but also in the average rating. While the average ratings for verified reviews are clustered between 4.50 and 4.75, with the 1st and 3rd quartiles extremely close to each other, the average ratings of unverified reviews exhibit substantial variability. In addition, the median of the unverified average ratings, at approximately 3.50, provides further evidence for my suspicion that actual Apple customers are generally very satisfied with their iPhones and not affected by negative reviews on the Internet.
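The quartiles and median read off the box plot can also be computed directly. The sketch below assumes the box plot was drawn over monthly average ratings for each review type, which the text does not state explicitly; the function names are hypothetical.

```python
from collections import defaultdict
from statistics import mean, quantiles


def monthly_average_ratings(reviews):
    """Average the ratings within each month.

    reviews: iterable of (month_label, rating) pairs for ONE review type.
    """
    by_month = defaultdict(list)
    for month, rating in reviews:
        by_month[month].append(rating)
    return {month: mean(ratings) for month, ratings in by_month.items()}


def box_summary(values):
    """Return the three quartiles a box plot draws (needs at least 2 values)."""
    q1, median, q3 = quantiles(values, n=4, method="inclusive")
    return {"q1": q1, "median": median, "q3": q3}
```

Running box_summary once on the verified monthly averages and once on the unverified ones gives the numbers to compare: a small gap between q1 and q3 corresponds to the tight box described for verified reviews.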

To confirm these findings, I took a closer look at reviews published in November 2017 grouped by the type of review:

While the relatively low number of reviews does not allow for very reliable conclusions, there is still a visible difference between the two groups, with verified ratings generally being more favorable than unverified ratings.

Analyzing the Review Text and Data

In my next step, I focused exclusively on the review texts of verified reviews and, after preparing the data, generated a word cloud that allows for a quick overview of the general sentiment among verified reviews:

Again, the majority of words in this word cloud are positive and express satisfaction with the iPhone X ("happy", "excellent", "best"). In addition, the delivery seems to have been very important for customers, which is not necessarily an insight for Apple, but definitely for Amazon.

Finding negative words in this word cloud requires either very good eyes or a magnifying glass. If you possess either, you might be able to spot "crashed" or "smashed". However, the small size and rarity of negative words provide further evidence for the high satisfaction of actual Apple customers.
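A word cloud is driven by word frequencies, so the preparation step can be sketched as a simple count. The stopword list here is a small illustrative assumption, not the one used for the figure, and the function name is hypothetical.

```python
import re
from collections import Counter

# Illustrative stopword list (an assumption); a real one would be much longer.
STOPWORDS = {
    "the", "and", "was", "this", "with", "for", "very", "that", "have", "has",
}


def word_frequencies(texts, stopwords=STOPWORDS):
    """Count lowercase words across review texts, skipping stopwords
    and words shorter than three characters."""
    counts = Counter()
    for text in texts:
        words = re.findall(r"[a-z']+", text.lower())
        counts.update(w for w in words if len(w) > 2 and w not in stopwords)
    return counts
```

The resulting mapping can be passed to the wordcloud package, whose WordCloud class provides generate_from_frequencies for exactly this kind of input. Looking at the raw counts also makes it easy to check how rare words such as "crashed" or "smashed" really are, without a magnifying glass.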

Fake Review Index

At this point, I was almost ready to wrap up my project and include a few more descriptive visualizations to support my point.

However, after sampling some of the reviews, I started having doubts as to how many reviews were published by people who had actually purchased the product. Some reviews, even verified reviews, seemed very suspicious to me. In order to decrease the uncertainty introduced by not knowing which reviews are real, I came up with something that I named the Fake Review Index.

To incorporate the Fake Review Index into my research, I decided to go back to scraping and gathered not only reviews but also relevant information regarding each user. Then, I used this information to calculate the Fake Review Index based on the following 6 factors:

Calculation Steps

I calculated the Fake Review Index by defining several scenarios for each of these factors and assigning a score to each scenario. For instance, the highest score for the Number of Helpful Votes/Number of Reviews factor is 10. One scenario for this factor is the following: if the ratio for a given user is smaller than or equal to 1 (that is, the user received at most one helpful vote per review on average), the user's Fake Review Index is increased by 10. Because every suspicious pattern adds points, the lower the Fake Review Index, the more likely it is that the review is genuine.

While the Fake Review Index is not extremely sophisticated (yet), I am very confident it is able to at least identify the most obvious fake reviews and filter them out.
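The additive scoring described above can be sketched as a list of rules, each returning the points it contributes. Only the helpful-votes rule with its score of 10 comes from the description; the five-star rule, its threshold, its weight, and all field and function names are hypothetical placeholders, and the intermediate scenarios of each factor are omitted.

```python
from dataclasses import dataclass


@dataclass
class UserProfile:
    total_helpful_votes: int
    total_reviews: int
    five_star_share: float  # HYPOTHETICAL field: fraction of 5-star ratings


def helpful_votes_rule(user):
    """Documented scenario: at most one helpful vote per review adds 10."""
    if user.total_reviews == 0:
        return 10  # assumption: no review history is scored as the worst case
    ratio = user.total_helpful_votes / user.total_reviews
    return 10 if ratio <= 1 else 0


def five_star_rule(user):
    """HYPOTHETICAL rule: the 0.9 threshold and weight 5 are invented."""
    return 5 if user.five_star_share >= 0.9 else 0


RULES = [helpful_votes_rule, five_star_rule]


def fake_review_index(user, rules=RULES):
    """Sum the points of all rules; a higher total is more suspicious."""
    return sum(rule(user) for rule in rules)
```

Keeping each factor as its own function makes it straightforward to add the remaining factors or to adjust a weight without touching the others.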

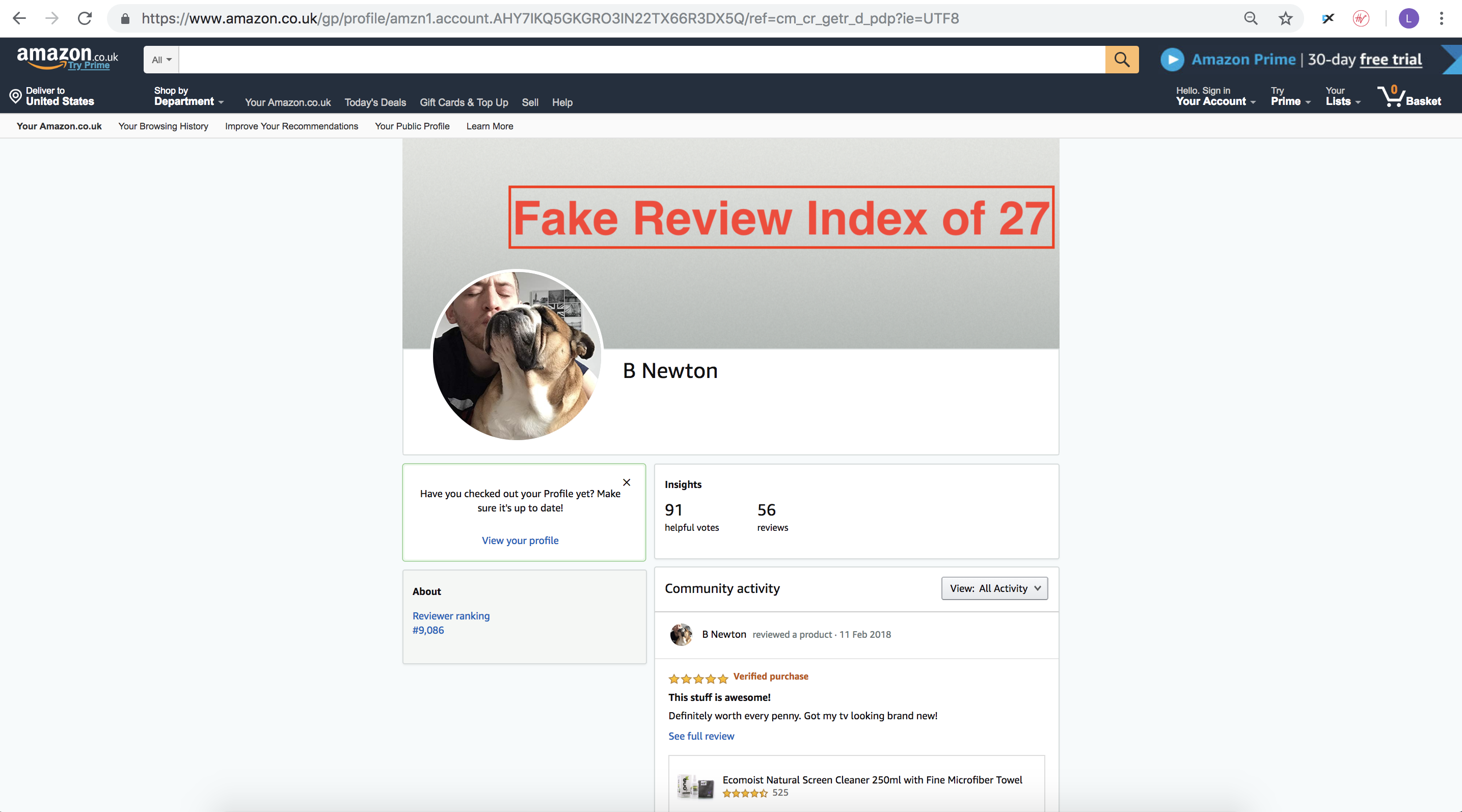

To illustrate the Fake Review Index, I included the scores of two different users who each published a review for the iPhone X:

This user received a Fake Review Index of 74.5. As evident from his reviews, he barely receives helpful votes and his reviews do not appear to be genuine whatsoever. Furthermore, this user mainly uses 5-star ratings and publishes several reviews for different products on the same day. Thus, he receives a high Fake Review Index Score.

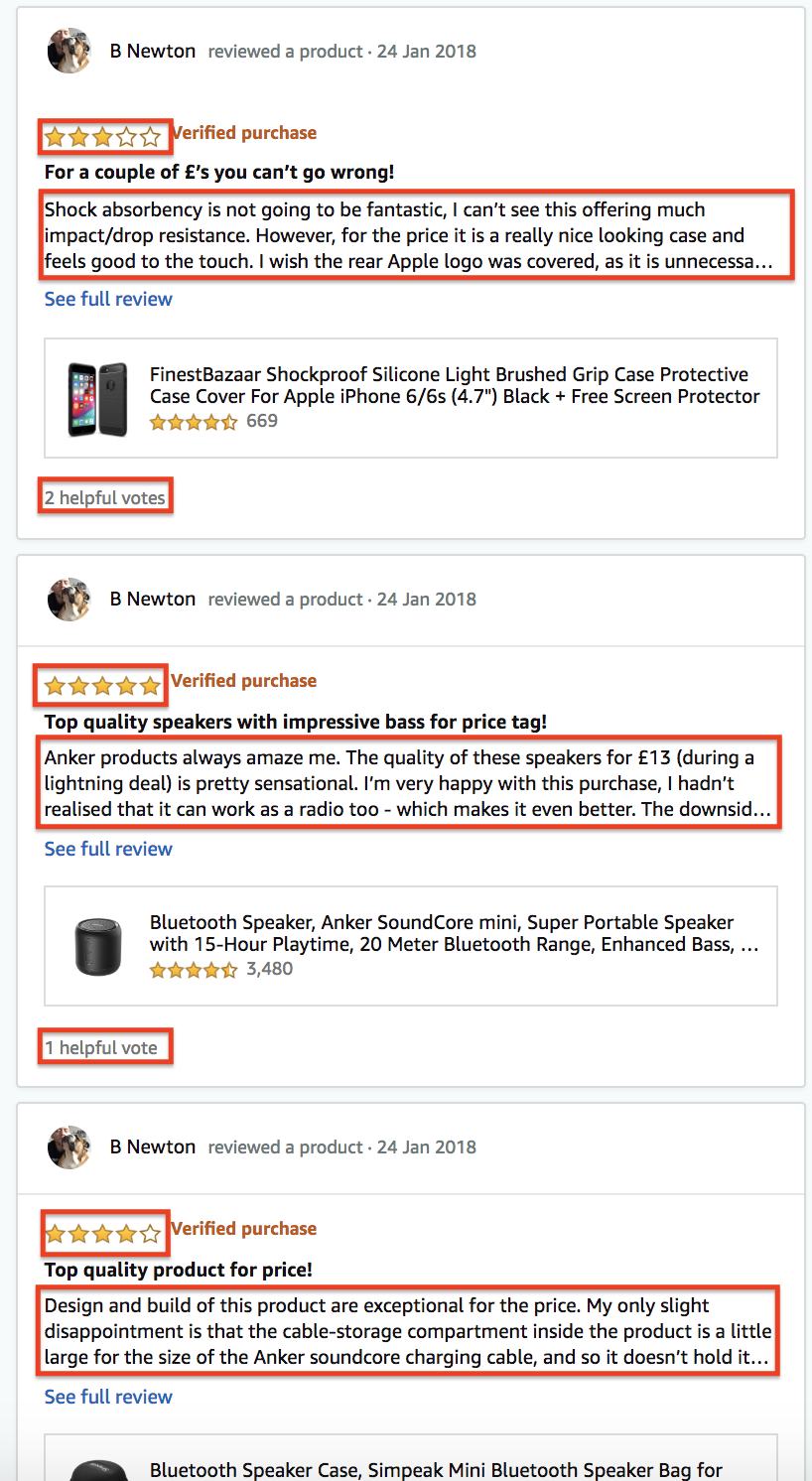

The second user received a Fake Review Index of 27, making his reviews likely to be genuine. Indeed, this profile seems to belong to an active member of the Amazon review community, as his reviews actually detail his experience with the product, do not consist only of 5-star ratings, and receive more helpful votes.

Findings

Finally, after grouping the reviews by their Fake Review Index, I made an interesting observation: the more likely a review is to be genuine, the lower the average rating. While the decrease is not extremely large, it is still noticeable, as the average rating for reviews that are very likely genuine is approximately 3.8. This rating is still high and indicates that Apple's customers are satisfied. Nevertheless, it is not as astronomically high as the previously discussed 4.5 - 4.75 average for verified reviews.
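Grouping reviews by their Fake Review Index and averaging the ratings per group can be sketched as below. The bucket boundaries (30 and 60) and labels are hypothetical; they are chosen only so that the two example users above, with scores of 27 and 74.5, land in different groups.

```python
from collections import defaultdict
from statistics import mean

# HYPOTHETICAL bucket boundaries: (exclusive upper bound, label).
BUCKETS = [
    (30, "likely genuine"),
    (60, "uncertain"),
    (float("inf"), "likely fake"),
]


def bucket_for(index):
    """Return the label of the first bucket whose upper bound exceeds index."""
    for upper, label in BUCKETS:
        if index < upper:
            return label


def average_rating_by_bucket(reviews):
    """reviews: iterable of (fake_review_index, rating) pairs."""
    grouped = defaultdict(list)
    for index, rating in reviews:
        grouped[bucket_for(index)].append(rating)
    return {label: mean(ratings) for label, ratings in grouped.items()}
```

Reporting the number of reviews in each bucket alongside the average would help judge how much weight a difference between buckets deserves.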

Wrap-Up

In summary, Apple's customer satisfaction is still very high. The negative atmosphere on the Internet at the time of the iPhone's release can mainly be attributed to non-customers. Apple's customer satisfaction, however, is not as high as one might suspect at first glance when considering how genuine the reviews are. To answer my initial research question, there does not seem to be an urgent need for Apple to implement a more loosely defined release schedule.

Future extensions of this project would include a more refined and sophisticated fake review index that could then be universally applied to different review websites to enable companies to filter out the most relevant reviews and trends among their customers.