Exploring Jim Cramer's Stock Recommendations Via SeekingAlpha.com

Introduction:

As a casual investor, I have always been interested in making more data-driven investment decisions. Investors face an overload of information: stock recommendations, warnings, and hundreds of thousands of pundits promising "the next big thing" if you subscribe to their blogs.

With that in mind, the goal of my web scraping project was to cut through the noise by gathering one recommender's historic recommendations and evaluating his or her performance over the long term. I picked the popular stock website SeekingAlpha.com and one of the more notable analyst personalities, Jim Cramer. Jim Cramer has hosted the CNBC show "Mad Money" since 2005 and has gained much popularity for his theatrics on set. In each episode, Jim hosts a segment called the "Lightning Round," where he fields audience members' calls and labels several stocks as either bullish or bearish for that day.

Methodology:

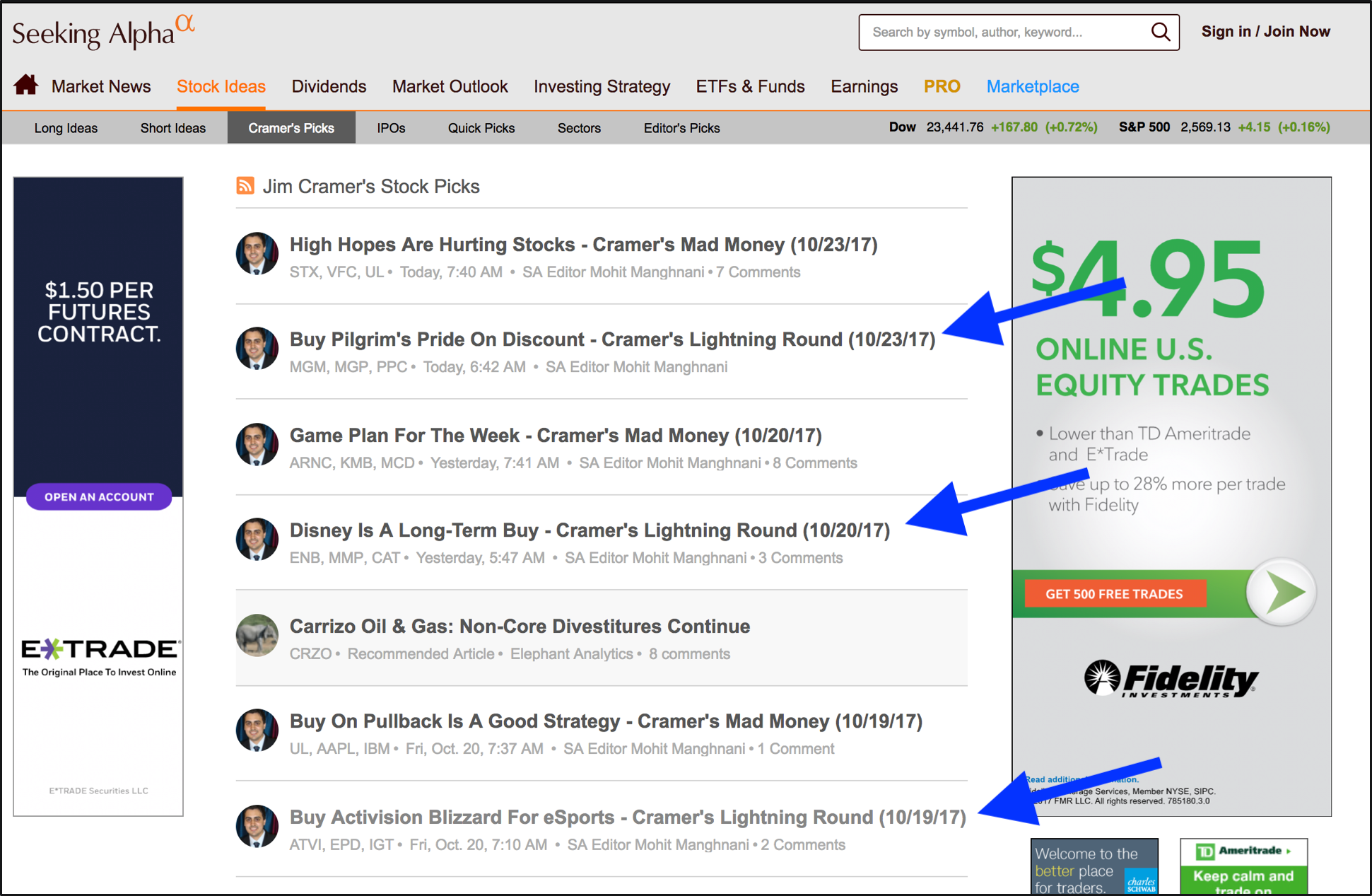

On the website SeekingAlpha.com is a featured section called "Cramer's Picks." And within that feed of articles are summaries of Jim's lightning rounds as you can see in the image below.

Using the Python package Selenium, I ran a script that repeatedly scrolled through the feed and pulled the URL of each article with "Lightning Round" in the title until a specified number of links was retrieved. Then, to gain experience with additional packages, I opted to use another scraping framework, Scrapy, to visit each of the links and retrieve the relevant ticker symbols. As you can see from the image below, the articles were typically structured with a paragraph of "Bullish Calls" followed by a series of recommendations.
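The scroll-and-collect step can be sketched roughly as follows. This is a minimal illustration rather than the script used in the project: the function names, the `max_scrolls` cap, and the assumption that each article link is a plain `<a>` element whose text is the article title are all hypothetical.

```python
import time

# String value of selenium's By.TAG_NAME, written out so this helper can be
# exercised without a browser. In real code, prefer importing By from selenium.
TAG_NAME = "tag name"


def is_lightning_round(title):
    """True when an article title mentions the Lightning Round segment."""
    return "lightning round" in title.lower()


def collect_links(driver, max_links, pause=2.0, max_scrolls=200):
    """Scroll the feed until max_links matching article URLs are collected.

    `driver` is a Selenium WebDriver that has already loaded the feed page.
    """
    links = []    # matching URLs, in the order they were discovered
    seen = set()  # guards against re-adding links after each scroll
    for _ in range(max_scrolls):
        for anchor in driver.find_elements(TAG_NAME, "a"):
            href = anchor.get_attribute("href")
            if href and href not in seen and is_lightning_round(anchor.text):
                seen.add(href)
                links.append(href)
                if len(links) >= max_links:
                    return links
        # Jump to the bottom so the page loads its next batch of articles.
        driver.execute_script("window.scrollTo(0, document.body.scrollHeight);")
        time.sleep(pause)
    return links
```

With a real browser, this would be called after creating a driver (for example `webdriver.Chrome()`) and calling `driver.get(...)` on the feed page. The `max_scrolls` cap keeps the loop from running forever if the feed has fewer matching articles than requested.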

While this strategy was successful for the first few articles, I quickly ran into SeekingAlpha's anti-scraping measures and was repeatedly redirected to the error page below.

Undeterred, I researched effective ways to circumvent such blocks and found that cycling through different IP addresses and User-Agents would likely yield results. While many paid services were available, I opted to use the open-source Tor network, which provides anonymous browsing and lets requests appear to come from changing IP addresses. After finding the relevant packages, I added a Tor middleware component to my Scrapy framework and successfully scraped the desired data.
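A Tor middleware of this kind might look something like the sketch below. It is not the project's actual code. It assumes Tor is running locally with its control port enabled, that an HTTP proxy such as Privoxy sits in front of Tor (Scrapy's proxy support is HTTP-based), and that the `stem` package is installed. The class name, the User-Agent list, and the `renew_every` parameter are hypothetical.

```python
import random

# Assumed local setup: Privoxy forwarding to Tor, and Tor's control port enabled.
TOR_HTTP_PROXY = "http://127.0.0.1:8118"  # Privoxy's default listening port
TOR_CONTROL_PORT = 9051                   # Tor's default control port

# Example User-Agent strings to rotate through (illustrative values only).
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/605.1.15 "
    "(KHTML, like Gecko) Version/17.0 Safari/605.1.15",
    "Mozilla/5.0 (X11; Linux x86_64; rv:121.0) Gecko/20100101 Firefox/121.0",
]


def renew_tor_identity(password=None):
    """Ask Tor for a new circuit, which usually means a new exit IP."""
    from stem import Signal                 # imported lazily; requires stem
    from stem.control import Controller

    with Controller.from_port(port=TOR_CONTROL_PORT) as controller:
        controller.authenticate(password=password)
        controller.signal(Signal.NEWNYM)


class TorProxyMiddleware:
    """Scrapy downloader middleware: route requests through Tor and rotate User-Agents."""

    def __init__(self, renew_every=25, renew=renew_tor_identity):
        self.renew_every = renew_every  # request a new identity every N requests
        self.renew = renew
        self.count = 0

    def process_request(self, request, spider):
        self.count += 1
        if self.count % self.renew_every == 0:
            self.renew()
        request.meta["proxy"] = TOR_HTTP_PROXY
        request.headers["User-Agent"] = random.choice(USER_AGENTS)
        return None  # let Scrapy continue processing the request
```

The middleware would be enabled through Scrapy's `DOWNLOADER_MIDDLEWARES` setting, for example `{"myproject.middlewares.TorProxyMiddleware": 543}`, where the module path and priority are placeholders. Tor rate-limits new-identity requests, so renewing on every single request is unlikely to give a fresh IP each time.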

Analysis:

To evaluate whether these recommendations were actually valuable, I then retrieved historic pricing data for each of the scraped ticker symbols. I added code to my script to pull data from the Yahoo Finance API and retrieved adjusted prices for the ticker symbols on each of the dates they were mentioned. For a rudimentary analysis, I also pulled the price of each stock 7, 30, and 180 days after it appeared in an article to gain a rough understanding of the optimal holding time. With this, I was able to analyze a final dataset of 1,127 articles, shown below.
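The holding-period calculation can be sketched as follows. This is an illustration under stated assumptions, not the project's code: it takes prices that have already been downloaded as a dictionary mapping dates to adjusted closing prices, it counts calendar days, and when the target date is not a trading day it rolls forward to the next available price. The function names are hypothetical.

```python
from bisect import bisect_left
from datetime import date, timedelta

HOLDING_DAYS = (7, 30, 180)


def price_on_or_after(prices, target):
    """Return the first available price on or after `target`, or None.

    `prices` maps datetime.date -> adjusted close for one ticker.
    """
    days = sorted(prices)
    i = bisect_left(days, target)
    return prices[days[i]] if i < len(days) else None


def forward_returns(prices, mention_date, horizons=HOLDING_DAYS):
    """Percentage return from the mention date to each holding horizon."""
    start = price_on_or_after(prices, mention_date)
    results = {}
    for n in horizons:
        end = price_on_or_after(prices, mention_date + timedelta(days=n))
        if start is None or end is None:
            results[n] = None  # not enough price history for this horizon
        else:
            results[n] = (end / start - 1.0) * 100.0
    return results


if __name__ == "__main__":
    # Made-up prices, purely to show the call shape.
    sample = {
        date(2020, 1, 1): 100.0,
        date(2020, 1, 8): 110.0,
        date(2020, 2, 3): 90.0,
    }
    print(forward_returns(sample, date(2020, 1, 1)))
```

Returning `None` for horizons without enough history keeps recent articles from being silently scored with a stale price.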

Findings:

I visualized returns in the following chart by plotting both the distribution and a box plot for each individual stock. I have embedded the interactive visualization below using the Python implementation of Plotly. When hovering over a specific box plot, one can view summary statistics (mean, median, quartiles, etc.). A few observations: variance increased as the holding time increased, but the 180-day timeframe also had the highest mean and median. While it was surprising that the overall trend was positive, the means and medians are very close to zero, which suggests that following his recommendations does little to produce significant returns.
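For readers who want to reproduce this kind of chart, here is a minimal sketch. It assumes the returns have already been grouped by holding period into a dictionary such as `{"7-day": [...], "30-day": [...], "180-day": [...]}`. The function names are hypothetical, and the quartiles computed by Python's `statistics` module may differ slightly from the ones Plotly shows on hover, since the two can use different interpolation methods.

```python
import statistics


def summarize(returns):
    """Mean, median, quartiles, and standard deviation for one holding period."""
    q1, _, q3 = statistics.quantiles(returns, n=4)
    return {
        "mean": statistics.mean(returns),
        "median": statistics.median(returns),
        "q1": q1,
        "q3": q3,
        "stdev": statistics.stdev(returns),
    }


def build_box_figure(returns_by_horizon):
    """Build one box per holding period; hovering shows the box statistics."""
    import plotly.graph_objects as go  # imported lazily; requires plotly

    fig = go.Figure()
    for label, values in returns_by_horizon.items():
        # boxmean=True draws the mean in addition to the median.
        fig.add_trace(go.Box(y=list(values), name=label, boxmean=True))
    fig.update_layout(yaxis_title="Return (%)")
    return fig
```

Calling `build_box_figure(...).show()` opens the interactive chart, while `summarize` gives the same kind of numbers in tabular form for comparing holding periods side by side.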

To further investigate some of the more notable articles, I summarized the top and bottom performing stocks in the below slide:

One thing I noticed was that for some of the worst-performing recommendations, the name of the stock was mentioned in the article title. While this observation definitely warrants further validation, I thought it was interesting to note the cases I discovered:

- 7-day Article: Cramer's Lightning Round - Pfizer Wasn't Built In A Day (8/22/12)

- 30-day Article: Cramer's Lightning Round - It Is Hard to Get Better Than MarkWest (7/18/11)

Summary:

Lastly, I provided some summary information of the data collected in the slide below:

Overall, the 30-day strategy produced the best returns, but as expected, Cramer's recommendations did not outperform the market as a whole. Simply purchasing an index fund tracking the S&P 500 and holding it over the same period that the articles cover would have yielded a return of nearly 93%. Nevertheless, I found it interesting to visualize how an analyst's recommendations performed and to explore whether any hidden trends exist.

While the focus of my project was developing the technical implementation behind building a new data source and integrating with external APIs, I would love to explore more nuanced financial analyses and extend this project:

- Gathering more granular financial data and understanding whether his recommendations influence a particular price over the next hours, days, or weeks.

- Dividing recommendations by industry and comparing results to the relevant competitors/ETFs. Perhaps his recommendations in total underperform, but he may do particularly well in a given sector.

Thanks for reading!

Don't hesitate to reach out if you have any additional questions or feedback on the approach and techniques used in this project. Feel free to access my code repository on GitHub here.