H-1B Visa Applications Exploration using Shiny

Contributed by Sharan Naribole. He is currently enrolled in the part-time online bootcamp organized by NYC Data Science Academy (Dec 2016 - April 2017). This blog post is based on his bootcamp project, an R Shiny app.

Abstract

In this project, I expand on my R EDA project on H-1B visa applications filed in the period 2011-2016. My goal is to create a web app that enables flexible exploration of key metrics, including the number of applications and the annual wage, for different job positions, employers, states, and time periods. The app can be accessed at https://sharan-naribole.shinyapps.io/h_1b/. In this blog post, I describe the key features of the app.

H-1B Visa Data Introduction

The H-1B is an employment-based, non-immigrant visa category for temporary foreign workers in the United States. For a foreign national to apply for an H-1B visa, a US employer must offer a job and petition for the H-1B visa with the US immigration department. This is the most common visa status applied for and held by international students once they complete college or higher education (Masters, PhD) and work in a full-time position. The Office of Foreign Labor Certification (OFLC) publishes program data about the immigration programs, including the H-1B visa. The disclosure data, updated annually, is available at https://www.foreignlaborcert.doleta.gov/performancedata.cfm

In this project, I utilize over 3 million records of H-1B petitions in the period 2011-2016.

App Inputs

Figure 1. Shiny app homepage

As shown in Figure 1, inputs to the app are provided in the side panel. The app takes multiple inputs from the user and visualizes the corresponding subset of the data set. Summary of the inputs:

- Year:

Slider input for the time period. When a single value is chosen, only that year is considered for data analysis.

- Job Type:

Default inputs are Data Scientist, Data Engineer and Machine Learning. These are selected based on my personal interest. Explore different job titles, e.g., Product Manager or Hardware Engineer. Type up to three job types in the free-text inputs. I avoided a drop-down menu as there are thousands of unique job titles in the dataset. If none of the inputs match any record, all job titles in the data subset defined by the other inputs are used.

- Location:

The granularity of the location parameter is the state, with the default option being the whole of the United States.

- Employer Name:

The default inputs are left blank, as that might be the most common use case. Explore data for specific employers, e.g., Google or Amazon. This input behaves much like the Job Type input.

- Metric:

The three metric choices are the total number of H-1B visa applications, the number of certified visa applications, and the median annual wage.

- Plot Categories:

Additional control parameter that sets the upper limit on the number of categories used for data visualization. The default value is 3 and can be increased up to 15.
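For readers who want to see how such a side panel might be declared, here is a minimal sketch using standard Shiny input widgets. The input IDs, labels, the use of three employer text boxes, and the lower bound of 1 for Plot Categories are my assumptions for illustration; they are not taken from the app's actual source.

```r
library(shiny)

# Hypothetical side panel mirroring the inputs summarized above.
sidebar <- sidebarPanel(
  # Range slider: dragging both handles to the same year selects a single year.
  sliderInput("year", "Year", min = 2011, max = 2016,
              value = c(2011, 2016), step = 1, sep = ""),
  # Free-text job inputs instead of a drop-down with thousands of titles.
  textInput("job1", "Job Type 1", value = "Data Scientist"),
  textInput("job2", "Job Type 2", value = "Data Engineer"),
  textInput("job3", "Job Type 3", value = "Machine Learning"),
  # State-level location with the whole country as the default.
  selectInput("location", "Location",
              choices = c("USA", state.name), selected = "USA"),
  # Employer inputs are blank by default (three boxes is an assumption).
  textInput("employer1", "Employer 1", value = ""),
  textInput("employer2", "Employer 2", value = ""),
  textInput("employer3", "Employer 3", value = ""),
  selectInput("metric", "Metric",
              choices = c("Total Visa Applications",
                          "Certified Visa Applications",
                          "Median Annual Wage")),
  # Upper limit on plotted categories: default 3, at most 15.
  sliderInput("ncat", "Plot Categories", min = 1, max = 15, value = 3, step = 1)
)
```

The `sidebar` object can then be passed to `sidebarLayout()` together with a main panel that holds the output tabs.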

App Outputs

Map

Figure 2. Map output

As shown in Figure 2, the Map tab outputs a map plot of the metric across the US for the selected inputs. The map consists of three layers. The first and bottom layer is an outline of the US map. On top of it is a point plot of the metric across different work sites in the US, with bubble size and transparency proportional to the metric value. When a particular state is selected in the app input instead of the default USA option, bubbles appear only for the selected state. The topmost layer consists of pointers to the top N work sites with the highest metric values, where N is the Plot Categories input.

Below the map plot, you can also find the corresponding data table displaying metrics for all considered work sites. Placing a data table below the plot is a common theme across all output tabs of the app.
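The three map layers described above could be stacked along the following lines. This is only a sketch: the post does not say which plotting library the app uses, so ggplot2 (with the maps package installed for `map_data()`) is my assumption, and the metric values in the toy data frame are made up.

```r
library(ggplot2)

# Toy work-site data; the `value` column holds hypothetical metric values.
sites <- data.frame(
  WORKSITE = c("SAN FRANCISCO", "NEW YORK", "SEATTLE"),
  lon      = c(-122.42, -74.01, -122.33),
  lat      = c(37.77, 40.71, 47.61),
  value    = c(210, 185, 75),
  stringsAsFactors = FALSE
)

n_top <- 2  # plays the role of the Plot Categories input
top_sites <- head(sites[order(-sites$value), ], n_top)

p <- ggplot() +
  # Layer 1: outline of the US (requires the maps package).
  geom_polygon(data = map_data("usa"),
               aes(x = long, y = lat, group = group),
               fill = "grey90", colour = "grey60") +
  # Layer 2: one bubble per work site, size and transparency follow the metric.
  geom_point(data = sites,
             aes(x = lon, y = lat, size = value, alpha = value),
             colour = "steelblue") +
  # Layer 3: labels pointing at the top N work sites.
  geom_label(data = top_sites,
             aes(x = lon, y = lat, label = WORKSITE), vjust = -0.5) +
  coord_quickmap()
```

Restricting `sites` to a single state before plotting would reproduce the behaviour where bubbles appear only for the selected state.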

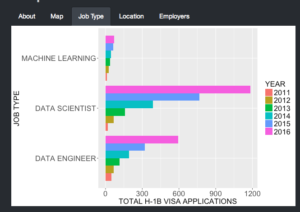

Job Type

Figure 3. Job Type

As shown in Figure 3, the Job Type tab compares the metric for the job types supplied in the inputs. If none of the inputs match any record in our dataset, then all job titles are considered and the top N job types are displayed, where N is the Plot Categories input. You can try this by leaving the three Job Type text inputs blank. This output enables us to explore the wages and number of applications for different job positions, e.g., Software Engineer vs. Data Scientist. In the above figure, I am comparing Data Scientist against Data Engineer and Machine Learning positions. We can see exponential growth in the number of H-1B visa applications for both Data Scientist and Data Engineer roles, with Data Scientist showing the highest growth.
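The fallback behaviour described above can be sketched in a few lines of base R. The `JOB_TITLE` column name, the helper names, and the choice of case-insensitive substring matching are assumptions for illustration; the app itself may match titles differently.

```r
# Toy stand-in for the petition data; the JOB_TITLE column name is an assumption.
h1b <- data.frame(
  JOB_TITLE = c("DATA SCIENTIST", "SENIOR DATA SCIENTIST", "DATA ENGINEER",
                "PRODUCT MANAGER", "PRODUCT MANAGER"),
  stringsAsFactors = FALSE
)

# Keep rows whose title contains any of the typed inputs (case-insensitive).
# If every input is blank, or nothing matches, fall back to the full data.
filter_job_types <- function(df, job_inputs) {
  job_inputs <- toupper(trimws(job_inputs))
  job_inputs <- job_inputs[nzchar(job_inputs)]
  if (length(job_inputs) == 0) return(df)
  titles <- toupper(df$JOB_TITLE)
  hits <- Reduce(`|`, lapply(job_inputs, function(p) grepl(p, titles, fixed = TRUE)))
  if (any(hits)) df[hits, , drop = FALSE] else df
}

# Names of the n most frequent job titles, used when the fallback applies.
top_job_titles <- function(df, n = 3) {
  counts <- sort(table(df$JOB_TITLE), decreasing = TRUE)
  head(names(counts), n)
}

matched  <- filter_job_types(h1b, c("data scientist", "", ""))
fallback <- filter_job_types(h1b, c("astronaut", "", ""))
```

Here `matched` keeps only the two Data Scientist rows, while `fallback` returns the whole toy data set because no title contains the typed input.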

Location

Figure 4. Location-based comparison

As shown in Figure 4, the Location tab compares the metric for the input jobs at different work sites within the input Location region. In the above figure, the selected input was the default USA. In that case, the app displays the metric for the top N work sites, where N is the Plot Categories input. The top N sites are chosen by looking across all the years in the selected year range. In the above example, with the default job inputs related to Data Science, we clearly observe San Francisco leading the charts, closely followed by New York.
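One way to pick the top N work sites across the whole year range, and then keep their per-year values for plotting, is sketched below. The column names and all numbers are hypothetical, and summing per site is only appropriate for count metrics; a wage metric would need a different ranking rule.

```r
# Toy yearly application counts per work site; all values are made up.
apps <- data.frame(
  YEAR     = rep(2014:2016, each = 3),
  WORKSITE = rep(c("SAN FRANCISCO", "NEW YORK", "SEATTLE"), times = 3),
  value    = c(50, 40, 20, 70, 65, 25, 90, 80, 30),
  stringsAsFactors = FALSE
)

# Rank sites by their total over the selected years, then return the
# per-year rows of the n best sites so they can be plotted year by year.
top_sites_over_range <- function(df, year_range, n = 3) {
  in_range <- df[df$YEAR >= year_range[1] & df$YEAR <= year_range[2], ]
  totals <- aggregate(value ~ WORKSITE, data = in_range, FUN = sum)
  top <- head(totals$WORKSITE[order(-totals$value)], n)
  in_range[in_range$WORKSITE %in% top, ]
}

plot_data <- top_sites_over_range(apps, c(2014, 2016), n = 2)
```

Ranking on the total over the range, rather than on a single year, keeps the same set of sites in every yearly panel of the plot.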

Employers

Figure 5. Employer-based comparison

The Employers tab output is similar to the Job Type tab, the only difference being that employers are compared instead of job types. If no employer inputs are provided, or none of them match any record in our dataset, then all employers are considered. Figure 5 shows Facebook, Amazon and Microsoft as the leading employers for Data Science positions.

Coding Hacks

I will briefly discuss a few interesting hacks picked up during this project.

Dropbox rdrop2

Initially, when deploying my app, I included the RDS file from which I read my dataset. The file was about 80 MB and crashed my app every time it ran. Then I came across the rdrop2 package, which allows you to access Dropbox files from the Shiny server, eliminating the hassle of uploading your dataset with the app bundle. For this to work, all rdrop2 needs is a Dropbox authentication token that you save once and ship with the app.
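The original snippet is not reproduced here, so the following is a minimal sketch of the token workflow, assuming the rdrop2 0.8-style functions `drop_auth()` and `drop_download()`. The helper names and the file names `droptoken.rds` and `h1b_data.rds` are hypothetical placeholders, not the ones used in the app.

```r
# One-time step on your own machine: authenticate interactively and
# save the resulting token to an RDS file that is deployed with the app.
save_dropbox_token <- function(token_path = "droptoken.rds") {
  token <- rdrop2::drop_auth()  # opens a browser window for Dropbox login
  saveRDS(token, token_path)
  invisible(token_path)
}

# In the deployed app: reuse the saved token, download the dataset from
# Dropbox into a temporary file, and read it into memory.
load_h1b_data <- function(token_path = "droptoken.rds",
                          dropbox_path = "h1b_data.rds") {  # hypothetical path
  rdrop2::drop_auth(rdstoken = token_path)
  local_path <- file.path(tempdir(), basename(dropbox_path))
  rdrop2::drop_download(dropbox_path, local_path = local_path, overwrite = TRUE)
  readRDS(local_path)
}
```

Treat the saved token file like a password, since anyone who has it can access the linked Dropbox account.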

Non-standard-evaluation (NSE) with dplyr using lazyeval

I ran into the issue of non-standard evaluation when I had to pass column names of a data frame as arguments to a function. The lazyeval package came to the rescue by letting me build the required expressions programmatically.
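The original example is not reproduced here, so below is a small sketch of the idea using lazyeval's `interp()` and `f_eval()`. The data frame, its column names, and the `median_of()` helper are hypothetical. To keep the sketch independent of any particular dplyr version, the expression is evaluated directly with lazyeval rather than inside a dplyr verb.

```r
library(lazyeval)

# Toy stand-in for the H-1B data; column names and values are hypothetical.
h1b <- data.frame(
  JOB_TITLE = c("DATA SCIENTIST", "DATA SCIENTIST", "DATA ENGINEER"),
  WAGE      = c(100000, 120000, 90000),
  stringsAsFactors = FALSE
)

# Summarise a column whose name arrives as a string, e.g. from a Shiny input.
median_of <- function(df, column) {
  # interp() replaces the placeholder `x` with the symbol named by `column`,
  # producing the formula ~median(WAGE, na.rm = TRUE) when column is "WAGE".
  expr <- interp(~ median(x, na.rm = TRUE), x = as.name(column))
  # f_eval() evaluates the right-hand side of the formula inside `df`.
  f_eval(expr, data = df)
}

wage_median <- median_of(h1b, "WAGE")
```

In the dplyr releases of that period, a formula built this way could also be handed to the standard-evaluation verbs such as `summarise_()` through their `.dots` argument; those verbs have since been deprecated.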

Conclusion

I created a Shiny app based on the H-1B visa petition data that I analyzed in my R EDA project. The app can be accessed at https://sharan-naribole.shinyapps.io/h_1b/.

The GitHub code for this project can be found here. Thanks for reading!