Data Analysis on Fraud Detection in American Healthcare

The skills I demoed here can be learned through taking Data Science with Machine Learning bootcamp with NYC Data Science Academy.

Github | LinkedIn (SN) | LinkedIn (AA)

Background

About 10 percent of annual healthcare spending, or roughly 10 cents of every dollar, goes toward paying fraudulent claims. Health insurance was originally established in the 1920s, when hospitals started to offer services in exchange for monthly insurance premiums. This set the foundation for our modern healthcare system. Currently, the United States spends more than $1 trillion every year on healthcare.

However, as the industry has expanded, healthcare fraud has risen with it. Healthcare fraud is a white-collar crime in which medical facilities submit fabricated claims, resulting in more than $100 billion in losses per year.

Fraudulent claims come in different forms, such as:

- billing for services not provided,

- misrepresenting provided services by charging for more complex or expensive services,

- providing non-covered services while billing for covered services,

- duplicating claims for the same or different patients, and

- submitting claims for providing unnecessary medical procedures.

Healthcare fraud is prosecuted under Title 18, Section 1347 of the U.S. Code and can result in incarceration, fines, and possibly the loss of the right to practice in the medical industry. However, there is a 30-day investigation limit for claims flagged as potential fraud. This time constraint makes it difficult to complete an adequate investigation before the insurer has to pay.

Mission Statement

The purpose of this project is to:

- discover important parameters that can be of help in detecting the behavior of potential fraud providers,

- use those parameters to label potential fraud providers based on the claims filed, and

- characterize the behavior of potential fraud providers.

About the data set

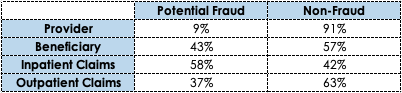

The dataset is composed of patient information (Age, Gender, Race, DOB, State, County), inpatient and outpatient claim information (Dates, Amounts, Medical Codes, Physician IDs), and Provider fraud labels (yes/no). The table below shows the percentage of potential fraud in Providers, Beneficiaries, Inpatient Claims, and Outpatient Claims. There are about

Exploratory Data Analysis

First, all the training datasets were merged on "Provider ID" and "BeneID", and missing values were imputed. Missing Physician IDs were replaced with 'None', and missing 'Medical Codes' were replaced with 0. One of the 'Medical Codes' features was dropped because it was missing for 100% of the observations.

After cleaning the data, we ran some initial data analysis before moving on to feature engineering. Figure 1 is a boxplot comparing the distribution of chronic conditions for inpatients and outpatients. The y-axis represents how many chronic conditions a patient has. The two distributions have a similar shape, but inpatients have a median of 6 chronic conditions compared to a median of 4 for outpatients, which suggests that inpatients are in worse health than outpatients.
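The merge and imputation steps described above can be sketched with pandas on toy data. This is only an illustration: the table layout and every column name below (`ClaimID`, `AttendingPhysician`, `ProcedureCode_1`, and so on) are assumptions, not the project's actual schema.

```python
import pandas as pd

# Toy stand-ins for the claim, beneficiary, and provider-label tables.
# All column names are hypothetical.
claims = pd.DataFrame({
    "ClaimID": ["C1", "C2", "C3"],
    "Provider": ["P1", "P1", "P2"],
    "BeneID": ["B1", "B2", "B1"],
    "AttendingPhysician": ["D1", None, "D2"],
    "ProcedureCode_1": [4019.0, None, 66.0],
    "ProcedureCode_6": [None, None, None],  # missing for every claim
})
beneficiaries = pd.DataFrame({"BeneID": ["B1", "B2"], "Gender": [1, 2]})
labels = pd.DataFrame({"Provider": ["P1", "P2"],
                       "PotentialFraud": ["Yes", "No"]})

# Attach patient information and provider labels to every claim.
merged = (claims
          .merge(beneficiaries, on="BeneID", how="left")
          .merge(labels, on="Provider", how="left"))

# Drop features that are missing for 100% of the observations.
merged = merged.dropna(axis=1, how="all")

# Impute: 'None' for missing physician IDs, 0 for missing medical codes.
physician_cols = [c for c in merged.columns if c.endswith("Physician")]
code_cols = [c for c in merged.columns if "Code" in c]
merged[physician_cols] = merged[physician_cols].fillna("None")
merged[code_cols] = merged[code_cols].fillna(0)
```

Dropping the fully missing column before imputing keeps it from being filled with zeros and silently surviving as a constant feature.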

Figure 1: Boxplot comparing inpatient and outpatient distributions of chronic condition count per patient

Figure 2 shows line plots of the total provider count per state for potential fraud and non-fraud providers. Both groups follow a similar trend: the provider count rises and falls across states in the same way for both. Figure 3, which shows the population per state, fluctuates in a broadly similar way. This suggests that states with larger populations tend to have more providers, which is logical since there is more demand for them.

This is not always the case, though. California has a much larger population than Georgia, yet Georgia has more providers than California, which leaves more room for fraudulent activity. However, when we compare claim counts in Figure 4, potential fraud and non-fraud providers show similar trends and, for some states (such as California, Georgia, and North Carolina), similar counts. This indicates that potential fraud providers have a patient base similar to that of non-fraud providers and are comparable to them in size.

Figure 2: Line plots of total providers per state for potential fraud and non-fraud

Figure 3: Line plot of population per state for year 2009

Figure 4: Line plots of claim count per state for potential fraud and non-fraud

Insurance reimbursements and deductibles are higher for potential fraud providers than for non-fraud providers, as shown in Figure 5. Both provider groups show similar chronic condition counts for patients up to age 45, with higher counts for non-fraud providers after age 45 (Figure 6). Even so, potential fraud providers charge higher deductibles and reimbursements at all ages.

It also seems that patients aged 60 or older are more likely to visit doctors and be admitted to a hospital (Figure 6), which might give potential fraud providers more opportunity to commit fraud.

Figure 5: Line plots of deductibles and reimbursements per age for potential fraud and non-fraud

Figure 6: Line plots of chronic condition count per age for potential fraud and non-fraud

Feature Engineering

The potential fraud label is given per provider, while the initial features are per claim. As a result, we engineered new provider-level features. Here is a list of all the new features.

- Top 10 (CDC codes, Procedure codes, Group diagnosis codes)

- Count per Provider: Male/Female Patients, State, County, Age(41-60), Age(61-80), Age(81-100), Race, Hospital Stay, Payment, Claim Length, Alzheimer Patient, Heart Failure Patient, Kidney Disease Patient, Cancer Patient, Obstructive Pulmonary Patient, Depression, Diabetes Patient, Ischemic Heart Patient, Osteoporosis Patient, Rheumatoid Arthritis Patient, Stroke Patient, Renal Disease Patient, Diagnosis Group Code
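Rolling claim-level rows up to one row per provider might look like the sketch below. The column names and toy values are assumptions for illustration, and the counts here are taken over claims; whether the original features counted claims or distinct patients is not stated.

```python
import pandas as pd

# Toy claim-level table; all column names are hypothetical.
claims = pd.DataFrame({
    "Provider":      ["P1", "P1", "P1", "P2", "P2"],
    "BeneID":        ["B1", "B2", "B1", "B3", "B4"],
    "Gender":        ["M", "F", "M", "F", "F"],
    "Age":           [67, 83, 67, 45, 72],
    "Payment":       [1200.0, 300.0, 500.0, 80.0, 40.0],
    "HeartFailure":  [1, 0, 1, 0, 0],
    "DiagnosisCode": ["4019", "2724", "4019", "2724", "25000"],
})

# 0/1 indicator columns, so a per-provider sum becomes a count.
claims["MalePatient"] = (claims["Gender"] == "M").astype(int)
claims["Age(61-80)"] = claims["Age"].between(61, 80).astype(int)
claims["Age(81-100)"] = claims["Age"].between(81, 100).astype(int)

# One row per provider.
features = claims.groupby("Provider").agg(
    ClaimCount=("BeneID", "size"),
    PatientCount=("BeneID", "nunique"),
    MalePatient=("MalePatient", "sum"),
    HeartFailurePatient=("HeartFailure", "sum"),
    Payment=("Payment", "sum"),
    Age_61_80=("Age(61-80)", "sum"),
    Age_81_100=("Age(81-100)", "sum"),
)

# Per-provider counts for the most frequent codes (top 2 here, top 10 in the post).
top_codes = claims["DiagnosisCode"].value_counts().head(2).index
code_counts = (claims[claims["DiagnosisCode"].isin(top_codes)]
               .groupby(["Provider", "DiagnosisCode"]).size()
               .unstack(fill_value=0)
               .add_prefix("CDC_"))
features = features.join(code_counts).fillna(0)
```

Restricting the code counts to the most frequent codes keeps the feature matrix small; a provider that never used a top code simply gets a count of 0.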

Machine Learning (ML) Data Models

Since the data is imbalanced, with a 1:9 ratio of potential fraud to non-fraud providers, we needed to account for the imbalance in our models. For logistic regression, we transformed the dataset to log scale; for the tree-based models, we set the class_weight = 'balanced' parameter. Table 2 summarizes all the machine learning models and their performance. At first, we ran all four ML models on all the features.

From each model, we picked the top features (coefficients > 0 for Logistic Regression and feature importance > 1% for tree-based models) and re-ran the ML models. As shown in Table 2, among the four ML models (Logistic Regression, Random Forest, Gradient Boosting, Support Vector Machine) trained on the top features, Random Forest gave the best recall score at 74% and the smallest count of false negatives at 42. So we picked Random Forest as the final model and analyze its features in the next section.
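To make the 'balanced' setting concrete, the sketch below reproduces the weights scikit-learn documents for that mode, n_samples / (n_classes * count per class), on a toy 1:9 label vector, and shows one plausible form of a log transform. The toy arrays and the choice of `log1p` are assumptions; the post does not say which log transform was used.

```python
import numpy as np

# Toy labels with the 1:9 ratio: 1 = potential fraud, 0 = non-fraud.
y = np.array([1] * 10 + [0] * 90)

# 'balanced' class weights: n_samples / (n_classes * count_of_class).
counts = np.bincount(y)                         # [90, 10]
balanced_weights = len(y) / (len(counts) * counts)
# Index 0 (non-fraud) gets about 0.556, index 1 (fraud) gets 5.0,
# so each fraud example counts nine times as much as a non-fraud one.

# Log-scale transform for right-skewed count and amount features.
# log1p(x) = log(1 + x) keeps zero counts finite (an assumed choice).
X = np.array([[0.0, 1500.0],
              [3.0, 120.0],
              [12.0, 98000.0]])
X_log = np.log1p(X)
```

With these weights, the two classes contribute equally to the training loss in total even though one is nine times larger.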
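The two-pass procedure for the tree-based models (fit on all features, keep those with importance above 1%, refit, then read off recall and false negatives) can be sketched as follows. The synthetic data from `make_classification` and the hyperparameters are placeholders, not the project's data or settings, so the numbers it produces say nothing about the results in Table 2.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import confusion_matrix, recall_score
from sklearn.model_selection import train_test_split

# Synthetic, imbalanced stand-in for the provider-level feature matrix.
X, y = make_classification(n_samples=1000, n_features=20, n_informative=5,
                           weights=[0.9, 0.1], random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0)

# Pass 1: fit on all features to obtain feature importances.
full_model = RandomForestClassifier(
    n_estimators=200, class_weight="balanced", random_state=0)
full_model.fit(X_train, y_train)

# Keep only features whose importance exceeds 1%.
keep = full_model.feature_importances_ > 0.01

# Pass 2: refit on the selected features only.
final_model = RandomForestClassifier(
    n_estimators=200, class_weight="balanced", random_state=0)
final_model.fit(X_train[:, keep], y_train)
y_pred = final_model.predict(X_test[:, keep])

# Recall = TP / (TP + FN); false negatives are missed fraud providers.
tn, fp, fn, tp = confusion_matrix(y_test, y_pred).ravel()
recall = recall_score(y_test, y_pred)
print(f"kept {keep.sum()} features, recall={recall:.2f}, false negatives={fn}")
```

Recall is the natural metric to rank models by here because a false negative is a fraudulent provider that goes uninvestigated.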

Below are the top 23 features used for the final model (Random Forest):

- Payment, Hospital Stay, Diagnosis Group Code, Claim Length Total

- CDC_2724, CDC_42731, CDC_25000, CDC_4280, CDC_2449, CDC_4019

- CPC_4019, CPC_2724, CPC_66

- Stroke, Kidney Disease, Ischemic Heart, Obstructive Pulmonary, Rheumatoid Arthritis

- Race White, Male Patient, County Count

- Age(81-100), Age(61-80)

Table 2: Summary of machine learning models showing false positive counts, false negative counts, and recall. The top features were selected from the "All Features" models and used for the final models.

Data Analysis

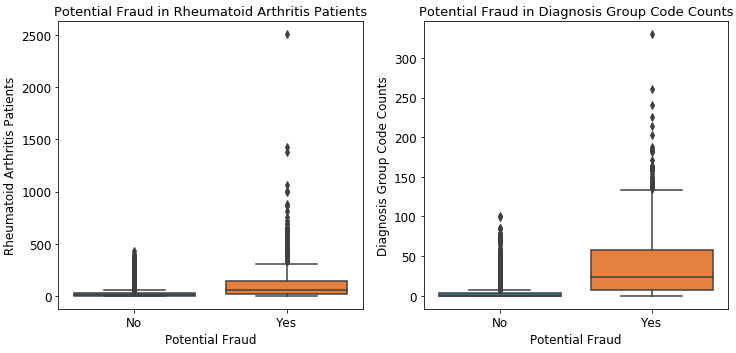

Once we had our top features, we took a deeper dive into some of them to see how they relate to potential fraud. Our first feature analysis was on the top 5 chronic conditions.

As the boxplots above show, the distribution of claims from potentially fraudulent providers is much higher than that of non-fraud providers for all 5 conditions. We also found it interesting that 4 out of 5 chronic conditions were cardiovascular-related. From this we inferred that patients with cardiovascular conditions were more likely to be victims of fraudulent claims.

The 6th boxplot above is the distribution of claims for diagnostic group codes. As with the chronic conditions, the distribution of claims is much higher for potentially fraudulent providers than for non-fraud providers. This indicates that potentially fraudulent providers use diagnostic group codes much more often than non-fraud providers do.

Second Data Analysis

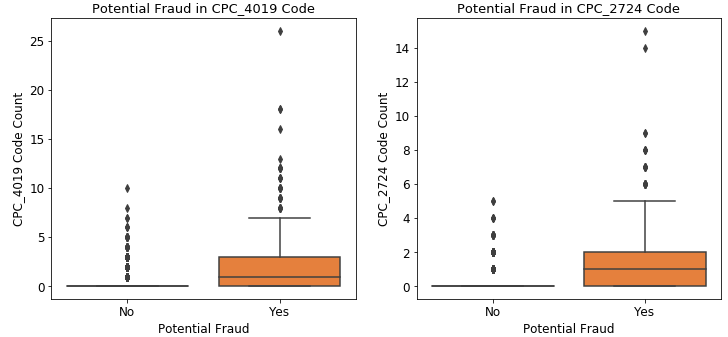

Our second feature analysis was for the Claim Procedure Codes, or CPC. The top 3 codes are CPC_4019, CPC_2724, and CPC_66.

As with the chronic conditions, there is a higher distribution of claims for potential fraud than for non-fraud providers for these codes. It is also interesting that the first two procedures are meant for further analysis of symptoms. The third, CPC_66, is for Coronary Angioplasty, a cardiovascular-related procedure. This made sense given that the most prevalent chronic conditions were cardiovascular-related.

From this we inferred that the most common procedures among potentially fraudulent providers were used to order further testing, which would boost the cost of claims.

Our third feature analysis was on the Claim Diagnostic Codes, or CDC codes. The top 6 codes were CDC_4280, CDC_2724, CDC_42731, CDC_25000, CDC_2449, and CDC_4019.

Figure 9: Boxplots comparing top Chronic Diagnosis Code (CDC) counts for Potential Fraud and Non-Fraud Providers

As in the earlier boxplots, there is a higher distribution of claims for potential fraud than for non-fraud providers for these codes as well. CDC_42731, as noted in its caption, is for Atrial Fibrillation, a cardiovascular-related condition in which the heart beats irregularly, which can lead to poor blood flow. This diagnosis made sense given the majority of the chronic conditions and CPC_66 mentioned above, but there was something odd about the other CDC codes.

What we found interesting about these codes is that all except CDC_42731 are labeled “Unspecified”, so we looked up what an unspecified code means. According to the American Medical Association (AMA), unspecified is defined as, “Coding that does not fully define important parameters of the patient condition or the condition was not known at the time of coding”. We concluded that these codes may be used to justify procedures that further diagnose a patient, which is consistent with the most prevalent CPC codes.

Summary

After analyzing the dataset, we came to a few conclusions. First, we determined that potentially fraudulent providers tend to use CDC codes that are unspecified. This lets these providers conduct more tests to gain more information about the patient, normally in the form of procedures. That can prolong the patient's hospital stay, which increases the length of the claim and, with it, the total payment.

Second, we determined that male patients older than 60 are the group most vulnerable to being targeted by insurance fraud. The likelihood increases further if the patient has a cardiovascular-related chronic condition such as ischemic heart disease, obstructive pulmonary disease, stroke, or kidney disease, and fraud also appears to be frequent among patients with rheumatoid arthritis.

Third, based on our analysis, we concluded that hospitals with multiple locations are also more susceptible to fraud, probably because they have a wider patient base. Finally, we found that the average amount per transaction that the insurance company paid to potentially fraudulent providers in 2009 was around $1,400. Based on this, if 10% of providers have claims that are potentially fraudulent, our model would have saved the insurance company $24,049,304 in 2009 for this dataset.

Future Work

We completed this project and compiled the analysis shown above within the 2-week deadline, but given more time, there are a few additional things we wanted to do and still intend to do. For example, we want to test our machine learning model on new datasets with fraud labels to see how well it performs. We also want to apply 10-fold cross-validation to tune the model and get a more reliable estimate of its performance.

We also want to study fraudulent patterns in providers' claims to understand the future behavior of providers, and to engineer more features to improve the model's performance. Lastly, we want to come up with labels for individual claims and build a model that predicts potentially fraudulent claims, so that we can detect transactional fraud as well.
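As a rough sketch of what the planned 10-fold cross-validation could look like, the snippet below scores a class-weighted Random Forest on recall across ten stratified folds. The synthetic data and hyperparameters are placeholders rather than the project's setup. Cross-validation by itself does not make a model more accurate; it gives a steadier estimate of performance that model selection and tuning can then rely on.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_val_score

# Synthetic, imbalanced stand-in for the provider-level feature matrix.
X, y = make_classification(n_samples=500, n_features=10,
                           weights=[0.9, 0.1], random_state=0)

model = RandomForestClassifier(
    n_estimators=100, class_weight="balanced", random_state=0)

# Stratified folds keep the fraud / non-fraud ratio the same in every fold.
cv = StratifiedKFold(n_splits=10, shuffle=True, random_state=0)
recall_scores = cross_val_score(model, X, y, cv=cv, scoring="recall")

print(f"recall: mean={recall_scores.mean():.2f}, std={recall_scores.std():.2f}")
```

Stratification matters with a 1:9 class ratio, since an unstratified split could leave a fold with very few fraud examples and make its recall score unstable.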