Data Analysis on Amazon Review Ratings



Overview/Abstract:

Online customer reviews of goods and services have become commonplace with the continued rise of e-commerce and are a potentially valuable source of information for consumers and businesses alike. It is widely recognized that multiple biases are present in consumer reviews, resulting in polarized and imbalanced data. Prior research has focused on the distribution of online reviews as a whole and on the variations from platform to platform.

This analysis focused on the distribution of Amazon.com customer ratings grouped at the product level, and applied the results to develop a tool that helps companies gain insights into how their products compare to others on the site.

About Online Customer Reviews:

Significant research has focused on the investigation and characterization of online customer reviews. Typically, crowdsourcing preferences and experiences from a large, diverse group of consumers results in data that exhibit normal distributions[1].

However, previous studies have shown that the majority of online reviews are at the positive end of the scale, with few in the mid-range, and some reviews at the negative end of the scale[2]. Most work in the field attempts to explain why this J-shaped distribution occurs, and whether the behavior is consistent across online platforms[3]. It is often described along two dimensions, polarity and imbalance, and attributed to biases introduced through the self-selection of reviewers.

Polarity and Imbalance of Reviews

Polarity is the observed overrepresentation of positive and negative reviews and underrepresentation of mid-range reviews. This is often ascribed to polarity self-selection bias, where customers generally take the time to review only products with which they are very satisfied or unsatisfied[2].

Imbalance is observed through the large overweighting of positive reviews. A commonly attributed factor is purchase self-selection. This is the idea that customers who decide to purchase a specific product are more likely to be satisfied with it than the general population[4] and that on average consumers are often satisfied with the products they purchase[5]. Another contributing factor is the hesitancy of individuals to transmit negative information over weak ties and to large audiences[6].

Amazon.com review data is a frequent area of research, with the popularity of the website leading to large sample sizes and widely available data for academic research. This project differentiates itself from previous studies through its focus on extracting business insights rather than attempting to characterize and explain the reviews distribution.

The Data:

Given the numerous academic studies conducted on online customer reviews and the ease of accessing this information, there are many readily available data sources.

This analysis was conducted using a dataset of 82 million Amazon.com customer reviews covering 9.8 million unique products from 1996 to 2014. The reviews were gathered by Julian McAuley of UCSD and used in the paper “Ups and downs: Modeling the visual evolution of fashion trends with one-class collaborative filtering” (He & McAuley, 2016). Using the unique product key and star rating from each review, the data were transformed to yield the average rating and the distribution of star ratings for each product.

Figure 1. Example of the transformed data used in this analysis.
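The product-level transformation described above can be sketched in a few lines of Python. This is a minimal illustration using only the standard library, not the code used in the project; the function name `summarize_by_product` and the sample records are hypothetical.

```python
from collections import Counter, defaultdict


def summarize_by_product(reviews):
    """Group (product_id, stars) pairs into per-product rating summaries."""
    counts = defaultdict(Counter)
    for product_id, stars in reviews:
        counts[product_id][stars] += 1

    summary = {}
    for product_id, star_counts in counts.items():
        n_reviews = sum(star_counts.values())
        avg_rating = sum(star * c for star, c in star_counts.items()) / n_reviews
        # Share of the product's reviews at each star level, 1 through 5.
        proportions = {s: star_counts.get(s, 0) / n_reviews for s in range(1, 6)}
        summary[product_id] = {
            "n_reviews": n_reviews,
            "avg_rating": avg_rating,
            "proportions": proportions,
        }
    return summary


# Hypothetical records for illustration; not taken from the dataset.
sample_reviews = [("A", 5), ("A", 4), ("A", 5), ("B", 1), ("B", 5)]
summary = summarize_by_product(sample_reviews)
print(summary["A"]["n_reviews"], round(summary["A"]["avg_rating"], 2))
```

For a dataset of this size, a dataframe library or a database aggregation would likely be a more practical choice than plain dictionaries; the logic stays the same.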

Product Distribution Data Analysis:

After grouping the reviews by product, a plot of the average product ratings revealed a well-behaved distribution. It resembled a normal distribution with a fat lower tail, very different from the J-shaped distribution described in prior analyses that did not group reviews at the product level.

Figure 2. Distribution of Average Ratings for Products on Amazon.com.

Next, the effect of changing the number of products included in the analysis on the distribution of average product ratings was investigated by testing different cutoff levels for the minimum number of reviews per product. This metric is a sensible filtering criterion, since the average rating for a product is highly dependent upon the presence of infrequently observed negative reviews. Prior research indicates that only 5%-15% of reviews for an online marketplace like Amazon.com are negative[3]. Without enough total reviews, these negative reviews are unlikely to appear in a representative proportion.

Varying the minimum reviews per product cutoff from 25 to 300 reviews produced a large range in the number of products included in the analysis, from about 25,000 (at a 300 reviews/product minimum) to 500,000 (at a 25 reviews/product minimum). Despite this large range, the distribution of average product ratings stayed remarkably consistent. This robustness to the specific set of products included led to the conclusion that the ratings for a set of products depended on the ratings behavior of consumers, not on how frequently a product was reviewed.

Figure 3. Distribution Comparison for Different Review Cutoff Levels.
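The cutoff comparison can be sketched as follows. This is a hedged illustration rather than the project's code: the helper names, the half-star bin width, and the sample `product_stats` values are all assumptions.

```python
def filter_by_min_reviews(product_stats, min_reviews):
    """Keep the average rating of each product with at least min_reviews reviews."""
    return [avg for n_reviews, avg in product_stats if n_reviews >= min_reviews]


def rating_histogram(avg_ratings, bin_width=0.5):
    """Share of products in each bin of width bin_width over the 1-5 star range."""
    n_bins = round(4 / bin_width)
    if not avg_ratings:
        return [0.0] * n_bins
    counts = [0] * n_bins
    for avg in avg_ratings:
        # An average of exactly 5.0 is clamped into the last bin.
        idx = min(int((avg - 1) / bin_width), n_bins - 1)
        counts[idx] += 1
    return [c / len(avg_ratings) for c in counts]


# Hypothetical (review count, average rating) pairs for illustration.
product_stats = [(30, 4.4), (120, 4.1), (350, 4.3), (500, 3.2)]

for cutoff in (25, 100, 300):
    kept = filter_by_min_reviews(product_stats, cutoff)
    print(cutoff, len(kept), rating_histogram(kept))
```

Comparing the normalized histograms across cutoffs, rather than the raw counts, is what makes the distributions comparable when the number of included products differs by an order of magnitude.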

Rating By Product Data

In addition to the average ratings distribution being robust to changes in the minimum review cutoff level, the observed proportions of individual rating levels per product were found to be relatively stable as well. The consistency of these distributions shows that the results of this project are applicable to all reviewed products on Amazon.com, as the average ratings distribution is relatively independent of the number of reviews a product has received.

Figure 4. Star Ratings Distributions by Product.

With this information, it was a straightforward process to create a cumulative frequency table for the average ratings of products on Amazon.com. This allowed for the placement of a product with a given average rating into a percentile of all products on Amazon.com, a valuable insight for businesses that sell products on the site.
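One way to express the percentile lookup is with a sorted list of product averages and a binary search. This is a sketch under the assumption that "percentile" means the share of products with an average rating at or below the given value; the function name and the five reference averages are hypothetical.

```python
from bisect import bisect_right


def percentile_of(avg_rating, sorted_product_averages):
    """Percent of products whose average rating is at or below avg_rating."""
    rank = bisect_right(sorted_product_averages, avg_rating)
    return 100.0 * rank / len(sorted_product_averages)


# Hypothetical product averages standing in for the full set of products.
product_averages = sorted([3.2, 3.9, 4.1, 4.3, 4.6])
print(percentile_of(4.1, product_averages))  # 60.0
```

In practice the sorted list could be replaced by a precomputed table of rating bins and cumulative shares, which is smaller to store and equally fast to query.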

Sample Size Data Analysis:

The next important question to address was how many reviews an individual product needed in order to have a meaningful average rating. Without a large enough number of reviews, the presence (or absence) of just a few negative reviews could greatly change the average rating observed. To analyze this, random sampling was conducted on a 'typical' product to observe the sample outcomes from a population with known characteristics.

Random Sampling

To choose the product for random sampling, the dataset of reviews grouped by product was filtered for products with an average rating close to the median of 4.27 (out of 5). It was then filtered again for products with a star ratings distribution close to the median proportions seen in Figure 4. From the remaining products, the one that best fit these criteria and had a large number of reviews was selected (in this case, 14,114 reviews).

Random sampling was then conducted on this product's reviews, with the number of reviews per trial varying from 25 to 300. For each sample size tested, five thousand random sets were drawn from the product's population of reviews. The distribution of trial averages at each sample size was then analyzed separately for the variance in trial outcomes.

Figure 5. Distributions for Randomly Sampled Averages at Various Reviews per Trial Levels.
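The sampling procedure can be sketched as below. Several details are assumptions made for illustration: sampling is done without replacement, the seed is fixed for repeatability, and the population is a hypothetical 1,000-review product built to match the reported median figures (4.27 average, 61% five-star, 5% one-star), with the 2- to 4-star split invented.

```python
import random
import statistics


def sample_means(population, sample_size, n_trials=5000, seed=0):
    """Average rating of n_trials random samples drawn without replacement."""
    rng = random.Random(seed)
    return [
        statistics.fmean(rng.sample(population, sample_size))
        for _ in range(n_trials)
    ]


# Hypothetical stand-in for the selected product's reviews.
population = [5] * 610 + [4] * 200 + [3] * 90 + [2] * 50 + [1] * 50

for size in (25, 100, 300):
    means = sample_means(population, size, n_trials=1000)
    print(size, round(statistics.fmean(means), 3), round(statistics.pstdev(means), 3))
```

The spread of the trial averages shrinks as the number of reviews per trial grows, which is the effect the sampled distributions are meant to show.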

Data Analysis

Upon visual examination of the distribution of average product ratings (Figure 2), it was determined that the widest acceptable confidence interval was ±0.10 stars. This was precise enough to provide useful information on where a product's average rating fell relative to other products on Amazon.com.

A 90% confidence level was chosen because it provides a high level of certainty that the true population mean lies within the interval without posing an excessively high hurdle. Combining these constraints led to a minimum of 300 reviews per product as the cutoff for products to be included in this project's analysis. It should be noted that the results generalize to products with fewer reviews because of the robustness of the average ratings distribution (explored earlier), but the confidence interval for such products was too wide for the purposes of this project.

Figure 6. Comparison of Variance in 300 Reviews per Product Sampled Trials to Overall Amazon.com Product Averages Distribution.
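Given a list of trial averages from the random sampling described earlier, a 90% interval can be read off empirically as the 5th and 95th percentiles and checked against the ±0.10 star target. This is one plausible reading of the procedure, not a confirmed description of it; the function names and the evenly spaced demonstration values are hypothetical.

```python
import statistics


def interval_90(trial_means):
    """Empirical 90% interval: 5th and 95th percentiles of the trial averages."""
    cuts = statistics.quantiles(trial_means, n=20, method="inclusive")
    return cuts[0], cuts[-1]


def meets_precision(trial_means, max_half_width=0.10):
    """True if the 90% interval spans no more than +/- max_half_width stars."""
    low, high = interval_90(trial_means)
    return (high - low) / 2 <= max_half_width


# Hypothetical trial averages, evenly spaced between 4.0 and 4.1 stars.
demo_means = [4.0 + i / 1000 for i in range(101)]
low, high = interval_90(demo_means)
print(round(low, 3), round(high, 3), meets_precision(demo_means))
```

Running the check at increasing sample sizes and taking the smallest size that passes is one way to arrive at a minimum reviews per product threshold.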

Recap of Analysis:

It was determined that the median product on Amazon.com has an average rating of about 4.27 stars, with 61% of its reviews being 5-star ratings and 5% being 1-star ratings. Importantly, a cumulative frequency table was constructed that allows a product with a given average customer rating to be placed into a percentile of products on Amazon.com. Another key result of the study was the determination of a 300 reviews per product minimum in order to extract meaningful information from the average customer rating.

Figure 7. Cumulative Frequency Table for the Distribution of Average Product Ratings on Amazon.com.

Reviews Analysis Tool:

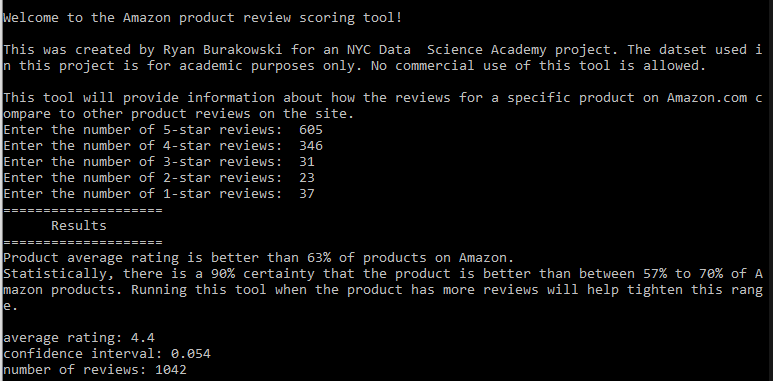

With the analysis completed, it was time to put the results to use. The final product of this study was a tool to help companies extract information from online customer reviews of their products sold on Amazon.com. When the user inputs a set of reviews, the program performs a comparative analysis of how that set stacks up against other product review sets and outputs a message describing the results.

When using the tool, a user is prompted to enter the number of reviews for the product of interest at each star rating level. The program then takes this information and determines the percentile of the product's rating in relation to all average product ratings, using the cumulative frequency table generated earlier in the project (Figure 7).

Usage of the Tool

In addition, the random sampling process previously described is implemented using a reviews sample size equivalent to the number of reviews entered by the user. This provides an accurate confidence interval for the percentile estimate generated from the program. The tool was developed using Python, and runs right from the command line interface. Concise, aggregated datasets were generated to aid in the speedy functioning of the tool.

Figure 8. Screenshot of the Amazon Product Review Tool Running in the Command Line Interface.

Significant consideration was given to what output the user should receive to both provide useful information and to highlight the limitations of the result. It can be difficult to provide sufficient context for the appropriate use of a program's results to users who do not understand the underlying model, and the development of this tool was no different. Special attention was paid to prevent user overconfidence in the precision of the result.

In addition to the percentile rank of a product with the entered review distribution, the program outputs the 90% confidence interval of the result as well as steps that could be taken to tighten the confidence interval in the future. When the program is run on a set of reviews smaller than the 300 reviews per product cutoff established during this project, a result is still returned, but with a message stating that the minimum recommended number of reviews for optimal results is 300.
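The core of such a tool can be sketched as a function that turns per-star review counts into an average, a percentile, and a caveat when the review count is low. This is not the tool's actual code: the function names, the message wording, and the reference averages are hypothetical, and the confidence interval step is left out for brevity.

```python
from bisect import bisect_right

RECOMMENDED_MIN_REVIEWS = 300  # cutoff established in the analysis above


def average_from_counts(star_counts):
    """star_counts maps each star level (1-5) to the number of reviews at it."""
    total = sum(star_counts.values())
    if total == 0:
        raise ValueError("at least one review is required")
    return sum(star * n for star, n in star_counts.items()) / total, total


def report(star_counts, sorted_product_averages):
    """Build a short text report for one product (hypothetical wording)."""
    avg, total = average_from_counts(star_counts)
    rank = bisect_right(sorted_product_averages, avg)
    pct = 100.0 * rank / len(sorted_product_averages)
    lines = [
        f"Average rating: {avg:.2f} stars from {total} reviews",
        f"Rated at or above roughly {pct:.0f}% of reference products",
    ]
    if total < RECOMMENDED_MIN_REVIEWS:
        lines.append(
            f"Note: the recommended minimum is {RECOMMENDED_MIN_REVIEWS} reviews; "
            "treat this estimate with caution"
        )
    return "\n".join(lines)


# Hypothetical reference averages and user input for illustration.
reference = sorted([3.2, 3.9, 4.1, 4.3, 4.6])
print(report({5: 61, 4: 20, 3: 9, 2: 5, 1: 5}, reference))
```

Returning the report as a string, rather than printing inside the function, keeps the logic easy to test and leaves the command line prompts as a thin layer on top.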

Future Extensibility:

This tool allows companies to extract meaningful insights from the online customer reviews of their products on Amazon.com despite the biased and skewed nature of online reviews. There are multiple interesting areas of further study for this tool, the first of which is to extend its usefulness beyond Amazon.com. Reviews distributions for other product marketplaces, or even services websites such as Yelp.com, could be analyzed using the methods from this project and the functionality of the reviews tool could be extended to those domains.

In addition, using newer, post-COVID data could help investigate whether customer reviewing behavior has changed with the wider demographic and increased prevalence of online shopping during the pandemic. A third and potentially most interesting area of expansion for this tool would be the application of natural language processing techniques to extract frequently cited praise or criticism in positive or negative reviews. This would transform the tool from being a descriptive analysis of a company's product into a prescriptive suggestion of methods for product improvement.

References:

1. Hu, Nan, Jie Zhang, and Paul A. Pavlou (2009), “Overcoming the J-shaped distribution of online reviews,” Communications of the ACM, 52 (10), 144-147.

2. Hu, Nan, Paul A. Pavlou, and Jie Zhang (2017), “On self-selection biases in online product reviews,” MIS Quarterly, 41 (2), 449-471.

3. Schoenmuller, Verena, Oded Netzer, and Florian Stahl (2019), “The extreme distribution of online reviews: Prevalence, drivers and implications,” Columbia Business School Research Paper, (18-10).

4. Dalvi, Nilesh N., Ravi Kumar, and Bo Pang (2013), “Para 'Normal' Activity: On the distribution of average ratings,” ICWSM.

5. Anderson, Eugene W., and Claes Fornell (2000), “Foundations of the American Customer Satisfaction Index,” Total Quality Management, 11 (7), 869-882.

6. Zhang, Yinlong, Lawrence Feick, and Vikas Mittal (2014), “How males and females differ in their likelihood of transmitting negative word of mouth,” Journal of Consumer Research, 40 (6), 1097-1108.