House Hunting with Data and Machine Learning

Our team did a thorough analysis of the Ames, Iowa housing dataset and trained several machine learning models to predict house prices. We also deployed one of these models in a web app to help potential buyers find the right house for their budget.

Data Science Background

The data was compiled by Dean De Cock in 2011 as an alternative to the famous Boston Housing dataset for teaching linear regression. It consists of 2,580 sale prices for houses sold in Ames, Iowa from 2006 to 2010, along with over 80 explanatory variables for each house. The dataset can be found on Kaggle.

Our group was inspired by the popular "house hunter" style television shows, and we decided to use machine learning to help home buyers find their home. Our goal was to take someone's requirements for a house (size, number of bathrooms, etc.) and predict what that hypothetical house would cost. Our value proposition is that if the predicted price does not match their budget, we can analyze which features to add or remove to reach the budget and find their dream home!

Exploratory Data Analysis

To kick off the project, we set out to learn as much as we could about the 80 explanatory features in the dataset and how they relate to the sale price, so that we could use these features to inform a home buyer. Below are some of our findings.

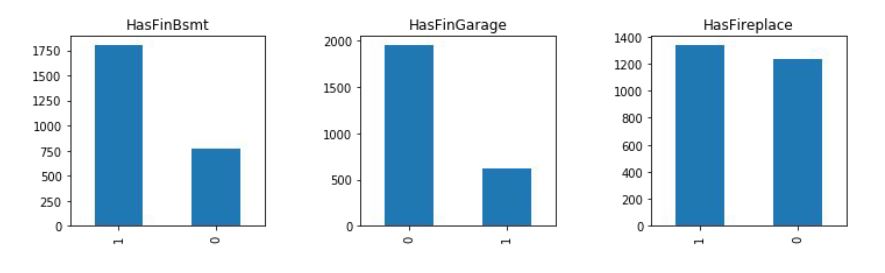

Homebuyers tend to have optional additions in mind during their search. We therefore transformed many variables detailing the square footage of optional additions into binary variables indicating whether the house has the addition or not. This was done for the pool, fireplace, finished garage, porch, deck, and finished basement features. We ran a two-tailed Student's t-test comparing the sale prices of houses with and without each feature. Every feature was associated with a statistically significant increase in sale price. Having a pool was associated with an average difference of $78,000 in sale price. Having a fireplace and having a finished garage each showed a moderate positive correlation with the sale price.
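The indicator-plus-t-test step can be sketched as follows. This is a minimal illustration, not the team's code: the fireplace counts and prices are made-up toy values, and the helper name `student_t_statistic` is hypothetical. It computes only the pooled-variance t statistic with the standard library; a p-value would normally come from a statistics package such as SciPy's `scipy.stats.ttest_ind`.

```python
import math
import statistics

# Toy data (invented for illustration): fireplace count and sale price.
fireplaces = [0, 1, 0, 2, 1, 0, 1, 0]
sale_price = [120_000, 185_000, 135_000, 240_000, 199_000, 128_000, 176_000, 142_000]

# Turn the count into a binary "has the feature" indicator.
has_fireplace = [1 if n > 0 else 0 for n in fireplaces]


def student_t_statistic(group_a, group_b):
    """Two-sample Student's t statistic with pooled variance, plus degrees of freedom."""
    n_a, n_b = len(group_a), len(group_b)
    mean_a, mean_b = statistics.mean(group_a), statistics.mean(group_b)
    var_a, var_b = statistics.variance(group_a), statistics.variance(group_b)
    pooled_var = ((n_a - 1) * var_a + (n_b - 1) * var_b) / (n_a + n_b - 2)
    std_err = math.sqrt(pooled_var * (1 / n_a + 1 / n_b))
    return (mean_a - mean_b) / std_err, n_a + n_b - 2


with_feature = [p for p, flag in zip(sale_price, has_fireplace) if flag]
without_feature = [p for p, flag in zip(sale_price, has_fireplace) if not flag]

t_stat, dof = student_t_statistic(with_feature, without_feature)
mean_gap = statistics.mean(with_feature) - statistics.mean(without_feature)
print(f"mean price gap: ${mean_gap:,.0f}, t = {t_stat:.2f}, dof = {dof}")
```

A large positive t statistic means houses with the feature sold for more on average than the spread within each group would explain by chance.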

Next, we looked at the 10 features that describe the quality of the house, including overall quality. A box plot of overall quality against log sale price showed that as quality increased from 1 to 10, so did the log sale price, suggesting a linear trend between the two. To see how the overall quality feature was engineered from the individual quality metrics, we looked at the correlation between those metrics and overall quality. We found that exterior quality, kitchen quality, and basement quality were all highly (>0.6) correlated with overall quality.

Our secondary dataset contained Ames real estate data, including the property addresses, which we used to find the latitude and longitude coordinates of each house and its neighborhood. By merging the two datasets, we were able to compare features by neighborhood. We looked again at overall quality and asked: does price sensitivity to quality depend on the neighborhood? We split the neighborhoods in half: those above the median price and those below. For the neighborhoods above the median, we found a sharper increase in sale price as overall quality increased, indicating that they are more sensitive to changes in quality.

There were two house styles in the dataset: ranch and colonial. Around 60% of houses in the Ames housing dataset are ranch style. The popularity of each style varied by neighborhood and did not seem to be influenced by whether the neighborhood was above or below the median sale price. Because Iowa State University (ISU) is the largest employer in Ames, IA, we used the latitude and longitude coordinates and geopy to calculate the distance between each property and the university. The neighborhoods with the most convenient commute to ISU are SW ISU, Crawford, and Edwards.
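The distance calculation can be sketched with a plain haversine formula. This is a stand-in under stated assumptions, not the original code: the text says geopy was used (its `geopy.distance.geodesic` uses an ellipsoid model rather than a sphere), and the ISU coordinates and the house location below are approximate or hypothetical values chosen for illustration.

```python
import math

# Approximate coordinates of the Iowa State University campus (an assumption
# for this sketch, not a value taken from the original analysis).
ISU_LAT, ISU_LON = 42.0267, -93.6465


def haversine_miles(lat1, lon1, lat2, lon2):
    """Great-circle distance in miles between two (lat, lon) points in degrees."""
    earth_radius_miles = 3958.8
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    d_phi = math.radians(lat2 - lat1)
    d_lambda = math.radians(lon2 - lon1)
    a = (
        math.sin(d_phi / 2) ** 2
        + math.cos(phi1) * math.cos(phi2) * math.sin(d_lambda / 2) ** 2
    )
    return 2 * earth_radius_miles * math.asin(math.sqrt(a))


# Hypothetical house location, chosen only to exercise the function.
house_lat, house_lon = 42.0500, -93.6200
distance = haversine_miles(house_lat, house_lon, ISU_LAT, ISU_LON)
print(f"distance to ISU: {distance:.2f} miles")
```

Over distances of a few miles the spherical approximation and an ellipsoidal geodesic differ only slightly, so either would rank neighborhoods by commute in much the same way.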

In conclusion, as overall quality increased, so did the sale price. More affluent neighborhoods were more sensitive to changes in quality. Having a fireplace and having a finished garage were each moderately correlated with sale price. About 60% of the houses in the dataset were ranch style. We used these findings to inform our feature selection and engineering for our models.

Pre-Processing

Data quality is an important topic in machine learning, and the old adage of "garbage in, garbage out" applies here. We therefore cleaned the data to remove as many low-quality rows as possible. For instance, the data included home sales under foreclosure, houses that were severely damaged, and homes zoned for agriculture on 40 acres of land. None of these are appropriate for analyzing an average family home, so they were dropped. In total, roughly 300 rows were removed. This was acceptable because about 2,250 rows remained, which is plenty for data analysis and machine learning.

Tree-Based Machine Learning

An advantage of tree-based machine learning models is that they support early feature selection. By analyzing the features higher up in the decision tree, we can draw conclusions about which features are more important for predicting the house price. This is convenient when we have over 80 variables to consider.

Feature Engineering Pt. 1

The information we acquired during exploratory data analysis guided our feature selection and feature engineering. For instance, we created a curb appeal feature that combined 11 variables: masonry veneer area, lot frontage, lot shape, land contour, lot configuration, land slope, roof style, roof material, exterior material, exterior quality, and exterior condition. We also used the latitude and longitude coordinates of each house to calculate the distance to the big college in town (Iowa State).

Model Results

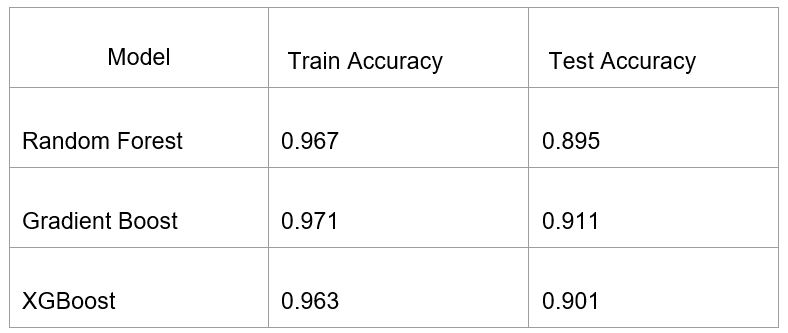

We used three different tree-based models: Random Forest, Gradient Boosting, and XGBoost. All three were tuned using grid search with cross-validation, with the data split into training and test sets. All three performed similarly in terms of accuracy. The average test score was around 90%, which means that on average the model could predict a house's price within 90% of the sale price. We also saw a 6% gap between the train and test scores, so we may have had a problem with overfitting. The Gradient Boosting model performed slightly better than the other two, with slightly higher train and test scores and a slightly lower error.
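A grid search with cross-validation on a held-out split could look roughly like the sketch below. It assumes scikit-learn, which the text does not name, and uses synthetic data from `make_regression` in place of the housing features. The parameter grid is a small illustrative one, since the original hyperparameter ranges are not given.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.model_selection import GridSearchCV, train_test_split

# Synthetic stand-in for the housing features and sale prices.
X, y = make_regression(n_samples=300, n_features=8, noise=10.0, random_state=0)

# Hold out a test set that the grid search never sees.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0
)

# Small, illustrative grid (an assumption, not the original search space).
param_grid = {"n_estimators": [50, 100], "max_depth": [2, 3]}

search = GridSearchCV(
    GradientBoostingRegressor(random_state=0),
    param_grid,
    cv=5,          # 5-fold cross-validation on the training set only
    scoring="r2",
)
search.fit(X_train, y_train)

# Compare train and test scores of the best model to gauge overfitting.
train_r2 = search.best_estimator_.score(X_train, y_train)
test_r2 = search.best_estimator_.score(X_test, y_test)
print("best params:", search.best_params_)
print(f"train R^2 = {train_r2:.3f}, test R^2 = {test_r2:.3f}")
```

The difference between the train and test scores is the kind of gap the paragraph above reads as a possible sign of overfitting.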

Feature Selection Pt. 1

Now that we had an accurate model, we could use the decision trees to analyze the important variables.

The above diagram shows the top three levels of a single decision tree from the Random Forest model. Decision trees split on the features that return the largest information gain. In the tree above, the first split is on the overall quality feature, so the cut on this feature provided the most information. In the second level, the splits are on curb appeal and neighborhood. This reveals that for homes with overall quality below 7.5, curb appeal is the most important feature, but for homes with overall quality above 7.5, the most important feature is the neighborhood. Note: this could be insightful for a real estate expert, but may be hard for new home buyers to interpret.
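To make the idea of a split "returning the most information" concrete, here is a minimal sketch of how one split could be chosen. It uses variance reduction, the usual criterion for regression trees, as a stand-in for the information gain mentioned above. The quality scores and log prices are toy values invented so that the best threshold lands at 7.5, and the function names are hypothetical.

```python
import statistics


def variance_reduction(values, left, right):
    """Drop in variance achieved by splitting `values` into `left` and `right`."""
    n = len(values)
    weighted_child_var = (
        len(left) / n * statistics.pvariance(left)
        + len(right) / n * statistics.pvariance(right)
    )
    return statistics.pvariance(values) - weighted_child_var


def best_split(feature, target):
    """Return (threshold, gain) for the best binary split on one feature."""
    best = (None, 0.0)
    levels = sorted(set(feature))
    for low, high in zip(levels, levels[1:]):
        threshold = (low + high) / 2  # midpoint between adjacent observed values
        left = [t for f, t in zip(feature, target) if f <= threshold]
        right = [t for f, t in zip(feature, target) if f > threshold]
        gain = variance_reduction(target, left, right)
        if gain > best[1]:
            best = (threshold, gain)
    return best


# Toy data (invented): overall quality scores and log sale prices.
overall_quality = [4, 5, 5, 6, 7, 8, 8, 9]
log_price = [11.4, 11.6, 11.7, 11.8, 12.0, 12.5, 12.6, 12.8]

threshold, gain = best_split(overall_quality, log_price)
print(f"best split: overall_quality <= {threshold}, variance reduction = {gain:.3f}")
```

A full tree repeats this search over every feature at every node and keeps the feature and threshold with the largest gain, which is why the most informative feature ends up at the root.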

On the other hand, something we can interpret is a ranking of which features are the most important. This graph shows the ranking of feature significance in predicting sale price, and we can use it to inform our next round of modeling.

Linear Regression

The advantage of using linear regression in house pricing is the interpretability. As we saw earlier, tree-based models can be difficult to interpret, but linear models will output a formula in the form of "y=m*x+b" that we learned in elementary algebra. For example, a linear model to predict house price may generate a formula like "(house price) = $20*(square footage) + $1000*(number of bedrooms) + $500*(number of bathrooms)."
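The example formula can be written as a few lines of code, which shows why linear models are easy to read. The coefficients below are copied from the illustrative formula above, not fitted values, and the function and variable names are hypothetical.

```python
# Coefficients copied from the illustrative formula in the text; they are
# example numbers, not fitted values.
COEFFICIENTS = {"square_footage": 20, "bedrooms": 1000, "bathrooms": 500}
INTERCEPT = 0  # the example formula has no constant term


def predict_price(house):
    """Apply the linear formula: intercept plus coefficient times feature value."""
    return INTERCEPT + sum(COEFFICIENTS[name] * house[name] for name in COEFFICIENTS)


example_house = {"square_footage": 1500, "bedrooms": 3, "bathrooms": 2}
base_price = predict_price(example_house)  # 20*1500 + 1000*3 + 500*2

# Interpretability: adding one bedroom changes the price by exactly its coefficient.
one_more_bedroom = {**example_house, "bedrooms": 4}
bedroom_effect = predict_price(one_more_bedroom) - base_price
print(base_price, bedroom_effect)
```

Each coefficient is the price change for one extra unit of that feature with everything else held fixed, which is the kind of statement a home buyer can act on.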

Feature Engineering Pt. 2

To make the model useful to a home buyer, we had to create more variables based on typical buyer questions: Does it have a finished basement? Does it have a garage? Does it have a fireplace? These became binary variables; for the garage feature, for example, "1" means the house has a garage and "0" means it does not.

Feature Selection Pt. 2

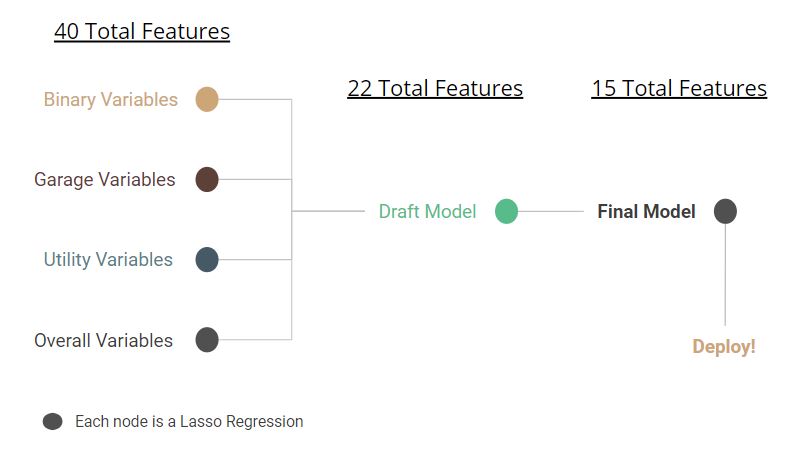

After combining the important features from the tree-based models with our new binary features, we had 40 features to consider for linear regression. We had a hunch that some of these features were correlated, which could be a problem. Linear regression assumes that the input variables are not strongly correlated with each other; when they are (multicollinearity), the coefficients become unstable and hard to interpret.

To systematically drop redundant variables, we used a series of penalized linear regressions, specifically Lasso regression. In a Lasso regression, the L1 penalty can shrink the coefficient of an input variable to exactly zero when that variable is redundant. Using this strategy, we narrowed the number of features down to just 15.
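The way Lasso zeroes out redundant inputs can be seen in a tiny coordinate-descent sketch. This is an illustration of the mechanism under stated assumptions, not the pipeline used here: the data is a made-up toy set with a duplicated column and an irrelevant column, the function names are hypothetical, and in practice a library implementation such as scikit-learn's `Lasso` would normally be used.

```python
def soft_threshold(rho, alpha):
    """Shrink rho toward zero by alpha; values within [-alpha, alpha] become 0."""
    if rho > alpha:
        return rho - alpha
    if rho < -alpha:
        return rho + alpha
    return 0.0


def lasso_coordinate_descent(X, y, alpha, n_iter=50):
    """Minimize (1/(2n)) * ||y - Xw||^2 + alpha * ||w||_1 one coefficient at a time."""
    n, p = len(X), len(X[0])
    w = [0.0] * p
    for _ in range(n_iter):
        for j in range(p):
            rho, z = 0.0, 0.0
            for row, target in zip(X, y):
                # Prediction from every feature except j.
                pred_without_j = sum(w[k] * row[k] for k in range(p) if k != j)
                rho += row[j] * (target - pred_without_j)
                z += row[j] ** 2
            w[j] = soft_threshold(rho / n, alpha) / (z / n)
    return w


# Toy data (invented): column 0 drives the target, column 1 duplicates
# column 0, and column 2 is unrelated to the target.
signal = [-2.0, -1.0, 0.0, 1.0, 2.0]
unrelated = [1.0, -2.0, 2.0, -2.0, 1.0]
X = [[s, s, u] for s, u in zip(signal, unrelated)]
y = [3.0 * s for s in signal]

weights = lasso_coordinate_descent(X, y, alpha=0.5)
print(weights)
```

On this toy data the first column keeps a shrunken coefficient while the duplicate and the unrelated column are set to exactly zero. With perfectly duplicated columns, which copy keeps the weight depends on the update order, so the dropped variable should be read as redundant rather than unimportant.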

Final Model

We found that the five most important features of a house in Ames, Iowa are:

- Total Living Area

- Whether or not it has central air

- Total Lot area

- Whether or not it has a finished basement

- Overall quality of the house (1-10 scale)

We used two scores to measure our model: the coefficient of determination (R^2) and the mean absolute error (MAE). The R^2 was 88%, which means that our model explains 88% of the variance in house price. The MAE was $11,600, which means that on average, our model predicts within $11,600 of the sale price.
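For reference, both scores can be computed in a few lines. The prices below are invented toy values used only to show the arithmetic; they are not the model's actual predictions.

```python
def mean_absolute_error(y_true, y_pred):
    """Average absolute difference between actual and predicted values."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def r_squared(y_true, y_pred):
    """1 minus (residual sum of squares / total sum of squares)."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1 - ss_res / ss_tot


# Toy values (invented for illustration).
actual_prices = [200_000, 150_000, 300_000]
predicted_prices = [210_000, 140_000, 290_000]

mae = mean_absolute_error(actual_prices, predicted_prices)
r2 = r_squared(actual_prices, predicted_prices)
print(f"MAE = ${mae:,.0f}, R^2 = {r2:.3f}")
```

MAE is in dollars, so it reads directly as a typical miss, while R^2 is unitless and compares the model against always predicting the average price.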

Web Application

Now that we had a linear model tuned for our target user, we needed a dashboard so home buyers could interact with the model. We built the dashboard with the Python Dash library and deployed the app on Heroku. The app can be accessed here: https://ames-housing-app.herokuapp.com/

The dashboard allows users to enter their budget and specify features they would like in their house, such as gross living area, lot area, neighborhood, quality ratings, and other features.

The selected features become inputs to the linear model, and the output is a predicted house price. The dashboard compares the user's budget with the predicted price and recommends 10 changes that the user can make to bring the predicted price closer to their budget. The recommendation system subtracts or adds features depending on whether the user is over or under budget and predicts a new price for each change. The top 10 changes that bring the predicted price closest to the budget are shown to the user.
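The recommendation logic described above can be sketched as follows. This is a simplified illustration and not the deployed app's code: the coefficients, intercept, feature names, and the restriction to toggling yes/no features are all assumptions made for the example.

```python
# Hypothetical coefficients for a fitted linear model (dollars per unit).
COEFFICIENTS = {
    "living_area_sqft": 60.0,
    "has_central_air": 9_000.0,
    "has_finished_basement": 12_000.0,
    "has_fireplace": 7_000.0,
    "has_garage": 10_000.0,
}
INTERCEPT = 40_000.0


def predict_price(house):
    return INTERCEPT + sum(COEFFICIENTS[k] * v for k, v in house.items())


def recommend_changes(house, budget, top_n=10):
    """Rank single yes/no feature toggles by how close they bring the price to budget."""
    over_budget = predict_price(house) > budget
    candidates = []
    for name, value in house.items():
        if not name.startswith("has_"):
            continue  # only binary features are toggled in this sketch
        # Over budget: remove features the house has. Under budget: add missing ones.
        if over_budget and value == 1:
            changed, action = {**house, name: 0}, f"remove {name}"
        elif not over_budget and value == 0:
            changed, action = {**house, name: 1}, f"add {name}"
        else:
            continue
        new_price = predict_price(changed)
        candidates.append((abs(new_price - budget), action, new_price))
    candidates.sort()  # smallest distance to the budget first
    return [(action, price) for _, action, price in candidates[:top_n]]


wish_list = {
    "living_area_sqft": 1800,
    "has_central_air": 1,
    "has_finished_basement": 1,
    "has_fireplace": 1,
    "has_garage": 0,
}
budget = 160_000
recommendations = recommend_changes(wish_list, budget)
print(f"predicted: ${predict_price(wish_list):,.0f}")
for action, price in recommendations:
    print(f"{action}: ${price:,.0f}")
```

Because the model is linear, each toggle shifts the price by exactly that feature's coefficient, so ranking the changes only requires re-running the formula once per candidate.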

The skills I demoed here can be learned by taking the Data Science with Machine Learning bootcamp at NYC Data Science Academy.