Data on Housing: Data Analysis and Machine Learning

Project GitHub | LinkedIn: Niki Moritz Hao-Wei Matthew Oren

The skills demonstrated here can be learned through the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

Outline

- Introduction
- Exploratory Data Analysis
- Data Transformations / Feature Engineering
- Modeling
- Results and Future Improvements

1. Introduction

When the time comes to buy or sell your first home, there are many factors to research and consider. A home is one of the most expensive investments in a person's life, so picking the right one is crucial. When selling, it helps to know which features of the home, according to the data, are worth improving in order to maximize the resale value.

What are some factors that may impact the price of a home? Square footage, location, and a garage are some factors that initially come to mind. Although there are many other factors, the goal of this machine learning project is to analyze 79 house variables and predict the price using a dataset of over 1,450 houses sold in Ames, Iowa.

The plan is to explore the data, drop multicollinear and insignificant features, correct missing values, create new features, and then apply different machine learning models to the cleaned dataset.

2. Exploratory Data Analysis

The dataset is sourced from a Kaggle competition and comes in two files: a training set and a test set. To start, I studied the correlations between the variables and Sale Price using a heatmap. Some pairs are naturally correlated, like Garage Cars and Garage Area, while others are correlated by deduction, such as TotalBsmtSF and 1stFlrSF. In the next section, I transform the most highly correlated variables and drop some of the variables least correlated with Sale Price.
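As a rough illustration of the correlation step, the sketch below ranks features by their correlation with SalePrice and lists strongly correlated feature pairs. The toy values, the helper name `highly_correlated_pairs`, and the 0.8 threshold are assumptions made for this example; they are not taken from the project code.

```python
import pandas as pd

# Toy stand-in for the Kaggle training file; all values are made up.
train = pd.DataFrame({
    "GarageCars": [1, 2, 2, 3, 0, 2],
    "GarageArea": [280, 480, 520, 800, 0, 460],
    "TotalBsmtSF": [850, 1200, 920, 1700, 600, 1100],
    "1stFlrSF": [860, 1250, 950, 1710, 640, 1100],
    "SalePrice": [130000, 210000, 180000, 340000, 95000, 200000],
})

# Pearson correlation matrix; this is the table a heatmap would visualize.
corr = train.corr()

# Features ranked by their correlation with the target.
corr_with_price = corr["SalePrice"].drop("SalePrice").sort_values(ascending=False)


def highly_correlated_pairs(corr_matrix, threshold=0.8):
    """Return (feature_a, feature_b, correlation) for pairs at or above the threshold."""
    cols = list(corr_matrix.columns)
    pairs = []
    for i, a in enumerate(cols):
        for b in cols[i + 1:]:
            value = float(corr_matrix.loc[a, b])
            if abs(value) >= threshold:
                pairs.append((a, b, round(value, 3)))
    return pairs


# Look for collinearity among the features only, so drop the target first.
feature_corr = corr.drop(index="SalePrice", columns="SalePrice")
pairs = highly_correlated_pairs(feature_corr)

print(corr_with_price)
print(pairs)
```

Pairs flagged this way are candidates for combining into one feature or for dropping one member of the pair.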

Next, I used a pairplot to check whether any features are skewed. Features like SalePrice, TotalBsmtSF, YearBuilt, and TotalRmsAbvGrd are clearly skewed and will be transformed in the next section.

3. Data Transformations / Feature Engineering

- Log Transformations

The first order of business is to transform the target variable, Sale Price, by taking its log and then removing it from the training data.
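A minimal sketch of this step, assuming the training data is a pandas DataFrame named `train` (a hypothetical name) and using made-up values:

```python
import numpy as np
import pandas as pd

# Toy training frame; the values are illustrative only.
train = pd.DataFrame({
    "GrLivArea": [1710, 1262, 1786],
    "SalePrice": [208500, 181500, 223500],
})

# pop() removes the column from the frame and returns it in one step,
# so the target cannot leak into the feature matrix.
y = np.log(train.pop("SalePrice"))

# Predictions made on the log scale are mapped back to dollars with exp().
recovered = np.exp(y)
```

Training on the log of the price also matches the competition metric, which compares logarithms of predicted and observed prices.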

- Missing Data

Next, I split the training set into numerical and categorical variables to look for any patterns in the missingness. For the categorical variables, I filled the NaNs with "No" and then transformed them into numerical variables by encoding them. Next, I combined existing features and dropped insignificant ones. For the remaining numerical variables, the median was used to fill missing values.
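The imputation described above could look roughly like the following. The toy frame is made up, and the integer category codes are an assumption, since the post does not say which encoding was used.

```python
import numpy as np
import pandas as pd

# Toy frame with missing values in both categorical and numerical columns.
train = pd.DataFrame({
    "GarageType": ["Attchd", np.nan, "Detchd", "Attchd"],
    "PoolQC": [np.nan, np.nan, "Gd", np.nan],
    "LotFrontage": [65.0, np.nan, 80.0, 70.0],
    "GrLivArea": [1710, 1262, 1786, 1717],
})

# Split the columns into numerical and categorical groups.
num_cols = list(train.select_dtypes(include="number").columns)
cat_cols = [c for c in train.columns if c not in num_cols]

# Categorical: a missing value means the house lacks the feature, so fill
# with "No" and then replace each category with an integer code.
for col in cat_cols:
    train[col] = train[col].fillna("No").astype("category").cat.codes

# Numerical: fill missing values with the column median.
train[num_cols] = train[num_cols].fillna(train[num_cols].median())

print(train)
```

Integer codes impose an arbitrary order on the categories. Tree-based models tolerate that well, while for linear models one-hot encoding (for example `pd.get_dummies`) is a common alternative.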

- Skewness

To figure out which variables have a nonlinear shape, I examined the pairplot above. I then applied a log transform to the skewed numerical features to lessen the impact of outliers.
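The post identifies skewed features visually. A numeric check is one possible alternative, sketched here under assumptions: the 0.75 cutoff and the toy values are illustrative, and `log1p` is used instead of a plain log so that zero values stay defined.

```python
import numpy as np
import pandas as pd

# Toy features: LotArea has one large outlier, OverallQual is symmetric.
feats = pd.DataFrame({
    "LotArea": [8000, 9000, 9500, 10000, 11000, 60000],
    "OverallQual": [4, 5, 6, 6, 7, 8],
})

# Sample skewness per column; values far from 0 indicate a long tail.
skewness = feats.skew()
skewed_cols = list(skewness[skewness.abs() > 0.75].index)

# log1p(x) = log(1 + x) compresses the long right tail.
for col in skewed_cols:
    feats[col] = np.log1p(feats[col])

print(skewness)
print(skewed_cols)
```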

4. Modeling

The first models used were linear models: Lasso, Ridge, and ElasticNet.

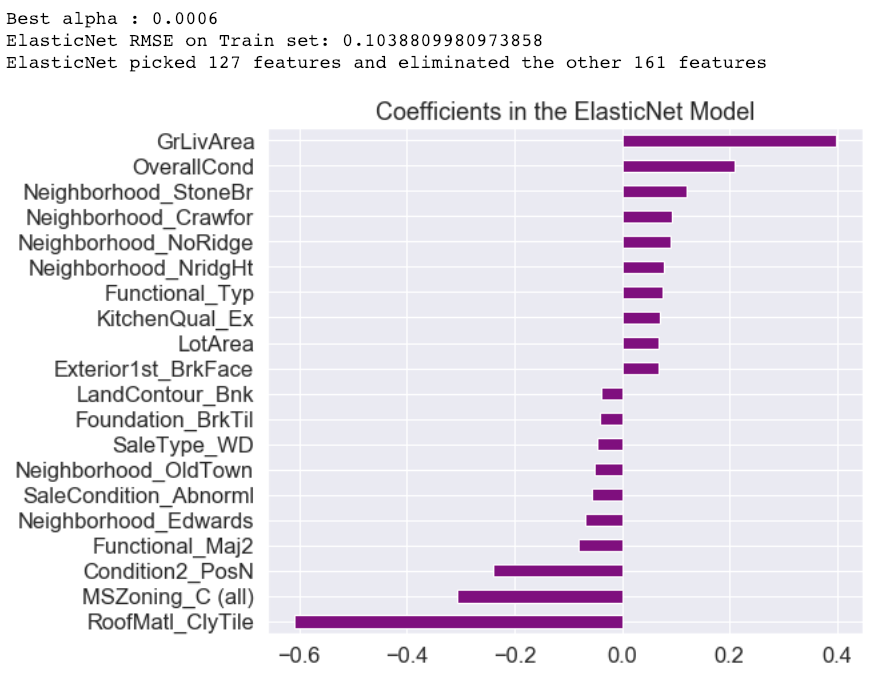

Ridge and Lasso regression are techniques to reduce model complexity and prevent the overfitting that can result from simple linear regression. In Ridge regression, a penalty on the squared coefficients shrinks them, which helps reduce model complexity and the effects of multicollinearity. In Lasso regression, the penalty is on the absolute values of the coefficients, which can shrink some of them to exactly zero and so helps with feature selection. ElasticNet combines both penalties, attempting to shrink coefficients and do a sparse selection at the same time. I optimized the model using cross-validation over different alphas. The results show it used only 44% of the features.
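To make the cross-validation over alphas concrete, here is a hedged sketch using scikit-learn's `ElasticNetCV` on synthetic data. The alpha grid, the `l1_ratio` of 0.5, and the scaling step are assumptions for the example and are not the settings used in the project.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import ElasticNetCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic data: 30 features, only 8 of which carry signal.
X, y = make_regression(n_samples=200, n_features=30, n_informative=8,
                       noise=10.0, random_state=0)

# Candidate penalty strengths; 5-fold cross-validation picks the best one.
alphas = [0.01, 0.1, 1.0, 10.0]
model = make_pipeline(
    StandardScaler(),  # penalties are scale-sensitive, so standardize first
    ElasticNetCV(alphas=alphas, l1_ratio=0.5, cv=5, max_iter=10000),
)
model.fit(X, y)

enet = model.named_steps["elasticnetcv"]

# Share of features whose coefficient was not shrunk to exactly zero.
used_fraction = float(np.mean(enet.coef_ != 0))
print(f"best alpha: {enet.alpha_}, features used: {used_fraction:.0%}")
```

The fraction of non-zero coefficients is the kind of figure reported above as the share of features the model used.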

Next, I chose two ensemble models, Random Forests (RF) and Gradient Boosting.

They're easy to implement and both predict by combining the outputs from individual decision trees. Boosted trees are often preferred over RF because each new tree is trained to complement the ones already built. This normally gives better accuracy, which was the case here.
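A small comparison of the two ensembles, sketched with scikit-learn on synthetic data. The hyperparameters are placeholders rather than the project's tuned values, and the relative ranking on this toy data says nothing about the housing results.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor, RandomForestRegressor
from sklearn.model_selection import cross_val_score

X, y = make_regression(n_samples=200, n_features=10, n_informative=5,
                       noise=10.0, random_state=0)

models = {
    # RF: trees are grown independently on bootstrap samples, then averaged.
    "Random Forest": RandomForestRegressor(n_estimators=50, random_state=0),
    # Boosting: trees are added one at a time, each fit to the errors so far.
    "Gradient Boosting": GradientBoostingRegressor(n_estimators=50, random_state=0),
}

cv_rmse = {}
for name, model in models.items():
    # scikit-learn negates RMSE so that a higher score is always better.
    scores = cross_val_score(model, X, y, cv=5,
                             scoring="neg_root_mean_squared_error")
    cv_rmse[name] = float(-scores.mean())
    print(f"{name}: CV RMSE = {cv_rmse[name]:.2f}")
```

Comparing cross-validated error rather than training error gives a fairer picture of how each model generalizes.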

We can see from the above graphs that neighborhood features ranked much higher in the linear models than in the non-linear ones, owing to their different learning algorithms. Gradient Boosting feature importances are also more evenly dispersed than in the linear models.

5. Results and Future Improvements

For the competition, submissions are evaluated on the root-mean-squared error (RMSE) between the logarithm of the predicted value and the logarithm of the observed sale price, which is withheld from the test set. Overall, the Random Forest model gave me the lowest training RMSE of 0.05431. However, its Kaggle score was the worst! I suspect some overfitting here, which I will address with further hyperparameter tuning.
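The metric described above can be written in a few lines. The function name `rmse_log` and the sample prices are hypothetical.

```python
import numpy as np


def rmse_log(y_true, y_pred):
    """RMSE between the logs of the predicted and the observed prices."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.sqrt(np.mean((np.log(y_pred) - np.log(y_true)) ** 2)))


# Made-up prices: each prediction is off by 10%, in opposite directions.
observed = [200000.0, 150000.0]
predicted = [220000.0, 135000.0]
score = rmse_log(observed, predicted)
print(f"{score:.5f}")
```

Because the error is taken on the log scale, a 10% miss on a cheap house counts about as much as a 10% miss on an expensive one. A training score far below the Kaggle score, as with the Random Forest here, is a typical sign of overfitting.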

The ElasticNet model gave me the best Kaggle score of 0.12135. The training RMSE wasn't too far off, but a bit more feature engineering should improve it.

For all preprocessing and modeling, the code is available on GitHub.