How vulnerable are US jobs to automation?

" 47% could be done by machines 'over the next decade or two' "

Carl Benedikt Frey and Michael Osborne, Oxford University, 2013

The 30-second version:

- A frequently-quoted study on technological automation suggests around half of US jobs could be done by machines in the medium-term. This dynamic dashboard allows you to explore possible impacts from this analysis across industries and geographies; you can also explore how the view has changed between 2010 and 2018.

- However, there are plenty of reasons to think the future is unlikely to play out this way. The modelling approach makes a number of simplistic assumptions and the research is framed narrowly, focusing on current jobs which are theoretically computerisable. In the real world, jobs will change, new roles will appear and resource constraints or policy could slow or stop transformation.

- Subsequent research paints a more conservative picture of the scale of automation; I want to explore related research further.

Note: this post and the accompanying dashboard are still WIP

Introduction

Recent advances in big data, deep learning and robotics could threaten jobs across the world. In the US, Andrew Yang has popularised this concern, emphasising the risk that self-driving vehicles could displace around three million US truck drivers. In my own lifetime, I have seen the power of technology to transform jobs with marketers and cashiers being the examples that spring to mind.

But it’s easy to extrapolate wildly with science fiction stories about AI actors and robot doctors - whilst recent advances in deep learning have been impressive, many "common sense" tasks and real-world physical problems remain beyond reach for now.

There are lots of smart voices saying totally different things on this topic - how can researchers come up with quantified impact predictions given the overwhelming uncertainty?

My aims

My project focuses on "The Future of Employment" (1), a 2013 paper by two Oxford academics, supported by the Future of Humanity Institute, which attempts to directly quantify the risk of automation for specific job roles. Their findings hit the headlines in the UK and have inspired related research by other groups.

My aims:

- provide a dynamic tool for visualisation of the possible impact of computerisation of US jobs over the medium-term

- explain the underlying approach and assumptions

- update the analysis to account for the latest available information

Why pay attention?

Part of me wants to dismiss this as too speculative; what's the use of figures when the uncertainty might dwarf any signal?

However, the projected impacts are profound and far-reaching - so even if you attach low credence to this sort of analysis, it may be worth factoring in when thinking about the medium-term:

- For individuals, this analysis could help guide a sustainable career trajectory. It suggests specific skills will be harder to automate, so it may make sense to emphasise or develop these - use the "Individual jobs" or "Data drill-down" tabs to explore your area.

- For businesses there are both threats and opportunities. Perhaps a combination of early investment in automation alongside retraining programs could be effective? For specific industries where long-term planning is required (eg insurers, pension providers) the possibility of extreme change in the make-up of the working population could influence optimal strategy now.

- For policymakers this helps provide a dynamic and holistic view of impacts across industries, geographies and demographics.

- For data scientists, we can be more aware of the impacts our work could have on society.

The approach

The underlying research question is as follows:

“Can the tasks of this job be sufficiently specified, conditional on the availability of big data, to be performed by state of the art computer-controlled equipment”

The research produces a % estimate for this question using a combination of granular data, expert judgement and statistical modelling:

- Granular datasets from the Department of Labor provide annual snapshots dividing the US working population of ~130m into around 800 detailed job roles across each state, with basic salary information. Accompanying datasets capture job characteristics for each role across more than 100 factors; these are updated regularly through research reviews.

- Expert judgement: a group of machine learning researchers and futurists discussed the above question for c.70 specific roles - these are then labelled as fully computerisable or not. In addition, they selected the nine most important job characteristics which they expect to determine whether a role can be automated.

- Statistical modelling is used to generalise the relationship between the expert predictions and the job characteristics across the full space of jobs.

A few examples are instructive here - have a look at the "Individual jobs" tab to explore directly:

- Chief execs, clergy, athletes, physicians/surgeons and fashion designers were picked by the panel as being non-computerisable

- Credit analysts, accountants, taxi drivers, cooks and cleaners were selected as computerisable professions

- Some of the variables that are judged to matter are originality, negotiation, fine arts knowledge and caring/assisting others

The modelling approach then takes these "labels" (y/n to being computerisable) and the "features" (the nine job characteristics) and finds any underlying patterns linking these inputs and outputs.

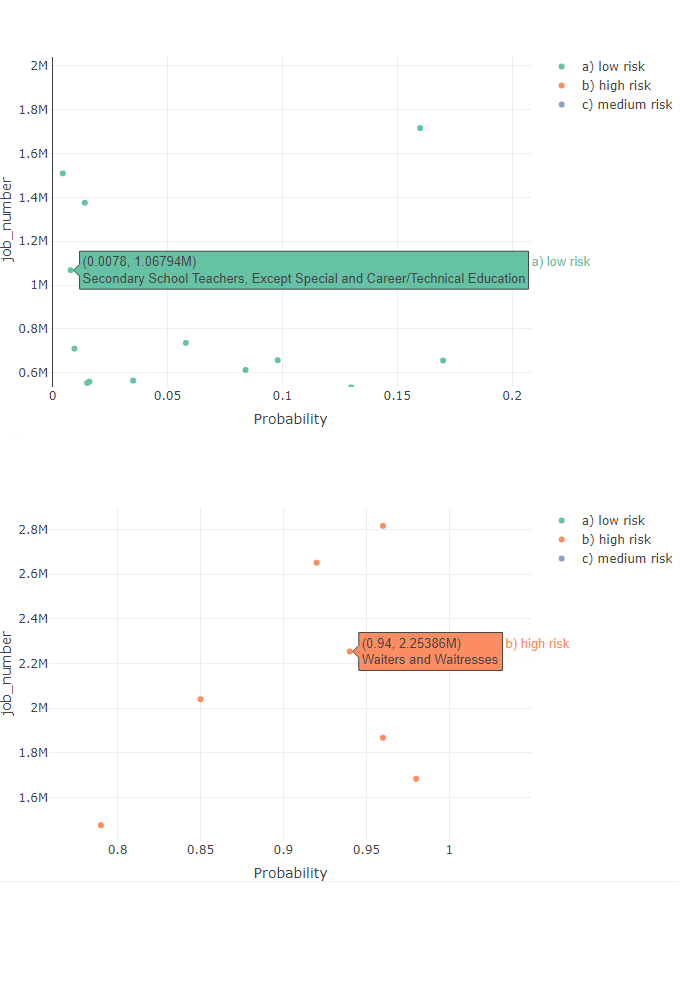

The result is a % likelihood that a role can be fully automated. At one end of the spectrum are low risk roles like secondary school teachers with a 0.78% chance of automation, while the other end captures high risk roles like waiters/waitresses with a 94% probability of computerisation.

The findings

If you buy in to this job-by-job approach, the analysis can be generalised across all roles for insights on the whole workforce.

1. Around half of the current US jobs are considered at high risk (>70%) of being theoretically computerisable. Impacts are unlikely to be felt evenly: changes would be expected to hit some industries and states harder - explore the "Over industries" and "Over states" tabs in the dashboard.

2. The analysis suggests roles are clustered around being very likely/unlikely to be automated, with a lower density in between. One interpretation is that there could be a flurry of automation across roles, then a long lull before many others become technically feasible.

3. High risk roles tend to have lower salaries and require more physical, sensory and psychomotor skills - so job displacement might impact the most vulnerable first. The relationship between salary and job characteristics is explored in the "Capabilities" tab.
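
To make the first finding concrete, here is a minimal sketch of how a headline "share of jobs at high risk" figure can be computed by weighting each role by its employment. The two probabilities for waiters/waitresses and secondary school teachers are the ones quoted above; the third role, all employment counts and the names `Role` and `high_risk_share` are made up for illustration and are not taken from the dashboard code.

```python
from dataclasses import dataclass

HIGH_RISK_THRESHOLD = 0.70  # the ">70%" high-risk cut-off quoted in the findings


@dataclass
class Role:
    title: str
    employment: int      # number of workers (illustrative, not real data)
    probability: float   # modelled probability of computerisation


def high_risk_share(roles):
    """Employment-weighted share of jobs above the high-risk threshold."""
    total = sum(r.employment for r in roles)
    at_risk = sum(r.employment for r in roles
                  if r.probability > HIGH_RISK_THRESHOLD)
    return at_risk / total


roles = [
    Role("Waiters/waitresses", 300_000, 0.94),
    Role("Secondary school teachers", 200_000, 0.0078),
    Role("Hypothetical mid-risk role", 500_000, 0.55),
]

share = high_risk_share(roles)
print(f"{share:.0%} of employment is in high-risk roles")
```

Weighting by employment matters: counting roles rather than workers would treat a role with a few thousand workers the same as one with millions.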

An update

The original research was performed in 2013 using 2010 data - so it's possible that the outlook has changed significantly. For instance, some of the transformation may already have played out - you might expect shrinkage in high risk roles as jobs become computerised and new lower risk roles appear.

In an ideal world I would (a) update all the job and skills data (b) check in with the experts to see if their views have changed and (c) update the modelling for every year. For now I'll have to focus on (a) and (c).

It should be noted that the Department of Labor indicate that their data is less useful for measuring changes over time due to movements in data collation, scope etc. There was a major change in the job classification (SOC code) in 2018 so this is the focus of the update.

On a best efforts basis I have re-run the analysis to focus instead on the 2018 jobs classifications and skill-sets - this view should be more reflective of the current risk profile of US jobs. This approach is then projected back in time - in reality a combination of the original and updated analysis would be most reflective of any changes in automation risk over time (and I'm working on this).

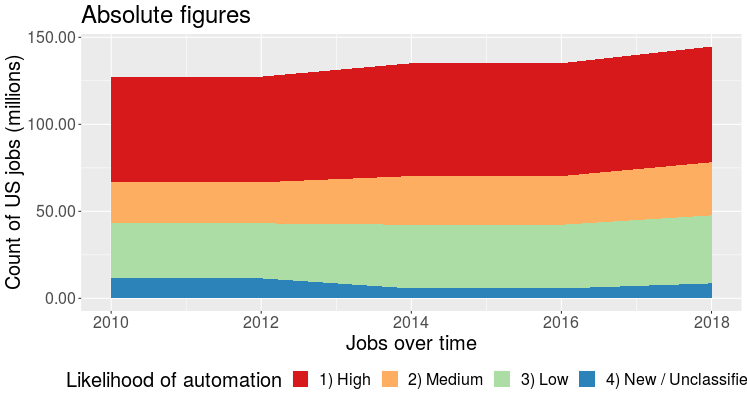

On this basis, the figure above shows how the labour force has grown since 2010, but the proportions within each risk bucket have remained broadly flat (with the high risk share at ~46%). This view is presented in the "Updated modelling" dashboard tab.
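
The "broadly flat" comparison can be sketched as follows: for each year, sum employment within each risk bucket and divide by that year's total. This is only an illustration on toy numbers. The 70% high-risk cut-off is the one used above; the 30% low-risk cut-off and the function names are my assumptions for the sketch.

```python
from collections import defaultdict


def risk_bucket(probability, low=0.30, high=0.70):
    """Assign a risk bucket; the 0.30 cut-off is an assumption for this sketch."""
    if probability > high:
        return "high"
    if probability < low:
        return "low"
    return "medium"


def bucket_shares_by_year(rows):
    """rows: iterable of (year, employment, probability) tuples.

    Returns {(year, bucket): share of that year's employment}.
    """
    totals = defaultdict(float)
    by_bucket = defaultdict(float)
    for year, employment, probability in rows:
        totals[year] += employment
        by_bucket[(year, risk_bucket(probability))] += employment
    return {key: emp / totals[key[0]] for key, emp in by_bucket.items()}


# Toy data: the workforce grows by 20% but the mix of risk is unchanged.
rows = [
    (2010, 100, 0.90), (2010, 100, 0.10),
    (2018, 120, 0.90), (2018, 120, 0.10),
]

shares = bucket_shares_by_year(rows)
for (year, bucket), share in sorted(shares.items()):
    print(year, bucket, f"{share:.0%}")
```

In the toy data total employment rises from 200 to 240, yet the high-risk share is the same in both years, which is the pattern described above.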

The caveats and limitations

Humans are not very good at predicting the future; in fact, that’s one of the reasons computations might transform society. There are a host of limitations with this analysis but I’d group these into a few broad buckets:

1. The approach for estimating probabilities uses a lot of simplifying assumptions.

2. The analysis addresses the narrow question of job substitution, not future jobs.

3. The authors make no rigorous attempt to define the time horizon given the uncertainty over if or when technical barriers can be overcome.

To expand on (1), these figures are based on a simple statistical model trying to find patterns in the intuition of an expert panel. We have to treat the expert predictions as "ground truth" (even though their predictions haven't been tested yet) and the specific modelling approach makes assumptions which are hard to justify in advance (e.g. the choice of kernel, which determines how similar two roles' automation risks are assumed to be given their job characteristics).

Furthermore, the data-driven approach assumes that a job can be summarised in a short list of minimum skill requirements. But nine variables are probably not enough and the skills to be a junior accountant might be far more computerisable than those of a senior specialist.

Subsequent research has highlighted that bundles of skills may really matter, so there may be parts of most roles which protect against full automation. Other approaches tend to produce smoother estimates of the likelihood of automation, rather than the highly polarised results in this research.

On (2) the narrow focus on job substitution misses several real-life factors:

- Creative destruction – this analysis looks at existing jobs only, with no account of the jobs that replace them. In the past, technology has tended to augment existing roles and create new opportunities which could not be readily foreseen; plenty of voices think the future AI/data-driven changes will be no different.

- Not all roles that can be theoretically automated will be - regulation or public reaction could slow or stop automation in some areas and there are limited resources (money, robust data, compute, data scientists) to work on these challenges.

- Job growth - the Department of Labor does produce estimates of job growth but these are not accounted for. It is not obvious how this should be interpreted - if we expect to have 20% more (human) nurses but nurses are 20% likely to be computerised, how should we aggregate these views?

In the round...

This particular study offers a fascinating but alarming projection of the potential results of automation, if technical barriers are overcome and impacts are not mitigated. No doubt it will be wrong in hundreds of specific ways, but if it's "right" in a general sense then we should be prepared to account for automation trends when we think about medium-term forecasting.

Ongoing work

There are lots of ways that I want to extend this work in the near future:

- Work on the thorny mapping issues to reduce the proportion of "new/unclassified" roles - currently these gaps prevent firmer conclusions about movements over time.

- Integrate other qualitative risk ratings or prediction approaches to provide a more balanced viewpoint.

- Expand to cover data from other countries via the international ISCO mappings.

The technical bit

Data & modelling

I use data from the Department of Labor covering 2010, 2012, 2014, 2016 and 2018 - even years are taken to keep the data size manageable.

The visualisation was built in R Shiny with statistical modelling in Python – see GitHub repository here

The statistical model used is a Gaussian process classifier - this places a Gaussian process prior over a latent function of the nine bottleneck variables, which is then squashed into a probability of the role being computerisable. My approach differs slightly from the original research - this used the GPML toolbox in Matlab with an exponentiated quadratic kernel; my updated analysis used a rational quadratic kernel implemented using the scikit-learn library in Python.
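
For readers who want to see the shape of this step, below is a minimal sketch of fitting a Gaussian process classifier with a rational quadratic kernel in scikit-learn and using it to score unlabelled roles. It is not the code from the repository: the data is random, the labelling rule is invented purely to have something to fit, and the sizes (70 labelled roles, nine features, 700 roles to score) only loosely mirror the set-up described in this post.

```python
import numpy as np
from sklearn.gaussian_process import GaussianProcessClassifier
from sklearn.gaussian_process.kernels import RationalQuadratic

rng = np.random.default_rng(0)

# Synthetic stand-in for the hand-labelled roles: 70 roles x 9 job characteristics.
n_labelled, n_features = 70, 9
X_labelled = rng.normal(size=(n_labelled, n_features))

# Invented labelling rule (1 = computerisable, 0 = not), just to have labels.
y_labelled = (X_labelled[:, 0] + 0.5 * X_labelled[:, 1] < 0).astype(int)

# Rational quadratic kernel; its hyperparameters are tuned during fit().
kernel = RationalQuadratic(length_scale=1.0, alpha=1.0)
model = GaussianProcessClassifier(kernel=kernel, random_state=0)
model.fit(X_labelled, y_labelled)

# Score a larger set of (synthetic) unlabelled roles.
X_all = rng.normal(size=(700, n_features))
prob = model.predict_proba(X_all)[:, 1]  # column 1 = P(computerisable)

print("share of roles above 70%:", float((prob > 0.70).mean()))
```

The key point is that only a small set of roles carries expert labels; the fitted model supplies a probability for every other role from its job characteristics alone.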

References

(1) https://www.oxfordmartin.ox.ac.uk/downloads/academic/The_Future_of_Employment.pdf

(2) https://www.bls.gov/oes/tables.htm