Kaggle Competition: House Price Prediction 2017

Introduction

Exploratory Data Analysis

Let's first do EDA to gain some insight into our data. We begin by plotting the distribution of the sale price (the target). The figure shows that only a few houses are worth more than $500,000.

The size of the living area may be an indicator of house price. The figure shows that only a few houses are larger than 4,000 square feet. This information can be used to filter out outliers.

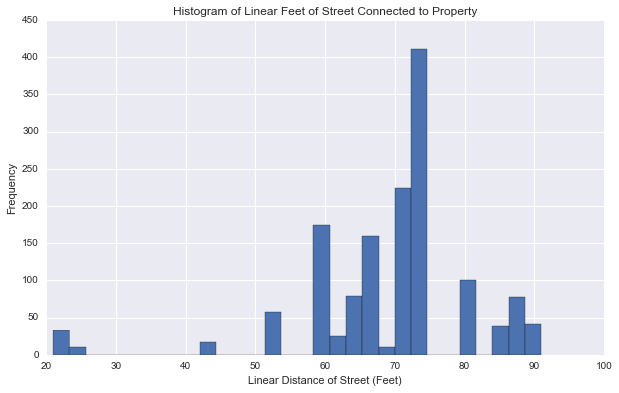

Also, the linear feet of street connected to the property may be a useful feature. We group by neighborhood and fill missing values (NA) with the median of each group. The figure shows that there are only a few outliers with a distance of less than 40 feet.

It may be useful to characterize the properties by the month in which they were sold. The figure shows that May to August are the busiest months in terms of number of sales.

We also found some numerical features that are highly correlated with the sale price and plotted the correlation matrix of these features. The matrix also shows how strongly these features are correlated with one another. This matters because multicollinearity can make it more difficult to draw inferences about the relationships between the independent and dependent variables.
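
The selection described above can be sketched as follows. This is a minimal illustration on synthetic data, not the original analysis: the column names are borrowed from the competition data set for readability, and the 0.5 cutoff is an assumed threshold.

```python
import numpy as np
import pandas as pd

# Synthetic stand-in for the training data (values are made up).
rng = np.random.default_rng(0)
n = 200
area = rng.normal(1500, 400, n)
df = pd.DataFrame({
    "GrLivArea": area,
    "TotalBsmtSF": 0.6 * area + rng.normal(0, 100, n),
    "YrSold": rng.integers(2006, 2011, n).astype(float),
})
df["SalePrice"] = 100 * area + rng.normal(0, 20000, n)

corr = df.corr()

# Rank features by absolute correlation with the target.
target_corr = corr["SalePrice"].drop("SalePrice").abs().sort_values(ascending=False)

# Keep features above an assumed cutoff of 0.5.
top_features = target_corr[target_corr > 0.5].index.tolist()

# Correlation matrix restricted to the selected features plus the target;
# large off-diagonal entries between features hint at multicollinearity.
cols = top_features + ["SalePrice"]
top_corr = corr.loc[cols, cols]
print(top_corr.round(2))
```

In this toy example, the two area features are strongly correlated with each other as well as with the target, which is the kind of redundancy the correlation matrix helps reveal.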

This concludes the basic EDA for our house price data set. In the next section, we perform feature engineering to prepare our training and test sets for machine learning.

Feature Engineering

We consider numerical and categorical features separately. The numerical features of our data set do not directly lend themselves to a linear model because they violate some of the assumptions of regression, such as linearity, constant variance, or normality. Therefore, we apply a log(x+1) transformation to the numerical features, where x is any numerical feature, to make their distributions closer to normal.
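
A minimal sketch of the log(x+1) transformation, assuming pandas and NumPy. The data here is synthetic and right-skewed, and the column names are only illustrative.

```python
import numpy as np
import pandas as pd

# Synthetic right-skewed data standing in for the real numerical features.
rng = np.random.default_rng(0)
df = pd.DataFrame({
    "LotArea": rng.lognormal(mean=9.0, sigma=1.0, size=500),
    "GrLivArea": rng.lognormal(mean=7.3, sigma=0.4, size=500),
    "MSZoning": rng.choice(["RL", "RM"], size=500),  # categorical, left untouched
})

# Select only the numerical columns.
numeric_cols = df.select_dtypes(include="number").columns

skew_before = df[numeric_cols].skew()

# np.log1p computes log(1 + x) and stays defined at x = 0.
df[numeric_cols] = np.log1p(df[numeric_cols])

skew_after = df[numeric_cols].skew()
print(pd.DataFrame({"before": skew_before, "after": skew_after}))
```

Comparing the skewness before and after is a quick way to check whether the transformation actually moved a feature closer to a symmetric distribution.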

Also, it is a good idea to scale our numerical features.
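
The text does not say which scaler was used. The sketch below assumes scikit-learn's StandardScaler, fitted on the training set only and then applied to the test set, with synthetic data in place of the real features.

```python
import numpy as np
from sklearn.preprocessing import StandardScaler

# Synthetic numerical features on different scales.
rng = np.random.default_rng(0)
X_train = rng.normal(loc=[7.0, 9.0], scale=[0.3, 0.5], size=(100, 2))
X_test = rng.normal(loc=[7.0, 9.0], scale=[0.3, 0.5], size=(50, 2))

scaler = StandardScaler()

# Learn mean and standard deviation from the training set only.
X_train_scaled = scaler.fit_transform(X_train)

# Reuse the training statistics on the test set to avoid leaking test information.
X_test_scaled = scaler.transform(X_test)
```

Scaling matters most for the regularized linear models (Lasso and Ridge), whose penalties depend on the magnitude of the coefficients.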

For categorical features, we perform several transformations as summarized below.

-- Fill NA using zero.

-- Group by neighborhood for linear feet of street connected to property and fill NA using the median of each group (neighborhood).

-- Transform "Yes"/"No" features, such as whether the property has central air conditioning, to one and zero, respectively.

-- Use "map" to transform quality measurements to ordinal numerical features.

-- Perform one-hot encoding on nominal features.

-- Sharan's three strategies. [Add the strategies here]
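
The first five transformations in the list can be sketched with pandas as follows. The tiny data frame, the "Y"/"N" coding, and the quality scale are assumptions made for illustration; the neighborhood median fill is applied before the zero fill so that it is not overwritten.

```python
import numpy as np
import pandas as pd

# Tiny illustrative frame; values are made up.
df = pd.DataFrame({
    "Neighborhood": ["NAmes", "NAmes", "NAmes", "OldTown", "OldTown"],
    "LotFrontage": [60.0, np.nan, 80.0, 50.0, np.nan],
    "CentralAir": ["Y", "N", "Y", "Y", "N"],
    "ExterQual": ["Gd", "TA", "Ex", "Fa", "TA"],
    "MSZoning": ["RL", "RM", "RL", "RM", "RL"],
    "MasVnrArea": [196.0, np.nan, 0.0, 162.0, np.nan],
})

# Fill missing lot frontage with the median of the same neighborhood.
group_median = df.groupby("Neighborhood")["LotFrontage"].transform("median")
df["LotFrontage"] = df["LotFrontage"].fillna(group_median)

# Fill remaining missing numerical values with zero.
df["MasVnrArea"] = df["MasVnrArea"].fillna(0)

# Binary yes/no feature to 1/0 (assumes the values are coded "Y" and "N").
df["CentralAir"] = df["CentralAir"].map({"Y": 1, "N": 0})

# Quality labels to ordinal numbers (assumed scale, from poor to excellent).
quality_map = {"Po": 1, "Fa": 2, "TA": 3, "Gd": 4, "Ex": 5}
df["ExterQual"] = df["ExterQual"].map(quality_map)

# One-hot encode the nominal features.
df = pd.get_dummies(df, columns=["MSZoning", "Neighborhood"])
```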

We also generate several new features summarized below.

-- Generate several "Is..." or "Has..." features based on whether a property "is..." or "has...". For example, since most properties have standard circuit breakers, we create a column "Is_SBrkr" to characterize those properties having standard circuit breakers.

-- Generate some aggregated quality measures to simplify the existing quality features. We aggregate those features into three broad classes, bad/average/good, and encode them to values 1/2/3, respectively.

-- Generate features related to time. For example, we generate a "New_House" column by considering if the house was built and sold in the same year.
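
A small sketch of the three kinds of generated features. The "Is_SBrkr" and "New_House" names come from the text above; the "Simpl_OverallQual" name and the bin edges for bad/average/good are hypothetical choices made for this example.

```python
import pandas as pd

# Tiny illustrative frame; values are made up.
df = pd.DataFrame({
    "Electrical": ["SBrkr", "FuseA", "SBrkr"],
    "OverallQual": [3, 5, 8],
    "YearBuilt": [2006, 1976, 2008],
    "YrSold": [2006, 2008, 2009],
})

# "Is..." feature: 1 if the property has standard circuit breakers.
df["Is_SBrkr"] = (df["Electrical"] == "SBrkr").astype(int)

# Aggregated quality: bad (1), average (2), good (3).
# The bin edges (1-3, 4-6, 7-10) are an assumption for this sketch.
df["Simpl_OverallQual"] = pd.cut(
    df["OverallQual"], bins=[0, 3, 6, 10], labels=[1, 2, 3]
).astype(int)

# Time feature: 1 if the house was built and sold in the same year.
df["New_House"] = (df["YearBuilt"] == df["YrSold"]).astype(int)
```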

To deal with outliers, we filter out properties with more than 4,000 square feet of above-grade (above-ground) living area.
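
The filter can be expressed as a single boolean mask. The sketch below uses made-up rows and assumes the above-grade living area is stored in a column named "GrLivArea"; it is applied to the training set only, since test rows still need predictions.

```python
import pandas as pd

# Made-up training rows; two of them exceed the 4,000 square foot limit.
train = pd.DataFrame({
    "GrLivArea": [1710, 4676, 2198, 5642, 4000],
    "SalePrice": [208500, 184750, 140000, 160000, 300000],
})

# Keep only properties at or below 4,000 square feet of living area.
train = train[train["GrLivArea"] <= 4000].reset_index(drop=True)
```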

Some other minor features are also considered here. In total, there are 389 features, with 1,456 and 1,459 samples in the training and test sets, respectively. Now, let's do machine learning.

Ensemble Methods

We consider six machine learning models: XGBoost, Lasso, Ridge, Extra Trees, Random Forest, and GBM.

For each model, we perform grid search with cross-validation to find the best parameters. For example, for Kernel Ridge,
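
A minimal sketch of such a search for Kernel Ridge, assuming scikit-learn's GridSearchCV and synthetic data. The parameter grid and the RMSE scoring are illustrative choices, not the settings used in the competition.

```python
import numpy as np
from sklearn.kernel_ridge import KernelRidge
from sklearn.model_selection import GridSearchCV

# Synthetic regression data standing in for the engineered features.
rng = np.random.default_rng(0)
X = rng.normal(size=(120, 5))
y = X @ np.array([1.0, -2.0, 0.5, 0.0, 3.0]) + rng.normal(scale=0.1, size=120)

# Illustrative grid; the values searched in the competition are not given.
param_grid = {
    "alpha": [0.01, 0.1, 1.0, 10.0],
    "kernel": ["linear", "rbf"],
}

search = GridSearchCV(
    KernelRidge(),
    param_grid,
    scoring="neg_root_mean_squared_error",  # higher is better, so RMSE is negated
    cv=5,
)
search.fit(X, y)

best_params = search.best_params_
best_rmse = -search.best_score_
print(best_params, round(best_rmse, 4))
```

The same pattern applies to the other models by swapping the estimator and its parameter grid.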

We found that Random Forest, GBM, and Extra Trees have serious overfitting problems.

Finally, we use an ensemble of Lasso, Ridge, and XGBoost with equal weights as our model.
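
Equal-weight ensembling amounts to averaging the predictions of the individual models. In the sketch below, scikit-learn's GradientBoostingRegressor is swapped in for XGBoost so that the example depends only on scikit-learn; the data and hyperparameters are made up.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.linear_model import Lasso, Ridge

# Synthetic data standing in for the engineered features.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 6))
y = X @ rng.normal(size=6) + rng.normal(scale=0.1, size=200)
X_train, X_test, y_train = X[:150], X[150:], y[:150]

models = [
    Lasso(alpha=0.001),
    Ridge(alpha=1.0),
    GradientBoostingRegressor(random_state=0),  # stand-in for XGBoost
]

# One column of test predictions per model.
predictions = np.column_stack(
    [model.fit(X_train, y_train).predict(X_test) for model in models]
)

# Equal weights: the ensemble prediction is the plain mean across models.
ensemble_pred = predictions.mean(axis=1)
```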

Stacking

We consider out-of-fold stacking. At the first level, we use XGBoost, Random Forest, Lasso, and GBM as our models. At the second level, we use the outputs of the first-level models as new features and use XGBoost as the combiner to train the final model. We perform cross-validation for each model to find the best set of parameters.
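
The two-level procedure can be sketched as follows. This is an illustration under stated assumptions rather than the original code: the helper "out_of_fold_features" is a hypothetical name, the data is synthetic, and GradientBoostingRegressor stands in for XGBoost at both levels so that only scikit-learn is required.

```python
import numpy as np
from sklearn.base import clone
from sklearn.ensemble import GradientBoostingRegressor, RandomForestRegressor
from sklearn.linear_model import Lasso
from sklearn.model_selection import KFold


def out_of_fold_features(models, X_train, y_train, X_test, n_splits=5, seed=0):
    """Build second-level features from out-of-fold predictions."""
    kf = KFold(n_splits=n_splits, shuffle=True, random_state=seed)
    oof_train = np.zeros((X_train.shape[0], len(models)))
    oof_test = np.zeros((X_test.shape[0], len(models)))
    for j, model in enumerate(models):
        for train_idx, valid_idx in kf.split(X_train):
            fold_model = clone(model).fit(X_train[train_idx], y_train[train_idx])
            # Each training row is predicted by a model that never saw it.
            oof_train[valid_idx, j] = fold_model.predict(X_train[valid_idx])
            # Test predictions are averaged over the fold models.
            oof_test[:, j] += fold_model.predict(X_test) / n_splits
    return oof_train, oof_test


rng = np.random.default_rng(0)
X = rng.normal(size=(200, 6))
y = X @ rng.normal(size=6) + rng.normal(scale=0.1, size=200)
X_train, X_test, y_train = X[:150], X[150:], y[:150]

# First level (GradientBoostingRegressor stands in for XGBoost and GBM).
base_models = [
    RandomForestRegressor(n_estimators=50, random_state=0),
    Lasso(alpha=0.001),
    GradientBoostingRegressor(random_state=0),
]
oof_train, oof_test = out_of_fold_features(base_models, X_train, y_train, X_test)

# Second level: the combiner is trained on the first-level outputs.
combiner = GradientBoostingRegressor(random_state=0).fit(oof_train, y_train)
stacked_pred = combiner.predict(oof_test)
```

Using out-of-fold predictions keeps the combiner from being trained on outputs that the first-level models produced for rows they had already seen.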

Feature Selection

We use the feature importance provided by XGBoost to select the important features.

The feature importance plot shows that importance decreases roughly exponentially, so we should consider training the model on only the most important features.

We use a loop to see how the score varies with the number of features included in the training set, and set a threshold to determine which features to drop from the data set.
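
One way to sketch this loop is shown below, using synthetic data in which only the first three features are informative. GradientBoostingRegressor stands in for XGBoost as the source of feature importances, Ridge is an arbitrary choice of scoring model, and picking the feature count with the lowest cross-validated RMSE is an assumed selection rule, since the actual threshold is not stated.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.linear_model import Ridge
from sklearn.model_selection import cross_val_score

# Synthetic data: only the first three of ten features carry signal.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 10))
y = 3 * X[:, 0] + 2 * X[:, 1] + X[:, 2] + rng.normal(scale=0.1, size=200)

# Rank features by importance (stand-in for XGBoost importances).
booster = GradientBoostingRegressor(random_state=0).fit(X, y)
order = np.argsort(booster.feature_importances_)[::-1]

# Score the model using the top-k features for every k.
scores = {}
for k in range(1, X.shape[1] + 1):
    cols = order[:k]
    rmse = -cross_val_score(
        Ridge(alpha=1.0), X[:, cols], y,
        scoring="neg_root_mean_squared_error", cv=5,
    ).mean()
    scores[k] = rmse

# Assumed rule: keep the feature count with the lowest cross-validated RMSE.
best_k = min(scores, key=scores.get)
selected = order[:best_k]
```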

Conclusions

In two weeks (two people, part-time), we have done EDA, feature engineering, ensembling, stacking, and feature selection. We observed a huge jump in score from the model without feature engineering to the one with feature engineering. The second-largest jump came from adding ensembling. Out-of-fold stacking did not improve the score much, possibly because the models are already statistically equivalent. Since the data set is very small, further improvement in the prediction score is more likely to come from additional feature engineering, such as using different distributions to create different features or using feature interactions to generate new features automatically.