Housing Prices: Gnome is Where the Heart is


The skills we demoed here can be learned through the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

Machine Learning Project: Your goal is to explore and analyze the “Kaggle Competition: Ames, Iowa Housing” dataset to find key features that influence sale price and to develop a machine learning algorithm that predicts these prices.

Dataset provided by Kaggle: https://www.kaggle.com/c/house-prices-advanced-regression-techniques

Team Lambda: Mikhail Stukalo, Lina Sprague, Anisha Luthra, Daniel Chen

Introduction

Predicting housing prices is a challenge: the task involves many variables, and it takes some creative thinking to pinpoint the features that actually matter for an accurate prediction. A recent competition on Kaggle (an online data science platform) featured housing price prediction with data from Ames, Iowa. Among other things, Iowa is known for its corn production, while Ames is known for Iowa State University. Ames is also home to Elwood, the tallest concrete garden gnome in the world.

Ours was a group new to Kaggle competitions. Kaggle had official rules about using additional outside data (data not provided in the Kaggle dataset), but one of the first things we chose to do was pull in outside data to help with our housing price predictions. This was, after all, first and foremost a project for the NYC Data Science Academy. It was also our first group project.

The Group

Allow me one paragraph in the first person on a project blog that the entire group contributed to. In a competition, the group matters, and the only group that matters is your own. I was not adamant about finding a group, but I did not want to be left to my own devices either. So that Friday after class I texted Mike Stukalo, saying there was a lot I could learn from him if we formed a group.

He replied, “Sure. Let’s be a group.” Anisha Luthra joined the following Monday. I had thoroughly enjoyed her previous web scraping project on drug ratings from webmd.com, and she was recruited in the elevator. Then I was pleasantly surprised when Mike announced our fourth and final team member, Lina Sprague, who would be a competitive advantage to any group. We now had a complete and awesome group: Lambda.

Now, back to the data.

Data

The Ames Housing dataset, compiled by Dean De Cock, originally served as an alternative to the classic “Boston Homes” dataset, which is well known to R and Python users. The Boston dataset has roughly 500 observations, far fewer than Ames: the Ames dataset contains nearly 3,000 homes, each described by 79 variables.

These variables range from “Overall Quality” and “First Floor Square Feet” to “Alley Access” and “Roof Style”. The dataset is a mix of categorical and quantitative variables. There were also many missing values, but this would not stump our team of data scientists. We decided to add even more data.

In addition to the Ames dataset, we gathered data on schools, unemployment rate, and the labor force in Ames. We also included Fannie Mae Mortgage Rates, the Dow Jones Real Estate Index (DJREI), and corn prices in our analysis.

It made sense to include corn prices because, as we all know, corn is what Iowa is known for. We included the labor market figures and mortgage rates (unemployment rate, labor force, and mortgage rates) because they are factors to consider when pricing a house. Unemployment and labor force figures vary from area to area and tend to correlate with overall income. Mortgage rates were an important consideration because buyers typically take out a mortgage to buy a home.

The DJREI provided an overview of the health of the real estate industry. When the index is strong, there is more incentive to invest in real estate. Schools (particularly public schools) were deemed important for housing prices because public school assignment is tied to specific districts and neighborhoods.

None of these variables were in the original dataset, but they could prove invaluable for predicting housing prices. We lagged each new feature by one month relative to the date a house was sold. People typically forecast future outcomes using the data available to them at the time.
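The one-month lag can be sketched in pandas as follows. This sketch assumes the outside data sits in a monthly table with hypothetical columns `year` and `month`, plus one column per indicator. `mortgage_rate` and its values here are made up. `YrSold` and `MoSold` are the sale-date columns in the Kaggle data. The function name `add_lagged_features` is illustrative and does not come from the team's repository.

```python
import pandas as pd


def add_lagged_features(houses: pd.DataFrame, monthly: pd.DataFrame,
                        lag_months: int = 1) -> pd.DataFrame:
    """Attach monthly indicators to each sale, lagged by `lag_months`.

    `monthly` is a hypothetical table with columns `year`, `month` and one
    column per indicator; `houses` uses the Kaggle YrSold/MoSold columns.
    """
    monthly = monthly.copy()
    # Shift each indicator's month forward so the value observed in month t
    # is matched to houses sold in month t + lag_months.
    monthly["ym"] = monthly["year"] * 12 + (monthly["month"] - 1) + lag_months
    monthly = monthly.drop(columns=["year", "month"])

    out = houses.copy()
    out["ym"] = out["YrSold"] * 12 + (out["MoSold"] - 1)
    # A left join keeps every house and the original row order.
    return out.merge(monthly, on="ym", how="left").drop(columns="ym")


houses = pd.DataFrame({"Id": [1, 2], "YrSold": [2008, 2009], "MoSold": [1, 6]})
monthly = pd.DataFrame({"year": [2007, 2008, 2009], "month": [12, 1, 5],
                        "mortgage_rate": [6.1, 5.8, 4.9]})  # illustrative values
lagged = add_lagged_features(houses, monthly)
print(lagged)  # the January 2008 sale picks up the December 2007 rate
```

Encoding each month as a single integer (`year * 12 + month - 1`) makes the lag a simple addition that rolls over year boundaries correctly.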

Imputing Missing Variables

Now that we had gathered and added data, the next step was to transform the entire dataset (train and test sets combined). This step was essential: the models we planned to use need complete, numeric inputs, and applying the same transformations to both sets keeps them consistent with each other.

To impute missing values, we first combined the train and test data. We then took time to understand what each “missing” value denoted. As explained in the dataset's data description, a missing value (NA) for Alley meant that the property had no alley access. It did not mean that the value was missing (not recorded), so we could safely replace those NAs with 'None'.
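The combine-then-fill step can be sketched with pandas as follows. This is a minimal sketch. The column list is an illustrative subset of Kaggle columns whose NA means "feature absent", not the team's exact list. The helper names and the sample rows are hypothetical.

```python
import numpy as np
import pandas as pd

# Columns whose NA means "this feature is absent", per the Kaggle data
# description. Illustrative subset only, not the team's exact list.
ABSENT_MEANS_NONE = ["Alley", "BsmtQual", "BsmtCond", "BsmtExposure",
                     "BsmtFinType1", "BsmtFinType2", "FireplaceQu",
                     "GarageType", "PoolQC", "Fence", "MiscFeature"]


def combine_train_test(train: pd.DataFrame, test: pd.DataFrame):
    """Stack train (minus the target) on top of test so both get identical treatment."""
    y = train["SalePrice"]
    all_data = pd.concat([train.drop(columns="SalePrice"), test], ignore_index=True)
    return all_data, y, len(train)  # len(train) lets us split them apart later


def fill_absent_with_none(all_data: pd.DataFrame, cols=ABSENT_MEANS_NONE) -> pd.DataFrame:
    """Replace NA with the string 'None' in columns where NA means 'absent'."""
    all_data = all_data.copy()
    for col in cols:
        if col in all_data.columns:
            all_data[col] = all_data[col].fillna("None")
    return all_data


# Made-up rows for illustration.
train = pd.DataFrame({"Id": [1, 2], "Alley": [np.nan, "Grvl"], "SalePrice": [200000, 150000]})
test = pd.DataFrame({"Id": [3, 4], "Alley": [np.nan, "Pave"]})
all_data, y, n_train = combine_train_test(train, test)
all_data = fill_absent_with_none(all_data)
print(all_data["Alley"].tolist())  # ['None', 'Grvl', 'None', 'Pave']
```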

Likewise, a missing value for basement features could be explained by the absence of a basement. Other missing values could be estimated from the rest of the data. For a missing quantitative variable such as LotFrontage, we grouped the observations by neighborhood and filled the gap with that neighborhood's median value.
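A neighborhood-median fill can be written with `groupby(...).transform("median")`. This is a sketch under the assumption that the columns are named `LotFrontage` and `Neighborhood`, as in the Kaggle data. The helper name and sample values are made up.

```python
import numpy as np
import pandas as pd


def impute_group_median(df: pd.DataFrame, col: str = "LotFrontage",
                        group: str = "Neighborhood") -> pd.DataFrame:
    """Fill NaNs in `col` with the median of `col` within each `group`."""
    df = df.copy()
    # transform("median") returns a Series aligned to df's rows, so each
    # missing value is matched with its own neighborhood's median (NaNs ignored).
    df[col] = df[col].fillna(df.groupby(group)[col].transform("median"))
    return df


homes = pd.DataFrame({"Neighborhood": ["NAmes", "NAmes", "NAmes", "CollgCr", "CollgCr"],
                      "LotFrontage": [60.0, 80.0, np.nan, 50.0, np.nan]})
print(impute_group_median(homes)["LotFrontage"].tolist())  # [60.0, 80.0, 70.0, 50.0, 50.0]
```

`transform` is used instead of `agg` because it returns one value per original row. That lets `fillna` line each median up with the right house without an explicit merge.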

For a missing categorical value, such as MSZoning or SaleType, we again grouped by neighborhood but used the mode as the replacement value. After confirming that no missing values remained, we converted all categorical variables into dummy/indicator variables using pandas.get_dummies() in Python 3.
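A neighborhood-mode fill followed by one-hot encoding can be sketched as follows. This is a hedged sketch: how to break ties between equally common categories is an assumption here (take the first mode), and so is the fallback to the overall mode when a whole neighborhood is missing the value. The post does not say how either case was handled. The helper name and sample rows are hypothetical.

```python
import numpy as np
import pandas as pd


def impute_group_mode(df: pd.DataFrame, col: str, group: str = "Neighborhood") -> pd.DataFrame:
    """Fill missing categories in `col` with the most common value in each `group`."""
    df = df.copy()

    def first_mode(s: pd.Series):
        modes = s.mode()  # NaNs are dropped; ties come back sorted
        return modes.iloc[0] if not modes.empty else np.nan

    filled = df[col].fillna(df.groupby(group)[col].transform(first_mode))
    # Fall back to the overall mode if a whole neighborhood has no observed value.
    df[col] = filled.fillna(df[col].mode().iloc[0])
    return df


homes = pd.DataFrame({"Neighborhood": ["NAmes", "NAmes", "NAmes", "OldTown", "OldTown"],
                      "MSZoning": ["RL", "RL", None, "RM", None],
                      "LotArea": [9000, 11000, 9500, 6000, 7500]})
homes = impute_group_mode(homes, "MSZoning")
# One 0/1 column per category of every object-typed column; numeric columns pass through.
encoded = pd.get_dummies(homes, dtype=int)
print(encoded.columns.tolist())
```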

Feature Engineering

For our response variable, SalePrice, we examined the distribution and found that it was right-skewed. We log-transformed SalePrice to make its distribution approximately normal, which would allow for more accurate predictions later on, particularly from the linear models.
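The transform and its inverse look like this with NumPy. The post only says "log," so using `np.log1p` (log(1 + x)) here is an assumption. The prices are made up to include one expensive outlier.

```python
import numpy as np
import pandas as pd

# Made-up, right-skewed sale prices (one expensive outlier).
prices = pd.Series([120_000, 135_000, 150_000, 160_000, 180_000, 210_000, 250_000, 755_000])

log_prices = np.log1p(prices)          # log(1 + SalePrice); the models fit this instead
print(round(prices.skew(), 2), round(log_prices.skew(), 2))  # the skew shrinks

# Predictions made on the log scale are mapped back to dollars with expm1.
back_to_dollars = np.expm1(log_prices)
```

A side benefit is that RMSE on the log1p scale is exactly RMSLE on the original scale, so minimizing squared error on the transformed target targets the competition's metric directly.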

A scatterplot also revealed some unwanted outliers. We treated values more than 5 standard deviations from the mean as outliers and replaced them with the mean. This let us keep each observation rather than remove it outright.
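The replace-with-mean rule can be expressed with pandas' `Series.mask`. This is a minimal sketch that assumes the mean and standard deviation are computed over the full column, including the outlier itself; the post does not specify this. The sample column is made up.

```python
import numpy as np
import pandas as pd


def replace_outliers_with_mean(s: pd.Series, n_std: float = 5.0) -> pd.Series:
    """Replace values more than `n_std` standard deviations from the mean with the mean."""
    mu, sd = s.mean(), s.std()
    is_outlier = (s - mu).abs() > n_std * sd
    # mask() swaps in `mu` wherever the condition is True and keeps the rest.
    return s.mask(is_outlier, mu)


living_area = pd.Series([1500.0] * 49 + [60000.0])  # one absurd value
cleaned = replace_outliers_with_mean(living_area)
print(cleaned.iloc[-1])  # the mean of the original column, outlier included
```

Because the outlier inflates both the mean and the standard deviation, a 5-SD rule only catches very extreme points, and the replacement value is pulled slightly upward by the point it replaces.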

We also examined the distribution and skewness of every numerical feature, treating a variable as skewed if its skew() value exceeded 0.75. There were 19 such variables in total, and we corrected the skew by applying a log(1 + x) transformation to each of them.
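The 0.75 threshold rule can be sketched as follows. In this sketch you would pass only the original numeric features, not the 0/1 dummy columns or SalePrice. The post does not say exactly which columns were included, so that choice is an assumption. The helper name and data are hypothetical.

```python
import numpy as np
import pandas as pd


def log1p_skewed(df: pd.DataFrame, threshold: float = 0.75):
    """Apply log(1 + x) to every numeric column whose skewness exceeds `threshold`."""
    df = df.copy()
    skewness = df.select_dtypes(include="number").skew()
    skewed_cols = skewness[skewness > threshold].index.tolist()
    for col in skewed_cols:
        df[col] = np.log1p(df[col])
    return df, skewed_cols


features = pd.DataFrame({"LotArea": [5000, 6000, 7000, 8000, 9000, 60000],
                         "OverallQual": [5, 6, 5, 6, 5, 6]})
transformed, skewed_cols = log1p_skewed(features)
print(skewed_cols)  # ['LotArea']
```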

Finally, we divided the observations back into their respective train set and test set.

Methodology

We used a total of four linear models, a Random Forest Regressor, and an XGB model to make predictions on the sale prices of homes in Ames, Iowa.

The "Training RMSLE" and "Validation RMSLE" results came from splitting the Kaggle train set into its own training and validation sets, using test_size=0.3 (a 30% validation set) in train_test_split(). The test set mentioned in Imputing Missing Variables was held back until final results testing.
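The split and the error metric can be sketched with scikit-learn and NumPy. The features and prices below are random placeholders standing in for the processed Ames train set. `rmsle` is a hand-written helper, not a library function.

```python
import numpy as np
from sklearn.model_selection import train_test_split


def rmsle(y_true, y_pred) -> float:
    """Root mean squared logarithmic error between actual and predicted prices."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.sqrt(np.mean((np.log1p(y_pred) - np.log1p(y_true)) ** 2)))


# Placeholder features and prices standing in for the processed Kaggle train set.
rng = np.random.default_rng(0)
X = rng.normal(size=(100, 5))
y = rng.uniform(100_000, 300_000, size=100)

# Hold out 30% of the train set as a validation set.
X_tr, X_val, y_tr, y_val = train_test_split(X, y, test_size=0.3, random_state=42)
print(X_tr.shape, X_val.shape)  # (70, 5) (30, 5)
```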

Random Forest

We used decision trees to model SalePrice. For the Random Forest Regressor, we performed cross-validation to find optimal pruning parameters (min_samples_split=10 and min_samples_leaf=2) for the final random forest model. The bar chart below shows the features with positive importance, along with a scatter plot of the predicted train set results.
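The cross-validated search over the two tree-size parameters can be sketched with scikit-learn's `GridSearchCV`. This runs on synthetic data. The grid values, `n_estimators=50`, and `cv=3` are assumptions chosen to keep the example small; the post reports only the winning values.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import GridSearchCV

# Synthetic stand-in for the processed Ames features and log prices.
X, y = make_regression(n_samples=200, n_features=8, n_informative=4,
                       noise=5.0, random_state=0)

# Cross-validate the two tree-size controls named in the post.
search = GridSearchCV(
    RandomForestRegressor(n_estimators=50, random_state=0),
    param_grid={"min_samples_split": [2, 10], "min_samples_leaf": [1, 2]},
    scoring="neg_root_mean_squared_error",
    cv=3,
)
search.fit(X, y)
best_rf = search.best_estimator_
print(search.best_params_)

# Impurity-based importances; keep the features with positive importance.
importances = best_rf.feature_importances_
positive_features = np.flatnonzero(importances > 0)
```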

XGBoost

For our XGB model, we used boosted trees (gradient boosting) to model the prices. In the graph on the left below, the x-axis is n_estimators (the number of boosting rounds) for our XGBRegressor, and the y-axis is the RMSE-mean value.
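The RMSE-versus-n_estimators curve can be traced as follows. To keep this sketch dependency-light, it swaps in scikit-learn's `GradientBoostingRegressor` for `XGBRegressor` and uses a single 30% validation split rather than cross-validation. It records validation RMSE after every boosting round via `staged_predict`. With xgboost installed, `xgboost.cv` with `metrics="rmse"` should produce the analogous per-round `test-rmse-mean` column. The data and hyperparameters here are illustrative.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split

X, y = make_regression(n_samples=300, n_features=10, noise=10.0, random_state=1)
X_tr, X_val, y_tr, y_val = train_test_split(X, y, test_size=0.3, random_state=1)

# Stand-in for XGBRegressor: same idea (boosted trees), different library.
gbr = GradientBoostingRegressor(n_estimators=300, learning_rate=0.05,
                                max_depth=3, random_state=1)
gbr.fit(X_tr, y_tr)

# staged_predict yields predictions after 1, 2, ..., n_estimators rounds.
rmse_by_round = np.array([np.sqrt(mean_squared_error(y_val, pred))
                          for pred in gbr.staged_predict(X_val)])
best_n = int(np.argmin(rmse_by_round)) + 1
print("lowest validation RMSE at n_estimators =", best_n)
```

Plotting `rmse_by_round` against `range(1, 301)` gives the kind of curve described above.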

Linear Regression Models - Lasso

For our first linear model, we fitted a Lasso regression model to the train set with all features included. The most important regression coefficients are plotted in the right-hand graph.
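A Lasso fit with a cross-validated penalty can be sketched with scikit-learn. Standardizing the features first and choosing alpha with `LassoCV` are assumptions here; the post does not say how the Lasso alpha was picked. The data are synthetic.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import LassoCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_regression(n_samples=200, n_features=20, n_informative=5,
                       noise=1.0, random_state=0)

# Standardize first so the L1 penalty treats every feature on the same scale,
# then let LassoCV choose alpha by 5-fold cross-validation.
lasso = make_pipeline(StandardScaler(), LassoCV(cv=5))
lasso.fit(X, y)

coefs = lasso.named_steps["lassocv"].coef_
top5 = np.argsort(np.abs(coefs))[::-1][:5]  # largest coefficients, e.g. for a bar chart
print("alpha:", lasso.named_steps["lassocv"].alpha_, "top features:", top5)
```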

Linear Regression Models – Ridge

We then did the same with a Ridge regression model, using cross-validation to find the optimal alpha. An alpha of 6 returned the lowest RMSE.
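A cross-validated choice of alpha can be sketched with scikit-learn's `RidgeCV`. The candidate grid is hypothetical; it simply includes 6 alongside other values. The data are synthetic, so the winning alpha here will not necessarily be 6.

```python
from sklearn.datasets import make_regression
from sklearn.linear_model import RidgeCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

X, y = make_regression(n_samples=200, n_features=20, noise=10.0, random_state=0)

alphas = [0.1, 1.0, 3.0, 6.0, 10.0, 30.0]  # hypothetical grid that includes 6
ridge = make_pipeline(
    StandardScaler(),
    RidgeCV(alphas=alphas, scoring="neg_root_mean_squared_error", cv=5),
)
ridge.fit(X, y)
best_alpha = ridge.named_steps["ridgecv"].alpha_
print("best alpha:", best_alpha)
```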

Linear Regression Models on Selected Features

The features our Random Forest Regressor selected earlier were used to build the following Lasso and Ridge regression models.
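One way to reuse the forest's selection is sketched below. It assumes "selected" means features with positive importance, as in the bar chart described earlier. The constant `always_zero` column mimics a dummy that never fires and therefore gets zero importance. The Lasso alpha of 0.1 is an illustrative assumption. The data and column names are made up.

```python
import numpy as np
import pandas as pd
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.linear_model import Lasso, Ridge

X_arr, y = make_regression(n_samples=200, n_features=6, n_informative=3,
                           noise=5.0, random_state=0)
X = pd.DataFrame(X_arr, columns=[f"feat_{i}" for i in range(6)])
X["always_zero"] = 0.0  # like a dummy column that never fires: zero importance

rf = RandomForestRegressor(n_estimators=100, min_samples_split=10,
                           min_samples_leaf=2, random_state=0).fit(X, y)
selected = X.columns[rf.feature_importances_ > 0]

# Refit the linear models on the selected columns only.
lasso_sel = Lasso(alpha=0.1).fit(X[selected], y)
ridge_sel = Ridge(alpha=6.0).fit(X[selected], y)
print(list(selected))
```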

Lasso on Selected Features

Ridge on Selected Features

As shown in the first chart of the Methodology section, the RMSLE results from our linear regression models using "selected features only" were quite similar to those from the two linear regression models with "all features included".

After creating all six models, we pickled them with Python's pickle module for later use.
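Pickling a set of fitted models can be sketched as follows. Storing them in one name-to-model dict is an assumption. The file name is hypothetical, and the single Ridge model stands in for all six.

```python
import pickle
import tempfile
from pathlib import Path

import numpy as np
from sklearn.linear_model import Ridge

# Stand-in for the six fitted models; a dict keeps each model's name with it.
models = {"ridge": Ridge(alpha=6.0).fit(np.array([[0.0], [1.0], [2.0]]),
                                        np.array([0.0, 1.0, 2.0]))}

path = Path(tempfile.gettempdir()) / "ames_models.pkl"  # hypothetical file name
with open(path, "wb") as f:
    pickle.dump(models, f)

# Later (for example, in the stacking notebook): load them back.
with open(path, "rb") as f:
    loaded = pickle.load(f)
```

Only unpickle files you trust, because loading a pickle can execute arbitrary code. It is also safest to reload scikit-learn models with the same library version that saved them.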

Stacking Models

Because this is a blog, we have arrived at the 'later use' stage immediately. This is where we load the pickled models and combine their predictive power. We stacked the six models, weighting each one by the inverse of its error on the validation set, to obtain our final prediction model.
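The inverse-error weighting can be sketched in NumPy. This is a minimal sketch that assumes predictions are averaged on the log-price scale and then mapped back with `expm1`. The model names, error values, and predictions are illustrative, not the team's actual results.

```python
import numpy as np


def inverse_error_weights(val_errors: dict) -> dict:
    """Weight each model by 1 / (validation error), normalized to sum to 1."""
    names = list(val_errors)
    inv = 1.0 / np.array([val_errors[n] for n in names], dtype=float)
    return dict(zip(names, inv / inv.sum()))


def blend(preds: dict, weights: dict) -> np.ndarray:
    """Weighted average of each model's predictions (on the log-price scale)."""
    return sum(weights[n] * np.asarray(preds[n], dtype=float) for n in weights)


# Illustrative numbers only, not the team's actual validation scores.
val_rmsle = {"lasso": 0.10, "ridge": 0.20}
log_preds = {"lasso": [12.0, 12.3], "ridge": [12.3, 12.0]}

w = inverse_error_weights(val_rmsle)  # lasso gets 2/3, ridge 1/3
final_log = blend(log_preds, w)       # approximately [12.1, 12.2]
final_prices = np.expm1(final_log)    # back to dollars
```

This is a fixed weighted average rather than a learned meta-model. Its appeal is that better-validated models automatically count for more without any extra fitting.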

Results

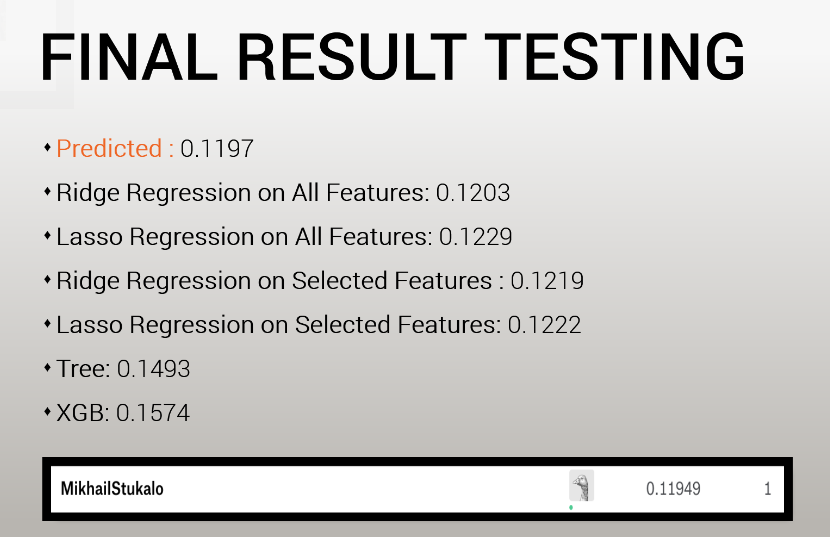

For our final results testing, we ran all of our prediction models on the test dataset provided by Kaggle. We also had the actual prices for the test dataset on hand to grade our own predictions.

In the end, Kaggle scored the test set predictions we submitted against its own hidden sale prices. Our final score was close to the Predicted RMSLE of 0.1197 (based on our final stacked prediction model).

For our housing price predictions, we rounded each price up to the nearest thousand. We rounded up for a practical reason: the evaluation metric (RMSLE) penalizes an underestimate more than an overestimate of the same size.
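Both the rounding rule and the asymmetry behind it can be checked with a few lines of standard-library Python. The helper names and prices are illustrative.

```python
import math


def round_up_to_thousand(price: float) -> int:
    """Round a predicted price up to the next multiple of 1,000."""
    return math.ceil(price / 1000.0) * 1000


def squared_log_error(actual: float, predicted: float) -> float:
    """One observation's contribution to RMSLE (before averaging and the square root)."""
    return (math.log1p(predicted) - math.log1p(actual)) ** 2


print(round_up_to_thousand(182_345.6))  # 183000

# The same $20,000 miss costs more when it is an underestimate.
under = squared_log_error(200_000, 180_000)
over = squared_log_error(200_000, 220_000)
print(under > over)  # True
```

On the log scale, a 10% shortfall is a larger step (about 0.105) than a 10% overshoot (about 0.095). That is why nudging predictions upward is the cheaper direction to err in.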

The final resulting RMSLE for our Kaggle submission was 0.11949, an excellent result for our first machine learning group project.

All of our code can be found stored on GitHub: https://github.com/MikeStukalo/Ames

Thanks!