Ames, Iowa - Predicting Housing Prices Based on Data


The skills the authors demonstrated here can be learned through taking Data Science with Machine Learning bootcamp with NYC Data Science Academy.

Introduction to Ames Housing Prices - Pt. 1

Our team worked on the Ames Housing Kaggle competition, using Machine Learning techniques to predict house sale prices in Ames, Iowa. Although the data contained a large number of predictor variables and Multiple Linear Regression provided useful insights, high multicollinearity meant we had to do extensive feature engineering and consider other linear and tree-based models.

Additionally, we implemented a gradient boosted decision tree model, XGBoost, in an attempt to improve the speed and performance of our model. The best results were achieved by a Penalized Linear Regression with Lasso, which earned a Kaggle score (Root Mean Squared Error, RMSE) of 0.12731, placing us in the top 23% of submissions.

Aside from the Kaggle competition objective, we sought to approach this project as if we were Data Science consultants for Real Estate companies who were interested in investing in rental properties in Ames, Iowa for ISU Students. In this case, our key stakeholders were companies such as Airbnb, Zillow, or Trulia, and/or Real Estate Developers who had an implicit interest in growing their Real Estate portfolio in a niche market.

Our main motivation was to provide actionable insight to our clients.

Introduction to Ames Housing Prices - Pt. 2

Before delving into the technical aspects of the project, here are some quick facts about Ames, Iowa to provide context:

- In 2018, Ames, Iowa had a population of 65.9k people, a median age of 23.3, and a median household income of $46,127

- There were 7.42 times more White (Non-Hispanic) residents (52.4k people) in Ames, IA than any other race or ethnicity. Also, there were 7.06k Asian (Non-Hispanic) and 2.15k Multiracial (Non-Hispanic) residents, the second and third most common ethnic groups.

- The largest universities in Ames, IA are Iowa State University (approximately 9k degrees awarded in 2017) and PCI Academy-Ames (78 degrees)

- The median property value in Ames, IA is $196,400 and the homeownership rate is 40.8%, which is lower than the national average of 63.9%

- The average car ownership in Ames, IA is 2 cars per household.

Source: https://datausa.io/profile/geo/ames-ia/#civics

Data on Ames Housing Prices

The data provided by Kaggle contained 80 variables, which we split by measurement type: Interval Scale (Quantitative), Categorical, or Ordinal. Quantitative features include lot frontage, the number of bathrooms, and the car capacity of garages.

Categorical features included the type of sale and zoning information, while features rating fireplace and basement quality from Poor to Excellent were treated as Ordinal. After categorizing the variables, our next step was feature engineering.

Feature Engineering

The two approaches used for Feature Engineering were as follows:

Approach I

The first approach involved a careful examination of each feature and its values. The value distributions were also studied to determine whether there were outliers or other abnormalities.

Many features appeared to be very closely related, so there was a high likelihood of multicollinearity between them. To reduce the total number of features before modeling, we removed the features that we considered redundant or uninformative.

Also, we converted some categorical and numerical features to binary. For example, instead of the fireplace quality, we were more interested in whether the house had any fireplaces.

Additionally, ordinal features were converted to numeric to take advantage of ranking patterns present in these features.

A log transformation was applied to features such as SalePrice and GrLivArea to reduce their inherent skewness.
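
The binary conversion and log transformation described above can be sketched roughly as follows. This is a minimal illustration on toy rows, not the project's actual code: the `Fireplaces` count column as the source of the indicator and the names `HasFireplace`, `LogSalePrice`, and `LogGrLivArea` are assumptions.

```python
import numpy as np
import pandas as pd

# Toy rows standing in for the Ames data (values are illustrative only).
df = pd.DataFrame({
    "Fireplaces": [0, 1, 2, 0],
    "GrLivArea": [850, 1710, 2198, 1262],
    "SalePrice": [105000, 208500, 250000, 181500],
})

# Binary indicator: does the house have at least one fireplace?
df["HasFireplace"] = (df["Fireplaces"] > 0).astype(int)

# Natural-log transform of the right-skewed, strictly positive columns.
for col in ["SalePrice", "GrLivArea"]:
    df[f"Log{col}"] = np.log(df[col])

# Predictions made on the log scale are mapped back to dollars with np.exp.
recovered_price = np.exp(df["LogSalePrice"])
```

When the target is modeled on the log scale, predictions have to be exponentiated before they can be read as prices.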

Thus, we only used the features that we selected and transformed for our models.

Approach II

In the second approach, we kept all existing features, engineered some additional ones, and used Lasso regression to determine the most important features. The features we engineered were created by combining related features.

These two approaches led to very different results, the latter being the more successful of the two. The following are details about the approaches and the comparisons of the results.

Workflow

Here’s the workflow we determined:

The key steps involved in our workflow included data cleaning, imputation of missing values as well as feature transforming and engineering.

We chose to merge train and test data for the cleaning procedure.

There is a risk of leakage when dummifying and imputing the missingness in concatenated train and test data. The reasons we thought it was not critical in this particular case are as follows:

- All possible categorical values were provided in the data description

- Imputing the missing categorical values with the most frequent class did not affect the test set as the categories were really imbalanced, and most frequent classes were the same in both sets.
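
One way to check the second point is to compare the most frequent class of each categorical column in the two sets. The sketch below uses toy frames; the helper `modes_agree` and the example values are hypothetical and only illustrate the idea.

```python
import pandas as pd

# Toy train/test frames; the category values are illustrative only.
train = pd.DataFrame({
    "MSZoning": ["RL", "RL", "RM", "RL", None],
    "SaleType": ["WD", "WD", "New", "WD", "WD"],
})
test = pd.DataFrame({
    "MSZoning": ["RL", "RM", "RL", None, "RL"],
    "SaleType": ["WD", None, "WD", "COD", "WD"],
})

def modes_agree(train_df, test_df, columns):
    """Map each column to True if its most frequent class matches in both sets."""
    result = {}
    for col in columns:
        train_mode = train_df[col].mode(dropna=True).iloc[0]
        test_mode = test_df[col].mode(dropna=True).iloc[0]
        result[col] = bool(train_mode == test_mode)
    return result

agreement = modes_agree(train, test, ["MSZoning", "SaleType"])
```

If any column came back `False`, imputing on the concatenated data could leak test-set information into training, and fitting the imputation on the training set alone would be the safer choice.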

Dealing with Outliers

In the scatter plot of SalePrice against GrLivArea, we can see some outlier observations: 2 very large houses sold for a cheap price, and 2 very large and expensive ones.

As the purpose was to predict regular sales prices, we selected all observations with GrLivArea less than 4000 SF.
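
In pandas, this filter is a one-line boolean mask. The rows below are illustrative values, not a copy of the dataset.

```python
import pandas as pd

# Toy training rows, including four very large houses (illustrative values).
train = pd.DataFrame({
    "GrLivArea": [1710, 4676, 5642, 2198, 4316, 4476],
    "SalePrice": [208500, 184750, 160000, 250000, 755000, 745000],
})

# Keep only observations with less than 4000 square feet of living area.
train_filtered = train[train["GrLivArea"] < 4000].reset_index(drop=True)
```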

Imputing Missing Values

For most of the features, N/A meant that the observation did not have the feature at all, so we imputed 0 for numerical features and “None” for categorical ones.

Other features had values that were genuinely missing. We treated this missingness as missing completely at random (MCAR) and imputed the most frequent value.

“GarageYrBlt” had 159 missing values where there was no garage; these missing years were imputed with the year the house was built.
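
The three imputation rules can be sketched as below. Which columns fall into which group is an assumption made for illustration (`PoolQC`, `MasVnrArea`, and `Electrical` are example choices); only the `GarageYrBlt` rule is taken directly from the text.

```python
import numpy as np
import pandas as pd

# Toy rows; NaN plays the role of N/A in the raw data.
df = pd.DataFrame({
    "PoolQC": [np.nan, "Gd", np.nan, np.nan],           # NaN means "no pool"
    "MasVnrArea": [196.0, np.nan, 0.0, 162.0],           # NaN treated as "no veneer"
    "Electrical": ["SBrkr", "SBrkr", np.nan, "FuseA"],   # genuinely missing
    "GarageYrBlt": [2003.0, np.nan, 1976.0, np.nan],     # NaN means "no garage"
    "YearBuilt": [2003, 1920, 1976, 1955],
})

none_cols = ["PoolQC"]      # absence of the feature -> "None"
zero_cols = ["MasVnrArea"]  # absence of the feature -> 0
mode_cols = ["Electrical"]  # truly missing -> most frequent value

df[none_cols] = df[none_cols].fillna("None")
df[zero_cols] = df[zero_cols].fillna(0)
for col in mode_cols:
    df[col] = df[col].fillna(df[col].mode().iloc[0])

# Missing garage years take the year the house was built.
df["GarageYrBlt"] = df["GarageYrBlt"].fillna(df["YearBuilt"])
```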

Converting Categorical Features

Many of the categorical features had a clear ranking pattern, so we converted them to ordinal features.
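
For the quality ratings, the conversion amounts to mapping each label to an integer rank. The sketch below assumes the dataset's Po/Fa/TA/Gd/Ex codes, with the imputed "None" mapped to 0; the exact numeric scale used in the project is not stated, so treat these values as an assumption.

```python
import pandas as pd

# Poor .. Excellent, with 0 reserved for "feature not present".
quality_scale = {"None": 0, "Po": 1, "Fa": 2, "TA": 3, "Gd": 4, "Ex": 5}

df = pd.DataFrame({
    "FireplaceQu": ["None", "TA", "Gd", "Ex"],
    "BsmtQual": ["Gd", "TA", "None", "Fa"],
})

# Replace each label with its rank so models can use the ordering.
for col in ["FireplaceQu", "BsmtQual"]:
    df[col] = df[col].map(quality_scale).astype(int)
```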

Creating New Features for Ames House Attributes

Some quantitative features were combined into totals:

- Total porch area

- Total full bathrooms

- Total half bathrooms

- Total rooms, excluding bedrooms

- Total floor area
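
A rough sketch of how such totals could be built is shown below. The source does not say exactly which columns went into each total, so the column choices and the new feature names here are assumptions (for instance, whether basement area counts toward floor area is not stated).

```python
import pandas as pd

# Two toy houses (illustrative values).
df = pd.DataFrame({
    "OpenPorchSF": [61, 0], "EnclosedPorch": [0, 112], "ScreenPorch": [0, 120],
    "FullBath": [2, 1], "BsmtFullBath": [1, 0],
    "HalfBath": [1, 0], "BsmtHalfBath": [0, 1],
    "TotRmsAbvGrd": [8, 6], "BedroomAbvGr": [3, 3],
    "1stFlrSF": [856, 1262], "2ndFlrSF": [854, 0],
})

# Sum the open, enclosed, and screened porch areas.
df["TotalPorchSF"] = df[["OpenPorchSF", "EnclosedPorch", "ScreenPorch"]].sum(axis=1)

# Count bathrooms above ground and in the basement together.
df["TotalFullBath"] = df["FullBath"] + df["BsmtFullBath"]
df["TotalHalfBath"] = df["HalfBath"] + df["BsmtHalfBath"]

# Rooms above ground that are not bedrooms.
df["RoomsNoBedrooms"] = df["TotRmsAbvGrd"] - df["BedroomAbvGr"]

# Floor area across the first and second floors.
df["TotalFloorSF"] = df["1stFlrSF"] + df["2ndFlrSF"]
```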

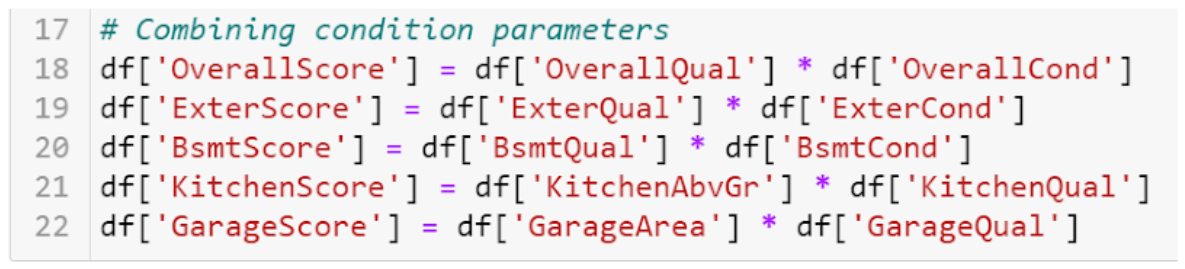

Several features were paired because they represented similar ideas, such as Overall Quality and Overall Condition, or the garage quality ratings. We thought these could be combined into new features that act as overall scores, and decided to take the product of the related features.
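
As a small illustration of the product idea, assuming the quality and condition ratings have already been converted to numbers (the names `OverallScore` and `GarageScore` are hypothetical):

```python
import pandas as pd

# Toy rows; garage ratings are assumed to be ordinal-encoded, 0 = no garage.
df = pd.DataFrame({
    "OverallQual": [7, 5], "OverallCond": [5, 8],
    "GarageQual": [3, 0], "GarageCond": [3, 0],
})

# Each score is the product of a quality rating and a condition rating.
df["OverallScore"] = df["OverallQual"] * df["OverallCond"]
df["GarageScore"] = df["GarageQual"] * df["GarageCond"]
```

A product rewards houses that rate well on both dimensions and collapses to 0 when the feature is absent.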

Skewed Feature Transformation

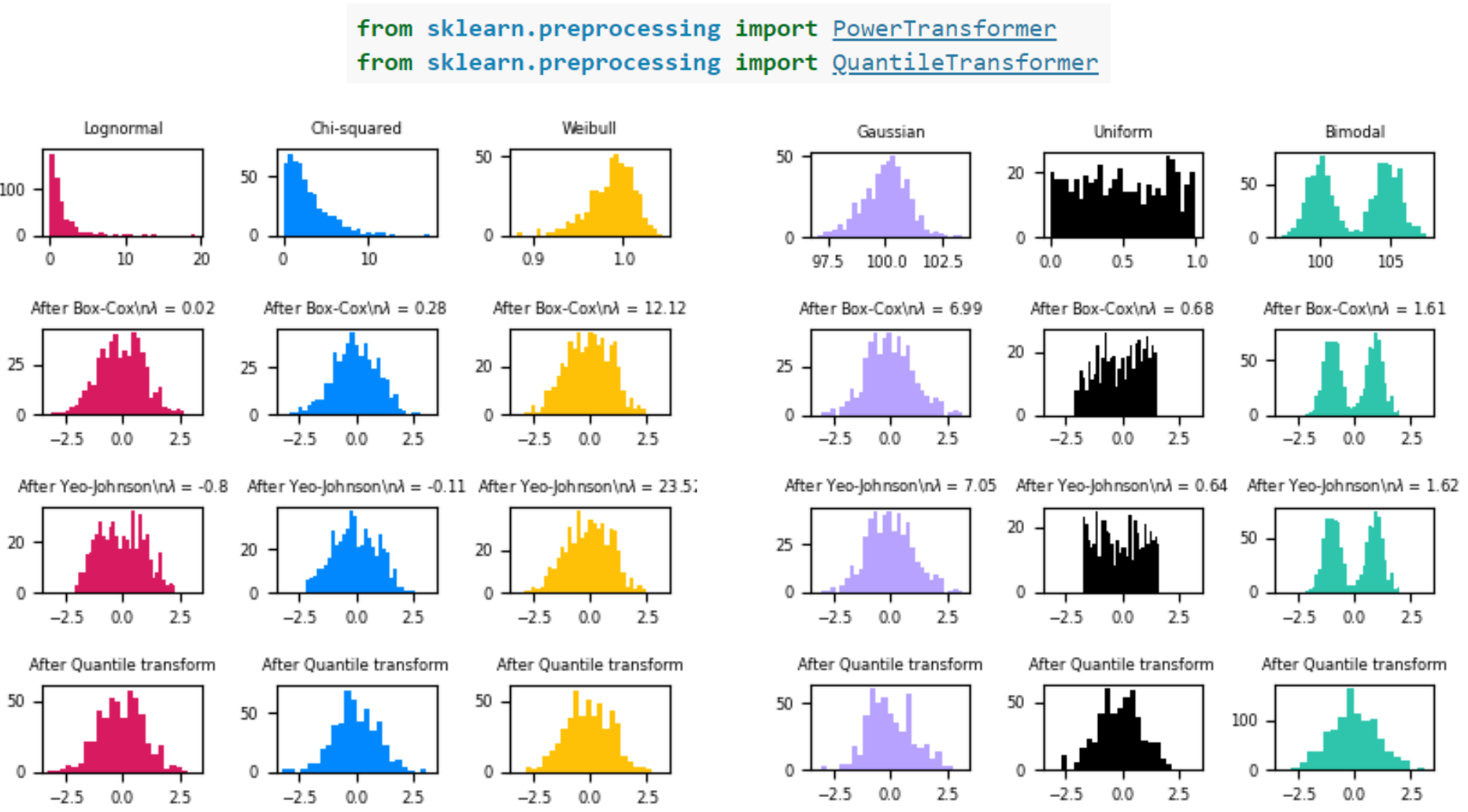

Most of the numerical feature distributions were skewed, so a logarithmic transformation needed to be applied.

We were concerned about the 0 values that were imputed as indicators of absence of the feature for a particular observation, but decided to test them nonetheless.

We also learned about other interesting transformations provided by the scikit-learn package that help normalize feature distributions.
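
The sketch below shows one common way to handle skewed columns that contain zeros, using synthetic data. `np.log1p` computes log(1 + x), which is defined at 0, and `PowerTransformer` is one of the scikit-learn transformers that targets normality. The source does not say which log variant or which transformer was used, and the 0.75 skewness threshold is an arbitrary choice for illustration.

```python
import numpy as np
import pandas as pd
from sklearn.preprocessing import PowerTransformer

rng = np.random.default_rng(0)

# Synthetic right-skewed features; about 40% of porch areas are 0 ("no porch").
df = pd.DataFrame({
    "LotArea": rng.lognormal(mean=9.0, sigma=0.5, size=500),
    "TotalPorchSF": np.where(rng.random(500) < 0.4, 0.0,
                             rng.lognormal(4.5, 0.6, 500)),
})

# Measure skewness and pick the columns above the (hypothetical) threshold.
skew_before = df.skew()
skewed_cols = skew_before[skew_before.abs() > 0.75].index

# log1p maps 0 to 0, so rows with an absent feature stay at 0.
df_log = df.copy()
df_log[skewed_cols] = np.log1p(df[skewed_cols])
skew_after_log = df_log.skew()

# Yeo-Johnson also accepts zeros and estimates its own power parameter.
pt = PowerTransformer(method="yeo-johnson", standardize=True)
df_yj = pd.DataFrame(pt.fit_transform(df), columns=df.columns)
```

Comparing `skew_before` with `skew_after_log` shows how much of the asymmetry each column loses.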

Exploratory Data Analysis on Ames Housing Prices

Prior to building a predictive model, we performed exploratory data analysis on our features to familiarize ourselves with their distributions. We checked whether continuous numerical features fit a normal distribution.

For categorical and ordinal features, we checked the distributions as well as the relative sizes of each class. This was particularly useful for the generation of new features, mentioned previously.

Below is an example of a distribution plot of home sale prices by one of the features, the overall quality of the home on a scale of 1-10. Lower-quality homes sell for less overall, while homes with an overall quality of 8 or above tend to be more expensive.

Model Evaluation on Ames Housing Prices

Multiple Linear Regression

The first technique we tried for predicting home sale prices was multiple linear regression. We expected many of our features to be highly multicollinear, meaning that they are strongly correlated with one another. Consequently, we were only able to include a small subset of our features, which, as expected, limited our predictive accuracy. Our strongest model could account for only 79.3% of the variation in house sale prices using either of the two feature engineering approaches.
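
A standard diagnostic for multicollinearity is the variance inflation factor (VIF), defined for feature j as 1 / (1 - R²_j), where R²_j comes from regressing feature j on all the other features. The source does not say which diagnostic was used, so the sketch below is only an illustration on synthetic data, with a hypothetical helper `variance_inflation_factors`.

```python
import numpy as np
from sklearn.linear_model import LinearRegression

rng = np.random.default_rng(0)
n = 300

# Synthetic features: first-floor area is built to track living area closely.
gr_liv_area = rng.normal(1500, 400, n)
first_flr_sf = 0.8 * gr_liv_area + rng.normal(0, 60, n)
lot_area = rng.normal(10000, 2500, n)  # unrelated to the other two

names = ["GrLivArea", "1stFlrSF", "LotArea"]
X = np.column_stack([gr_liv_area, first_flr_sf, lot_area])

def variance_inflation_factors(X):
    """VIF_j = 1 / (1 - R^2_j), regressing column j on the remaining columns."""
    vifs = []
    for j in range(X.shape[1]):
        others = np.delete(X, j, axis=1)
        r2 = LinearRegression().fit(others, X[:, j]).score(others, X[:, j])
        vifs.append(1.0 / (1.0 - r2))
    return np.array(vifs)

vif = dict(zip(names, variance_inflation_factors(X)))
```

Values near 1 indicate little overlap with the other predictors; a common rule of thumb treats values above roughly 5 to 10 as a sign of problematic collinearity.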

To include more of the original features in our predictions, we modeled a penalized linear regression using Lasso.

Lasso - Pt. 1

Lasso regression allowed us to include all of our features in the model. We explored a range of values for the penalization hyperparameter until we found the one that minimized over-fitting on our training set, using 10-fold cross-validation to do so.

As we increased the strength of our penalization on the model’s features, the number of features included in the model decreased.

As the strength of the penalization increased, our test set accuracy increased to a point, and then sharply began to decrease. We found the penalization value at which the test set accuracy was its highest, accounting for roughly 92% of the variation in house sale prices.
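
A search of this kind can be sketched with scikit-learn's `LassoCV`. The data here is synthetic, and the alpha grid, the train/test split, and the scaling step are assumptions rather than the project's actual settings.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import LassoCV
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in: 50 features, only 8 of which carry signal.
X, y = make_regression(n_samples=400, n_features=50, n_informative=8,
                       noise=10.0, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0)

# Candidate penalty strengths, searched with 10-fold cross-validation.
alphas = np.logspace(-3, 2, 30)
model = make_pipeline(StandardScaler(),
                      LassoCV(alphas=alphas, cv=10, max_iter=10000))
model.fit(X_train, y_train)

lasso = model.named_steps["lassocv"]
best_alpha = lasso.alpha_                   # penalty chosen by cross-validation
n_kept = int(np.sum(lasso.coef_ != 0))      # features with non-zero coefficients
test_r2 = model.score(X_test, y_test)       # R-squared on held-out data
```

Scaling the features first matters because the lasso penalty acts on coefficient size, which depends on each feature's units.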

Overall, our lasso models were quite successful, particularly in comparison to the multiple linear regression models. Using feature engineering Approach 1, the test R-squared was 0.8894 (accounting for 88.94% of the variation in sale prices). For Approach 2, the test R-squared was 0.9301 (accounting for 93.01% of the variation in sale prices).

| Feature Engineering Approach 1 score | Feature Engineering Approach 2 score |
| --- | --- |
| 0.8894 | 0.9301 |

Using lasso allowed us to gauge the importance of each feature. The lasso penalty shrinks the magnitude of the features’ coefficients as the penalization parameter increases. At a strong enough penalization value, all coefficients eventually shrink to 0. Features whose coefficients have shrunk to 0 are effectively excluded from the predictive model. Using these penalized coefficients, we were able to identify which variables were the most important in predicting sale prices.
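
The shrinking behavior is easy to observe by fitting the model at increasing penalty values and counting the coefficients that remain non-zero. The sketch below uses synthetic data and an arbitrary set of alpha values; ranking features by absolute coefficient is one simple reading of "importance" and assumes the features are on a common scale.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Lasso
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in: 20 standardized features, 5 of which carry signal.
X, y = make_regression(n_samples=300, n_features=20, n_informative=5,
                       noise=5.0, random_state=1)
X = StandardScaler().fit_transform(X)

# Count surviving coefficients as the penalty grows.
penalties = [0.1, 1.0, 10.0, 100.0, 1000.0]
nonzero_counts = []
for alpha in penalties:
    coef = Lasso(alpha=alpha, max_iter=10000).fit(X, y).coef_
    nonzero_counts.append(int(np.sum(coef != 0)))

# Rank features by absolute coefficient at a moderate penalty.
coef = Lasso(alpha=1.0, max_iter=10000).fit(X, y).coef_
ranking = np.argsort(-np.abs(coef))  # feature indices, most important first
```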

Lasso - Pt. 2

As can be seen in the figure above, general living area square footage is the most important feature by a wide margin. Some of the other substantive features (as determined by Lasso) include 1st floor square footage; overall lot area (in square feet); overall quality of the home (on a scale of 1-10); the car capacity of the home’s garage (0 if the home has no garage); and the quality of the home’s basement.

There were 9 features in total that we had engineered. Their feature importances are seen in the figure below. Of those, four were highly important in the lasso model. These included: the home’s number of half bathrooms; the home’s total square footage on all of its floors; a metric of the overall home score derived from the home’s quality and condition ratings; and a metric of overall basement score derived from the basement’s quality and condition ratings.

Three features were considered moderately important and slightly contributed to the lasso model. These were: two metrics derived from the quality and condition of the kitchen and garage, respectively; and a metric of total porch area (including open, enclosed, and screened porch area). There also were two features that the lasso regression did not consider useful for the prediction of sales prices: total number of full bathrooms in the home; and a metric derived from the quality and condition of the home’s exterior.

Decision Tree Ensembles

Random Forest

In addition to penalized linear regression, we also tried the Random Forest algorithm because it is flexible, easy to implement, and often produces good results even without hyperparameter tuning. Its nonlinear nature made it interesting to test on our data. However, one limitation of the algorithm is that its predictions are averages of previously observed labels, so it cannot predict values outside the range seen in training. This becomes problematic when the training and testing data have different ranges and distributions.
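
This limitation can be demonstrated on synthetic data: a forest trained on houses up to 3000 square feet cannot predict a price above the highest price it saw, no matter how large the new house is. The price rule and all values below are made up for illustration.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

rng = np.random.default_rng(0)

# Synthetic training data: price grows linearly with area, plus noise.
X_train = rng.uniform(500, 3000, size=(300, 1))
y_train = 100.0 * X_train[:, 0] + rng.normal(0, 5000, 300)

forest = RandomForestRegressor(n_estimators=100, random_state=0)
forest.fit(X_train, y_train)

# A house twice as large as anything in the training set.
X_new = np.array([[6000.0]])
prediction = forest.predict(X_new)[0]

# The linear rule would give 600,000, but the forest is capped by the
# largest label it observed during training.
largest_seen = y_train.max()
```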

Applying Random Forest to the feature sets generated by the two approaches described previously, we obtained the results shown in the table below. The scores denote the amount of variation in sale prices that the method accounts for.

| Feature Engineering Approach 1 score | Feature Engineering Approach 2 score |
| --- | --- |
| 0.87485 | 0.90442 |

To improve the score, we applied grid search over hyperparameters such as maximum depth, maximum features, number of estimators, minimum samples per leaf, and maximum samples per fit. However, grid search did not improve upon these results.
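
A grid search of this kind might look like the sketch below, which uses scikit-learn's `GridSearchCV` on synthetic data. The grid values and the 3-fold setting are small placeholders chosen so the example runs quickly, and reading "maximum sample fit" as scikit-learn's `max_samples` parameter is an assumption.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import GridSearchCV

# Synthetic stand-in for the engineered feature matrix.
X, y = make_regression(n_samples=200, n_features=10, n_informative=5,
                       noise=10.0, random_state=0)

# Placeholder grid over the hyperparameters named in the text.
param_grid = {
    "n_estimators": [25, 50],
    "max_depth": [None, 8],
    "max_features": ["sqrt", 1.0],
    "min_samples_leaf": [1, 3],
    "max_samples": [None, 0.8],
}

search = GridSearchCV(RandomForestRegressor(random_state=0),
                      param_grid, cv=3, scoring="r2")
search.fit(X, y)

best_params = search.best_params_  # combination with the best mean CV score
best_cv_r2 = search.best_score_    # mean cross-validated R-squared
```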

XGBoost

We then tried to improve the score with a gradient boosting algorithm called XGBoost (eXtreme Gradient Boosting). XGBoost is an ensemble learning method that trains and combines individual models to produce a single prediction. Boosting relies on weak base learners, each fitted to the error remaining after the learners before it, so that when their predictions are combined, the individual errors tend to cancel out and the sum forms a good prediction.
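
The core idea of fitting each learner to the remaining error can be sketched with plain gradient boosting for squared error, built from shallow scikit-learn trees. This is not XGBoost itself, which adds regularization and other refinements; the data, learning rate, and number of rounds are arbitrary illustrative choices.

```python
import numpy as np
from sklearn.tree import DecisionTreeRegressor

rng = np.random.default_rng(0)

# Synthetic nonlinear target.
X = rng.uniform(0, 10, size=(400, 1))
y = 50 * np.sin(X[:, 0]) + 0.5 * X[:, 0] ** 2 + rng.normal(0, 2, 400)

learning_rate = 0.1
n_rounds = 100

# Start from the mean, then repeatedly fit a weak tree to the residuals.
prediction = np.full_like(y, y.mean())
trees = []
mse_per_round = []
for _ in range(n_rounds):
    residual = y - prediction                      # error still unexplained
    tree = DecisionTreeRegressor(max_depth=2, random_state=0)
    tree.fit(X, residual)
    prediction += learning_rate * tree.predict(X)  # small corrective step
    trees.append(tree)
    mse_per_round.append(float(np.mean((y - prediction) ** 2)))
```

Training error falls with every round, which is also why boosted models can overfit if the number of rounds and tree depth are not kept in check.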

Even after tuning the hyperparameters with grid search, this algorithm did not perform better than Lasso regression or Random Forest. This could be due to the feature engineering. We also observed overfitting that did not improve after grid search. The table below shows the performance of XGBoost with the two feature engineering approaches.

| Feature Engineering Approach 1 score | Feature Engineering Approach 2 score |
| --- | --- |
| 0.85421 | 0.88957 |

Conclusion About Ames Housing Price Predictions

In conclusion, the key takeaways from the project were as follows:

- Understanding the data and being creative with feature engineering is important for accurate predictions as can be observed from our model performances.

- Lasso regression yielded our most accurate model, with a test R-squared of 0.9301 under feature engineering Approach 2, allowing for close estimation of housing prices in Ames, Iowa.

- With the current setup, Random Forest performs better than XGBoost when trained on the features we produced. However, there is room to improve tuning and regularization to prevent overfitting.

Future Work on Ames Housing Prices

We identified some potential next steps to improve and develop the project further. One addition that could benefit the project would be a web-based application that suggests neighborhoods based on desired housing parameters and purpose. Furthermore, the existing models can be tuned further and new models can be explored to improve accuracy.