Analyzing Data To Predict Housing Prices in Ames, Iowa

The skills I demonstrated here can be learned through the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

GitHub Repo | LinkedIn

Introduction and Objective Statement

In this project I will be analyzing house prices for the town of Ames, Iowa, using a data set provided for a Kaggle competition. This dataset contains 1,460 observations and 80 features that capture many aspects involved in predicting the market value of a house.

The objective of this analysis is to generate value to a company by providing house price predictions that can be used in a return on investment analysis. That could be useful for real estate groups that want to make better decisions about property investments, for example. The machine learning tools used in this analysis are very versatile and can be used in a wide range of prediction applications. The models used include: regularized multiple linear regressions (ridge and lasso), support vector machines (RBF kernel), and decision tree ensembles (random forest and XGBoost).

Exploratory Data Analysis

Before starting to create our models, it’s important to become acquainted with the data and its structure. The objective is to help identify obvious errors, better understand patterns within the data, detect outliers or anomalous events, and find interesting relations among the variables. This helps ensure our models are robust and correctly represent reality. It also helps confirm that we are asking the right questions for our stakeholders’ interests.

When looking at the variables and their description file, I noticed there are three distinct categories: numerical (quantitative), categorical nominal, and categorical ordinal. The numerical variables include lot area, number of bathrooms and bedrooms, among others. Nominal features include zoning, type of street, etc. Ordinal features include overall condition, overall quality, and others.

Exploring the Target Variable Data

In this project our target will be the Sale Price of the property. First, I reviewed the histogram of the distribution of prices.

We notice a strong right skew in sale prices, which can create issues with our models. To address that, I applied a log transformation to bring the distribution closer to normal, which can help deal with extreme values and improve predictions.
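A minimal sketch of this transformation, using synthetic prices rather than the real data. The choice of `np.log1p` (instead of a plain `np.log`) and the generated values are assumptions made for illustration only.

```python
import numpy as np
import pandas as pd

# Synthetic right-skewed prices standing in for the real sale prices
rng = np.random.default_rng(0)
prices = pd.Series(rng.lognormal(mean=12.0, sigma=0.4, size=1000), name="SalePrice")

# log(1 + x); np.expm1 reverses it exactly
log_prices = np.log1p(prices)

skew_before = prices.skew()
skew_after = log_prices.skew()
print(f"skew before: {skew_before:.2f}, after: {skew_after:.2f}")
```

Comparing the skew before and after is a quick numeric check that complements the histogram.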

I also discovered some outliers when comparing the above ground living area with the log of the sale price.

These outliers did not make much sense because this variable is very important in real estate and they had a very different relationship than the other homes. Doing some research I found that according to the statistics professor who originally supplied the housing data, the outliers are “Partial Sales that likely don't represent actual market values,” so I will exclude them.
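A filter of this kind could look like the sketch below. The column names `GrLivArea` and `SalePrice` are assumed to follow the Kaggle data; the rows and both cutoffs are hypothetical, chosen only to illustrate the idea, and are not the values used in the project.

```python
import pandas as pd

# Hypothetical rows: the last two are very large homes with unusually low prices
homes = pd.DataFrame({
    "GrLivArea": [1200, 1710, 2200, 4676, 5642],
    "SalePrice": [140000, 208500, 250000, 184750, 160000],
})

AREA_CUTOFF = 4000      # hypothetical living-area threshold (sq ft)
PRICE_CUTOFF = 300000   # hypothetical price threshold (US$)

# Flag homes that are very large but sold far below what their size suggests
is_outlier = (homes["GrLivArea"] > AREA_CUTOFF) & (homes["SalePrice"] < PRICE_CUTOFF)
cleaned = homes.loc[~is_outlier].reset_index(drop=True)
print(f"removed {int(is_outlier.sum())} rows, kept {len(cleaned)}")
```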

Exploring the Data on Overall Quality and Condition

I also wanted to get an idea of how the quality and condition ratings affected the sale price. The first term rates the overall quality of the material and finish of the house; the second one gives its condition or how well it is preserved.

We can see that for quality there is a clear linear relationship with price. For condition, the relationship is not as clear, though it does seem to affect price, especially for lower ratings.

Exploring Zoning Classification

I also wanted to take a look at the distribution of houses sold by zoning classification so we can get a better idea of what type of market this is. It seems the vast majority are Residential Low (RL) with 78.8% of all properties, followed by Residential Medium (RM) at 15.0%, and some Floating Village Residential (FV) at 4.5%. We also get an idea of the price distribution based on the classification.

Bedrooms and Bathrooms Data

Since the number of bedrooms and bathrooms is a key metric in residential real estate transactions, I wanted to get an idea of how these features were distributed in this dataset.

As we expected, there is a cluster of values between 2 and 4 bedrooms and 1 and 2 full bathrooms with some outliers.

Neighborhoods Data

Analyzing the price by neighborhood reveals some clear relationships. Some had a very narrow price spread while others such as StoneBr and Somerst had a large variation.

Missing Data and Imputation

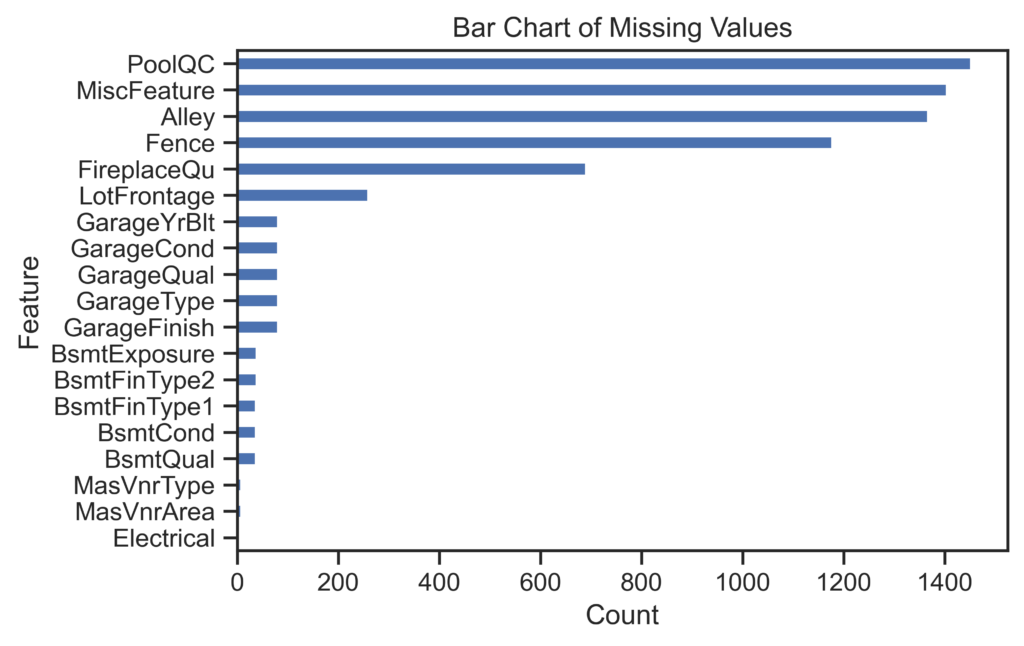

In this section our objective is to detect missing data and find a way to deal with it. Imputation was applied when it was possible. When not, other adequate solutions had to be analyzed case by case.

By looking at the data description file, I could infer that most of the missing values are because the characteristic does not apply. For example, there is no pool quality score because there is no pool. I tested that by pulling all missing quality scores for houses with pools and found it was empty.

In this case it makes sense to assign missing categorical values to a new category called 'None' and missing numerical values to 0. This represents the characteristic more accurately.

After doing this I still had one feature which has missing values: lot frontage. It would not make sense for it to be missing because all lots must have some area that is adjacent to the street. In this case there is no ideal solution. One option is to delete the column, but that would cause the information in it to be lost. Since the missing values are only 17.7% of all values I opted not to do that. The other two options are to impute them or to leave them. Leaving them would not allow some models to work, so I chose to impute them with the median value for that category.
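The two imputation strategies can be sketched as follows. The toy rows are made up, and grouping lot frontage by `Neighborhood` is an assumption for illustration, since the grouping category is not named above.

```python
import numpy as np
import pandas as pd

# Toy rows; column names are assumed to follow the Kaggle data
homes = pd.DataFrame({
    "Neighborhood": ["NAmes", "NAmes", "NAmes", "StoneBr", "StoneBr"],
    "PoolQC": [np.nan, "Gd", np.nan, np.nan, np.nan],
    "GarageArea": [480.0, np.nan, 520.0, 700.0, 650.0],
    "LotFrontage": [60.0, np.nan, 80.0, 100.0, np.nan],
})

# "Feature does not apply" cases: a new category for text columns, zero for numeric ones
homes["PoolQC"] = homes["PoolQC"].fillna("None")
homes["GarageArea"] = homes["GarageArea"].fillna(0)

# Lot frontage: fill each gap with the median of its group (grouping column is an assumption)
group_median = homes.groupby("Neighborhood")["LotFrontage"].transform("median")
homes["LotFrontage"] = homes["LotFrontage"].fillna(group_median)

print(homes)
```

Using `transform("median")` keeps the result aligned with the original rows, so it can be passed directly to `fillna`.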

Feature Engineering

This part is as much art as it is science, with endless possibilities. I did my best to create a comprehensive list of new features from the raw data that can potentially enhance our models' performance.

Using domain knowledge and the information from the exploratory data analysis, I have come up with some ideas of new variables to create.

The following is a list of all the new features created:

Dummifying and Train/Test Split

Dummifying helps our linear models perform correctly, although it is not strictly necessary for our tree-based models. We dummify before doing the train/test split because we see no risk of data leakage: making dummies applies a fixed transformation and does not learn any parameters from the data.

We tried two versions for the linear models: a dummified version that preserves most ordinal categories and another where all categorical variables are dummified with one hot encoding. Both choices have pros and cons. When we preserve the value of the ordinal predictors, we keep that information but the model will interpret the distance from each value to the next as having equal magnitude, which is most often not the case.

For the tree-based models, I used the LabelEncoder class from scikit-learn's preprocessing module, which maps each category to an integer code.

Finally, I did a train/test split on the data, randomly selecting 20% of the observations for the test set, which will be used as the final benchmark to test the performance of the different models.
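As a rough sketch of the one-hot version followed by the split: the toy data, the `drop_first=True` setting, and the random seed are all assumptions for illustration, not the project's actual settings.

```python
import pandas as pd
from sklearn.model_selection import train_test_split

# Toy data; column names are assumed to follow the Kaggle data
homes = pd.DataFrame({
    "MSZoning": ["RL", "RM", "RL", "FV", "RL", "RM", "RL", "RL", "FV", "RL"],
    "OverallQual": [5, 6, 7, 8, 5, 4, 6, 7, 9, 5],
    "SalePrice": [140000, 120000, 210000, 260000, 135000,
                  98000, 175000, 215000, 320000, 142000],
})

# One-hot encode the nominal column; drop_first avoids perfectly collinear dummies
X = pd.get_dummies(homes.drop(columns="SalePrice"), columns=["MSZoning"], drop_first=True)
y = homes["SalePrice"]

# Hold out 20% of the observations (rows) as the final test set
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=42)
print(X.columns.tolist(), len(X_train), len(X_test))
```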

Transforming Skewed Variables

In order to reduce the probability of violating some key assumptions of linear regression (linearity, normality, and constant variance), I applied a Yeo-Johnson transformation to features with a high skew to make their distributions more Gaussian-like. Although this does not guarantee a complete fix for those issues, it will likely improve them. Another benefit is that it can help strengthen the linear relationship and thus its prediction capabilities. However, I must note that this could lead to more overfitting and a loss of interpretability.

These next graphs show some examples of the transformations done:

From the graphs we can see that some features, such as 1stFlrSF and LotFrontage, had an adequate transformation. However, some features that had many zeroes or sparse values had non-optimal shapes post transformation. I decided it was still worth it to keep the transformation. The value gained from the proper transformations could be helpful, and the non-optimal ones would not be really harmful.
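A Yeo-Johnson transformation of this kind can be sketched with scikit-learn's `PowerTransformer`. The synthetic column, the skew threshold of 0.75, `standardize=False`, and fitting on the training rows only are all assumptions made for this example.

```python
import numpy as np
import pandas as pd
from sklearn.preprocessing import PowerTransformer

# Synthetic right-skewed feature standing in for a real column
rng = np.random.default_rng(1)
X_train = pd.DataFrame({"LotFrontage": rng.lognormal(4.2, 0.5, 400)})
X_test = pd.DataFrame({"LotFrontage": rng.lognormal(4.2, 0.5, 100)})

SKEW_THRESHOLD = 0.75  # hypothetical cutoff for "high skew"
skewed_cols = [c for c in X_train.columns if abs(X_train[c].skew()) > SKEW_THRESHOLD]

# Learn the Yeo-Johnson lambdas on the training rows, then reuse them on the test rows
pt = PowerTransformer(method="yeo-johnson", standardize=False)
X_train_t = X_train.copy()
X_test_t = X_test.copy()
X_train_t[skewed_cols] = pt.fit_transform(X_train[skewed_cols])
X_test_t[skewed_cols] = pt.transform(X_test[skewed_cols])

print(X_train["LotFrontage"].skew(), X_train_t["LotFrontage"].skew())
```

Unlike dummification, this step learns a parameter per feature from the data, which is why the sketch fits it on the training rows only.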

Modeling

In this section I will show the methodology and results of different machine learning models.

Multiple Linear Regression

My first intuition when approaching this problem was that a linear model could do particularly well even though it's relatively simple, because the underlying explanations that set the value of a house are driven mostly by linear decisions made by humans. For example, square footage, number of rooms, and location tend to have a strong linear impact on a home’s value because they are some of the most important features for prospective buyers.

The first model I tested was a multiple linear regression. Although I expected to have an issue with overfitting due to the large number of predictors, I wanted to get an idea of the results. The R-squared came at 0.96 for the train dataset and 0.87 for the test set, which indicates high overfitting, as expected.

In order to deal with this issue I applied regularization using both Ridge and Lasso, which add a penalty term to the loss function being minimized. The penalty shrinks the coefficients of features that contribute little to the prediction.
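To illustrate the effect of the two penalties, here is a small sketch on synthetic data. The dataset and the penalty strengths are arbitrary assumptions; note that scikit-learn calls the Lambda hyperparameter `alpha`.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Lasso, LinearRegression, Ridge

# Synthetic data: 50 features, only 8 of which truly drive the target
X, y = make_regression(n_samples=200, n_features=50, n_informative=8,
                       noise=25.0, random_state=0)

ols = LinearRegression().fit(X, y)
ridge = Ridge(alpha=50.0).fit(X, y)   # squared (L2) penalty shrinks all coefficients
lasso = Lasso(alpha=5.0).fit(X, y)    # absolute (L1) penalty can set coefficients to zero

ols_norm = np.linalg.norm(ols.coef_)
ridge_norm = np.linalg.norm(ridge.coef_)
lasso_zeroed = int(np.sum(lasso.coef_ == 0))
print(f"coefficient norm, OLS: {ols_norm:.1f}, ridge: {ridge_norm:.1f}")
print(f"coefficients set to zero by lasso: {lasso_zeroed} of {X.shape[1]}")
```

Ridge keeps every feature but with smaller weights, while Lasso tends to drop uninformative features entirely, which also makes it a feature selection tool.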

Ridge Data

As mentioned before, we have two dummified datasets. We tested a range of values for the hyperparameter Lambda on both to see which produced the best results in the test set. The following is the graph of said results:

For the ridge models, the results were almost the same for both dummy versions, reaching a test set R-squared of 0.897 when using a Lambda value of 0.362.

When looking at the most important features based on the beta value we see that they are categorical values.

Some of the features included are if the house is severely damaged (Functional_Sev) or if the sale type was warranty deed with cash (SaleType_CWD). Since we only did a normalization and not a standardization of features, it is reasonable to expect that important predictors that have a much larger standard deviation, such as total square feet, are not shown.
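The Lambda search described in this section could be sketched as below, again on synthetic data. The grid of values is an assumption, and scikit-learn's `alpha` plays the role of Lambda. The sketch mirrors the text by scoring each value on the test set.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Ridge
from sklearn.model_selection import train_test_split

X, y = make_regression(n_samples=300, n_features=60, n_informative=10,
                       noise=20.0, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=0)

# Try a logarithmic grid of penalty strengths and record the test R-squared of each
alphas = np.logspace(-3, 3, 13)
scores = []
for alpha in alphas:
    model = Ridge(alpha=alpha).fit(X_train, y_train)
    scores.append(model.score(X_test, y_test))

best_alpha = alphas[int(np.argmax(scores))]
best_model = Ridge(alpha=best_alpha).fit(X_train, y_train)

# Rank features by absolute coefficient size (only comparable if features share a scale)
top_features = np.argsort(np.abs(best_model.coef_))[::-1][:5]
print(f"best alpha: {best_alpha:.3g}, test R-squared: {max(scores):.3f}")
```

A common alternative is to pick the penalty with cross-validation on the training data (for example with `RidgeCV`), which keeps the test set untouched for the final comparison.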

Lasso Data

Next, I tested several values of Lambda on a Lasso model and got the following results:

Again the results for the two dummified sets were similar, though this time not identical. The second Lasso model, which discards the ordinal values and just uses one-hot encoding for all categorical features, performed slightly better, with an R-squared of 0.896 at a Lambda value of 0.362.

Again the most important predictors were some categorical features as seen in the next graph:

Support Vector Machines

I also tried training a support vector regressor using a radial basis function kernel that can capture complex non-linear decision boundaries.

I did some hyperparameter tuning using 5-fold cross-validation and ended with a C (regularization parameter) of 147, a gamma of 9e-9, and an epsilon (the width of the error-insensitive margin) of 0.1. The overall result was not impressive, with a test R-squared of 0.668. However, this model might still have some predictive power that other models lack that could prove useful in a stacked model.
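A tuning loop of this kind can be sketched with `GridSearchCV`. The synthetic data, the small parameter grid, and the `StandardScaler` step are assumptions for illustration and not the search that was actually run.

```python
import numpy as np
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVR

# Synthetic non-linear target
rng = np.random.default_rng(0)
X = rng.uniform(-3, 3, size=(200, 2))
y = np.sin(X[:, 0]) + 0.5 * X[:, 1] ** 2 + rng.normal(0, 0.1, 200)

# Scaling inside the pipeline means each CV fold is scaled using its own training part
pipe = make_pipeline(StandardScaler(), SVR(kernel="rbf"))
param_grid = {
    "svr__C": [1, 10, 100],
    "svr__gamma": [0.01, 0.1, 1.0],
    "svr__epsilon": [0.01, 0.1],
}

search = GridSearchCV(pipe, param_grid, cv=5, scoring="r2")
search.fit(X, y)
print(search.best_params_, round(search.best_score_, 3))
```

RBF kernels are sensitive to feature scale, so a very small tuned gamma may be a sign that some features have large raw magnitudes; scaling the features first is one option worth testing.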

Random Forest

Next I tried a random forest model, which is normally quite effective despite its simplicity. The advantage of tree ensembles over our linear model is that they can better capture non-linear relationships.

In particular, a random forest trains many decision trees independently, each on a random sample of the data, and uses them in parallel, which means each tree has an input into the decision as if it were a committee casting a vote (for regression, the tree predictions are averaged). The theory behind this is that the random noise in individual trees will tend to cancel out, leaving only the signal.
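The committee idea can be checked directly in scikit-learn: for regression, the forest's prediction is the mean of its trees' predictions. The synthetic data and the settings below are assumptions for illustration.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor

X, y = make_regression(n_samples=200, n_features=10, noise=10.0, random_state=0)
forest = RandomForestRegressor(n_estimators=50, random_state=0).fit(X, y)

# Ask every tree for its own prediction on the first five rows
per_tree = np.stack([tree.predict(X[:5]) for tree in forest.estimators_])

# Averaging the individual trees reproduces the forest's output
manual_average = per_tree.mean(axis=0)
forest_prediction = forest.predict(X[:5])
print(np.allclose(manual_average, forest_prediction))
```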

I did some hyperparameter tuning with 5-fold cross-validation and was able to get a test R-squared of 0.893 with the following feature importance:

XGBoost

Finally, I trained an XGBoost model, which is considered more complex than a random forest predictor. Although both are tree ensembles, the XGBoost model uses a sequential system, meaning each tree tries to explain the error left by the previous trees. It also has several additional hyperparameters, such as a learning rate that helps prevent overfitting by limiting the weight of each tree, and regularization parameters that can prune individual trees, among others.
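The sequential idea can be illustrated with a hand-rolled boosting loop. This is plain gradient boosting for squared error built from scikit-learn trees, not XGBoost itself, which adds regularization and other refinements on top; the data and settings are assumptions.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.tree import DecisionTreeRegressor

X, y = make_regression(n_samples=300, n_features=5, noise=10.0, random_state=0)

learning_rate = 0.1   # limits how much each new tree can change the prediction
n_rounds = 50

# Start from the mean, then let each tree fit what the ensemble still gets wrong
prediction = np.full(len(y), y.mean())
mse_per_round = [np.mean((y - prediction) ** 2)]
for _ in range(n_rounds):
    residual = y - prediction
    tree = DecisionTreeRegressor(max_depth=3, random_state=0).fit(X, residual)
    prediction += learning_rate * tree.predict(X)
    mse_per_round.append(np.mean((y - prediction) ** 2))

print(f"training MSE: {mse_per_round[0]:.0f} -> {mse_per_round[-1]:.0f}")
```

A smaller learning rate needs more rounds to reach the same training error, which is the trade-off behind using it to limit overfitting.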

Surprisingly, with 5-fold cross-validation, we only got a test R-squared of 0.888, which was lower than the simpler random forest model. Nonetheless it was quite powerful. The key features identified in order of importance were the following:

Analyzing Results

Comparing Models

Since the objective of this analysis is to help a business make an investment decision, it is important to translate its results to the relevant metrics. In this case, I decided to compare results based on the root mean square error (RMSE), which gives us the typical size of the error in the predicted price. For now this error is based on the transformed target variable, the log of the US$ sale price, but we will transform it back to US$ sale price after comparing models.

We found out that the best model is the multiple linear regression using ridge regularization with the first set of dummy variables, although all the MLR results are very similar. The tree models also performed well, though they were slightly less precise.

In order to be able to get a better idea and interpret the model results I graphed the scatterplot of the predicted vs actual results in US$, instead of a log of US$, by transforming the predicted values back to sale price.
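The back-transformation and the resulting dollar errors can be sketched as follows. The four prices are hypothetical, and the use of `np.log1p`/`np.expm1` assumes the target was transformed with log(1 + x); with a plain log, `np.exp` would be the inverse.

```python
import numpy as np

# Hypothetical actual and predicted values on the log scale
y_test_log = np.log1p(np.array([150000.0, 220000.0, 310000.0, 450000.0]))
pred_log = np.log1p(np.array([160000.0, 210000.0, 300000.0, 520000.0]))

# RMSE on the log scale, as used to compare the models
rmse_log = np.sqrt(np.mean((y_test_log - pred_log) ** 2))

# Undo the log transform to get back to US$
y_test_usd = np.expm1(y_test_log)
pred_usd = np.expm1(pred_log)

rmse_usd = np.sqrt(np.mean((y_test_usd - pred_usd) ** 2))
mean_abs_pct_error = np.mean(np.abs(pred_usd - y_test_usd) / y_test_usd) * 100
print(f"RMSE (log): {rmse_log:.4f}, RMSE (US$): {rmse_usd:,.0f}, "
      f"mean abs. error: {mean_abs_pct_error:.1f}%")
```

Because RMSE squares the errors, a single large miss on an expensive home weighs heavily in the dollar figure, so it helps to report a percentage error alongside it.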

Conclusions and Future Work

I reached the following conclusions from the analysis:

- The best model is a simple regularized multiple linear regression. I assume this is because the underlying decision is made in a strongly linear fashion by people looking to purchase houses.

- As we can see from the graphs, both models tend to do a much better job for predicting prices under US$ 400 thousand. We can use this information to inform our return on investment analysis, making sure to account for the extra variation on properties above this price.

- The average error, either above or below, in the sale price prediction is US$ 27,912 or 13.9% for our best model. If we exclude houses with predictions above US$ 400 thousand, this goes down to US$ 22,338 or 13.78%.

- While some errors are quite small, the largest error is 112.2%, so it would be advisable to do a human check before making an investment decision.

Regarding future work, one thing that could be done if increasing accuracy could have a big impact is implementing a stacking regressor. This procedure can further improve the results by combining different models through a meta-regressor.

In essence, since different models can have particular strengths, the meta-regressor finds the best way to combine them to create a more robust predictor.
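A stacking setup along these lines could be sketched with scikit-learn's `StackingRegressor`. The choice of base models, their settings, the Ridge meta-regressor, and the synthetic data are assumptions for illustration, not a tested configuration for this dataset.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor, StackingRegressor
from sklearn.linear_model import Ridge
from sklearn.model_selection import train_test_split
from sklearn.svm import SVR

X, y = make_regression(n_samples=300, n_features=20, n_informative=8,
                       noise=15.0, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=0)

# Base models produce out-of-fold predictions; the meta-regressor learns how to weight them
stack = StackingRegressor(
    estimators=[
        ("ridge", Ridge(alpha=1.0)),
        ("forest", RandomForestRegressor(n_estimators=50, random_state=0)),
        ("svr", SVR(kernel="rbf", C=100.0)),
    ],
    final_estimator=Ridge(alpha=1.0),
    cv=5,
)
stack.fit(X_train, y_train)
stack_r2 = stack.score(X_test, y_test)
print(f"stacked test R-squared: {stack_r2:.3f}")
```

The `cv=5` argument makes the meta-regressor train on out-of-fold predictions, so it does not simply reward whichever base model overfits the training data most.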

While doing some research on this topic I found that in similar situations this approach has been very successful, reducing RMSE by over 50%.

It could also prove useful to analyze the observations where we had the largest errors to attempt to find some insights that could be applied to our models to help them take into account such differences.