Data-Driven House Price Predictions

The skills demonstrated here can be learned through the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

Your new home is waiting in Ames, Iowa. Let us explore the Ames real estate market based on the data.

Background

This project covers the process of predicting house sale prices in Ames, Iowa. The data set from Kaggle provides 80 features that contribute to the predictions, and I trained various models and compared their accuracy.

Interesting Data Points

Before diving into the technical aspects of the project, here are some interesting facts about Ames.

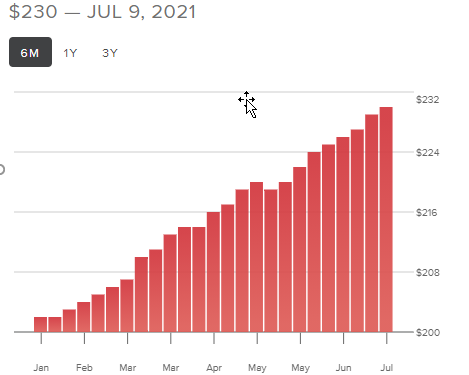

At the time of this writing (July 2021), the average price per sqft in Ames is $230.

The graph below shows that the home ownership rate is below 35%.

Now that we have explored some real facts, it’s time to delve into real estate facts from the data set.

Table of Contents

EDA

Data Cleaning

Removal of outliers

Feature selection

Model selection

Model performance

Exploratory Data Analysis

There are 80 features in the dataset, some numerical and some categorical. First, we look at the correlation between the features and highlight those with a correlation value above 0.5.
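
A minimal sketch of how such a correlation filter might look with pandas. It assumes the correlation of interest is the Pearson correlation of each numerical feature with `SalePrice`, and that the 0.5 cutoff applies to the absolute value; the function name and the toy data are made up for illustration.

```python
import pandas as pd

def features_correlated_with_target(df, target="SalePrice", threshold=0.5):
    """Return numeric features whose absolute Pearson correlation with
    `target` exceeds `threshold`, strongest first."""
    numeric = df.select_dtypes(include="number")
    # Correlation of every numeric column with the target, target itself removed.
    corr = numeric.corr()[target].drop(target)
    strong = corr[corr.abs() > threshold]
    # Reorder by absolute strength (assumption: sign does not matter for ranking).
    order = strong.abs().sort_values(ascending=False).index
    return strong.reindex(order)

# Tiny made-up frame standing in for the Kaggle training data.
toy = pd.DataFrame({
    "GrLivArea": [1000, 1500, 2000, 2500, 3000],
    "OverallQual": [4, 5, 6, 8, 9],
    "YrSold": [2008, 2006, 2010, 2007, 2009],
    "SalePrice": [100000, 150000, 210000, 260000, 300000],
})
important = features_correlated_with_target(toy)
print(important)
```

On the real data set, the same call would be made on the training DataFrame loaded from the Kaggle CSV.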

Here are the important features:

Data Pre-processing: Treating Null Values

Many features contain null values. Instead of dropping them, I filled the nulls with ‘None’ for categorical features and with the mode for numerical features. The graph below shows all the features with null values.
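
The imputation rule described above could be sketched roughly as follows. This is an illustration under assumptions, not the original code: the helper name `fill_missing` and the toy columns are hypothetical, and any non-numeric column is treated as categorical.

```python
import pandas as pd

def fill_missing(df):
    """Fill categorical nulls with the string 'None' and numerical nulls
    with the column mode (most frequent value)."""
    out = df.copy()
    for col in out.columns:
        if not out[col].isna().any():
            continue  # nothing to fill in this column
        if pd.api.types.is_numeric_dtype(out[col]):
            mode = out[col].mode(dropna=True)
            if not mode.empty:
                out[col] = out[col].fillna(mode.iloc[0])
        else:
            # Assumption: every non-numeric column is categorical.
            out[col] = out[col].fillna("None")
    return out

# Made-up sample with one categorical and one numerical column.
toy = pd.DataFrame({
    "PoolQC": ["Gd", None, None, "Ex"],
    "LotFrontage": [60.0, None, 60.0, 80.0],
})
filled = fill_missing(toy)
print(filled)
```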

Analyzing Outliers in Important Features Based on Data

In the scatter plot of GrLivArea vs. SalePrice, we see some outliers: a couple of large houses sold for a low price. Our objective is to predict regular prices, so we consider only the houses below 1000 sqft in our feature selection.

Overall Quality

The sale price depends heavily on the overall quality of the different features of the house: the higher the overall quality, the higher the sale price.

Removal of Outliers

Here is how I removed the outliers for the features below.
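
As a rough illustration of an area-based filter of this kind, the sketch below keeps only rows whose GrLivArea is below a cutoff. The helper `keep_below_area` and the toy rows are hypothetical; the cutoff is passed in as a parameter, and the demo uses the 1000 sqft value mentioned earlier in the text.

```python
import pandas as pd

def keep_below_area(df, area_limit, area_col="GrLivArea"):
    """Return only the rows whose living area is strictly below `area_limit`."""
    return df[df[area_col] < area_limit].reset_index(drop=True)

# Made-up rows: the last house is very large but sold cheaply.
toy = pd.DataFrame({
    "GrLivArea": [850, 920, 990, 4700],
    "SalePrice": [110000, 125000, 131000, 160000],
})
filtered = keep_below_area(toy, area_limit=1000)
print(filtered)
```

Keeping the cutoff as an argument makes it easy to try other thresholds against the scatter plot before settling on one.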

Dummification of Categorical Features

All the categorical variables are label encoded, and then some of the features are combined for use in model selection.

TotalBsmtSF + 1stFlrSF + 2ndFlrSF are combined into TotalSF for easier analysis.
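
The two steps above can be sketched together. This is one possible approach, assuming label encoding is done with pandas category codes (scikit-learn's `LabelEncoder` would be an alternative); the helper name and toy values are made up.

```python
import pandas as pd

def encode_and_combine(df):
    """Label-encode non-numeric columns and add the combined TotalSF feature."""
    out = df.copy()
    # Each distinct category becomes an integer code (categories sorted alphabetically).
    for col in out.select_dtypes(exclude="number").columns:
        out[col] = out[col].astype("category").cat.codes
    # Total square footage: basement plus first and second floors.
    out["TotalSF"] = out["TotalBsmtSF"] + out["1stFlrSF"] + out["2ndFlrSF"]
    return out

# Made-up sample rows using column names from the data set.
toy = pd.DataFrame({
    "MSZoning": ["RL", "RM", "RL"],
    "TotalBsmtSF": [800, 0, 1000],
    "1stFlrSF": [900, 700, 1000],
    "2ndFlrSF": [0, 600, 500],
})
prepared = encode_and_combine(toy)
print(prepared)
```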

Model Selection Based on Data

For model selection, I started with conservative models and moved to more efficient ones to evaluate accuracy and performance. I trained Lasso and Ridge regressors with alpha values ranging from 0.1 to 0.8. A random forest regressor trained with a depth of 5 predicted with 78% accuracy. I also used KNN, gradient boosting, and SVM. An XGBoost regressor with learning rates from 0.1 to 0.9 predicted with 84% accuracy.
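
A sketch of what part of this sweep might look like with scikit-learn, covering only the Lasso, Ridge, and random forest models. Several details are assumptions: the alpha step of 0.1, the 75/25 train/test split, the use of R² (what `.score` returns) as the reported score, and the synthetic data, which stands in for the prepared housing features.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.linear_model import Lasso, Ridge
from sklearn.model_selection import train_test_split

ALPHAS = (0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8)  # assumed step of 0.1

def compare_models(X, y, alphas=ALPHAS, seed=0):
    """Fit each model on a training split and return its R^2 on the held-out split."""
    X_train, X_test, y_train, y_test = train_test_split(
        X, y, test_size=0.25, random_state=seed
    )
    scores = {}
    for alpha in alphas:
        lasso = Lasso(alpha=alpha).fit(X_train, y_train)
        ridge = Ridge(alpha=alpha).fit(X_train, y_train)
        scores[f"Lasso(alpha={alpha})"] = lasso.score(X_test, y_test)
        scores[f"Ridge(alpha={alpha})"] = ridge.score(X_test, y_test)
    forest = RandomForestRegressor(max_depth=5, random_state=seed)
    scores["RandomForest(max_depth=5)"] = forest.fit(X_train, y_train).score(X_test, y_test)
    return scores

# Synthetic regression data, not the Ames data.
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3))
y = X @ np.array([3.0, -2.0, 1.0]) + rng.normal(scale=0.1, size=200)
scores = compare_models(X, y)
for name, score in scores.items():
    print(f"{name}: {score:.3f}")
```

The other models mentioned (KNN, gradient boosting, SVM, XGBoost) could be added to the same dictionary in the same way.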

Model Performance

After fitting each model, I compared their performance. The metric used is the root mean squared error (RMSE).
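
For reference, RMSE is the square root of the mean of the squared differences between actual and predicted values. A small NumPy sketch, with made-up prices and a hypothetical helper name:

```python
import numpy as np

def rmse(y_true, y_pred):
    """Root mean squared error between actual and predicted values."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))

# Made-up actual and predicted sale prices.
error = rmse([200000, 150000, 300000], [210000, 140000, 300000])
print(round(error, 2))
```

Lower values mean predictions are closer to the actual sale prices, and the result is in the same units as the price.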

Overall, Gradient Boosting predicted with over 87% accuracy.

Future Work

Continue to explore the best methods for reducing overfitting and tuning parameters. Apply other machine learning algorithms such as AdaBoost, or train XGBoost with better estimators. Gather more recent data to improve accuracy.