Machine Learning in Python: Predicting House Prices

Background and motivation

Housing is one of the most valuable economic assets an individual can purchase during their adult life. Deciding whether to buy a house, and how much to pay for it, is therefore an important decision.

In the following, we explore different machine learning techniques and methodologies to predict house prices in Ames, Iowa, as part of an open Kaggle competition. The data contains a train and a test dataset with almost 3000 house sales between 2006 and 2010 along with around 79 features that describe each of the sold houses. The main objective is, then, to predict house prices of the test dataset based on the features of each of the houses once our model has been trained.

We formed a multidisciplinary team of four people and followed the data science lifecycle to predict the house prices. The figure below shows the steps of our workflow.

Exploratory Data Analysis

The first job of any data scientist is to understand the problem at hand and what is required, while gaining domain knowledge about the subject being studied.

The first question to answer when working with machine learning models is what shape and distribution the response variable, here the sale price, has. As shown in the graph below, the raw distribution of sale prices was right-skewed (skewness 1.88), which had to be addressed to get good regression results. We applied both a logarithmic and a Box-Cox transformation, which brought the skewness much closer to the desired zero (0.12 and -0.008, respectively). We also tested whether removing outliers made the distribution more normal, but this turned out to be unnecessary, especially as removing observations can introduce errors when testing the model.
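
As a minimal sketch of this check, the snippet below measures skewness before and after the two transformations. It uses synthetic log-normal "prices" rather than the Ames data, so the printed values will not match the figures quoted above; the variable names are illustrative assumptions.

```python
import numpy as np
from scipy import stats

# Synthetic, right-skewed "prices" (log-normal). This is NOT the Ames data.
rng = np.random.default_rng(0)
prices = rng.lognormal(mean=12.0, sigma=0.4, size=1500)

# Logarithmic transform of the target.
log_prices = np.log(prices)

# Box-Cox transform; with no lambda given, scipy fits it by maximum likelihood.
boxcox_prices, fitted_lambda = stats.boxcox(prices)

for name, values in [("raw", prices), ("log", log_prices), ("box-cox", boxcox_prices)]:
    print(f"{name:8s} skewness = {stats.skew(values):+.3f}")
```

Box-Cox requires strictly positive values, which holds for sale prices. Predictions made on a transformed target have to be transformed back before they are reported in dollars.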

The next step was to investigate the correlation between the response variable and the numeric attributes, both to identify potential multicollinearity and to get an idea of which features are strong predictors of sale price. As the correlation matrix below shows, several features are highly correlated with one another, such as the areas of the basement, first floor and second floor, as well as garage area and garage car capacity. We used this information to clean up the data and to guide feature engineering.
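
The sketch below illustrates this kind of correlation screening with pandas. The data are synthetic and built so that two pairs of predictors are strongly related; the column names mirror Ames-style features, but the values and the 0.8 threshold are assumptions chosen for illustration.

```python
import numpy as np
import pandas as pd

# Synthetic data with deliberately correlated predictors (not the Ames data).
rng = np.random.default_rng(1)
n = 500
garage_cars = rng.integers(0, 4, size=n)
df = pd.DataFrame({
    "GarageCars": garage_cars,
    "GarageArea": garage_cars * 250 + rng.normal(0, 40, n),
    "TotalBsmtSF": rng.normal(1000, 300, n),
})
df["1stFlrSF"] = df["TotalBsmtSF"] + rng.normal(0, 100, n)
df["SalePrice"] = (
    50_000 + 60 * df["1stFlrSF"] + 40 * df["GarageArea"] + rng.normal(0, 15_000, n)
)

corr = df.corr()

# Correlation of each feature with the target, strongest first.
target_corr = corr["SalePrice"].drop("SalePrice").sort_values(ascending=False)

# Predictor pairs with |r| above the threshold: candidates for multicollinearity.
threshold = 0.8  # illustrative cut-off
features = corr.drop(index="SalePrice", columns="SalePrice")
upper = features.where(np.triu(np.ones(features.shape, dtype=bool), k=1))
high_pairs = upper.stack()
high_pairs = high_pairs[high_pairs.abs() > threshold]

print(target_corr)
print(high_pairs)
```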

Scatter plots (not shown) are useful not only for finding relations between variables but also for visually identifying outliers. We produced scatter plots between the different variables and identified two outliers, which were excluded in the modelling phase.

Data Preprocessing and feature engineering

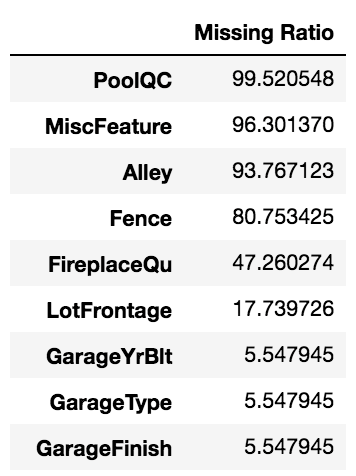

Before selecting the best machine learning model, most of the time is spent on pre-processing, in particular feature engineering. In the test set alone, some features had over 80% missing values.

To help handle these missing values, we used the provided variable descriptions to fill them appropriately. Most NA values were changed to “None” or 0 when, for example, no garage was present. While imputing, some features, such as PoolQC and PoolArea, showed that we need to pay careful attention to values that may be reporting errors.

In this example, three observations were labelled as having no pool even though PoolArea shows that a pool is present. Being mindful of situations like this is key to having meaningful features for the modelling process.

Another important skill is checking the after-effects of imputation on the distribution of the feature being imputed. At first, we treated almost all numerical features the same way by imputing missing values with 0. One feature that we had to return to was LotFrontage. After the modelling process, we realized that it does not make much sense to assume a house has 0 lot frontage, so we chose to assume its value is close to the median lot frontage of houses in the same neighbourhood. Shown below are the LotFrontage distributions before and after the different imputation methods. Our decision to impute with the median was confirmed by the fact that imputing with 0 altered the LotFrontage distribution and created two peaks. It is also important to compute the neighbourhood medians from the training set only, not from the test set.
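
A minimal sketch of neighbourhood-median imputation follows. The tiny data frames are made-up values for illustration, and the fallback to the overall training median for a neighbourhood that never appears in the training set is an assumption, not something described above.

```python
import numpy as np
import pandas as pd

# Toy data (made-up values) with missing LotFrontage entries.
train = pd.DataFrame({
    "Neighborhood": ["NAmes", "NAmes", "NAmes", "CollgCr", "CollgCr", "CollgCr"],
    "LotFrontage": [70.0, 80.0, np.nan, 60.0, np.nan, 64.0],
})
test = pd.DataFrame({
    "Neighborhood": ["NAmes", "CollgCr", "Veenker"],
    "LotFrontage": [np.nan, np.nan, np.nan],
})

# Medians are learned from the training set only, so no test information leaks in.
neighborhood_median = train.groupby("Neighborhood")["LotFrontage"].median()
overall_median = train["LotFrontage"].median()  # assumed fallback for unseen neighbourhoods


def impute_lot_frontage(df):
    """Fill missing LotFrontage with the training median of the same neighbourhood."""
    out = df.copy()
    fill = out["Neighborhood"].map(neighborhood_median).fillna(overall_median)
    out["LotFrontage"] = out["LotFrontage"].fillna(fill)
    return out


train_imputed = impute_lot_frontage(train)
test_imputed = impute_lot_frontage(test)
print(test_imputed)
```

The same training-set medians are applied to both data frames, which is what keeps the test set out of the fitting step.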

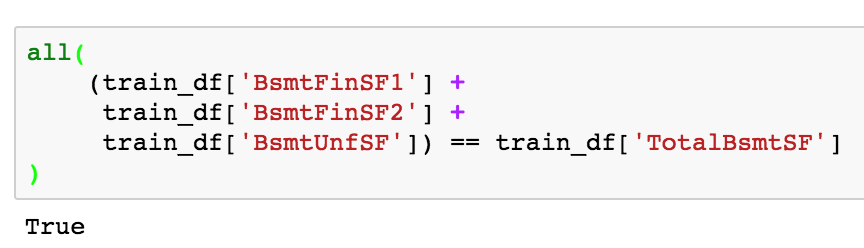

Furthermore, we condensed features and values that could be represented as one. For example, we dropped three features pertaining to basement square footage because their information is already captured by an existing feature.

Lastly, to obtain a dataset suitable for different machine learning models, we needed to transform categorical values into numerical ones, as many implementations of algorithms such as decision trees do not accept categorical values. For our purposes, we chose two transformation tools: one-hot encoding and the LabelEncoder function. Ordinal variables describing quality, such as basement, fireplace or pool quality, were mapped to numerical values in a way that preserved the order of quality.
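
The two kinds of encoding can be sketched as follows. For brevity this example uses a plain dictionary mapping and pandas' `get_dummies` rather than the scikit-learn encoders named above; the quality scale and its integer codes are assumptions chosen to preserve the ordering, and the rows are made up.

```python
import pandas as pd

# Toy rows (made-up values) with two ordinal features and one nominal feature.
df = pd.DataFrame({
    "BsmtQual": ["Gd", "TA", "None", "Ex"],
    "FireplaceQu": ["None", "Gd", "Po", "TA"],
    "MSZoning": ["RL", "RM", "RL", "FV"],
})

# Ordinal features: map each level to an integer that keeps the quality order.
# The specific codes are an assumption; only their order matters.
quality_order = {"None": 0, "Po": 1, "Fa": 2, "TA": 3, "Gd": 4, "Ex": 5}
for col in ["BsmtQual", "FireplaceQu"]:
    df[col] = df[col].map(quality_order)

# Nominal feature: one-hot encode, since its categories have no natural order.
encoded = pd.get_dummies(df, columns=["MSZoning"], dtype=int)
print(encoded)
```

Mapping ordinal levels by hand avoids the alphabetical ordering that a generic label encoder would assign, which would not match the quality ranking.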

Machine Learning

We used two main groups of algorithms to model the relationship between our features and the house price, namely linear models, and tree-based models.

Linear Models

Some of the numerical features showed a linear relationship with sale price, so we expected linear models to provide useful predictions for this project. We tried Ridge, Lasso and Elastic Net regression and consistently got R² values above 0.80 after tuning the models' parameters. For Ridge and Lasso regression, we tuned the hyperparameter alpha using 5-fold cross-validation. The alpha parameter determines how much weight is put on the regularization term in the cost function. An alpha of 0 reduces the model to an unregularized ordinary least squares problem, whereas a large alpha favours a simple model in which the linear coefficients are close to zero.

For Elastic Net we tuned an additional parameter, “l1_ratio”, which describes how to mix Ridge and Lasso regression. An l1_ratio of 0 reduces the model to pure Ridge regression, whereas an l1_ratio of 1 reduces it to pure Lasso regression. With tuned hyperparameters, the three classes of models gave very similar results, with Lasso performing slightly better than the other two.
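
The tuning described above can be sketched with scikit-learn's `GridSearchCV`. The data come from `make_regression`, and the alpha and l1_ratio grids are illustrative assumptions, not the values we actually searched.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import ElasticNet, Lasso, Ridge
from sklearn.model_selection import GridSearchCV

# Synthetic regression problem standing in for the prepared housing features.
X, y = make_regression(
    n_samples=300, n_features=20, n_informative=8, noise=10.0, random_state=0
)

alphas = np.logspace(-3, 1, 9)  # illustrative grid of regularization strengths
searches = {
    "ridge": GridSearchCV(Ridge(), {"alpha": alphas}, cv=5, scoring="r2"),
    "lasso": GridSearchCV(Lasso(max_iter=10_000), {"alpha": alphas}, cv=5, scoring="r2"),
    "elastic_net": GridSearchCV(
        ElasticNet(max_iter=10_000),
        {"alpha": alphas, "l1_ratio": [0.1, 0.5, 0.9]},
        cv=5,
        scoring="r2",
    ),
}

results = {}
for name, search in searches.items():
    search.fit(X, y)  # 5-fold cross-validation over the grid
    results[name] = (search.best_params_, search.best_score_)
    print(f"{name:12s} best params = {search.best_params_}, mean CV R^2 = {search.best_score_:.3f}")
```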

One thing to note is that applying the Box-Cox transformation to the sale price gave a better linear fit (higher R²). This is expected, as a Box-Cox transformation of the feature variables and the target variable makes their distributions more normal, which usually leads to a better linear fit.

For the most expensive houses, linear models tend to underestimate the price. The relationship may be non-linear in that price range, which linear models cannot capture.

Tree models

In theory, tree-based models like Random Forest can capture some of the non-linear behaviour described in the discussion of the linear models. A Random Forest model in scikit-learn has many hyperparameters. We tuned the following parameters using grid search:

- "n_estimators": the number of trees in the forest

- "min_samples_leaf": the minimum number of samples required to be at a leaf node

- "min_samples_split": the minimum number of samples required to split an internal node

Note that grid search is computationally expensive: scanning the parameter space thoroughly with a small step size takes a long time. Randomized search or Bayesian optimization could have been faster ways to tune the parameters.
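
As a rough sketch, the snippet below runs a small grid search over the three Random Forest parameters and, for comparison, a randomized search that samples only half of the combinations. The data are synthetic and the grid values are illustrative assumptions, kept deliberately small so the example runs quickly.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import GridSearchCV, RandomizedSearchCV

# Synthetic data standing in for the prepared housing features.
X, y = make_regression(n_samples=200, n_features=10, noise=15.0, random_state=0)

# Illustrative grid: 2 x 2 x 2 = 8 combinations.
param_grid = {
    "n_estimators": [50, 100],
    "min_samples_leaf": [1, 3],
    "min_samples_split": [2, 5],
}

# Exhaustive search: every combination is cross-validated.
grid = GridSearchCV(
    RandomForestRegressor(random_state=0),
    param_grid,
    cv=3,
    scoring="neg_root_mean_squared_error",
)
grid.fit(X, y)

# Randomized search: only n_iter combinations are sampled from the same space.
random_search = RandomizedSearchCV(
    RandomForestRegressor(random_state=0),
    param_grid,
    n_iter=4,
    cv=3,
    scoring="neg_root_mean_squared_error",
    random_state=0,
)
random_search.fit(X, y)

print("grid search      :", grid.best_params_, f"RMSE = {-grid.best_score_:.1f}")
print("randomized search:", random_search.best_params_, f"RMSE = {-random_search.best_score_:.1f}")
```

The cost of the exhaustive search grows multiplicatively with every parameter added to the grid, whereas the randomized search has a fixed budget set by `n_iter`.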

A nice aspect of Random Forest is that it provides a fast way to compute feature importance. We used scikit-learn’s RandomForestRegressor, which computes importance as the mean decrease in impurity (also known as Gini importance). The most important feature was the total area of the house, followed by the year the house was built and garage size.
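
A minimal sketch of reading impurity-based importances from a fitted `RandomForestRegressor` is shown below. The data are synthetic and constructed so that a "TotalSF" column drives most of the target, so the ranking here is built in by assumption rather than being a finding. For a regressor, the impurity being reduced is the squared error.

```python
import numpy as np
import pandas as pd
from sklearn.ensemble import RandomForestRegressor

# Synthetic features (not the Ames data); coefficients below are made up.
rng = np.random.default_rng(0)
n = 400
X = pd.DataFrame({
    "TotalSF": rng.normal(2500, 600, n),
    "YearBuilt": rng.integers(1900, 2010, n),
    "GarageArea": rng.normal(450, 150, n),
})
y = (
    60 * X["TotalSF"]
    + 300 * (X["YearBuilt"] - 1900)
    + 20 * X["GarageArea"]
    + rng.normal(0, 10_000, n)
)

forest = RandomForestRegressor(n_estimators=200, random_state=0)
forest.fit(X, y)

# Mean decrease in impurity, averaged over all trees and normalized to sum to 1.
importances = pd.Series(forest.feature_importances_, index=X.columns)
importances = importances.sort_values(ascending=False)
print(importances)
```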

Random Forest did not improve on the estimates of the simpler linear models. The training dataset was very small (fewer than 1,500 entries), which may have led to overfitting. The model may also need more extensive parameter tuning to give better results. Because parameter tuning takes a long time, we reserved that time-consuming task for the more advanced boosting algorithms.

The final Boost

We have learned that feature engineering is a powerful way to increase the accuracy of a predictive model, but it can be very time-consuming. Another effective approach is to apply boosting algorithms, which are among the most powerful tools available to a data scientist.

There are multiple boosting algorithms, such as Gradient Boosting, XGBoost, AdaBoost, LightGBM and GentleBoost, and each has its own underlying mathematical concepts. Boosting algorithms are particularly popular in Kaggle competitions because they reduce bias and variance in supervised learning and combine multiple weak learners into a strong predictor, all at once. In principle, a boosting algorithm starts out with a very weak learner by assuming that the mean value of the target is the prediction for all observations. In the next step, the algorithm calculates the error it made for each observation and attempts to minimize this error, resulting in a new prediction. These steps are repeated until the error is minimized and the final model is built.
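
The loop described above can be written out by hand for the simplest case, gradient boosting with a squared-error loss, where the "error" fitted at each round is just the residual. This is a teaching sketch on synthetic data, not the implementation inside any of the libraries named above; the learning rate, tree depth and number of rounds are assumptions.

```python
import numpy as np
from sklearn.tree import DecisionTreeRegressor

# Synthetic one-dimensional, non-linear regression problem.
rng = np.random.default_rng(0)
X = rng.uniform(0, 10, size=(300, 1))
y = 3 * np.sin(X[:, 0]) + 0.5 * X[:, 0] + rng.normal(0, 0.3, 300)

learning_rate = 0.1  # shrinks each tree's contribution
n_rounds = 100

# Step 0: the weakest possible learner predicts the target mean for everyone.
prediction = np.full_like(y, y.mean())
initial_rmse = np.sqrt(np.mean((y - prediction) ** 2))

trees = []
for _ in range(n_rounds):
    residuals = y - prediction                 # error made on each observation
    tree = DecisionTreeRegressor(max_depth=2, random_state=0)
    tree.fit(X, residuals)                     # a shallow tree learns the current error
    prediction += learning_rate * tree.predict(X)
    trees.append(tree)

final_rmse = np.sqrt(np.mean((y - prediction) ** 2))
print(f"training RMSE: {initial_rmse:.3f} -> {final_rmse:.3f}")
```

The RMSE printed here is measured on the training data, so it only shows that the loop reduces the error it is fitting; it says nothing about generalization.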

To predict housing prices in Ames, we compared Gradient Boosting with the slightly more sophisticated XGBoost algorithm. To compare the accuracy and RMSE of each model, we split the training dataset into a training set with 75% of the data and a validation set with 25% of the data. While Python makes boosting algorithms fairly easy to implement, optimizing them requires more work in terms of hyperparameter tuning.

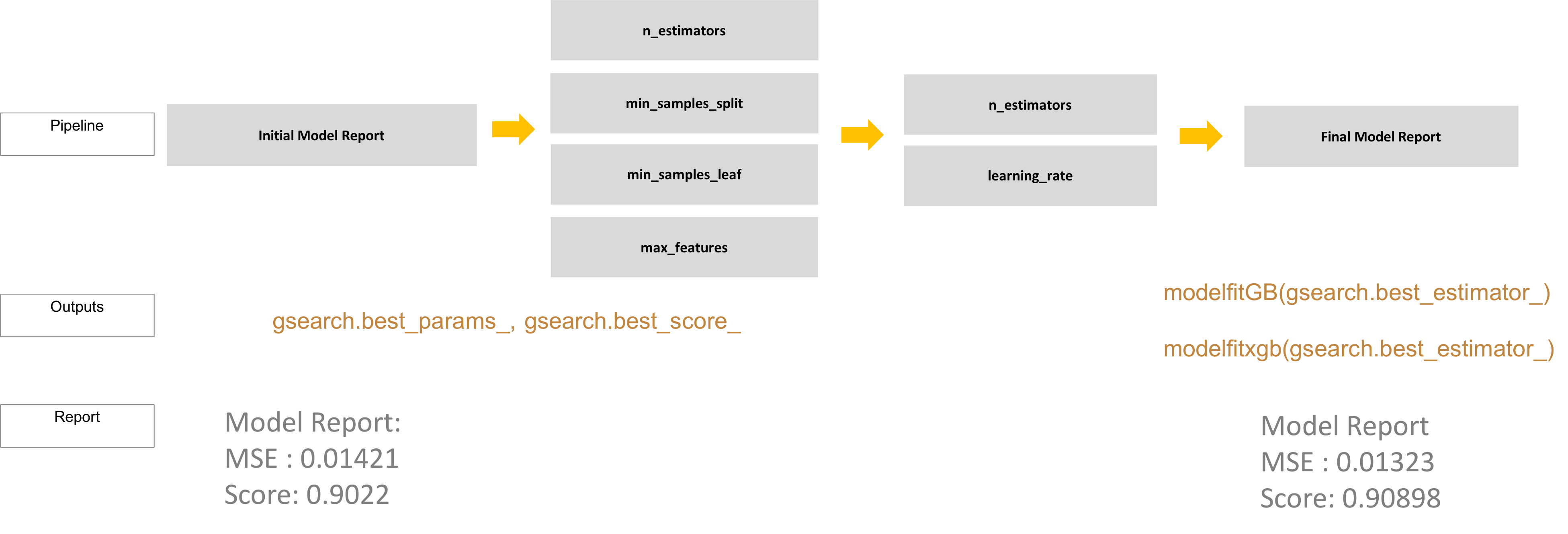

Although it was tempting to run a wide grid search over all parameters at once and go for dinner while the algorithm is running, this is certainly not best practice. We resisted the temptation and approached parameter tuning as strategically as possible. First, we determined the number of trees (n_estimators) that allows the system to run fairly fast with the default learning rate of 0.1. The number of trees and the learning rate have a reciprocal relationship: generally speaking, it is better to start with a higher learning rate and a lower number of trees, and to come back to both after the tree-specific parameters have been optimized. Tree-specific parameters include the maximum depth of each tree, the minimum number of samples required to split a node, and the maximum number of features considered at each split. The maximum depth and the minimum number of samples for splitting have the biggest effect on the model and should be tuned first. To speed up the process, it can help to set the parameter “n_jobs” to -1 where it is available (for example in scikit-learn's grid search), as this uses all available CPU cores and therefore reduces processing time. The best parameters from each step were used to train the next model.

The scheme below displays the tuning strategy described above. The best parameters of each grid search were passed to the functions modelfitGB and modelfitxgb, which returned a model report with the accuracy score and RMSE based on internal k-fold cross-validation, as well as the precision on the validation dataset. After the tree-specific parameters were determined, we increased the number of trees up to 2000 and decreased the learning rate by a factor of 100 to get a more robust model.
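
A compact sketch of this staged strategy with scikit-learn's `GradientBoostingRegressor` is given below. It does not reproduce modelfitGB or modelfitxgb; the synthetic data, the grids, and the smaller final adjustment (learning rate divided by 10, trees multiplied by 10) are assumptions chosen so the example runs in a few seconds.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import GridSearchCV, train_test_split

# Synthetic data, split 75% / 25% into training and validation sets.
X, y = make_regression(n_samples=300, n_features=10, noise=20.0, random_state=0)
X_train, X_val, y_train, y_val = train_test_split(X, y, test_size=0.25, random_state=0)

# Stage 1: pick the number of trees at the default learning rate of 0.1.
# (Passing n_jobs=-1 to GridSearchCV would spread the fits over all CPU cores.)
stage1 = GridSearchCV(
    GradientBoostingRegressor(learning_rate=0.1, random_state=0),
    {"n_estimators": [50, 100, 150]},
    cv=3,
)
stage1.fit(X_train, y_train)
n_trees = stage1.best_params_["n_estimators"]

# Stage 2: tune tree-specific parameters with the number of trees held fixed.
stage2 = GridSearchCV(
    GradientBoostingRegressor(learning_rate=0.1, n_estimators=n_trees, random_state=0),
    {"max_depth": [2, 3, 4], "min_samples_split": [2, 10]},
    cv=3,
)
stage2.fit(X_train, y_train)

# Stage 3: lower the learning rate and raise the number of trees in proportion.
final = GradientBoostingRegressor(
    learning_rate=0.01,
    n_estimators=10 * n_trees,
    random_state=0,
    **stage2.best_params_,
)
final.fit(X_train, y_train)

rmse = np.sqrt(mean_squared_error(y_val, final.predict(X_val)))
print("tree parameters:", stage2.best_params_)
print(f"validation RMSE: {rmse:.2f}")
```

Tuning one group of parameters at a time keeps each grid small, at the price of possibly missing interactions between groups that a joint search would find.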

The metrics measured (accuracy and RMSE) revealed a slight decrease in the error by 0.001 and improvement of the accuracy by 0.006 for the GradientBoost model, underlining that boosting models give a good benchmark solution per se and that parameter tuning only leads to minor improvements.

To visualize the effect of tuning, we also compared the feature importance metrics of the GradientBoost model before and after parameter tuning. Before tuning, the feature “TotalSF” dominates.

After tuning, the model drew on many more features and the importance was more evenly distributed.

The XGBoost model showed similar results with similar feature importance. For datasets with a large number of features, the additional parameter “reg_alpha” comes in handy, as it allows us to include L1 regularization and therefore dimensionality reduction, which is not possible with the GradientBoost model. In addition, XGBoost can perform cross-validation at each iteration and choose the model with the highest accuracy, whereas for GradientBoost models a cross-validation step has to be added manually within the function.

Overall, we successfully implemented ensemble boosting algorithms and achieved accuracies and precisions that surpassed those of simpler models such as linear models and random forests. The power of boosting algorithms lies in their ability to learn from previous errors and build a new model that accounts for them. However, if the dataset is too small, boosting algorithms suffer from overfitting and have to be applied with caution.

Main conclusions

- Background knowledge is important but don't let it 'cloud' your judgement

- EDA is important to remain open for new discoveries of relations among variables

- Reporting results for non-expert users requires easy-to-understand visualizations and language, e.g. presenting the RMSE in common units (US dollars)

- When handling a dataset with multiple variables (like this one) it is helpful to "become friends" with available descriptors.

- Python's scikit-learn makes tweaking models easy, but cleaning and transforming features is still more of an art that requires a bit of creativity and luck.

- Linear regression gives very good results for this particular project. Transforming the data improves the quality of fit.

- GBM/XGBoost are powerful and give good benchmark solutions, but they are not always the best choice

- Small datasets (like ours) can benefit from a simple (linear) model