Using Data to Predict House Prices in Ames, Iowa

The skills the authors demonstrate here can be learned by taking the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

Data Science Introduction

The aim of this project was to use data to predict house prices in Ames, Iowa as part of a Kaggle competition, and to understand the attributes that contribute to the price of an individual house. Many factors go into the price of a house, and pinpointing their effect is crucial in the real estate industry. Our approach was to pair program as a whole group of four, and to spend time investigating many different models rather than shortlisting and tuning a smaller number.

Exploratory Data Analysis

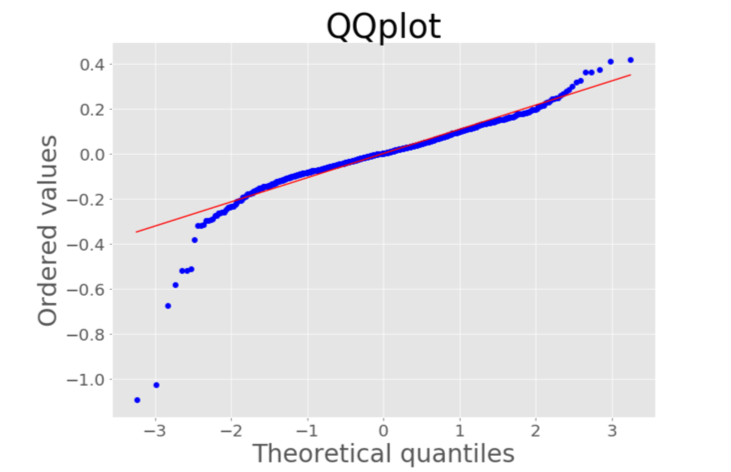

Our first task was to prepare the data for modelling. We began with the target variable (the sale price), which was highly skewed. Skew in the target can lower the performance of machine learning models (for example, it can produce non-constant residual variance, which violates the homoscedasticity assumption of linear regression), so we used a Box-Cox transformation to normalize it. The optimum lambda returned by the Box-Cox function was very close to zero, so for interpretability we decided to use a value of exactly zero, which is equivalent to taking the natural logarithm. This vastly improved the normality of the target, as can be seen from the quantile plots and histograms below.

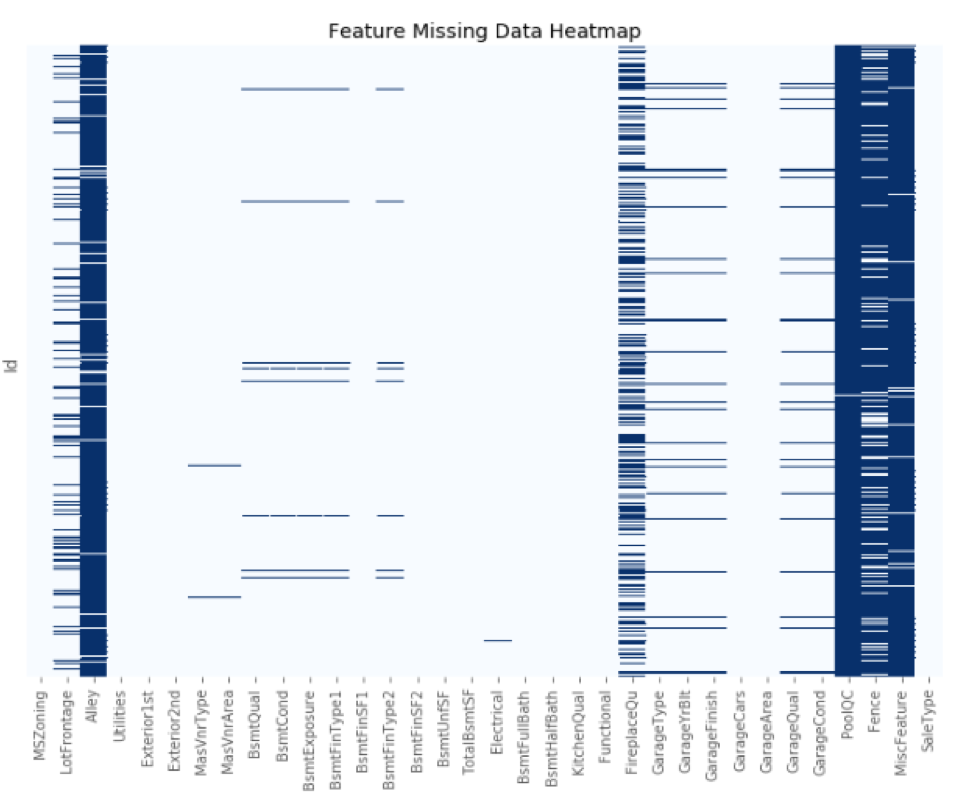
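The target transformation described above can be sketched as follows. This is a minimal illustration on synthetic, log-normally distributed prices (an assumption standing in for the real SalePrice column), using `scipy.stats.boxcox` to fit lambda and then comparing skewness before and after a plain log transform. It is not the code used in the project.

```python
import numpy as np
from scipy import stats

# Synthetic skewed "prices" standing in for SalePrice (assumption).
rng = np.random.default_rng(0)
sale_price = rng.lognormal(mean=12.0, sigma=0.4, size=1500)

# With no lambda supplied, boxcox returns the transformed data and the
# maximum-likelihood estimate of lambda.
_, fitted_lambda = stats.boxcox(sale_price)

# Lambda = 0 in the Box-Cox family corresponds to the natural logarithm.
log_price = np.log(sale_price)

skew_before = stats.skew(sale_price)
skew_after = stats.skew(log_price)
print(f"fitted lambda: {fitted_lambda:.3f}")
print(f"skewness before: {skew_before:.3f}, after log: {skew_after:.3f}")
```

When the fitted lambda is close to zero, rounding it to zero costs little and keeps predictions easy to back-transform with `np.exp`.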

Missingness

There were three types of missingness in the data. The first was data missing completely at random, such as those columns with only one (or in fact no) dark blue bar in the above heatmap. From the heatmap, there was also clearly data missing at random (e.g. attributes pertaining to the basement). To deal with these we mostly used random imputation, as the proportion of NaNs in any one column was never very large.

We did, however, work on a column-by-column basis, so if one value held a clear majority in a column we imputed using that value. The final type of missingness was NaNs that really meant “None” (e.g. NaN was used to mean no pool in the PoolQC column). For most of these columns we were able to compare against some other column to work out whether the value was truly missing or not. The exception was the Fence column, which we had to drop instead.
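The three imputation strategies described above could look roughly like this in pandas. The column names follow the Ames data, but the values are made up for illustration, and the choice of which strategy applies to which column here is an assumption rather than the team's actual assignment.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(0)

# Tiny illustrative frame; values are invented.
df = pd.DataFrame({
    "PoolArea": [0, 0, 512, 0, 0, 0],
    "PoolQC": [np.nan, np.nan, "Gd", np.nan, np.nan, np.nan],
    "Electrical": ["SBrkr", "SBrkr", np.nan, "SBrkr", "FuseA", "SBrkr"],
    "LotFrontage": [65.0, np.nan, 80.0, 70.0, np.nan, 60.0],
})

# 1) NaN that really means "None": confirm against a related column first.
no_pool = df["PoolQC"].isna() & (df["PoolArea"] == 0)
df.loc[no_pool, "PoolQC"] = "None"

# 2) A clear majority value exists: impute with the mode.
df["Electrical"] = df["Electrical"].fillna(df["Electrical"].mode()[0])

# 3) Otherwise: random imputation, sampling from the observed values.
missing = df["LotFrontage"].isna()
observed = df.loc[~missing, "LotFrontage"].to_numpy()
df.loc[missing, "LotFrontage"] = rng.choice(observed, size=int(missing.sum()))

print(df)
```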

Feature Engineering

After completion of the EDA, the next step of this project was to conduct some feature engineering. This dataset contains a large number of features, especially after the categorical features are dummified. Therefore, our team’s aim was to reduce these features as much as possible so as to avoid the curse of dimensionality and multicollinearity effects, without losing any important information.

First immediate feature selection

The first immediate feature selection was to drop a few of the categorical columns that had extremely low variance as they did not offer much information to help the predictions. One example of this was the utilities feature which had the same value for all but one of its observations.
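One way to flag such near-constant categorical columns is to look at the share of rows taken by the most common value. The helper name `near_constant_columns` and the 99% threshold are hypothetical choices for this sketch, and the data is synthetic.

```python
import pandas as pd

def near_constant_columns(frame, threshold=0.99):
    """Return non-numeric columns whose top value covers >= threshold of rows."""
    flagged = []
    for col in frame.select_dtypes(exclude="number").columns:
        top_share = frame[col].value_counts(normalize=True, dropna=False).iloc[0]
        if top_share >= threshold:
            flagged.append(col)
    return flagged

# Synthetic example: Utilities has one odd row out of 200, Street is mixed.
df = pd.DataFrame({
    "Utilities": ["AllPub"] * 199 + ["NoSeWa"],
    "Street": ["Pave"] * 150 + ["Grvl"] * 50,
    "LotArea": range(200),
})
to_drop = near_constant_columns(df)
print(to_drop)
```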

Next step

The next step of the process was to examine the correlations between all quantitative variables and the target, SalePrice. From this, we could drop any features with a particularly low correlation. In this instance, we dropped ‘MiscVal’, which represents the value of the miscellaneous features of the house. The correlation figure is also useful because it highlights the features with high correlation, such as the overall quality of the property and the square footage above ground.
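A correlation screen of this kind can be sketched with `DataFrame.corr`. The data below is synthetic and the coefficients are arbitrary assumptions; only the column names are borrowed from the Ames data.

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(0)
n = 500

# Synthetic features: two that drive the price and one that does not.
df = pd.DataFrame({
    "OverallQual": rng.integers(1, 11, size=n),
    "GrLivArea": rng.normal(1500, 500, size=n),
    "MiscVal": rng.normal(50, 20, size=n),
})
df["SalePrice"] = np.exp(
    11 + 0.1 * df["OverallQual"] + 0.0003 * df["GrLivArea"]
    + rng.normal(0, 0.1, size=n)
)

# Absolute Pearson correlation of each feature with the target, strongest first.
corr_with_target = (
    df.corr()["SalePrice"].drop("SalePrice").abs().sort_values(ascending=False)
)
weakest = corr_with_target.index[-1]
print(corr_with_target)
```

Pearson correlation only captures linear association, so a low value is a prompt for a closer look rather than proof that a feature is useless.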

Further investigation

After some further investigation of the features in the dataset, it was decided that several of the features were very similar and could be combined. This decision was made based on logical reasoning by the team and was also influenced by the multicollinearity plot showing the R-squared values for each feature, which will be discussed later. The equations below show the four features created as derivatives of existing features.

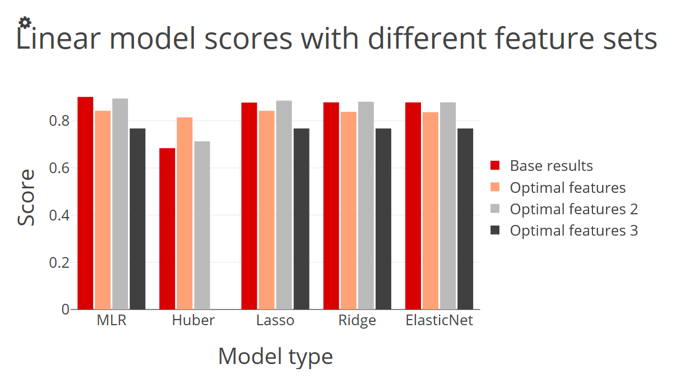

As a result of the above, we were able to convert 15 different features into four whilst maintaining the same key information. Once these four features were created, the first step of feature engineering was complete and what remained was our base feature set. From this base feature set we then developed three further "optimal" feature sets in an attempt to make a better prediction, or at least to maintain the prediction accuracy with fewer features. These three optimal feature sets can be found in the table below.

Each of these feature sets was generated in a different manner. To create optimal features 1 we generated the lasso path graph, which shows how the coefficient of each feature changes as the regularization strength lambda increases. From this graph, an ‘optimal’ value of lambda was manually selected and all features whose coefficients were non-zero at that lambda were kept. The second set was created in a similar manner; however, instead of manually selecting the value of lambda, a grid search was used to find the optimal value of the parameter. At this value, all features with non-zero coefficients were kept.
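The second, automated variant of this selection can be sketched with scikit-learn. Here `LassoCV` stands in for the grid search (it searches a grid of regularization strengths by cross-validation; scikit-learn calls the parameter `alpha` rather than lambda). The data is synthetic, with only the first three features carrying signal, so this illustrates the idea rather than reproducing the team's feature set.

```python
import numpy as np
from sklearn.linear_model import LassoCV
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
n_samples, n_features = 300, 20

# Synthetic design: only features 0, 1 and 2 influence the target.
X = rng.normal(size=(n_samples, n_features))
true_coef = np.zeros(n_features)
true_coef[:3] = [1.5, -2.0, 1.0]
y = X @ true_coef + rng.normal(scale=0.5, size=n_samples)

# Standardize so the penalty treats every feature on the same scale.
X_scaled = StandardScaler().fit_transform(X)

# Cross-validated search over a grid of regularization strengths.
lasso = LassoCV(cv=5, random_state=0).fit(X_scaled, y)

# Keep every feature whose coefficient is non-zero at the chosen strength.
selected = np.flatnonzero(lasso.coef_)
print(f"chosen alpha: {lasso.alpha_:.5f}")
print(f"selected feature indices: {selected.tolist()}")
```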

The final set

The final set was developed by applying a bootstrapping technique to the lasso regression model. One thousand bootstrap samples were run, and the means and standard deviations of the resulting coefficients were used to build approximate 95% confidence intervals for the coefficient values. If the interval for a specific coefficient did not contain zero, then its corresponding feature was kept for the optimal feature set. We also note from the above table that the only three attributes consistent across all three sets are the year remodelled, overall quality and the total number of bathrooms.
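A bootstrap of this kind might look like the sketch below. It uses 200 resamples instead of one thousand to keep the run short, a fixed `alpha=0.05` chosen arbitrarily, and synthetic data in which only the first two features matter. All of these are assumptions for illustration.

```python
import numpy as np
from sklearn.linear_model import Lasso

rng = np.random.default_rng(0)
n, p = 200, 8

# Synthetic data: features 0 and 1 carry signal, the rest are noise.
X = rng.normal(size=(n, p))
y = 2.0 * X[:, 0] - 1.0 * X[:, 1] + rng.normal(scale=1.0, size=n)

n_boot = 200
coefs = np.empty((n_boot, p))
for b in range(n_boot):
    # Resample rows with replacement and refit the lasso.
    idx = rng.integers(0, n, size=n)
    coefs[b] = Lasso(alpha=0.05).fit(X[idx], y[idx]).coef_

# Approximate 95% interval per coefficient: mean +/- 1.96 standard deviations.
mean = coefs.mean(axis=0)
sd = coefs.std(axis=0, ddof=1)
lower, upper = mean - 1.96 * sd, mean + 1.96 * sd

# Keep features whose interval does not contain zero.
kept = np.flatnonzero((lower > 0) | (upper < 0))
print(f"kept feature indices: {kept.tolist()}")
```

Because lasso coefficients pile up at exactly zero, their bootstrap distribution is often far from normal, so a percentile interval (`np.percentile(coefs, [2.5, 97.5], axis=0)`) is a reasonable alternative to the normal approximation.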

As briefly mentioned earlier, a multiple linear regression model was run and the following graph was generated in order to test for multicollinearity amongst the features in the dataset. The figure below shows the result after columns were dropped. The initial version of this graph displayed a high R-squared value of 0.8 for the garage cars and garage area attributes. We therefore dropped garage cars, which had a significant effect on the R-squared value of garage area. We note that Overall Quality and TotalSF (total square footage) still have relatively large collinearity values; however, as they are so important to the prediction, we did not drop them.
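One common way to produce a per-feature R-squared like this is to regress each feature on all of the others; we assume that is what the graph shows. The helper name `r2_against_other_features` is hypothetical and the data is synthetic, with a garage-cars column built to track garage area.

```python
import numpy as np
import pandas as pd
from sklearn.linear_model import LinearRegression

def r2_against_other_features(features):
    """Regress each column on the remaining columns and return its R-squared."""
    scores = {}
    for col in features.columns:
        others = features.drop(columns=col)
        model = LinearRegression().fit(others, features[col])
        scores[col] = model.score(others, features[col])
    return pd.Series(scores)

rng = np.random.default_rng(0)
n = 400
garage_area = rng.normal(480, 200, size=n)
features = pd.DataFrame({
    "GarageArea": garage_area,
    # Built to be strongly related to GarageArea (assumption for the demo).
    "GarageCars": garage_area / 250 + rng.normal(0, 0.3, size=n),
    "LotArea": rng.normal(10000, 3000, size=n),
})

before = r2_against_other_features(features)
after = r2_against_other_features(features.drop(columns="GarageCars"))
print(before.round(3))
print(after.round(3))
```

A high R-squared means the feature is largely predictable from the others; the variance inflation factor is the same quantity expressed as 1 / (1 - R-squared).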

Modelling - Linear Models

We began our modelling by exploring the linear model options, including multiple linear regression, Huber regression, ridge regression, lasso regression, and elastic-net regression. Before we began training our models we double-checked that the assumptions of linear regression were met within the dataset: linearity, normality, constant variance, independent errors, and minimal multicollinearity. We have already touched upon reducing multicollinearity and transforming the data to adhere to linearity in the previous section, but below are a few visuals showing that the other assumptions hold.

Q-Q plot

The Q-Q plot shows the quantiles of the residuals against the theoretical quantiles of a normal distribution, and the closeness of the points to a straight line indicates fairly good normality. Also shown is a residual plot, which shows the spread of residuals across the target values. The even distribution of positive and negative residuals across the data indicates constant variance. Lastly, as a check for independent errors, we computed the Durbin-Watson statistic, which gave a value very close to 2 on a scale from 0 to 4, indicating that the errors show no first-order autocorrelation.
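For reference, the Durbin-Watson statistic is the sum of squared differences between consecutive residuals divided by the sum of squared residuals. The sketch below computes it directly with NumPy on synthetic residuals (not the project's); values near 2 suggest no first-order autocorrelation, while values towards 0 suggest positive autocorrelation.

```python
import numpy as np

def durbin_watson(residuals):
    """Sum of squared successive differences over the sum of squared residuals."""
    residuals = np.asarray(residuals, dtype=float)
    return np.sum(np.diff(residuals) ** 2) / np.sum(residuals ** 2)

rng = np.random.default_rng(0)

# Independent residuals: the statistic should land close to 2.
independent = rng.normal(size=2000)
dw_independent = durbin_watson(independent)

# Strongly autocorrelated residuals (a random walk): the statistic falls towards 0.
autocorrelated = np.cumsum(rng.normal(size=2000))
dw_autocorrelated = durbin_watson(autocorrelated)

print(f"independent: {dw_independent:.3f}")
print(f"autocorrelated: {dw_autocorrelated:.3f}")
```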

Modelling Procedure

As part of our modelling procedure, we took a few different routes and looked at a few different measurements to evaluate how well each model fitted the data. In one instance we performed 5-fold cross-validation and averaged the R-squared across all folds. We also split the training data into an 80%-20% train-test split so that we could evaluate the performance of our models on unseen data whilst still knowing the true target values. Lastly, we created a function to calculate the adjusted R-squared: adding features never decreases the regular R-squared, so the adjusted R-squared is a better measure for comparing the different optimal feature sets.
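The adjusted R-squared mentioned above uses the standard formula 1 - (1 - R²)(n - 1)/(n - p - 1), where n is the number of observations and p the number of features. A minimal version of such a function might look like this; the function name and the example numbers are illustrative, not taken from the project.

```python
def adjusted_r2(r2, n_samples, n_features):
    """Adjusted R-squared: penalizes R-squared for the number of features used."""
    if n_samples - n_features - 1 <= 0:
        raise ValueError("need n_samples > n_features + 1")
    return 1.0 - (1.0 - r2) * (n_samples - 1) / (n_samples - n_features - 1)

# Same raw R-squared, different feature counts: more features, lower adjusted score.
few = adjusted_r2(0.90, n_samples=100, n_features=10)
many = adjusted_r2(0.90, n_samples=100, n_features=40)
print(f"10 features: {few:.4f}")
print(f"40 features: {many:.4f}")
```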

Multiple linear regression model

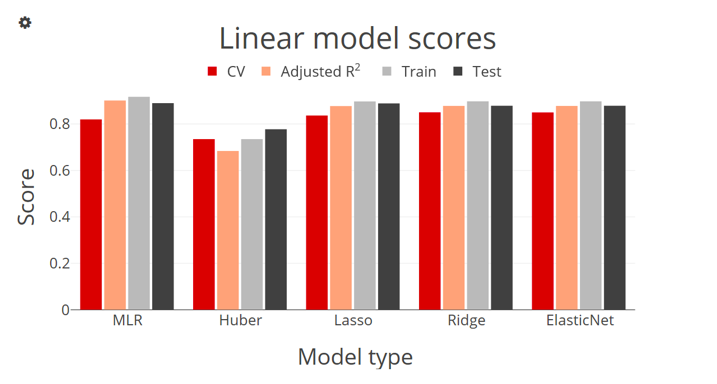

We first looked at a baseline multiple linear regression model. The model performed well, but we recognized the high possibility of multicollinearity because of the large number of features remaining even after our feature selection process. As a result we switched our focus to penalized models: ridge, lasso, and elastic-net. These models penalize large coefficients so as to lower the effect of multicollinearity, increasing bias minimally whilst substantially decreasing model variance. We also used a Huber model, which is known for its robustness to outliers. However, the dataset was large with few outliers, and the Huber loss down-weights large residuals (it treats them linearly rather than quadratically), so it performed the worst of all the linear models.

The elastic-net model performed the best out of the penalized regression models. Most of the models also seemed to slightly overfit the data when we performed the train-test split. As part of our optimal feature selection, we utilized the lasso model’s results to determine the features with the greatest predictive power. As shown in the graphs below we ran all linear models again using the different optimal feature sets.

From the results above, our baseline feature set and optimal feature set 2 performed the best across all models except Huber, which we have already identified as a poor fit in this situation. Comparing the scores alone, our feature selection did little to improve model performance. The optimal feature sets do improve interpretability, however: optimal features 2 achieves almost exactly the same score as the base feature set using only half the number of variables. Again, the regular R-squared never decreases as features are added.
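An elastic-net fit with the train-test comparison used in this section can be sketched as follows. `ElasticNetCV` tunes both `alpha` and `l1_ratio` by cross-validation; the grid of `l1_ratio` values and the synthetic data are assumptions for illustration, not the settings used in the project.

```python
import numpy as np
from sklearn.linear_model import ElasticNetCV
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)

# Synthetic log-price-like target driven by four of fifteen features.
X = rng.normal(size=(400, 15))
coef = np.zeros(15)
coef[:4] = [0.3, -0.2, 0.15, 0.1]
y = 12 + X @ coef + rng.normal(scale=0.1, size=400)

# 80%-20% split, as in the procedure described above.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0
)

# Hypothetical grid of mixing ratios between the ridge and lasso penalties.
l1_grid = [0.1, 0.3, 0.5, 0.7, 0.9, 1.0]
model = make_pipeline(
    StandardScaler(),
    ElasticNetCV(l1_ratio=l1_grid, cv=5, random_state=0),
)
model.fit(X_train, y_train)

enet = model[-1]  # the fitted ElasticNetCV step
train_r2 = model.score(X_train, y_train)
test_r2 = model.score(X_test, y_test)
print(f"alpha={enet.alpha_:.5f}, l1_ratio={enet.l1_ratio_}")
print(f"train R2={train_r2:.3f}, test R2={test_r2:.3f}")
```

A train score noticeably above the test score is the kind of mild overfitting mentioned earlier in this section.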

Modelling - Non-Linear Models

We next explored the non-linear models and how well they performed on the housing prices. Below we present 4 different scores for each of 6 different models. We compare how the mean of a 5-fold CV, adjusted R-squared, and train and test scores vary between non-linear models and our best linear model.

Random forest model

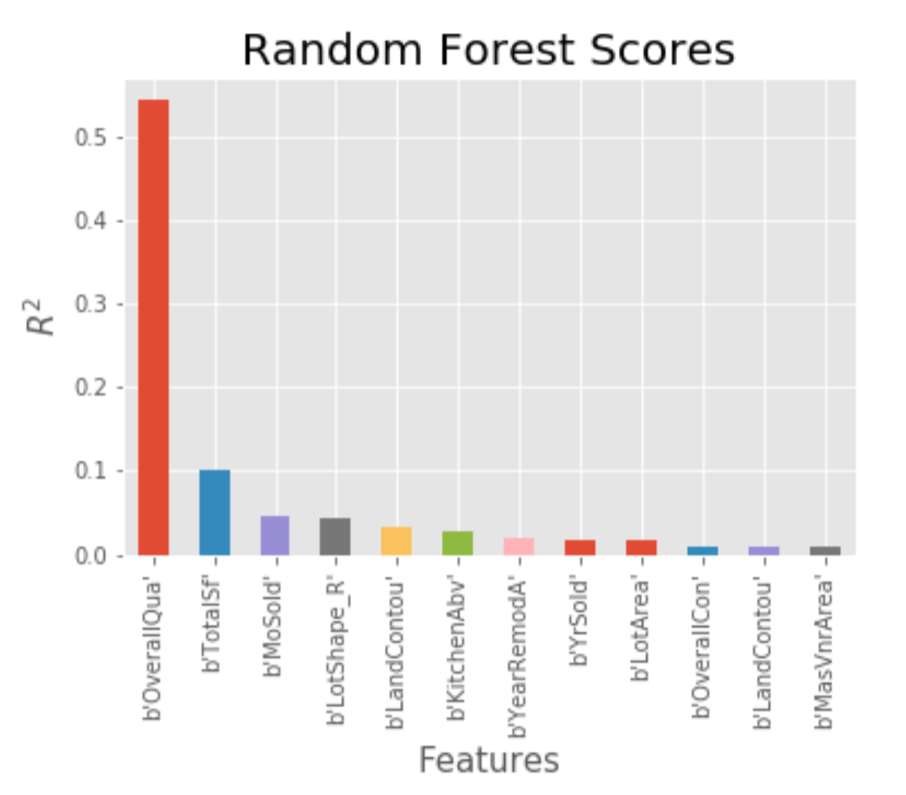

The random forest model performed the best on the training set. Gradient boosting, however, yielded the lowest Root Mean Square Error (RMSE). The non-linear models overfit the data significantly: the graph shows the train score being higher than the test score for all of them. The tree-based models tended to perform better than the linear models, with gradient boosting performing best. Below we examine the feature importances of the random forest model:

It was interesting to see that the important features were, in great part, similar to the results of the lasso regression feature selection: OverallQual (Overall Quality) and TotalSF (Total Square Footage) were the most important features. However, it was a surprise to see MonthSold and YearSold included in the features above, since they had shown little correlation with SalePrice in the correlation heatmap.
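Feature importances of this kind can be read from a fitted scikit-learn random forest via `feature_importances_`. The sketch below uses synthetic data in which the month-sold column is pure noise, so it shows the mechanics only and does not reproduce the result discussed above.

```python
import numpy as np
import pandas as pd
from sklearn.ensemble import RandomForestRegressor

rng = np.random.default_rng(0)
n = 600

# Synthetic features: quality and size drive the target, month sold does not.
X = pd.DataFrame({
    "OverallQual": rng.integers(1, 11, size=n),
    "TotalSF": rng.normal(2500, 700, size=n),
    "MonthSold": rng.integers(1, 13, size=n),
})
y = (
    11 + 0.12 * X["OverallQual"] + 0.0003 * X["TotalSF"]
    + rng.normal(0, 0.1, size=n)
)

forest = RandomForestRegressor(n_estimators=100, random_state=0).fit(X, y)

# Impurity-based importances; they are normalized to sum to 1.
importances = pd.Series(
    forest.feature_importances_, index=X.columns
).sort_values(ascending=False)
print(importances.round(3))
```

Impurity-based importances can favour features with many distinct values, so `sklearn.inspection.permutation_importance` on held-out data is a useful cross-check when a weakly correlated feature ranks unexpectedly high.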

Conclusion and Future Work

We found that fitting a gradient boosting regressor to a vecstack stack of all of the models we used, both linear and non-linear, achieved the best score (lowest RMSE) of 0.12909. Our first-run model, elastic net, achieved 0.13730 with optimal hyperparameters alpha (lambda) = 0.00016 and l1_ratio (rho) = 0.384.
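The stacking idea can be sketched with scikit-learn's `StackingRegressor`, which is swapped in here in place of the vecstack package used in the project. Two base models stand in for the full set, the hyperparameters are arbitrary, and the data is synthetic, so the printed RMSE is not comparable to the scores above.

```python
import numpy as np
from sklearn.ensemble import (
    GradientBoostingRegressor,
    RandomForestRegressor,
    StackingRegressor,
)
from sklearn.linear_model import ElasticNet
from sklearn.metrics import mean_squared_error
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)

# Synthetic target with one linear and one non-linear effect.
X = rng.normal(size=(500, 6))
y = 12 + 0.3 * X[:, 0] + 0.2 * X[:, 1] ** 2 + rng.normal(scale=0.1, size=500)

X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0
)

# Base models produce out-of-fold predictions; the gradient boosting
# regressor is then fitted on those predictions as the meta-model.
stack = StackingRegressor(
    estimators=[
        ("enet", ElasticNet(alpha=0.001, l1_ratio=0.5)),
        ("rf", RandomForestRegressor(n_estimators=50, random_state=0)),
    ],
    final_estimator=GradientBoostingRegressor(random_state=0),
    cv=5,
)
stack.fit(X_train, y_train)

rmse = np.sqrt(mean_squared_error(y_test, stack.predict(X_test)))
print(f"test RMSE: {rmse:.4f}")
```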

For future work, we determined it would be necessary to have better statistical feature and model selection. The Akaike Information Criterion (AIC), Bayesian Information Criterion (BIC) and Recursive Feature Elimination with Cross Validation (RFECV) would all be useful in building better models through improved analyses for feature selection.

We will also return to our missing data imputation process and try k-nearest neighbours (KNN) imputation instead, to analyze its effect on the results. In addition, we are curious to see what the results would be if we had used a label encoder for our nominal categorical variables rather than one-hot encoding, as this tends to improve tree-based models' performance. Finally, we will return to do further feature engineering, including outlier removal and feature scaling.

Please follow along as we look to improve our housing price prediction modelling, and explore our GitHub page to see our Python code.