Studying Data to Predict Housing Prices

The skills the author demoed here can be learned through taking Data Science with Machine Learning bootcamp with NYC Data Science Academy.

Introduction

Background

The Ames Housing dataset was compiled by Dean De Cock for use in data science education. He proposed this dataset to data scientists as an alternative to the often cited Boston Housing dataset.

The Kaggle competition challenges (aspiring) data scientists to predict the final price of each home. This is not going to be an easy task. The data set has 70 explanatory variables that describe different aspects of a residential home in Ames, Iowa.

Predicting house prices is a challenging endeavor as there are a multitude of factors and variables the need to be taken into account when it comes to real estate valuation. In this challenge, creativity and ingenuity is key to be able to find the important variables that can give the most accurate prediction of house prices.

Predicting housing prices is a challenge; the task involves many variables and takes some creative thinking to pinpoint the features that actually matter in order to arrive at an accurate prediction. A recent competition on Kaggle (an online data science platform) featured housing price predictions with data from Ames, Iowa.

Visit our repository for all related codes.

Data cleaning and processing

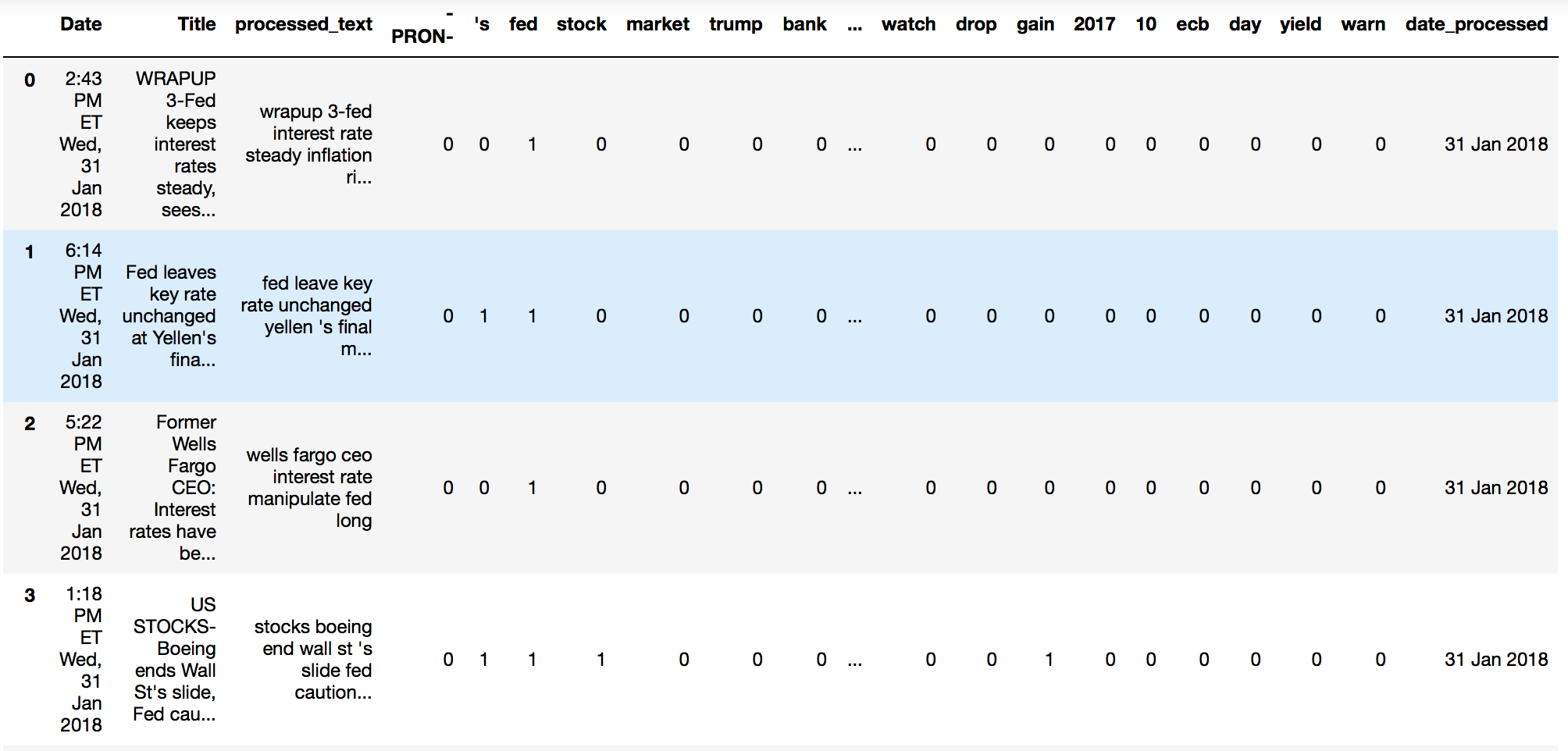

The Data Set

The Kaggle competition provided the data as as train.csv (with house prices), and test.csv (new observations with all the same features as train.csv, but missing the Sale Price).

We processed the train.csv dataset, performed feature engineering by a number of methods and optimized model hyperparameters using K-fold splitting, performed a stacking algorithm of different models based on train/test optimization.

We predicted the house prices for the houses listed in the test.csv file and saved it as the required format for Kaggle submission.

Missing data

- Top columns with null values:

With first glance we noticed that a lot of features have None value. However, with further analysis it shows that most cells with “NA” values actually mean “No”.

Example : PoolQC - Pool Quality - “NA” means “No Pool”.

With this finding, we updated all meaningful “NA” values to “No” or numeric 0

Removing Outlier

Two houses with the most SF(square feet) had average sale price. Based on the data publisher suggestion[1] we decided to remove these two outliers.

Dummify Categorical Features

- Nominal category features:

- Dummy with removal of most common value

- Ordinal category features:

- Digitalize

- Example: For KitchenQual (Kitchen Quality) feature, the original data values are Po(Poor), Fa (Fair), TA (Typical/Avg), Gd (Good), Ex (Excellent). We converted the values to numeric 1,2,3,4,5

Feature combination

Based on our understanding of the dataset , we have also implemented the following strategy to create some meaningful new features:

- Create BsmtScore

- (BsmtType1 * SF1 + BsmtType2 * SF2) / (SF1 + SF2)

- Create GarageScore (GarageQual/GarageCond/GarageFinish too correlated)

- (GarageCond + GarageQual + GarageFinish) / 3

- Define TotalBath

- FullBath+BsmtFullBath+0.5*HalfBath+0.5*BamtHalfBath

- Convert “Year Built” to “Years Since” concept

- YearsAgoBuilt, YearsSinceRemodel, YearsSinceSale

- Convert SaleMonth (1-12) to Seasons

- Dec-Feb = Winter, Mar-May = Spring, Jun-Aug = Summer, Sep-Nov = Autumn

Feature Removal

- Features with less than 4 values:

- After dummy all the nominal category features, there are 158 columns. 19 features have less than 4 values in the training dataset and were removed to reduce statistical noise

- Check Correlation of each feature. Used 0.70 as the threshold to remove features that may cause multicollinearity problem. Features removed are:

| MSSubClass_90 | SaleCondition_Partial | MSSubClass_190 | GarageCond | MSSubClass_80 | MSSubClass_50 |

| PoolArea | GarageCars | Fireplaces | MSZoning_FV | RoofMatl_Tar&Grv | TotRmsAbvGrd |

| MSSubClass_85 | MSSubClass_60 | MSSubClass_45 | MSSubClass_120 |

- Other special features need to be removed:

- GarageYrBuilt-- Age of garage: Impossible to reconcile homes without a garage, as this is a numeric, not categorial, value.

- Misc Feature -- MiscFeature and MiscValue (value of Misc Features)

- MSSubClass -- Can be directly inferred by combination of HouseType, BldgType, and YearBuilt. Often highly (>0.9) correlated with HouseType

Data type checking

After all data cleaning work. We are ready to move to the feature engineering work. The last thing is to make sure all data now are in numeric type.

Data on Feature Engineering

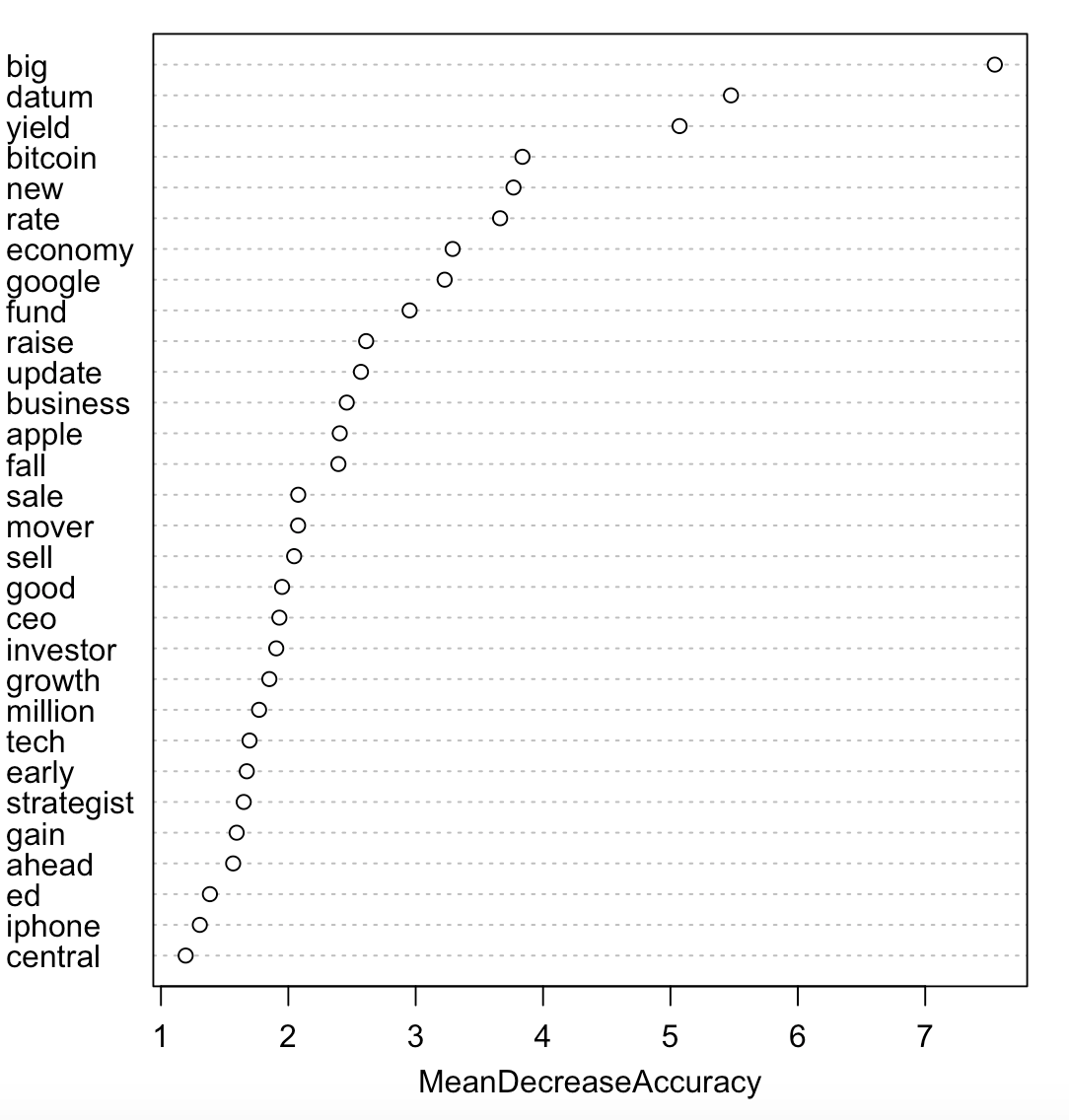

Three models were used for feature engineering:

- AIC - forward selection, we got a 64-feature list

- Lasso - lambda was decided by using 5-fold cross validation. With penalty we got a 110-feature list

- Boost - based on Gradient Boost, we selected the most important 70-feature

In the next step, we manually compared the 3 feature lists. We kept the features which are listed as important or significant in all lists. For features not exist in all three lists, we trained the model with/without the features. Then compared the training result (RMSLE).

We finalized a 61-feature list. For details please refer to appendix table 1

Modeling and hyperparameter optimization:

Data was training using five models to get the prediction of the house prices:

- Linear - K-Fold testing of multiple linear regression

- Elasticnet - 5-fold Cross Validation was used to get the optimal alpha and rho hyperparameters balancing Ridge/Lasso regularization algorithms

- Ridge - 5-fold Cross Validation was used for lambda hyperparameter choosing, numeric features are all standardized to avoid large number impacts of penalization

- RandomForest -- K-Fold testing and hyperparameter optimization using the RandomForest Regressor algorithm from sklearn

- GBoost_AIC - K-Fold hyperparameter optimization and modeling using GradientBoosting Regressor from sklearn

Model stacking and train/test comparison:

Stacking model, where a 24% XGBoost, 36% Gradient Boost, 20% Random Forest, and 20% linear stacked model was found to be best against the test set.

Score evaluation

| Test Location | RMSLE Score |

| Local | 0.1126 |

| Kaggle | 0.1301 |

Data Optimization:

Based on the training and test result. We noticed that there is an overfitting issue.

Because during feature engineering phase, a lot of manually comparison work was conducted to optimized the training score (training RMSLE). This can be the main reason of the overfitting.

We decided to simplify the feature selection process. Instead of using 3 models we focused the feature engineering work with AIC model only. In order to get a competitive feature list, we decided to use “both selection” procedure ( Begin with either a model that only has an intercept term or a model that includes all parameter terms, then sequentially add or remove the predictor that has the most/least impact on the model, respectively).

In this way, we finalized a 71-feature list (Appendix table 2)

Data was still trained with the same five models mentioned in the modeling section. We used the same model stacking strategy. Stacked grid search: 40% gBoost, 25% Linear, 35% RandomForest.

This time, the training score was a little higher than our last round training score. However, we noticed that the test RMSLE score is much lower. The training and test score are closer to each other.

Score evaluation

| Test Location | RMSLE Score |

| Local | 0.122 |

| Kaggle | 0.118 |

To be continued:

- For feature removing, instead of randomly removing one of the highly related features (high correlation value), we could do a comparison test to see which feature may contribute better in the model.

- For some numeric features we didn’t consider the distribution of the data. We could do some further check to consider features which may not be normally distributed. According to the result, we could use log or square strategies to normalize data.

Reference:

[1] De Cock, D. Journal of Statistics Education, Vol. 9, #3 (2011)

Appendix:

Table 1: 61-feature list selected by using AIC, Lasso, Boost

| TotalSF | LotArea | BsmtExposure | Neighborhood_NridgHt | BsmtQual | Exterior_MetalSd | MasVnrType_BrkFace |

| TotalBsmtSF | Street_Grvl | Exterior_BrkFace | Neighborhood_Crawfor | OverallQual | ScreenPorch | Neighborhood_NoRidge |

| GarageArea | TotalBath | KitchenQual | SaleType_New | Neighborhood_NWAmes | Foundation_Slab | BldgType_2fmCon |

| Exterior_Stucco | PoolQC | RoofMatl_WdShngl | BldgType_Duplex | HouseStyle_1.5Fin | HouseStyle_SLvl | GarageType_BuiltIn |

| GarageType_No | GarageScore | Neighborhood_Sawyer | ExterCond | Neighborhood_BrDale | Exterior_CemntBd | Neighborhood_Blmngtn |

| BsmtScore | Neighborhood_StoneBr | BldgType_Twnhs | MSZoning_FV | Neighborhood_BrkSide | MoSold_Spring | GarageType_Detchd |

| OverallCond | RoofStyle_Hip | BedroomAbvGr | Condition_Feedr | BsmtCond | Neighborhood_NPkVill | BldgType_TwnhsE |

| YearsAgoBuilt | TotRmsAbvGrd | SaleCondition_Abnorml | MasVnrArea | Functional | Condition_Artery | LotConfig_CulDSac |

| Exterior_ImStucc | Fence_GdPrv | HouseStyle_2Story | MasVnrType_Stone | HouseStyle_SFoyer |

Tabel 2 : 71-feature list selected by AIC both selection procedure

| TotalSF | GarageAge | YearsSinceSale | SaleType_New | Neighborhood_Edwards | Exterior_HdBoard |

| TotalBsmtSF | GarageCars | KitchenQual | Fireplaces | CentralAir_N | LowQualFinSF |

| LotArea | TotalBath | Neighborhood_NoRidge | ExterQual | Neighborhood_BrkSide | Neighborhood_Mitchel |

| GarageArea | EnclosedPorch | SaleCondition_Family | MSZoning_FV | LotConfig_FR2 | KitchenAbvGr |

| LotFrontage | ScreenPorch | 3SsnPorch | Condition_Feedr | GarageFinish | LandSlope_Mod |

| OverallQual | Neighborhood_StoneBr | BedroomAbvGr | Condition_RRAe | GarageQual | MasVnrType_BrkFace |

| BsmtScore | PoolArea | SaleCondition_Abnorml | LotConfig_CulDSac | GarageType_CarPort | MSSubClass_50 |

| OverallCond | TotRmsAbvGrd | Condition_Artery | Neighborhood_Veenker | Electrical_FuseA | Street_Grvl |

| YearsAgoBuilt | Functional | Neighborhood_NridgHt | Neighborhood_Somerst | Exterior_MetalSd | HeatingQC |

| MasVnrArea | BsmtExposure | Neighborhood_Crawfor | FireplaceQu | Exterior_WdShing | SaleType_ConLI |

| WoodDeckSF | Exterior_BrkFace | BsmtQual | MoSold_Winter | MSSubClass_70 | MasVnrType_BrkCmn |

| Foundation_BrkTil | GarageType_Basment | Neighborhood_NWAmes | Neighborhood_ClearCr | Foundation_Slab | |