Using Data to Analyze: Ames House Pricing

Introduction & Background

Real estate transactions are fraught with anxiety because neither the seller nor the buyer wants to get the short end of the stick. Ascertaining the value of a home is not quite as simple as the old maxim of “location, location, location,” though neighborhood does play an important role. But other features also are important in determining the right price for a house. Our primary objective was to create and assess regression models to accurately predict the home prices in Ames, Iowa based on the data set acquired from Kaggle.

The dataset consists of over 2500 house sale records between 2006 and 2010. It consists of 80 initial features with 37 numeric type features and 43 categorical string type features. Given the goal of the project, the Sale Price was our target variable.

Exploratory Data Analysis (EDA)

To gain a better understanding of the data we were working with, we started with some basic exploratory analysis. As our first step, we verified the number of missing values to determine if they contributed to our model or if their exclusion would improve them.

While the dataset appears to have a large number of missing values, the majority of NA values indicate that a property does not have a particular feature. For example, the missing values for pool quality ("PoolQC") are houses that do not have pools. This is also the case for feature variables like fireplace, alley, fence, etc.

Distribution of our dependent variable. (Sale Price)

To gain a better understanding of our dependent variable, we created a histogram plot of the Sale Price to understand its distribution spread. Looking at the first plot, we can see that our Sale Price skews to the right. With this in mind, we performed log transformation on the Sale Price to normalize the distribution in order to gain accurate statistical analysis and improve performance of our linear models.

As shown in the graph below, we found the sale type categorized as ‘Other’ has the most significant variability and the greatest amount of outliers among all the sale types in our dataset.

The median price of ‘Other’ is closer to the bottom of the box, and the whisker is relatively shorter on the lower end of the box, indicating that the distribution of the data for ‘Other’ is skewed toward the higher prices. Also, when we do a value count of the Sale Type variable, we found ‘Other’ has 2396 counts as a top type, while the second most frequent type --’Home just constructed and sold’-- has only 79 counts. Because ‘Other’ is such a vague label, we need to more carefully examine the label to give it a more explicit definition in the future data collecting process.

Besides “Other,’’ the box with the largest interquartile range and highest median sales price is ‘Home just constructed and sold,’ as expected. After the new construction type, ‘Contract 15% Down payment regular terms’ homes have the second-largest median price and extensive interquartile range compared to houses labeled as ‘Contract low Down payment and low interest’ with the lowest median price and skewed-to-upper-quartile distribution.

Such a difference reminds us that since better quality houses tend to have higher sales prices (as shown in the modeling section), a targeted marketing strategy combined with a desirable location for such homes may help facilitate the sales process and justify the higher prices.

Another exciting finding happened when we drew the boxplot above. House foundation with poured concrete made the houses price-inelastic with high variability and many outliers. Homes built with stone, on the other hand, tend to have a sizable interquartile range and no outliers. For housing developers in Ames, choosing poured concrete as the foundation seems to be a safe choice, while choosing stone as a foundation for houses at a lower price range appears to be a stable choice.

While exploring our Ames dataset, we came across another interesting find when comparing the sale price against overall house condition ratings, as seen above. While the overall quality rating of the house showed a linear relationship with sale price, with sale price increasing with the quality rating, the overall house condition did not display this direct relationship.

On the contrary, the houses with condition ratings of 5 seemed to have a higher average sale price than houses with higher ratings from 6 to 9, with an average sale price of $202,167.33. This is higher than the average sale price of the dataset overall, $178,059.62, and the average sale price for condition rating 9, $199,765.85. Naturally, we wanted to investigate further.

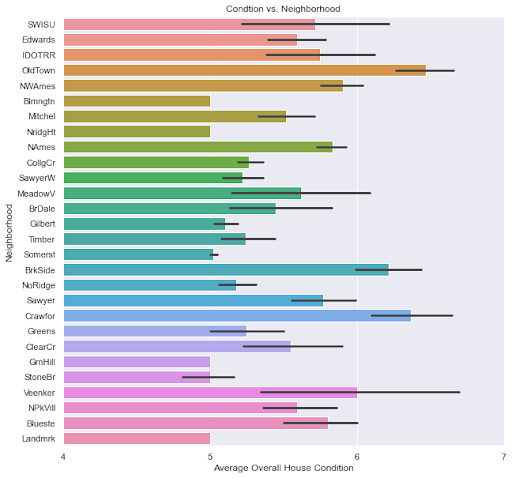

Next we looked at the average overall condition compared to the neighborhood of the house. We were able to see that certain neighborhoods had higher average conditions, while others were lower. In particular, we saw that OldTown, Brookside and Crawford had higher average ratings, surpassing 6, while Northridge Heights, Stone Brook, Greens Hills and Somerset had lower ratings averaging closer to 5.

Finally, we decided to compare the neighborhood to average sale price to further explore this relationship. When plotting this, we saw that many of the neighborhoods with higher average sale prices were the same neighborhoods with lower average ratings, and vice versa. Therefore, we concluded that neighborhood carried more importance than house condition when determining the sale price.

It appears that people value desirable neighborhoods and are willing to pay more money for a house in neighborhoods, such as Northridge Heights, Stone Brook, Greens Hills and Somerset. What specific benefits these neighborhoods have over others would be a topic for further analysis.

Data Preprocessing Methodology & Feature Engineering

As alluded to earlier, due to possible multicollinearity and presence of mixed data types in our dataset, multiple strategies/approaches were attempted for feature selection and feature engineering. For illustration purposes, below graph visualizes the distribution of sale price in Ames, Iowa based on “Year Built.”

From the above graph, we are able to identify that the rate of increase in sale price for homes built after 1980 is exponentially higher than for homes built before 1980. The same trend persists for homes remodeled after 1980-1990. Based on this knowledge, we created a new feature which checks whether a home was built after 1980 or was remodeled after 1980. Furthermore, since original features exhibited strong correlation with the sale price as well, they were retained.

For some features like, porch type, wood deck square feet, second basement type area, pool area, we discovered that additional square footage or type have minimal impact on the sale price. As illustrated in the graphs below, these features have no inherent correlation and provide no insightful information for our model. Instead, these features were transformed to indicate whether a property has the aforementioned features regardless of their type or square footage.

We also worked on tackling problems associated with imbalanced classes in our categorical features. As mentioned earlier, some of our categorical features are highly imbalanced.

To alleviate this problem while maintaining the authenticity of our data, we decided to combine minority classes of certain features. It is important to note, minority classes were combined based on a strong positive relationship to sale price as to not to interfere with the authenticity of our dataset.

In some cases, to counter multicollinearity and high co-variance, we combined features with the aim to reduce dimensionality while maintaining high model performance. As an example, the below graph shows how basement, 1st floor and 2nd floor square footage were combined to create a new variable that encapsulates this information. Although this method netted positive results, it was also evident in some of our models that the original features were also imperative to our model performance.

In some of our models, this method was used interchangeably to determine whether or not we should retain our original features while also adding a new feature indicating total square footage. Albeit in some of our models the newly created total SF columns performed magnificently, it was determined that in our final model it will not be used due to information loss in the case of dropping original features and high correlation with Grd. Live area.

We also analyzed NA values in our dataset to find optimal ways to compute the missing values. We were able to determine that missing values in our data are non-random. Rather, missing values in the dataset indicate the absence of certain features in a property. Based on this knowledge, some features were imputed with the use of IterativeImputer (numerical features) and KNN Imputer (categorical features) of the SKLearn library.

As mentioned earlier, some features like PoolQC, Misc., Pool Area were dropped outright as the ratio of missing values surpassed 95%. Some columns such as, Year Sold and Sale Type, were dropped due to possible data leakage concerns (Data Leakage Explained).

Besides using statistical testing and correlation matrices, we also utilized our Lasso model (more on this below) as a method of feature selection. Based on the combination of these methods, we attempted different models with varying degrees of dimensionality to determine and compare model performance. Although most effective models were contingent on high dimensionality, we were able to use the aforementioned methods to reduce dimensionality while also maintaining the robustness of our models.

These are some of the methods we used as part of our feature selection/engineering. As it would be extremely lengthy and, in all likelihood, very monotonous, not all of the methodologies were included in this section.

We started our modeling process by doing a multi-linear regression using backward elimination stepwise regression. Our initial data cleaning gave us over 130 features, and we wanted to reduce that amount to find the top 40 features that affect the sale price. We first split the data set into a training set and testing set and then ran the backward elimination stepwise regression.

Overall quality, location(neighborhood) of the house, Year built, Garage area, Total basement square feet and Presence of Cul-de-sac are among the main features the model identified as affecting the sale price. We achieved an R2 value of .9257 for the Multi Linear Regression model and then plotted the real values of the test set against the predicted values to observe the strength of the relationship. The figure below shows a strong relationship between the values, but we wanted to attempt to penalize the model by performing a Lasso regression analysis to gain a better understanding of the dataset.

Another model we examined was the elasticNet model, because it is a penalized linear regression model that includes L1 and L2 penalties, elasticNet combines the benefits of ridge and lasso regression. After cleaning the data, feature selection, pipeline and gridsearch to find the best alpha and L1 values for our model, we achieved an accuracy score of 0.929 for our training data and 0.929 for our test data. We then plotted the real values against the predicted values for the training and test data and observed the strength of the linear relationship of the data, as well as the strength of our model.

Then, we looked at the coefficients for our data to see which features influenced our model. We saw that the Greens Hills neighborhood was given the highest positive coefficient for neighborhood, but is not featured in our next Lasso model. We also observed that near positive off-site features--park, greenbelt, etc and Commercial zoning– were given negative coefficients, reinforcing the importance of location for house buyers.

The next model we completed was the LassoCV regression model. The LassoCV model was a strong model for our dataset because it naturally selected subsets by shrinking coefficients with minor contributions to the model to zero, so that we don’t have to build many different models with different subsets. Another benefit of Lasso regression is that it favors subsets of features that have less collinearity, which would improve the interpretability of our model.

We proceeded to use these benefits on our cleaned and prepared dataset to calculate and use the best alpha to achieve optimal accuracy scores. Ultimately, we achieved accuracy of 0.918 on the training data and 0.930 on the test data. Again we plotted the real values against the predicted values for the training and test data and observed the strength of the linear relationship of the data, as well as the strength of our model.

When looking at the importance of the features for our model, we see that LassoCV chose 76 features and eliminated the other 11 features. Ground floor living area and overall house quality were given the highest importance, with many other important features relating to square footage or neighborhood. Basement exposure and square footage had various effects on the model, showing how one area of the house could potentially affect the sale price in many ways.

Of the neighborhoods featured, Somerset, Crawford, Northridge Heights and Stone Brook had the most positive influence on sale price. They are the same neighborhoods we saw previously when comparing house condition against the neighborhood and sale price.

Closing Remarks About Data

As anticipated, due to the linearity of our dataset, all linear models performed well when compared to more complex models. Though SVR (Support Vector Regressor) and GBR (Gradient Boosting Regressor) models performed better with the training dataset (~98% r2 score), both of these models generated identical test scores when compared to our regularized linear models. Thus, we decided that regularized linear models are preferred for this dataset due to its simplicity and interpretability (White-Box vs Black-Box).

As for investors, there are some features to prioritize when buying properties in Ames, Iowa. For starters, since the dataset is for the period of 2006-2010 and we are currently living in an entirely different economy, you need a time machine to go back in time and start investing in Ames real estate. On a serious note, for our models we found that Neighborhood, Year Built, Living Area Size, Overall Quality, Garage Cars/Area, Total Bedrooms, Lot Config. (cul-de-sac), Year Remodeled had an immense impact on the sale price of a property. Investors can use this information to exploit micro and macro economic trends and maximize profit.

As an example, for homes with more than 1.0 Garage Cars (identical to Garage Area) every additional 1.0 Garage Cars space equates to about $9,000 increase in average home price and peaks at 3.0 where the relationship subsides and eventually inverses.

Another example would be the counter-intuitive nature of the relationship between Neighborhood, SalePrice and Condition. For some neighborhoods like Northridge Heights, Stone Brook and Somerset, lower average property condition and higher average property value is analyzed. This suggests that investors should prioritize location over the condition of a property.

Moreover, investors can also take advantage of seasonality trends present in our dataset. More specifically, investors can net additional revenue of approximately $16,000 on average if a property is sold in January. Investors should also avert selling properties during the months of March-May when the average home value tends to drop to its lowest point. On the other hand, investors looking to buy should do so in the months of March-May and save about $7,000 on average.

As illustrated earlier, home Year Built has a significant impact on Sale Price. For homes built before 1980, average sale price is expected to be around $145,000, while for homes built after 1980, this figure jumps to $224,000. Moreover, investors can increase property value (built after 1980) by remodeling/renovating. On average, the sale price of homes built after 1980 and remodeled after 2000 is about $15,000 more than homes with no remodeling done after 2000. When implementing this strategy, investors should prioritize renovating bathroom(s) and kitchen before moving to other features.

Additionally, for properties with two bedrooms and one bathroom, investors are advised to build an additional bathroom (half or full) if home layout permits. The average difference of sale price between 2BR/1BTH and 2BR/2BTH properties is substantial – almost $100,000 or 55%(!).

These are some, not all, of the recommendations we can give to potential investors looking to buy properties in Ames, Iowa. To learn more about similar micro and macro economic trends in the United States, feel free to reach out for possible consultations. Contact information is referenced above.

Future Work with Data

The data was taken from 2006-2010; we would like to add data from 2011-2022 to have it reflect more current trends. With much more data, we would likely achieve higher performance from our models and could even potentially use more complicated models. A future path of investigation would be to source more data for our model training.

We would also like to reduce feature dimensionality with more efficient feature engineering. We could do this by constructing a more in-depth exploratory analysis of the features and even combine some similar features to reduce multicollinearity.

Adding external data to add features to the analysis, such as inflation, unemployment data, CPI (Consumer Price Index), housing market trends, & other economic factors to gain a deeper understanding of the housing market at any particular time frame would be imperative to our research.

The skills I demoed here can be learned through taking Data Science with Machine Learning bootcamp with NYC Data Science Academy.

Connect on Linkedin!

Tigran Vardanyan

Amanda Manfredo

Chance Xu

Raunak Padore

Visit Github for source code.