Archive Intimate Partner Violence News Documents

Project GitHub | LinkedIn: Niki Moritz Hao-Wei Matthew Oren

The skills we demoed here can be learned by taking the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

There are many resources for archiving incidents of "gun violence" that the media cites in news reports. However, there is a serious lack of effort when it comes to tracking intimate partner violence (IPV) in the public domain. Because general gun violence archives collect reports of incidents from across the nation, where definitions of relationships and of the crimes that involve them differ, they are too broad for serious Intimate Partner Violence Intervention (IPVI) research. In addition, a large number of serious IPV offences involve violence without firearms, so archives that focus on incidents involving guns leave those offences out. These issues make it difficult to know the true scope of IPV offences. Therefore, our team was tasked with engineering a prototype for archiving serious IPV incidents that would allow for a robust analysis of IPV while circumventing the problems introduced by using more general gun violence archives.

The Data

We decided to use a cross-section of the Gun Violence Archive to get URLs for violent offences that involved "significant others". Because we needed an alternative class to IPV, we also pulled URLs for violent offences that did not involve "significant others." From this we received about 3,200 URLs to IPV news documents and about 3,200 URLs for non-IPV news documents.

Faced with the task of scraping thousands of different domains with different page structures, we developed a naive scraper that would scrape bulk text from these URLs and validate that it was news content. From this we were able to collect a data set of news documents, 2,729 labeled as IPV and 2,720 labeled as non-IPV. This gave us a volume of data suitable for training a language model that can categorize articles as being related to an IPV offense in real time.
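The post does not describe how the scraper pulled text or checked that it was news content, so the following is only a minimal sketch of one naive approach: collect the text inside paragraph tags and accept the page if it has enough words. The names `ParagraphText`, `extract_text`, `looks_like_news` and the `min_words` cutoff are assumptions made for illustration, not the project's actual code.

```python
from html.parser import HTMLParser


class ParagraphText(HTMLParser):
    """Collects the text found inside <p> elements, ignoring everything else."""

    def __init__(self):
        super().__init__()
        self._in_paragraph = False
        self.paragraphs = []

    def handle_starttag(self, tag, attrs):
        if tag == "p":
            self._in_paragraph = True
            self.paragraphs.append("")

    def handle_endtag(self, tag):
        if tag == "p":
            self._in_paragraph = False

    def handle_data(self, data):
        # Text in nested inline tags (e.g. <b>) still counts as paragraph text.
        if self._in_paragraph:
            self.paragraphs[-1] += data


def extract_text(html):
    """Return the page's paragraph text as one whitespace-normalized string."""
    parser = ParagraphText()
    parser.feed(html)
    return " ".join(" ".join(p.split()) for p in parser.paragraphs if p.strip())


def looks_like_news(text, min_words=50):
    """Hypothetical validation rule: enough words to plausibly be an article."""
    return len(text.split()) >= min_words


sample_html = (
    "<html><body><nav>Home</nav>"
    "<p>Police said  the\nsuspect was arrested.</p><p> </p>"
    "<p>He is due in <b>court</b> Monday.</p></body></html>"
)
sample_text = extract_text(sample_html)
```

A tag-agnostic rule like this works across domains with different layouts, at the cost of occasionally keeping boilerplate paragraphs or dropping articles that do not use paragraph tags.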

Feature Engineering

We used term frequency-inverse document frequency (TF-IDF) to engineer features for our classification model. TF-IDF measures how much important information a word in a document provides relative to a corpus. It is calculated as the product of term frequency (TF) and inverse document frequency (IDF) for every word in the corpus. The formulas for term frequency and inverse document frequency can take many forms. We used scikit-learn's TF-IDF implementation, which uses the raw count as the term frequency of each word in a document. For inverse document frequency it uses ln(N/DF)+1, where N is the number of documents and DF is the number of documents that the word appears in (with the default smooth_idf=True setting, scikit-learn adds 1 to both N and DF inside the logarithm). By default it then applies the L2 norm to each document's term vector, which ensures the scores are between 0 and 1.

So, for every word in the corpus we now have a score of how important that word is to each document. A high TF-IDF for a word w indicates that w appears in the document but is rare across the corpus, and a low TF-IDF means w shows up in the document but is common in the corpus. A TF-IDF of zero means that the word does not show up in the document but does show up elsewhere in the corpus. Now that we have useful numerical information about each document, we can begin to train a model that accounts for how important each word is for a given class (i.e. IPV vs non-IPV).
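To make the scoring concrete, here is a small pure-Python sketch of the calculation described above: raw-count TF, the unsmoothed IDF ln(N/DF)+1, and L2 normalization per document. The function name `tfidf`, the whitespace tokenizer, and the three toy sentences are assumptions for illustration; the project itself relied on scikit-learn rather than hand-rolled code.

```python
import math
from collections import Counter


def tfidf(docs):
    """Return one {term: score} dict per document.

    Uses raw-count TF, IDF = ln(N / DF) + 1, and L2 normalization per document.
    Terms absent from a document are simply missing from its dict (score zero).
    """
    tokenized = [doc.lower().split() for doc in docs]
    n_docs = len(tokenized)
    # DF: the number of documents each term appears in.
    df = Counter(term for tokens in tokenized for term in set(tokens))
    idf = {term: math.log(n_docs / count) + 1 for term, count in df.items()}

    rows = []
    for tokens in tokenized:
        tf = Counter(tokens)
        raw = {term: count * idf[term] for term, count in tf.items()}
        norm = math.sqrt(sum(value * value for value in raw.values()))
        rows.append({term: value / norm for term, value in raw.items()} if norm else {})
    return rows


# Toy corpus invented for this example.
docs = [
    "police arrested the husband",
    "police arrested the suspect",
    "the suspect fled",
]
scores = tfidf(docs)
```

In the first document, "husband" appears in only one of the three documents and so outscores "police" (two documents), which in turn outscores "the" (all three). A word such as "fled" has no entry for the first document, which corresponds to a score of zero.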

The Model

We decided to use regularized logistic regression for the binary classification task at hand. We had enough data to run cross-validation (CV) and search for hyperparameters that would help reduce the variance that comes with such a high-dimensional data set. This model slightly outperformed a multinomial naive Bayes model trained with CV. The best logistic regression model in the CV had an R² of .85.
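A setup along these lines could be sketched with scikit-learn as follows. This is an illustration under stated assumptions, not the team's actual training code: the eight toy headlines, the grid of C values, the two-fold CV, and the accuracy scoring are all invented here to keep the example small and runnable.

```python
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV
from sklearn.pipeline import Pipeline

# Toy stand-in corpus (invented for illustration): 1 = IPV, 0 = non-IPV.
texts = [
    "man shot his wife during an argument at home",
    "woman stabbed by her boyfriend after a dispute",
    "husband arrested for assaulting his wife",
    "ex-girlfriend attacked by former partner outside her home",
    "store clerk shot during an armed robbery",
    "two strangers injured in a drive-by shooting",
    "teen wounded in a shooting outside a nightclub",
    "police investigate a robbery at a gas station",
]
labels = [1, 1, 1, 1, 0, 0, 0, 0]

# TF-IDF features feed a logistic regression, which is L2-regularized by default.
pipeline = Pipeline([
    ("tfidf", TfidfVectorizer()),
    ("clf", LogisticRegression(max_iter=1000)),
])

# C is the inverse regularization strength; smaller values regularize more.
param_grid = {"clf__C": [0.1, 1.0, 10.0]}
search = GridSearchCV(pipeline, param_grid, cv=2, scoring="accuracy")
search.fit(texts, labels)

# Estimated probability that a new headline belongs to the IPV class (label 1).
ipv_probability = search.predict_proba(["man charged with assaulting his wife"])[0, 1]
```

Putting the vectorizer inside the pipeline means it is refit on each training fold, so the cross-validation scores are not inflated by vocabulary statistics from the held-out documents.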

The Prototype

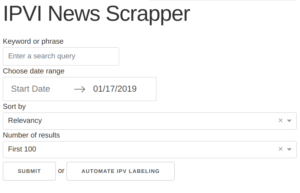

With this model, we created a prototype application that can be used to automate the classification and archiving of IPV news articles. We built it in Dash as an interface to a news query API that returns information about an article, including its URL.

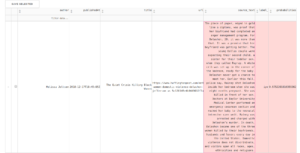

Because the interface is built in Dash, even an end user with little technical skill can search the web for potential IPV articles and download them to be saved in the IPV archive. When the user has entered a manual query, she is presented with all matches in the form of a data frame.

She can then manually save articles to the global IPV archive, which grabs the text via the same naive scraper used to get our training documents.

This process can also be automated. The button labeled "Automate IPV Articles" activates the IPV classification model. Once clicked, it returns a data frame of articles along with a prediction score showing how confident the model is in each predicted label. The model has a probability estimate of 97.5% that the following article is about IPV.

At this point you can manually add the article to the IPV archive or add all articles that meet a certain estimated probability threshold. For example, you can add all articles published on a certain day from a certain domain that have a probability estimate above .90 of being about IPV.
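The threshold-based selection described above might look something like the sketch below. The function `select_for_archive`, the dictionary keys (`url`, `domain`, `published`, `ipv_probability`), and the example.com records are hypothetical; the prototype's real data frame columns are not given in this post.

```python
from datetime import date


def select_for_archive(articles, threshold=0.90, published_on=None, domain=None):
    """Keep articles whose IPV probability is above the threshold.

    Optionally restrict the selection to one publication date and one domain.
    """
    selected = []
    for article in articles:
        if article["ipv_probability"] <= threshold:
            continue
        if published_on is not None and article["published"] != published_on:
            continue
        if domain is not None and article["domain"] != domain:
            continue
        selected.append(article)
    return selected


# Hypothetical scored articles, as the classifier step might return them.
articles = [
    {"url": "https://example.com/a", "domain": "example.com",
     "published": date(2020, 1, 15), "ipv_probability": 0.975},
    {"url": "https://example.com/b", "domain": "example.com",
     "published": date(2020, 1, 15), "ipv_probability": 0.62},
    {"url": "https://example.org/c", "domain": "example.org",
     "published": date(2020, 1, 15), "ipv_probability": 0.93},
]

to_archive = select_for_archive(
    articles, threshold=0.90, published_on=date(2020, 1, 15), domain="example.com"
)
```

Here only the first record is selected: the second falls below the threshold and the third comes from a different domain.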

Conclusion

A full production version of this application could leverage additional models for more fine-grained labeling and analysis. For instance, a member of our team created a model for labeling whether an IPV incident is a repeat offence. Built into the archive is also the ability to scrape additional IPV articles from the Gun Violence Archive. So between keeping in sync with the Gun Violence Archive and the automated IPV search functionality, even the prototype archive has the potential to be a very robust resource for those analyzing intimate partner violence in the news.