Predicting Behavior to Retain Customers Through Marketing

Introduction

Marketing is the action or business of promoting and selling products or services, including market research and advertising. Businesses often accumulate large amounts of data across different departments. One of the most important is marketing, which is responsible both for attracting new customers and for keeping current ones.

This capstone project is based on the Kaggle competition "Telco Customer Churn". Its goal is to predict, from relevant customer data, whether customers will leave or stay with their telecom provider. In marketing, this loss of clients or customers is known as "customer churn".

Dataset

The dataset was obtained from Kaggle and originates from the "IBM Watson Analytics Community." The raw data contains 7043 rows (customers) and 21 columns. It includes a variety of customer attributes such as "gender", "senior citizen", "tenure", and "monthly charges". The last column, "churn", is the target variable for this exercise.

Preprocessing

The first step of this project was to examine the data and understand which features would be important.

Of the 21 columns, two were integers (int64), one was floating point (float64), and the remaining eighteen were "object" (string) columns. The three numerical columns were "senior citizen", "tenure", and "monthly charges". One more column, "total charges", holds numeric values but was stored as an "object", so it had to be converted to a numeric (float64) type, since the charges are dollar amounts with cents.
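A minimal pandas sketch of that conversion, using a few made-up rows and the Kaggle file's column name `TotalCharges` (an assumption; the text above uses spaced names). With `errors="coerce"`, any value that cannot be parsed, such as a blank string, becomes NaN instead of raising an error, so missing values can be counted and handled explicitly.

```python
import pandas as pd

# Hypothetical sample rows; the real dataset has 7043 rows and 21 columns.
df = pd.DataFrame({
    "tenure": [1, 34, 0],
    "MonthlyCharges": [29.85, 56.95, 20.25],
    "TotalCharges": ["29.85", "1889.5", " "],  # numbers stored as text
})

# Convert text to float64; unparseable entries such as " " become NaN.
df["TotalCharges"] = pd.to_numeric(df["TotalCharges"], errors="coerce")

print(df["TotalCharges"].dtype)
print(df["TotalCharges"].isna().sum())  # rows that still need handling
```

How the NaN rows are then treated (dropped or filled) is a separate modelling decision.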

Dummy variables were then created for the remaining "object" columns, since these are categorical.
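One common way to create dummy variables is `pd.get_dummies`. In this sketch the rows and the Kaggle-style column names are hypothetical, and mapping the binary `Churn` target to 0/1 before encoding is an assumption, not a documented step of the project.

```python
import pandas as pd

# Hypothetical rows with Kaggle-style column names.
df = pd.DataFrame({
    "gender": ["Female", "Male", "Male"],
    "Contract": ["Month-to-month", "One year", "Month-to-month"],
    "tenure": [1, 34, 2],
    "Churn": ["No", "No", "Yes"],
})

# Map the binary target to 0/1 so it is not split into two dummy columns.
df["Churn"] = (df["Churn"] == "Yes").astype(int)

# One-hot encode every non-numeric column; numeric columns pass through unchanged.
categorical = [c for c in df.columns if not pd.api.types.is_numeric_dtype(df[c])]
encoded = pd.get_dummies(df, columns=categorical, dtype=int)

print(sorted(encoded.columns))
```

Passing `drop_first=True` would drop one redundant column per category, which is sometimes preferred for linear models.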

Data Exploration

The dataset was explored with visualization tools to build a general understanding of the customer data. Some of the questions examined were:

- A pie chart was used to analyze our target column "Churn".

73.5% of customers (5174) did not churn, whereas 26.5% (1869) churned and left the telecom company.

- A bar chart was used to compare the number of customers who churned across contract lengths.

Customers on month-to-month contracts churn far more often than customers on one- or two-year contracts.

- Another bar chart was used to compare churn across gender.

Churn rates for male and female customers appear approximately equal.
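The pie-chart shares can be checked directly from the counts reported above, and a grouped mean of a 0/1 churn flag gives the churn rate behind each bar chart. The grouped rows below are hypothetical, and the `Contract` and `gender` column names follow the Kaggle file (an assumption).

```python
import pandas as pd

# Rebuild the target column from the reported counts (5174 "No", 1869 "Yes").
churn = pd.Series(["No"] * 5174 + ["Yes"] * 1869, name="Churn")

# Share of each class, i.e. the slices of the pie chart.
shares = churn.value_counts(normalize=True).round(3)
print(shares)

# Hypothetical rows: the mean of a 0/1 churn flag within a group is its churn rate.
sample = pd.DataFrame({
    "Contract": ["Month-to-month", "Month-to-month", "One year", "Two year"],
    "gender": ["Female", "Male", "Female", "Male"],
    "Churn": [1, 1, 0, 0],
})
by_contract = sample.groupby("Contract")["Churn"].mean()
by_gender = sample.groupby("gender")["Churn"].mean()
print(by_contract)
print(by_gender)
```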

Feature Exploration

A correlation matrix was used to see how strongly each feature correlates with the others.

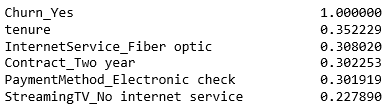

The five features most strongly correlated with churn are shown below:

Tenure, the length of time a customer has been with the company, has the strongest correlation with churn.
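A sketch of how such a ranking can be produced with pandas, on synthetic data where churn is deliberately made to depend on tenure (the data and column names are assumptions). Ranking by absolute value matters: a strong negative correlation, as one would expect for tenure since long-standing customers tend to stay, is as informative as a strong positive one.

```python
import numpy as np
import pandas as pd

# Synthetic, already-encoded data (not the real dataset).
rng = np.random.default_rng(0)
n = 500
tenure = rng.integers(0, 73, size=n)
monthly = rng.uniform(18, 120, size=n)
noise = rng.normal(size=n)
# Churn is more likely for short tenure, so tenure should rank first.
churn = (tenure + rng.normal(scale=10, size=n) < 20).astype(int)

df = pd.DataFrame({"tenure": tenure, "MonthlyCharges": monthly,
                   "noise": noise, "Churn": churn})

corr = df.corr()  # full Pearson correlation matrix, as plotted in a heatmap
# Rank features by the absolute size of their correlation with Churn.
ranking = corr["Churn"].drop("Churn").abs().sort_values(ascending=False)
print(ranking.head(5))
print(corr.loc["tenure", "Churn"])  # sign shows the direction of the relationship
```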

Modelling

All features were used to train several different models to determine which best predicts customer churn. The data was split into 70% training and 30% test sets. Each model was instantiated, fitted on X_train and y_train, and then scored on X_test and y_test.

Cross-validated grid search was then used to select the best hyperparameters for the best-performing model. The model was refit on the full training set with those hyperparameters and evaluated on the test set.

The models evaluated were logistic regression, random forest, decision tree, k-nearest neighbors, neural network, and support vector machine classifiers. Their performance is reported below as "r-squared", although R² is strictly a regression metric; for classifiers the standard score is accuracy, the fraction of customers whose churn outcome is predicted correctly, so a higher value means more correct predictions.
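A minimal sketch of the split, fit, and score loop, assuming scikit-learn (the report does not name the library) and using synthetic data in place of the encoded churn features. It also shows that a classifier's `score()` equals `accuracy_score`, while `r2_score` on the same 0/1 predictions gives a different number. Stratifying the split is an added choice, not something the report states.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score, r2_score
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.tree import DecisionTreeClassifier

# Synthetic stand-in for the encoded churn data (assumption): ~73.5% class 0.
X, y = make_classification(n_samples=1000, n_features=20, weights=[0.735], random_state=42)

# 70% train / 30% test; stratify=y keeps the churn ratio similar in both parts.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=42
)

models = {
    "Logistic Regression": LogisticRegression(max_iter=1000),
    "Decision Tree": DecisionTreeClassifier(random_state=42),
    "Random Forest": RandomForestClassifier(random_state=42),
    "K Nearest Neighbors": KNeighborsClassifier(),
}

scores = {}
for name, model in models.items():
    model.fit(X_train, y_train)                 # fit on the training split only
    scores[name] = model.score(X_test, y_test)  # classifier score() is mean accuracy
    print(f"{name}: {scores[name]:.4f}")

# score() matches accuracy_score, not r2_score, on the same predictions.
y_pred = models["Logistic Regression"].predict(X_test)
print(accuracy_score(y_test, y_pred), r2_score(y_test, y_pred))

# Toy labels (hypothetical): 3 of 5 correct gives accuracy 0.6, but R² = 1 - 2/1.2.
toy_acc = accuracy_score([0, 0, 0, 1, 1], [0, 0, 1, 1, 0])
toy_r2 = r2_score([0, 0, 0, 1, 1], [0, 0, 1, 1, 0])
print(toy_acc, toy_r2)
```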

The top three performers on the test set were:

- Logistic Regression

- Neural Network

- Decision Tree - Bagging Classifier

Logistic regression had the best performance on the test set.

Cross-validated grid search was then used to tune its hyperparameters further. The best configuration was an L2-regularized logistic regression with C = 0.0061; since C is the inverse of the regularization strength, this small value means strong regularization. The r-squared results for the logistic regression are shown below:

- Logistic Regression

R-squared for training dataset - 0.8049

R-squared for test dataset - 0.8012
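A sketch of the tuning and final fit, again assuming scikit-learn and synthetic data. The grid values are hypothetical; they include the reported C = 0.0061 only for illustration. L2 is the default penalty of scikit-learn's `LogisticRegression`, so only `C` is searched. The last lines show the effect of a small C: the coefficients shrink compared with a weakly regularized model.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, train_test_split

X, y = make_classification(n_samples=1000, n_features=20, random_state=1)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=1)

# Illustrative grid (assumption); L2 is the default penalty, so only C varies.
param_grid = {"C": [0.001, 0.0061, 0.01, 0.1, 1.0]}
search = GridSearchCV(LogisticRegression(max_iter=1000), param_grid, cv=5, scoring="accuracy")
search.fit(X_train, y_train)  # refit=True (default) retrains the best model on all of X_train
print(search.best_params_)

# Fit the configuration reported in the text and compare train/test accuracy.
final_model = LogisticRegression(C=0.0061, max_iter=1000).fit(X_train, y_train)
train_score = final_model.score(X_train, y_train)
test_score = final_model.score(X_test, y_test)
print(f"train: {train_score:.4f}  test: {test_score:.4f}")

# C is the inverse regularization strength: smaller C gives smaller coefficients.
weak_model = LogisticRegression(C=1.0, max_iter=1000).fit(X_train, y_train)
print(np.linalg.norm(final_model.coef_), np.linalg.norm(weak_model.coef_))
```

A small gap between training and test scores, like the 0.8049 versus 0.8012 reported above, suggests the model is not badly overfitting.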

Conclusion

In conclusion, logistic regression was the best predictor of customer churn among the models tested. Accuracy might be improved by removing features that add little information or by engineering new features with more predictive value.