Data Analysis on House Prices in Ames, Iowa

The skills I demonstrate here can be learned through the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

Objective

The Ames Housing dataset was compiled by Dean De Cock for use in data science education. The dataset contains 2,919 observations and 80 explanatory variables describing various aspects of residential homes in Ames, Iowa.

The goal of the project is to apply machine learning techniques to predict the sale price of each home in the dataset with the help of the features provided.

Data Transformation

Missingness

Our first step was to identify the missingness within the data. Although several variables had missingness upwards of 90%, we were able to salvage the data by making certain assumptions after thorough inspection. We assumed that features such as alley, garage and fence were missing because the house simply didn't have them, and replaced those missing values with "not available".

Features missing a smaller percentage of values, such as electrical, utilities and lot frontage, were filled with the mode. In addition, the numerical features month sold, year sold, subclass and garage year built were converted to categorical values.
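The steps above can be sketched roughly as follows. This is a minimal illustration rather than the project's actual code: the tiny data frame is made up, and the column names (Alley, Fence, Electrical, MoSold, YrSold) are assumed to follow the Kaggle files.

```python
import numpy as np
import pandas as pd

# Tiny made-up frame; column names are assumed to match the Kaggle Ames files.
df = pd.DataFrame({
    "Alley": [np.nan, "Grvl", np.nan, "Pave"],
    "Fence": ["MnPrv", np.nan, np.nan, np.nan],
    "Electrical": ["SBrkr", "SBrkr", np.nan, "FuseA"],
    "MoSold": [2, 5, 9, 12],
    "YrSold": [2008, 2007, 2008, 2006],
})

# Share of missing values per column, largest first.
missing_share = df.isna().mean().sort_values(ascending=False)

# A missing value in these columns is read as "the house has no such feature".
absent_cols = ["Alley", "Fence"]
df[absent_cols] = df[absent_cols].fillna("None")

# Sparse gaps are filled with the most frequent value (the mode).
df["Electrical"] = df["Electrical"].fillna(df["Electrical"].mode()[0])

# Numeric codes that are really labels are converted to categorical (string) values.
label_cols = ["MoSold", "YrSold"]
df[label_cols] = df[label_cols].astype(str)
```

Filling with the literal string "None" keeps the absence of a feature as its own category instead of discarding the column.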

Missingness Plot


Skewness

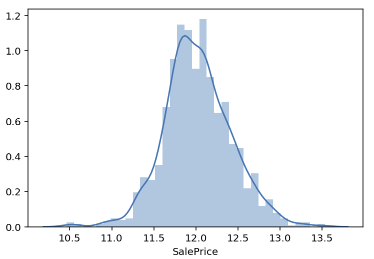

Upon closer inspection of the distribution of our target variable, Sale Price, we can see that it is skewed to the right. To bring the distribution closer to normal, we applied a log transformation.
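A small sketch of the idea, using made-up prices in place of the real Sale Price column. The choice of log1p (log of 1 + x) over a plain log is an assumption here; either one compresses the long right tail.

```python
import numpy as np
import pandas as pd

# Made-up, right-skewed prices standing in for the Sale Price column.
sale_price = pd.Series([105000, 129500, 143000, 181500, 223500, 755000], dtype=float)

# Log transform of the target (log1p is an assumed choice).
log_price = np.log1p(sale_price)

# Sample skewness before and after the transform.
skew_before = sale_price.skew()
skew_after = log_price.skew()

# Predictions made on the log scale are mapped back to dollars with expm1.
recovered = np.expm1(log_price)
```

Any model trained on the transformed target predicts on the log scale, so the inverse transform is needed before reporting prices.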

Outliers

The figure below plots sale price against ground living area. As expected, the relationship is roughly linear, i.e. the more space we purchase, the more we have to pay. Two points with ground living areas exceeding 4,800 square feet and sale prices below $200K immediately draw attention. In my opinion, it was safe to remove these two outliers, given that other houses larger than 4,000 square feet sold for north of $700K.
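The filter described above could look something like this. The rows are invented for illustration, and the column names GrLivArea and SalePrice are assumed from the Kaggle files; only the two thresholds come from the text.

```python
import pandas as pd

# Invented rows; only the thresholds below come from the write-up.
train = pd.DataFrame({
    "GrLivArea": [1710, 2198, 4316, 4476, 4900, 5642],
    "SalePrice": [208500, 250000, 755000, 745000, 184750, 160000],
})

# Very large houses that sold for unusually little.
is_outlier = (train["GrLivArea"] > 4800) & (train["SalePrice"] < 200_000)

# Keep everything else and renumber the rows.
cleaned = train.loc[~is_outlier].reset_index(drop=True)
```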

Data on Feature Engineering

Categorical Features

For our categorical features we used pandas' get_dummies function to one-hot encode the values. This converts each categorical variable into a set of binary indicator columns, a form that can be provided to our machine learning algorithms.
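As a rough illustration with made-up rows, one-hot encoding with get_dummies looks like this. The reindex step is an addition that the write-up does not mention: it is one common way to keep the train and test columns identical when a category is missing from one of them.

```python
import pandas as pd

# Made-up rows; Fence is categorical, LotArea is numeric.
train = pd.DataFrame({"Fence": ["None", "MnPrv", "GdPrv"], "LotArea": [8450, 9600, 11250]})
test = pd.DataFrame({"Fence": ["MnPrv", "None"], "LotArea": [9550, 14260]})

# Each category of Fence becomes its own indicator column.
train_encoded = pd.get_dummies(train, columns=["Fence"])
test_encoded = pd.get_dummies(test, columns=["Fence"])

# Align test columns to the training columns; categories unseen in test are filled with 0.
test_encoded = test_encoded.reindex(columns=train_encoded.columns, fill_value=0)
```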

Numerical

A power transform makes the probability distribution of a variable more Gaussian, and is considered a "variance-stabilizing" transformation. For numerical features we used scikit-learn's PowerTransformer class with its Yeo-Johnson method. Yeo-Johnson is a generalization of the Box-Cox transform that also accepts zero and negative values; a parameter (lambda) is estimated that best transforms the variable to a Gaussian probability distribution.
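Here is a minimal sketch of the Yeo-Johnson transform on a synthetic, right-skewed column. The data and the small skewness helper are illustrative assumptions; the transformer settings shown are scikit-learn's defaults, not necessarily the ones used in the project.

```python
import numpy as np
from sklearn.preprocessing import PowerTransformer

rng = np.random.default_rng(0)
# Synthetic right-skewed feature (log-normal), shaped as a single column.
X = rng.lognormal(mean=1.0, sigma=0.8, size=(500, 1))

def skewness(a):
    """Population skewness: third standardized moment."""
    a = np.asarray(a, dtype=float).ravel()
    centered = a - a.mean()
    return (centered ** 3).mean() / (centered ** 2).mean() ** 1.5

# Yeo-Johnson estimates one lambda per column by maximum likelihood,
# then standardizes the result to zero mean and unit variance.
pt = PowerTransformer(method="yeo-johnson", standardize=True)
X_t = pt.fit_transform(X)

fitted_lambda = pt.lambdas_[0]
skew_before, skew_after = skewness(X), skewness(X_t)
```

In practice the transformer would be fitted on the training rows only and then applied to the test rows with pt.transform.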

Model Selection

The root mean squared error (RMSE) was used to evaluate the fit of the models. This is also the metric Kaggle used to evaluate the predictions submitted by users. Grid search cross-validation and randomized search cross-validation, both with K=5 folds, were used for Random Forest and XGBoost, respectively.
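A grid search with 5-fold cross-validation scored by RMSE might be set up as below. The synthetic data and the parameter grid are placeholders chosen to keep the example quick, not the grid used in the project.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import GridSearchCV

# Synthetic regression data standing in for the prepared housing features.
X, y = make_regression(n_samples=200, n_features=8, noise=10.0, random_state=0)

# Hypothetical grid: every combination (2 x 2 = 4) is evaluated.
param_grid = {"n_estimators": [50, 100], "max_depth": [4, None]}

search = GridSearchCV(
    RandomForestRegressor(random_state=0),
    param_grid,
    cv=5,                                   # K=5 folds
    scoring="neg_root_mean_squared_error",  # scikit-learn maximizes, hence the sign
)
search.fit(X, y)

# Flip the sign back to get the cross-validated RMSE of the best combination.
cv_rmse = -search.best_score_
```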

Models and Hyperparameter Tuning

The individual models trained were Random Forest and XGBoost. Our initial RMSE was 0.25376 for the Random Forest model and 0.22943 for XGBoost, making XGBoost the better of the two. Tuning the hyperparameters of the XGBoost model via a randomized search lowered our RMSE to 0.20367, an improvement of roughly 11%.
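The randomized search can be sketched as follows. To keep the example free of extra dependencies it uses scikit-learn's GradientBoostingRegressor as a stand-in for XGBoost; xgboost's XGBRegressor follows the same estimator interface and could be swapped in, with its own parameter names. The data and the search ranges are assumptions made for illustration.

```python
from scipy.stats import randint, uniform
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.model_selection import RandomizedSearchCV

# Synthetic regression data standing in for the prepared housing features.
X, y = make_regression(n_samples=200, n_features=8, noise=10.0, random_state=0)

# Hypothetical search space: values are sampled rather than enumerated.
param_distributions = {
    "n_estimators": randint(50, 200),      # integers in [50, 200)
    "learning_rate": uniform(0.01, 0.2),   # floats in [0.01, 0.21]
    "max_depth": randint(2, 6),            # integers in [2, 6)
    "subsample": uniform(0.6, 0.4),        # floats in [0.6, 1.0]
}

search = RandomizedSearchCV(
    GradientBoostingRegressor(random_state=0),  # stand-in for XGBoost
    param_distributions,
    n_iter=10,                              # number of sampled combinations
    cv=5,
    scoring="neg_root_mean_squared_error",
    random_state=0,
)
search.fit(X, y)

tuned_rmse = -search.best_score_
```

Randomized search evaluates a fixed number of sampled combinations, which keeps the cost under control when the search space is too large for an exhaustive grid.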

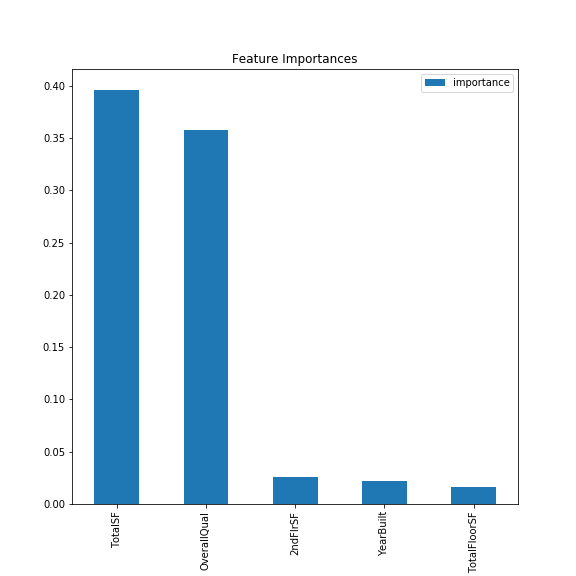

Feature Importance

In the figure below we see the top five features by importance according to the random forest model. The total surface area feature, which we created by combining the total basement surface area and the ground living area, has the highest weight, followed by the overall quality of the house. The importance given to these two features makes intuitive sense, as they are highly sought-after traits for anyone purchasing a house: home buyers ideally want larger living spaces and good-quality houses.
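The sketch below shows how such a combined feature and an importance ranking could be produced. Everything in it is synthetic: the column names are assumed from the Kaggle files, TotalSF is a hypothetical name for the combined feature, and the target is deliberately built so that total area and quality drive the price, so the ranking only illustrates the mechanics.

```python
import numpy as np
import pandas as pd
from sklearn.ensemble import RandomForestRegressor

rng = np.random.default_rng(0)
n = 300

# Synthetic features; names are assumed from the Kaggle files.
X = pd.DataFrame({
    "TotalBsmtSF": rng.uniform(400, 2000, n),
    "GrLivArea": rng.uniform(600, 3500, n),
    "OverallQual": rng.integers(1, 11, n),
    "LotArea": rng.uniform(3000, 20000, n),
})

# Engineered feature (hypothetical name): basement area plus ground living area.
X["TotalSF"] = X["TotalBsmtSF"] + X["GrLivArea"]

# Synthetic target constructed so that total area and quality matter most.
y = 80 * X["TotalSF"] + 10000 * X["OverallQual"] + rng.normal(0, 10000, n)

model = RandomForestRegressor(n_estimators=200, random_state=0).fit(X, y)

# Impurity-based importances, normalized to sum to 1, sorted from largest to smallest.
importances = pd.Series(model.feature_importances_, index=X.columns).sort_values(ascending=False)
top_five = importances.head(5)
```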

Conclusion

After submission, our score placed us in the top 10% of submissions. With the help of various machine learning techniques such as one-hot encoding, power transformations and tuning hyperparameters, we were able to vastly improve our model. A myriad of approaches could've been taken to address missingness, feature engineering and model selection, which is what made this project captivating.