Using Data and Machine Learning to Predict Housing Prices

The skills demonstrated here can be learned through the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

Introduction

Inspired by the accomplishments of the women in the movie “Hidden Figures,” we named our team after it. We are an all-girls team of three who come from different parts of the world: Lebanon, India, and China.

This blog post is about our machine learning project, which was based on a past Kaggle competition, “House Prices: Advanced Regression Techniques.” The data set contains “79 explanatory variables describing (almost) every aspect of residential homes in Ames, Iowa…[and the goal is] to predict the final price of each home” (reference).

We collaborated on certain parts of the project and completed other parts individually, as if it were a research project. As aspiring data scientists, our goal was to use our arsenal of machine learning knowledge to predict housing prices.

This blog post is organized into four main parts: Exploratory Data Analysis & Feature Engineering, Creating Models, Conclusion, and Relevant Links.

Exploratory Data Analysis & Feature Engineering

- Multicollinearity

We started the project by analyzing and visualizing the data. Real estate is a new area for all three of us, but we managed to gain some interesting insights. For example, some variables are closely correlated with one another. Some pairs are correlated by nature, such as “Basement finished area” and “Basement unfinished area,” while other pairs are correlated by inference, such as “Overall condition” and “Year built.”
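A check like this can be sketched in a few lines of pandas. This is only an illustration, not our original code: the helper `top_correlated_pairs`, the 0.5 threshold, and the toy data are assumptions, and the column names are stand-ins for the basement area variables.

```python
import numpy as np
import pandas as pd


def top_correlated_pairs(df, threshold=0.6):
    """List pairs of numeric columns whose |Pearson correlation| >= threshold."""
    corr = df.select_dtypes(include="number").corr()
    cols = list(corr.columns)
    rows = []
    for i, a in enumerate(cols):
        for b in cols[i + 1:]:  # upper triangle only, so each pair appears once
            r = corr.loc[a, b]
            if abs(r) >= threshold:
                rows.append({"feature_a": a, "feature_b": b, "corr": float(r)})
    pairs = pd.DataFrame(rows, columns=["feature_a", "feature_b", "corr"])
    # Strongest relationships first, regardless of sign.
    order = pairs["corr"].astype(float).abs().sort_values(ascending=False).index
    return pairs.loc[order].reset_index(drop=True)


# Toy data (made up): finished + unfinished basement area add up to a
# nearly constant total, so the two are correlated "by nature".
rng = np.random.default_rng(0)
total_bsmt = rng.uniform(900, 1100, size=200)
finished = total_bsmt * rng.uniform(0, 1, size=200)
toy = pd.DataFrame({
    "BsmtFinSF1": finished,
    "BsmtUnfSF": total_bsmt - finished,
    "LotArea": rng.uniform(5000, 15000, size=200),
})

pairs = top_correlated_pairs(toy, threshold=0.5)
print(pairs)
```

Highly correlated pairs found this way are candidates for dropping or combining before fitting linear models, which are the most sensitive to multicollinearity.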

- Seasonality

Next, we explored the data to see if there were trends in sale prices associated with seasons. We found that there are more sales during summer. Research shows that high supply doesn’t necessarily mean lower prices, since housing prices normally peak around summer as well. One theory is that when there are more options in the marketplace, people are more likely to find their ideal house and put down a deposit.

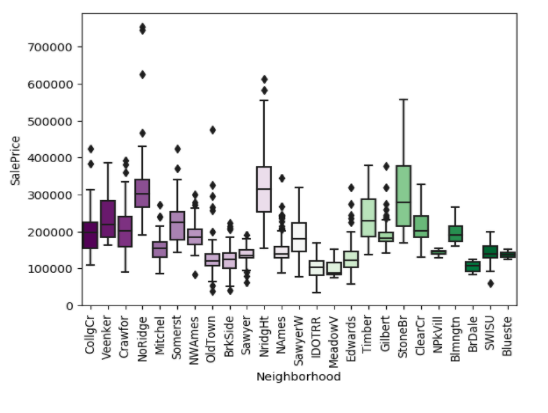
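A seasonality check of this kind boils down to grouping sales by month. The sketch below uses a handful of made-up rows; the column names `MoSold` and `SalePrice` are assumed here for illustration.

```python
import pandas as pd

# Made-up sales records: month sold (1-12) and sale price.
toy_sales = pd.DataFrame({
    "MoSold": [1, 6, 6, 7, 7, 7, 12],
    "SalePrice": [150000, 180000, 200000, 175000, 210000, 190000, 160000],
})

# Count of sales and median price per month.
monthly = toy_sales.groupby("MoSold")["SalePrice"].agg(
    n_sales="count", median_price="median"
)
busiest_month = monthly["n_sales"].idxmax()
print(monthly)
print("Busiest month:", busiest_month)
```

Looking at the count and the median price side by side makes it easy to see whether busy months are also expensive months.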

- Neighborhood

The neighborhood is an important factor when it comes to buying a house. Since the raw data does not contain school district or crime rate information, we treated neighborhood as a proxy for those factors. After plotting sale price by neighborhood, we went back and checked this assumption: the higher-priced neighborhoods had high-end facilities in addition to good school districts and great locations. We can also see that the variance in expensive neighborhoods is typically higher, which helps explain the skewness of the sale price density as well.
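The same comparison can be made numerically. The following is a small sketch with invented prices for two neighborhoods; only the pattern (higher median together with higher spread, and a right-skewed overall distribution) is meant to mirror what we saw.

```python
import pandas as pd

# Invented prices: one expensive and one inexpensive neighborhood.
toy = pd.DataFrame({
    "Neighborhood": ["NoRidge"] * 4 + ["OldTown"] * 4,
    "SalePrice": [300000, 380000, 450000, 600000,
                  110000, 115000, 120000, 125000],
})

# Median price and spread per neighborhood, most expensive first.
by_hood = (
    toy.groupby("Neighborhood")["SalePrice"]
    .agg(median_price="median", price_std="std")
    .sort_values("median_price", ascending=False)
)

# Positive skew means a long right tail of expensive houses.
overall_skew = toy["SalePrice"].skew()
print(by_hood)
print("Skewness of SalePrice:", round(overall_skew, 2))
```

A common response to this kind of right skew is to model the logarithm of the price instead of the raw price, although that step is not shown here.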

Creating Models

- Approach 1: Neha Chanu

“Everything should be made as simple as possible, but not simpler,” said Einstein, and I took this advice when I started creating models. First, I focused on simpler models, such as lasso and elastic net, before creating more complex ones. Besides lasso and elastic net, I used gradient boosting regression, XGBoost, light gradient boosting, and random forest algorithms to build models.

To check how well the models were predicting sale price, I split the training data into two parts. One part was used to train my models, and the other was used to check how well the trained models predicted sale prices. I tuned each model's hyperparameters with cross-validation and used the resulting metric, the cross-validation score, to judge model performance.
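The hold-out split and cross-validation score can be sketched as follows with scikit-learn. This is a minimal illustration rather than the code used in the project: the data is synthetic, and the 80/20 split, the five folds, the RMSE metric, and `alpha=1.0` are all assumptions.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Lasso
from sklearn.model_selection import cross_val_score, train_test_split

# Synthetic stand-in for the housing features and target.
X, y = make_regression(n_samples=300, n_features=20, n_informative=5,
                       noise=10.0, random_state=0)

# One part to train on, one part held out for a final check.
X_train, X_val, y_train, y_val = train_test_split(
    X, y, test_size=0.2, random_state=0
)

model = Lasso(alpha=1.0)

# 5-fold cross-validation on the training part only.
# scikit-learn returns negated errors, so flip the sign to get RMSE.
cv_rmse = -cross_val_score(model, X_train, y_train, cv=5,
                           scoring="neg_root_mean_squared_error")

# Fit on the full training part and score on the held-out part.
model.fit(X_train, y_train)
val_rmse = float(np.sqrt(np.mean((model.predict(X_val) - y_val) ** 2)))

print("CV RMSE per fold:", np.round(cv_rmse, 2))
print("Hold-out RMSE:", round(val_rmse, 2))
```

If the hold-out error is much worse than the cross-validation error, that is usually a sign the model or its hyperparameters were overfit to the training folds.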

Although I created several models, I selected the five best based on their cross-validation scores and combined them by averaging their predictions.

- Approach 2: Fatima Hamdan

After the linear models approach, it helps to look at the data from a different angle, so I decided to try tree-based models. These are the steps I followed:

1. Random Forest Feature Importance Analysis

Why start with Random Forest? The Random Forest model is considered one of the best models for feature importance analysis. The reason is that the tree-based strategies used by random forests naturally rank features by how much they improve the purity of a node (for regression, by how much a split on that feature reduces the variance of the target).
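As a rough sketch of how such a ranking is obtained in scikit-learn, the example below fits a forest on synthetic data where one feature matters a lot, one a little, and one not at all. The feature names and the data are hypothetical.

```python
import numpy as np
import pandas as pd
from sklearn.ensemble import RandomForestRegressor

# Synthetic data: the target depends strongly on one feature,
# weakly on another, and not at all on the third.
rng = np.random.default_rng(0)
X = pd.DataFrame({
    "signal_strong": rng.normal(size=500),
    "signal_weak": rng.normal(size=500),
    "noise": rng.normal(size=500),
})
y = 5.0 * X["signal_strong"] + 1.0 * X["signal_weak"] \
    + rng.normal(scale=0.1, size=500)

forest = RandomForestRegressor(n_estimators=200, random_state=0).fit(X, y)

# Impurity-based importances: average variance reduction per feature,
# normalized so that they sum to 1.
importances = pd.Series(
    forest.feature_importances_, index=X.columns
).sort_values(ascending=False)
print(importances)
```

On the real data, taking `importances.head(30)` would give a top-30 list like the one described next. Impurity-based importances can favor features with many distinct values, so they are best read as a rough guide.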

The below graph shows the 30 most important variables in our dataset:

The Random Forest model scored 0.88 on house price prediction. This raises the question: is that the best score we can get?

The next step compares several tree-based models to see which one has the best score in predicting house prices.

2. Comparison between the following regression models

After applying each of the following models on the data set, different accuracy scores were achieved as shown in the following graph:

The Gradient Boosting model had the highest score, 0.90, with an error of 0.095. So, in the following steps, I relied on the Gradient Boosting model in my analysis.
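A comparison like this can be run with a short loop. The sketch below is an assumption-laden illustration: the data is synthetic, the three candidate models are picked for the example, and the score is taken to be R², which is what scikit-learn regressors report by default.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import (
    ExtraTreesRegressor,
    GradientBoostingRegressor,
    RandomForestRegressor,
)
from sklearn.model_selection import cross_val_score

# Synthetic stand-in for the housing data.
X, y = make_regression(n_samples=200, n_features=10, noise=10.0,
                       random_state=0)

candidates = {
    "random_forest": RandomForestRegressor(n_estimators=50, random_state=0),
    "extra_trees": ExtraTreesRegressor(n_estimators=50, random_state=0),
    "gradient_boosting": GradientBoostingRegressor(n_estimators=100,
                                                   random_state=0),
}

# Mean 3-fold cross-validated R^2 for each candidate.
scores = {
    name: cross_val_score(model, X, y, cv=3, scoring="r2").mean()
    for name, model in candidates.items()
}
best_name = max(scores, key=scores.get)

for name, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(f"{name}: {score:.3f}")
print("Best:", best_name)
```

Using the same folds and the same metric for every model keeps the comparison fair.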

3. Hyper-Parameter Tuning of the model with the highest score

To improve the performance of the gradient boosting model on our data set, I used the GridSearch function to tune the model's parameters. After several rounds of GridSearch, I settled on the following parameters and candidate values:

Loss: Huber or LS (least squares)

Learning Rate: 0.0001, 0.001, 0.01, 0.1, 1

Number of Estimators: 100, 1000, 10000, 14800

Maximum Depth: 1 to 15
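A grid search over these four parameters could look roughly like the sketch below, which assumes scikit-learn's `GridSearchCV` and `GradientBoostingRegressor`. The grid is deliberately shrunk so the example runs in seconds, the data is synthetic, and the three folds are an assumption. Recent scikit-learn versions call the least squares loss `"squared_error"`; older ones called it `"ls"`.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.model_selection import GridSearchCV

# Synthetic stand-in for the housing data.
X, y = make_regression(n_samples=150, n_features=8, noise=10.0,
                       random_state=0)

# Reduced version of the grid described above (assumed values).
param_grid = {
    "loss": ["huber", "squared_error"],  # "squared_error" was "ls" before 1.0
    "learning_rate": [0.01, 0.1],
    "n_estimators": [50, 100],
    "max_depth": [1, 3],
}

search = GridSearchCV(
    GradientBoostingRegressor(random_state=0),
    param_grid,
    scoring="neg_mean_squared_error",  # higher (closer to 0) is better
    cv=3,
)
search.fit(X, y)

print("Best parameters:", search.best_params_)
print("Best CV mean squared error:", -search.best_score_)
```

The full grid above would have several hundred combinations, some with more than ten thousand trees, so running it in several narrower passes is a practical way to keep the search time manageable.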

Here are some of the parameters that gave me the lowest mean squared error:

Since stacking or averaging several models together might give a better score, I used two gradient boosting models in the analysis, parameterized by the first two rows of the table above.

Linear models might also help improve the score, so I added the following two, which gave me good scores as well.

4. Linear Models: Lasso & Ridge

For the Ridge model, after the parameter tuning step, I chose alpha to be 10. The error of this model was 0.11. For the Lasso model, I followed the same steps as for the previous models, and the error was also 0.11.
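One way to tune alpha for both models is with scikit-learn's built-in cross-validated estimators. The following is a minimal sketch under assumptions: the data is synthetic and the candidate alphas are chosen for the example, so the selected values will not match the ones reported above.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import LassoCV, RidgeCV

# Synthetic data with more features than are truly informative.
X, y = make_regression(n_samples=200, n_features=30, n_informative=8,
                       noise=20.0, random_state=0)

alphas = [0.1, 1.0, 10.0, 100.0]  # assumed candidate values

# RidgeCV picks alpha by efficient leave-one-out cross-validation.
ridge = RidgeCV(alphas=alphas).fit(X, y)

# LassoCV picks alpha by 5-fold cross-validation.
lasso = LassoCV(alphas=alphas, cv=5, random_state=0).fit(X, y)

# Lasso can set coefficients exactly to zero; Ridge only shrinks them.
n_zeroed = int(np.sum(lasso.coef_ == 0))

print("Ridge alpha:", ridge.alpha_)
print("Lasso alpha:", lasso.alpha_)
print("Coefficients zeroed by Lasso:", n_zeroed)
```

The two penalties behave differently, which is part of why averaging a Ridge and a Lasso model can still add some diversity to an ensemble.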

5. Averaging Several Models

To sum up, the final model was the average of the four best models: Gradient Boosting Model 1, Gradient Boosting Model 2, the Ridge model, and the Lasso model. The final model scored 0.11846 on the Kaggle House Price Prediction competition.
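Averaging four models amounts to taking the row-wise mean of their predictions. The sketch below shows the idea on synthetic data; the hyperparameters are placeholders, not the tuned values from the project.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.linear_model import Lasso, Ridge
from sklearn.model_selection import train_test_split

X, y = make_regression(n_samples=300, n_features=10, noise=15.0,
                       random_state=0)
X_train, X_val, y_train, y_val = train_test_split(
    X, y, test_size=0.25, random_state=0
)

# Two gradient boosting models with different settings, plus two
# linear models (all hyperparameters here are placeholder values).
models = {
    "gbr_1": GradientBoostingRegressor(loss="huber", n_estimators=200,
                                       learning_rate=0.05, max_depth=3,
                                       random_state=0),
    "gbr_2": GradientBoostingRegressor(n_estimators=100, learning_rate=0.1,
                                       max_depth=2, random_state=1),
    "ridge": Ridge(alpha=10),
    "lasso": Lasso(alpha=0.1),
}


def rmse(pred, truth):
    """Root mean squared error between predictions and true values."""
    return float(np.sqrt(np.mean((pred - truth) ** 2)))


# One column of validation predictions per model.
preds = np.column_stack(
    [m.fit(X_train, y_train).predict(X_val) for m in models.values()]
)
blend = preds.mean(axis=1)  # simple unweighted average

scores = {name: rmse(preds[:, i], y_val) for i, name in enumerate(models)}
scores["average"] = rmse(blend, y_val)
for name, score in scores.items():
    print(f"{name}: {score:.2f}")
```

Averaging tends to help most when the individual models make different kinds of errors, which is one reason to mix tree-based and linear models.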

- Approach 3: Lainey

Given the small number of observations, linear models were the natural first step. I started by implementing Lasso and Ridge; both yielded a CV score of 0.92, which is pretty strong.

Since the data contains a lot of categorical variables, I was curious how well tree-based models would fit the data. Random forest, extreme random forest, and XGBoost all yielded okay results at best during cross-validation. The best performer was gradient boosting, which Fatima described in detail above.

I also experimented a little with SVM and finally combined my models using stacking.
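Stacking differs from plain averaging in that a second-level model learns how to weight the base models' predictions. A minimal sketch with scikit-learn's `StackingRegressor` follows; the choice of base models, the Ridge meta-model, and all hyperparameters are assumptions for illustration, and the data is synthetic.

```python
from sklearn.datasets import make_regression
from sklearn.ensemble import GradientBoostingRegressor, StackingRegressor
from sklearn.linear_model import Lasso, Ridge
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVR

X, y = make_regression(n_samples=300, n_features=10, noise=15.0,
                       random_state=0)
X_train, X_val, y_train, y_val = train_test_split(
    X, y, test_size=0.25, random_state=0
)

stack = StackingRegressor(
    estimators=[
        ("lasso", Lasso(alpha=0.1)),
        ("gbr", GradientBoostingRegressor(n_estimators=100, random_state=0)),
        # SVMs are sensitive to feature scale, so standardize first.
        ("svr", make_pipeline(StandardScaler(), SVR(C=10.0))),
    ],
    # The meta-model is trained on out-of-fold predictions of the base models.
    final_estimator=Ridge(alpha=1.0),
    cv=5,
)
stack.fit(X_train, y_train)
val_pred = stack.predict(X_val)

print("Meta-model weights:", stack.final_estimator_.coef_)
print("Validation R^2:", round(stack.score(X_val, y_val), 3))
```

Because the meta-model only sees out-of-fold predictions, it is less likely to simply reward whichever base model overfits the training data the most.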

Conclusion

All three of us collaborated on the initial exploratory data analysis and feature engineering. Then we each worked on creating predictive models individually. Thanks for reading this blog post. Feel free to leave any comments or questions and reach out to us through LinkedIn.

Relevant Links