Studying Data to Predict House Prices

The skills we demonstrated here can be learned by taking the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

Introduction

House prices are affected by various features such as home functionality, house area, kitchen condition, and garage quality. Purchasing a house is a lifetime investment that requires enough research to make the right decision at the right time. From the customer's point of view, this project aims to provide tools and data that help decide which houses are undervalued or overvalued on the basis of various features, so that a buyer can save some money on the purchase.

Questions from the company's point of view might include which conditions could be improved to sell the house at a better price and to satisfy customers with their lifetime investment. These techniques can also be applied to machine learning competitions on sites such as Kaggle and Zigbang, and they are in high demand in the market. Another goal of our group was to master these machine learning techniques. The primary goal of this project was to predict house prices in Iowa using various machine learning techniques.

Data:

For this project we used the Ames Housing Dataset introduced by Professor Dean De Cock in 2011. Altogether, there are 2,919 observations (including the training and test sets) of housing sales in Ames, Iowa between 2006 and 2010, with 79 provided features.

Data pipeline:

Beyond viewing the task as a student project, our team also aimed to build an automated process that would allow a user to predict sale prices in different settings going forward. To build a simple and efficient cooperative environment, each of us performed our own research on the data, and as our ideas matured, they were deployed to a specific directory and controlled (as shown in the figure below). We made two pipelines: a data transformation pipeline using various transformers such as a NaN remover, a scaler, and an outlier remover, and a model building pipeline for predicting house prices. The general schematic of our pipelines for fast iteration is shown below:

Simple but efficient cooperative environment
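To make the two-pipeline idea more concrete, here is a minimal sketch using scikit-learn's `Pipeline`. It is not the project's actual code: the toy data, the choice of `SimpleImputer` and `StandardScaler` as transformers, the `Lasso` settings, and all step names are assumptions made for illustration.

```python
import numpy as np
import pandas as pd
from sklearn.impute import SimpleImputer
from sklearn.linear_model import Lasso
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Hypothetical toy data standing in for numeric housing features.
rng = np.random.default_rng(0)
X = pd.DataFrame({
    "GrLivArea": rng.normal(1500, 400, 200),
    "GarageArea": rng.normal(450, 150, 200),
})
X.loc[::25, "GarageArea"] = np.nan  # simulate missing garage values
y = np.log1p(100 * X["GrLivArea"] + rng.normal(0, 5000, 200))

# Pipeline 1: data transformation (fill NaN values, then scale).
transform_pipeline = Pipeline([
    ("fill_nan", SimpleImputer(strategy="median")),
    ("scale", StandardScaler()),
])

# Pipeline 2: model building, which reuses the transformation pipeline.
model_pipeline = Pipeline([
    ("transform", transform_pipeline),
    ("model", Lasso(alpha=0.001)),
])

model_pipeline.fit(X, y)
predictions = model_pipeline.predict(X)
```

Keeping the transformation steps in their own pipeline means a different model can be swapped into the second pipeline without touching the data preparation, which is one way to get the fast iteration described above.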

Pipelines for fast iteration

EDA visualization: To better understand the data, we started with exploratory data analysis. Here are some examples of our data visualizations. For more detailed EDA visualizations, please check our GitHub link below. We first checked a correlation plot to identify features that are highly correlated with one another.
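A correlation check of this kind can be sketched with pandas as follows. The data here is synthetic and the 0.8 threshold is an arbitrary assumption; only the column names are borrowed from the Ames dataset.

```python
import numpy as np
import pandas as pd

# Synthetic stand-in data: GarageArea is built to track GarageCars closely.
rng = np.random.default_rng(1)
n = 300
garage_cars = rng.integers(0, 4, n)
df = pd.DataFrame({
    "GarageCars": garage_cars,
    "GarageArea": garage_cars * 250 + rng.normal(0, 40, n),
    "LotArea": rng.normal(10000, 2000, n),
})

# Pairwise Pearson correlations between all numeric columns.
corr = df.corr()

# List feature pairs whose absolute correlation exceeds the chosen threshold.
threshold = 0.8
high_pairs = [
    (a, b, round(corr.loc[a, b], 3))
    for i, a in enumerate(corr.columns)
    for b in corr.columns[i + 1:]
    if abs(corr.loc[a, b]) > threshold
]
print(high_pairs)
```

Pairs that show up in such a list are candidates for dropping or merging, since they carry largely the same information.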

Correlation map between various household features.

Here are some example box plots: SalePrice against OverallQuality shows a roughly linear trend, while SalePrice against GarageCars does not, due to outliers.

Box plot of SalePrice vs OverallQuality (right) and SalePrice vs GarageCars

Automatic data transformer for outliers: To remove outliers, we first measured the Z-score, created an effective strategy for removing outliers, and finally made a pipeline element to remove outliers as shown below.
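As a rough illustration of Z-score based outlier removal (not the team's actual transformer), the sketch below drops rows whose value lies more than a chosen number of standard deviations from the column mean. The function name `remove_outliers_zscore`, the threshold of 3, and the toy data are all assumptions.

```python
import numpy as np
import pandas as pd

def remove_outliers_zscore(df, columns, threshold=3.0):
    """Drop rows where any listed column is more than `threshold`
    standard deviations away from that column's mean."""
    z = (df[columns] - df[columns].mean()) / df[columns].std(ddof=0)
    keep = (z.abs() <= threshold).all(axis=1)
    return df[keep]

# Hypothetical toy data with one injected extreme value.
rng = np.random.default_rng(2)
houses = pd.DataFrame({
    "GrLivArea": rng.normal(1500, 300, 100),
    "SalePrice": rng.normal(180000, 30000, 100),
})
houses.loc[0, "GrLivArea"] = 6000

cleaned = remove_outliers_zscore(houses, ["GrLivArea"])
```

Because rows are dropped, a step like this has to be applied to the features and the target together, and only on the training data.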

Here is an example of how we handled values that were not available (NaN) in the garage-related data. We tried to remove NaN values automatically, but this did not improve our accuracy, so 31 of the 35 columns containing NaN values were addressed manually.
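A minimal sketch of manual NaN handling for garage-related columns might look like this. The rule that a missing garage field means "no garage", the `"None"` label, and the sample rows are assumptions for illustration, not the exact rules used in the project.

```python
import numpy as np
import pandas as pd

# Hypothetical sample rows; row 1 has no garage information at all.
houses = pd.DataFrame({
    "GarageType": ["Attchd", np.nan, "Detchd", "Attchd"],
    "GarageArea": [480.0, np.nan, 300.0, 520.0],
    "GarageCars": [2.0, np.nan, 1.0, 2.0],
    "LotFrontage": [65.0, 80.0, np.nan, 70.0],
})

# Assumption: a missing garage field means the house has no garage.
houses["GarageType"] = houses["GarageType"].fillna("None")
for col in ["GarageArea", "GarageCars"]:
    houses[col] = houses[col].fillna(0)

# Other numeric gaps are filled with the column median.
houses["LotFrontage"] = houses["LotFrontage"].fillna(houses["LotFrontage"].median())
```

Which fill value is appropriate (0, the mode, or the median) depends on what a missing entry means for that particular column, which is why handling the columns one by one can beat a single automatic rule.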

House Model:

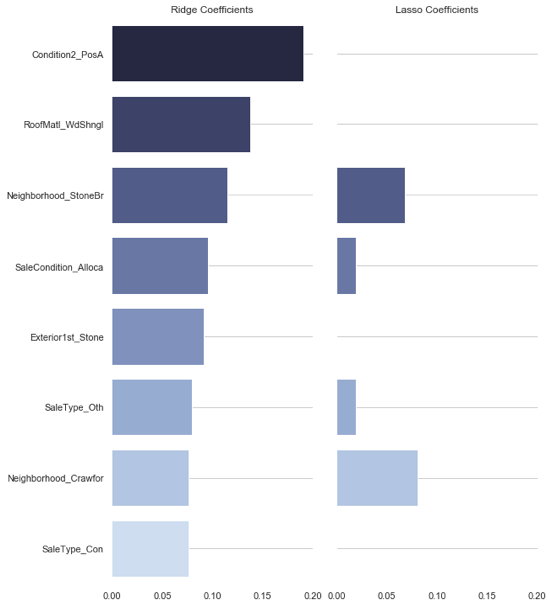

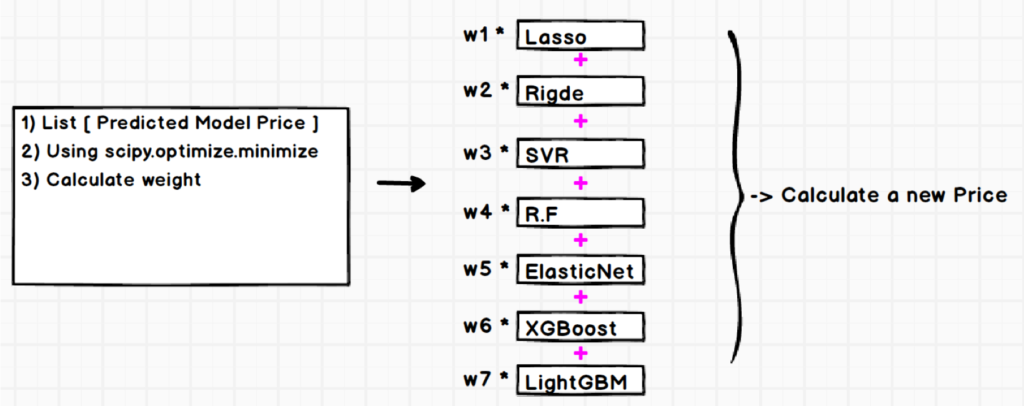

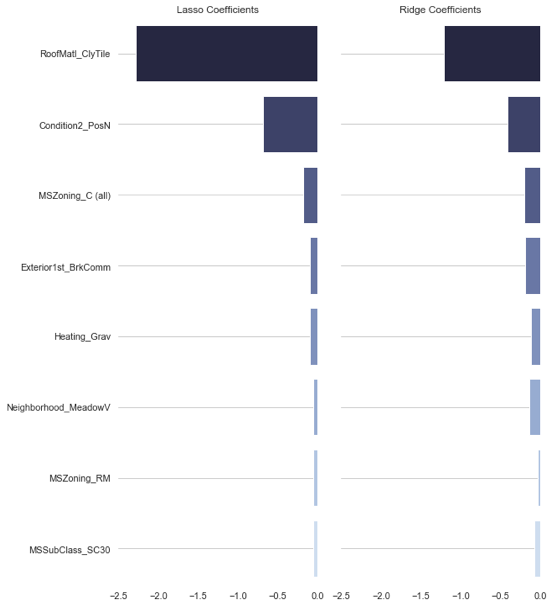

For building our house model, we started with Lasso regression, which was fast and easy to apply and gave us a good result. Then we tested other supervised learning methods, such as Ridge, Elastic Net, SVR, random forest, and boosting, and compared the results. Finally, we ensembled various models into a meta-model by stacking and averaging. The figure below illustrates our final house model.

Stacking: Stacking is a method that combines the predictions of several different models. Using this method, various ML algorithms can be combined to produce better predictions. This is a powerful machine learning approach because it can incorporate multiple models of various types, which allows the weaknesses of one model to be compensated by the strengths of others. The models are combined using a meta-regressor, an algorithm that learns how to combine the predictions of the different models.

Averaged Model: We also tried another approach to combining various models, assigning a weight to each. However, the averaged model did not perform better than the stacking model, so we used the stacking approach for our house model.
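The stacking idea can be sketched with scikit-learn's `StackingRegressor`. This is an illustration under assumptions: the project may have used a different stacking implementation, and the synthetic data, the base models chosen, and every hyperparameter value below are placeholders.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.ensemble import StackingRegressor
from sklearn.linear_model import ElasticNet, Lasso, Ridge
from sklearn.svm import SVR

# Synthetic regression data standing in for the housing features.
X, y = make_regression(n_samples=200, n_features=10, noise=10.0, random_state=0)

# Base models whose out-of-fold predictions feed the meta-regressor.
base_models = [
    ("lasso", Lasso(alpha=0.1)),
    ("ridge", Ridge(alpha=1.0)),
    ("enet", ElasticNet(alpha=0.1, l1_ratio=0.5)),
    ("svr", SVR(kernel="linear", C=10.0)),
]

# The meta-regressor (here a Ridge model) learns how to combine them.
stack = StackingRegressor(
    estimators=base_models,
    final_estimator=Ridge(alpha=1.0),
    cv=5,
)
stack.fit(X, y)
stacked_predictions = stack.predict(X)
```

The `cv=5` argument means the meta-regressor is trained on predictions the base models made for data they had not seen, which helps keep the stack from simply memorizing the training set.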
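Weighted averaging is simpler than stacking: each model's prediction is multiplied by a fixed weight and the results are summed. The sketch below assumes two models and hand-picked weights of 0.6 and 0.4, which are illustrative values rather than the weights used in the project.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Lasso, Ridge

# Synthetic regression data standing in for the housing features.
X, y = make_regression(n_samples=200, n_features=10, noise=10.0, random_state=0)

models = [Lasso(alpha=0.1), Ridge(alpha=1.0)]
weights = np.array([0.6, 0.4])  # hypothetical weights that sum to 1

# One column of predictions per fitted model.
preds = np.column_stack([m.fit(X, y).predict(X) for m in models])

# Weighted average across models for every observation.
averaged = preds @ weights
```

Unlike stacking, the weights here are chosen by hand instead of being learned from the data, which may be one reason an averaged model can lag behind a stacked one.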

Hyperparameter Tuning: Tuning hyperparameters is a very important part of improving model performance. A poor choice of hyperparameters may cause the model to overfit or underfit the data. There are three common methods for tuning hyperparameters: random search, grid search, and Bayesian optimization. For our house model, we used grid search because various references were available. It proved easier to use and works better with our small data size. We used grid search with 5-fold cross-validation to optimize the hyperparameters.
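Grid search with 5-fold cross-validation can be sketched with scikit-learn's `GridSearchCV`. The synthetic data, the Lasso model, and the candidate `alpha` values are assumptions chosen for illustration.

```python
import numpy as np
from sklearn.datasets import make_regression
from sklearn.linear_model import Lasso
from sklearn.model_selection import GridSearchCV

# Synthetic regression data standing in for the housing features.
X, y = make_regression(n_samples=200, n_features=10, noise=10.0, random_state=0)

# Candidate values for the regularization strength (hypothetical grid).
param_grid = {"alpha": [0.001, 0.01, 0.1, 1.0, 10.0]}

search = GridSearchCV(
    Lasso(max_iter=10000),
    param_grid,
    cv=5,  # 5-fold cross-validation
    scoring="neg_root_mean_squared_error",
)
search.fit(X, y)

best_alpha = search.best_params_["alpha"]
best_rmse = -search.best_score_  # the scorer returns negative RMSE
```

Grid search tries every combination in the grid, so it stays practical only while the grid and the dataset are small, which matches the situation described above.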

Results: The final results of the various models used for predicting sale prices are summarized in the table below:

| Model | Train RMSE | Kaggle Score |
| --- | --- | --- |
| ElasticNet | 0.10879657 | 0.11684 |
| Lasso | 0.10869445 | 0.11691 |
| Ridge | 0.11043459 | 0.11562 |
| SVR | 0.11368618 | 0.12019 |
| Random forest | 0.15893652 | 0.17354 |
| XGBoost* | 0.12115257 | 0.12965 |
| LightGBM* | 0.13731836 | 0.14344 |

We did not use XGBoost or LightGBM in our final model because their accuracy was not better. The results for our final house model are presented in the table below.

| Model | Train RMSE | Kaggle Score |
| --- | --- | --- |
| StackingRegressor | 0.11069786 | 0.11554 |
| AveragingRegressor | 0.12458452 | 0.12066 |
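For readers wondering why the scores are small numbers around 0.11: the Kaggle House Prices competition evaluates RMSE between the logarithm of the predicted price and the logarithm of the observed price. The sketch below assumes the Train RMSE column was computed the same way, which the write-up does not state explicitly; the function name `rmse_log` and the sample prices are hypothetical.

```python
import numpy as np

def rmse_log(y_true, y_pred):
    """Root mean squared error between log-prices."""
    return float(np.sqrt(np.mean((np.log(y_pred) - np.log(y_true)) ** 2)))

# Hypothetical sale prices, with every prediction 10% too high.
actual = np.array([200000.0, 150000.0, 320000.0])
predicted = actual * 1.1

score = rmse_log(actual, predicted)  # equals log(1.1), roughly 0.095
```

On this scale an error is relative rather than absolute, so being 10% off on a cheap house costs as much as being 10% off on an expensive one.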

Kaggle result: Our team placed in the top 3% of the Kaggle competition (as of 11/18/2018) and was the top Kaggle scorer in the September 2018 cohort. Here is our Kaggle score distribution graph with the number of submissions.

Conclusions: For our house model, we used stacking, which performed better than the averaging model. A drawback of the stacking model is that it was not possible to analyze the effect of individual features on the house sale price. From this model, we draw the following conclusions:

- Skewness, Z-scores, variable selection, and model hyperparameter tuning were very important.

- Feature engineering, such as filling in NaNs (with 0, the mode, or the median), binning, and applying domain knowledge, was most important for predicting the house sale price.

- GBM/XGBoost, though powerful for producing good benchmark solutions, is not always the best choice for model fitting.

Future directions: To improve this model's performance, we would consider the following in the future:

- Tune the parameters of the XGBoost and LightGBM models

- Apply clustering analysis to create new features

- Investigate further feature engineering possibilities

- Obtain time series event data to study the effect of the 2008 recession on house sale prices and to predict the effect of a future recession

Our team: Basant Dhital, Jiwon Chan, and SangYon Choi completed this project. All code for this project is available at the following GitHub link: https://github.com/chazzy1/nycdsaML.