Studying Data to Predict Housing Prices in Ames, Iowa

The skills demonstrated here can be learned through the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

Introduction:

A house is the most common means of accumulating personal wealth for many families. However, because the value of a house is not always clear, it has to be assessed between sales. These assessments are used not just for setting a sale price but also for other purposes, such as second mortgages and homeowners' insurance. It is therefore necessary to make them as accurate as possible.

This project reviews the features that matter most for predicting housing prices accurately. I employ regression models and tree-based models, using various features to lower the residual sum of squares error. Using features in regression models requires some feature engineering for better prediction. Because these models are susceptible to overfitting, Lasso and Ridge regression are used to reduce it.

About the data

The Ames Housing dataset was compiled by Dean De Cock for use in data science education. It is hosted as part of a Kaggle competition.

The Task

The goal of this project is to use EDA, visualization, data cleaning, preprocessing and feature engineering, together with linear models, tree-based models, and XGBoost, to predict home prices from the features of each home, and to interpret the linear models to find out which features add value to a home. The data was taken from Kaggle, and the project is built using R.

With 79 explanatory variables describing every aspect of residential homes in Ames, Iowa, this project challenges us to predict the final Sale Price of each home. Many features, such as Year Sold, do not have a strong relationship with Sale Price. However, a few variables, like overall quality and lot square footage, are highly correlated with Sale Price.

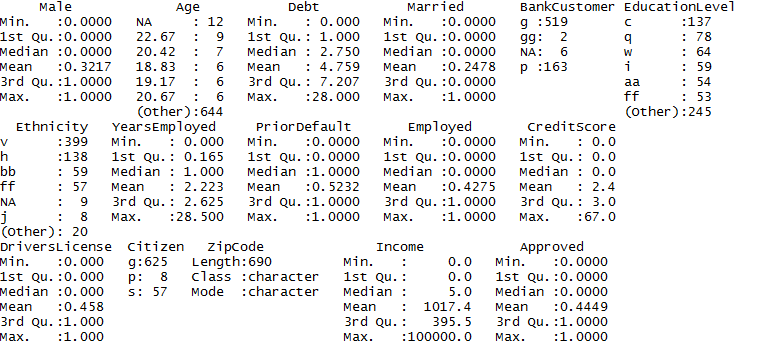

Pre-processing Data

There are 36 relevant numerical features of the following types:

MSSubClass: This identifies the type of dwelling involved in the sale. It is encoded as numeric but is in reality a categorical variable.

Square footage: Indicates the square footage of certain features, e.g. 1stFlrSF (first floor square footage) and GarageArea (size of garage in square feet).

Time: Time-related variables, such as when the home was built or sold.

Room and amenities: Features like the number of bedrooms and bathrooms.

Condition and quality: Subjective variables rated from 1–10.

Supervised machine learning models need clean data to approximate the relationship between the target sale price and the input features. In addition, the input features often need to go through nonlinear transformations, often called feature transformation. To achieve both, the following pre-processing steps were taken:

- Handling missing values: Many of the variables had NaN values that needed to be dealt with. NaNs in the continuous variables were replaced by zero. NaNs in the categorical variables were replaced by "None" for some variables and by the most frequent category for others. For GarageYrBlt (the year the garage was built), NaNs were replaced by YearBuilt, on the assumption that the garage was built in the same year as the house.

- Transform: Some of the ordinal fields were transformed to numeric ones by assigning the levels a numeric value on a scale of 1-5. That is, something rated "poor quality" was assigned a value of 1, while something rated "excellent" was assigned a value of 5.
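The two pre-processing steps above can be sketched in base R as follows. This is only an illustration on a made-up data frame: the column names follow the Ames data dictionary, but which column receives which treatment, and the Po/Fa/TA/Gd/Ex to 1-5 mapping, are assumptions rather than the project's actual code.

```r
# Toy data frame standing in for a few Ames columns (values are made up).
homes <- data.frame(
  LotFrontage = c(65, NA, 80),
  GarageType  = c("Attchd", NA, "Detchd"),
  Electrical  = c("SBrkr", "SBrkr", NA),
  YearBuilt   = c(2003, 1976, 1915),
  GarageYrBlt = c(2003, NA, 1998),
  ExterQual   = c("Gd", "TA", "Ex"),
  stringsAsFactors = FALSE
)

# Continuous variable: replace NA with zero.
homes$LotFrontage[is.na(homes$LotFrontage)] <- 0

# Categorical variable where NA means "feature absent": use "None".
homes$GarageType[is.na(homes$GarageType)] <- "None"

# Categorical variable where NA is a real gap: use the most frequent level.
most_frequent <- names(which.max(table(homes$Electrical)))
homes$Electrical[is.na(homes$Electrical)] <- most_frequent

# Garage year: fall back to the year the house was built.
missing_garage <- is.na(homes$GarageYrBlt)
homes$GarageYrBlt[missing_garage] <- homes$YearBuilt[missing_garage]

# Ordinal quality ratings mapped onto a 1-5 scale (assumed mapping).
quality_levels <- c(Po = 1, Fa = 2, TA = 3, Gd = 4, Ex = 5)
homes$ExterQual <- unname(quality_levels[homes$ExterQual])
```

Keeping the mapping in a named vector makes it easy to reuse the same scale for every quality-type column.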

Feature Engineering

Feature engineering is the process of transforming raw data into engineered features that lead to a better predictive model and improved accuracy on unseen data. Each feature in the model is given a weight that determines how important that feature is to the model's prediction. Some of the steps followed are:

- Creating dummy variables/One Hot encoding for the categorical variables.

- Because many features were correlated, they were grouped together to create new features.

- Scaling the data
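A minimal base R sketch of these three steps is shown below, on a made-up data frame. The combined feature TotalSF is a hypothetical example of grouping correlated features; the original does not say which features were actually combined.

```r
# Toy data (values are made up); column names follow the Ames data dictionary.
homes <- data.frame(
  GrLivArea   = c(1710, 1262, 1786, 1717),
  TotalBsmtSF = c(856, 1262, 920, 756),
  MSZoning    = factor(c("RL", "RL", "RM", "RL"))
)

# Group related area features into one new feature (hypothetical choice).
homes$TotalSF <- homes$GrLivArea + homes$TotalBsmtSF

# One-hot encode the categorical column; "- 1" drops the intercept so that
# every factor level gets its own 0/1 column.
dummies <- model.matrix(~ MSZoning - 1, data = homes)

# Center and scale the numeric columns (mean 0, standard deviation 1).
numeric_cols <- c("GrLivArea", "TotalBsmtSF", "TotalSF")
scaled <- scale(homes[, numeric_cols])

# Final design matrix: scaled numeric features plus dummy columns.
features <- cbind(scaled, dummies)
```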

Exploratory Data Analysis (EDA)

Numeric Variables

- Look at the correlation among the numeric features and select those features that have a correlation of more than 0.5 with the Sale Price and plot the correlation.

- Measure the skewness of the data. Select the features having a skewness > 0.8, and do a log transformation of those features.

- Normalize the data by centering and scaling the fields.

- We also look at the distribution of the target variable SalePrice. As the distribution is skewed, we perform a log transformation and normalize the field.
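The numeric steps above might look roughly like this in base R. The data is synthetic, and the skewness helper is a simple moment-based estimate written for this sketch (packages such as e1071 provide their own versions); only the 0.5 and 0.8 thresholds come from the text.

```r
set.seed(42)
n <- 1000

# Synthetic stand-in for the training data (not the real Ames values).
homes <- data.frame(
  GrLivArea = rlnorm(n, meanlog = 7.3, sdlog = 0.6),
  YrSold    = sample(2006:2010, n, replace = TRUE)
)
homes$SalePrice <- 100 * homes$GrLivArea * rlnorm(n, meanlog = 0, sdlog = 0.2)

predictors <- setdiff(names(homes), "SalePrice")

# Keep predictors whose absolute correlation with SalePrice exceeds 0.5.
cors <- sapply(homes[predictors], function(x) cor(x, homes$SalePrice))
selected <- names(cors)[abs(cors) > 0.5]

# Moment-based sample skewness (helper defined for this sketch).
skewness <- function(x) {
  centered <- x - mean(x)
  mean(centered^3) / mean(centered^2)^1.5
}

# Log-transform predictors with skewness above 0.8; log1p handles zeros.
skew_values <- sapply(homes[predictors], skewness)
skewed <- predictors[skew_values > 0.8]
homes[skewed] <- lapply(homes[skewed], log1p)

# Log-transform the skewed target as well.
homes$LogSalePrice <- log(homes$SalePrice)
```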

Distribution of SalePrice:

Distribution of SalePrice after Log transformation:

Most of the variables that deal with the actual physical space of the home are positively skewed, which makes sense, as most people live in smaller homes and only the extremely wealthy live in very large ones.

Sale Price has a similar positively skewed distribution, so I hypothesize that the variables dealing with the actual dimensions of the home have a large impact on Sale Price.

Models

Here comes the exciting part: modeling! I started with the obvious choice of Multiple Linear Regression, using the features that are highly correlated with SalePrice instead of all the features. I used the powerful "caret" package for most of the modeling. A brief explanation of the caret package follows:

Caret is short for Classification And REgression Training.

The advantage of caret is that it provides a consistent interface to many of R's most powerful machine learning facilities, along with an easy interface for performing complex tasks. That means you can train different types of models with one convenient format. You can also try various combinations of hyperparameters and evaluate performance to understand their impact on the model you are building. Additionally, caret helps you choose the most suitable model for a specific problem by comparing accuracy and performance across models.

Below are the models that were tried using the caret package:

- Multiple Linear Regression

- A naive Ridge Regression against the scaled data

- A naive Lasso Regression against the scaled data

- ElasticNet Regression against the scaled data

- Random Forest

- XGBoost

MLR:

I started by creating the Multiple Linear Regression model using the top 10 features most highly correlated with SalePrice. This serves as a baseline model. Once I had the R-squared from MLR, I used the regularized regression techniques (Lasso, Ridge, Elastic Net) to see whether they improved the accuracy of the model.
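As a rough illustration of such a baseline, the sketch below ranks predictors by their correlation with the target and fits lm on the top few. The data is synthetic and top_k is set to 2 only because the toy data has three predictors; the project itself used the top 10.

```r
set.seed(1)
n <- 300

# Synthetic data with Ames-like column names (values are made up).
homes <- data.frame(
  OverallQual = sample(1:10, n, replace = TRUE),
  GrLivArea   = runif(n, min = 600, max = 3000),
  YrSold      = sample(2006:2010, n, replace = TRUE)
)
homes$LogSalePrice <- 10.5 + 0.1 * homes$OverallQual +
  0.0003 * homes$GrLivArea + rnorm(n, sd = 0.15)

# Rank predictors by absolute correlation with the target; keep the top k.
top_k <- 2
predictors <- setdiff(names(homes), "LogSalePrice")
cors <- sapply(homes[predictors], function(x) abs(cor(x, homes$LogSalePrice)))
top_features <- names(sort(cors, decreasing = TRUE))[seq_len(top_k)]

# Fit the baseline multiple linear regression on the selected features.
baseline <- lm(reformulate(top_features, response = "LogSalePrice"), data = homes)

# In-sample R-squared and RMSE to compare against later models.
baseline_r2   <- summary(baseline)$r.squared
baseline_rmse <- sqrt(mean(residuals(baseline)^2))
```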

The caret package contains functions to streamline the model training process for complex regression and classification problems. One of the primary tools in the package is the train function, which can be used to:

- evaluate, using resampling, the effect of model tuning parameters on performance

- choose the "optimal" model across these parameters

- estimate model performance from a training set

There are options for customizing almost every step of this process. Options that I have used include the following:

I implemented a grid search with cross-validation to tune for the best hyperparameters. I started with a fairly coarse grid search over large gaps in the parameters and ended with a very fine search to zero in on the best values.

To change the candidate values of the tuning parameters, either the tuneLength or the tuneGrid argument can be used. The train function can generate a candidate set of parameter values, and the tuneLength argument controls how many are evaluated. In the case of glmnet, train generates candidate values of alpha and lambda on its own. The tuneGrid argument is used when specific values are desired, and it is the option I used.

tuneGrid takes a data frame in which each row is a tuning parameter setting and each column is a tuning parameter. To modify the resampling method, the trainControl function is used. Its method option controls the type of resampling and defaults to "boot". Another method, "cv", specifies K-fold cross-validation; K is controlled by the number argument and defaults to 10. In our model, we used K=5 for cross-validation.

Ridge regression model

We can get the list of important features by calling the varImp() function on the Lasso Regression model. Here are the top 20 important features we got:

Finally, to choose different measures of performance, additional arguments are given to trainControl. The expand.grid function is used to build the sequence of lambda values that are passed for tuning. The train function then picks the tuning parameters associated with the best results. Since we are using custom performance measures, the criterion that should be optimized must also be specified. The bestTune element of the fitted model provides the best lambda and alpha values, and results$RMSE gives the Root Mean Square Error for each tuning setting of a model. Out of the 3 techniques, Lasso and ElasticNet did equally well.
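Putting these pieces together, a Lasso fit through caret could look roughly like the sketch below. The data is synthetic, the lambda range is an arbitrary choice for illustration, and the caret part only runs when the caret and glmnet packages are installed; it is not the project's original code.

```r
# Each row of the tuning grid is one (alpha, lambda) setting; alpha = 1 is Lasso.
# The lambda range here is an assumption made for this sketch.
lasso_grid <- expand.grid(
  alpha  = 1,
  lambda = 10^seq(-4, 0, length.out = 25)
)

if (requireNamespace("caret", quietly = TRUE) &&
    requireNamespace("glmnet", quietly = TRUE)) {
  library(caret)

  # Synthetic, already-scaled predictors and a target (made-up coefficients).
  set.seed(7)
  x <- matrix(rnorm(200 * 5), ncol = 5,
              dimnames = list(NULL, paste0("x", 1:5)))
  y <- as.numeric(x %*% c(0.5, -0.3, 0, 0, 0.2) + rnorm(200, sd = 0.1))

  # 5-fold cross-validation, as described in the text.
  cv_control <- trainControl(method = "cv", number = 5)

  lasso_fit <- train(
    x = x, y = y,
    method    = "glmnet",
    metric    = "RMSE",      # criterion used to pick the best setting
    trControl = cv_control,
    tuneGrid  = lasso_grid
  )

  print(lasso_fit$bestTune)            # best alpha and lambda
  print(min(lasso_fit$results$RMSE))   # cross-validated RMSE at the best setting
  print(varImp(lasso_fit))             # feature importance from the fitted model
}
```

Swapping alpha to 0 gives Ridge, and a value between 0 and 1 gives Elastic Net, with the rest of the call unchanged.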

Regression Techniques

The same logic holds for all 3 regression techniques. In glmnet, alpha selects the type of penalty and is fixed for each technique, while lambda controls the strength of the penalty. Lambda has to be chosen carefully by iterating through a range of values, computing the error (or R-squared) for each, and using the one that gives the lowest error. The alpha values corresponding to each technique are:

Ridge: alpha = 0

Lasso: alpha = 1

Elastic Net: alpha = 0.5
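For reference, the penalized least-squares objective that glmnet minimizes for a continuous target can be written as below. The symbols N (number of rows), β₀ (intercept), and β (coefficient vector) are notation introduced here for illustration; λ is the penalty strength and α is the mixing parameter listed above.

```latex
\min_{\beta_0,\,\beta}\;
\frac{1}{2N}\sum_{i=1}^{N}\bigl(y_i-\beta_0-x_i^{\top}\beta\bigr)^2
+\lambda\left[\frac{1-\alpha}{2}\,\lVert\beta\rVert_2^2+\alpha\,\lVert\beta\rVert_1\right]
```

With α = 0 only the squared (Ridge) term remains, with α = 1 only the absolute-value (Lasso) term remains, and α = 0.5 weights the two equally.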

After trying the linear regression techniques, I now switch to non-linear models like Random Forest and XGBoost to evaluate their performance. As our data consists of a large number of categorical features, I assume Random Forest would be a good option because of its inherent resiliency to non-scaled and categorical features.

Data from Random Forest:

The caret package in R provides an excellent facility to tune machine learning algorithm parameters. However, not all parameters of an algorithm are exposed for tuning in caret. The choice of parameters is left to the developers of the package, namely Max Kuhn. Only those parameters that have a large effect (i.e. really require tuning in Kuhn's opinion) are available for tuning in caret.

For Random Forest, only the mtry parameter is available in caret for tuning. Because of its effect on the final accuracy, it must be found empirically for a dataset. I derived mtry by taking the square root of the number of variables in our training set, which gave a value of 20. I used the "repeatedcv" method in the trainControl function. 10-fold cross-validation with 3 repeats slows down the search, but is intended to reduce overfitting to the training set.

control <- trainControl(method="repeatedcv", number=10, repeats=3)
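A hedged sketch of how this control object might be used with a fixed mtry follows. The data is synthetic with 16 predictors (so the square-root rule gives 4 rather than the 20 reported above), ntree = 100 is chosen only to keep the example fast, and the caret part runs only when caret and randomForest are installed.

```r
# Synthetic predictors and target (made up for this sketch).
set.seed(123)
n_features <- 16
x <- matrix(rnorm(150 * n_features), ncol = n_features,
            dimnames = list(NULL, paste0("f", seq_len(n_features))))
y <- as.numeric(x[, 1] - 0.5 * x[, 2] + rnorm(150, sd = 0.2))

# Square-root rule for mtry, passed to caret as a one-row tuning grid.
mtry_value <- floor(sqrt(ncol(x)))
rf_grid <- expand.grid(mtry = mtry_value)

if (requireNamespace("caret", quietly = TRUE) &&
    requireNamespace("randomForest", quietly = TRUE)) {
  library(caret)

  # 10-fold cross-validation repeated 3 times.
  control <- trainControl(method = "repeatedcv", number = 10, repeats = 3)

  rf_fit <- train(
    x = x, y = y,
    method    = "rf",
    trControl = control,
    tuneGrid  = rf_grid,
    ntree     = 100   # passed through to randomForest; assumption for speed
  )
  print(rf_fit$results[, c("mtry", "RMSE", "Rsquared")])
}
```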

XGBoost:

XGBoost is one of the most popular machine learning algorithms these days. Regardless of the task (regression or classification), it offers strong accuracy and high speed across many types of datasets. It belongs to a family of boosting algorithms that convert weak learners into strong learners. A weak learner is one that is slightly better than random guessing.

XGBoost shines when we have lots of training data where the features are numeric or a mixture of numeric and categorical fields, like in our case. It is also important to note that XGBoost is not the best algorithm out there when all the features are categorical or when the number of rows is less than the number of fields (columns).

The most critical aspect of implementing the XGBoost algorithm is selecting the parameters used in tuning the model. There are three main types of parameters: General Parameters, Booster Parameters, and Task Parameters. Here we train the model using grid search to get the best values of the hyperparameters.

We convert the training and testing sets into DMatrix objects: DMatrix is the recommended data class in XGBoost, and it is recommended to use xgb.DMatrix to convert a data table into one.

This is the grid space to search for the best hyperparameters
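The original grid is not reproduced here. A hypothetical grid of the same shape, covering the seven parameters that caret tunes for its "xgbTree" method, might look like the sketch below. The candidate values are assumptions chosen so that the best setting reported later falls inside the grid; tune_xgb is a helper defined for this sketch, and 5-fold cross-validation is assumed.

```r
# Hypothetical search grid: one column per xgbTree tuning parameter,
# one row per combination to evaluate.
xgb_grid <- expand.grid(
  nrounds          = c(500, 1000),
  max_depth        = c(3, 5),
  eta              = c(0.01, 0.05),
  gamma            = 0,
  colsample_bytree = 1,
  min_child_weight = c(1, 4),
  subsample        = 1
)

# Helper (defined for this sketch) that runs the grid search through caret.
# x is a numeric predictor matrix and y the numeric target; calling it
# requires the caret and xgboost packages to be installed.
tune_xgb <- function(x, y, grid = xgb_grid) {
  control <- caret::trainControl(method = "cv", number = 5)
  caret::train(
    x = x, y = y,
    method    = "xgbTree",
    trControl = control,
    tuneGrid  = grid
  )
}

# Example usage (train_x and train_y are placeholders, not defined here):
# xgb_caret <- tune_xgb(train_x, train_y)
# xgb_caret$bestTune
```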

Finally, for our data, we got the following results using xgb_caret$bestTune:

       nrounds max_depth  eta gamma colsample_bytree min_child_weight subsample
    44    1000         5 0.05     0                1                4         1

Data Results

The RMSE of each model is:

MLR: RMSE is 0.170

Ridge: RMSE is 0.1382241

Lasso: RMSE is 0.1290906

ElasticNet: RMSE is 0.1290153

RandomForest: RMSE is 0.1431506

XGBoost: RMSE is 0.128208

From the above results, we can say XGBoost is the winner. However, Elastic Net, Lasso, and Ridge were close too. What's surprising is the performance of Random Forest: it performed worse than the regularized linear models, beating only the MLR baseline. Possible reasons for the weaker performance include the search over Random Forest parameters such as mtry, nodesize, and sampsize, though it is not clear that adjusting these would have improved the model.
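For readers who want to reproduce this kind of comparison, RMSE can be computed with a one-line helper. The sketch below assumes the error is measured on the log of SalePrice, which matches the log-transformed target described earlier and Kaggle's metric for this competition; the prices are made-up numbers.

```r
# Root mean squared error between actual and predicted values.
rmse <- function(actual, predicted) {
  sqrt(mean((actual - predicted)^2))
}

# Made-up prices for illustration only.
actual_price    <- c(200000, 150000, 320000)
predicted_price <- c(210000, 140000, 300000)

# Error on the log scale penalizes relative, not absolute, mistakes.
log_rmse <- rmse(log(actual_price), log(predicted_price))
```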

Ensembling

Another approach to combining models is ensembling, for example by stacking.

In stacking, multiple layers of machine learning models are placed one over another: each model passes its predictions to the models in the layer above it, and the top-layer model makes its decision based on the outputs of the layers below. Here I used a simpler form of ensembling: I took the outputs of the top 3 models (XGBoost, Lasso, and ElasticNet), averaged the predicted SalePrice, and wrote the new SalePrice to an Excel spreadsheet for submission.
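The averaging step can be sketched in a few lines of base R. The predictions below are made-up numbers, averaging on the log scale before converting back with exp() is an assumption, and the sketch writes a CSV to a temporary folder rather than an Excel file.

```r
# Made-up log-scale predictions from the three models for three test homes.
pred_log <- data.frame(
  xgboost    = c(12.20, 11.90, 12.60),
  lasso      = c(12.25, 11.85, 12.55),
  elasticnet = c(12.24, 11.86, 12.56)
)

# Simple ensemble: row-wise mean of the three models' predictions.
ensemble_log <- rowMeans(pred_log)

# Convert back from log scale to dollars (Id values are illustrative).
submission <- data.frame(
  Id        = 1461:1463,
  SalePrice = exp(ensemble_log)
)

# Write the submission file (CSV used here in place of a spreadsheet).
out_path <- file.path(tempdir(), "submission.csv")
write.csv(submission, out_path, row.names = FALSE)
```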

Conclusions

Predicting the sale prices in the Ames Housing dataset with machine learning techniques was quite a challenge. The vast number of features made it even more challenging. The key to creating the best model is feature engineering. Some of the features had more than 10 levels, and encoding those only increases the number of dummy variables. I believe the best option is to group related levels together to reduce the number of levels, though I haven't implemented that here.

To conclude, if you are trying to improve your model accuracy, you first need to understand your data and its features. The more information you can extract, the better your odds of a robust model. For that, it helps to have a domain expert who can guide you in the right direction.