Predicting Iowa House Prices using Supervised Machine Learning Algorithms

Authors: Daniel Park, Dimitri Liakhovitski, Gwen Fernandez, & Henry Crosby

NYC Data Science Bootcamp, November 2017

Project Background & Objectives

The goal of the project was to explore the data set and predict housing prices in Ames, Iowa using various supervised machine learning techniques, as part of a data science competition hosted by Kaggle.

The data set contained 79 explanatory variables describing almost every aspect of residential homes in Ames. It provided a rich opportunity for feature engineering and advanced regression techniques.

Our team worked on the project during the second half of October - beginning of November 2017.

Our Team’s Journey

Workflow:

- Understanding and exploring the data

- Brainstorming and first round of feature engineering

- Building individual predictive models

- Building model ensembles, testing on Kaggle Leader Board

- Second round of feature engineering – a different approach; shorter turnaround

- Building ensembles again, testing Kaggle Leader Board

1. Understanding and Exploring the Data

We created our GitHub repository and fired up Jupyter Notebook. We chose to complete the project entirely in Python.

Initially, we worked individually to familiarize ourselves with the data and gather insights from exploratory data analysis (EDA) using pandas and matplotlib. We focused on the following:

- Identifying variables that are highly correlated with Sale Price (our target variable);

- Creating meaningful categories of predictors;

- Feature engineering opportunities, e.g., meaningful combinations of predictors or interactions between some of them; and

- Reducing multicollinearity.

2. Brainstorming and First Round of Feature Engineering

After the initial exploration, we came together to discuss findings. We logged all observations in a shared Google Sheet, which proved valuable for organizing our findings and then selecting appropriate actions to take on each feature.

3. Building Individual Predictive Models

From there, our team finalized the first modeling-ready data set. After that, we divided up the work of predicting house prices using the following machine learning algorithms:

- Regularized Linear Models

- Random Forest

- Gradient Boosting Machines (Tree-Based)

- Support Vector Machines and Linear Models with Kernel Trick

4. Building Model Ensembles, Testing on Kaggle Leader Board

After working for a while individually, we came together to look at and learn from each others’ models, which was a great learning experience. Each team member brought a deeper understanding about his/her model and showed the group a few new technical tricks discovered along the way.

To ensemble the models, we averaged individual models' predictions and then tried stacking individual model predictions using a meta model. We were pretty happy with our score on cross validation and the Kaggle Leader Board. The score is root mean squared log error: sale price was logged to make the size of the errors more proportional on the low and high side of the price spectrum. Our ensembles came out to around 0.125 on the Leader Board after scoring around 0.115 in cross validation on the training set. That 0.125 score on the Leader Board translates to a typical error of roughly 13% in dollar terms (exp(0.125) ≈ 1.13), good but not quite as good as we had hoped!
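To make the metric concrete, here is a minimal sketch of root mean squared log error and of how a log-scale score converts back to a percentage. The helper name `rmsle` and the prices are made up for illustration; they are not competition data.

```python
import numpy as np

def rmsle(y_true, y_pred):
    """Root mean squared error between log prices (hypothetical helper)."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    return float(np.sqrt(np.mean((np.log(y_pred) - np.log(y_true)) ** 2)))

# Made-up example: every prediction is too high by the same factor exp(0.125)
actual = np.array([100_000.0, 200_000.0, 400_000.0])
predicted = actual * np.exp(0.125)

score = rmsle(actual, predicted)
typical_pct_error = np.exp(score) - 1  # log error converted back to a ratio
```

Because the error is measured on logs, a miss of the same percentage counts equally for a cheap house and an expensive one.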

5. Second Round of Feature Engineering – A Different Approach; Shorter Turnaround

6. Building ensembles again, testing Leader Board

To our surprise, and some dismay, we found that our predictive techniques performed better on the new data set! And despite the fact that we ignored multicollinearity, our models seemed able to deal successfully with the collinearity of the house features.

After some trial and error ensembling, we arrived at an ensemble that balanced a few of our best individual models. These models appeared to balance each other in regions of housing prices where the residuals (the errors) of the model increased, usually at the low and high tails of the housing prices in Ames.

At the end, we achieved a top 8.9% position on the Kaggle Leader Board with a root mean squared log error of 0.115. This also happened to be the best in our class 🙂

The above was a high-level look at our work flow. Here is how the soup was made:

First Approach to Feature Engineering

We decided to omit variables that had virtually no variance, such as:

- Street: Type of road access to property – as 99.6% of houses had paved road access

- Utilities: Type of utilities available – as 99.9% of houses had all public utilities

- RoofMatl: Roof material – as 98.2% had ‘Standard (Composite) Shingles’

- Heating: Type of heating – as 97.8% had gas heating

For each ‘object’ column we looked at its meaning and level frequency. For example:

We built dummies for higher incidence levels: either manually or using pd.get_dummies()
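As a rough sketch of the dummy-building step, the snippet below keeps only the frequent levels of one column and pools the rest before calling pd.get_dummies(). The column values, the 20% cutoff, and the "Other" bucket are assumptions made for illustration, not the rules the team actually applied.

```python
import pandas as pd

# Toy column standing in for one 'object' feature (values are illustrative)
df = pd.DataFrame({"Foundation": ["PConc"] * 5 + ["CBlock"] * 4 + ["Slab"]})

min_share = 0.2  # hypothetical cutoff for a "higher incidence" level
shares = df["Foundation"].value_counts(normalize=True)
frequent = shares[shares >= min_share].index

# Rare levels are pooled into "Other" before one-hot encoding
pooled = df["Foundation"].where(df["Foundation"].isin(frequent), "Other")
dummies = pd.get_dummies(pooled, prefix="Foundation")
```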

We transformed ‘object’ columns that had quantitative meaning using our judgement: a "theory-driven" transformation of quality variables to scores, that is, making up a sensible score! For example: excellent kitchen quality = 3, good = 2, average = 1, no kitchen = 0.
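A score mapping like the kitchen example can be sketched with a plain dictionary. The raw level codes ("Ex", "Gd", "TA"), the column names, and the choice to treat a missing value as "no kitchen" are assumptions for illustration.

```python
import pandas as pd

# Scores follow the example in the text; the level codes are assumed
quality_scores = {"Ex": 3, "Gd": 2, "TA": 1}

df = pd.DataFrame({"KitchenQual": ["Gd", "TA", "Ex", None]})

# Unmapped or missing levels become 0, standing in for "no kitchen"
df["kitchen_quality"] = df["KitchenQual"].map(quality_scores).fillna(0).astype(int)
```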

We excluded some numeric variables if they were redundant statistically & conceptually:

We combined some highly correlated numeric variables into one and used only the new one in our models:

For example, we combined into one new variable ‘average_quality’ the following quality-related variables that were highly correlated – and then omitted those 4 from our models.

- 'exterior_quality',

- 'heating_quality',

- 'kitchen_quality',

- 'OverallQual'
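One simple way to build such a combined variable is a row-wise mean, which the name ‘average_quality’ suggests. This is a sketch on made-up rows; whether the columns were rescaled to a common range first is not stated, so no rescaling is done here.

```python
import pandas as pd

quality_cols = ["exterior_quality", "heating_quality", "kitchen_quality", "OverallQual"]

# Made-up rows; GrLivArea is included only to show that other columns survive
df = pd.DataFrame({
    "exterior_quality": [2, 3, 1],
    "heating_quality": [3, 3, 1],
    "kitchen_quality": [2, 3, 1],
    "OverallQual": [5, 9, 3],
    "GrLivArea": [1500, 2400, 900],
})

# Combine the four correlated columns, then drop the originals
df["average_quality"] = df[quality_cols].mean(axis=1)
df = df.drop(columns=quality_cols)
```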

To reduce multi-co-linearity, we regressed one variable onto several others and kept only its residual:

Example of regressing age of the house onto brick & tile foundation dummy, garage perception, number of full baths, and exterior vinyl siding dummy – because age was highly correlated with all those variables:
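The residual trick can be sketched as follows on synthetic data. The arrays are random stand-ins for house age and the predictors it was correlated with; only the mechanics (fit, predict, subtract) are meant to carry over.

```python
import numpy as np
from sklearn.linear_model import LinearRegression

rng = np.random.default_rng(0)

# Synthetic stand-ins: three predictors and an "age" column correlated with them
others = rng.normal(size=(200, 3))
age = others @ np.array([2.0, -1.0, 0.5]) + rng.normal(size=200)

# Regress age on the other predictors and keep only the unexplained part
fit = LinearRegression().fit(others, age)
age_residual = age - fit.predict(others)

# Least-squares residuals are uncorrelated with the regressors used in the fit
max_corr = max(abs(np.corrcoef(age_residual, others[:, j])[0, 1]) for j in range(3))
```

The residual keeps whatever information age carries beyond the other variables, while its correlation with them drops to zero by construction.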

We kept updating and studying the correlation matrix, striving to reduce predictor multi-co-linearity:

We imputed missing values using mice from ‘fancyimpute’ package:

- ‘fancyimpute’ package provides a Python implementation of MICE, the method behind the R package ‘mice’ – the best library for missing data imputations.

- It is a bit tricky to install ‘fancyimpute’ on Windows.

- MICE stands for Multiple Imputation by Chained Equations.

- As imputation method, we used MICE.

- Details on MICE: https://www.ncbi.nlm.nih.gov/pmc/articles/PMC3074241/
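The exact fancyimpute call the team used is not shown, so rather than guess at it, this sketch swaps in scikit-learn's IterativeImputer, which likewise models each feature with missing values as a function of the other features in round-robin fashion. The data is synthetic.

```python
import numpy as np
from sklearn.experimental import enable_iterative_imputer  # noqa: F401 (enables the import below)
from sklearn.impute import IterativeImputer

rng = np.random.default_rng(0)
X = rng.normal(size=(100, 3))
X[:, 2] = X[:, 0] + X[:, 1]     # third column depends on the first two

X_missing = X.copy()
X_missing[::10, 2] = np.nan     # knock out every tenth value in that column

# Each feature with gaps is predicted from the others, repeated for several rounds
imputer = IterativeImputer(max_iter=10, random_state=0)
X_imputed = imputer.fit_transform(X_missing)
```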

Finally, we transformed the target variable and some predictors and scaled (standardized) ALL predictors

We used the log of the dependent variable, SalePrice, for modeling:

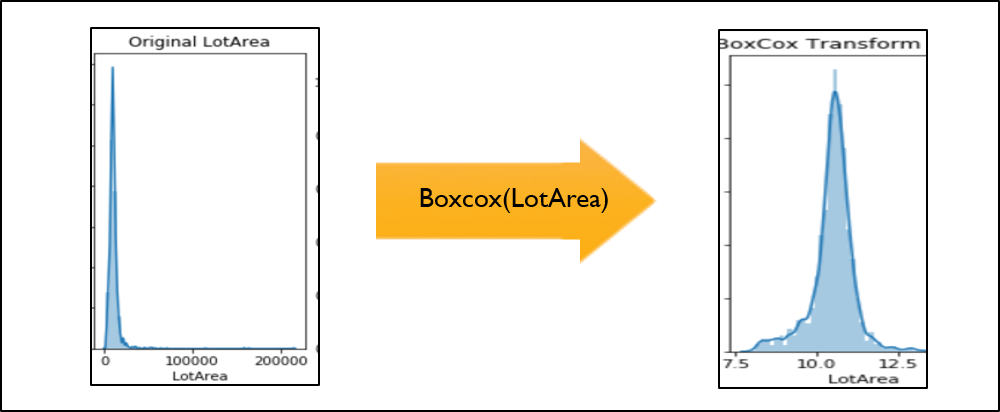

We transformed several numeric predictors using Box-Cox transformation – to make them less skewed:

We scaled (standardized) all predictors using StandardScaler.
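The three transformations can be sketched together on synthetic right-skewed data. The variable names are placeholders, and scipy.stats.boxcox is one possible way to apply Box-Cox; the team's exact function and lambda values are not given.

```python
import numpy as np
from scipy import stats
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)

# Synthetic right-skewed data standing in for SalePrice and one predictor
sale_price = rng.lognormal(mean=12.0, sigma=0.4, size=500)
lot_area = rng.lognormal(mean=9.0, sigma=0.6, size=500)

log_price = np.log(sale_price)  # target used for modeling

# Box-Cox needs strictly positive input; lambda is estimated by maximum likelihood
lot_area_bc, lam = stats.boxcox(lot_area)

# Standardize the predictor to zero mean and unit variance
X_scaled = StandardScaler().fit_transform(lot_area_bc.reshape(-1, 1))

skew_before = stats.skew(lot_area)
skew_after = stats.skew(lot_area_bc)
```

In a real workflow the scaler (and the Box-Cox lambda) would be fit on the training set and then reused on the test set.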

Identifying and eliminating outliers: Outliers in the training set could bias our model!

- We decided to identify them and get rid of them – before fitting our models.

- We built a function that helps identify outliers in TRAIN – using scipy.spatial.distance:
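The team's function is not shown, so the following is only a sketch of one distance-based approach using scipy.spatial.distance. The choice of Mahalanobis distance from the column means, the function name `flag_outliers`, and the threshold are all assumptions.

```python
import numpy as np
from scipy.spatial import distance

def flag_outliers(X, threshold):
    """Boolean mask of rows whose Mahalanobis distance from the
    column means exceeds `threshold` (hypothetical helper)."""
    X = np.asarray(X, dtype=float)
    center = X.mean(axis=0, keepdims=True)
    inv_cov = np.linalg.pinv(np.cov(X, rowvar=False))
    d = distance.cdist(X, center, metric="mahalanobis", VI=inv_cov).ravel()
    return d > threshold

rng = np.random.default_rng(0)
X_train = rng.normal(size=(300, 2))
X_train[0] = [12.0, -12.0]  # planted extreme row

mask = flag_outliers(X_train, threshold=6.0)  # threshold chosen for illustration
X_clean = X_train[~mask]
```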

Second Approach to Feature Engineering

We decided to leverage ‘label encoding’ to recode all truly categorical variables:

- Categorical variables that were truly numeric were recoded same as before – using ‘theory-driven’ transformation

- All truly categorical variables were recoded using label encoding

- As a result, our second dataset contained no dummy variables

- Outliers: Presence of multiple label-encoded variables might bias our distance calculations, so we just excluded 2 outliers with very large living area and very low sale price

- We tried something new now – we ignored multicollinearity!
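A minimal sketch of this second recoding, using scikit-learn's LabelEncoder on a toy frame. The column names follow the Kaggle data set, but the rows and the numeric cutoffs for "very large living area and very low sale price" are hypothetical.

```python
import pandas as pd
from sklearn.preprocessing import LabelEncoder

df = pd.DataFrame({
    "Neighborhood": ["NAmes", "OldTown", "NAmes", "Edwards"],
    "GrLivArea": [1200, 5600, 1500, 4700],
    "SalePrice": [140_000, 160_000, 175_000, 185_000],
})

# Each category becomes an integer code (codes follow sorted label order)
df["Neighborhood"] = LabelEncoder().fit_transform(df["Neighborhood"])
codes = df["Neighborhood"].tolist()

# Hypothetical cutoffs for the manually excluded outliers
is_outlier = (df["GrLivArea"] > 4000) & (df["SalePrice"] < 300_000)
df = df[~is_outlier].reset_index(drop=True)
```

Label encoding imposes an arbitrary order on the categories, which tree-based models tolerate better than linear ones.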

Prediction!

Work Flow:

- Run several regression models – separately;

- Use Cross-Validated Grid Search to tune hyperparameters for each model and compare scores;

- Submit predictions to Kaggle to check models’ performance on Public Leaderboard;

- Combine individual models using several different ensembling methods.
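The tuning step of this workflow can be sketched with GridSearchCV. The data is synthetic and the grid is illustrative; the grids and best values the team found are not reproduced here.

```python
import numpy as np
from sklearn.linear_model import ElasticNet
from sklearn.model_selection import GridSearchCV

rng = np.random.default_rng(0)
X = rng.normal(size=(200, 5))
y = X @ np.array([1.0, 0.5, 0.0, 0.0, -1.0]) + 0.1 * rng.normal(size=200)

# Illustrative grid, not the one used in the project
param_grid = {"alpha": [0.001, 0.01, 0.1, 1.0], "l1_ratio": [0.2, 0.5, 0.8]}

search = GridSearchCV(
    ElasticNet(max_iter=10_000),
    param_grid,
    scoring="neg_mean_squared_error",  # sklearn maximizes scores, hence "neg"
    cv=5,
)
search.fit(X, y)

cv_rmse = float(np.sqrt(-search.best_score_))
best_params = search.best_params_
```

When the target is the log of the sale price, this cross-validated RMSE is on the same scale as the Leader Board metric, which makes the two directly comparable.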

Supervised Learning Methods Used:

- Support Vector Regression (sklearn.svm.SVR)

- Random Forests Regression (sklearn.ensemble.RandomForestRegressor)

- Gradient Boosted Regression (sklearn.ensemble.GradientBoostingRegressor)

- Linear Models:

- Ridge, Lasso, Elastic Net (sklearn.linear_model.Ridge, sklearn.linear_model.Lasso, sklearn.linear_model.ElasticNet)

- Kernel Ridge Regression (sklearn.kernel_ridge.KernelRidge)

Individual models: Hyper-Parameters and Score on Grid Search + CV:

Ensembling methods used:

1. Simple averaging: used the best tuned hyperparameters to fit individual models and averaged their predictions

2. Stacking: used the same hyperparameters but trained a meta model (either a lasso or a gradient boosting machine) to produce predictions

3. Due to the poor performance of our tree models, we averaged the predictions of just SVR and Elastic Net
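The first two methods can be sketched as follows on synthetic data. The hyperparameters are placeholders rather than the tuned values, and training the meta model on out-of-fold predictions via cross_val_predict is one common way to stack, assumed here since the team's exact procedure is not described.

```python
import numpy as np
from sklearn.linear_model import ElasticNet, Lasso
from sklearn.model_selection import cross_val_predict
from sklearn.svm import SVR

rng = np.random.default_rng(0)
X = rng.normal(size=(300, 4))
y = X @ np.array([1.0, -0.5, 0.25, 0.0]) + 0.1 * rng.normal(size=300)
X_train, y_train, X_test, y_test = X[:200], y[:200], X[200:], y[200:]

# Placeholder hyperparameters, not the tuned values from the project
base_models = [SVR(C=10.0, epsilon=0.05), ElasticNet(alpha=0.01, l1_ratio=0.5)]

# 1. Simple averaging of the base models' test predictions
test_preds = np.column_stack(
    [m.fit(X_train, y_train).predict(X_test) for m in base_models]
)
avg_pred = test_preds.mean(axis=1)

# 2. Stacking: the meta model is trained on out-of-fold predictions, so it never
#    sees a base model's prediction for a row that model was fit on
oof_preds = np.column_stack(
    [cross_val_predict(m, X_train, y_train, cv=5) for m in base_models]
)
meta = Lasso(alpha=0.001).fit(oof_preds, y_train)
stacked_pred = meta.predict(test_preds)
```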

Ensemble prediction Results

Woohoo! Top 9%, 0.115 error, good enough for us!

Lessons learned

- HAVE FUN! We had a great time working together, and it was a thrill as it all came together at the end

- Wrap it up at the right moment: learn to recognize diminishing returns at each stage of the project

- Feature engineering is tough: so many ways to cut the data – hard to know a priori which one is best

- Prediction ≠ Interpretation: Overemphasis of prediction lessens the need to understand the data and models

- Is collinearity bad? Eliminating it at the cost of losing features might not help even for linear models, or..?

- Cross-Validation or Kaggle Leader Board?? The eternal question.

- Our individual linear models actually performed best for us on LB

- Our averaged model predictions performed the best on CV, close to best on LB

- Our fancy ensemble with various meta-models didn’t do quite as well

Thank you for reading!

We appreciate your time and any inquiries you might have (especially about new opportunities). Visit the GitHub project of Henry, Gwen, Dimitri, and Daniel to see the code.

As a bonus, see below for a conceptual and sklearn based cheat sheet on the various models used above:

Regression Model and Hyper-Parameters ‘Cheat Sheet’:

Linear regression with regularization:

- Ridge and Lasso are linear models with regularization penalties: L2 and L1, respectively

- Alpha (or lambda) tunes the magnitude of the regularization penalty

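- L2 and L1 refer to how the penalty is measured: the squared Euclidean norm (L2) or the “city-block” norm (L1, the sum of absolute values) of the coefficients, i.e. the distance from the intercept-only model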

- From https://www.r-bloggers.com/kickin-it-with-elastic-net-regression/ :

- “Because some of the coefficients shrink to zero, the lasso doubles as a crackerjack feature selection technique in addition to a solid shrinkage method. This property gives it a leg up on ridge regression. On the other hand, the lasso will occasionally achieve poor results when there’s a high degree of collinearity in the features and ridge regression will perform better. Further, the L1 norm is underdetermined when the number of predictors exceeds the number of observations while ridge regression can handle this.”

- Elastic Net Regression blends the penalty of both regressions with L1/L2 weighting. An elastic net with full L1 weighting is Lasso and full L2 is Ridge.
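In scikit-learn the L1/L2 weighting is the `l1_ratio` argument of ElasticNet. The sketch below, on synthetic data, checks that full L1 weighting reproduces Lasso and shows that the L1 penalty sets some coefficients exactly to zero. The alpha value is illustrative.

```python
import numpy as np
from sklearn.linear_model import ElasticNet, Lasso

rng = np.random.default_rng(0)
X = rng.normal(size=(100, 6))
y = X @ np.array([2.0, 0.0, 0.0, -1.0, 0.0, 0.5]) + 0.1 * rng.normal(size=100)

lasso = Lasso(alpha=0.1).fit(X, y)
enet_l1 = ElasticNet(alpha=0.1, l1_ratio=1.0).fit(X, y)   # pure L1 penalty
enet_mix = ElasticNet(alpha=0.1, l1_ratio=0.5).fit(X, y)  # half L1, half L2

# Count of coefficients the L1 penalty shrank exactly to zero
n_zero_lasso = int(np.sum(lasso.coef_ == 0))
```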

Random Forest:

- n_estimators: number of trees you want to build before the maximum voting/taking averages of predictions. A larger number of trees will allow the model to perform better, but will take longer to run

- max_features: dictates the max number of features RF can try within each split

- Increasing max_features gives each split more candidate features to choose from, which can improve individual trees, but it also makes the trees more similar to each other and increases the chance of overfitting

- max_depth: depth of tree = length of longest path from root node to leaf along a single decision tree. Limiting the depth of individual trees within RF will fight overfitting.
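The three hyperparameters map directly onto RandomForestRegressor arguments. This is a sketch on synthetic data with arbitrary values, not the tuned settings.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor

rng = np.random.default_rng(0)
X = rng.normal(size=(300, 8))
y = np.sin(X[:, 0]) + X[:, 1] ** 2 + 0.1 * rng.normal(size=300)

forest = RandomForestRegressor(
    n_estimators=200,  # number of trees whose predictions are averaged
    max_features=0.5,  # fraction of features considered at each split
    max_depth=6,       # cap on the root-to-leaf path length in each tree
    random_state=0,
).fit(X, y)

# The depth cap holds for every tree in the forest
deepest = max(tree.get_depth() for tree in forest.estimators_)
```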

Support Vector Machines (SVM):

- Kernel type - the type of kernel used. We tried:

- 'poly' - polynomial kernel

- 'rbf' - radial basis function kernel = radial kernel

- ‘degree’ (for polynomial kernel only) – the nth degree of the polynomial (linear = 1, quadratic =2, etc.)

- ‘gamma’ (for the radial kernel, and in scikit-learn also the polynomial and sigmoid kernels): the coefficient inside the kernel equation

- ‘C’ - controls how heavily violations of the margin are penalized. Textbooks often describe C as an "error" budget, but in scikit-learn C multiplies the penalty on violations, so the strength of regularization is inversely proportional to C.

- As C increases in scikit-learn, violations cost more, the fit follows the training data more closely, variance increases, and bias decreases

- Important: For regression we can't 'misclassify', so the goal is instead to keep the fitted function as flat as possible while including as many points as possible within the margin

- ‘epsilon’ - the half-width of the margin (the "tube") around the fitted function; errors smaller than epsilon are not penalized. By setting epsilon we are controlling how much error we allow the model per point. A very small epsilon can lead to extreme overfitting.
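The role of epsilon can be seen by counting support vectors, which are the points on or outside the margin. This sketch uses synthetic data and arbitrary hyperparameter values.

```python
import numpy as np
from sklearn.svm import SVR

rng = np.random.default_rng(0)
X = np.sort(rng.uniform(-3, 3, size=(150, 1)), axis=0)
y = np.sin(X).ravel() + 0.1 * rng.normal(size=150)

# Points inside the epsilon-tube incur no loss, so a wider tube
# leaves fewer points as support vectors
narrow = SVR(kernel="rbf", C=1.0, gamma=0.5, epsilon=0.01).fit(X, y)
wide = SVR(kernel="rbf", C=1.0, gamma=0.5, epsilon=0.3).fit(X, y)

n_sv_narrow = len(narrow.support_)
n_sv_wide = len(wide.support_)
```

With the narrow tube nearly every noisy point becomes a support vector, which is the overfitting risk described above.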

Gradient Boosting Machines:

- We used trees, so all parameters that apply to the depth of a tree and the number of features used at each split also apply here

- ‘n_estimators’ – total number of trees we want to build

- ‘learning_rate’ – the shrinkage factor = the degree to which we shrink each successive tree’s predictions. Small values mean that the learning is happening very slowly (large shrinkage). Large values imply that we shrink each tree’s predictions less so that the trees are ‘learning’ faster. However, that could lead to our missing the optimum point we are looking for.
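The trade-off between learning_rate and n_estimators can be sketched by tracking validation error tree by tree with staged_predict. Data and settings are synthetic and illustrative.

```python
import numpy as np
from sklearn.ensemble import GradientBoostingRegressor
from sklearn.metrics import mean_squared_error

rng = np.random.default_rng(0)
X = rng.normal(size=(400, 5))
y = np.sin(X[:, 0]) + X[:, 1] * X[:, 2] + 0.1 * rng.normal(size=400)
X_train, y_train, X_val, y_val = X[:300], y[:300], X[300:], y[300:]

val_mse = {}
for rate in (0.01, 0.1):
    gbm = GradientBoostingRegressor(
        n_estimators=100, learning_rate=rate, max_depth=3, random_state=0
    ).fit(X_train, y_train)
    # staged_predict yields the ensemble's predictions after each additional tree
    val_mse[rate] = [mean_squared_error(y_val, p) for p in gbm.staged_predict(X_val)]
```

Comparing the two curves shows why a small learning_rate usually has to be paired with a larger n_estimators.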

Kernel Ridge Regression

- Kernel ridge regression (KRR) combines Ridge Regression (linear least squares with l2-norm regularization) with the kernel trick - http://scikit-learn.org/stable/modules/kernel_ridge.html

- KRR is similar to Support Vector Machines except that the loss function is different: KRR uses squared error loss for all points, while support vector regression uses epsilon-insensitive loss, which ignores errors for points inside the margin

- Scikit-learn allows you to tune:

- Alpha, as with ridge regression, is the scaling of the cost for the coefficients i.e. for departing from the mean model

- Kernel is the kernel trick used to map the features onto a different space e.g. polynomial, radial

- Gamma is another tuning parameter that goes into the kernel

- Degree is for poly and denotes the number of degrees of polynomial
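These four settings correspond to the `alpha`, `kernel`, `gamma`, and `degree` arguments of sklearn.kernel_ridge.KernelRidge. A minimal sketch on synthetic data, with untuned values:

```python
import numpy as np
from sklearn.kernel_ridge import KernelRidge

rng = np.random.default_rng(0)
X = rng.uniform(-3, 3, size=(200, 1))
y = np.sin(X).ravel() + 0.1 * rng.normal(size=200)

# Hyperparameter values are illustrative, not tuned
krr_rbf = KernelRidge(kernel="rbf", alpha=0.1, gamma=0.5).fit(X, y)
krr_poly = KernelRidge(kernel="polynomial", alpha=0.1, degree=3).fit(X, y)

r2_rbf = krr_rbf.score(X, y)  # R^2 on the training data
```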