Using Data From Yelp to Predict Michelin Stars for Restaurants

The skills the author demonstrates here can be learned by taking the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

Contributed by Tyler Knutson. He is currently enrolled in the NYC Data Science Academy 12-week full-time Data Science Bootcamp program, which runs from July 5th to September 23rd, 2016. Code can be viewed in its entirety on GitHub.

Context and Overview

$250 a head for food, before drinks. No reservations available for months at a time. Lottery systems just for a chance at booking a table. Intricate 3+ hour dining experiences with dishes that resemble works of art more than cuisine. Welcome to the elegant world of Michelin fine dining.

The Michelin Guide has been published in France since 1900 and has awarded its famous one-, two-, and three-star ratings to restaurants around the world since 1931. Only a small fraction of the world's restaurants hold a Michelin star, and while some might demur, most chefs consider Michelin recognition the highest honor in their profession. Not surprisingly, most publications' "top chefs" lists are filled with Michelin-starred chefs, who have become this century's "rock stars"...at least to those who can afford their prices.

While not all Michelin-starred restaurants are created equal (particularly in terms of price), it is worth noting just how expensive some of these eateries have become. Take Thomas Keller's famed Per Se in New York City, where diners frequently spend over $500 per person on a chef's tasting menu of 10+ courses with wine pairings. Even so, reservations are few and far between: Keller has crafted an experience that drives huge demand at prices most people would consider exorbitant.

Objective

Driving this level of revenue while showcasing the abilities of one's chef seems like every restaurateur's dream. So how does one earn an elusive Michelin star?

Unfortunately, Michelin is rather secretive about its practices, particularly the identities of its critics and the criteria they use. This raises the question: can we use other available review data to better understand Michelin restaurants? Luckily for us, one website has extensive reviews of every Michelin-starred restaurant in the world: Yelp.

Data

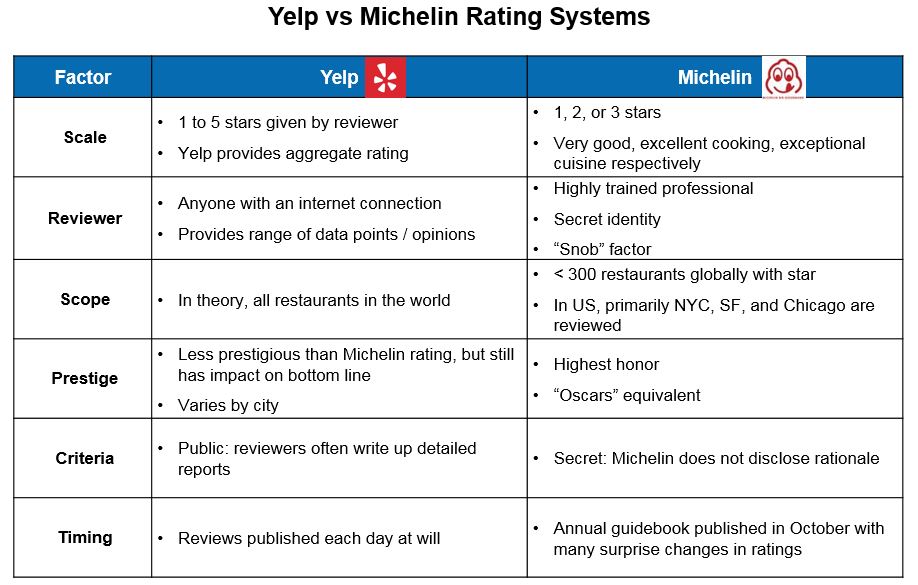

The following table compares Yelp vs Michelin:

Currently, Michelin primarily reviews three major US metropolitan areas: New York, Chicago, and San Francisco. For my analysis I focused on the San Francisco Bay Area alone, with the goal of understanding how Yelp and Michelin differ in their ratings of these high-end restaurants.

Approach

For this project I focused on using web scraping techniques to collect review data from Yelp, transform it into a structured format, and perform a series of analyses. I was able to scrape nearly 100% of reviews for all 62 Bay Area restaurants that held one or more Michelin stars for at least one year between 2010 and 2016 as well as for 38 non-Michelin starred restaurants that had a similar profile (51,405 and 37,489 reviews, respectively).

My high-level approach is depicted in the image below:

Extraction and Transformation

I used the BeautifulSoup package in Python for all of my web scraping of Yelp. I identified two main "table" equivalents, review data and restaurant data, and defined two separate functions to parse the relevant HTML tags on each page and collect the target fields. I then wrapped both functions in a third function that loops through each restaurant's Yelp URL (and its related sub-pages), read from a CSV file, and writes the results to an output CSV file. This output file is then loaded into R, where I performed the bulk of the analysis.

More technical detail, including code snippets, is in the "Technical Details" section of this post.

Baseline Data Analysis

Since the criteria Michelin uses to evaluate restaurants are largely unknown, we can instead establish a baseline profile of each restaurant using Yelp data. My first question was whether Michelin and Yelp star ratings agree with one another:

As expected, Michelin and Yelp ratings are positively correlated, with about a 10% difference in average Yelp rating between formerly Michelin-starred restaurants (currently 0 stars) and 3-star eateries. While there doesn't appear to be much difference in Yelp ratings between 1- and 2-star Michelin restaurants, this may be due to how recently the 2-star restaurants earned their stars; as time goes on I would expect Yelp ratings for 2-star restaurants to trend higher.
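The rating comparison above amounts to grouping restaurants by their current Michelin stars and averaging their Yelp ratings. The original analysis was done in R. Below is a minimal Python sketch of the same calculation on made-up rows; the restaurant names and ratings are hypothetical placeholders, not the scraped data:

```python
from collections import defaultdict
from statistics import mean

# Hypothetical rows: (restaurant, current Michelin stars, aggregate Yelp rating).
# In this dataset, 0 stars means "formerly starred".
restaurants = [
    ("Restaurant A", 0, 4.0), ("Restaurant B", 0, 4.0),
    ("Restaurant C", 1, 4.5), ("Restaurant D", 1, 4.0),
    ("Restaurant E", 2, 4.5), ("Restaurant F", 2, 4.0),
    ("Restaurant G", 3, 4.5), ("Restaurant H", 3, 4.5),
]


def mean_rating_by_stars(rows):
    """Return {michelin_stars: mean Yelp rating}, ordered by star level."""
    groups = defaultdict(list)
    for _name, stars, yelp_rating in rows:
        groups[stars].append(yelp_rating)
    return {stars: mean(ratings) for stars, ratings in sorted(groups.items())}


def pct_difference(baseline, value):
    """Percent by which `value` exceeds `baseline`."""
    return (value - baseline) / baseline * 100


by_stars = mean_rating_by_stars(restaurants)
print(by_stars)                                  # {0: 4.0, 1: 4.25, 2: 4.25, 3: 4.5}
print(pct_difference(by_stars[0], by_stars[3]))  # 12.5
```

Run on the real restaurant table, the same two functions would give the per-star averages and the relative gap between the 0-star and 3-star groups.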

So Michelin restaurants tend to have high Yelp ratings in San Francisco, but what do other metrics look like? The table below summarizes a few of the key measures:

Though we don't have comparable data for all non-Michelin restaurants in the Bay Area (the 0-star category is not representative, since these restaurants previously held at least one star), we can still draw some intuitive interpretations from the data.

Michelin is Fickle

One interesting finding is that of the 62 restaurants awarded at least one Michelin star since 2010, nearly a quarter had lost their star(s) by 2016. This suggests a chef cannot simply relax after earning a coveted Michelin star and must work to maintain the superior quality of cuisine and service that earned it in the first place.
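Counting the restaurants that have lost every star requires only each restaurant's star history by guide year. Here is a minimal sketch, assuming a hypothetical `{restaurant: {year: stars}}` layout with made-up entries:

```python
# Hypothetical star history: restaurant -> {Michelin Guide year: stars held}.
# A guide year missing for a restaurant is treated as 0 stars.
history = {
    "Restaurant A": {2010: 1, 2011: 1, 2012: 1, 2013: 0},
    "Restaurant B": {2012: 2, 2013: 2, 2014: 2, 2015: 2, 2016: 2},
    "Restaurant C": {2014: 1, 2015: 1, 2016: 1},
    "Restaurant D": {2010: 1, 2011: 0},
}


def lost_all_stars(stars_by_year, final_year=2016):
    """True if the restaurant held a star at some point but holds none in final_year."""
    return max(stars_by_year.values()) > 0 and stars_by_year.get(final_year, 0) == 0


def share_that_lost_stars(history, final_year=2016):
    """Return (names that lost all stars, fraction of all restaurants)."""
    lost = [name for name, years in history.items() if lost_all_stars(years, final_year)]
    return lost, len(lost) / len(history)


lost, share = share_that_lost_stars(history)
print(lost, share)  # ['Restaurant A', 'Restaurant D'] 0.5
```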

There have been some surprises here too: the 2016 Michelin Guide stripped both Boulevard and La Folie of their stars, even though both had previously been highly regarded. The earliest example of losing Michelin's favor in this dataset occurred in 2011, when classic farm-to-table institution Chez Panisse lost its star after years as a highly sought-after booking. The most dramatic fall from grace, though, belongs to Cyrus, which lost 2 Michelin stars in 2013.

Everyone is a Critic

Another thing that jumps out of this data is the sheer number of reviews these restaurants garner. Despite typically being fairly expensive, they attract a devoted contingent of foodies who also fancy themselves critics. It would be difficult to walk out of French Laundry without spending several hundred dollars, yet over 1,500 excited diners have done just that and then felt inspired to write a detailed review afterward (detailed typically meaning thousands of words, with photos).

Most interesting to me, however, is the average rate of elite reviews for these restaurants. Again, we do not know the baseline rate for non-Michelin restaurants, but elite reviews making up between 14% and 17% of total reviews seems incredibly high to me.
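The elite-review rate is elite reviews divided by total reviews, computed for each restaurant. Here is a minimal sketch, assuming each scraped review has been reduced to a hypothetical `(restaurant, is_elite)` pair:

```python
from collections import Counter


def elite_share_by_restaurant(reviews):
    """reviews: iterable of (restaurant, is_elite) -> {restaurant: elite share in %}."""
    totals, elites = Counter(), Counter()
    for restaurant, is_elite in reviews:
        totals[restaurant] += 1
        if is_elite:
            elites[restaurant] += 1
    return {r: 100 * elites[r] / totals[r] for r in totals}


# Made-up sample: restaurant "A" has 1 elite review out of 4, "B" has 2 out of 5.
sample = [("A", True), ("A", False), ("A", False), ("A", False),
          ("B", True), ("B", True), ("B", False), ("B", False), ("B", False)]
print(elite_share_by_restaurant(sample))  # {'A': 25.0, 'B': 40.0}
```

Averaging these per-restaurant shares within each Michelin star level gives the kind of 14% to 17% figures discussed here.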

The reason I find this statistic so important lies in how Yelp defines its Elite program. While any Yelp user can be nominated for Elite status, it's Yelp's mysterious Elite Council that ultimately decides who receives the title. These users get special badges on their profiles and are invited to private parties, tastings, and other events each year. What criteria does the Elite Council use?

We're not entirely sure (does this sound familiar?), but generally these are users with many friends, check-ins, and, of course, reviews written. If elite users are carefully selected, the quality of their reviews presumably plays a major role, which potentially makes them more like a Michelin reviewer than the average Yelper.

Are Elite Yelpers the Key?

Let's play with the idea that elite reviews carry more weight than normal reviews and test a hypothesis: does elite user behavior foreshadow Michelin's decisions? Michelin publishes its ratings only once per year, but what if, leading up to its October release, we could predict changes in star ratings based on elite Yelper activity? In our seven years of data there are 74 events where a restaurant either gained or lost Michelin stars, so let's examine what happens around these change events versus when no change occurs.
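One way to derive these change events is to compare each restaurant's stars in consecutive guides. The sketch below makes an assumption about the labeling and is not necessarily the exact rule used in this analysis. Transitions where the restaurant was unstarred in both years are skipped. A restaurant missing from a guide is treated as having 0 stars, so a closure would show up as Lost Star(s).

```python
GUIDE_YEARS = list(range(2010, 2017))  # the 2010 through 2016 guides


def classify_changes(stars_by_year, years=GUIDE_YEARS):
    """Return (guide year, label) for each consecutive pair of guides."""
    events = []
    for prev_year, year in zip(years, years[1:]):
        before = stars_by_year.get(prev_year, 0)
        after = stars_by_year.get(year, 0)
        if before == 0 and after == 0:
            continue  # unstarred in both guides: not a comparable event
        if after > before:
            label = "Gained Star(s)"
        elif after < before:
            label = "Lost Star(s)"
        else:
            label = "No Change"
        events.append((year, label))
    return events


# Hypothetical history: starred in 2012, promoted in 2013, dropped in 2015.
print(classify_changes({2012: 1, 2013: 2, 2014: 2, 2015: 0}))
# [(2012, 'Gained Star(s)'), (2013, 'Gained Star(s)'), (2014, 'No Change'), (2015, 'Lost Star(s)')]
```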

Here we can see some interesting behavior: in the year prior to the Michelin Guide release, growth in elite reviews differs dramatically by outcome. Restaurants that would go on to lose their Michelin stars actually saw a decrease in elite reviews leading up to October, those that maintained their stars saw a modest increase of 32%, and those that would go on to gain one or more stars saw an incredible 132% growth in elite reviews on average.
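The post doesn't spell out exactly how the growth window was defined. The sketch below assumes one plausible definition: elite reviews in the 12 months before the guide's release compared with the 12 months before that. The October 1 release date and the `(review_date, is_elite)` tuple layout are hypothetical placeholders.

```python
from datetime import date


def elite_review_growth(reviews, release):
    """Percent change in elite reviews: the 12 months before `release`
    versus the 12 months before that. `reviews` holds (review_date, is_elite).
    (date.replace works for an October release; a Feb 29 date would need care.)"""
    one_year_before = release.replace(year=release.year - 1)
    two_years_before = release.replace(year=release.year - 2)
    recent = sum(1 for d, elite in reviews if elite and one_year_before <= d < release)
    prior = sum(1 for d, elite in reviews if elite and two_years_before <= d < one_year_before)
    if prior == 0:
        return None  # no baseline elite reviews, so growth is undefined
    return 100 * (recent - prior) / prior


reviews = [
    (date(2014, 11, 5), True), (date(2015, 3, 1), True), (date(2015, 6, 1), False),
    (date(2015, 12, 1), True), (date(2016, 2, 1), True), (date(2016, 5, 1), True),
    (date(2016, 9, 30), True), (date(2016, 10, 2), True),  # last one is after release
]
print(elite_review_growth(reviews, release=date(2016, 10, 1)))  # 100.0
```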

Prediction

T-Test to Show Statistical Significance

Before using the % change in elite reviews as a predictive measure, let's make sure the difference is statistically significant given the sample size and variance. To do this I ran a two-sample t-test between the No Change and Gained Star(s) events. I did not test against the Lost Star(s) events because that sample was much smaller. As the image below shows, the difference in means between these samples is statistically significant at the 0.05 level, with a p-value of ~0.006.
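The post does not say which variant of the two-sample t-test was used. R's `t.test` defaults to Welch's test (unequal variances), so the sketch below uses Welch's version via SciPy's `ttest_ind` with `equal_var=False`, alongside the same statistic computed by hand. The sample values are made up for illustration and are not the real event data.

```python
from math import sqrt
from statistics import mean, variance

from scipy import stats

# Hypothetical % changes in elite reviews per event (illustrative, not real data).
no_change = [10, 25, 40, 30, 35, 20, 45, 50, 15, 30]
gained = [120, 150, 90, 200, 110, 130]


def welch_t(a, b):
    """Welch's t statistic: difference in means over the unpooled standard error."""
    return (mean(a) - mean(b)) / sqrt(variance(a) / len(a) + variance(b) / len(b))


result = stats.ttest_ind(no_change, gained, equal_var=False)
print(round(welch_t(no_change, gained), 3), round(result.statistic, 3))
print(result.pvalue < 0.05)  # True for these made-up samples
```

Computing the statistic by hand makes the test's ingredients visible: the gap in means relative to how noisy each group is. That noise is why a large average difference can still fail to be significant when a group has few events.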

Sampling Potential "Up and Comers"

So, under the assumption that the % change in elite reviews is a statistically significant predictor of a restaurant gaining a Michelin star, I sampled additional Yelp reviews from 38 restaurants without a Michelin star whose profile is similar to our existing Bay Area Michelin set. These restaurants generally combine a high aggregate Yelp rating, a large number of reviews, and a $$$ or $$$$ price level.
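The candidate screen can be expressed as a simple filter. The thresholds below (a 4.0 minimum rating and 500 minimum reviews) and the `Restaurant` record are hypothetical, since the post describes the profile only qualitatively:

```python
from dataclasses import dataclass


@dataclass
class Restaurant:
    name: str
    yelp_rating: float
    review_count: int
    price: str          # Yelp price level, e.g. "$$$"
    ever_starred: bool  # True if it appears in the Michelin set


def has_similar_profile(r, min_rating=4.0, min_reviews=500):
    """Never starred, highly rated, heavily reviewed, and priced at $$$ or $$$$.

    The default thresholds are illustrative, not the cut-offs used in the post.
    """
    return (
        not r.ever_starred
        and r.yelp_rating >= min_rating
        and r.review_count >= min_reviews
        and r.price in ("$$$", "$$$$")
    )


candidates = [
    Restaurant("Candidate 1", 4.5, 1200, "$$$", False),
    Restaurant("Candidate 2", 4.5, 300, "$$$$", False),  # too few reviews
    Restaurant("Candidate 3", 4.0, 900, "$$", False),    # price level too low
    Restaurant("Starred 1", 4.5, 2000, "$$$$", True),    # already in the Michelin set
]
print([r.name for r in candidates if has_similar_profile(r)])  # ['Candidate 1']
```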

How do They Stack Up?

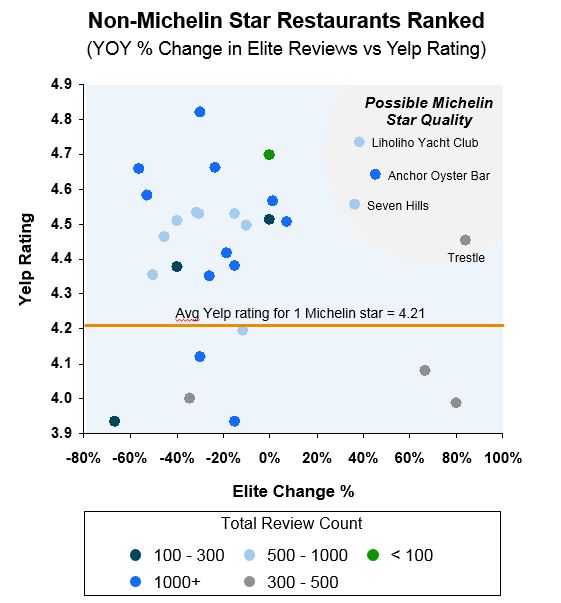

While we have established the importance of % change in elite reviews, I also wanted to understand each contender's aggregate Yelp rating as an additional data point to help inform prediction. Below I have plotted the % change in elite reviews on the x-axis with the aggregate Yelp rating on the y-axis:

In the upper right section of the plot (gray section), I've highlighted four restaurants that appear to be strong contenders for a Michelin star, based on a surge in elite reviews this year and Yelp ratings above the average for a 1-star Michelin restaurant.
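Selecting the upper-right quadrant is a two-condition filter: elite-review growth above some cut-off and a Yelp rating above the 1-star average. The post doesn't state the growth cut-off behind the gray section, so `min_growth_pct` and the candidate rows below are hypothetical:

```python
from statistics import mean


def strong_contenders(candidates, one_star_ratings, min_growth_pct):
    """Return names in the upper-right quadrant, strongest elite-review growth first.

    candidates: list of (name, elite_growth_pct, yelp_rating)
    one_star_ratings: Yelp ratings of current 1-star restaurants (the benchmark)
    """
    benchmark = mean(one_star_ratings)
    picks = [c for c in candidates if c[1] >= min_growth_pct and c[2] > benchmark]
    picks.sort(key=lambda c: c[1], reverse=True)
    return [name for name, _growth, _rating in picks]


candidates = [
    ("Candidate 1", 150.0, 4.5),
    ("Candidate 2", 220.0, 4.5),
    ("Candidate 3", 300.0, 4.0),  # big surge, but rated below the benchmark
    ("Candidate 4", 20.0, 4.5),   # well rated, but little elite growth
]
print(strong_contenders(candidates, [4.0, 4.5, 4.0, 4.5], min_growth_pct=100.0))
# ['Candidate 2', 'Candidate 1']
```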

After examining these restaurants in more detail, I predict Trestle is most likely to earn a Michelin star within the next two years, followed by Seven Hills and Liholiho Yacht Club. While Anchor Oyster Bar could earn a star as well, I personally feel its style is a bit too casual to make the Michelin cut. I believe Trestle has the highest likelihood of earning a star mainly because of its very strong ramp in elite reviews.

While I haven't dined at Trestle yet, after examining photos I believe it has the "feel" of a Michelin restaurant. It is also located in the Jackson Square neighborhood, home to a number of trendy restaurants, including two-Michelin-star Quince.

What are People Saying?

Just for fun, let's examine the word clouds of the most common two-word pairs found in a typical Yelp review of a restaurant that just earned a Michelin star vs our top contender Trestle:

While I'm not sure this tells us anything too actionable, it is interesting that "tasting menu" is the number one two-word pair cited by Yelp reviewers of recently starred Michelin restaurants. Come to think of it, my most memorable Michelin experiences have been chef's tasting menus, so I suppose this makes sense. I also find it fascinating that "foie gras" ranks so highly in San Francisco reviews, given that its sale was banned in California from 2012 until a federal court ruling overturned the ban in early 2015.
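Word clouds like these start from bigram counts. Here is a minimal sketch, assuming simple lowercase tokenization and a small hand-picked stopword list (a real analysis would likely use a fuller list and dedicated text-mining tooling):

```python
import re
from collections import Counter

# A small, hand-picked stopword list for illustration only.
STOPWORDS = {"the", "a", "an", "and", "of", "was", "is", "we", "i", "to", "it",
             "with", "for", "our", "this", "that", "were", "had"}


def top_bigrams(review_texts, n=10):
    """Count adjacent word pairs across reviews after dropping stopwords."""
    counts = Counter()
    for text in review_texts:
        words = [w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOPWORDS]
        counts.update(zip(words, words[1:]))
    return [(" ".join(pair), count) for pair, count in counts.most_common(n)]


reviews = [
    "The tasting menu was superb.",
    "We loved the tasting menu and the foie gras.",
    "Foie gras, then the tasting menu!",
]
print(top_bigrams(reviews, n=2))  # [('tasting menu', 3), ('foie gras', 2)]
```

Dropping stopwords before pairing means some bigrams bridge a removed word (here "then tasting" skips over "the"). This is a common simplification that rarely affects the top pairs.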

Trestle's most frequently used two-word pair is "prix fixe", which is logical as they offer an affordable coursed menu for a set price. I'm not sure whether Michelin reviewers would view this as closer to a tasting menu or as a less expensive alternative, so it's not clear to me whether this would work in Trestle's favor or not.

Regardless of whether these predictions hold, this analysis has given me a new set of ways to visualize where to eat next in the Bay Area, particularly the next time I'm hungry for "ice cream" and "pork belly" in the same sitting.

Technical Details

First, I loaded a file containing the starting-point Yelp URL for each Michelin restaurant:
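Here is a minimal sketch of this step using Python's built-in `csv` module, assuming the input file has hypothetical `name` and `url` columns (the author's full code is on GitHub):

```python
import csv
import io


def load_start_urls(csv_file):
    """Read (name, url) pairs from an open CSV file with `name` and `url` columns,
    skipping rows whose URL is blank."""
    reader = csv.DictReader(csv_file)
    return [(row["name"].strip(), row["url"].strip()) for row in reader if row["url"].strip()]


# Usage with a real file (the filename is hypothetical):
# with open("michelin_restaurants.csv", newline="", encoding="utf-8") as f:
#     start_urls = load_start_urls(f)

sample = io.StringIO("name,url\nExample Bistro,https://www.yelp.com/biz/example-bistro\n")
print(load_start_urls(sample))
```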

Next, I defined a function using BeautifulSoup to parse the restaurant-specific HTML tags for the relevant fields:
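Here is a minimal sketch of a restaurant-level parser with BeautifulSoup. Every CSS selector is a hypothetical placeholder, since Yelp's markup has changed many times since 2016, so inspect the live page and substitute the real tags. The BeautifulSoup calls themselves (`select_one`, `get_text`, `get`) are the library's actual API.

```python
import re

from bs4 import BeautifulSoup

# Hypothetical placeholder selectors; replace with what the live page uses.
NAME_SELECTOR = "h1.biz-page-title"
RATING_SELECTOR = 'meta[itemprop="ratingValue"]'
REVIEW_COUNT_SELECTOR = 'span[itemprop="reviewCount"]'
PRICE_SELECTOR = "span.price-range"


def text_or_none(soup, selector):
    """Stripped text of the first tag matching `selector`, or None if absent."""
    tag = soup.select_one(selector)
    return tag.get_text(strip=True) if tag is not None else None


def parse_restaurant(html, url):
    """Extract one restaurant-level record from a Yelp business page."""
    soup = BeautifulSoup(html, "html.parser")
    rating_tag = soup.select_one(RATING_SELECTOR)
    rating_value = rating_tag.get("content") if rating_tag is not None else None
    count_text = text_or_none(soup, REVIEW_COUNT_SELECTOR) or ""
    count_match = re.search(r"\d[\d,]*", count_text)  # "1,234 reviews" -> "1,234"
    return {
        "url": url,
        "name": text_or_none(soup, NAME_SELECTOR),
        "rating": float(rating_value) if rating_value else None,
        "review_count": int(count_match.group().replace(",", "")) if count_match else None,
        "price": text_or_none(soup, PRICE_SELECTOR),
    }
```

Returning `None` for missing fields keeps one malformed page from crashing a long scraping run; the gaps can be handled later in the analysis.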

I then created a similar function to capture review-specific data:
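A review-level parser follows the same pattern, looping over every review block on a page. Again, the selectors and the field list are hypothetical placeholders chosen for illustration:

```python
from bs4 import BeautifulSoup

# Hypothetical selectors for one review block and its fields.
REVIEW_SELECTOR = "div.review"
USER_SELECTOR = "a.user-display-name"
ELITE_SELECTOR = "li.is-elite"
RATING_SELECTOR = 'meta[itemprop="ratingValue"]'
DATE_SELECTOR = 'meta[itemprop="datePublished"]'
TEXT_SELECTOR = 'p[itemprop="description"]'


def parse_reviews(html, restaurant_url):
    """Return one dict per review found on a single page of reviews."""
    soup = BeautifulSoup(html, "html.parser")
    rows = []
    for block in soup.select(REVIEW_SELECTOR):
        user = block.select_one(USER_SELECTOR)
        rating = block.select_one(RATING_SELECTOR)
        published = block.select_one(DATE_SELECTOR)
        body = block.select_one(TEXT_SELECTOR)
        rating_value = rating.get("content") if rating is not None else None
        rows.append({
            "restaurant_url": restaurant_url,
            "user": user.get_text(strip=True) if user is not None else None,
            # An elite badge inside the block marks the reviewer as Yelp Elite.
            "is_elite": block.select_one(ELITE_SELECTOR) is not None,
            "rating": float(rating_value) if rating_value else None,
            "date": published.get("content") if published is not None else None,
            "text": body.get_text(" ", strip=True) if body is not None else None,
        })
    return rows
```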

Both functions are wrapped in a loop function that works through each restaurant's URL (and its related sub-pages):
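Here is a sketch of the driving loop. To keep it self-contained, the two parsers and the page fetcher are passed in as arguments. The `?start=<offset>` pagination with 20 reviews per page is an assumption about how Yelp's review sub-pages were addressed, so verify it before use.

```python
import time
from urllib.request import Request, urlopen


def fetch_html(url):
    """Download a page with urllib (a production scraper might add retries)."""
    request = Request(url, headers={"User-Agent": "Mozilla/5.0"})
    with urlopen(request, timeout=30) as response:
        return response.read().decode("utf-8", errors="replace")


def scrape_restaurant(url, parse_restaurant, parse_reviews, fetch=fetch_html,
                      page_size=20, max_pages=500, delay=1.0):
    """Parse the business page, then page through reviews until a page is empty.

    Assumes review sub-pages are addressed as `?start=<offset>` in steps of
    `page_size` (an assumption to check against the live site).
    """
    info = parse_restaurant(fetch(url), url)
    reviews = []
    for page in range(max_pages):
        batch = parse_reviews(fetch(f"{url}?start={page * page_size}"), url)
        if not batch:
            break  # ran past the last page of reviews
        reviews.extend(batch)
        time.sleep(delay)  # throttle requests between pages
    return info, reviews


def scrape_all(start_urls, parse_restaurant, parse_reviews, **kwargs):
    """Loop over (name, url) pairs and collect restaurant rows and review rows."""
    restaurant_rows, review_rows = [], []
    for _name, url in start_urls:
        info, reviews = scrape_restaurant(url, parse_restaurant, parse_reviews, **kwargs)
        restaurant_rows.append(info)
        review_rows.extend(reviews)
    return restaurant_rows, review_rows
```

Injecting `fetch` also makes the loop testable offline: a dictionary of canned pages can stand in for the network.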

Finally, the results are written to CSV files for analysis in R:
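Here is a minimal sketch of the output step with `csv.DictWriter`; the filename and column list in the usage comment are hypothetical. The `csv` module automatically quotes fields that contain commas or line breaks, which matters for free-text review bodies.

```python
import csv
import io


def write_rows(rows, csv_file, fieldnames):
    """Write dict rows to an open CSV file, header first; extra keys are ignored."""
    writer = csv.DictWriter(csv_file, fieldnames=fieldnames, extrasaction="ignore")
    writer.writeheader()
    writer.writerows(rows)


# Usage with a real file (filename hypothetical); R can then load it with
# read.csv("yelp_reviews.csv").
# with open("yelp_reviews.csv", "w", newline="", encoding="utf-8") as f:
#     write_rows(review_rows, f, ["restaurant_url", "user", "is_elite", "rating", "date", "text"])

buffer = io.StringIO()
write_rows([{"name": "Example Bistro", "rating": 4.5, "extra": "dropped"}], buffer, ["name", "rating"])
print(buffer.getvalue().splitlines())  # ['name,rating', 'Example Bistro,4.5']
```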