Predicting Stock Movement of Hang Seng's Components

Introduction

This post describes my capstone project for the NYC Data Science Academy Data Science Bootcamp. It continues the first project I delivered for the same bootcamp.

What is a stock market index?

A stock market index (or just “index”) is a number that measures the relative value of a group of stocks. As the stocks in this group change value, the index changes too. If an index goes up by 1%, the total value of the stocks that make up the index has gone up by 1%.

For example, suppose that an index, say ABC, is made up of four companies. At the end of yesterday’s trading day, the ABC index stood at 4,123 points. Today, two of the companies went up in value, one dropped in price and the fourth stayed the same. The total value of those stocks went up by 2%, so the ABC index is now 2% higher, at about 4,205 points.
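The arithmetic in this example can be checked with a few lines of Python. This is only a sketch: the function name is made up for illustration, and real indices such as the Hang Seng apply weighting and adjustment rules that are not modelled here.

```python
def updated_index_level(previous_level: float, pct_change: float) -> float:
    """Return the index level after the total value changes by pct_change percent."""
    return previous_level * (1 + pct_change / 100)


# ABC index from the example: 4,123 points, total value up by 2%.
new_level = updated_index_level(4123, 2.0)
print(round(new_level))  # 4123 * 1.02 = 4205.46, so about 4,205 points
```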

Predicting stock indices, trends, and markets is a challenging task for researchers, because the movement of a stock index results from many factors, such as a company's growth and profit-making capacity, local economic, social and political conditions, and the global economic situation.

The index chosen for analysis in this project is Hong Kong's Hang Seng index. I discuss 30 major components of the Hang Seng index below. The detailed list of the components is given in the Source Data section.

Literature Review

Investors perform two kinds of analysis before investing in a stock. The first is fundamental analysis, in which investors look at the intrinsic value of stocks, the performance of the industry and the economy, the political climate and so on, to decide whether or not to invest. Technical analysis, on the other hand, evaluates stocks by studying statistics generated by market activity, such as past prices and volumes. Technical analysts do not attempt to measure a security’s intrinsic value; instead they use stock charts to identify patterns and trends that may suggest how a stock will behave in the future. I have performed technical analysis in this project.

Although there is much debate about which kind of analysis is better, there is a large body of literature on technical analysis. I cite here only the few works that were relevant to my analysis.

Regarding the Hang Seng index, Ou and Wang (2009) used ten data mining techniques to predict its price movement. The approaches include Linear Discriminant Analysis (LDA), Quadratic Discriminant Analysis (QDA), K-nearest neighbor classification (KNN), Naive Bayes based on kernel estimation, Logistic Regression (LR), tree-based classification, neural networks, Bayesian classification with Gaussian processes, Support Vector Machine (SVM) and Least Squares Support Vector Machine (LS-SVM). They achieved an accuracy of about 84% with their SVM model, using raw Open, High, Low, Close (OHLC) values as predictors. The only drawback in their analysis is that the experimental period they chose, 2001-2006, was quite smooth, without any shocks or notable crashes in the stock market.

The next work I would like to mention is that of Patel et al. They proposed using deterministic input variables with Artificial Neural Network (ANN), SVM, Random Forest Classifier (RFC), and Naive Bayes models to predict the trend of Indian stock market indices. They achieved an accuracy of about 90% with RFC. The highlight of their work is that they used ten technical indicators, such as moving averages and the rate of change of OHLC values, instead of raw prices as predictors. The period used for their experiments, 2003 - 2012, was also a demanding one, with ups and downs in the market.

The other works I referred to are those of Inthachot et al. (2016) and Magnus Olden's thesis (2016). The former applied ANN and genetic algorithms to Thailand's SET50 index for 2009-2014, with eleven technical indicators as predictors, and achieved an accuracy of around 62% - 68%. The latter experimented with many machine learning, deep learning and ensemble techniques on Norway's index (Oslo stock exchange) for 2011-2015 and reported an accuracy of around 60% - 63%.

To my knowledge, only a few works achieved high accuracy, and they did so by considering subsets of the major components. When all the major components of a particular index were analyzed, researchers achieved an overall accuracy of around 60% - 68%, irrespective of the methods and indices. Notably, an SVM model performed best in one case, an ANN in another, and ensemble techniques in still others. I take this to mean that every model has something to convey about stock market movement. I therefore put forth the following research questions for this project.

Research Questions:

- How should each model be trained to get the best accuracy out of it, particularly for the Hang Seng’s components?

- Which stocks influence the movement of a particular component of the Hang Seng index?

The problem is modeled as a two-class classification (prediction) problem, that is, whether or not the price of a stock will rise, for each of the 30 components of the Hang Seng index. The classifiers I try to get the best out of in this project are Logistic Regression (LR), Support Vector Classifier (SVC), Random Forest Classifier (RFC) and Gradient Boosting Classifier (GBC).

Source Data

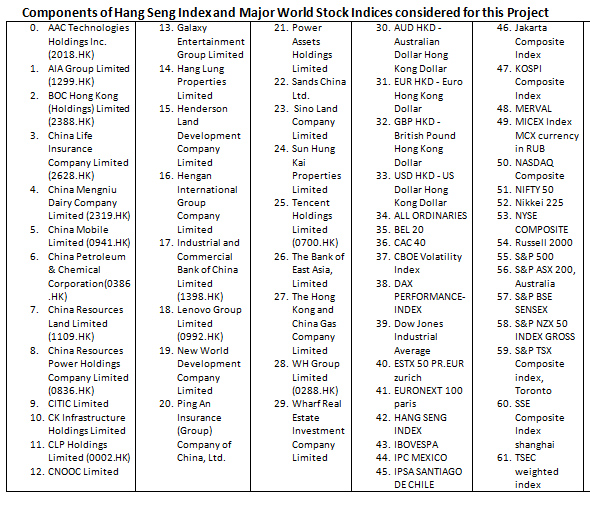

I sourced the data from Yahoo Finance and Investing.com. I collected five years of historical data, from 11th March 2013 to 8th March 2018, for the Hang Seng index, some of the world's major stock indices, 30 major components of the Hang Seng index, and the exchange rates between the Hong Kong Dollar (HKD) and four other major currencies. The entire list is given in the following table. The entries are indexed from 0 and are referred to by that index in later sections.

The data was of very good quality, so I had to do almost no cleaning. I renamed the columns and then merged all the data into one table. The merge produced some missing values, because a local holiday in one country may be a working day in Hong Kong. Those values were filled with the previous day's values. I then rechecked the data for missing values and filled any that remained with zero.
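The merge-and-fill step can be sketched with pandas as follows. This is not the project's actual code: the column names and values are hypothetical toy data, chosen only to show a holiday gap being filled with the previous day's value and any remaining gap being filled with zero.

```python
import pandas as pd

# Hypothetical toy data: Hong Kong trades on all three days,
# while the foreign index has a holiday on the second day.
hk = pd.DataFrame(
    {"Close_HSI": [100.0, 101.0, 102.0]},
    index=pd.to_datetime(["2018-03-05", "2018-03-06", "2018-03-07"]),
)
foreign = pd.DataFrame(
    {"Close_Foreign": [50.0, 52.0]},
    index=pd.to_datetime(["2018-03-05", "2018-03-07"]),
)

# Left join on the Hong Kong calendar; the holiday shows up as NaN.
merged = hk.join(foreign, how="left")

# Carry the previous day's value forward, then zero-fill anything
# still missing (for example, a gap on the very first row).
merged = merged.ffill().fillna(0)
print(merged)
```

Forward filling keeps the last known price on a holiday, which avoids leaking a later day's price into an earlier row.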

Performance Evaluation Metric

Many metrics can be used to evaluate a classifier. The most widely used metric in stock market prediction is accuracy.

Accuracy is the percentage of correctly classified samples. I used the mean accuracy over all the components as the first metric.

I used the ROC AUC score (the area under the Receiver Operating Characteristic curve, computed from prediction scores) as a second metric, because accuracy can sometimes be misleading. The ROC curve plots the true positive rate against the false positive rate, and the ROC AUC is the area under that curve. I used the mean ROC AUC score over all the components as the second metric for this project.
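As an illustration of the two metrics, the sketch below computes accuracy and ROC AUC per stock with scikit-learn and then averages them. The labels, predictions and scores are made-up toy values and the stock names are hypothetical; only `accuracy_score` and `roc_auc_score` are real scikit-learn functions.

```python
import numpy as np
from sklearn.metrics import accuracy_score, roc_auc_score

# Hypothetical results for two stocks: true labels, predicted labels,
# and predicted scores (for example, probabilities of the positive class).
results = {
    "stock_a": ([1, 0, 1, 1, 0, 0], [1, 0, 0, 1, 0, 1], [0.9, 0.2, 0.4, 0.8, 0.3, 0.6]),
    "stock_b": ([0, 1, 0, 1], [0, 1, 1, 1], [0.1, 0.7, 0.6, 0.9]),
}

accuracies = {}
aucs = {}
for name, (y_true, y_pred, y_score) in results.items():
    accuracies[name] = accuracy_score(y_true, y_pred)  # share of correct labels
    aucs[name] = roc_auc_score(y_true, y_score)        # uses scores, not labels

mean_accuracy = float(np.mean(list(accuracies.values())))
mean_auc = float(np.mean(list(aucs.values())))
print(f"mean accuracy: {mean_accuracy:.3f}, mean ROC AUC: {mean_auc:.3f}")
```

Note that ROC AUC is computed from the scores rather than the hard predictions, which is why it can tell apart two models that have the same accuracy.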

Sample ROC

Exploratory Data Analysis

Clustering Techniques:

I used clustering techniques to look for patterns in the stock movement. Specifically, I tried to categorize the stocks as bullish, bearish and normal. Bullish stocks show an upward trend, bearish stocks show a downward trend, and normal stocks show a mixture of both. The number of clusters was therefore fixed at 3. I fit the model with the KMeans clustering technique. The resulting clusters, however, were not cleanly separated around 0.

Visualizing Cosine Distance Values as a Heat Map

Ideally there would be one cluster containing only positive values, one with only negative values, and a third with a mixture of both. That did not happen here: there were a few negative values in the positive cluster and vice versa. This suggests that the upward and downward trends in the data are not separated at 0 (that is, by the sign of the values).
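A minimal sketch of this kind of check with scikit-learn's `KMeans` is shown below. The post does not say exactly which values were clustered, so this sketch assumes daily percentage returns and uses synthetic data; it then reports how many positive values end up in each cluster.

```python
import numpy as np
from sklearn.cluster import KMeans

rng = np.random.default_rng(0)

# Synthetic daily returns (%) for 9 hypothetical stocks over 250 days:
# three drift upward, three drift downward, three have no drift.
drifts = np.repeat([0.3, -0.3, 0.0], 3)
returns = rng.normal(loc=drifts[:, None], scale=1.0, size=(9, 250))

# Three clusters, matching the bullish / bearish / normal idea.
kmeans = KMeans(n_clusters=3, n_init=10, random_state=0)
labels = kmeans.fit_predict(returns)

# Share of positive daily returns inside each cluster. A cluster made
# only of positive values would show 1.0, only negative values 0.0.
for cluster in range(3):
    members = returns[labels == cluster]
    positive_share = float((members > 0).mean()) if members.size else float("nan")
    print(cluster, members.shape[0], round(positive_share, 2))
```

Even with a clear drift, daily values of both signs appear in every cluster, which is the same kind of mixing described above.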

Experimental Setup I

First, I used the raw OHLC prices as predictors. I ran a preliminary test to set the time span for the prediction, that is, how many days of data are used to predict the next day: one day of data, five days of data, and so on. The selection list is as follows:

Predictors:

OHLC values and Volume traded for

- each of the 30 components

- Hang Seng Index

- Major world stock indices

Span:

- 1 day - Number of Predictors: 290

- 5 days - Number of Predictors: 1040

Target variable is defined as follows:

Target =[1 if next day’s close > current day’s close, else -1]

Then, the train and the test sets are selected using scikit-learn's train_test_split.
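The target definition and the random split can be sketched as follows. The closing prices are toy values and the single `Close` feature is a stand-in for the full predictor table; `train_test_split` is the scikit-learn function named above, which shuffles rows by default.

```python
import numpy as np
import pandas as pd
from sklearn.model_selection import train_test_split

# Toy closing prices for one hypothetical stock.
close = pd.Series([10.0, 10.5, 10.2, 10.2, 10.8, 10.6], name="Close")

# Target: +1 if the next day's close is above the current day's close, else -1.
next_close = close.shift(-1)
target = pd.Series(np.where(next_close > close, 1, -1), index=close.index, name="Target")

# The last day has no next-day close, so it cannot be labelled; drop it.
features = close.to_frame().iloc[:-1]
target = target.iloc[:-1]

# Random split: rows are shuffled, so dates are not kept in order.
X_train, X_test, y_train, y_test = train_test_split(
    features, target, test_size=0.2, random_state=0
)
print(target.tolist())
```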

Experimental Setup II

In this setup, instead of raw OHLC values, I used one of the technical indicators mentioned in the literature as predictors, namely the percentage change of the OHLC values. The volume variable is kept as it is.

I changed the definition of the target variable to:

Target = [1 if next day's % Change in Close price > 0.5%, else 0]

But the threshold of 0.5% cannot be taken for granted. So I ran one more preliminary test to decide whether 0.5%, 0.1% or 0% is the most suitable threshold.
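The thresholded target can be sketched with pandas as below. The helper name `make_threshold_target` and the prices are hypothetical; the point is only to show how the same price series yields different labels under the 0.5%, 0.1% and 0% thresholds.

```python
import pandas as pd


def make_threshold_target(close: pd.Series, threshold_pct: float) -> pd.Series:
    """Label a day 1 if the NEXT day's % change in close exceeds the threshold."""
    pct_change = close.pct_change() * 100   # each day's % change vs. the day before
    next_day_change = pct_change.shift(-1)  # align tomorrow's change with today
    target = (next_day_change > threshold_pct).astype(int)
    return target.iloc[:-1]                 # the last day has no next day


# Toy closing prices: next-day moves of roughly +0.30%, +0.70%, +0.05%, -1.04%.
close = pd.Series([100.0, 100.3, 101.0, 101.05, 100.0])

targets = {t: make_threshold_target(close, t).tolist() for t in (0.5, 0.1, 0.0)}
for threshold, labels in targets.items():
    print(threshold, labels)
```

A higher threshold labels only larger moves as positive, so it changes the class balance as well as the meaning of a "rise".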

The selection list is as follows:

Predictors:

Volume traded and percentage change of OHLC values, for

- each of the 30 components

- Hang Seng Index

- Major indices

- Currency

Span:

- 1 day (previous) - Number of Predictors: 310

- 5 days - Number of Predictors: 1060

- 10 days - Number of Predictors: 1810
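One common way to build predictors over a span of previous days is to shift the table and place the lagged copies side by side. The sketch below illustrates that idea only; the helper name and columns are hypothetical, and it does not attempt to reproduce the predictor counts listed above, since the exact construction used in the project is not described here.

```python
import pandas as pd


def add_lagged_features(frame: pd.DataFrame, span: int) -> pd.DataFrame:
    """Return a table whose row t holds the values of days t-1 ... t-span."""
    lagged = [
        frame.shift(lag).add_suffix(f"_lag{lag}")
        for lag in range(1, span + 1)
    ]
    # The first `span` rows lack a full history and are dropped.
    return pd.concat(lagged, axis=1).dropna()


# Toy table with two hypothetical predictors over six days.
frame = pd.DataFrame(
    {
        "Close_pct": [0.1, -0.2, 0.4, 0.0, 0.3, -0.1],
        "Volume": [1000, 1200, 900, 1100, 1300, 1250],
    }
)

lagged_frame = add_lagged_features(frame, span=3)
print(lagged_frame.shape)  # 3 usable rows, 2 columns x 3 lags = 6 predictors
```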

Then, the train and test sets are selected using scikit-learn's train_test_split.

Experimental Setup III

This setup is the same as Setup II, except that instead of using train_test_split, I pre-defined the train and test sets as:

Train set data: 11th March 2013 – 26th May 2016 (first three years)

Test set data: 27th May 2016 – 7th March 2018 (last two years).

The reason for this pre-selection is that train_test_split, used in the earlier two setups, shuffles the rows, so the 'Date' variable is effectively treated as discrete: a model can be trained on days that come after the days it is tested on. In a real-world scenario this is not suitable for time series analysis. With the new definition of the train and test sets, the chronological order is preserved and the 'Date' variable is treated as continuous.

Another point worth mentioning is my choice of May 2016 as the start of the test set. This was a crucial period for the stock market because of the U.S. presidential election, and it is well known that the market showed a downward trend during November - December 2016 (shown in my first project). In effect, I train the model on a smooth period and test it on a harder one, on the reasoning that a model that performs well in a harder period should perform well in any period.
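A date-based split of this kind can be sketched with pandas label slicing. The table below is a placeholder built on a generic business-day calendar, not the real Hong Kong trading calendar, and the `feature` column is hypothetical.

```python
import pandas as pd

# Placeholder table indexed by business days over the study period.
dates = pd.bdate_range("2013-03-11", "2018-03-07")
data = pd.DataFrame({"feature": range(len(dates))}, index=dates)

# Chronological split: everything up to 26th May 2016 is used for training,
# everything from 27th May 2016 onward for testing. No shuffling takes place.
train = data.loc[:"2016-05-26"]
test = data.loc["2016-05-27":]

print(train.index.max().date(), test.index.min().date())
```

Because every training date precedes every test date, the model never sees information from the period it is evaluated on.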

Experimental Results:

The following tables show some of the experimental results for the three setups described above.

I would like to add that in Setup I, I also constructed a simple ANN model, which yielded an accuracy of around 62% with a 1 day span. Because of time constraints, I could not continue with it in the other setups.

Among the three setups, it is clear from the table that Setup II shows the highest accuracy. But, as discussed above, shuffling the dates is not realistic for a real-world scenario, so I ruled Setup II out. Of the remaining two setups, all models except LR showed higher accuracy in Setup III. Since the literature also notes that raw prices may introduce bias and variance, I selected Setup III for the further preliminary tests.

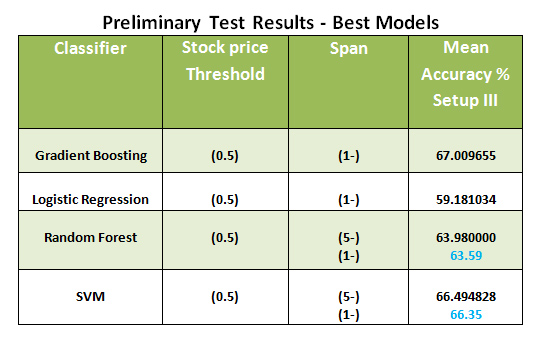

After rigorous preliminary tests, I chose the best setup. The results are given in the following table.

Two models performed best with a 1 day span and the other two with a 5 days span. But, as the results for the 1 day and 5 days spans were more or less similar in both cases, I decided to continue with a 1 day span and a 0.5% threshold for all four models. These results also explain why the stocks could not be categorized around 0 in the clustering step above: 0.5%, not 0, is the best threshold. With the setup selected, I started trying to improve the accuracy.

Techniques to improve Accuracy

Changing train and test Sets:

My first technique for improving accuracy was to change the train and test sets defined in Setup III. I changed the train set to the first four years (11th March 2013 - 6th March 2017) and the test set to the last year (7th March 2017 - 8th March 2018). In effect, I am now training the model on a harder period and testing it on a smooth one. The results are compared in the following table.

As expected, all models showed an improvement in the accuracy with the changed train and test sets.

Feature Engineering:

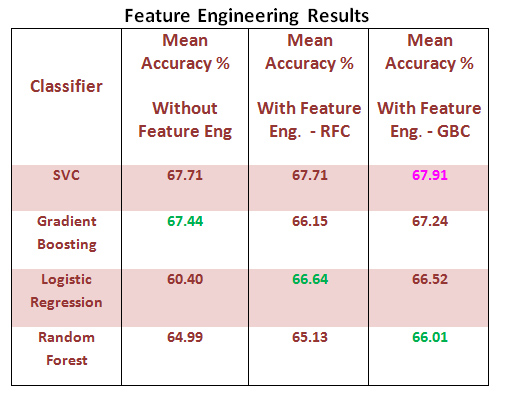

My second technique for improving accuracy was feature engineering. First, I wrote code to extract the 20 best features using RFC and re-trained all four classifiers on the reduced feature set. Then I extracted the 20 best features using GBC and again re-trained all four classifiers on the reduced feature set. Before discussing the results, it is interesting to note that, for a particular stock, other stocks' prices are more important than its own OHLC values. For example, for Stock_0 (refer to the first table), the 'Volume' variable of Stock 58 is a very important feature. The top 20 features of Stock_0 (AAC Technologies Holdings Inc.) are shown in the following figure.

Top 20 Features of AAC Technologies Holdings Inc. with GBC

This means that AAC Technologies Holdings Inc. is heavily influenced by New Zealand's index, by Henderson Land Development Company Limited, and so on. So I would suggest that a trader keep a close watch not only on a stock's own OHLC values but also on the stocks that influence it.
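Selecting the top features by importance and re-training on the reduced set can be sketched as follows. This is an illustration on synthetic data, not the project's code: the feature names, the way the target is generated and the hyper-parameters are all assumptions, while `feature_importances_` is the standard attribute exposed by scikit-learn's tree ensembles.

```python
import numpy as np
import pandas as pd
from sklearn.ensemble import RandomForestClassifier
from sklearn.svm import SVC

rng = np.random.default_rng(0)

# Synthetic predictors; only feature_3 and feature_7 actually drive the target.
X = pd.DataFrame(
    rng.normal(size=(300, 50)),
    columns=[f"feature_{i}" for i in range(50)],
)
y = (X["feature_3"] + 0.5 * X["feature_7"] > 0).astype(int)

# Rank features by random forest importance and keep the 20 best.
forest = RandomForestClassifier(n_estimators=200, random_state=0).fit(X, y)
importances = pd.Series(forest.feature_importances_, index=X.columns)
top_features = importances.nlargest(20).index.tolist()

# Re-train another classifier on the reduced feature set.
reduced_model = SVC().fit(X[top_features], y)
print(top_features[:5])
```

Swapping `RandomForestClassifier` for `GradientBoostingClassifier` gives the GBC-based ranking, since both expose `feature_importances_`.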

The results are compared in the following table:

SVC reached the highest accuracy of all the models, around 68%, so I set it aside and continued with the other three models to get the best out of them.

In the case of GBC, I varied the number of extracted features over 10, 20, 50, 100, 150, 200 and 300, and re-trained GBC with each of these feature counts. Each stock turned out to have its own specific requirement to reach its best performance, so I would suggest choosing the number of features per stock for GBC. Please refer to GitHub for the results table. That left only two models, LR and RFC, to get the best accuracy from.

Hyper-parameter Tuning using Spark:

My final attempt to improve accuracy was to tune the hyper-parameters. I chose Spark for memory efficiency and worked with it on Databricks.

It has been mentioned in the literature that Spark may not produce good results with small data sets. So I first tested the models (LR and RFC) without hyper-parameter tuning and compared their performance with that of the Python versions. The results are compared in the table below:

In the case of LR, the Python and PySpark models performed more or less the same. In the case of RFC, however, there was a huge difference. Rather than trust PySpark for the RFC model, I left that model out. This is not a great loss, because GBC, a closely related tree-based ensemble, has already been covered.

Then I moved on to hyper-parameter tuning with the PySpark LR model. After tuning, the mean ROC-AUC score was 0.61, a good improvement over the 0.56 obtained in Python before hyper-parameter tuning. The PySpark notebook I wrote can be found on Databricks. The LR model responded well to both feature engineering and hyper-parameter tuning.
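For readers without a Spark cluster, the same idea (a grid search over the regularization strength of LR, scored by ROC AUC) can be sketched in scikit-learn. Note that this swaps PySpark for scikit-learn and is not the notebook mentioned above; the data is synthetic, and the grid values and the use of `TimeSeriesSplit` for order-preserving folds are my assumptions.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, TimeSeriesSplit
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)

# Synthetic data: the first column carries a noisy signal about the target.
X = rng.normal(size=(400, 10))
y = (X[:, 0] + rng.normal(scale=1.0, size=400) > 0).astype(int)

# Scale the predictors, then fit a logistic regression.
pipeline = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))

# Hypothetical grid over the inverse regularization strength C.
param_grid = {"logisticregression__C": [0.01, 0.1, 1.0, 10.0]}

# TimeSeriesSplit keeps each validation fold after its training fold.
search = GridSearchCV(
    pipeline,
    param_grid,
    scoring="roc_auc",
    cv=TimeSeriesSplit(n_splits=4),
)
search.fit(X, y)
print(search.best_params_, round(search.best_score_, 3))
```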

Conclusion

The results might indicate that

- Different machine learning algorithms will perform differently on different stock markets.

- A model that is trained on a smooth period and predicts well in a period of shocks may be considered for future predictions.

- The movement of a stock depends not only on its own OHLC values but also on other stocks.

- A trader would do well to also watch the previous day's prices of the stocks that influence the stock at hand. This is the take-home message for a trader.

Future Directions

I plan to

- Use other technical indicators mentioned in the literature and analyze the possibility of improving the accuracy;

- Extend this study to other major world stock indices.

This project was a great way to employ various supervised machine learning techniques, experiment with a clustering technique, construct a simple ANN and apply Spark. It was a great experience to work on it. Thanks to NYC Data Science Academy for giving me a wonderful opportunity to work with scikit-learn and Databricks.

Code and data can be found on GitHub.