Data Scraping For Malicious Intent

The skills the author demoed here can be learned by taking the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

"Things that go bump in the night." The phrase is a well-known sentiment found in movies, poems and books. It's the word "night", however, that gives the saying its power. Since we can't see, anything could be there. We are...uncertain. And if data science is fundamentally about anything, it is about removing uncertainty. That leads us to an area of the modern world that is especially cloaked in darkness: malicious cyber activity.

One aspect of this large domain concerns malicious URLs (Uniform Resource Locators) and the websites they point to. Determining whether a specific URL carries malicious intent when it has not already been identified (and therefore most likely placed on a blacklist) is a very hard problem that has been worked on for many years and continues to be. Numerous vendors have sprung up in the space, selling data and/or analysis in an attempt to shed some light on it.

Fortunately for this project, I found at least one security-oriented website that performed basic analysis of a target URL for free and produced data potentially worth scraping.

Data Source

If we look at past and current research, most of it appears to focus on at least two main aspects of determining malicious intent. One is the URL string itself (i.e., its lexical features), and the other is the contents and structure of the website in question.
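This project works with the second aspect, but for contrast, here is a minimal sketch of what lexical features of a URL string might look like. The particular features and their names are illustrative assumptions, not a standard set, and the code uses only Python's standard library.

```python
import ipaddress
from urllib.parse import urlparse


def lexical_features(url: str) -> dict:
    """Compute a few illustrative lexical features from a URL string alone."""
    parsed = urlparse(url)
    host = parsed.hostname or ""
    # A raw IP address in place of a domain name is a commonly cited red flag.
    try:
        ipaddress.ip_address(host)
        host_is_ip = True
    except ValueError:
        host_is_ip = False
    return {
        "url_length": len(url),
        "host_length": len(host),
        "num_dots_in_host": host.count("."),
        "num_hyphens": url.count("-"),
        "num_digits": sum(ch.isdigit() for ch in url),
        "has_at_symbol": "@" in url,
        "host_is_ip": host_is_ip,
        "uses_https": parsed.scheme == "https",
        # Number of non-empty path segments, e.g. "/login/verify.php" -> 2.
        "path_depth": len([seg for seg in parsed.path.split("/") if seg]),
    }
```

For example, `lexical_features("http://192.168.0.1/login/verify.php?id=42")` reports an IP-address host, no HTTPS and a path depth of 2, all without ever loading the page.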

In this exercise, I'm making use of the latter with the help of a website called urlQuery<dot>net. My intention is to see whether the website's analysis generates enough data elements to create a reasonable data set. It is worth noting, however, that this and real commercial strategies face substantial hurdles when trying to determine malicious activity in advance, due to adversarial techniques and the inherently distributed nature of the Internet. Some of these issues are discussed at the end of this post.

Selected URLs to test were scraped from the Moz Top 500 Domains website.

Data Scraping Process

urlQuery<dot>net allows a user to submit a URL of interest for its own backend servers to load and analyze. Note that there are some other interesting options on this page as well. Since a growing portion of malware (if not the majority) probes a user's browser to determine its type, version, plugins, etc., and therefore its vulnerabilities, this page lets the user select a few of these attributes so that the analysis can present itself as a potentially more tantalizing target.

After a URL is submitted, the service collects several metrics and then produces a report page.

From submission to a complete report page, the process goes through two redirects and then renders all the main data with JavaScript. The dynamic nature of this site made Selenium WebDriver perfect for programmatically submitting URLs and fetching reports. Once I had the fully rendered HTML, I used Beautiful Soup to select the elements for my feature set. These included the following 20 items:

| Feature | Type |
|---|---|
| Url | string |
| IP Address | string |
| ASN | string |
| IP Country | string |
| Report Date | string |
| User Agent | string |
| # HTTP Transactions | numeric |
| JS Executed Scripts | numeric |
| JS Executed Writes | numeric |
| JS Executed Evals | numeric |
| urlQuery alerts | binary |
| Snort | binary |
| Suricata | binary |
| Fortinet | binary |
| MDL | binary |
| DNS BH | binary |
| MS DNS | binary |
| OpenPhish | binary |
| PhishTank | binary |
| Spamhaus | binary |
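The submit, wait and parse steps described above might look roughly like the following minimal sketch. It assumes Selenium 4 with Firefox and geckodriver installed; the submit page URL, the form field name `url` and the `table.report` CSS selector are hypothetical placeholders, not urlQuery's actual markup. For the parsing step it swaps Beautiful Soup for Python's built-in `html.parser` so the sketch runs without extra dependencies, and it assumes each report field appears as a two-cell label/value table row.

```python
from html.parser import HTMLParser


def fetch_rendered_report(target_url: str, submit_page: str, timeout: int = 120) -> str:
    """Submit a URL with Selenium and return the fully rendered report HTML."""
    # Imported here so the parser below can be used on saved HTML without Selenium.
    from selenium import webdriver
    from selenium.webdriver.common.by import By
    from selenium.webdriver.support import expected_conditions as EC
    from selenium.webdriver.support.ui import WebDriverWait

    driver = webdriver.Firefox()
    try:
        driver.get(submit_page)
        box = driver.find_element(By.NAME, "url")  # HYPOTHETICAL field name
        box.send_keys(target_url)
        box.submit()
        # Wait through the redirects until the JavaScript-rendered table exists.
        WebDriverWait(driver, timeout).until(
            EC.presence_of_element_located((By.CSS_SELECTOR, "table.report"))  # HYPOTHETICAL
        )
        return driver.page_source
    finally:
        driver.quit()


class ReportTableParser(HTMLParser):
    """Collect two-cell <tr><td>label</td><td>value</td></tr> rows into a dict."""

    def __init__(self):
        super().__init__()
        self.fields = {}
        self._row = []
        self._cell = None  # list of text chunks while inside a cell, else None

    def handle_starttag(self, tag, attrs):
        if tag == "tr":
            self._row = []
        elif tag in ("td", "th"):
            self._cell = []

    def handle_data(self, data):
        if self._cell is not None:
            self._cell.append(data)

    def handle_endtag(self, tag):
        if tag in ("td", "th") and self._cell is not None:
            self._row.append("".join(self._cell).strip())
            self._cell = None
        elif tag == "tr" and len(self._row) == 2:
            label, value = self._row
            self.fields[label] = value


def parse_report(html: str) -> dict:
    """Return a {label: value} dict from the report's label/value rows."""
    parser = ReportTableParser()
    parser.feed(html)
    parser.close()
    return parser.fields
```

Keeping the Selenium imports inside `fetch_rendered_report` means the parsing half can be developed and tested against saved report pages without launching a browser.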

Each binary variable was originally a table of textual assessments from anti-malware companies. These assessments could be fairly substantial and probably merit their own deep inspection. In the interest of time, however, I simply rolled each one up into a binary signal indicating whether anything negative had been seen.
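A minimal sketch of that roll-up, assuming each source's assessments have already been scraped as a list of text entries. The set of phrases treated as "nothing negative found" is a hypothetical assumption; the real report wording may differ.

```python
from typing import Dict, List

# HYPOTHETICAL: phrases assumed to mean "nothing negative was found".
NO_ALERT_PHRASES = {"", "no alerts detected", "n/a"}


def roll_up_assessments(tables: Dict[str, List[str]]) -> Dict[str, int]:
    """Collapse each source's textual assessments into a single 0/1 flag.

    A source is flagged 1 if at least one of its entries is not a known
    "no alert" phrase; an empty list or only "no alert" entries gives 0.
    """
    flags = {}
    for source, entries in tables.items():
        negative = any(entry.strip().lower() not in NO_ALERT_PHRASES for entry in entries)
        flags[source] = int(negative)
    return flags
```

The trade-off is the one the paragraph names: the flag keeps only "was anything reported", discarding what was reported and how severe it was.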

Issues Arise

It quickly became apparent not only that the five hundred domains would be insufficient for training any type of classifier via logistic regression, but also that urlQuery's relatively slow analysis would make scraping take a substantial amount of time.
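Because each report takes a while to produce, a long scrape benefits from being resumable. The sketch below appends each result to a CSV file and skips URLs already recorded there, so an interrupted run can pick up where it left off. `fetch` is a hypothetical callable standing in for the fetch-and-parse step, and the `url` column name and optional delay are assumptions.

```python
import csv
import os
import time
from typing import Callable, Dict, Iterable, Sequence


def scrape_with_checkpoint(
    urls: Iterable[str],
    fetch: Callable[[str], Dict[str, str]],
    out_path: str,
    fieldnames: Sequence[str],
    delay_s: float = 0.0,
) -> int:
    """Fetch each URL not already in out_path and append its row; return rows added."""
    done = set()
    if os.path.exists(out_path):
        with open(out_path, newline="") as f:
            done = {row["url"] for row in csv.DictReader(f)}
    write_header = not os.path.exists(out_path) or os.path.getsize(out_path) == 0

    added = 0
    with open(out_path, "a", newline="") as f:
        writer = csv.DictWriter(f, fieldnames=fieldnames)
        if write_header:
            writer.writeheader()
        for url in urls:
            if url in done:
                continue  # already scraped in an earlier run
            row = fetch(url)
            row["url"] = url
            writer.writerow(row)
            f.flush()  # persist each row immediately in case the run dies
            done.add(url)
            added += 1
            time.sleep(delay_s)  # optional pause between submissions
    return added
```

Rerunning with the same output file simply continues from the first URL that has no saved row.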

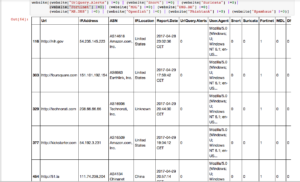

A quick look at the sample data scraped from Moz's 500 most popular domains:

Not many of the domains had been red-flagged as suspicious by commercial anti-malware companies. However, at least three of the sites illustrate one of the major difficulties in this type of endeavor. As you can see, several of these infected sites belong to legitimate companies or government agencies. What is known as a "watering hole" attack routinely compromises legitimate websites to infect a certain class of users, or to bypass blacklists in a broader malware campaign.

Other metrics, like the number of HTTP transactions and JavaScript executions, may also provide a signal of the loaded website's intent.
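One way to turn scraped records into input for logistic regression is sketched below. It is a hypothetical layout, not necessarily how this project's data was prepared: the record keys are illustrative snake_case names, the string fields (URL, IP address, ASN, country, date, user agent) are simply dropped rather than encoded, the numeric HTTP and JavaScript counts become the features, and the label is assumed to be 1 if any reputation source flagged the site.

```python
from typing import Dict, List, Tuple

# HYPOTHETICAL record keys for the numeric report metrics used as features.
NUMERIC_KEYS = ["http_transactions", "js_scripts", "js_writes", "js_evals"]
# HYPOTHETICAL record keys for the rolled-up 0/1 reputation flags.
FLAG_KEYS = [
    "urlquery_alerts", "snort", "suricata", "fortinet", "mdl",
    "dns_bh", "ms_dns", "openphish", "phishtank", "spamhaus",
]


def to_example(record: Dict[str, object]) -> Tuple[List[float], int]:
    """Map one scraped record to (feature vector, label).

    String fields are ignored here; they would need separate encoding.
    ASSUMPTION: the label is 1 if any reputation source raised a flag.
    """
    features = [float(record[key]) for key in NUMERIC_KEYS]
    label = int(any(int(record[key]) for key in FLAG_KEYS))
    return features, label


def build_dataset(records: List[Dict[str, object]]) -> Tuple[List[List[float]], List[int]]:
    """Stack examples into a feature matrix X and a label vector y."""
    X: List[List[float]] = []
    y: List[int] = []
    for record in records:
        features, label = to_example(record)
        X.append(features)
        y.append(label)
    return X, y
```

With only a handful of positively labeled sites among 500, a dataset built this way would be heavily imbalanced, which is part of why the sample is too small for a useful classifier.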

Possibilities and Hurdles

One of the biggest issues in this space is the variability in URL strings themselves and the dynamic nature of web content. By definition, malicious actors do not wish to be identified and therefore constantly change their methods and the sources of their attacks.

The use of legitimate online advertising platforms as a vector of infection is another great example of piggybacking on ordinary Internet transactions. The pace of change means any potential classifier must update itself at near-real-time speeds. This is, at a minimum, a volume and velocity challenge, and it is why deep learning methodologies are the primary trend in this area. AI may be the only effective way to chase down uncertainty when it desperately does not want to be found.
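Deep learning is beyond a short example, but the core idea of a classifier that keeps learning as newly labeled URLs arrive can be sketched with online logistic regression, trained by stochastic gradient descent one example at a time. This is a simpler stand-in, not the deep learning approaches mentioned above; the learning rate and the feature layout are assumptions.

```python
import math
from typing import List


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


class OnlineLogisticRegression:
    """Logistic regression updated incrementally, one labeled example at a time."""

    def __init__(self, n_features: int, learning_rate: float = 0.1):
        self.w = [0.0] * n_features
        self.b = 0.0
        self.lr = learning_rate

    def predict_proba(self, x: List[float]) -> float:
        """Estimated probability that the example is malicious (label 1)."""
        z = self.b + sum(wi * xi for wi, xi in zip(self.w, x))
        return sigmoid(z)

    def update(self, x: List[float], y: int) -> None:
        """One SGD step on log loss; its gradient w.r.t. z is (p - y)."""
        error = self.predict_proba(x) - y
        self.w = [wi - self.lr * error * xi for wi, xi in zip(self.w, x)]
        self.b -= self.lr * error
```

Each call to `update` costs time proportional to the number of features, so the model can absorb new verdicts as they stream in instead of being retrained from scratch.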