Jigsaw's Text Classification Challenge - A Kaggle Competition

Introduction

This post covers the third of the four projects required by the NYC Data Science Academy Data Science Bootcamp program.

It is a Machine Learning project and a Kaggle competition.

Overview

Jigsaw, formerly Google Ideas, is a technology incubator created by Google, and now operated as a subsidiary of Alphabet Inc. Based in New York City, Jigsaw is dedicated to understanding global challenges and applying technological solutions.

As Kaggle puts it, "The Conversation AI team, a research initiative founded by Jigsaw and Google (both a part of Alphabet) are working on tools to help improve online conversation. Discussing things we care about can be difficult. The threat of abuse and harassment online means that many people stop expressing themselves and give up on seeking different opinions. Platforms struggle to effectively facilitate conversations, leading many communities to limit or completely shut down user comments."

Jigsaw and Google began researching this topic, with a special focus on negative online behaviors such as toxic comments (i.e., comments that are rude, disrespectful or otherwise likely to make someone leave a discussion).

Data Description

The competition data set is available on Kaggle. It provides a large number of Wikipedia comments that have been labeled by human raters for toxic behavior. The problem has only one predictor variable, 'comment_text', which is to be labeled or classified with respect to six target variables.

The target variables are the following types of toxicity:

- toxic

- severe toxic

- obscene

- threat

- insult

- identity hate

The training set consists of 159,571 labeled comments (observations) and the test set consists of 153,164 comments to be labeled.

The goal of this competition is to create a model which predicts a probability of each type of toxicity for each comment.

Literature Review

So far, Jigsaw and Google have built a range of publicly available models served through the Perspective API, including toxicity.

But the current models still make errors, and they don’t allow users to select which types of toxicity they’re interested in finding (e.g. some platforms may be fine with profanity, but not with other types of toxic content).

Through this competition, they have proposed a challenge to build a multi-headed (multi-labeled and multi-class) model that is capable of detecting different types of toxicity, as mentioned above.

Evaluation Metric

Due to changes in the competition data set, the evaluation metric of the competition was updated on Jan 30, 2018.

Submissions are evaluated on the mean column-wise ROC AUC score (the Area computed Under the Receiver Operating Characteristic Curve from prediction scores). In other words, the score is the average of the individual AUCs of each predicted column.

The Receiver Operating Characteristic (ROC) curve is a tool for evaluating classifier output quality; the area under it (AUC) is the metric.

ROC curves typically feature true positive rate on the Y axis, and false positive rate on the X axis. This means that the top left corner of the plot is the “ideal” point - a false positive rate of zero, and a true positive rate of one. This is not very realistic, but it does mean that a larger area under the curve (AUC) is usually better.

The “steepness” of ROC curves is also important, since it is ideal to maximize the true positive rate while minimizing the false positive rate. More details can be found at Wikipedia.
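The mean column-wise ROC AUC described above can be sketched in a few lines. This is a minimal illustration on made-up toy data, assuming scikit-learn's `roc_auc_score`; the helper name `mean_columnwise_auc` is hypothetical and not part of the competition code.

```python
import numpy as np
from sklearn.metrics import roc_auc_score


def mean_columnwise_auc(y_true, y_score):
    """Average the per-column ROC AUC over all label columns."""
    y_true = np.asarray(y_true)
    y_score = np.asarray(y_score)
    aucs = [
        roc_auc_score(y_true[:, j], y_score[:, j])
        for j in range(y_true.shape[1])
    ]
    return float(np.mean(aucs))


# Toy data: 4 comments, 2 label columns (values are invented for illustration).
y_true = np.array([[0, 1], [1, 0], [0, 0], [1, 1]])
y_score = np.array([[0.1, 0.8], [0.9, 0.3], [0.2, 0.85], [0.7, 0.9]])

# Column 0 is ranked perfectly (AUC 1.0); column 1 has one mis-ordered pair (AUC 0.75).
score = mean_columnwise_auc(y_true, y_score)
print(score)
```

Because AUC depends only on how the scores rank the comments, submitting probabilities rather than hard 0/1 labels is what makes this metric informative.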

My Research Question:

What is the potential difference in toxicity (or the amount of conversation heat) between the classes?

Basic Exploratory Data Analysis

The following figure gives information about how texts in the train set are classified or distributed under each class.

Distribution of Classes

From the above figure, it can be observed that very few texts were labeled under the severe toxic, identity-hate and threat classes.
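A class distribution like this can be computed with pandas. The sketch below uses a tiny made-up data frame rather than the real train set, and the label column names (`severe_toxic`, `identity_hate`, and so on) are assumed spellings of the six target variables.

```python
import pandas as pd

# Assumed column names for the six target variables.
label_cols = ["toxic", "severe_toxic", "obscene", "threat", "insult", "identity_hate"]

# Tiny invented stand-in for the train set.
train = pd.DataFrame(
    {
        "comment_text": ["c1", "c2", "c3", "c4", "c5"],
        "toxic": [1, 1, 0, 1, 0],
        "severe_toxic": [0, 1, 0, 0, 0],
        "obscene": [1, 1, 0, 0, 0],
        "threat": [0, 0, 0, 1, 0],
        "insult": [1, 1, 0, 0, 0],
        "identity_hate": [0, 0, 0, 0, 0],
    }
)

# Number of comments labeled under each class.
class_counts = train[label_cols].sum()

# Comments with no label at all, and comments with more than one label.
labels_per_comment = train[label_cols].sum(axis=1)
unlabeled = int((labels_per_comment == 0).sum())
multi_label = int((labels_per_comment > 1).sum())

print(class_counts)
print(unlabeled, multi_label)
```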

My Kaggle Submissions

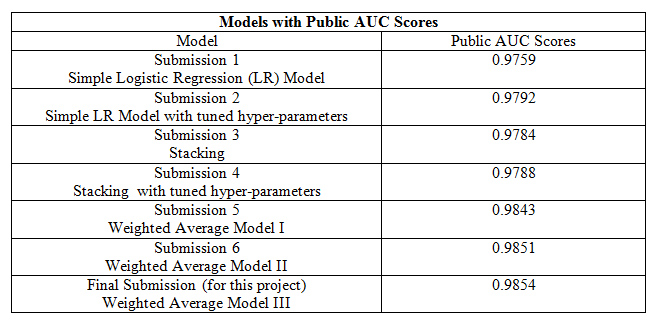

I discuss the table content in detail as follows.

Feature Engineering, Model Selection, and Tuning

scikit-learn's estimators work with numerical data only. To convert the text data into numerical form, I used a tf-idf vectorizer, which converts a collection of raw documents to a matrix of tf-idf features. Tf-idf, short for term frequency–inverse document frequency, is a numerical statistic intended to reflect how important a word is to a document in a collection or corpus. More details can be found at Wikipedia.
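As a small illustration of what the vectorizer produces, the sketch below runs scikit-learn's `TfidfVectorizer` with default settings on three made-up sentences. The sentences and variable names are assumptions for illustration only.

```python
from sklearn.feature_extraction.text import TfidfVectorizer

# Three invented documents standing in for comments.
docs = ["you are great", "you are rude", "thanks for the edit"]

vectorizer = TfidfVectorizer()
X = vectorizer.fit_transform(docs)  # sparse matrix: one row per document

# Mapping from each term to its column index in X.
vocab = vectorizer.vocabulary_

# In the first document, 'great' appears in fewer documents than 'you',
# so it receives the larger tf-idf weight.
first_row = X[0].toarray().ravel()
weight_great = first_row[vocab["great"]]
weight_you = first_row[vocab["you"]]
print(X.shape, weight_great, weight_you)
```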

First, I started with a One-vs-Rest classifier. I used neither the provided test set nor feature engineering at that point. Instead, I split the train set itself into a training set (70%) and a test set (30%) using scikit-learn's train_test_split function. Then, I constructed a pipeline with a count vectorizer or a tf-idf vectorizer and a classifier. I trained the model with Logistic Regression (LR), Random Forest (RF), Gradient Boosting (GB) and Support Vector (SVC) classifiers. Among them, the LR model outperformed all the others, but the score was not up to the mark. To improve the score, I switched to a separate binary classification model for each class.
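The first approach can be sketched roughly as follows. This is not the original project code: the comments and labels are invented, only two of the six classes are shown, and Logistic Regression stands in for the several classifiers that were compared.

```python
import numpy as np
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.multiclass import OneVsRestClassifier
from sklearn.pipeline import Pipeline

# Invented comments with two label columns: [toxic, insult].
texts = [
    "you are an idiot", "thanks for the help",
    "what a stupid idiot", "nice edit, thank you",
    "shut up you fool", "please see the talk page",
    "you stupid fool", "great article, well done",
    "this is garbage", "i agree with this change",
]
Y = np.array([
    [1, 1], [0, 0], [1, 1], [0, 0], [1, 1],
    [0, 0], [1, 1], [0, 0], [1, 0], [0, 0],
])

# Hold out 30% of the labeled data for evaluation.
X_train, X_test, Y_train, Y_test = train_test_split(
    texts, Y, test_size=0.3, random_state=0
)

# Vectorizer and classifier chained in one pipeline.
model = Pipeline([
    ("tfidf", TfidfVectorizer()),
    ("clf", OneVsRestClassifier(LogisticRegression(max_iter=1000))),
])
model.fit(X_train, Y_train)

# One probability column per label.
proba = model.predict_proba(X_test)
print(proba.shape)
```

Swapping the `clf` step for another estimator is enough to compare classifiers under the same split.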

In this case, I combined the train and the test sets given in the data. I fit the tf-idf vectorizer on the predictor variable of the entire data set (train and test sets) in two different ways: first with the analyzer parameter set to 'word' (tokenizes into words) and then with it set to 'char' (tokenizes into characters). The 'char' analyzer was important because the test set contained comments in many foreign languages, which were difficult to handle with the 'word' analyzer alone.

I then set the parameter, ngram range. An n-gram is a contiguous sequence of n items from a given sample of text or speech. The items can be phonemes, syllables, letters, words or base pairs according to the application. The n-grams typically are collected from a text or speech corpus. More details can be found at Wikipedia.
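To make the effect of the n-gram range concrete, the sketch below lists the features scikit-learn extracts from a short made-up string for a word analyzer and a character analyzer. `CountVectorizer` is used here only because it shares the `analyzer` and `ngram_range` parameters with the tf-idf vectorizer.

```python
from sklearn.feature_extraction.text import CountVectorizer

# Word unigrams and bigrams from a three-word text.
word_vec = CountVectorizer(analyzer="word", ngram_range=(1, 2)).fit(["do not edit"])
word_features = sorted(word_vec.vocabulary_)
print(word_features)  # ['do', 'do not', 'edit', 'not', 'not edit']

# Character bigrams from a single word.
char_vec = CountVectorizer(analyzer="char", ngram_range=(2, 2)).fit(["edit"])
char_features = sorted(char_vec.vocabulary_)
print(char_features)  # ['di', 'ed', 'it']
```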

Setting ngram_range to (1,1) (one word or character at a time) and min_df (minimum document frequency) to 0.0001 resulted in 62,311 word features and 2,500 character features. I stacked (combined) the word and the character features and used the tf-idf vectorizer to transform the text data into two sparse matrices, one for the train set and one for the test set. Then, I fit the train set with LR, SVC, Multinomial Naive Bayes (MNB), RF and GB classifiers. In this case too, the LR model outperformed the others. So, I predicted the test set with the LR model and made my initial Kaggle submission with the predicted probabilities. The public leaderboard score was 0.9759 for this submission. The corresponding ROC curve is shown below.

ROC Curves for Various Classes
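The word-plus-character feature pipeline described above might look roughly like the sketch below. The texts and labels are invented, only two classes are shown, and the variable names are hypothetical. The `ngram_range` and `min_df` values follow the text, and `scipy.sparse.hstack` is one way to combine the two feature matrices.

```python
import numpy as np
from scipy.sparse import hstack
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.linear_model import LogisticRegression

# Invented stand-ins for the train and test comments.
train_text = [
    "you are an idiot", "thanks for the help", "i will kill you",
    "nice edit, thank you", "shut up you fool", "please see the talk page",
]
test_text = ["what an idiot", "merci pour votre aide"]
y_train = {
    "toxic": np.array([1, 0, 1, 0, 1, 0]),
    "threat": np.array([0, 0, 1, 0, 0, 0]),
}

# Fit both vectorizers on train and test text together.
all_text = train_text + test_text
word_vec = TfidfVectorizer(analyzer="word", ngram_range=(1, 1), min_df=0.0001)
char_vec = TfidfVectorizer(analyzer="char", ngram_range=(1, 1), min_df=0.0001)
word_vec.fit(all_text)
char_vec.fit(all_text)

# Stack word and character features side by side, separately for each set.
X_train = hstack([word_vec.transform(train_text), char_vec.transform(train_text)]).tocsr()
X_test = hstack([word_vec.transform(test_text), char_vec.transform(test_text)]).tocsr()

# One binary Logistic Regression per class; keep the positive-class probability.
preds = {}
for label, y in y_train.items():
    clf = LogisticRegression(max_iter=1000)
    clf.fit(X_train, y)
    preds[label] = clf.predict_proba(X_test)[:, 1]

print(X_train.shape, X_test.shape)
```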

Hyper-parameter Tuning:

The hyper-parameters I decided to tune in the tf-idf vectorizer were ngram_range and min_df, as I felt they played a crucial role in this problem.

Before tuning, I went through the data to understand its patterns. The following patterns could be observed to distinguish between the classes:

Threat: If a text contained words like ‘kill’, ‘shoot’, ‘murder’, or ‘gun’, it was labeled as a threat.

Identity-hate: If a text referred to a community or a religion, with words like ‘Nigerian’, ‘Jews’, ‘Muslim’ or ‘gay’, it was labeled as identity-hate.

Obscene: Texts containing vulgar and offensive words were labeled as obscene.

Severe Toxic: Texts containing offensive and hurtful words were classified as severe toxic.

Insult: This class is interesting. If hurtful words were directed at a person, the text was classified as an insult; that is, texts containing a noun or a pronoun together with vulgar, offensive or hurtful words were labeled as an insult.

Toxic: Everything else, including texts with words like ‘sorry’ or ‘thanks’ and general discussion, fell under the toxic class. This made it difficult to find a particular pattern and hence to classify these texts properly.

Note that because this is a multi-label problem, a text can be labeled under more than one class. If a text contains one of the patterns observed above, it will typically be labeled under the respective class and may also be labeled under other classes; the class labels overlap.

Then I did some pre-processing and feature engineering on both the train and the test sets.

I cleaned the data by removing English stop words and punctuation, using nltk. Instead of selecting them manually, I selected the important words (features) in each class using the tf-idf vectorizer, varying the ngram_range and min_df parameters for each class. The code is written in such a way that the text (in both the train and test sets) retains only the selected important words. It took around four hours to run. I saved the resulting data frame as a CSV file named 'imptext.csv' for use in later steps.
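The selection step is not spelled out in detail, so the following is only one possible reading of it: rank the terms of each class by mean tf-idf weight, keep the top few, and strip everything else from the text. The helper names `top_terms` and `keep_only` are hypothetical, the example texts are invented, and scikit-learn's built-in English stop-word list is swapped in for nltk's so that the sketch runs without downloading a corpus.

```python
import re

import numpy as np
from sklearn.feature_extraction.text import TfidfVectorizer


def top_terms(texts, k=3):
    """Return the k terms with the highest mean tf-idf weight (assumed criterion)."""
    vec = TfidfVectorizer(stop_words="english")
    X = vec.fit_transform(texts)
    mean_scores = np.asarray(X.mean(axis=0)).ravel()
    # Terms listed in column-index order so they line up with mean_scores.
    terms = sorted(vec.vocabulary_, key=vec.vocabulary_.get)
    order = np.argsort(mean_scores)[::-1][:k]
    return {terms[i] for i in order}


def keep_only(text, vocabulary):
    """Lower-case the text, drop punctuation, and keep only words in vocabulary."""
    tokens = re.findall(r"\b\w\w+\b", text.lower())
    return " ".join(t for t in tokens if t in vocabulary)


# Invented comments for a single class.
threat_texts = ["i will kill you", "i will shoot you"]
important = top_terms(threat_texts, k=3)

reduced = keep_only("You are a stupid idiot!", {"stupid", "idiot"})
print(important, reduced)
```

On the full data set this would be repeated per class, with the union of the selected words used to filter every comment.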

Then, with the reduced (cleaned) text, I fit the tf-idf vectorizer again on the entire data set with the 'word' analyzer and the 'char' analyzer, as discussed above. This process yielded 25,265 word features and 28,000 character features, which were combined and transformed into two sparse matrices, one for the train set and one for the test set. With that pre-processing done, I fit the train set with LR and MNB classifiers. The LR model outperformed MNB this time as well, so I predicted the test set with it. There was an improvement in the score, but it was still not up to the mark. I saved the predicted probabilities to 'impwords.csv' for later use.

While I was looking for ways to improve my score, I was inspired by Mr. Vitaly Kuznetsov's talk at the NIPS 2014 conference. He discussed tricks for winning a Kaggle competition using ensemble techniques, which is one of the main areas of his research. A complete ensembling guide can be found at Kaggle.

The guide discusses how to create ensembles from submission files, with or without re-training the model. There are many ways of ensembling. I used the following three for this problem and decided in each case whether or not to re-train the model.

Ensembling - Voting Classifier - Soft:

This method computes the weighted average of the predicted probabilities of the selected models. It can be done with or without re-training the model; I chose not to re-train for the given problem. I implemented it by choosing one or two of my best submissions and a third one from Kaggle, and then assigning either equal weights to the selected models or a slightly higher weight to the best model. This weighting is meant to avoid overfitting.

Ensembling - Voting Classifier - Hard:

This model predicts the labels based on majority rule. More details about usage of this model can be found at scikit-learn documentation.

In this case, I re-trained the model by fitting a hard voting classifier on top of the LR and MNB models. This yielded the accuracy of three models (LR, MNB and the ensemble), which paved the way for further analysis of each class.

Accuracy - Hard Voting Classifier

From the above figure, it can be observed that the toxic and insult classes scored lower than the other classes. This may be because no clear pattern could be observed in those classes.
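A hard voting ensemble of LR and MNB for a single class can be sketched with scikit-learn's `VotingClassifier`. The data below is invented, and the accuracy is computed on the training rows purely to keep the example short; in practice it should be measured on held-out data.

```python
import numpy as np
from sklearn.ensemble import VotingClassifier
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score
from sklearn.naive_bayes import MultinomialNB

# Invented comments and binary labels for one class (e.g. 'insult').
texts = [
    "you are an idiot", "thanks for the help", "what a stupid idiot",
    "nice edit, thank you", "shut up you fool", "please see the talk page",
    "you stupid fool", "great article, well done",
]
y = np.array([1, 0, 1, 0, 1, 0, 1, 0])

X = TfidfVectorizer().fit_transform(texts)

models = {
    "LR": LogisticRegression(max_iter=1000),
    "MNB": MultinomialNB(),
}
# Hard voting: each model casts one vote and the majority label wins.
# With only two voters, scikit-learn breaks ties in favor of the lower class label.
ensemble = VotingClassifier(estimators=list(models.items()), voting="hard")

accuracy = {}
for name, model in list(models.items()) + [("Ensemble", ensemble)]:
    model.fit(X, y)
    accuracy[name] = accuracy_score(y, model.predict(X))

print(accuracy)
```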

Stacking (re-training the model):

This is also a type of ensembling, but here the model has to be re-trained.

I imported a submission file from Kaggle with 0.9854 score, which contains the predicted probabilities only. I then computed the labels by setting the threshold to 0.5. I re-trained the model with the entire data set and predicted the test set.

I then tuned the thresholds. I searched over various thresholds and chose the best one for each class, such that the difference in predicted probabilities between my model and the imported model was minimized. This resulted in different thresholds for different classes: 0.8 for the toxic class, 0.4 for severe toxic, 0.5 for obscene, and so on.
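The exact procedure is not given, so the sketch below is an interpretation of the steps above for a single class: turn the imported probabilities into labels at a candidate threshold, re-train on the train rows plus the pseudo-labeled test rows, and keep the threshold whose re-trained model stays closest to the imported probabilities. The synthetic features, the helper name `mean_gap_for_threshold` and the candidate list are all assumptions.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression


def mean_gap_for_threshold(X_train, y_train, X_test, reference_proba, threshold):
    """Re-train with pseudo-labeled test rows; return the mean gap to the reference."""
    pseudo = (reference_proba >= threshold).astype(int)
    X_all = np.vstack([X_train, X_test])
    y_all = np.concatenate([y_train, pseudo])
    clf = LogisticRegression(max_iter=1000).fit(X_all, y_all)
    own_proba = clf.predict_proba(X_test)[:, 1]
    return float(np.mean(np.abs(own_proba - reference_proba)))


# Synthetic stand-ins for the feature matrices and the imported probabilities.
rng = np.random.default_rng(0)
X_train = rng.normal(size=(40, 5))
y_train = (X_train[:, 0] > 0).astype(int)
X_test = rng.normal(size=(20, 5))
reference_proba = 1.0 / (1.0 + np.exp(-3.0 * X_test[:, 0]))

# Try each candidate threshold and keep the one with the smallest gap.
candidates = [0.2, 0.4, 0.5, 0.6, 0.8]
gaps = {
    t: mean_gap_for_threshold(X_train, y_train, X_test, reference_proba, t)
    for t in candidates
}
best_threshold = min(gaps, key=gaps.get)
print(best_threshold, gaps[best_threshold])
```

Running this once per class would give a separate threshold for each of the six labels.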

The above processes yielded public AUC scores of 0.9792, 0.9784 and 0.9788. Please refer to the table for details.

Kaggle Submission 5 - Weighted Average (without re-training model):

As I mentioned before, I selected two of my best submissions and the third from Kaggle and assigned weights as follows:

- My Submission 4: 0.9788 (with weight 0.3)

- My Submission 2: 0.9792 (with weight 0.3)

- From Kaggle: 0.9854 (with weight 0.4) - the same model which I used for stacking.

It yielded the public AUC score of 0.9843.
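The blending itself is simple arithmetic on the submission files. Here is a minimal sketch with the weights listed above, applied to one made-up row of predicted probabilities for two classes; the function name `weighted_average` and the numbers in the arrays are illustrative only.

```python
import numpy as np


def weighted_average(predictions, weights):
    """Blend several (n_rows, n_classes) probability arrays with the given weights."""
    weights = np.asarray(weights, dtype=float)
    stacked = np.stack(predictions)  # shape: (n_models, n_rows, n_classes)
    return np.tensordot(weights, stacked, axes=1) / weights.sum()


# Invented probabilities for one comment and two classes.
my_submission_4 = np.array([[0.2, 0.9]])
my_submission_2 = np.array([[0.4, 0.7]])
from_kaggle = np.array([[0.6, 0.8]])

blend = weighted_average(
    [my_submission_4, my_submission_2, from_kaggle],
    weights=[0.3, 0.3, 0.4],
)
print(blend)  # approximately [[0.42 0.80]]
```

Dividing by the sum of the weights keeps the result a valid probability even when the weights do not add up to one.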

Final Kaggle Score for this project:

I repeated this process: selecting one of my best models and the one from Kaggle (the same model used above), assigning equal weights (0.5) to each, and computing the weighted average. After five iterations, it resulted in a public AUC score of 0.9854. This submission was my final Kaggle submission for this project. I plan to update my score before the competition deadline.

Conclusion

- Classes ‘Obscene’ and ‘Threat’ are easy to classify.

- Deeper analyses are required for finding patterns in ‘Toxic’ and ‘Insult’ classes.

When I checked the Kaggle discussion board, I understood the following: standard Machine Learning (ML) algorithms yielded a maximum score of 0.9792, regardless of the approach. To improve the score further, one has to employ Deep Learning (DL) techniques. A simple neural network model yielded a score of just 0.977, while extensive DL techniques combined with various ensemble techniques yielded scores above 0.98.

This leads to the following future directions.

Future Directions

I plan to

- analyze the problem by employing DL techniques, modeling it with Recurrent Neural Networks (RNN), Convolutional Neural Networks (CNN), and bi-directional RNNs with Gated Recurrent Units (GRU), together with word embeddings such as FastText (from Facebook) and GloVe (from Stanford), and

- observe the data, find patterns in each class and get in-depth knowledge of the data before training the model, because I believe that only if I understand the data can I train the model well.

This project provided a great way to understand and employ various machine learning models on text data, to understand the system requirements of neural network models, and to learn new techniques like ensembling and stacking, which are some of the tricks for winning a Kaggle competition (as mentioned by Mr. Kuznetsov). It was an astonishing experience to work on this project. Thanks to NYC Data Science Academy for giving me a wonderful opportunity to work with scikit-learn and nltk.

Code and data can be found on GitHub.