Transportunities

Contributed by Jake Lehrhoff. Jake took NYC Data Science Academy 12 week full time Data Science Bootcamp program between Sept 23 to Dec 18, 2015. The post was based on his second class project (due the 4th week of the program).

The Situation

If you ask a room of New Yorkers to raise their hand if they experienced a transportation woe in the last week, you can expect just about every hand to go up, angrily or wearily. And anyone who doesn’t raise their hand is likely to have a quiet moment of joy, recognizing the miracle of seven straight days of unperturbed subway rides and easily accessible taxis.

(Photo by Andrew Burton/Getty Images)

But of course New Yorkers have transportation woes. In just 305 square miles there are 8.5-million of us, and—from someone who commutes to midtown—about as many tourists, give or take. The enormity of NYC’s transportation needs makes it ripe for data science’s helping hand.

Solutions Make for New Problems

The last decade has seen an explosion of transportation opportunities beyond the traditional options of subway, bus, taxi, or our own two feet, most notably, Uber and Citibike. And the success of these newcomers is not just due to their unique offerings or that the transportation space was ripe for disruption: these data-conscious companies look to improve the transportation experience by understanding how we, the customers, engage with their product.

As businesses that provide the same fundamental service, Uber and Citibike face many of the same problems. Bikes have to be placed where riders want to start their trips, just as Uber drivers have to be in the vicinity of their fares. Uber and Citibike only exist as solutions to our transportation problems if they are convenient, efficient, and cost effective. If there are no available bikes at the nearest bank or if there is a 15-minute wait for an Uber, customers may feel better served by more traditional options.

Uber has even greater problems, as drivers aren't employees but "contractors." Uber drivers have the latitude to work when and where they like, completely at their own discretion. So what guarantees that "contractors" decide to work where the demand is?

An Investigation

All it takes to avoid these problems is data and wherewithal. With six months of pickups from April to September, 2014, we can paint a picture of customer engagement and propose best practices for maximizing effectiveness and efficiency.

Changing ridership is a function of more than just time and location. By adding weather data from the National Oceanic and Atmospheric Association, we can answer all sorts of fascinating questions. Do Union Square residents still ride Citibikes when it drizzles? Are fewer Ubers hailed around midtown on warm days? Are late morning commuters more likely to take an Uber if it's pouring? Suddenly, it’s not simply a question of when and where to we ride, but why.

The App

For a 360º view of the data, I’ve developed a Shiny app that offers both basic exploratory data analysis and a tool to visualize Uber and Citibike pickups with high specificity. Open the app and test out the scenarios below.

A look at monthly ridership shows a steady upward trend among Uber customers and a steep increase in the spring before a level summer for Citibike. More data is necessary to confidently predict what these trends mean, whether Uber’s upward trajectory depicts continual market gain and Citibike’s data shows a seasonality of use or not.

The fourth tab, "Heat Map," offers greater control to your investigation of Uber and Citibike ridership.

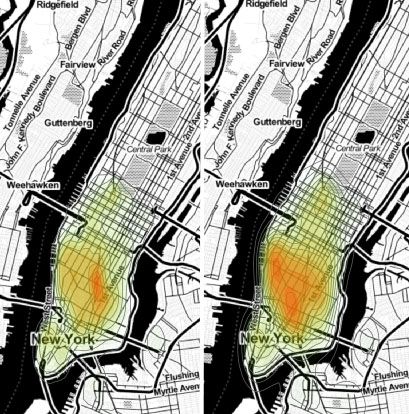

Consider this scenario: You're an Uber driver. It's 6am on a weekday. You're awake because commuters are awake. Also, you left the bedroom window open to enjoy the fresh spring air, but now a tree-full of birds are celebrating life like a symphony of car alarms. But more importantly, commuters. Off to work. Where will you find the most pickups? What part of town do you want to make your way back to after each drop off? What parts are Uber deserts?

Let's take a look. Set the app to "Uber," "May," "6-7am," "Weekdays," "No Rain," and "Any."

Now let's say you let yourself sleep in (you deserve it). By 9am, where has the densest ridership moved? What happens as you keep driving into the afternoon?

We can answer similar questions with the Citibike data. It's still May, an exciting moth for Citibikers because the weather improves with each passing day. Does Citibike need to be more careful with its redistribution effort on warm afternoons? Are different areas of town affected?

Overall, Union Square is a busy area for spring Citibike pickups, but warm days see more riders around Fulton Street and the West Village.

Play around with all of the options to discover what combination of time and weather paints the most unique customer engagement.

Future Directions

Really, this is the tip of the iceberg. Both of these companies face the fundamental problem of migration. If an Uber driver brings a commuter from the Upper East Side to downtown, that is one fewer driver in the UES to pick up the next downtown commuter. Eventually, the system will be overwhelmed and an area may become an Uber "desert." The same is true in a much more startling fashion for Citibike. Late commuters may have to hunt for a bike, or else, rely on alternative transportation. Equally frustrating, what happens when there's nowhere to park your bike? Visualizing pickups alone can't solve these problems.

Additionally, this investigation did not take into account Uber wait time. Identifying times and locations where Uber riders have to wait an unreasonable amount of time for a ride (and thus might choose to grab a cab or hop on the subway) would offer significant value to Uber as a company.

Conclusions

Uber and Citibike have thrived since their launches because they make our lives easier. But there is still room for improvement. Uber's main hiring draw--that contractors can work when and where they like--is admirable, but that doesn't mean that it necessarily serves the customers. The simple action of pinging a driver before a rainstorm, urging them out the door to make some quick cash, may help flood the streets with cars when eager riders are huddled under awnings, hoping against logic and experience that a car will miraculously accept their request.

All transportation options have their downsides. Walking takes too long. Cabs are expensive. The subway is crowded and smelly and breaks down and is filled with angry people. Uber and Citibike aren't perfect either, but they can use data science to improve their product tremendously.

The Code

All of the data and code can be found in my public github repository. Enjoy!

Gathering Data

There are three sources of data: Uber, Citibike, and weather. The Uber data comes courtesy of fivethirtyeight.com's github repository, as they had previously requested proprietary Uber data and was granted six months of pickups. The Citibike data is openly available through their website--they are generously open-source. Weather data was granted by the National Oceanic and Atmospheric Association, amazingly, within 5 hours of the request.

Munging Data

"Clean" data does not mean that it is ready for analysis. R doesn't automatically know that something that looks like a date is a date . Similarly, vast datasets have to be pared down to the relevant data. timeDate and dplyr were invaluable tools in these tasks.

Weather

First, the weather data had to be simplified, as it contained readings from all the weather stations in New York City. For the purpose of these analyses, one would suffice. I chose the Central Park observatory and applied the following filter function:

library(dplyr) library(timeDate) weather <- read.csv('data/weather.csv') weather <- filter(weather, STATION_NAME == "NEW YORK CENTRAL PARK OBS BELVEDERE TOWER NY US")

Now that my thousands of rows were down to a couple hundred, the columns had to be selected. I chose to limit the scope to precipitation, minimum temperature, and maximum temperature. Finally, the date variable can be formatted as actual dates.

weather <- select(weather, DATE, PRCP, TMAX, TMIN) weather$DATE <- as.POSIXct(as.character(weather$DATE), format = "%Y%m%d")

Uber and Citibike

The Uber and Citibike data came in large files, one per month, all munging was done individually to avoid corrupting a large file and losing large swaths of time and effort. Thankfully, the single most important variable--the location of the pickup--required no attention beyond normalizing the column names between data sets. The real munging concerned creating the variables that I was interested in visualizing.

The below function turns the date column into a time object, creates a column specifying if a given pickup occurred on the weekend, creates a time column parsed from the date, and joins the weather table. For flexibility, the function takes time format as an argument so it can be applied to both datasets. Two examples of this function applied are included below.

timefunc <- function(df, column, format){ df$date <- as.POSIXct(column, format = format) df$weekend <- sapply(df$date, isWeekend) df$time <- format(df$date, format="%H:%M") df$DATE <- as.POSIXct(format(df$date, format="%Y-%m-%d")) df <- inner_join(df, weather, by="DATE") return(df) } uber4 <- timefunc(uber4, uber4$Date.Time, "%m/%d/%Y %H:%M:%S") citi4 <- timefunc(citi4, citi4$starttime, "%Y-%m-%d %H:%M:%S")

Global Summaries

For the plots on the third tab of the app, I needed a way to look at global ridership. For this, I created a function called "makeridership." It summarizes monthly data by precipitation and temperature data before being combined with other ridership data. Then the important columns are added: weekday vs. weekend, drizzly days, stormy days, hot days, and month.

makeridership <- function(dataset, ridership){ summarise(group_by(dataset, DATE), count=n(), PRCP=mean(PRCP), TMAX=mean(TMAX), TMIN=mean(TMIN)) %>% mutate(., weekend = sapply(.$DATE, isWeekend)) %>% rbind(ridership, .) } uberridership <- makeridership(uber5, uberridership) # ... citiridership <- makeridership(citi5, citiridership) # ... citiridership <- mutate(citiridership, drizzle = PRCP > 0 & PRCP <100, downpour = PRCP >=100, hot = TMAX>267, ride="Citi") uberridership <- mutate(uberridership, drizzle = PRCP > 0 & PRCP <100, downpour = PRCP >=100, hot = TMAX>267, ride="Uber") ridership1 <- rbind(uberridership, citiridership) ridership1$DATElt <- as.POSIXlt(ridership1$DATE) ridership1$mon <- ridership1$DATElt$mon+1

Finally, with cleaned data, we can summarize the number of rides taken on each type of day. We are ready to graph global data.

ridership1 <- select(ridership1, count, ride, mon) ridership1 <- group_by(ridership1, ride, mon) %>% summarise(., sum(count))

Map Object

The only other work before we move on to the Shiny app is to create the map object that we will lay the heat map over. ggmap's "get_map" function allows specification of any location in the world. For a central view of Manhattan, I've chosen Columbus Circle.

map <- get_map(location = "Columbus Circle", zoom = 12, source = "stamen", scale = c(1200,1200), maptype = "toner") map1 <- ggmap(map, extent="normal", fullpage = TRUE)

Heat Map Function

The heatmap is created by calling "mapfunc" and layering it on the google map. That function lives in another R file called "helpers." It is a stat_density2d plot, using the longitude and latitude from the monthly datasets.

mapfunc <- function(df){ m <- map1 + geom_density2d(data=df, aes(x=Lon, y=Lat), color = "grey40") + stat_density2d(data=df, aes(x=Lon, y=Lat, fill=..level.., alpha=..level..), size = 1, bins = 16, geom='polygon') + scale_fill_gradient(low = "green", high = "red") + scale_alpha(range = c(0.00, 0.25), guide = FALSE) + theme(legend.position = "none", axis.title = element_blank(), text = element_text(size = 12)) print(m) }

Shiny

Shiny apps have two main components, the ui or "user interface" and the server. Think of these pieces as the pretty things you tap on your phone and whatever on earth is happening inside there that makes those pretty things work. Below is the code for a few of the selectors on the "Heat Map" page. These selectors don't actually filter anything. They are merely buttons, like the keys on your keyboard. However, when activated, they talk to other code within the system which executes a command of sorts. For example, in the ui there are "radioButtons" to select Uber or Citibike, a "selectInput" for the month, and a "sliderInput" for the time of day.

UI

radioButtons("transportation", label = h3("Pick your ride"), choices = list("Uber", "Citibike"), selected = "Uber"), selectInput("month", label = h3("Pick a month"), choices = list("April","May","June","July","August","September"), selected = "April"), sliderInput("time", label = h3("Time of Day"), min = 0, max = 24, value = c(0,24)), actionButton("goButton","Map me!")

The Server

Each of those selectors function as filters. First, the transportation button selects the dataset, "ubersample" or "citisample."

ride <- reactive({ switch(input$transportation, "Uber" = ubersample, "Citibike" = citisample) })

Then, our dataset--now called ride()--is pushed through a series of filters. The month filter searches the column in our dataset named "mon" and only selects rows where the value is 4. The time filter has more moving parts, but functions similarly: the "time" column of our dataset is searched and rows are selected where time is greater than or equal to the minimum input time and less than or equal to the maximum input time. Finally, when you click the "goButton," our filtered data is pushed through the plotting function, and the app is populated with your specific map.

ridefilter2 <- reactive({ # Month filter if (input$month == "April") { ridefilter <- ride() %>% filter(., mon == "4") } # ... # Time filter mintime <- input$time[1] maxtime <- input$time[2] ridefilter <- ridefilter %>% filter(., time >= mintime, time <= maxtime) # ... heatmap <- eventReactive(input$goButton, { mapfunc(ridefilter2()) })

So, it's not a single function that puts the heat map on the page, but the interaction between the UI and the server--selecting data sets, filtering rows, and compiling the remaining data into a beautiful image.