Two ways of scraping job listings

Searching for the ideal job can be difficult and tedious, especially when there are many openings and strong competition. One has to balance the quantity and quality of applications, and the last thing we want is to spend time applying for a job that turns out to be not quite what we expected.

As such, it would be a great idea to develop a platform that helps us filter through the vast number of open positions and takes us directly to the ones that best suit us. This project takes care of the first step: gathering data on job openings using Scrapy.

To achieve this, we took two different approaches. The first searches each company separately, while the second uses job search engines. The scope of our results is restricted to Data Science-related positions, although the scripts can easily be extended to include other areas.

First Approach

This approach consists of scraping the careers section of a few companies' websites. Because each company has its own systems and web interfaces, it requires writing a separate script for each company. This translates into a high initial cost, as well as a high maintenance cost, since a company is likely to change its web layout, job listing structure or internal protocols at some point. Also, we can only cover so many companies, so most employers remain out of reach with this approach. On the other hand, scraping each company separately makes it possible to adapt to each one's listing style, yielding cleaner and more structured information.

With the above considerations in mind, this approach only makes sense if applied to the largest employers in our area of interest, Data Science in this case. As a way to find large companies, and since this is a web scraping project after all, we decided to start by scraping the Fortune 500 list of companies. That page shows a list that grows as the user scrolls down, so the initial HTML that Scrapy receives only contains the top 20 companies. To circumvent this limitation, we inspected the AJAX requests the page makes and found a very convenient one that returns not only the visible information (rank, company name and revenue) but essentially all the information available on the Fortune 500 website for each company.
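As a rough illustration of why such an endpoint is convenient, the sketch below turns a JSON response body into flat records. The payload layout and the field names (`items`, `rank`, `title`, `revenue`) are hypothetical placeholders, not the actual Fortune 500 format; the real structure has to be read off the request seen in the browser's network tab.

```python
import json


def parse_company_list(body):
    """Yield one flat record per company from a JSON response body.

    The keys used here ("items", "rank", "title", "revenue") are assumptions
    made for illustration only.
    """
    payload = json.loads(body)
    for entry in payload.get("items", []):
        yield {
            "rank": int(entry["rank"]),
            "name": entry["title"].strip(),
            "revenue": entry.get("revenue"),  # left as-is; may be missing
        }


# Made-up sample payload, standing in for a real response body.
sample = json.dumps(
    {"items": [{"rank": "1", "title": " Example Corp ", "revenue": "123.4"}]}
)
records = list(parse_company_list(sample))
```

Inside a Scrapy callback, the same function could be used as `yield from parse_company_list(response.text)`, with no HTML selectors involved.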

At this point we had gathered a considerable amount of information on the top 500 companies worldwide in terms of revenue, so it was time to look for job listings. Our initial focus was on technology companies.

Apple

View of Apple job listings web interface

Starting with Apple, we find that the page changes dynamically, so, as with the Fortune 500 list, we had to inspect its AJAX requests. These in turn yielded well-structured information, so there was no need to parse HTML.

One of the peculiarities of this case was the use of POST requests, which requires some familiarity with Scrapy's FormRequest.
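A minimal sketch of what such a POST request can look like, not the spider's actual code: the endpoint URL and the form field names (`searchString`, `pageNumber`) are hypothetical, and only `FormRequest` itself comes from Scrapy.

```python
# Hypothetical endpoint; the real one is found by inspecting the AJAX calls.
SEARCH_URL = "https://example.com/jobs/search"


def build_formdata(query, page):
    """Form fields for one page of results (field names are assumptions).

    Values are passed as strings because FormRequest url-encodes them.
    """
    return {"searchString": query, "pageNumber": str(page)}


def search_request(query, page, callback):
    """Build the POST request for one results page."""
    # Imported lazily so build_formdata() can be used without Scrapy installed.
    from scrapy import FormRequest

    # Passing formdata makes FormRequest default to the POST method.
    return FormRequest(
        url=SEARCH_URL,
        formdata=build_formdata(query, page),
        callback=callback,
        meta={"page": page},  # lets the callback know which page it received
    )


formdata = build_formdata("Data Scientist", 2)
```

In a spider, `start_requests` would yield `search_request("Data Scientist", 1, self.parse)`, and the callback could decode a structured response with `json.loads(response.text)`.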

The entire code for the spider can be found here.

Facebook

View of Facebook jobs web interface

Facebook was technically the least challenging of the companies we scraped, but it came with a few challenges of its own. For instance, each job listing can have more than one location. Also, including every US location in the query would have been tedious, so we filtered locations as we parsed instead.
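One way to filter while parsing is sketched below. It assumes, purely for illustration, that each listing carries a `locations` list of strings shaped like "City, ST"; the actual format on the site may differ. Each listing is expanded into one row per US location, and listings with no US location are dropped.

```python
# Two-letter codes for the 50 US states plus DC.
US_STATE_CODES = set(
    "AL AK AZ AR CA CO CT DE FL GA HI ID IL IN IA KS KY LA ME MD "
    "MA MI MN MS MO MT NE NV NH NJ NM NY NC ND OH OK OR PA RI SC "
    "SD TN TX UT VT VA WA WV WI WY DC".split()
)


def us_locations(locations):
    """Keep only locations whose last comma-separated part is a US state code."""
    kept = []
    for location in locations:
        parts = [part.strip() for part in location.split(",")]
        if len(parts) >= 2 and parts[-1] in US_STATE_CODES:
            kept.append(", ".join(parts))
    return kept


def expand_listing(listing):
    """Yield one row per US location of a listing (field names are assumptions)."""
    for location in us_locations(listing["locations"]):
        yield {"id": listing["id"], "title": listing["title"], "location": location}


# Made-up listing with three locations, one of them outside the US.
listing = {
    "id": "a1",
    "title": "Data Scientist",
    "locations": ["Menlo Park, CA", "London, UK", "New York, NY"],
}
rows = list(expand_listing(listing))
```

Emitting one row per location keeps the output tabular, at the cost of repeating the listing id, so any later count of distinct jobs should be done on the id rather than on rows.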

The code for the spider can be found here.

Amazon

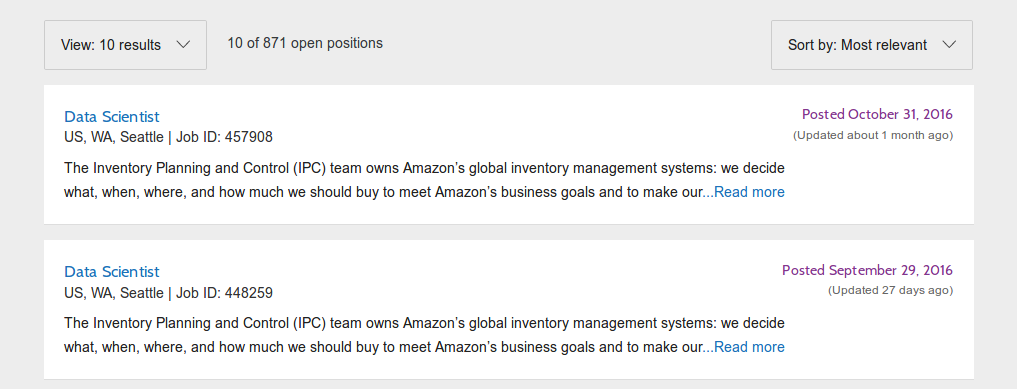

View of Amazon jobs web interface

Amazon was by far the company that yielded the most results for "Data Scientist". As this map shows, it is also the company whose positions are most widely spread around the country.

Much like in the Fortune 500 list, Amazon's list expanded within the same page, this time with the user pressing a button. After listening for asynchronous requests, we found one that returns the list of positions, including all the details for each job listing. This means we could scrape all the listings with a single parser. However, the URL for the asynchronous request was exactly the same as for the HTML page, the only distinction being in the HTTP headers.
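A sketch of how a request can ask for the structured variant of a page purely through its headers. Which headers Amazon's server actually checks is not stated here, so `Accept` and `X-Requested-With` are assumptions based on what AJAX calls commonly send, and the URL is a placeholder.

```python
# Headers commonly sent by AJAX calls; which ones matter is an assumption.
JSON_HEADERS = {
    "Accept": "application/json",
    "X-Requested-With": "XMLHttpRequest",
}


def json_request_kwargs(url, callback=None):
    """Keyword arguments for a request that asks for JSON instead of HTML."""
    return {
        "url": url,
        "headers": dict(JSON_HEADERS),
        "callback": callback,
        # Scrapy's default duplicate filter does not look at headers, so a
        # request to an already-visited URL would otherwise be skipped.
        "dont_filter": True,
    }


def json_request(url, callback):
    """Build the Scrapy request itself."""
    # Imported lazily so the helper above can be used without Scrapy installed.
    from scrapy import Request

    return Request(**json_request_kwargs(url, callback))


kwargs = json_request_kwargs("https://example.com/jobs/search?query=data+scientist")
```

The `dont_filter=True` flag only matters when the spider also fetches the HTML page at the same URL; if it never does, the flag can be dropped.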

The entire spider code can be found here.

Due to time constraints, we were not able to scrape more companies individually before publication.

Pipeline

In order to keep accumulating job listings over time, avoid scraping duplicates, and also as a way to safely interrupt long scraping tasks, we store the listing ids in a separate file and load them as the spider starts, checking for the presence of the id before scraping each job listing.

To make this process easier as the number of spiders increases, we created a spider class that incorporates these features, to be used as a superclass for almost all the spiders we implemented.

Then, we made sure the parsers would access the set self.ids, and also that the write pipeline includes new ids as listings are appended to the .csv files.
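The idea can be sketched as follows. This is an illustration under assumptions, not the project's code: the class names, the `ids_path` and `csv_path` attributes and the CSV columns are all hypothetical, and the mixin is meant to be combined with `scrapy.Spider` in a real spider. The pipeline uses Scrapy's long-standing `open_spider` / `process_item` / `close_spider` hooks, which are plain methods and need no Scrapy import.

```python
import csv
import os


class IdTrackingMixin:
    """Keeps the set of already scraped listing ids in self.ids."""

    ids_path = "ids.txt"  # hypothetical file, one id per line

    def load_ids(self):
        """Load ids saved by earlier runs; call this when the spider starts."""
        self.ids = set()
        if os.path.exists(self.ids_path):
            with open(self.ids_path, encoding="utf-8") as fh:
                self.ids = {line.strip() for line in fh if line.strip()}

    def is_new(self, listing_id):
        """Parsers check this before requesting a listing's details."""
        return str(listing_id) not in self.ids


class CsvWithIdsPipeline:
    """Appends items to a CSV file and records each new id as it is written."""

    fieldnames = ["id", "title", "location"]  # assumed columns

    def open_spider(self, spider):
        path = spider.csv_path
        needs_header = not os.path.exists(path) or os.path.getsize(path) == 0
        self.csv_file = open(path, "a", newline="", encoding="utf-8")
        self.writer = csv.DictWriter(
            self.csv_file, fieldnames=self.fieldnames, extrasaction="ignore"
        )
        if needs_header:
            self.writer.writeheader()
        self.ids_file = open(spider.ids_path, "a", encoding="utf-8")

    def process_item(self, item, spider):
        listing_id = str(item["id"])
        if listing_id in spider.ids:
            return item  # already stored; a real pipeline might raise DropItem
        self.writer.writerow(dict(item))
        self.ids_file.write(listing_id + "\n")
        # Flush both files so an interrupted run loses nothing already scraped.
        self.csv_file.flush()
        self.ids_file.flush()
        spider.ids.add(listing_id)
        return item

    def close_spider(self, spider):
        self.csv_file.close()
        self.ids_file.close()
```

Writing the id only after its row has been written means an interruption can at worst leave a listing to be scraped again, never a recorded id without data.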

For more details, you can check the source code for the pipelines and idspider.

Second Approach

This approach consists of using job search engines, such as Indeed and Dice. Using these platforms allows us to find many more results, for both large and small companies, without having to implement many spiders. On the other hand, the data we gather this way isn't necessarily well structured.

Both platforms provide an API that returns a list of jobs matching a given search query. However, in order to find the details of each listing, we must visit its webpage. Given the one-spider-fits-all approach, the data gathered won't be as consistent and clean as with the first approach.

With Indeed we scraped 5,672 listings, while Dice gave us 63,430. After a brief analysis, we found the most common words present in job descriptions.

Using the query "Data Scientist"

Also using queries "Data Engineer" and "Business Analyst"
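The word counts behind such a summary can be obtained in many ways; the sketch below shows one simple option and is not necessarily the method used for the results above. The stopword list is a small illustrative sample, and the tokenizer is deliberately crude (lowercase letters, plus `+` and `#` so that terms like "c++" survive).

```python
import re
from collections import Counter

# Tiny illustrative stopword list; a real analysis would use a fuller one.
STOPWORDS = {
    "a", "an", "and", "are", "as", "for", "in", "is", "of", "on",
    "or", "our", "the", "to", "we", "with", "you",
}


def top_words(descriptions, n=10):
    """Return the n most frequent non-stopword tokens across all descriptions."""
    counts = Counter()
    for text in descriptions:
        tokens = re.findall(r"[a-z][a-z+#]*", text.lower())
        counts.update(token for token in tokens if token not in STOPWORDS)
    return counts.most_common(n)


# Made-up descriptions, standing in for scraped job text.
descriptions = ["Python and SQL experience", "Experience with Python"]
common = top_words(descriptions, n=3)
```

Counting per description (each word at most once per listing) instead of per occurrence would be a reasonable variation when a few very long descriptions dominate the totals.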

Having such a large number of listings also allowed us to visualize on a map how the positions are spread geographically.

For more details on the spiders, you may check the source code for Indeed and Dice.

Next Steps

Moving forward, there are a few improvements we can make on the web scraping front:

- Expand the number of individual companies covered under the first approach,

- Use more relevant search terms,

- Keep track of whether listings are still active,

- Use Selenium for the hardest interfaces to scrape (such as Google).

In terms of data analysis and job recommendations, we can employ a few NLP tools to match jobs with candidates.

Source code and Visualization tools

In order to visualize the geographical dispersion of job listings, we created an R Shiny application that displays maps for individual company jobs and also for jobs found on the search engines. You can find it here.

All the relevant source code can be found on GitHub. Due to storage concerns, the .csv files in the repository are either outdated or, in the case of Dice, not present at all (Dice's file was too large to be included).