Data Web-scraping reddit: user behavior and top content

Introduction

What is reddit? Some use it as an easily accessible repository for cat pictures. Others use its news aggregation to get a quick overview of current events. Even more use it as a platform to communicate with like-minded individuals over the internet. At its core, reddit is a content-aggregation site. Users act as content-creators, submitting links, content, or meta-content to the site. It has become exceedingly popular in recent years, accruing over 70 billion page-views in 2014.

As of 2017, reddit is the fourth most visited site in the United States, according to statistics website Alexa. It is the seventh most visited site in the entire world. More than 4% of adults in the United States use reddit, of which 67% are male. Most of these users fall into a younger demographic -- think ages 18 to 35.

When we look at reddit, we are looking at one of the largest internet populations in the entire world. Furthermore, we are looking at a site dominated by the most valuable consumer demographic: male, ages 18 to 35. To any prospective advertiser, reddit is a veritable gold-mine of information; it is imperative to understand more about what drives reddit users and content.

Project Goals and Vision

I came into this project with two broad questions:

What patterns can we identify in user behavior and reddit's structure?

and,

From a marketing perspective, what is the best way to advertise on reddit?

My intuition was that the most successful advertising campaigns on reddit would consist of content that is most similar to the content that is already successful on reddit. Thus an analysis of user behavior was critical: what characteristics separate top posts from all the others? Can we identify certain attributes or strategies that maximize the effectiveness of submitted content?

That is not to say that reddit is a monolith which can be simply characterized. Reddit is a vast, sprawling community with myriad sub-communities, known as 'subreddits', all with different cultures, standards, and demographics. Stopping short of creating individual strategies for each separate subreddit, my goal was then to find out if we could identify communities that consisted of multiple subreddits. If successful, we would essentially have identified marketing buckets which could be used in a more granular advertising approach.

The Data

Faced with a one-week time constraint for gathering data, I chose not to build a scraper that would actively gather new post data from reddit, and instead built one that gathers static historical data; the latter approach gathers far more data within the time limit. I then planned to scrape the top posts from the top 150 subreddits (150 being an arbitrary number). A notable limitation is that reddit only lists the top 1000 posts per subreddit, so any analysis is skewed toward the characteristics of top posts. However, because my analysis targets only the most successful posts, this was a desirable artifact of my data selection.

Scraping Data

I initially intended to scrape reddit using the Python package Scrapy, but quickly found this unworkable, as reddit uses dynamic HTTP addresses for every submitted query. Essentially, I had to create a scraper that acted as if it were manually clicking "next page" on every single page.

The Selenium package for Python proved to be the most applicable tool for my task: Selenium loads every request within an instance of a web browser, making it possible to program in mouse movements and clicks. But the package's strength also happens to be its major drawback. Because Selenium actually loads an instance of a web browser, it is less time-efficient than other web-scrapers like Scrapy, which send direct queries. More extensive data-scraping (millions of observations) would have been relatively infeasible, but the package was sufficiently quick for my collected data-set (approximately 130,000 observations).

The way my scraper worked was as follows. I fed a list of 150 subreddits into the scraper. From each subreddit, my scraper then created a relevant URL which it then navigated to (this URL was the subreddit filtered by top posts of all time). Finally, the scraper collected information per each post, information which corresponded to a series of XPaths.
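The workflow above can be sketched roughly as follows. This is a minimal illustration, not the author's actual scraper: the URL pattern, the XPaths, the `data-*` attribute names, and the 40-page cap are all assumptions made for the example, and real code would also need explicit waits after each click. The `driver` argument is expected to behave like a Selenium WebDriver.

```python
# Hypothetical constants: the real site layout and the author's XPaths may differ.
BASE_URL = "https://www.reddit.com"
POST_XPATH = "//div[contains(@class, 'thing')]"
TITLE_XPATH = ".//a[contains(@class, 'title')]"
NEXT_XPATH = "//span[contains(@class, 'next-button')]/a"


def build_top_url(subreddit):
    """Build the 'top posts of all time' URL for one subreddit (assumed pattern)."""
    return f"{BASE_URL}/r/{subreddit}/top/?sort=top&t=all"


def scrape_subreddit(driver, subreddit, max_pages=40):
    """Collect one record per post, clicking 'next page' until it disappears."""
    driver.get(build_top_url(subreddit))
    posts = []
    for _ in range(max_pages):
        # "xpath" is the string value of Selenium's By.XPATH locator strategy.
        for element in driver.find_elements("xpath", POST_XPATH):
            posts.append({
                "subreddit": subreddit,
                "title": element.find_element("xpath", TITLE_XPATH).text,
                "domain": element.get_attribute("data-domain"),
                "author": element.get_attribute("data-author"),
                "upvotes": int(element.get_attribute("data-score")),
            })
        next_links = driver.find_elements("xpath", NEXT_XPATH)
        if not next_links:
            break  # no "next page" link: last page reached
        next_links[0].click()
    return posts
```

With Selenium installed, a driver could be created with something like `webdriver.Firefox()` and passed in as `driver`; the function itself only relies on `get`, `find_elements`, `find_element`, `get_attribute`, `text`, and `click`.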

Every observation I collected (i.e. each datum for each post) contained the following information: subreddit; title; the domain that the post linked to; the username of the user who submitted the post; the number of upvotes the post received. Observations were then fed into a separate .csv file for each subreddit. More detailed information regarding my code can be found through the GitHub link provided below.
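As an illustration of the per-subreddit output described above, the following sketch writes a list of post records to one .csv file named after the subreddit. The column names and the `write_posts` helper are hypothetical; only the five fields themselves come from the description above.

```python
import csv
import os

# Assumed column names for the five fields collected per post.
FIELDS = ["subreddit", "title", "domain", "author", "upvotes"]


def write_posts(posts, subreddit, directory="."):
    """Write one subreddit's posts to <directory>/<subreddit>.csv and return the path."""
    path = os.path.join(directory, f"{subreddit}.csv")
    with open(path, "w", newline="", encoding="utf-8") as handle:
        writer = csv.DictWriter(handle, fieldnames=FIELDS)
        writer.writeheader()
        writer.writerows(posts)
    return path
```

Keeping one file per subreddit means a failed scrape of a single subreddit can be rerun without touching the others.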

Data Visualization

Upvotes

For my own curiosity, I first chose to look at the number of upvotes each post received over time. This graph happened to illustrate the growth of the site: more recent posts correlate with a higher number of upvotes, suggesting that the number of users has increased over time.

An interesting artifact of the data occurs around halfway through 2014, marked on the graph. In December 2016, reddit's administrators implemented a revised method of calculating upvotes. The number displayed as a post's upvotes isn't the raw total of upvotes, but that total fed through a black-box algorithm. The algorithm's workings aren't public, so the displayed number of "upvotes" is the best we have to go on for any external analysis.

Adjusted Upvotes

In any case, the effect of the algorithm change was essentially to "better reflect" the actual number of upvotes. In practice, the change dramatically inflated the upvote value that we see. But, as you may note from the graph, this algorithm only retroactively changed scores after a certain date (June 29, 2014). To ensure the integrity of any further analysis, I attempted to apply a correction to the data that were not affected by the retroactive change. You can see the effects of this correction below:

This gives us a much smoother progression across the entire data-set. You may notice an extreme outlier in 2012, highlighted in red. This is not an error: the datum represents a Q&A post that former President Barack Obama participated in on reddit.
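The exact correction used here is not described, so the following is only one simple possibility, sketched under loud assumptions: posts dated before the cutoff are scaled by the ratio of median scores in a window just after versus just before June 29, 2014. The `date` and `upvotes` field names, the 90-day window, and the median-ratio idea itself are all assumptions for illustration.

```python
from datetime import date, timedelta
from statistics import median

CUTOFF = date(2014, 6, 29)  # date given in the text above


def rescale_pre_cutoff(posts, cutoff=CUTOFF, window_days=90):
    """Scale pre-cutoff scores by the median ratio across the cutoff (assumed method)."""
    window = timedelta(days=window_days)
    before = [p["upvotes"] for p in posts if cutoff - window <= p["date"] < cutoff]
    after = [p["upvotes"] for p in posts if cutoff <= p["date"] < cutoff + window]
    if not before or not after:
        return [dict(p) for p in posts]  # nothing to compare, leave scores as-is
    # Assumes positive scores, which holds for top posts.
    factor = median(after) / median(before)
    return [
        {**p, "upvotes": p["upvotes"] * factor} if p["date"] < cutoff else dict(p)
        for p in posts
    ]
```

Restricting the comparison to a window around the cutoff is meant to limit how much genuine site growth leaks into the scaling factor, though it cannot remove that effect entirely.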

Upvote Distribution

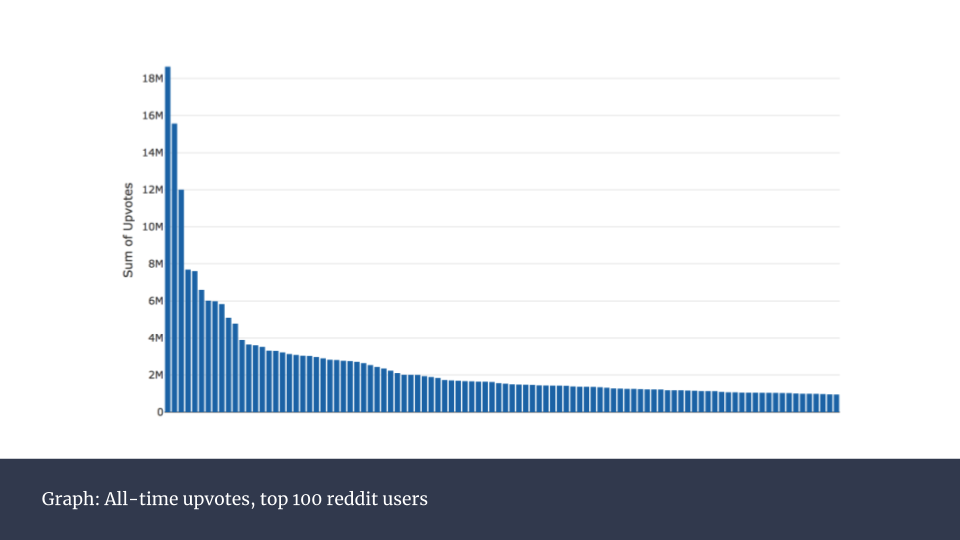

In the interest of finding successful user behavior, I then graphed the upvote distribution among the top 100 reddit users.

You may notice a significant top-heavy distribution. In fact, the top 100 users account for over 12% of all upvotes. This is a massive inequality, considering that reddit had more than 1.6 billion unique visitors per month in 2017, which suggests that the posting behavior of top users must heavily differ from casual visitors to the site. The next step was to identify some characteristics of successful posts.
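A share figure like the one above can be computed with a small aggregation. The sketch below assumes each post is a dict with an `upvotes` field and a grouping field such as `author`; these names are illustrative, not taken from the author's code.

```python
from collections import Counter


def top_share(posts, key, n=100):
    """Return the fraction of all upvotes held by the n highest-scoring groups."""
    totals = Counter()
    for post in posts:
        totals[post[key]] += post["upvotes"]
    grand_total = sum(totals.values())
    top_total = sum(score for _, score in totals.most_common(n))
    return top_total / grand_total
```

Calling `top_share(posts, "author", n=100)` gives the top-100-user share; the same helper grouped by a different field (for example the linked domain) gives the analogous figure for that field.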

Time of Day vs Upvotes

The above graph is an aggregation of all posts, organized by the time of day in which they were posted. You will notice a significant dip between 00:00 and 12:00, after which the mean number of upvotes remains relatively constant.

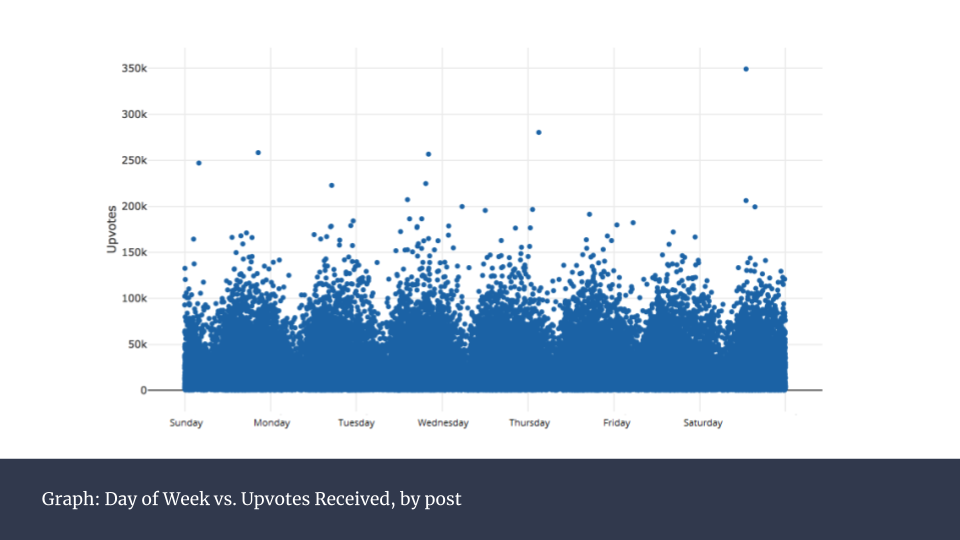
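The hourly aggregation behind this section can be sketched as follows. It assumes each post carries a `created` timestamp in ISO 8601 form alongside its `upvotes`; that field name is an assumption, since the list of collected fields given earlier does not name a timestamp.

```python
from collections import defaultdict
from datetime import datetime


def mean_upvotes_by_hour(posts):
    """Group posts by hour of day (0-23) and return the mean upvotes per hour."""
    sums = defaultdict(int)
    counts = defaultdict(int)
    for post in posts:
        hour = datetime.fromisoformat(post["created"]).hour
        sums[hour] += post["upvotes"]
        counts[hour] += 1
    return {hour: sums[hour] / counts[hour] for hour in sorted(sums)}
```

Swapping `.hour` for `.weekday()` would give the equivalent grouping by day of the week.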

Day of Week vs Upvotes

A similar graph grouped by day of the week does not yield any particular insights:

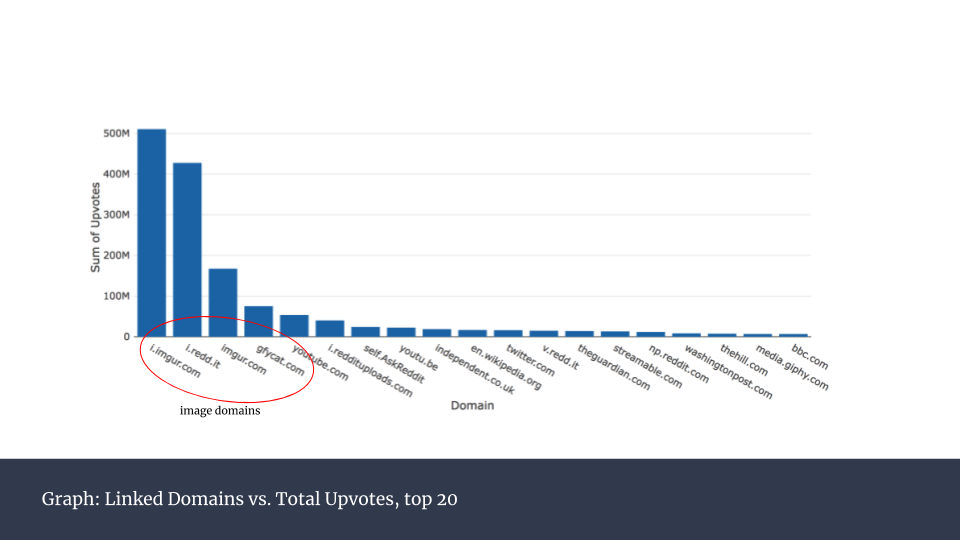

Next, I identified which linked domains were most well-received (i.e. had the most upvotes). I grouped each domain, summed up the number of total upvotes, and graphed the result:

Domain vs Upvotes

An interesting find was that the top four domains were all image domains. In other words, the content of the most successful posts consists almost solely of images. This is not surprising, as images are the most easily and quickly digestible content on the internet -- we see this trend across a myriad of sites.

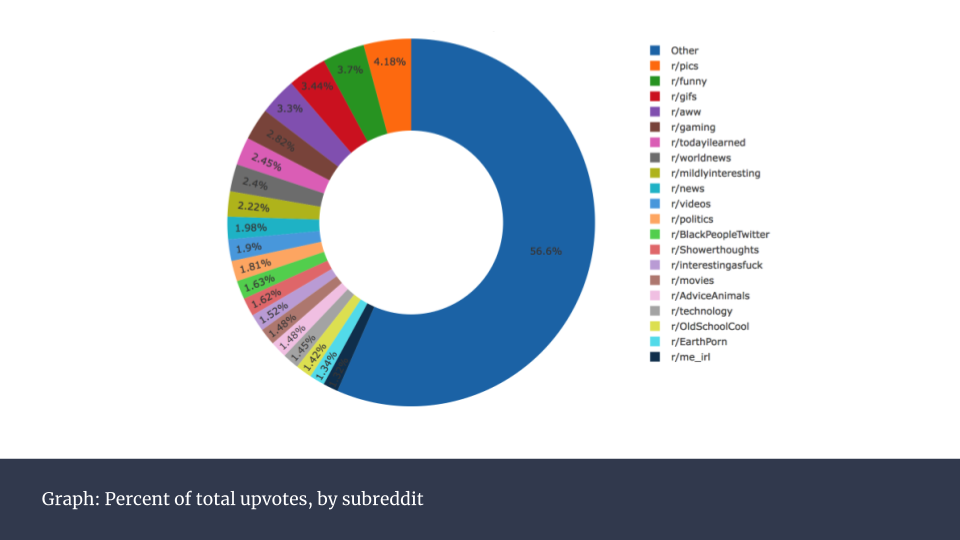

Sum Upvotes per Subreddit

Finally, I graphed the sum upvotes per subreddit. An important note is that because I was only able to scrape 1000 posts per subreddit, the chart below is not representative of the larger upvote trend. The more popular subreddits (e.g. r/pics, r/funny) receive a dramatically higher volume of posts, and the total number of upvotes for these subreddits would be much larger than suggested below.

The subreddits with the most upvotes almost perfectly match up with the most popular subreddits, with the notable exception of r/announcements, the most subscribed-to subreddit. However, only reddit staff can post to r/announcements, which explains the disparity.

Communities Within Reddit

Finally, I set out to identify communities within reddit. Using the algorithm described by Raghavan et al. in "Near linear time algorithm to detect community structures in large-scale networks", I created a network correlation model. Vertices within this model consisted of the top 20 subreddits, and edges (i.e. connections between vertices) were weighted by the number of posts between the respective subreddits. A heatmap using the values associated with these weights, similar to a Pearson Correlation Chart, is depicted below:

Reddit Network

You may note a number of strong correlations, for example, between r/movies and r/todayilearned, and r/movies and r/gaming. A more visual representation of these correlations, in the form of a network graph, is below:

Above, only the edges with weights greater than the mean edge weight are selected. Red values correspond to stronger weights, black values to weaker weights. You may also notice colored groups: subreddits within each group can be thought of as a "community". That is, they share a common community of users that cross-post between each of these subreddits. This kind of analysis is particularly useful if we want to identify buckets of users that share commonalities. A marketing approach that works for r/movies may then also work for r/todayilearned.
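To make the two steps above concrete, here is a small pure-Python sketch of mean-weight edge filtering followed by weighted label propagation in the spirit of Raghavan et al. It is not the author's implementation: the edge format (a dict mapping subreddit pairs to weights), the fixed random seed, and the choice to keep a node's current label when it is tied for best are assumptions made for the example.

```python
import random
from collections import defaultdict


def filter_edges_above_mean(edges):
    """Keep only edges whose weight exceeds the mean edge weight."""
    mean_weight = sum(edges.values()) / len(edges)
    return {pair: w for pair, w in edges.items() if w > mean_weight}


def label_propagation(edges, seed=0, max_iter=100):
    """Assign each node the label carrying the most edge weight among its neighbors."""
    rng = random.Random(seed)
    neighbors = defaultdict(dict)
    for (u, v), weight in edges.items():
        neighbors[u][v] = weight
        neighbors[v][u] = weight
    labels = {node: node for node in neighbors}  # every node starts in its own community
    nodes = sorted(neighbors)
    for _ in range(max_iter):
        rng.shuffle(nodes)  # asynchronous updates in random order
        changed = False
        for node in nodes:
            totals = defaultdict(float)
            for other, weight in neighbors[node].items():
                totals[labels[other]] += weight
            best = max(totals.values())
            candidates = [lab for lab, total in totals.items() if total == best]
            if labels[node] not in candidates:
                labels[node] = rng.choice(candidates)  # break ties at random
                changed = True
        if not changed:
            break  # every node already holds a maximal label
    return labels
```

Nodes that end up with the same label form one community. Because ties are broken at random, different seeds can produce different groupings on ambiguous graphs, which is worth keeping in mind when reading the colored groups as marketing buckets.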

Conclusion

While only scratching the surface of reddit's data-mine, the data analysis yields a surprising amount of information about the site.

A number of characteristics are common among posts that perform exceptionally well. A good post should be posted at the right time, consist of the right content (i.e. an image), and reside within a certain subreddit. A lot of this is extremely intuitive, but it's useful to have data backing up any assumption.

Lastly, reddit's users can be broken down into certain communities with a high degree of certainty. While my analysis only covered 20 individual subreddits, a future project might be to look at a greater number of subreddits, as well as the comments section within each subreddit. Further correlation might await such a bold project.

Check out the code on GitHub, here.