Data Study on Car Resale Value


When you purchase a brand new car, the manufacturer's suggested retail price (MSRP) is predetermined. But when you sell your car and ownership of the vehicle changes hands, how do you determine what the car is worth? You most likely go to Kelley Blue Book or CarFax to get an appraisal. Many factors contribute to a car's value, from mileage to engine type. In this project I will show you how to use machine learning to model a car's resale value.

The Data Set

The data I am using was gathered from Kelley Blue Book's website (www.kbb.com) using Python scripts and Scrapy.

How Scrapy Works

Scrapy allows you to program a bot (i.e. a Python script) that collects whatever data you want from a webpage. Although I won’t go into depth about all the code a Scrapy bot requires, I will outline the key points of Scrapy bot design.

- Initialize a Scrapy bot directory. Starting a Scrapy project is easy: after installing Scrapy, go to your Python terminal (such as Anaconda Prompt) and type:

    scrapy startproject <Name of Project>

  This will create a Scrapy directory folder with all the files you need to start web scraping.

- Set up the “items.py” script. This script tells the bot which elements of a website you want to scrape. For this project, I want to scrape the details of each used car that is listed.

- Set up the “Spider.py” script. This is the brain of the bot. The Spider script essentially “crawls” the webpage and collects the data you assigned in the Items script. This is a hefty script! I will save the explanation of this code for a separate blog post.

- Set up the “pipelines.py” script. This script tells the bot how you want to save the data it collects. CSV format? Text file? It’s up to you!

- Set up the “settings.py” script. This script controls the bot's settings, such as how fast it should scrape. You can change these settings here.

Finally, run the spider from your project directory:

    scrapy crawl <name of spider>

The spider bot will begin to crawl the site, collect the items you specified, and save them in a predetermined format in the root of your project directory!

Model Creation

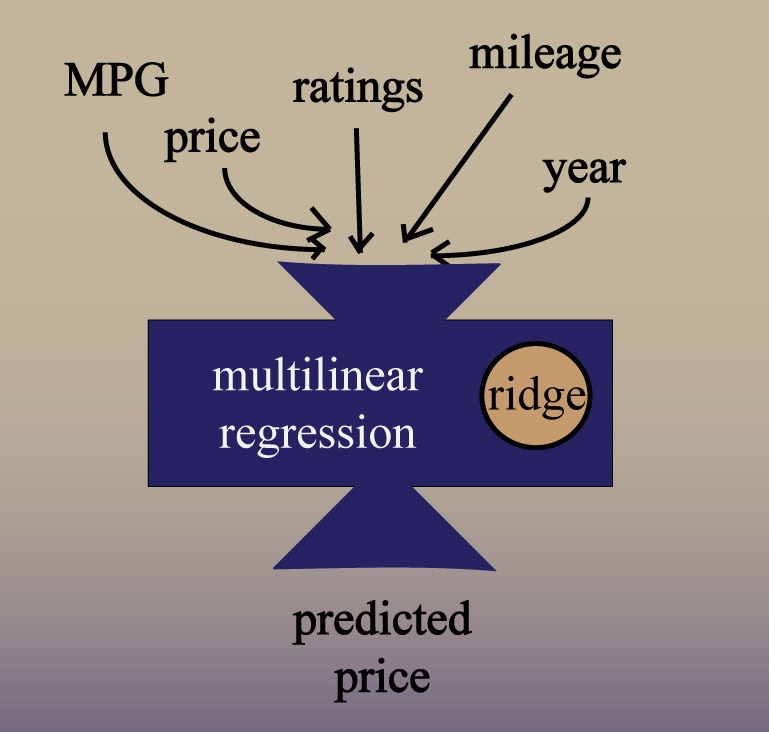

For simplicity's sake, I will focus on only quantitative features in my model creation: Year, consumer review, expert review, mileage, MPG, and price. In a complete data science project, I would also account for the categorical variables.

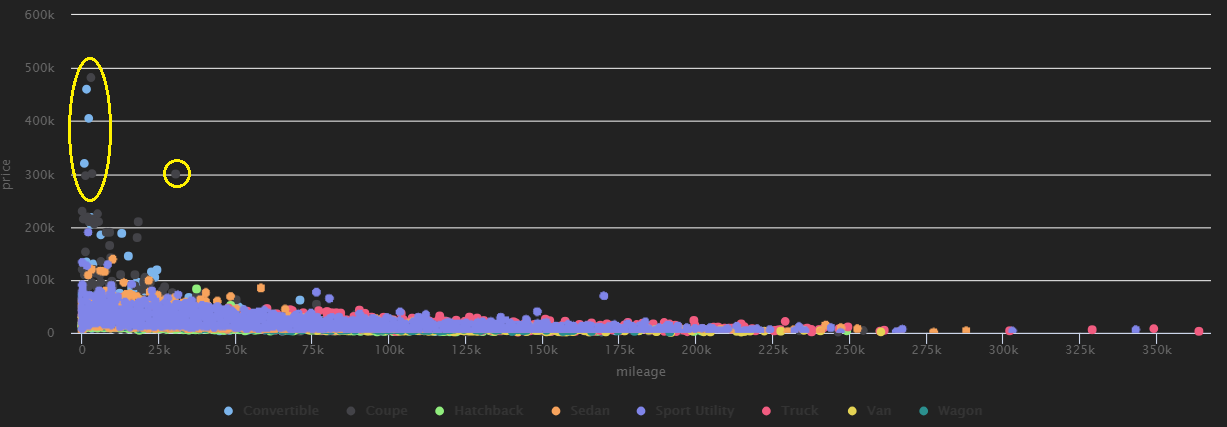

I remove outliers, such as some of the luxury and rare vehicles, that skew the distribution.
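One common way to drop such outliers is the interquartile range (IQR) rule. The sketch below is an assumption about how this step could be done, not necessarily the rule used in this project; the column names, the cutoff `k=1.5`, and the toy data are made up.

```python
import pandas as pd


def drop_price_outliers(df, column="price", k=1.5):
    """Keep rows whose `column` value lies within k * IQR of the quartiles."""
    q1 = df[column].quantile(0.25)
    q3 = df[column].quantile(0.75)
    iqr = q3 - q1
    in_range = df[column].between(q1 - k * iqr, q3 + k * iqr)
    return df[in_range]


# Toy data: five ordinary listings and one exotic car.
cars = pd.DataFrame({
    "mileage": [30_000, 45_000, 60_000, 52_000, 80_000, 5_000],
    "price": [18_000, 15_500, 12_000, 14_000, 9_500, 250_000],
})
trimmed = drop_price_outliers(cars)
print(len(cars), "->", len(trimmed))
```

Here the 250,000 listing falls far above the upper fence and is dropped, while the ordinary listings are kept.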

Data on Mileage (x-axis) vs. Price (y-axis)

I apply a Box-Cox transformation to bring the skewed features closer to a normal distribution.
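As a rough illustration of what the transformation does, the sketch below applies `scipy.stats.boxcox` to synthetic right-skewed data standing in for a feature such as mileage. The data and variable names are assumptions; only the SciPy call itself is real.

```python
import numpy as np
from scipy import stats

rng = np.random.default_rng(0)
# Synthetic, right-skewed stand-in for a feature like mileage.
mileage = rng.lognormal(mean=10.5, sigma=0.6, size=1_000)

# Box-Cox needs strictly positive input; with no lambda given,
# SciPy fits the lambda that makes the result most normal-looking.
transformed, lam = stats.boxcox(mileage)

print("skew before:", stats.skew(mileage))
print("skew after: ", stats.skew(transformed))
print("fitted lambda:", lam)
```

If the target itself is transformed, predictions can be mapped back to the original scale with `scipy.special.inv_boxcox` and the fitted lambda.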

Finally, I train the model on 80% of the data and test on the remaining 20%. I use 10-fold cross-validation so that the model is trained and validated on a different subset of the data points each time. I also use regularization (in this case Ridge), which penalizes large coefficients and helps limit overfitting.
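A minimal sketch of this setup with scikit-learn might look like the following. It runs on synthetic data, and it assumes that cross-validation is used to pick the Ridge penalty strength (`alpha`); the candidate alphas, the random seed, and the coefficients are made up and are not the project's actual values.

```python
import numpy as np
from sklearn.linear_model import RidgeCV
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
# Synthetic stand-ins for five numeric features and a (log) price target.
X = rng.normal(size=(500, 5))
true_coef = np.array([0.5, 0.1, 0.1, -0.4, 0.05])
y = X @ true_coef + rng.normal(scale=0.1, size=500)

# 80% of the rows for training, 20% held out for testing.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=0
)

# 10-fold cross-validation over a grid of candidate penalty strengths.
model = RidgeCV(alphas=np.logspace(-3, 3, 13), cv=10)
model.fit(X_train, y_train)

print("chosen alpha:", model.alpha_)
print("test R^2:", model.score(X_test, y_test))
```

Keeping the test rows out of the cross-validation loop means the final score reflects data the model did not see while the penalty was being chosen.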

I apply the model to the test set and get a root mean squared log error (RMSLE) of 0.263.
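For reference, RMSLE can be computed in a few lines of NumPy. The function below is a generic sketch of the metric, and the prices in the example are made up.

```python
import numpy as np


def rmsle(actual, predicted):
    """Root mean squared log error; log1p(x) = log(1 + x) tolerates zeros."""
    actual = np.asarray(actual, dtype=float)
    predicted = np.asarray(predicted, dtype=float)
    return np.sqrt(np.mean((np.log1p(predicted) - np.log1p(actual)) ** 2))


actual = [10_000, 20_000, 15_000]      # made-up prices
predicted = [11_000, 18_000, 15_000]
print(rmsle(actual, predicted))
```

Because the error is measured on a log scale, it reflects the ratio between predicted and actual price rather than the dollar difference, so a 1,000 miss on a cheap car counts for more than the same miss on an expensive one.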

I plot the actual prices vs. the predicted prices in a density histogram:

Actual Price vs Predicted Price

We see here that our predicted prices closely mirror our actual prices.

Future Steps

Below are a few steps we can take to improve our model:

- Encode our categorical variables and add them to our model

- Test this model on data the model has never seen before

- Test different machine learning algorithms and ensemble these models together
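As a small illustration of the first step, categorical columns can be one-hot encoded with pandas before being passed to the model. The column names and values below are hypothetical and only show the shape of the result.

```python
import pandas as pd

cars = pd.DataFrame({
    "mileage": [30_000, 45_000, 60_000],
    # Hypothetical categorical columns.
    "body_style": ["sedan", "suv", "sedan"],
    "transmission": ["automatic", "manual", "automatic"],
})

# One indicator column per category; drop_first removes one redundant
# level per feature so the indicators are not perfectly collinear.
encoded = pd.get_dummies(
    cars, columns=["body_style", "transmission"], drop_first=True
)
print(encoded.columns.tolist())
```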