Data Web Scraping Pre-owned Vehicle Pricing

The skills the author demoed here can be learned through taking Data Science with Machine Learning bootcamp with NYC Data Science Academy.

Introduction

The basic concept of 4Ps in marketing is Price, Product, Promotion and Place. Price is always in the first place and it is the most important factor when executing a marketing mix. Because of my background in marketing and automobile industry, I decided to scrap the second largest classified automotive website: Cars.com. With over 58,000 used cars' observations within 30 miles range of New York City Area across a total of 34 brands, I was able to do some handy practice in Data cleaning & manipulation and simple multiple linear regression modeling with this dataset I scrapped with Python Scrapy.

Data Scraping Process

Gladly, cars.com is a comparatively structured website. With the location radius set to 30 miles and zip code at 10001, the search result page is showing that there are 61,304 results match my filter. However, after a further investigation, the page stops at the 2000th record, which makes sense because it is really unnecessary to have every result in this one page. With this constraint, I had decided to parse the data I needed by each automobile make.

The first layer of my scraping job is to get the make IDs. At the left side filter options UI, with the make list that user can select, each make has a unique mktid embedded within its link. My first step is to scrap all that mktids, with them I can generate a list of urls corresponding to the search result of each make. After that, since the program would not know how many pages that each make's results have, so the second layer is to parse the number of results match my filter and then generate another list of urls for each search result page.

The final step is simple, with the two URLs lists I have created before, I generally have a unique URL for each page of each make. I just need to iterate through them, get the details' link for each car and scrap the data that I needed. The whole scraping process did take a while, but I ended up with around 58,000 observations, which is nearly all used-cars data within 30 miles of zip code 10001 at New York City.

DATA CLEANING AND EDA

The data I have has 16 features in total, they are the title, year, make, model, mileage, the price of the car, also some interesting details like the cty/hwy fuel consumption, interior/exterior colors, dealer zip code/dealer review score and engine/transmission type. I did some data cleaning before the EDA, including remove observations contain NAs in some important feature like price/mileage/make, transform some string data type into the integer, and aggregate the drive type/exterior color into some major groups. This step is necessary for the following modeling step because all NAs have to be handled before the modeling step.

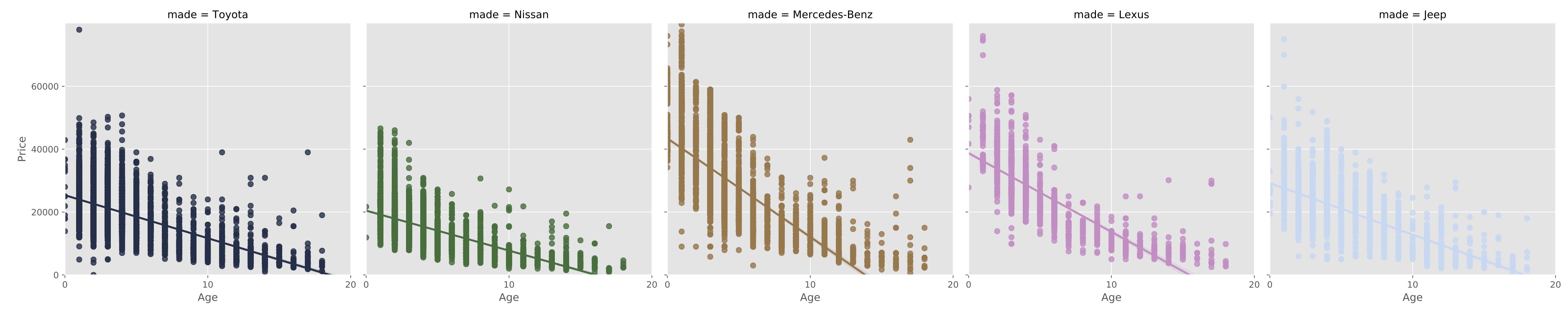

After inspecting the data, I decided to only do some EDA and modeling on the top 10 brands with the most observations due to the sample size. One of my initiate question is what is the relationship between used cars' age, mileage and price across all brands? So with this question in mind, I plot all the data of top 10 brands into scatter plot, and visually inspect them first.

From the plot above, the effect of mileage and age is definitely different between japanese brands like Nissan and luxury brands like Mercedes and BMW. For further investigations, I planned to use multiple linear regression to modeling their price changing trend.

Modeling

According to the inspection of plots from my EDA, there is definitely a linear relationship between used cars' mileage/age and its price. Therefore I want to create a linear model that is able to appraisal a used cars' value base on its make, model, mileage and age. With the inspection of polynomial curves in the relationship, I generated a new feature which is the age squared.

Unsurprisingly, for luxury brands like Mercedes and BMW, the new feature does actually improve my test R square a lot, and finally based on the test R square of my models, I can approximately appraisal the value of a used car base on its basic information. Some more feature work I am planning to do is consider more features into my models like drive type, exterior, and interior colors and make my model more accurate.