Sentiment Analysis on Stock Ideas Articles

Introduction:

I am interested in training a model that can determine the overall sentiment of articles or sentences about stocks. Such a model could help analyze the enormous amount of information from news websites, social media, etc. to pick up trends. The first step in training such a model is to obtain a large amount of labeled data. To that end, I chose to scrape a very popular website that features articles on all aspects of stocks, contributed by a large community. In particular, I chose to scrape the articles on long ideas and short ideas. These serve as a labeled training set in which long ideas correspond to positive sentiment and short ideas correspond to negative sentiment. The code for scraping can be found here.

Difficulties:

The website is notorious for its anti-scraping measures. To get around them, I first tried using Tor. However, I still got blocked most of the time, possibly because the known Tor exit nodes are blacklisted. (Here is a tutorial on how to set up Tor with Scrapy.)

The second method I tried was simpler yet effective. I obtained a list of the most commonly used user agents and a list of recently verified proxies that support HTTPS websites. After tweaking the Scrapy settings a little, I found that this method worked quite well.
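To make the idea concrete, here is a minimal sketch of a Scrapy downloader middleware that picks a random user agent and a random proxy for every request. It is not the exact code I used: the class name, the two lists, and the addresses in them are placeholders made up for illustration. The only Scrapy conventions it relies on are the `process_request` hook and the `proxy` key in `request.meta`.

```python
import random

# Placeholder values for illustration; a real run would load much longer
# lists of user agents and recently verified proxies from files.
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/58.0.3029.110 Safari/537.36",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_12_5) AppleWebKit/603.2.4 "
    "(KHTML, like Gecko) Version/10.1.1 Safari/603.2.4",
]
PROXIES = [
    "http://203.0.113.10:8080",  # documentation-range addresses, not real proxies
    "http://203.0.113.11:3128",
]


class RandomUserAgentAndProxyMiddleware:
    """Downloader middleware: random user agent and proxy for each request."""

    def process_request(self, request, spider):
        # Overwrite the User-Agent header with a randomly chosen one.
        request.headers["User-Agent"] = random.choice(USER_AGENTS)
        # Scrapy's built-in proxy support reads the proxy URL from this key.
        request.meta["proxy"] = random.choice(PROXIES)
        # Returning None lets Scrapy keep processing the request as usual.
        return None
```

The middleware would then be enabled through the `DOWNLOADER_MIDDLEWARES` setting, for example with an entry such as `"myproject.middlewares.RandomUserAgentAndProxyMiddleware": 400`, where the module path is hypothetical and the priority is just one reasonable choice.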

I let the spiders crawl for several hours and ended up with roughly 8,500 articles on long ideas and 10,000 articles on short ideas. Then I realized that this dataset is quite small! Although I didn't try it, I suspected that a model trained from scratch would not work very well. (More specifically, good sentiment analysis in this setting requires understanding the sequential meaning of the text, and thus a more complicated model such as an LSTM.)

What can we do if we are short on resources to build a powerful model? Build a simpler model on top of someone else's pre-trained model. This strategy is known as transfer learning, and it worked very well in this case! More details on the model are given in a later section.

Some Word Clouds:

Before digging into the details of the model, let's look at the word clouds to get an overall impression of which words are more frequent (and thus potentially more influential in the sentiment analysis):

First, the word cloud for all articles on long ideas:

Notably, we can see words like "strong" and "growth" that indicate positive speculation.

Next, the word cloud for all articles on short ideas:

Notably, we can see words like "may" and "likely" that express uncertainty, and words like "decline" and "issue" that are associated with negative speculation.

However, many neutral words are common to both word clouds. This suggests that a model relying solely on word counts may not perform well.
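The overlap can also be checked numerically. The sketch below counts words in two tiny sets of texts and intersects their most frequent words. The sentences are made up for illustration and are not taken from the scraped articles, and `top_words` is a helper name I introduce here.

```python
import re
from collections import Counter


def top_words(texts, n):
    """Return the set of the n most frequent lowercase words across texts."""
    counts = Counter()
    for text in texts:
        counts.update(re.findall(r"[a-z']+", text.lower()))
    return {word for word, _ in counts.most_common(n)}


# Made-up sentences for illustration only.
long_texts = [
    "The company shows strong growth",
    "Strong revenue growth for the company",
]
short_texts = [
    "The company may see a revenue decline",
    "Revenue will likely decline for the company",
]

# Words that are among the most frequent in both groups carry no label signal.
shared = top_words(long_texts, 4) & top_words(short_texts, 4)
print(shared)
```

In this toy example the shared words are neutral ones such as "the" and "company", while words like "strong" and "decline" show up on one side only.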

Models:

As I said before, my model is built on a pre-trained model. In particular, this tutorial is the source of the idea. The "black-box" model is a word embedding model provided here. Roughly speaking, a word embedding model maps words (which are usually encoded as one-hot vectors in the first place) to vectors in a Euclidean space of a certain dimension, so that words with similar meanings or similar functions are "close" to each other. Interested readers can learn more about it from this tutorial.
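One common way to measure how "close" two word vectors are is cosine similarity. The sketch below uses three toy 3-dimensional vectors that I made up for illustration; they are not values from the pre-trained embedding, which has 128 dimensions.

```python
import math

# Toy vectors invented for illustration; not taken from any real embedding.
TOY_VECTORS = {
    "growth": [0.8, 0.2, 0.1],
    "expansion": [0.7, 0.3, 0.1],
    "decline": [-0.7, 0.3, 0.0],
}


def cosine_similarity(u, v):
    """Cosine of the angle between u and v: 1 = same direction, -1 = opposite."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


similar = cosine_similarity(TOY_VECTORS["growth"], TOY_VECTORS["expansion"])
opposite = cosine_similarity(TOY_VECTORS["growth"], TOY_VECTORS["decline"])
print(similar, opposite)
```

With these toy values, "growth" and "expansion" get a similarity close to 1, while "growth" and "decline" get a negative one.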

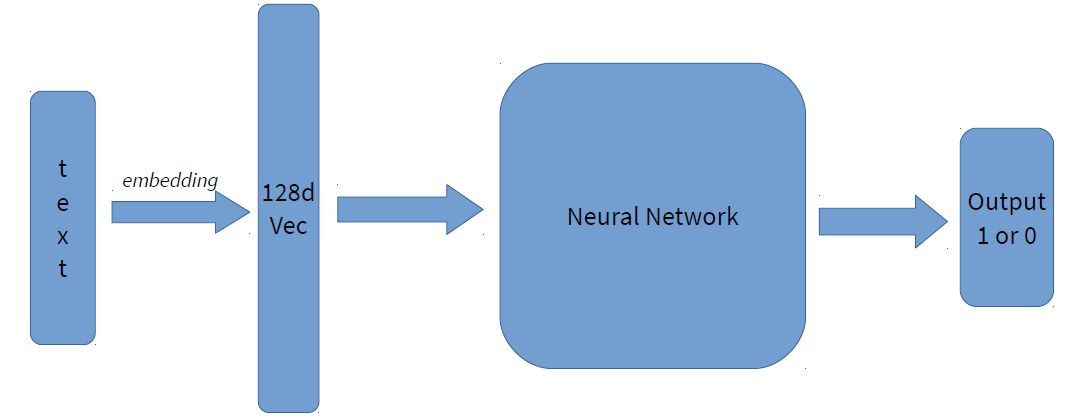

Once we have a tool to embed words, we simply take a suitably weighted sum of the word vectors as the embedding of the whole article. Then we run a standard neural network on that embedding to predict the sentiment. The graph can be summarized as follows:

For comparison, I trained 4 models based on the same idea:

- Freezing the pre-trained embedding and training only the neural network part

- Training the neural network together with the embedding

- Freezing a random embedding and training only the neural network part

- Training the neural network together with a random embedding

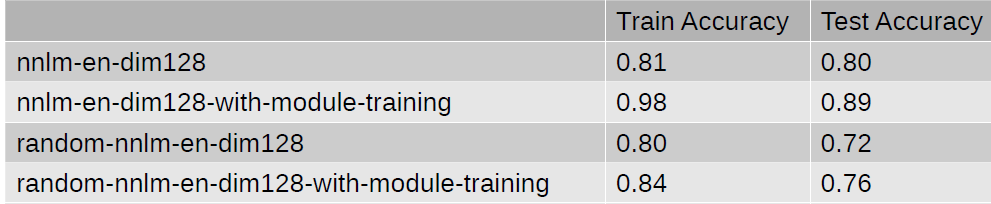

And the results are summarized in the table below:

Here, nnlm-en-dim128 is the name of the word embedding model that we use.

Note that even with the fixed random embedding, the training accuracy is still comparable to that of the model using a more meaningful embedding. However, the test accuracy is much lower, indicating that the neural network is overfitting the training set. Also note how quickly the model overfits the training set when we train the neural network together with the embedding.

Finally, let's look at the confusion matrix of the first model:

Each row is normalized. Note that our model performs better on negative examples (i.e. articles on short ideas). There are two possible reasons. First, there are more negative examples. Second, some neutral words are used in both positive and negative examples, and our model cannot distinguish sentiment based on context.
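For readers unfamiliar with row normalization, here is a small sketch of what it does. The counts are hypothetical and are not the results of my model; they only show that after normalization each row adds up to 1, so the diagonal entries can be read as per-class accuracy.

```python
def row_normalize(matrix):
    """Divide each row by its sum so that every row adds up to 1."""
    normalized = []
    for row in matrix:
        total = sum(row)
        normalized.append([count / total if total else 0.0 for count in row])
    return normalized


# Hypothetical counts; rows are true labels, columns are predicted labels.
counts = [
    [60, 40],  # true positive sentiment (long ideas)
    [20, 80],  # true negative sentiment (short ideas)
]
normalized = row_normalize(counts)
print(normalized)
```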

Future work:

- There are many hyperparameters to be tuned in the model. Tuning them with cross-validation could improve the results.

- Consider the following sentences: "A is great while B is bad" and "B is great while A is bad." The first is positive towards A and negative towards B, and the second is the exact opposite. However, our model treats these two sentences as the same because only the sum of the word vectors is considered. I hope that in the future I can improve the model so that it also takes the central subject into consideration. One potential solution is to embed the subject in a different way or to use a recurrent neural network.
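The second point is easy to verify with a toy example. The sketch below sums made-up 2-dimensional integer word vectors (chosen as integers so that the comparison is exact) and shows that both sentences end up with the same embedding. The vectors and the `sum_embedding` helper are invented for illustration, and any extra scaling that depends only on the number of words would not change the conclusion.

```python
# Toy integer vectors invented for illustration; not from a real embedding.
TOY_VECTORS = {
    "a": [1, 0],
    "b": [0, 1],
    "is": [1, 1],
    "great": [3, 1],
    "while": [0, 1],
    "bad": [-3, 1],
}


def sum_embedding(sentence):
    """Embed a sentence as the component-wise sum of its word vectors."""
    words = sentence.lower().replace(".", "").split()
    vectors = [TOY_VECTORS[word] for word in words]
    return [sum(component) for component in zip(*vectors)]


first = sum_embedding("A is great while B is bad")
second = sum_embedding("B is great while A is bad.")
print(first == second)
```

Because addition does not depend on word order, any network fed with these sums receives identical inputs for the two sentences and cannot tell them apart.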