The sentiment under movie reviews from 1999 to 2017

Motivation:

Ten years ago, when I was still a young boy, I liked to read reviews of films and TV shows on the internet. Back then, people were generally more polite. Saying “great” did not necessarily mean that the person really liked the thing they were commenting on. In fact, it came to be applied to just about anything that was not bad. As the trend of inflating praise continued, expressions like “That is the best thing I have ever heard!” started to appear, to distinguish what is really good from what is merely not bad.

I wanted to find out whether the sentiment that people express with particular phrases has in fact changed. I set out to collect some data and apply data science to find the answer.

The Dataset:

I collected the reviews of movies and TV shows on IMDb from 1999 to 2018. For each year, I scraped the reviews of the top 150 movies/TV shows shown in that year.

Graph 1: The yearly distribution of the number of reviews and the mean length of the reviews. The blue bars show the number of reviews in each year. The reviews in this dataset start in 1999, and their number grew rapidly from that year until 2005. The number of reviews is low in 2018 because this project was done in March 2018, so only three months are included. The dark blue line shows the average length of the reviews, which is stable over time.

Method and Algorithm

I want to build a model that finds the sentiment of a movie review. Different people express sentiment differently. When some people say “the movie is good,” they may just mean the movie is average. Others may use “the movie is good” to say that it is the best movie they have ever seen. For that reason, judging people’s intended meaning is difficult. However, IMDb provides another way for people to show their attitude: the rating. Ratings range from 1 to 10, the higher the better. If someone comments “the movie is good” but gives a rating of 5, we will not consider the word “good” a positive word in that comment. If someone uses the word “good” and gives a rating of 10, we consider the word “good” to be strongly related to a positive attitude.

Although every person has his/her own rating criteria, we can still analyze the trend over the years.

I trained a machine learning model to predict whether a review is positive or not. Once the model was trained and performed well, I analyzed it to find which phrases were most important. For example, if the word “good” is more important in the 1999 model but “best” is more important in the 2017 model, that indicates that people tended to use “good” to show a positive opinion in the past but had generally shifted to “best” by 2017.
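The comparison described above can be sketched in a few lines of Python. This is only an illustration of the idea: the helper names `top_phrases` and `importance_shift` are hypothetical, and the importance values are made-up numbers, not results from this project.

```python
def top_phrases(importances, k=3):
    """Return the k phrases with the highest importance, most important first."""
    return sorted(importances, key=importances.get, reverse=True)[:k]


def importance_shift(by_year, phrase, start, end):
    """Change in a phrase's importance between the models of two years."""
    return by_year[end].get(phrase, 0.0) - by_year[start].get(phrase, 0.0)


# Made-up importances for illustration only; NOT results from this project.
by_year = {
    1999: {"good": 0.030, "great": 0.020, "best": 0.010},
    2017: {"good": 0.012, "great": 0.018, "best": 0.025},
}

for year in sorted(by_year):
    print(year, top_phrases(by_year[year], k=2))
print("shift for 'best':", round(importance_shift(by_year, "best", 1999, 2017), 3))
```

A positive shift means the phrase matters more to the later model than to the earlier one; a negative shift means it matters less.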

The details of the models are:

1: I built a Scrapy program to get reviews from 1999 to 2018 and grouped them by year.

For each year I trained a machine learning model. Note that the reviews written in 2018 may include a review of a movie that came out in 1999.

2: I gave each review a binary label. If the rating associated with a review was higher than 7, the review was labeled “positive.” If the rating was lower than 4, it was labeled “negative.” Reviews with other ratings were dropped to improve prediction accuracy.

3: I used the tf-idf method (https://en.wikipedia.org/wiki/Tf%E2%80%93idf) to turn each review into phrase features. The review is split into phrases, and the tf-idf weight of each phrase is used as a feature. A phrase here can contain at most two words, so the phrases “good” and “not good” are both considered, and a comment containing “not good” is less likely to be mispredicted as positive.

4: For each year, I trained a machine learning model to predict whether a review is positive or negative. I used a random forest model. The feature importance of a random forest shows how important a role each phrase plays, and we can use the change in feature importance to analyze the change in sentiment.
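Steps 2 and 3 above can be sketched with the Python standard library alone. This is a minimal illustration, not the code used for the project: the function names are hypothetical, the toy reviews are invented, and the weighting is the textbook tf-idf formula (libraries typically use smoothed or normalized variants). The resulting weights would then be the input features for the random forest in step 4.

```python
import math
import re
from collections import Counter


def label_from_rating(rating):
    """Step 2: above 7 is positive, below 4 is negative, the rest is dropped (None)."""
    if rating > 7:
        return "positive"
    if rating < 4:
        return "negative"
    return None


def phrases(text, max_words=2):
    """Step 3: split a review into phrases of one or two consecutive words."""
    words = re.findall(r"[a-z']+", text.lower())
    out = []
    for n in range(1, max_words + 1):
        out += [" ".join(words[i:i + n]) for i in range(len(words) - n + 1)]
    return out


def tfidf(reviews):
    """Textbook tf-idf: tf = count / phrases in review, idf = log(N / reviews containing it)."""
    docs = [Counter(phrases(r)) for r in reviews]
    doc_freq = Counter(p for d in docs for p in d)
    n_docs = len(docs)
    weights = []
    for d in docs:
        total = sum(d.values())
        weights.append({p: (c / total) * math.log(n_docs / doc_freq[p]) for p, c in d.items()})
    return weights


# Toy (review, rating) pairs, invented for illustration.
rated = [("The movie is good", 9), ("The movie is not good", 2), ("An average movie", 5)]
kept = [(text, label_from_rating(r)) for text, r in rated if label_from_rating(r)]
weights = tfidf([text for text, _ in kept])
print(kept)
print(weights[1]["good"], weights[1]["not good"])
```

In this toy set, “good” appears in both kept reviews and so carries no weight on its own, while the two-word phrase “not good” appears only in the negative review and gets a positive weight. That is the reason for allowing phrases of up to two words.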

Graph 2: The ROC curves of three machine learning models on the 2017 movie review set. A ROC curve is used to judge the performance of a binary classifier. The larger the area under the curve, the better the classifier. If you predict randomly, the curve is, on average, a straight line from (0,0) to (1,1). All the curves here are above that straight line, which means all the models perform better than random guessing. The random forest was chosen for the later analysis because, among those three methods, only the random forest provides feature importance.
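To make the ROC idea concrete, here is a small standard-library sketch that computes the curve and the area under it. The function names `roc_points` and `auc` and the toy scores are assumptions made for illustration; they are not taken from the project.

```python
def roc_points(labels, scores):
    """Return (false positive rate, true positive rate) points, one per distinct score."""
    pos = sum(labels)
    neg = len(labels) - pos
    # Sweep the decision threshold from the highest score downwards.
    ranked = sorted(zip(scores, labels), reverse=True)
    points, tp, fp = [(0.0, 0.0)], 0, 0
    for i, (score, label) in enumerate(ranked):
        tp += label
        fp += 1 - label
        # Emit a point only once all reviews tied at this score are counted.
        if i + 1 == len(ranked) or ranked[i + 1][0] != score:
            points.append((fp / neg, tp / pos))
    return points


def auc(points):
    """Area under the curve by the trapezoidal rule."""
    return sum((x1 - x0) * (y0 + y1) / 2 for (x0, y0), (x1, y1) in zip(points, points[1:]))


# Toy data: 1 = positive review, 0 = negative review; scores are invented.
labels = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]
print(roc_points(labels, scores))
print(auc(roc_points(labels, scores)))

# A classifier that gives every review the same score cannot rank them,
# so its curve is the diagonal from (0, 0) to (1, 1).
print(auc(roc_points(labels, [0.5] * 6)))
```

The area equals the fraction of (positive, negative) review pairs that the classifier ranks in the right order, which is why a larger area means a better classifier.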

The feature importance may not be robust when the dataset changes a little. However, a random forest is an ensemble method that averages the results of several decision trees, so the fluctuation of the feature importance is greatly reduced.

Result

The whole phrase set is very large. To interpret my results, I selected the top 20 phrases (according to their appearance counts). The graphs appear below.

Graph 3: Feature Importance of the random forest classifier (Positive Words). In the Top 20 phrases, there are 6 words -- “believe”, “good”, “great”, “love”, “like”, “best” -- that have a positive meaning.

From the graph, I conclude that people tended to use “believe”, “good”, and “great” in the early years to show a positive sentiment but started to use “best” more recently.

Graph 4: Feature Importance of the random forest classifier (Negative Words). There are 13 phrases in the Top 20 list that have a negative meaning: “bad”, “awful”, “boring”, “poor”, “terrible”, “waste”, “worst”, “horrible”, “worse”, “crap”, “stupid”, “disappointment”, “waste time”.

From the graph, the word “stupid” was more important during 2006-2009 (which means the model was more likely to predict a review as negative when “stupid” appeared in it), and the phrase “waste time” has taken a more important role in recent years.

One interesting thing is that phrases with negative meanings occur more often in the Top 20 list, and their feature importance values are larger. This means the random forest model relies more on phrases with negative meanings to make its predictions. That may imply that when someone says something positive about a movie, he/she may not really mean that he/she likes it, but when he/she says something negative, he/she really dislikes it. People tend to be generous with praise.

Some words do not carry emotion. I put the graph of the neutral words below for reference.

Graph 6: The feature importance of the Random Forest Classifier (Neutral Words). Neutral words include “really”, “supposed”, “thing”, “time”, “trying”, “way”, “contains”, “film”, “movie”, “spoiler”, “watch”, “just”, “plot”, “spoilers”, “money”, “series”. Note: The words “spoiler” and “spoilers” are labels added by the IMDb platform when it believes the review contains a spoiler. Both positive and negative reviews can be labeled as “spoilers”.

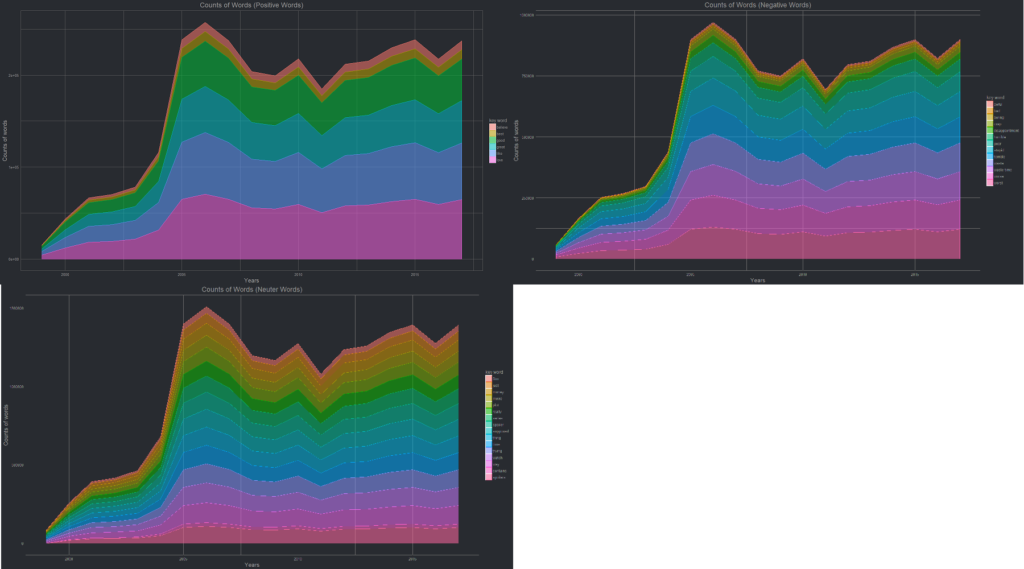

I also graphed the counts of those phrases in each year to help understand the sentiment of the reviews.

Graph 7: The counts of Phrases in each year.

The proportion of each phrase remains stable from 1999 to 2018. This shows that the changes in the feature importance of the random forest model have little to do with the proportion of the phrases. What graphs 4-6 reveal is the change in the sentiment people express with those phrases.
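The proportions discussed here can be computed as in the sketch below. It is only an illustration under stated assumptions: the function name `phrase_proportions` is hypothetical, the toy reviews are invented, and phrases are matched as whole words in the lowercased text.

```python
import re
from collections import Counter


def phrase_proportions(reviews_by_year, tracked):
    """For each year, the share of all tracked-phrase occurrences taken by each phrase."""
    result = {}
    for year, reviews in reviews_by_year.items():
        counts = Counter()
        for text in reviews:
            lowered = text.lower()
            for phrase in tracked:
                # Match whole words only, so "good" does not count inside "goodness".
                pattern = r"\b" + re.escape(phrase) + r"\b"
                counts[phrase] += len(re.findall(pattern, lowered))
        total = sum(counts.values())
        result[year] = {p: (counts[p] / total if total else 0.0) for p in tracked}
    return result


# Toy reviews, invented for illustration.
reviews_by_year = {
    1999: ["A good movie, really good", "The best film"],
    2017: ["Good plot", "Best series, best cast, good fun"],
}
for year, shares in phrase_proportions(reviews_by_year, ["good", "best"]).items():
    print(year, shares)
```

Comparing these shares year by year separates two effects: how often a phrase is used, and how strongly the model ties it to a positive or negative rating.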

Conclusion:

After scraping and analyzing almost 40,000 reviews on IMDb, I found that people do tend to use different phrases to express their sentiments about a film or show between 1999 and the present. A word used in 1999 may carry a different sentiment in 2017. Also, people are more honest about their feelings when using phrases with negative meanings.

This project is just a start and is not perfect. But when the wind of machine learning whisks away the veil of the goddess of literature and reveals a small part of her beauty, which has been hidden for 19 years, I cannot help but stop and marvel at the magic power of data science.