Web Scraping Product Data from Public Goods

The skills I demonstrated here can be learned by taking the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

Introduction

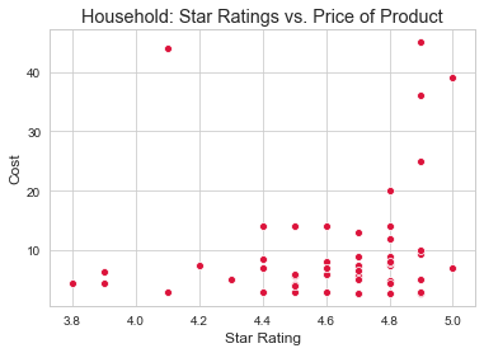

For my web scraping project, I chose to scrape product information from Public Goods, a membership-based online home goods store with a focus on quality, sustainability, and simplicity. My main objective was to analyze the scraped product data to discover trends in price and ratings across product categories: Personal Care, Household, Grocery, Supplement & Vitamins, Pets, and CBD. In analyzing the product data, I sought to discover not only whether price point was a factor in customer ratings and reviews, but also which categories yielded the most engagement.

Scraped Data

Using Python to build my Selenium web scraper, I crawled through 250 product pages on the Public Goods website. For each product, I targeted the name, description, core features, main ingredients, price, volume, rating, and number of reviews. I did the bulk of my preprocessing in Python and created a pandas DataFrame containing the main variables I wanted to analyze. Once the DataFrame was ready, I used Seaborn to visualize the data and create the plots showcased in this blog.
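To illustrate the preprocessing step, here is a minimal sketch of how raw scraped strings might be turned into numeric values before building the DataFrame. The raw text formats (for example `"$4.50"` or `"(128 reviews)"`), the field names, and the helper functions are all assumptions made for illustration; they are not taken from the actual scraper or from the Public Goods page markup.

```python
import re
from typing import Optional


def parse_price(text: str) -> Optional[float]:
    """Pull a dollar amount out of text such as '$4.50' (assumed format)."""
    match = re.search(r"\$?\s*(\d+(?:,\d{3})*(?:\.\d+)?)", text)
    return float(match.group(1).replace(",", "")) if match else None


def parse_review_count(text: str) -> Optional[int]:
    """Pull an integer count out of text such as '(1,024 reviews)'."""
    match = re.search(r"(\d[\d,]*)", text)
    return int(match.group(1).replace(",", "")) if match else None


def parse_rating(text: str) -> Optional[float]:
    """Pull a star rating out of text such as '4.6 out of 5 stars'."""
    match = re.search(r"(\d+(?:\.\d+)?)", text)
    if not match:
        return None
    rating = float(match.group(1))
    # Discard values outside the 0-5 star scale rather than guessing.
    return rating if 0.0 <= rating <= 5.0 else None


def clean_record(raw: dict) -> dict:
    """Convert one raw scraped row (hypothetical keys) into typed fields."""
    return {
        "name": raw.get("name", "").strip(),
        "category": raw.get("category", "").strip(),
        "price": parse_price(raw.get("price", "")),
        "rating": parse_rating(raw.get("rating", "")),
        "n_reviews": parse_review_count(raw.get("reviews", "")),
    }
```

A list of cleaned records like these can be passed directly to `pandas.DataFrame(records)`. Returning `None` for unparseable values keeps missing data explicit instead of silently recording a zero.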

Data Outcomes

- In some categories, lower-priced products have higher ratings, but this does not hold across all categories.

- The number of reviews may be affected by price point, but there are other features to consider.

- Products with star ratings from 3 to 4 have a smaller range of review counts, whereas products with star ratings from 4 to 5 span a wider range.

- The highest engagement, in terms of star ratings and number of reviews, was found in the Grocery and Household product categories.
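One way to check whether price and rating move together within each category is a per-category Pearson correlation. The sketch below is an illustration only, not the analysis behind the plots in this post: it assumes each record is a dict with `category`, `price`, and `rating` keys (hypothetical names), and it uses plain Python so it runs without extra libraries.

```python
from collections import defaultdict
from math import sqrt
from typing import Optional, Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> Optional[float]:
    """Pearson correlation coefficient, or None when it is undefined."""
    n = len(xs)
    if n < 2:
        return None
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    # A constant column has no variance, so the correlation is undefined.
    if var_x == 0 or var_y == 0:
        return None
    return cov / sqrt(var_x * var_y)


def price_rating_correlation_by_category(records: list) -> dict:
    """Group records by category and correlate price with rating in each."""
    grouped = defaultdict(list)
    for row in records:
        # Skip rows where either value is missing.
        if row.get("price") is not None and row.get("rating") is not None:
            grouped[row["category"]].append((row["price"], row["rating"]))

    result = {}
    for category, pairs in grouped.items():
        prices, ratings = zip(*pairs)
        result[category] = pearson(prices, ratings)
    return result
```

A negative coefficient for a category would be consistent with cheaper products rating higher there, while a value near zero would suggest price is not the main driver. With only a few products per category, these coefficients should be read as rough indicators rather than firm conclusions.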

Next Steps

- Investigating whether variables such as core features and main ingredients influence star ratings and reviews.

- Text Sentiment Analysis / NLP

- Acquiring revenue data.

- Integrating competitor data, e.g., Brandless and Amazon Prime.

- Acquiring actual data on how negative reviews affect business revenue and churn.