Data Study on SeriousEats

The skills demonstrated here can be learned through the Data Science with Machine Learning bootcamp at NYC Data Science Academy.

Introduction

Cooking dinner at home is healthier and more affordable than eating out. But it can be difficult after a long day at work to summon the energy to get dinner on the table. SeriousEats is a recipe blog whose high-quality recipe data can be leveraged to make it easier for its users to cook at home.

If we use the total number of ratings as an indicator of popularity, SeriousEats was most popular in 2011 after receiving two James Beard awards. Since then, popularity has declined even though the recipe quality has remained high (Figure 1). By developing new tools and recipes, SeriousEats could rejuvenate their user base and see an increase in their advertising revenue.

Data

Figure 1. The number of ratings (left) suggests that SeriousEats' popularity may be declining, despite recipe quality (right) staying high.

I scraped the SeriousEats website for recipe data to identify areas to generate new content and build a recipe suggestion tool to make it easier to find recipes. I used the Scrapy python package to extract data from individual recipes (Figure 2). In total, I collected 13 features including ingredient list and ratings from 12,620 recipes.

Figure 2. My scrapy spider started at seriouseats.com and parsed through each topic until extracting the individual recipes. Numbers indicate the number of pages scraped at each level.

My first goal was to identify areas to generate new content. SeriousEats has over 50 recipes each for French, Mexican, and Italian cuisines. While these recipes have very high ratings, adding a few more of them may not attract more users. Instead, adding more recipes from African or Caribbean cuisines may be more promising. These cuisines are underrepresented, with fewer than 10 recipes each, but have high average (and median) ratings (Figure 3).
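A rough sketch of how such content gaps could be flagged from (cuisine, rating) pairs follows. The function names, the rating cutoff of 4.0 (which assumes a 5-point scale), and the sample rows are all my own placeholders, not values from the scraped data.

```python
from collections import defaultdict
from statistics import mean, median


def summarize_by_cuisine(rows):
    """Group (cuisine, rating) pairs; return count, mean, and median per cuisine."""
    ratings = defaultdict(list)
    for cuisine, rating in rows:
        ratings[cuisine].append(rating)
    return {
        cuisine: {"count": len(vals), "mean": mean(vals), "median": median(vals)}
        for cuisine, vals in ratings.items()
    }


def content_gaps(summary, max_count=10, min_median=4.0):
    """Cuisines with fewer than max_count recipes but a high median rating.

    min_median is an assumed cutoff, not one taken from the article.
    """
    return sorted(
        cuisine
        for cuisine, stats in summary.items()
        if stats["count"] < max_count and stats["median"] >= min_median
    )


# Invented placeholder rows, not values from the scraped data set.
rows = (
    [("cuisine_a", 4.5)] * 12
    + [("cuisine_b", 4.6), ("cuisine_b", 4.8)]
    + [("cuisine_c", 2.0)]
)
summary = summarize_by_cuisine(rows)
gaps = content_gaps(summary)
```

Here only `cuisine_b` is flagged: `cuisine_a` already has plenty of recipes, and `cuisine_c` is rare but poorly rated.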

New content may not be enough to draw more users. Tools that make life easier could also increase readership, so I built a prototype recipe suggestion tool to make it easier to decide what to make for dinner. A user can type in whatever ingredients are in their fridge, or whatever they have a craving for, and the tool will give them a link to the recipe that most closely matches those ingredients.

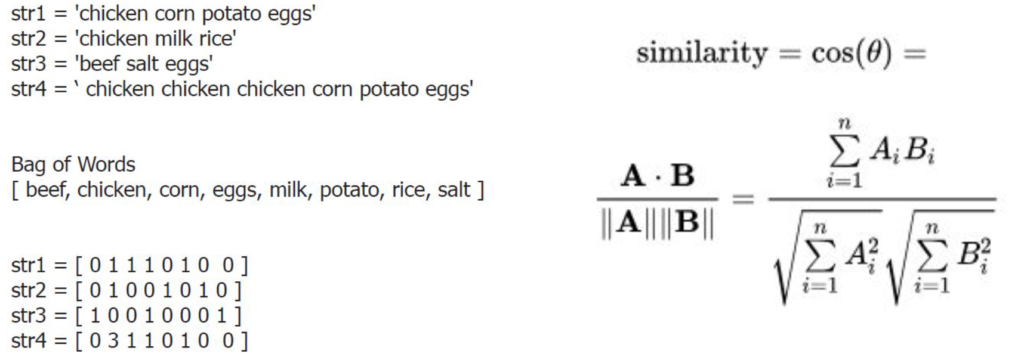

Cosine Similarity

To build my tool, I used a common technique in Natural Language Processing called cosine similarity. If you think of every word as an axis, you can create a vector for each sentence. Now that your sentence is a vector, you can calculate how close it is to another vector by taking the cosine of the angle between the two (Figure 4). This elegant approach allows us to easily quantify the similarity between two collections of words.
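To make the idea concrete, here is a minimal sketch in plain Python. The helper names `to_vector` and `cosine_similarity` and the example sentences are my own illustrations; splitting on whitespace is a simplifying assumption that ignores punctuation.

```python
import math
from collections import Counter


def to_vector(sentence: str) -> Counter:
    """One axis per distinct word; the value is how often the word occurs."""
    return Counter(sentence.lower().split())


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine of the angle between two sparse word-count vectors."""
    dot = sum(a[word] * b[word] for word in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # an empty sentence is treated as similar to nothing
    return dot / (norm_a * norm_b)


s1 = to_vector("I like curry")
s2 = to_vector("I like shrimp curry")
s3 = to_vector("chocolate cake")

close = cosine_similarity(s1, s2)  # three shared words out of three and four
far = cosine_similarity(s1, s3)    # no shared words
```

Sentences that share most of their words score near 1, and sentences with no words in common score 0.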

Figure 4. Cosine Similarity can be used to determine how similar two sentences are to each other (left, middle). Image Credit: Vit Novotny and Michael Penkov.

I applied this approach to determine the similarity between ingredient lists (Figure 5).

- Make a Bag of Words that has every ingredient from every recipe.

- Count the number of times each ingredient appears in a given recipe.

- Calculate the cosine similarity between your search and each recipe.

- Return the link of the recipe with the highest score.
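The four steps above can be sketched as follows. This is not my scraper output: the recipe links and ingredient lists are toy placeholders, and the function names are only illustrative.

```python
import math
from collections import Counter

# Toy stand-ins for scraped recipes: hypothetical links and ingredient lists.
RECIPES = {
    "https://example.com/shrimp-curry": ["shrimp", "coconut", "curry", "cilantro", "onion"],
    "https://example.com/guacamole": ["avocado", "cilantro", "lime", "onion"],
    "https://example.com/chocolate-cake": ["chocolate", "flour", "sugar", "egg"],
}

# Step 1: the bag of words is every ingredient seen in any recipe.
VOCABULARY = sorted({item for items in RECIPES.values() for item in items})


def vectorize(ingredients):
    """Step 2: count how often each vocabulary ingredient appears."""
    counts = Counter(ingredients)
    return [counts[word] for word in VOCABULARY]


def cosine(u, v):
    """Cosine similarity between two equal-length count vectors."""
    dot = sum(x * y for x, y in zip(u, v))
    norm = math.sqrt(sum(x * x for x in u)) * math.sqrt(sum(y * y for y in v))
    return dot / norm if norm else 0.0


def suggest(query):
    """Steps 3 and 4: score every recipe and return the best-scoring link."""
    q = vectorize(query)
    scores = {link: cosine(q, vectorize(items)) for link, items in RECIPES.items()}
    return max(scores, key=scores.get)


best_link = suggest(["shrimp", "coconut", "cilantro", "curry"])
```

Search terms that never appear in any recipe fall outside the vocabulary and are simply ignored by `vectorize`.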

On my first test run, I entered ingredients which I thought would return a shrimp curry: shrimp, coconut, cilantro, curry. Instead, the search tool recommended a coconut and chocolate dessert! Not even close! So why did my test fail?

Figure 5. Ingredient lists can be converted to vectors and compared in the same way (left). Using the L2 normalization prevents recipe length from biasing our search results (right).

Looking back at my code, I realized I wasn’t properly normalizing my similarity score. The dessert recipe used coconut 12 times which skewed my results. To account for this, I took advantage of the set data type in Python which stores unique occurrences of items. I also added in the L2 normalization to prevent recipe length from impacting the cosine similarity score.
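The sketch below reproduces the effect on toy data. It assumes the original bug amounted to scoring with an unnormalized dot product of ingredient counts, which is one plausible reading rather than a copy of my actual code; the recipes and function names are placeholders.

```python
import math
from collections import Counter

query = ["shrimp", "coconut", "cilantro", "curry"]

# Toy recipes; the dessert repeats "coconut" 12 times, as in the failed test.
recipes = {
    "shrimp curry": ["shrimp", "coconut", "cilantro", "curry", "onion", "garlic"],
    "coconut dessert": ["coconut"] * 12 + ["chocolate", "sugar"],
}


def raw_count_score(query, ingredients):
    """Unnormalized dot product of count vectors (the assumed failure mode)."""
    q, r = Counter(query), Counter(ingredients)
    return sum(q[word] * r[word] for word in q)


def set_cosine_score(query, ingredients):
    """Cosine similarity on unique ingredients, with L2 normalization."""
    q, r = set(query), set(ingredients)
    if not q or not r:
        return 0.0
    # For 0/1 vectors the dot product is the overlap size and each
    # L2 norm is the square root of the number of unique ingredients.
    return len(q & r) / (math.sqrt(len(q)) * math.sqrt(len(r)))


def best(score):
    """Name of the recipe that maximizes the given scoring function."""
    return max(recipes, key=lambda name: score(query, recipes[name]))


before = best(raw_count_score)   # repeated coconut dominates the score
after = best(set_cosine_score)   # each ingredient counts once, lengths normalized
```

With raw counts the dessert scores 12 against the curry's 4. Once duplicates are collapsed and both vectors are normalized, the curry scores about 0.82 and the dessert about 0.29.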

Conclusion

After these modifications, my tool returns the expected result. As a prototype, I think this suggestion tool has a lot of promise to attract users. The current SeriousEats search tool returns suggestions that contain some but not all of your items. It also often returns a large number of recipes, which doesn't help if you're feeling indecisive. Using my approach, SeriousEats can attract new users by making it easier to decide what’s for dinner.