What Makes it Funny?

Adam Cone is currently in the NYC Data Science Academy 12-week full-time Data Science Bootcamp program, taking place from April 11th to July 1st, 2016. This post is based on his third class project - Web Scraping (due in the 6th week of the program). The video of his presentation is here:

At Slightly Warped I found 387 offensive jokes in text form with no pictures. I wrote a Python script to scrape these 387 offensive jokes off of the website using the bs4, requests, and string libraries.

After decomposing each joke into a Python list of words, I wrote further code to obtain, for each joke, the values of three integer variables: word count, curse word count, and number of exclamation points. For this project, a curse word was any element of a list of 41 character strings that I cobbled together from internet research and my own imagination. I treated these three variables as the independent variables.
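The feature extraction described above can be sketched roughly as follows. This is a minimal illustration, not the original script: the function name `joke_features` is hypothetical, and `CURSE_WORDS` is a two-word stand-in for the 41-entry list, which the post does not reproduce.

```python
import string

# Hypothetical stand-in for the 41-entry curse word list (not given in the post).
CURSE_WORDS = {"damn", "hell"}

def joke_features(joke, curse_words=CURSE_WORDS):
    """Return (word_count, curse_word_count, exclamation_count) for one joke."""
    # Count exclamation points on the raw text, before punctuation is stripped.
    exclamations = joke.count("!")
    # Remove punctuation from each whitespace-separated token and lowercase it.
    strip_table = str.maketrans("", "", string.punctuation)
    words = [token.translate(strip_table).lower() for token in joke.split()]
    words = [word for word in words if word]  # drop tokens that were pure punctuation
    curses = sum(1 for word in words if word in curse_words)
    return len(words), curses, exclamations

print(joke_features("Well, damn! That was a hell of a joke!"))  # (9, 2, 2)
```

Matching whole lowercase tokens against a set keeps the count simple, but it will miss inflected forms unless they are listed explicitly.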

I read each of the 387 jokes with the intent to gather, as the dependent variable, whether I found each joke funny. However, I noticed that whether a joke was funny wasn't the determination that interested me. The jokes, even when I liked them, were often also associated with unpleasant feelings, like sadness or disgust. I imagine this is why they are clearly labeled "offensive jokes" and not simply "jokes". This alloyed feeling led me to wonder whether, on balance, I was pleased I had read the joke or not. So, although I set out with the intention to determine whether a joke was funny, I ended up recording whether a joke was worthwhile: was I pleased that I had heard it? This variable was a 2-level factor: 'yes' or 'no'. Of the 387 jokes, I found 87 worthwhile ('yes') and 300 not worthwhile ('no').

With three independent integer variables and one dependent factor variable, I imported the data into R as a data frame. I proceeded to investigate whether each independent variable, on its own, had a significant relationship to the dependent variable. I predicted that I would find no significant relationships between any of the individual independent variables and the dependent variable.

First, I looked at word count. The distribution of word count for the 387 jokes is given in the histogram below.

The majority of the jokes are relatively short (30 words or fewer), with a few long outliers. To visually determine the distribution of word count at each level of the dependent variable, I used a box plot, below.

These distributions looked very similar to me. The median for 'no' is 16 and the median for 'yes' is 18. So far, I saw little evidence of a significant relationship between word count and subjective joke worthwhileness.

I performed Welch's t-test to determine whether the 'yes' and 'no' populations might have different mean word counts. Welch's t-test requires the assumptions of normality of the sample mean and of independent, random sampling. To verify normality, I confirmed that each sample has N>30 (87 for 'yes', 300 for 'no'). On its own, this would have been sufficient, but I also checked the qqplots for each sample, and they seemed acceptable. Verifying independence was more difficult: these jokes had been, as far as I could tell, curated by one Jason Donner. Presumably, he didn't pick random jokes. To pass the independence/randomness requirement, I consider my populations as follows. The 'no' population is the total set of jokes that Jason Donner would publish on his site if he read them, and that I wouldn't rate as worthwhile. The 'yes' population is the total set of jokes that Jason Donner would publish on his site if he read them, and that I would rate as worthwhile. Based on this definition, I'm comfortable asserting that the samples I have were the result of an independent and random process by which Jason Donner was exposed to the jokes.

H_0 for Welch's t-test is that the 'yes' and 'no' populations have the same mean word count. The p-value for the test was ~7.6% so I fail to reject H_0 based on the standard 5% significance level. So, overall, I found little evidence for a relationship between word count and my response to the jokes.
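For readers who want to see what Welch's t-test computes, here is a minimal standard-library sketch of the test statistic and the Welch-Satterthwaite degrees of freedom. The function name `welch_t` and the toy samples are illustrative assumptions, not the code or data behind the p-value above. The p-value itself comes from a t distribution with the returned degrees of freedom, which is what R's `t.test` (Welch by default) reports.

```python
import math
import statistics

def welch_t(sample_a, sample_b):
    """Return (t statistic, Welch-Satterthwaite degrees of freedom)."""
    n_a, n_b = len(sample_a), len(sample_b)
    # Estimated variance of each sample mean (sample variance divided by n).
    v_a = statistics.variance(sample_a) / n_a
    v_b = statistics.variance(sample_b) / n_b
    t = (statistics.mean(sample_a) - statistics.mean(sample_b)) / math.sqrt(v_a + v_b)
    # Unlike Student's t-test, the degrees of freedom depend on both variances.
    df = (v_a + v_b) ** 2 / (v_a ** 2 / (n_a - 1) + v_b ** 2 / (n_b - 1))
    return t, df

# Toy samples, not the joke data.
t, df = welch_t([1, 2, 3, 4, 5], [2, 4, 6, 8, 10])
print(round(t, 3), round(df, 3))  # -1.897 5.882
```

Because the two groups here have very different sizes (87 and 300), not assuming equal variances is the safer default.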

Next, I looked at the curse word variable. The sample curse word distribution is illustrated in the following histogram.

310 of the 387 jokes contained none of the 41 words I identified as curse words. To determine whether the distribution of curse words in the 'yes' and 'no' samples differed, I used a violin plot. I chose a violin plot over my default preference, the box plot, because the box plot had too much over-plotting to clearly communicate the distributions.

The median number of curse words in both the 'yes' and 'no' sample jokes was 0. The distribution for the 'yes' sample looks noticeably taller, but it's possible that this just reflects a few outliers, which are visible in the histogram. Both distributions have heavy bases and don't look that dissimilar to me.

Again, after checking normality with qqplots and having already verified independence/randomness from the word count EDA, I performed Welch's t-test on the curse word samples. The p-value for the Welch's t-test was ~4.1%. While this is technically significant at the 5% significance level, it seemed unconvincing to me, given the violin plot. To further investigate, I considered each joke as either containing a curse word or not: the number of curse words became irrelevant. I thought this might mitigate the effect of the few heavy curse-word outliers. This left me with the following table.

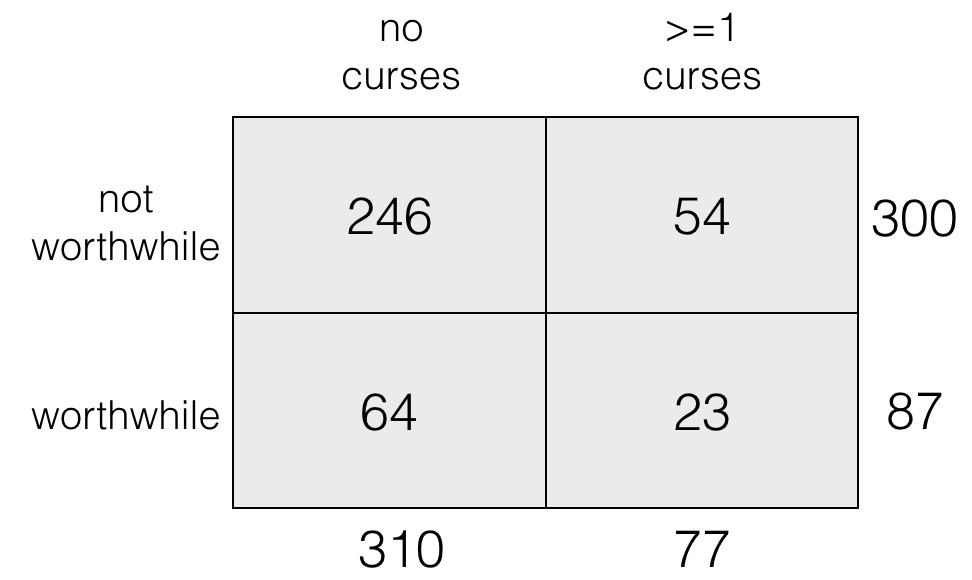
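The step of collapsing a count into a binary variable and cross-tabulating it against the response can be sketched as below. The function name `contingency_table` and the toy values are assumptions for illustration; they are not the counts from the table above.

```python
from collections import Counter

def contingency_table(counts, labels):
    """Build a 2x2 table: rows are 'no'/'yes' responses, columns are (count == 0, count >= 1)."""
    # Reduce each count to a boolean flag, then tally (label, flag) pairs.
    tally = Counter((label, count > 0) for count, label in zip(counts, labels))
    return [[tally[(label, flag)] for flag in (False, True)] for label in ("no", "yes")]

# Toy values, not the joke data.
counts = [0, 3, 0, 1, 0, 0]
labels = ["no", "yes", "no", "no", "yes", "no"]
print(contingency_table(counts, labels))  # [[3, 1], [1, 1]]
```

After this reduction, a joke with three curse words and a joke with one land in the same cell, which is what removes the influence of the heavy outliers.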

Now, since I had, in my own tortured way, already verified independent, random sampling, the only assumption necessary for the chi-squared test, I ran a chi-squared test to determine whether these variables, with curse words considered as a binary variable, were independent.

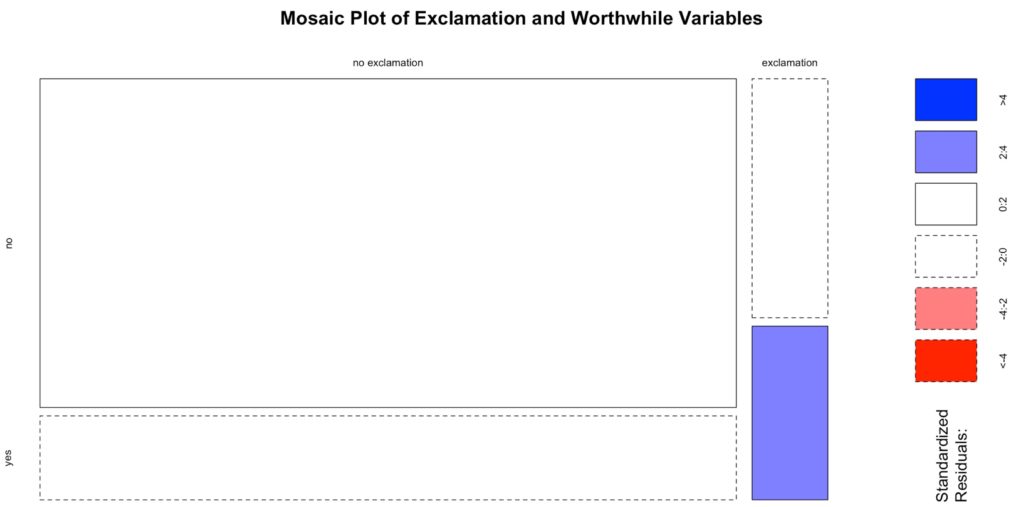

H_0 for the chi-squared test was that the variables are independent. The mosaic plot for the chi-squared test was:

None of the four variable combinations yielded large residuals. Unsurprisingly, the p-value for the test was ~11.3%, which is not significant at the standard 5% significance level. Therefore, I failed to reject H_0.
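As a rough illustration of the chi-squared test of independence on a 2x2 table, the sketch below computes the Pearson statistic and its p-value using only the standard library. The function name `chi_squared_2x2` and the table values are hypothetical, not the joke counts. It applies no continuity correction, whereas R's `chisq.test` applies Yates' correction to 2x2 tables by default, so its numbers would not match R's default output exactly.

```python
import math

def chi_squared_2x2(table):
    """Pearson chi-squared test of independence for a 2x2 table (no continuity correction)."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    stat = 0.0
    for i in range(2):
        for j in range(2):
            # Expected count under H_0 (independence of rows and columns).
            expected = row_totals[i] * col_totals[j] / n
            stat += (table[i][j] - expected) ** 2 / expected
    # With 1 degree of freedom, the upper tail probability is erfc(sqrt(stat / 2)).
    p_value = math.erfc(math.sqrt(stat / 2))
    return stat, p_value

# Hypothetical counts, not the joke data.
stat, p_value = chi_squared_2x2([[10, 20], [30, 40]])
print(round(stat, 4))  # 0.7937
```

A small statistic means the observed counts sit close to the counts expected under independence, which corresponds to the small residuals in a mosaic plot.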

So, my take-away is that I failed to find convincing evidence for a relationship between curse words and my response to the jokes. Although the curse words, when considered as an integer variable, showed a technically significant relationship to my response, the p-value was close to the 5% threshold, and I sense that this was largely a result of a few outliers with high curse word counts.

Finally, I investigated the relationship between exclamation points and my responses to the jokes. Here is the distribution of exclamation points in the entire sample of 387 jokes.

349 of 387 jokes had no exclamation points. Most of the rest had one exclamation point, with one outlier of 5 exclamation points. To visualize the distributions of exclamation points in the 'no' and 'yes' samples, I used a violin plot, for the same reasons outlined above.

The median number of exclamation points in both samples was 0. The 'yes' distribution looked significantly taller and thinner to me. As before, I looked to verify a significant relationship with Welch's t-test after verifying normality. H_0 for the Welch's t-test was that the 'yes' and 'no' populations had the same mean exclamation point count. The p-value for Welch's t-test was ~2.3%, so I rejected H_0.

Now, although this p-value was stronger than the p-value for the curse word Welch's t-test, I wasn't completely sure this wasn't also largely due to the few outliers. Therefore, as with the curse words, I considered the exclamation point variable as binary: each joke either had at least one exclamation point or didn't. This way, the outliers with large numbers of exclamation points wouldn't have an effect. The resulting table was:

Now, since I had, in my own tortured way, already verified independent, random sampling, the only assumption necessary for the chi-squared test, I ran a chi-squared test to determine whether these variables, with exclamation points considered as a binary variable, were independent.

H_0 for the chi-squared test was that the variables are independent. The mosaic plot for the chi-squared test was:

Here, there is a significant residual for the combination of worthwhile jokes and exclamation points: more than expected! The p-value for the chi-squared test was ~0.4%, which is significant at the standard 5% significance level.
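The residual for a cell compares its observed count with the count expected under independence. One common definition, the Pearson residual, can be sketched as follows; the function name `pearson_residuals` and the table values are assumptions for illustration, and the post does not state which residual definition its mosaic plot used.

```python
import math

def pearson_residuals(table):
    """Return (observed - expected) / sqrt(expected) for each cell of a contingency table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    residuals = []
    for i, row in enumerate(table):
        residual_row = []
        for j, observed in enumerate(row):
            # Expected count if the row and column variables were independent.
            expected = row_totals[i] * col_totals[j] / n
            residual_row.append((observed - expected) / math.sqrt(expected))
        residuals.append(residual_row)
    return residuals

# Hypothetical counts, not the joke data.
for row in pearson_residuals([[10, 20], [30, 40]]):
    print([round(value, 3) for value in row])
```

A positive residual means the cell holds more observations than independence predicts. The squared Pearson residuals sum to the uncorrected chi-squared statistic.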

Overall, I see significant evidence of a relationship between exclamation points and my response to the sample jokes. The Welch's t-test p-value was low and I can't dismiss this as some kind of outlier effect, since the chi-squared p-value was even lower.

Summary and Conclusion

In conclusion, I failed to find significant evidence of single-variable relationships between word count or curse words and joke response. I did find significant evidence of a relationship between exclamation points and joke response. This was a surprise.

If I worked on this project further, I would like to pursue

- responses from different people,

- multi-variable relationships, and

- honing the response metric.