When Life Gives You (Lulu)lemons

ABOUT ME

My name is Stella Kim and I am a data scientist interested in helping businesses make data-driven decisions by leveraging customer information to improve sales.

Here is the link to the Shiny application, and here is the link to my GitHub, where you can find the associated code.

BACKGROUND

Customers now have a wide platform to communicate their thoughts and opinions about brands and products much more effectively, whether through websites, social media, customer service, or physical stores. However, while the digital age has allowed consumers and brands to connect at a higher level than ever before, companies are still lagging behind customer needs and expectations. In order to glean the most insight from their customers, companies must follow them closely along their entire journey to examine their purchasing habits, likes, dislikes, and a variety of other personalized information. Bottom line: know your audience.

While I considered scraping reviews from multiple websites (including Sephora, Athleta, Nike, Colourpop, & Other Stories), what piqued my interest in Lululemon was the unusual distribution of ratings. While most companies are known to delete (at least some) negative reviews and plant highly rated ones, I found it odd that several (if not all) Lululemon products (women's tights in this case) had peaks at 2 and 5 stars. This could come from (1) customers at opposite extremes (very happy and very unhappy customers) in a scenario where Lululemon does not actually delete reviews, or (2) artificially planted (fake) 5-star reviews.

The main motivation for this project was to search for patterns in ratings and reviews that can explain any gaps in customer satisfaction, either by product or by customer segment, and to see how Lululemon (or any company) could address these issues. Ultimately, customers are free agents who decide where, when, and how to spend their money.

DATA COLLECTION

My project involved scraping the lululemon athletica website for product information and reviews. Specifically, I used Selenium to collect URLs for individual women's tights.
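The URL-collection step can be sketched roughly as follows. This is a minimal illustration, not the code from the project: the function name `collect_product_urls` and the `a.product-link` selector are hypothetical placeholders, and the real selector depends on the site's markup at the time of scraping. The function accepts any Selenium-style driver, meaning any object with `get` and `find_elements` methods.

```python
from urllib.parse import urljoin

# Selenium's By.CSS_SELECTOR constant is the string "css selector"; using the
# literal keeps this sketch importable even where Selenium is not installed.
CSS_SELECTOR = "css selector"


def collect_product_urls(driver, listing_url, link_selector="a.product-link"):
    """Return the unique product URLs linked from a listing page.

    `link_selector` is a hypothetical placeholder, not the site's real markup.
    """
    driver.get(listing_url)
    urls = []
    for link in driver.find_elements(CSS_SELECTOR, link_selector):
        href = link.get_attribute("href")
        if not href:
            continue  # skip anchors without a target
        absolute = urljoin(listing_url, href)
        if absolute not in urls:  # keep first-seen order, drop duplicates
            urls.append(absolute)
    return urls
```

With Selenium installed, a driver created with `webdriver.Chrome()` (or any other browser driver) can be passed in directly.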

I subsequently scraped ratings, reviews, and user profile information (username, location, athletic type, age, body type, likes, and dislikes) for each product, as well as any responses by the company. Overall, I scraped 13,825 different reviews from 35 different products, with reviews ranging from 2015 to 2019.

INTERACTIVE WEB APPLICATION

With this data, I created a multi-purpose web application, which can be used to parse through multiple components such as product ratings, reviews, customer profiles, and natural language processing (sentiment analysis and word clouds).

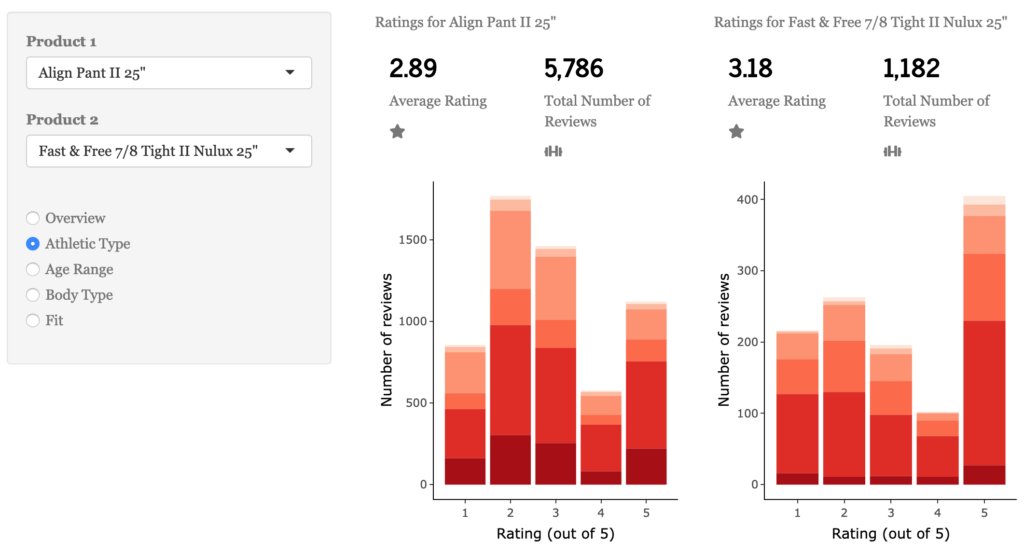

Overall, the average rating of all women's tights is 2.98. Breaking down all reviews starting from 2015, there is an obvious peak of 2- and 5-star ratings. I was interested to see whether there was a shift in these ratings from a relative year-to-year perspective. From a visual perspective, there does seem to be a shift from 2- and 3- to 5-star reviews from 2017 to 2018.
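One way to check a year-to-year shift like this numerically, rather than by eye, is to compare the share of each star rating within each year. The sketch below assumes the reviews have been reduced to `(year, stars)` pairs; the sample values are made up for illustration and are not the scraped data.

```python
from collections import Counter, defaultdict


def rating_distribution_by_year(reviews):
    """Map each year to the share of its reviews at each star rating (1-5)."""
    counts = defaultdict(Counter)
    for year, stars in reviews:
        counts[year][stars] += 1
    shares = {}
    for year, counter in counts.items():
        total = sum(counter.values())
        shares[year] = {s: counter[s] / total for s in range(1, 6)}
    return shares


# Made-up (year, stars) pairs for illustration only.
sample = [(2017, 2), (2017, 2), (2017, 3), (2017, 5),
          (2018, 5), (2018, 5), (2018, 2), (2018, 5)]

for year, dist in sorted(rating_distribution_by_year(sample).items()):
    print(year, {stars: round(share, 2) for stars, share in dist.items()})
```

Using shares instead of raw counts keeps years with very different review volumes comparable.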

I chose to look at ratings from a product perspective, breaking down individual products based on customer profile. This includes athletic type, age range, body type, and fit (of product).

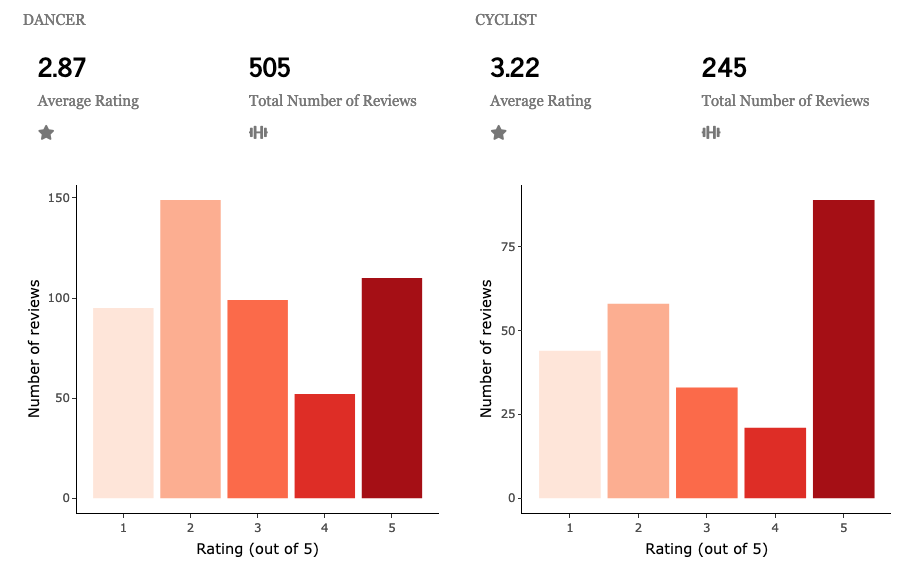

I also chose to look at products from a customer perspective. Athletic type is broken down into 5* categories: sweaty generalist, dancer, yogi, runner, and cyclist. Age range is broken down into 8* categories: under 18, 18-24, 25-34, 35-44, 45-54, 55-65, over 65, and "I keep my age on the D.L.". Body type is broken down into 6* categories: petite, solid, curvy, athletic, slim, and muscular. Finally, fit is broken down into 7* categories: second skin, tight, snug, flowy, just right, roomy, and oversized.

*N/As are not counted as a category here

There weren't huge differences between athletic types, with dancers giving the lowest average rating at 2.87 stars and cyclists giving the highest average rating at 3.22.

Similarly, there weren't many obvious differences between different body types, with athletic customers giving an average rating of 2.99 stars and solid (whatever that may mean) customers giving an average of 3.28 stars.

Customer age range, however, showed a more distinct pattern. From the 18-24 group upward, customers tended to be more generous with their ratings as the age range increased. Customers under 18 gave an average rating of 2.93; 18-24 gave an average of 2.7; 25-34 gave an average of 3.04; 35-44 gave an average of 3.54; 45-54 gave an average of 3.91; 55-65 gave an average of 4.22; over 65 gave an average of 4.34 (with only 35 reviews total); and "I keep my age on the D.L." gave an average of 3.54, but this category could be any mix of ages. This shows that while the older market is seemingly more satisfied with the product, there is room for improvement with the younger market.
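Per-segment averages like these boil down to a group-by over one profile field. Here is a minimal sketch, assuming each review is a dictionary with a `stars` value and optional profile fields; the field names and sample records are hypothetical. It also returns the review count per group, since a small group (such as the 35 reviews from customers over 65) makes the average less reliable.

```python
from collections import defaultdict


def average_rating_by_group(reviews, key):
    """Return {group: (mean rating, review count)}, skipping missing groups."""
    totals = defaultdict(lambda: [0, 0])  # group -> [sum of stars, count]
    for review in reviews:
        group = review.get(key)
        if group is None:  # N/A profiles are not counted as a category
            continue
        totals[group][0] += review["stars"]
        totals[group][1] += 1
    return {group: (s / n, n) for group, (s, n) in totals.items()}


# Made-up records for illustration only.
sample_reviews = [
    {"stars": 2, "age_range": "18-24"},
    {"stars": 3, "age_range": "18-24"},
    {"stars": 5, "age_range": "55-65"},
    {"stars": 4, "age_range": "55-65"},
    {"stars": 1, "age_range": None},
]

for group, (avg, n) in sorted(average_rating_by_group(sample_reviews, "age_range").items()):
    print(f"{group}: {avg:.2f} stars over {n} reviews")
```

The same function works for athletic type, body type, or fit by changing `key`.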

Continuing this trend, the fit of the tights also showed a dramatic shift. In order from tightest to loosest (second skin, tight, snug, just right, roomy, flowy, oversized), the average ratings were 3.53, 3.19, 3.11, 3.08, 2.17, 1.34, and 1.7 stars, respectively. Personally, I would think that "just right" would be the perfect fit, but everyone is different! Clearly, the more closely the tights fit the customer, the more highly rated the product is.

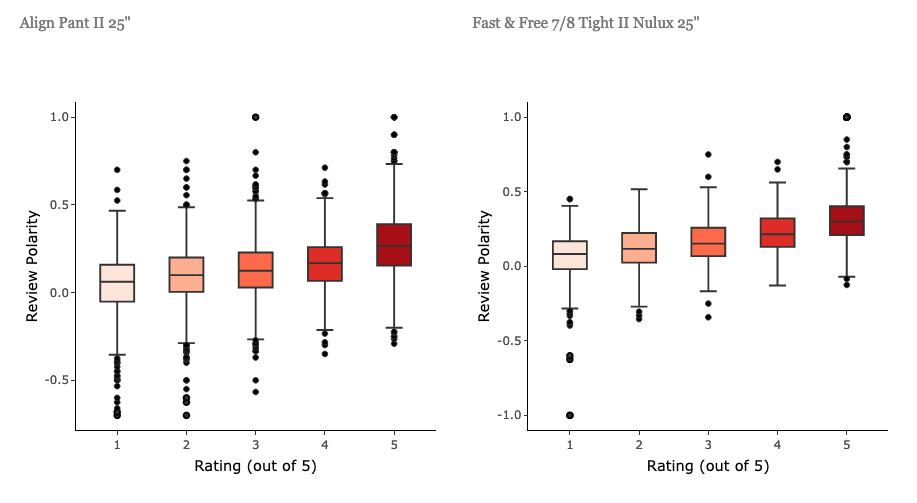

I also examined the reviews for each product through sentiment analysis. Unsurprisingly, the polarity of reviews increased with ratings. However, subjectivity remained relatively stable across all ratings. One feature I would like to work on is to do further sentiment analysis on reviews by different customer profiles, to see whether these patterns in age range and product fit are also reflected in the polarity and subjectivity of reviews.
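To make the two scores concrete: polarity measures how negative or positive a text is (here from -1 to 1), and subjectivity measures how opinionated it is (from 0 to 1). Libraries such as TextBlob report both. The sketch below is not the tool used for the application; it is a deliberately tiny lexicon-based stand-in, and the word scores in `TOY_LEXICON` are invented for illustration.

```python
import re

# Hypothetical mini-lexicon: word -> (polarity in [-1, 1], subjectivity in [0, 1]).
TOY_LEXICON = {
    "love": (0.5, 0.6),
    "comfortable": (0.4, 0.8),
    "great": (0.8, 0.75),
    "disappointed": (-0.75, 0.75),
    "terrible": (-1.0, 1.0),
}


def toy_sentiment(text):
    """Average the lexicon scores of the known words in `text`.

    Returns (polarity, subjectivity); both are 0.0 if no word is in the lexicon.
    """
    words = re.findall(r"[a-z']+", text.lower())
    hits = [TOY_LEXICON[w] for w in words if w in TOY_LEXICON]
    if not hits:
        return 0.0, 0.0
    polarity = sum(p for p, _ in hits) / len(hits)
    subjectivity = sum(s for _, s in hits) / len(hits)
    return polarity, subjectivity


print(toy_sentiment("I love these, so comfortable"))
print(toy_sentiment("Terrible quality, very disappointed"))
```

Averaging these scores per star rating, or per customer segment, would give the kind of comparison described above.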

Continuing with the reviews, I also generated word clouds for each product. Unsurprisingly, the words "pants" and "leggings" showed up most frequently, indicating that these should be added as stop words when I go back and re-process the data. I am also interested in breaking down word clouds by rating, to see the most common praises (e.g. "comfortable") versus the most common complaints (e.g. "pilling").
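The effect of adding domain-specific stop words can be seen with a plain word count, which is the input a word cloud is built from. This is a minimal sketch with an abbreviated, hypothetical base stop-word list and made-up reviews; a real pipeline would use a fuller list.

```python
import re
from collections import Counter

# Abbreviated, hypothetical base list; real stop-word lists are much longer.
BASE_STOP_WORDS = {"the", "and", "a", "i", "to", "these", "are", "but",
                   "after", "so", "my", "of", "is", "it"}
# Product words that dominate every review and carry no signal here.
DOMAIN_STOP_WORDS = {"pants", "leggings"}


def top_words(reviews, n=3, extra_stop_words=frozenset()):
    """Return the n most frequent words that are not stop words."""
    stop = BASE_STOP_WORDS | set(extra_stop_words)
    counts = Counter()
    for text in reviews:
        words = re.findall(r"[a-z']+", text.lower())
        counts.update(w for w in words if w not in stop)
    return counts.most_common(n)


# Made-up reviews for illustration only.
sample_texts = [
    "These leggings are so comfortable",
    "Comfortable pants but pilling after a week",
    "The leggings started pilling",
    "Love the leggings and the pants",
]

print(top_words(sample_texts, n=1))
print(top_words(sample_texts, n=2, extra_stop_words=DOMAIN_STOP_WORDS))
```

Running the same count separately on low-rated and high-rated reviews would surface the complaints and praises by rating.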

Finally, I was also interested in analyzing company response rates. Customer service is a key component in running a successful business because it gently reminds customers that businesses are listening to their input. The average response time does not vary much between products, with most products hovering around 1 day. The response rate, on the other hand, varied from product to product; 4-star reviews received the fewest responses, while negative reviews received more.
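Both metrics can be computed from two timestamps per review. The sketch below assumes each review records when it was posted and when (if ever) the company responded; the field names `posted` and `responded` and the sample records are hypothetical. Response rate is the fraction of reviews with a response, and response time is averaged only over reviews that received one.

```python
from datetime import datetime
from statistics import mean


def response_stats(reviews):
    """Return {stars: (response rate, mean response time in days or None)}."""
    by_stars = {}
    for r in reviews:
        by_stars.setdefault(r["stars"], []).append(r)
    stats = {}
    for stars, group in by_stars.items():
        # Delay in days for every review that actually got a response.
        delays = [
            (r["responded"] - r["posted"]).total_seconds() / 86400
            for r in group
            if r["responded"] is not None
        ]
        rate = len(delays) / len(group)
        stats[stars] = (rate, mean(delays) if delays else None)
    return stats


# Made-up records for illustration only.
sample_responses = [
    {"stars": 2, "posted": datetime(2019, 1, 1), "responded": datetime(2019, 1, 2)},
    {"stars": 2, "posted": datetime(2019, 1, 5), "responded": datetime(2019, 1, 7)},
    {"stars": 4, "posted": datetime(2019, 1, 3), "responded": None},
    {"stars": 4, "posted": datetime(2019, 1, 4), "responded": None},
    {"stars": 5, "posted": datetime(2019, 1, 6), "responded": datetime(2019, 1, 6, 12)},
    {"stars": 5, "posted": datetime(2019, 1, 8), "responded": None},
]

for stars, (rate, days) in sorted(response_stats(sample_responses).items()):
    print(stars, f"rate={rate:.0%}", f"mean_days={days}")
```

Grouping by product instead of by rating only requires changing the grouping key.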

FUTURE WORK

This application is a work in progress, and there is more that I would like to do with all of the information that I scraped. I would like to add more descriptive analyses on the various customer groups and would like to include more analyses involving natural language processing. In particular, I would like to look more into the "age range" and "fit" categories to determine the most common complaints in negative ratings, which would give a good idea of how Lululemon could cater to these clients.

For more information on customer/journey analytics, check out these links:

(1) https://www.mckinsey.com/business-functions/marketing-and-sales/our-insights/five-questions-brands-need-to-answer-to-be-customer-first-in-the-digital-age

(2) https://www.mckinsey.com/business-functions/operations/our-insights/mastering-the-digital-advantage-in-transforming-customer-experience

(3) https://www.mckinsey.com/business-functions/marketing-and-sales/our-insights/from-touchpoints-to-journeys-seeing-the-world-as-customers-do

(4) https://www.mckinsey.com/business-functions/marketing-and-sales/our-insights/irrational-consumption-how-consumers-really-make-decisions

(5) https://www.bain.com/insights/management-tools-customer-segmentation/

(6) https://www.bain.com/insights/predictive-analytics-can-widen-the-aperture-for-potential-sales-snapchart/